Modern Economy

Vol.05 No.12(2014), Article ID:51802,7 pages

10.4236/me.2014.512106

Value Added in Undergraduate Business Education: An Empirical Analysis*

Thomas P. Breslin, Subarna K. Samanta

Economics Department, The College of New Jersey, Ewing, NJ, USA

Email: breslint@tcnj.edu

Copyright © 2014 by authors and Scientific Research Publishing Inc.

This work is licensed under the Creative Commons Attribution International License (CC BY).

http://creativecommons.org/licenses/by/4.0/

Received 13 October 2014; revised 15 November 2014; accepted 25 November 2014

ABSTRACT

A substantial body of research since the early fifties has addressed the economic benefits of higher education. A more limited body of research has further demonstrated that there are important qualitative differences in the academic returns to, or academic performance resulting from, a college education. Such qualitative differences have been attributed to the choice of major, the quality of the institution, and the quality of the students. Although graduates from higher quality institutions generally exhibit higher academic performance, this explains only a small proportion of the variability in students’ performance. The present study addresses the institutional quality issue from a value-added perspective. We investigate how business school education at an undergraduate institution affects the academic performance of its students. Data for this study were collected at a four-year college in the northeast region of the United States. The School of Business at that institution offers majors in Accounting, Business Administration (with five specializations), and Economics. All business students must take a common core of required courses, including accounting, economics, finance, marketing, management, information technology, and statistics, before taking courses in their major discipline. Our sample contains 415 graduating students from two cohorts: 2008 and 2012. Results suggest that undergraduate business school education accounts for about 25% to 35% of the variability in the students’ academic performance.

Keywords:

Learning Outcomes, ETS Study, Value-Added Measures, Major Field Test, Effective Learning

1. Introduction

A substantial body of research since the early fifties has examined the economic benefits of higher education, especially college education. An important stream of research [1] - [5] has demonstrated that there exist important qualitative differences in the academic accomplishment and employment opportunities from such college education. It has been suggested that such qualitative differences occur because of the choice of the major, quality of the institution, and quality of the students. Although graduates from higher quality institutions exhibit higher academic performance in general, the question of how much value is added as a result of attending such higher quality institutions has been only partially addressed. In this study, we attempt to address the institutional quality issue from a different angle; we investigate how the performance in the business program in a quality undergraduate institution affects the learning outcomes of the students.

As an increasing number of traditional universities expand their course offerings, assessment of student learning outcomes, particularly at AACSB accredited business schools, has become an important means of comparing the impact of such courses on student performance. Recent studies [6] - [8] that analyze student performance in courses across schools suggest that business school courses compare favorably (in terms of learning outcomes) to traditional courses offered in many liberal arts schools (such as social sciences, arts, and humanities). To examine how a business school education affects the academic performance of students, we followed the AACSB Assurance of Learning guidelines for student assessment and used AACSB assessment procedures to analyze the efficacy of learning in a business program. This study uses assessment data from recent graduating classes to address the following research questions: 1) What are the effects of undergraduate business education on the academic performance of business students? and 2) Does liberal arts education contribute to the overall learning outcomes of business majors?

2. Literature Review

The concept of value-added, as applied to institutions of higher education, seeks to measure accurately the overall value that an institution adds to a student over the course of his or her education, while accounting for differences in students’ pre-college attributes. Measures of value-added in higher education are required for a progressive market of education, as they provide information to consumers (i.e., employers), participants (i.e., students who need to make career choices), and producers (i.e., program directors, faculty) of higher education. For example, Jesse M. Cunha and Trey Miller [9] summarize the main categories of “value” that should be accounted for in a measure of value-added: 1) the increase in a student’s general and specific knowledge, so that his or her economic productivity is increased and rewarded in the labor market with a higher wage; 2) knowledge gained for its own sake, in terms of personal enjoyment and sense of accomplishment (which may vary immensely across individuals); 3) positive externalities created, specifically improvement both for the individual and for society; and 4) the applicability of the experiences and knowledge gained to job productivity1. They also describe the two major uses of value-added measures in higher education: 1) to capture the unique causal influence of institutions on their students, as a better substitute for raw outcomes; and 2) to incentivize better performance across dimensions of student life among institutions of higher education2.

Theoretically, students and their parents would apply the information they derive from educational value-added measures to estimate what they are willing to pay for education at a specific institution. Ideally, high value-added scores would correlate with high tuition prices because of higher demand3, and government agencies would use the data to make decisions about funding and other related policies. However useful they are in theory, measures of value-added in higher education are still relatively unrevealing because of the many shortcomings of existing models. The biggest problems faced in this field relate to the quality of the available data: a lack of sufficient output measures, inadequacy of the statistics used to measure a specific construct, unreliable data, and unquantifiable variables that must be omitted because they are difficult to apply. In addition, problems arise that are specific to the individual models developed by various parties, as reviewed below.

U.S. News and World Report provides an annual ranking of institutions of higher education, including a ranking of business schools. The variables reported in its rankings include: 1) how much an institution spends on education per student; 2) the salary of its faculty members; 3) the percentage of its faculty members who are full time; 4) the percentage of its faculty with the highest degrees in their fields; 5) the overall student/faculty ratio; and 6) class size. Student selectivity, or how different students pick different schools according to different criteria, is measured by the percentage of students who graduated in the top 10% of their high school class and by the average SAT/ACT scores of the entering class. Other variables included in the valuation are yearly retention rates, graduation rates, school reputation according to personal rankings by deans, presidents, and provosts, the college’s acceptance rate, and its yield (the percentage of accepted students who matriculate)4,5. This approach focuses on the inputs and reputation of an institution but gives little emphasis to output measures among students. The only output measures used are graduation and retention rates, and these variables provide no information about the knowledge and benefits actually gained by students. Measures of the resources an institution uses also fail as reliable variables because the uses and applications of these resources can vary in degree. Similarly, college acceptance and matriculation rates provide no information about what is gained through the education6. Reputation, being a subjective matter, is measured in an unreliable way.

J. J. Arias and Douglas Walker [12] conducted a controlled study that found statistically significant evidence that small class size has a positive impact on student performance. Several previous studies indicated that there was little to no correlation between the two, but Arias and Walker controlled for more variables than those contradicting studies did: all sample sections were taught by the same instructor in order to control for teaching pedagogy and differences in lecture material. Arias and Walker also controlled for other factors that could have confounded the results of the earlier, contradictory studies. The only major change among samples was the class size. Over two semesters, the study analyzed four samples, two large (a maximum of 89 students) and two small (a maximum of 25 students), in the introductory business course “Economics and Society” at Georgia College and State University. All sections (one large and one small in each semester) were scheduled back-to-back and on the same days of the week. Using the testing data from each section in each semester, the variances were found to be statistically equal7. The next step was to test the differences in means across sections, and Arias and Walker could not reject the null hypothesis of a larger mean score in smaller classes, while controlling for student inputs such as GPA and SAT scores. Although this study suffers from some statistical limitations, its findings enable us to consider class size as a contributing factor in the amount of value an education adds to a student, independently of other underlying factors such as a student’s shyness, a teacher’s personality, student motivation, attentiveness, and attendance.

Another approach to measuring value added focuses on the analysis of student surveys designed to assess factors that are believed to influence the value-added provided by an institution of higher education. For example, the National Survey of Student Engagement (NSSE) focuses on evaluating the quantity of educational processes that have been scientifically shown to be good methods of education. The NSSE uses self-reported student data on factors such as how many hours a week are spent on school work, how many papers are written per semester, whether interaction with professors outside of class time occurs regularly, and whether and to what degree extracurricular activities are pursued8. Although this approach encompasses multidimensional profiles of institutions and students, it suffers from the inherent flaws of self-report measurements and fails to consider objective learning output measures.

Schlee, Harich, Kiesler, and Curren [14] examined differences in undergraduate business students’ self-perceptions according to their majors, levels of self-enhancement in self-perception, and enhanced perceptions of students of their own major. Students were asked to what extent various characteristics described them, as well as to what extent those characteristics described students of other majors. In the sample of 428 students from several universities, there was no statistical difference among the results of the different universities, and so the data were pooled. Statistical inferences indicate that self-perception bias does exist. Students perceive students of other majors to have certain abilities differing from their own, even if students of that major do not necessarily report them in their own self-evaluations. These conclusions give us some insight into the use of surveys and the limitations of using “first-person data” as explanatory variables in such value-added studies. (All schools sampled were from the same area in the western United States, so the results are subject to sample selection bias.)

A major recurring issue in these models is the lack of available student output data. In response to this problem, the Educational Testing Service (ETS) has created a variety of tests to assess students’ general knowledge of their field and their ability to apply the methods, techniques, and knowledge they have gained. Every four or five years, each test is reevaluated through a curriculum review survey to ensure that it encompasses the major educational points in a field. For example, the ETS Major Field Test in Business (MFT-B) tests students in the following areas: Economics, Management, Quantitative Business Analysis, Marketing, Legal and Social Environment, Information Systems, and International Issues9. Multiple choice questions cover these core topics, and an institution’s test scores are compared to those of other institutions, giving participating institutions and students alike a sense of how well they perform; the strengths and weaknesses of an institution’s curriculum and practices can be identified and monitored. The ETS measures the reliability of its measurement instruments using the Kuder-Richardson Formula 20, with a reported reliability coefficient of 0.89 for the MFT-B test. According to Ebel and Frisbie [15] , a reliability coefficient above 0.65 allows conclusions to be drawn about groups, while a reliability coefficient above 0.85 allows conclusions to be drawn about individual students10,11. Thus, the ETS Major Field Test is a reliable measure of knowledge gained in business related areas.
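To make the reliability coefficient concrete, the following minimal Python sketch computes the Kuder-Richardson Formula 20 for a matrix of right/wrong item responses. The function name `kr20`, the use of the population variance of total scores, and the toy response matrix are assumptions made for illustration; they are not ETS data or ETS code.

```python
import statistics


def kr20(responses):
    """Kuder-Richardson Formula 20 for binary (0/1) item responses.

    responses: list of rows, one per student, each a list of 0/1 item scores.
    Assumption: the variance of total scores is the population variance.
    """
    n_students = len(responses)
    n_items = len(responses[0])
    # Total score per student and the variance of those totals.
    totals = [sum(row) for row in responses]
    total_variance = statistics.pvariance(totals)
    # Sum over items of p * q, where p is the share answering correctly.
    sum_pq = 0.0
    for j in range(n_items):
        p = sum(row[j] for row in responses) / n_students
        sum_pq += p * (1 - p)
    return (n_items / (n_items - 1)) * (1 - sum_pq / total_variance)


# Hypothetical toy data: 4 students, 3 items (not real test results).
toy_responses = [
    [1, 1, 1],
    [1, 1, 0],
    [1, 0, 0],
    [0, 0, 0],
]
print(kr20(toy_responses))  # 0.75
```

A coefficient near 1 indicates that the items rank students consistently; the thresholds of 0.65 and 0.85 quoted above would be applied to a value computed this way.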

P. Bycio and J. S. Allen [18] examined the external validity (differences in populations and differences in settings) of the ETS Major Field Test in Business. After running several predictive analyses using different combinations of variables, they found that the factors that most strongly influence MFT-B scores are business core GPA, SAT scores, and (self-reported) motivation scores. This is consistent with treating the MFT-B score as a measure of intellect and knowledge, as it correlates with other generally accepted measures of intellect and knowledge (SAT and GPA scores). However, they pointed out a possible flaw in the value-added measuring capabilities of the MFT-B. The third variable included, the self-reported survey of student motivational scales, had a significant effect on the test scores of students at a specific institution. Since motivation factors are not uniform across schools, a major flaw of the ETS Major Field Test in Business as a measure of the value added by a business education is the potential for artificially enhanced scores. Despite such limitations, several studies have found it to be a relatively reliable measure of the knowledge a student gains during his or her time at a given higher education institution [19] - [21] . It provides a measure of value-added learning as a component of output, which should be the key constituent in measuring the overall value added by an institution of higher education.

3. The Model Specification and Analysis

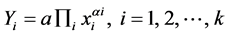

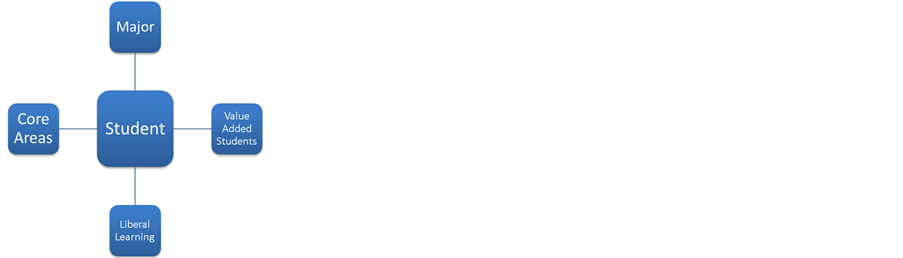

Following [18] , this study also uses statistical predictive modeling based on the Cobb-Douglas production function. The model specification fitted to the individual responses is

$$y_i = a \prod_{j=1}^{k} x_{ij}^{\alpha_j} \tag{1}$$

where $y_i$ represents the i-th student’s score on the ETS examination, $x_i = (x_{i1}, \ldots, x_{ik})$ is the vector with the values of k characteristics of student i, $\alpha_j$ represents the impact of the j-th characteristic, $\prod$ represents the multiplication of all variables, and a is a constant. The schematic presentation of the model can be portrayed as below:

Data for this study were collected from a four-year college in the northeastern region of the United States. The School of Business at that institution offers majors in Accounting, Business Administration (with five specializations), and Economics. All business students must take 32 course units to graduate, of which 16 are liberal learning courses and 16 are business courses, including core courses and courses in the major. Most students (over 75%) choose a major when they apply for admission to the college. A total of 415 graduating students (202 students in 2008 and 213 students in 2012) took the ETS exam at the end of their senior year. The score on the exit exam is treated as a proxy for the final product of teaching and learning over four academic years. Summary statistics for both groups of students are presented in Tables 1 and 2.

As Tables 1 and 2 indicate, students in the two cohorts present similar SAT, GPA, and ETS scores. To transform equation (1) into the traditional linear regression model, we take the logarithm of both sides of the equation and add a random error term $\varepsilon_i$, which gives

$$\ln y_i = \ln a + \sum_{j=1}^{k} \alpha_j \ln x_{ij} + \varepsilon_i \tag{2}$$

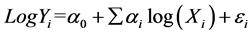

A survey of the literature on student performance (as explained in Section 2) suggests that the factors that may influence student results in senior exit exams include intellectual capability, the quality of education in the school, and other non-observable factors. For intellectual capability we used the two main SAT scores: SAT-Math and SAT-Verbal. For the quality of education we used two GPA measures: GPA in the major subject and overall GPA. Thus, SAT-Verbal and SAT-Math are proxies for the students’ intellectual capabilities, whereas GPA in the business major measures the value added by the business program and overall GPA measures the overall value added by the four years of education. Denoting a student’s SAT-Math, SAT-Verbal, GPA-Business, and GPA-overall scores by SAT-M, SAT-V, GPA-B, and GPA-G, respectively, we obtain the basic predictive equation

$$\ln(\text{ETS}_i) = \ln a + \alpha_1 \ln(\text{SAT-M}_i) + \alpha_2 \ln(\text{SAT-V}_i) + \alpha_3 \ln(\text{GPA-B}_i) + \alpha_4 \ln(\text{GPA-G}_i) + \varepsilon_i \tag{3}$$
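As an illustration of how a log-log specification of this kind can be estimated, the sketch below fits ordinary least squares to log-transformed data using only the Python standard library. Everything in it is an assumption for illustration: the helper names, the synthetic noise-free data, and the "true" exponents are invented, and it does not reproduce the paper's robust error estimation or its results.

```python
import math
import random


def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x


def fit_log_linear(y, columns):
    """OLS of ln(y) on an intercept and ln(x_j).

    Returns [ln a, alpha_1, ..., alpha_k] via the normal equations.
    """
    rows = [[1.0] + [math.log(col[i]) for col in columns] for i in range(len(y))]
    ln_y = [math.log(v) for v in y]
    k = len(rows[0])
    xtx = [[sum(r[p] * r[q] for r in rows) for q in range(k)] for p in range(k)]
    xty = [sum(r[p] * ly for r, ly in zip(rows, ln_y)) for p in range(k)]
    return solve_linear_system(xtx, xty)


# Synthetic, noise-free data generated from a Cobb-Douglas form.
# All numbers are hypothetical and chosen only to show that the
# log transformation recovers the exponents.
random.seed(0)
n = 50
sat_m = [random.uniform(500, 800) for _ in range(n)]
sat_v = [random.uniform(500, 800) for _ in range(n)]
gpa_b = [random.uniform(2.0, 4.0) for _ in range(n)]
gpa_g = [random.uniform(2.0, 4.0) for _ in range(n)]
true_a = 20.0
true_alpha = [0.20, 0.10, 0.30, 0.05]
ets = [
    true_a * m ** true_alpha[0] * v ** true_alpha[1]
    * b ** true_alpha[2] * g ** true_alpha[3]
    for m, v, b, g in zip(sat_m, sat_v, gpa_b, gpa_g)
]

coef = fit_log_linear(ets, [sat_m, sat_v, gpa_b, gpa_g])
print("estimated a:", math.exp(coef[0]))
print("estimated exponents:", coef[1:])
```

Because the synthetic data contain no error term, the fitted exponents match the invented ones up to floating-point error; with real student data the estimates would carry sampling error, and a statistics package would normally be used to obtain standard errors.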

We estimated equation (3) using a robust error estimation methodology; the estimated results for the year 2008 are reported in Table 3. We also estimated the model using a standardized regression methodology. The four variables explain about 48% of the variability of the log ETS scores of the graduating seniors. We also report the standardized betas to measure the relative importance of each explanatory variable. Only three variables (SAT-Math, SAT-Verbal, and GPA in business) are statistically significant. The standardized betas indicate that GPA in business is the most important variable affecting the ETS score. Using the stepwise regression procedure, we found that GPA-Business accounts for about 35% of the variability of the ETS score in 2008 and about 29% of the variability in 2012. It is interesting to note that overall GPA is not statistically significant in either year.
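For readers unfamiliar with standardized betas, the following hedged Python sketch shows the usual conversion: an unstandardized slope is rescaled by the ratio of the sample standard deviations of the regressor and the outcome. The function names and the five data points are hypothetical, and the single-regressor case is used only to keep the example short; the paper's tables come from a four-variable model.

```python
import statistics


def ols_slope(x, y):
    """Slope from a simple (one-regressor) least-squares fit of y on x."""
    mean_x, mean_y = statistics.mean(x), statistics.mean(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    return sxy / sxx


def standardized_beta(slope, x, y):
    """Rescale a slope so it is measured in standard-deviation units."""
    return slope * statistics.stdev(x) / statistics.stdev(y)


# Hypothetical log-GPA and log-ETS values, for illustration only.
ln_gpa = [1.10, 1.16, 1.22, 1.28, 1.34]
ln_ets = [5.01, 5.04, 5.05, 5.09, 5.10]

slope = ols_slope(ln_gpa, ln_ets)
print("unstandardized slope:", slope)
print("standardized beta:", standardized_beta(slope, ln_gpa, ln_ets))
```

Because every standardized beta is expressed in the same units, the coefficients of variables measured on different scales (SAT points versus GPA points) can be compared directly, which is how the relative importance of GPA in business is judged above.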

The estimation results for the year 2012 are reported in Table 4. The statistical results are somewhat different from those for 2008. First, the contribution of the business GPA is not as large as in 2008, although it is still the most important variable. Second, in contrast to 2008, overall GPA and GPA in non-business courses affect the ETS scores positively, though not significantly.

We tested for structural change between these two years by computing the Chow test statistic for both Model 1 and Model 2. The numerical value of this statistic is 2.02 (p = 0.0671) for Model 1 and 2.02 (p = 0.0749) for Model 2. Since the p-values exceed 5% in both cases, we infer that there is no significant structural change between the two years and that we can therefore combine both groups of students to measure the factors that affect students’ performance in the ETS exam. The regression results for the combined sample are reported in Table 5.
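The Chow statistic compares the residual sum of squares (RSS) of the pooled regression with the combined RSS of the two separate yearly regressions. The Python sketch below shows that calculation under the textbook formula, assuming k counts every estimated coefficient including the intercept. The RSS inputs are invented placeholders, since the paper does not report them; only the cohort sizes (202 and 213) come from the text.

```python
def chow_f_statistic(rss_pooled, rss_1, rss_2, n_1, n_2, k):
    """Chow test F statistic for a structural break between two groups.

    rss_pooled: RSS from one regression on the combined sample.
    rss_1, rss_2: RSS from separate regressions on each group.
    n_1, n_2: group sample sizes.
    k: number of estimated coefficients per regression (with intercept).
    Under the null of no break, the statistic follows F(k, n_1 + n_2 - 2k).
    """
    rss_separate = rss_1 + rss_2
    numerator = (rss_pooled - rss_separate) / k
    denominator = rss_separate / (n_1 + n_2 - 2 * k)
    return numerator / denominator


# Hypothetical RSS values for illustration; sample sizes are the two cohorts.
f_stat = chow_f_statistic(
    rss_pooled=10.0, rss_1=4.0, rss_2=5.0, n_1=202, n_2=213, k=5
)
print(f_stat)
```

A small statistic means that forcing both cohorts to share one set of coefficients costs little in fit, which is the justification for pooling the 2008 and 2012 samples.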

Table 1. Summary Statistics for the year 2008.

Table 2. Summary Statistics for the year 2012.

Table 3. Predictors of ETS Scores 2008.

(p-values in parentheses).

Table 4. Predictors of ETS Scores 2012.

(p-values in parentheses).

Table 5. Predictors of ETS Scores (2008 & 2012).

(p-values in parentheses).

In the pooled cross-section and time-series results reported in Table 5, we find that GPA in the major is the most significant variable in explaining the variability of the ETS scores. This is evident from the magnitude of its standardized betas, 0.568 and 0.459. We also find that SAT scores are highly significant, whereas overall GPA and GPA in non-business courses are not statistically significant. The regression model explains about 44% of the variability of the ETS scores, and the F-statistics suggest that the model is adequate to measure the relevance of the explanatory variables. We also find that a 1% increase in the School of Business GPA leads to an approximately 0.30% increase in the ETS score. Similarly, a 1% increase in SAT-Math leads to a 0.163% increase in the ETS score. On the other hand, a 1% increase in non-business GPA leads to a 0.06% decline in the ETS score.
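The percentage interpretations above follow from the log-log form of the model, in which each coefficient is an elasticity. The short Python sketch below, with a hypothetical helper name, computes the exact percentage change in the outcome implied by a given elasticity and shows that for a 1% change it is very close to the coefficient itself. The coefficients passed in are the ones quoted in the text.

```python
def pct_change_in_outcome(elasticity, pct_change_in_input):
    """Exact percent change in y when x changes by a given percent,
    for a model of the form y = a * x ** elasticity (others held fixed)."""
    return ((1 + pct_change_in_input / 100) ** elasticity - 1) * 100


# Coefficients quoted in the text: business GPA, SAT-Math, non-business GPA.
for name, elasticity in [("GPA-Business", 0.30),
                         ("SAT-Math", 0.163),
                         ("GPA-other", -0.06)]:
    effect = pct_change_in_outcome(elasticity, 1.0)
    print(f"{name}: 1% increase -> {effect:.3f}% change in ETS score")
```

For larger changes the approximation loosens: a 10% increase in business GPA with an elasticity of 0.30 implies somewhat less than a 3% increase in the ETS score, because the relationship is multiplicative rather than additive.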

4. Discussion and Conclusion

The focal point of this empirical study was to determine the extent to which a business school curriculum affects student learning from a value-added perspective. However, the findings of this study may not be generalizable to other universities, since it is limited to a single school setting. In addition, students in our study have relatively high average SAT scores (for example, 1226 for accounting majors and 1225 for finance majors). Therefore, it is possible that the results may not hold at institutions whose students have lower average SAT scores. Moreover, class sizes in this study range from 22 to 32 students, and there is evidence that smaller class sizes lead to higher student performance. Finally, it is possible that not all relevant explanatory variables were included in this study.

Despite such limitations, the findings of this study could be useful for an effective learning process in business schools. As an increasing number of traditional universities expand their course offerings, assessment of student learning outcomes, particularly at AACSB accredited business schools, has become an important means of comparing the effect of the quality and quantity of courses delivered on student performance. As stated earlier, we do not claim that these findings are all-inclusive. However, the findings of this study could assist educators in identifying students who are likely to underperform in the overall learning outcome process and in providing an opportunity for early intervention. That is, students with a low GPA in business school courses could be counseled well before they take the senior ETS exam, and academic advisers could develop appropriate academic plans for such students.

References

- Green, J.J., Stone, C.C. and Zegeye, A. (2012) The Major Field Test in Business: A Pretend Solution to the Real Problem of Assurance of Learning Assessment. Department of Economics. Ball State University, 9. http://econfac.iweb.bsu.edu/research/workingpapers/bsuecwp201201green.pdf

- Durden, G.C. and Ellis, L.V. (1995) The Effects of Attendance on Student Learning in Principles of Economics. American Economic Review, 85, 343-346.

- Ely, D.P. and Hittle, L. (1990) The Impact of Performance in Managerial Economics and Basic Finance Course. Journal of Financial Education, 8, 59-61.

- Anderson, G. and Benjamin, D. (1994) The Determinants of Success in University Introductory Economics Courses. Journal of Economic Education, 25, 99-118. http://dx.doi.org/10.1080/00220485.1994.10844820

- Ekpenyong, B. (2000) Empirical Analysis of the Relationship between Students’ Attributes and Performance: A Case Study of the University of Ibadan (Nigeria) MBA Programme. Journal of Financial Management & Analysis, 13, 54-63.

- Cheung, L.W. and Kan, C.N. (2002) Evaluation of Factors Related to Student Performance in a Distance-Learning Business Communication Course. Journal of Education for Business, 77, 257-259. http://dx.doi.org/10.1080/08832320209599674

- Ehrenberg, R.G., Brewer, D., Gamoran, A. and Willms, J.D. (2001) Class Size and Student Achievement. Psychological Science in the Public Interest, 2, 1-30. http://dx.doi.org/10.1111/1529-1006.003

- Haemmerlie, F.M., Robinson, D.A. and Carmen, R.C. (1991) Type A Personality Traits and Adjustment to College. Journal of College Student Development, 32, 81-82.

- Cunha, J.M. and Miller, T. (2012) Measuring Value-Added in Higher Education. HCM Strategists, 4-5. http://www.hcmstrategists.com/contextforsuccess/papers/CUNHA_MILLER_PAPER.pdf Didia, D. and Hasnat, B. (1998) The Determinants of Performance in Finance Courses. Financial Practice and Education, 8, 102-107.

- Bennett, D.C. (2001) Assessing Quality in Higher Education. Liberal Education. Association of American Colleges and Universities, 87. http://www.aacu.org/liberaleducation/le-sp01/le-sp01bennett2.cfm

- (2012) Best Undergraduate Business Programs Rankings. Education: Colleges. U.S. News, May 2012. http://colleges.usnews.rankingsandreviews.com/best-colleges/rankings/business-overall/data

- Arias, J.J. and Walker, D.M. (2004) Additional Evidence on the Relationship between Class Size and Student Performance. Journal of Economic Education, 35, 311-329. http://dx.doi.org/10.3200/JECE.35.4.311-329

- NSSE: About NSSE. National Survey of Student Engagement. Indiana University Bloomington, n.d. Web. http://nsse.iub.edu/html/about.cfm

- Schlee, R.P., Harich, K.R., Kiesler, T. and Curren, M.T. (2007) Perception Bias among Undergraduate Business Students by Major. Journal of Education for Business, 82, 169-177. http://dx.doi.org/10.3200/JOEB.82.3.169-177

- Ebel, R.L. and Frisbie, D.A. (1991) Essentials of Educational Measurement. 5th Edition, Prentice-Hall, Englewood Cliffs.

- (2011) Find out How to Prove- and Improve- the Effectiveness of Your Business Program with the ETS Major Field Tests. Educational Testing Service. http://www.ets.org/Media/Tests/MFT/pdf/mft_testdesc_business_4cmf.pdf

- Black, H.T. and Duhon, D.L. (2003) Evaluating and Improving Student Achievement in Business Programs: The Effective Use of Standardized Assessment Tests. Journal of Education for Business, 79, 90-98. http://www.tandfonline.com/doi/pdf/10.1080/08832320309599095

- Bycio, P. and Allen, J.S. (2007) Factors Associated With Performance on the Educational Testing Service (ETS) Major Field Achievement Test in Business (MFT-B). Journal of Education for Business, 82, 196-201. http://dx.doi.org/10.3200/JOEB.82.4.196-201

- Biddle, B.J. and Berliner, D.C. (2002) Small Class Size and Its Effects. Educational Leadership, 59, 12-23.

- Blatchford, P. (2005) A Multi-Method Approach to the Study of School Class Size Differences. International Journal of Social Research Methodology, 8, 195-205. http://dx.doi.org/10.1080/13645570500154675

- Blaylock, C. and Lacewell, S. (2006) Do Prerequisites Measure Success in a Basic Finance Course? A Quantitative Investigation. Proceedings of the Academy of Educational Leadership, 11, 39-43.

NOTES

*An initial draft of this paper was presented at the Annual Conference of the Eastern Economic Association held in New York, NY, May 9-11, 2013. Not to be quoted. The authors are grateful to Jacklyn Dejohn for her research assistance (in overall editorial work and especially in Section 2), and to Joao Neves for his incisive comments and suggestions.

1Cunha, Jesse M., and Trey Miller. “Measuring Value-Added in Higher Education.” HCM Strategists, Sept. 2012. Pages 4, 5. Web. http://www.hcmstrategists.com/contextforsuccess/papers/CUNHA_MILLER_PAPER.pdf

2Cunha, Miller. Pages 4, 5.

3Bennett, Douglas C. “Assessing Quality in Higher Education.” Liberal Education. Association of American Colleges and Universities, Apr. 2001. Volume 87, Number 2. Web. http://www.aacu.org/liberaleducation/le-sp01/le-sp01bennett2.cfm [10] .

4Bennett.

5Best Undergraduate Business Programs Rankings. Education: Colleges. U.S. News, May 2012. Web. http://colleges.usnews.rankingsandreviews.com/best-colleges/rankings/business-overall/data [11] .

6Bennett.

7Arias, J.J., Walker, D.M. (2004). Additional Evidence on the Relationship between Class Size and Student Performance. Journal of Economic Education, 35(4): 311-329. [12] .

8NSSE: About NSSE. National Survey of Student Engagement. Indiana University Bloomington, n.d. Web. http://nsse.iub.edu/html/about.cfm [13] .

9Find out How to Prove- and Improve- the Effectiveness of Your Business Program with the ETS Major Field Tests. Educational Testing Service, 2011. Web. http://www.ets.org/Media/Tests/MFT/pdf/mft_testdesc_business_4cmf.pdf [16] .

10H. Tyrone Black & David L. Duhon (2003) [17] : Evaluating and Improving Student Achievement in Business Programs: The Effective Use of Standardized Assessment Tests, Journal of Education for Business, 79, 2, 90-98. Page 92. Web. http://www.tandfonline.com/doi/pdf/10.1080/08832320309599095

11Ebel, R. L., & Frisbie, D. A. (1991). Essentials of educational measurement (5th ed.). Englewood Cliffs, NJ: Prentice-Hall.