World Journal of Engineering and Technology

Vol.04 No.03(2016), Article ID:72368,7 pages

10.4236/wjet.2016.43D028

Dynamic Programming to Identification Problems

Nina N. Subbotina1, Evgeniy A. Krupennikov2

1Krasovskii Institute of Mathematics and Mechanics, Ekaterinburg, Russia

2Ural Federal University, Ekaterinburg, Russia

Received: June 11, 2016; Accepted: October 22, 2016; Published: October 29, 2016

ABSTRACT

An identification problem is considered in which inaccurate measurements of the dynamics on a time interval are given. The model has the form of ordinary differential equations that are linear with respect to the unknown parameters. A new approach to solving the identification problem is presented in the framework of optimal control theory. A numerical algorithm based on the dynamic programming method is suggested to identify the unknown parameters. Results of simulations are presented.

Keywords:

Nonlinear System, Optimal Control, Identification, Discrepancy, Dynamic Programming

1. Introduction

We consider mathematical models described by ordinary differential equations that are linear with respect to unknown constant parameters. Inaccurate measurements of the basic trajectory of the model are given, with known bounds on the admissible small errors.

The study of identification problems has a long and rich history; see, for example, [1] [2]. Nevertheless, these problems remain relevant.

In this paper a new approach to solving them is suggested. The identification problems are reduced to auxiliary optimal control problems in which the unknown parameters take the place of controls. Integral discrepancy cost functionals with a small regularization parameter are used. It is shown that applying dynamic programming to these optimal control problems provides approximations of the solution of the identification problem.

See [3] [4] for a comparison with other closely related approaches to the considered problems.
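The reduction described in this section can be pictured with a generic sketch. The notation below is assumed for illustration only and is not taken from the paper: the model is written as linear in a control u that stands in for the unknown parameters, and the cost combines a discrepancy term d, whose exact form is left open here, with a regularization term weighted by a small parameter α. The precise system and functional used by the authors are the ones stated in Section 3.1.

```latex
% Assumed notation, not taken from the paper:
%   x(t)        state vector
%   u(t)        control standing in for the unknown parameters
%   G(x, t)     matrix multiplying the parameters
%   y^\delta(t) measurement with error level \delta
%   \alpha      small regularization parameter
%   d(., .)     placeholder for the discrepancy integrand
\begin{align*}
  \dot{x}(t) &= G\bigl(x(t), t\bigr)\, u(t), \qquad t \in [t_0, T], \\
  J_\alpha\bigl(u(\cdot)\bigr) &= \int_{t_0}^{T}
      \Bigl[\, d\bigl(x(t), y^\delta(t)\bigr)
             + \frac{\alpha^2}{2}\,\|u(t)\|^2 \Bigr]\, dt .
\end{align*}
```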

2. Statement

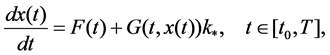

We consider a mathematical model of the form

(1)


where  is the state vector,  ,

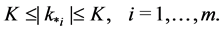

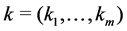

,  is the vector of unknown parameters satisfying the restrictions

(2)


Let the symbol  denote the Euclidean norm of the vector .

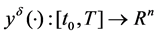

It is assumed that a measurement  of a realized (basic) solution  of Equation (1) is known, and

(3)


We consider the problem assuming that the elements  of the  matrix  are twice continuously differentiable functions in . The coordinates  of the measurement  are twice continuously differentiable functions in , too. The coordinates  of the vector-function

We assume also that the following conditions are satisfied

are true.

Here

The identification problem is to construct parameters

where

3. Solution

3.1. An Auxiliary Optimal Control Problem

Let us introduce the following auxiliary optimal control problem for the system

where

for a large constant

Admissible controls are all measurable functions

Here

Note 1. A solution

which can be considered as an approximation of the solution of the identification problem (1), (2).

3.2. Necessary Optimality Conditions: The Hamiltonian

We recall the necessary optimality conditions for problem (6), (7), (8) in terms of the Hamiltonian system [5] [6].

It is known that the Hamiltonian

where

It is not difficult to get

where

Here the vector-column

3.3. The Hamiltonian System

The necessary optimality conditions can be expressed in Hamiltonian form. An optimal trajectory

and the boundary conditions

where symbols

Parameters

We introduce the last important assumption.

where

Note 2. Using definition (10), one can check that the constant K satisfying assumption

where

Here

If

and the differential inclusions (11) transform into the ODEs.

Let us introduce the discrepancies

and the boundary conditions

where

3.4. Main Result: Dynamic Programming

Using the schemes of proof for similar results in papers [8] [9] [10], we have proved the following assertion.

Theorem 1 Let assumptions

It follows from Theorem 1 that the average values

4. Numerical Example

A series of numerical experiments implementing the suggested method has been carried out. As an example, a simple mechanical model is considered.

This simplified model describes a vertical rocket launch after engine burnout. The dynamics are described as

where

A function

The suggested method is applied to solve the identification problem for

We introduce new variables

where

We put

The corresponding Hamiltonian system (16) for problem (21), (8) has the form

with initial conditions

The solutions were obtained numerically. Figure 1 and Figure 2 show the graphs of functions

Figure 1. k(t) graph for δ = 5; k(α, δ) = 0.375.

Figure 2. k(t) graph for δ = 2; k(α, δ) = 0.325.
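The kind of experiment described in this section can be imitated with a short, self-contained sketch. Everything in it is an assumption made for illustration: the drag model dv/dt = -g - k v|v|, the names `simulate_velocity`, `add_smooth_error` and `estimate_drag`, and all numerical values are hypothetical and are not taken from the paper. The estimator is also not the Hamiltonian-system algorithm of Section 3; a plain regularized least-squares fit is substituted for it, only to show how a parameter that enters the dynamics linearly can be recovered from a smooth measurement whose error is bounded by δ.

```python
import math

GRAVITY = 9.81  # gravitational acceleration, m/s^2 (assumed value)


def simulate_velocity(k, v0, dt=1e-3, steps=1000):
    """Integrate the assumed model dv/dt = -GRAVITY - k*v*|v| with classical RK4."""
    def rhs(v):
        return -GRAVITY - k * v * abs(v)

    vs = [v0]
    v = v0
    for _ in range(steps):
        k1 = rhs(v)
        k2 = rhs(v + 0.5 * dt * k1)
        k3 = rhs(v + 0.5 * dt * k2)
        k4 = rhs(v + dt * k3)
        v += dt * (k1 + 2.0 * k2 + 2.0 * k3 + k4) / 6.0
        vs.append(v)
    return vs


def add_smooth_error(vs, delta, dt=1e-3, omega=5.0):
    """Perturb the samples by a smooth error whose magnitude never exceeds delta."""
    return [v + delta * math.sin(omega * i * dt) for i, v in enumerate(vs)]


def estimate_drag(meas, dt=1e-3, alpha=1e-3):
    """Regularized least-squares fit of k in dv/dt + GRAVITY = k*phi(v), phi(v) = -v*|v|."""
    num = 0.0
    den = alpha ** 2  # regularization term keeps the quotient well defined
    for i in range(1, len(meas) - 1):
        dv = (meas[i + 1] - meas[i - 1]) / (2.0 * dt)  # central difference
        phi = -meas[i] * abs(meas[i])
        num += phi * (dv + GRAVITY)
        den += phi * phi
    return num / den


true_k = 0.01  # hypothetical drag coefficient
measurement = add_smooth_error(simulate_velocity(true_k, v0=50.0), delta=0.01)
k_hat = estimate_drag(measurement)
print(f"true k = {true_k}, estimated k = {k_hat:.5f}")
```

Because the parameter enters linearly, the fit reduces to a single quotient. The measurement has to be differentiated numerically, so a smooth error is used here, in line with the smoothness assumed for the measurement in Section 2.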

Acknowledgements

This work was supported by the Russian Foundation for Basic Research (projects no. 14-01-00168 and 14-01-00486) and by the Ural Branch of the Russian Academy of Sciences (project No. 15-16-1-11).

Cite this paper

Subbotina, N.N. and Krupennikov, E.A. (2016) Dynamic Programming to Identification Problems. World Journal of Engineering and Technology, 4, 228-234. http://dx.doi.org/10.4236/wjet.2016.43D028

References

- 1. Billings, S.A. (1980) Identification of Nonlinear Systems - A Survey. IEE Proceedings D - Control Theory and Applications, 127, 272-285. http://dx.doi.org/10.1049/ip-d.1980.0047

- 2. Nelles, O. (2001) Nonlinear System Identification: From Classical Approaches to Neural Networks and Fuzzy Models. Springer, New York. http://dx.doi.org/10.1007/978-3-662-04323-3

- 3. Kryazhimskiy, A.V. and Osipov, Yu.S. (1983) Modelling of a Control in a Dynamic System. Engrg. Cybernetics, 21, 38-47.

- 4. Osipov, Yu.S. and Kryazhimskiy, A.V. (1995) Inverse Problems for Ordinary Differential Equations: Dynamical Solutions. Gordon and Breach, London.

- 5. Pontryagin, L.S., Boltyanskij, V.G., Gamkrelidze, R.V. and Mishchenko, E.F. (1962) The Mathematical Theory of Optimal Processes. Interscience Publishers, a Division of John Wiley and Sons, Inc., New York and London.

- 6. Krasovskii, N.N. and Subbotin, A.I. (1977) Game Theoretical Control Problem. Springer-Verlag, New York.

- 7. Clarke, F.H. (1983) Optimization and Nonsmooth Analysis. John Wiley and Sons, New York.

- 8. Subbotina, N.N., Kolpakova, E.A., Tokmantsev, T.B. and Shagalova, L.G. (2013) The Method of Characteristics to Hamilton-Jacobi-Bellman Equations (Metod Harakteristik Dlja Uravnenija Gamil’tona-Jakobi-Bellmana) (in Russian). Ekaterinburg: RIO UrO RAN.

- 9. Subbotina, N.N., Tokmantsev, N.B. and Krupennikov, E.A. (2015) On the Solution of Inverse Problems of Dynamics of Linearly Controlled Systems by the Negative Discrepancy Method. Optimal Control, Collected Papers. In Commemoration of the 105th Anniversary of Academician Lev Semenovich Pontryagin, Tr. Mat. Inst. Steklova, 291, MAIK Nauka/Interperiodica, Moscow.

- 10. Subbotina, N.N. and Tokmantsev, N.B. (2015) A Study of the Stability of Solutions to Inverse Problems of Dynamics of Control Systems under Perturbations of Initial Data. Proceedings of the Steklov Institute of Mathematics, 291, 173-189. http://dx.doi.org/10.1134/s0081543815090126