Journal of High Energy Physics, Gravitation and Cosmology

Vol.02 No.04(2016), Article ID:70019,6 pages

10.4236/jhepgc.2016.24044

Using a Multiverse Version of Penrose Cyclic Conformal Cosmology to Obtain Ergodic Mixing Averaging of Cosmological Information Transfer to Fix H Bar (Planck’s Constant) in Each New Universe Created during Recycling of Universes Due to CCC, Multiverse Style

Andrew Walcott Beckwith

Physics Department, College of Physics, Chongqing University Huxi Campus, Chongqing, China

Copyright © 2016 by author and Scientific Research Publishing Inc.

This work is licensed under the Creative Commons Attribution International License (CC BY 4.0).

http://creativecommons.org/licenses/by/4.0/

Received: June 24, 2016; Accepted: August 21, 2016; Published: August 24, 2016

ABSTRACT

We use a generalization of Penrose’s cyclic conformal cosmology (CCC) to argue for ergodic mixing of cosmological “information” from cycle to cycle. While there would be a causal discontinuity, in the sense that individual “particles” break down from cycle to cycle, informational “bits” would still be transferred (analogous to setting the seed of a pseudo-random number generator in Fortran): the “information” collected from N contributing universes is mixed via a modified CCC so as to create a uniform value of H bar (Planck’s constant) from cycle to cycle. Moreover, this would do away with the “baby universes” Darwinian hypothesis, set up by string theorists who postulate that up to 10^1000 or so universes would be created, with only, say, 10^10 or so surviving (most dying off) because of an “improperly” set variance in the values of H bar (Planck’s constant). That is, the laws of physics would not differ from universe to universe.

Keywords:

Multiverse, Darwinian Evolution, Planck’s Constant, Baby Universes

1. Introduction

We refer the reader to an ergodic mixing procedure [1] - [3], as outlined by the author in [4], which provides a consistent mixing of “information” from the evolution to the death of universes, within what is known as Penrose cyclic conformal cosmology [5]. As a bonus, we also refer to the supposition of Alireza Sepehri and Ahmed Farag Ali in [6] concerning the formation of wormholes for relic gravitons, as a way to augment the use of [1] - [4].

2. A Brief Review as to the Use of the Material Given by Penrose, [5] Consistent with [4]

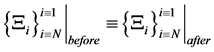

The key to the use of information and of the meta structure is to use partition functions for the meta structure. Our idea is to use what is called a partition function [7] [8] for a universe, in order to

. (1)


However, there is non-uniqueness of information put into each partition function . Furthermore Hawking radiation from the black holes is collated via a strange attractor collection in the mega universe structure to form a new big bang for each of the N universes represented by

. Furthermore Hawking radiation from the black holes is collated via a strange attractor collection in the mega universe structure to form a new big bang for each of the N universes represented by . Verification of this mega structure compression and expansion of information with a non-uniqueness of information placed in each of the N universes favors ergodic mixing treatments of initial values for each of N universe. How to tie in this energy expression, as in Equation (1) will be to look at the formation of a nontrivial gravitational measure as a new big bang for each of the N universes as by

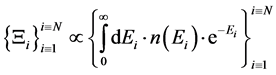

. Verification of this mega structure compression and expansion of information with a non-uniqueness of information placed in each of the N universes favors ergodic mixing treatments of initial values for each of N universe. How to tie in this energy expression, as in Equation (1) will be to look at the formation of a nontrivial gravitational measure as a new big bang for each of the N universes as by  the density of states at a given energy Ei for a partition function. As is given in [4] we have

the density of states at a given energy Ei for a partition function. As is given in [4] we have

. (2)


Each E_i identified with Equation (2) above is taken with the iteration over the N universes [1]. The following then holds, from [1].

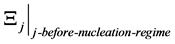

Claim 1:

. (3)


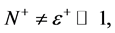

Here there are N universes, with each partition function, for j = 1 to N, being that of a universe just before the blend into the RHS of Equation (3) above for our present universe. Also, each of the independent universes is constructed by the absorption of one to ten million black holes taking in energy; see [1]. Furthermore, the main point is similar to what was done in terms of general ergodic mixing.

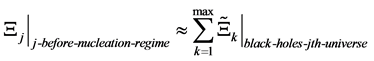

Claim 2:

. (4)


Claim 1 and Claim 2 set out a protocol by which a multidimensional representation of black hole physics enables continual mixing of spacetime, largely as a way to avoid invoking the Anthropic principle to select a preferred set of initial conditions. How can a graviton with a wavelength 10^−4 the size of the universe interact spatially with a Kerr black hole? Embedding the black hole in a multiverse setting may be the only way out.

Claim 1 is particularly important, since it ties an averaging procedure for information to the cycle-by-cycle rebooting of the universe in cyclic conformal cosmology. This should ensure that there is a thorough mixing of information, as regards the cosmological constants, in each cycle.
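The averaging idea can be illustrated with a toy numerical sketch. This is only an assumption-laden illustration, not the construction of [1] or [4]: each contributing universe is assigned a single hypothetical scalar "information" value drawn from a Gaussian, and the "mixed" value handed to the next cycle is taken to be the plain arithmetic mean over N contributors. The function names, the Gaussian, and its parameters are all hypothetical.

```python
import random
import statistics

def mixed_value(n_universes, rng):
    """Arithmetic mean of a hypothetical per-universe quantity.

    Each contributor's value is drawn from a Gaussian with mean 1.0 and
    standard deviation 0.2 (arbitrary units; purely an assumption).
    """
    samples = [rng.gauss(1.0, 0.2) for _ in range(n_universes)]
    return sum(samples) / n_universes

def spread_of_mixed_value(n_universes, n_cycles=200, seed=0):
    """Standard deviation of the mixed value across repeated cycles."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    values = [mixed_value(n_universes, rng) for _ in range(n_cycles)]
    return statistics.pstdev(values)

spread_small = spread_of_mixed_value(10)    # few contributing universes
spread_large = spread_of_mixed_value(1000)  # many contributing universes
print(spread_small, spread_large)
```

In this toy model the cycle-to-cycle spread of the mixed value shrinks roughly as 1/sqrt(N), which is the sense in which averaging over many contributors would push a quantity toward a uniform value.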

Claim 3 is our new one, and it is important. We assume that for each partition function, identified by Z, with F being the Helmholtz free energy, we have, by [1] [8] [9]

(5)


Here, we make the following identification: the partition function Z is related to Equation (2), and the partition function of the universe is mixed according to Equation (3). The last part of Equation (5) is a direct result of the Ng infinite quantum statistics hypothesis [9], and leads to an averaged-out value for Equation (5) due to Equation (3). We close with the last part of this argument.
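As a rough numerical companion, the sketch below uses the standard textbook relation F = -kT ln Z between the Helmholtz free energy and a partition function. Whether Equation (5) has exactly this form is an assumption; the toy energy spectra, the temperature, and the choice to mix by a plain arithmetic mean of the Z values are hypothetical and chosen only for illustration.

```python
import math

def partition_function(energies, kT):
    """Z = sum_i exp(-E_i / kT) for a discrete, non-degenerate spectrum."""
    return sum(math.exp(-e / kT) for e in energies)

def helmholtz_free_energy(z, kT):
    """Standard relation F = -kT ln Z."""
    return -kT * math.log(z)

kT = 1.0  # hypothetical temperature, in the same units as the energies

# Hypothetical toy spectra, one per contributing universe (assumption).
spectra = [[0.0, 1.0], [0.0, 0.5, 2.0], [0.0, 1.5]]

z_values = [partition_function(s, kT) for s in spectra]
z_mixed = sum(z_values) / len(z_values)  # plain average of the Z values

f_values = [helmholtz_free_energy(z, kT) for z in z_values]
f_mixed = helmholtz_free_energy(z_mixed, kT)
print(f_values, f_mixed)
```

Because -ln Z is monotonic in Z, the free energy of the averaged partition function always lies between the smallest and largest of the individual free energies, so mixing cannot produce a value outside the range of its contributors.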

3. Conclusion, Using the Alireza Sepehri, Ahmed Farag Ali Supposition of Reference [6] as to the Formation of Wormholes for Relic Gravitons to Full Effect

In our document, we have labored over the use of initial conditions that may allow a Tokamak to generate gravitational waves, and gravitons, of a frequency commensurate with the relic graviton frequency of an initial universe setting, in terms of consequences measurable today. To do so, we once again restate what was said earlier.

Quote:

Here, we make the following identification: the partition function Z is related to Equation (2), and the partition function of the universe is mixed according to Equation (3).

End of quote.

Having said this, how do we come up with a protocol for doing this in the early universe? A worthwhile suggestion, which incorporates both the early-universe modification of the Heisenberg uncertainty principle [6] [10] and [7] [11] and the possible avoidance of the something-from-nothing conundrum that has bedeviled cosmologists ever since the Guth Big Bang concept, is to examine what reference [6] has to offer.

That is, the seminal idea in [6] is to use wormholes. A wormhole that can do this has been brought up by L. Crowell in [12] as a bridge between universes.

We wager that appropriate use of [6] may enable the use of this wormhole idea and avoid the hotly contested conundrum of “something from nothing”. Note that our idea can be stated as saying that gravitons may act as information carriers from a prior universe to our own. To do this, we refer back to a preliminary analogy with Seth Lloyd’s paper on the universe as a quantum computer. We begin with the formula given by Seth Lloyd [13] in 2001 for the number of operations the “Universe” can “compute” during its evolution. To begin with, we use the formula

. (6)


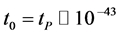
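For orientation, the order of magnitude of Lloyd's operation count can be checked numerically. The sketch below uses Lloyd's well-known rough estimate, number of operations ~ ρ c^5 t^4 / ħ, which may differ in form from Equation (6) as used here. The density and age are round illustrative values (assumptions), and the function name is hypothetical.

```python
import math

HBAR = 1.054571817e-34      # reduced Planck constant, J s
C = 2.99792458e8            # speed of light, m/s
SECONDS_PER_YEAR = 3.156e7  # approximate

def lloyd_operations(density, age_seconds):
    """Rough operation count rho * c^5 * t^4 / hbar (dimensionless)."""
    return density * C**5 * age_seconds**4 / HBAR

rho = 1e-27                    # kg/m^3, rough critical density (assumed)
age = 1e10 * SECONDS_PER_YEAR  # about ten billion years, in seconds
n_ops = lloyd_operations(rho, age)
log10_ops = math.log10(n_ops)
print(f"log10(number of operations) ~ {log10_ops:.1f}")
```

With these inputs the count comes out within an order of magnitude of 10^120, the figure usually quoted from [13].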

We assume that t1 = final time of physical evolution, whereas  seconds and that we can set an energy input via assuming in early universe conditions that  and

. (7)


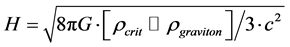

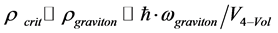

Furthermore, if we use the assumption that the temperature is close to  Kelvin initially, and if we have an initial flux of gravitons through a wormhole as given in reference [13]

and

. (8)


Then

That is, the idea given in [6], together with energy fluctuations, may enable the transfer of information for the starting configuration of our universe, not through a singular big bang but perhaps through a nonsingular start to the universe as given by [14].

Having said that, we also refer the reader to what the author did in reference [4]. In order to have a fully homogeneous start to the universe, the author in [4] generalized the Penrose supposition of a cyclic universe to a multiverse, with repeated ergodic mixing of initial components. The idea is that ergodic mixing through the multiple universes, averaged out together, removes the need for the “Darwinian” approach in which only a few universes survive after being recycled. i.e. this ergodic mixing is a way to ensure that if one has the same Planck’s constant,

In doing so, we lay the groundwork for a possibly full investigation of what was brought up by Corda in [15], as well as for answering the suppositions given by Dyson in [16]. We also lend further support to the research findings given in [17], possibly in an early universe configuration.

What is our final takeaway from this research? It is as given in the abstract.

Quote:

Moreover, this would do away with the “baby universes” Darwinian hypothesis, set up by string theorists who postulate that up to 10^1000 or so universes would be created, with only, say, 10^10 or so surviving (most dying off) because of an “improperly” set variance in the values of H bar (Planck’s constant).

End of quote.

Susskind in [18] is the foremost proponent of the Darwinian baby Universe picture, one which we state, due to the above, is not necessary.

Acknowledgements

This work is supported in part by National Natural Science Foundation of China grant No. 11375279.

Cite this paper

Beckwith, A.W. (2016) Using a Multiverse Version of Penrose Cyclic Conformal Cosmology to Obtain Ergodic Mixing Averaging of Cosmological Information Transfer to Fix H Bar (Planck’s Constant) in Each New Universe Created during Recycling of Universes Due to CCC, Multiverse Style. Journal of High Energy Physics, Gravitation and Cosmology, 2, 506-511. http://dx.doi.org/10.4236/jhepgc.2016.24044

References

- 1. Reichl, L. (1980) A Modern Course in Statistical Physics. University of Texas Press, Austin.

- 2. Birkhoff, G.D. (1931) Proof of the Ergodic Theorem. Proceedings of the National Academy of Sciences of the United States of America, 17, 656-660. http://dx.doi.org/10.1073/pnas.17.2.656

- 3. Birkhoff, G.D. (1942) What Is the Ergodic Theorem? American Mathematical Monthly, 49, 222-226. http://dx.doi.org/10.2307/2303229

- 4. Beckwith, A. (2014) Analyzing Black Hole Super-Radiance Emission of Particles/Energy from a Black Hole as a Gedanken Experiment to Get Bounds on the Mass of a Graviton. Advances in High Energy Physics, 2014, Article ID: 230713. http://www.hindawi.com/journals/ahep/2014/230713/ http://dx.doi.org/10.1155/2014/230713

- 5. Penrose, R. (2012) Cycles of Time, an Extraordinary New View of the Universe. Vintage Books, a Division of Random House Incorporated, New York.

- 6. Sepehri, A. and Ali, A.F. (2016) Birth and Growth of Nonlinear Massive Gravity and It’s Transition to Nonlinear Electrodynamics in a System of Mp-Branes. http://arxiv.org/pdf/1602.06210.pdf

- 7. Shankar, R. (1994) Principles of Quantum Mechanics. 2nd Edition, Springer Verlag, Heidelberg. http://dx.doi.org/10.1007/978-1-4757-0576-8

- 8. Shankar, R. (2014) Fundamentals of Physics, Mechanics, Relativity, and Thermodynamics. Yale University Press, New Haven.

- 9. Ng, Y.J. (2008) Spacetime Foam: From Entropy and Holography to Infinite Statistics and Nonlocality. Entropy, 10, 441-461. http://dx.doi.org/10.3390/e10040441

- 10. Beckwith, A. (2016) Gedanken Experiment for Refining the Unruh Metric Tensor Uncertainty Principle via Schwarzschild Geometry and Planckian Space-Time with Initial Nonzero Entropy and Applying the Riemannian-Penrose Inequality and Initial Kinetic Energy for a Lower Bound to Graviton Mass (Massive Gravity). Journal of High Energy Physics, Gravitation and Cosmology, 2, 106-124. http://dx.doi.org/10.4236/jhepgc.2016.21012

- 11. Beckwith, A. (2016) Gedanken Experiment for Delineating the Regime for the Start of Quantum Effects, and Their End, Using Turok’s Perfect Bounce Criteria and Radii of a Bounce Maintaining Quantum Effects, as Delineated by Haggard and Rovelli. Journal of High Energy Physics, Gravitation and Cosmology, 2, 287-292. http://dx.doi.org/10.4236/jhepgc.2016.23024

- 12. Crowell, L. (2005) Quantum Fluctuations of Spacetime. World Scientific Series in Contemporary Chemical Physics Vol. 25, Singapore.

- 13. Lloyd, S. (2002) Computational Capacity of the Universe. Physical Review Letters, 88, 237901. http://arxiv.org/abs/quant-ph/0110141 http://dx.doi.org/10.1103/PhysRevLett.88.237901

- 14. Turok, N. (2015) A Perfect Bounce. http://www.researchgate.net/publication/282580937_A_Perfect_Bounce

- 15. Corda, C. (2009) Interferometric Detection of Gravitational Waves: The Definitive Test for General Relativity. International Journal of Modern Physics D, 18, 2275-2282. http://arxiv.org/abs/0905.2502 http://dx.doi.org/10.1142/s0218271809015904

- 16. Dyson, F. (2013) Is a Graviton Detectable? International Journal of Modern Physics A, 28, 1330041. http://dx.doi.org/10.1142/S0217751X1330041X

- 17. Abbott, B.P., et al. and LIGO Scientific Collaboration and Virgo Collaboration (2016) Observation of Gravitational Waves from a Binary Black Hole Merger. Physical Review Letters, 116, Article ID: 061102. https://physics.aps.org/featured-article-pdf/10.1103/PhysRevLett.116.061102

- 18. Susskind, L. (2005) The Cosmic Landscape: String Theory and the Illusion of Intelligent Design. Little, Brown.