Open Access Library Journal

Vol.02 No.11(2015), Article ID:68857,4 pages

10.4236/oalib.1102067

Application of Neural Network to Medicine

Bahman Mashood1, Greg Milbank2

1San Francisco, CA, USA

2Praxis Technical Group Inc., Nanaimo, BC, Canada

Copyright © 2015 by authors and OALib.

This work is licensed under the Creative Commons Attribution International License (CC BY).

http://creativecommons.org/licenses/by/4.0/

Received 24 October 2015; accepted 8 November 2015; published 13 November 2015

ABSTRACT

In this article we introduce certain applications of the theory of neural networks to medicine. In particular, suppose that for a given disease D, belonging to the category of immune deficiency diseases or cancers, there are a number of drugs, each with a partial remedial effect on D. We formulate a cocktail consisting of a mixture of these drugs with optimal remedial effect on D.

Keywords:

Disease, Cocktail, Optimization, Neural Network, Back Propagation, Trials, Data

Subject Areas: Drugs & Devices

1. Introduction

Many problems in industry involve the optimization of a complicated function of several variables, usually subject to a set of constraints. The complexity of the function and of the given constraints makes it almost impossible to use deterministic methods to solve the optimization problem, so most often we have to approximate the solutions. The approximating methods are usually very diverse and particular to each case. Recent advances in the theory of neural networks provide us with a completely new approach. This approach is more comprehensive and can be applied to a wide range of problems at the same time. In the preliminary section we introduce the neural network methods that are based on the works of J. Hopfield, Cohen and Grossberg. These results can be found in Section 4 of [1] and Section 14 of [2]. We use a generalized version of these methods to find the optimum points for some given problems. The results in this article are based on joint work with Greg Milbank of Praxis Technical Group. Many of our products use neural networks of some sort. Our experience shows that by choosing appropriate initial data and weights we are able to approximate the stability points quickly and efficiently. In [3], we introduce the extension of the Cohen and Grossberg theorem to a larger class of dynamical systems. The appearance of a new generation of supercomputers will give neural networks a much more vital role in industry, intelligent machines and robotics.

2. On the Structure and Application of Neural Networks

Neural networks are based on associative memory. We give a content to neural network and we get an address or identification back. Most of the classic neural networks have input nodes and output nodes. In other words every neural networks is associated with two integers m and n. Where the inputs are vectors in  and outputs are vectors in

and outputs are vectors in . Neural networks can also consist of deterministic process like linear programming. They can consist of complicated combination of other neural networks. There are two kind of neural networks. Neural networks with learning abilities and neural networks without learning abilities. The simplest neural networks with learning abilities are perceptrons. A given perceptron with input vectors in

. Neural networks can also consist of deterministic process like linear programming. They can consist of complicated combination of other neural networks. There are two kind of neural networks. Neural networks with learning abilities and neural networks without learning abilities. The simplest neural networks with learning abilities are perceptrons. A given perceptron with input vectors in  and output vectors in

and output vectors in ,

,

is associated with threshold vector  and

and  matrix

matrix . The matrix W is called matrix of synaptical values. It plays an important role as we will see. The relation between output vector

. The matrix W is called matrix of synaptical values. It plays an important role as we will see. The relation between output vector  and input vector

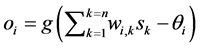

and input vector  is given by

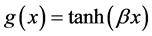

is given by , with g a logistic function usually

, with g a logistic function usually

given as  with

with  This neural network is trained using enough number of corresponding patterns until synaptical values stabilized. Then the perceptron is able to identify the unknown patterns in term of the patterns that have been used to train the neural network. For more details about this subject see for example (Section-5) [1] . The neural network called back propagation is an extended version of simple perceptron. It has similar structure as simple perceptron. But it has one or more layers of neurons called hidden layers. It has very powerful ability to recognize unknown patterns and has more learning capacities. The only problem with this neural network is that the synaptical values do not always converge. There are more advanced versions of back propagation neural network called recurrent neural network and temporal neural network. They have more diverse architect and can perform time series, games, forecasting and travelling salesman problem. For more information on this topic see (Section-6) [1] . Neural networks without learning mechanism are often used for optimizations. The results of D. Hopfield, Cohen and Grossberg, see (Section-14) [2] and (Section-4) [1] , on special category of dynamical systems provide us with neural networks that can solve optimization problems. The input and output to these neural networks are vectors in

This neural network is trained using enough number of corresponding patterns until synaptical values stabilized. Then the perceptron is able to identify the unknown patterns in term of the patterns that have been used to train the neural network. For more details about this subject see for example (Section-5) [1] . The neural network called back propagation is an extended version of simple perceptron. It has similar structure as simple perceptron. But it has one or more layers of neurons called hidden layers. It has very powerful ability to recognize unknown patterns and has more learning capacities. The only problem with this neural network is that the synaptical values do not always converge. There are more advanced versions of back propagation neural network called recurrent neural network and temporal neural network. They have more diverse architect and can perform time series, games, forecasting and travelling salesman problem. For more information on this topic see (Section-6) [1] . Neural networks without learning mechanism are often used for optimizations. The results of D. Hopfield, Cohen and Grossberg, see (Section-14) [2] and (Section-4) [1] , on special category of dynamical systems provide us with neural networks that can solve optimization problems. The input and output to these neural networks are vectors in  for some integer m. The input vector will be chosen randomly. The action of neural network on some vector

for some integer m. The input vector will be chosen randomly. The action of neural network on some vector  consist of inductive applications of some function

consist of inductive applications of some function  which provide us with infinite sequence

which provide us with infinite sequence , where

, where
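The two mechanisms described in this section, the perceptron relation between input and output and the inductive application of a map to an initial vector, can be illustrated with a minimal Python sketch. The logistic form of g, the parameter `beta`, the stopping tolerance and all numerical values below are assumptions chosen for illustration; they are not taken from the article.

```python
import math

def logistic(x, beta=1.0):
    """Assumed logistic function g(x) = 1 / (1 + exp(-beta * x))."""
    return 1.0 / (1.0 + math.exp(-beta * x))

def perceptron_output(W, theta, s, beta=1.0):
    """Compute o_i = g(sum_j W[i][j] * s[j] - theta[i]) for every output node i."""
    return [
        logistic(sum(w_ij * s_j for w_ij, s_j in zip(row, s)) - th, beta)
        for row, th in zip(W, theta)
    ]

def iterate(f, x0, tol=1e-9, max_steps=10_000):
    """Apply f inductively, x_{k+1} = f(x_k), until successive iterates differ by less than tol."""
    x = list(x0)
    for _ in range(max_steps):
        nxt = f(x)
        if max(abs(a - b) for a, b in zip(nxt, x)) < tol:
            return nxt
        x = nxt
    return x  # the sequence may not have converged within max_steps

# Hypothetical 2x2 synaptical matrix and threshold vector (illustrative values only).
W_sq = [[0.5, -0.3], [0.2, 0.1]]
theta_sq = [0.1, -0.2]

def f(x):
    # One step of the network: here the map is the perceptron relation itself.
    return perceptron_output(W_sq, theta_sq, x)

x_star = iterate(f, [0.0, 0.0])  # approximate stable point of the sequence
```

With these small weights the map is a contraction, so the sequence settles on a stable point regardless of the starting vector; for general weights convergence is not guaranteed, which is why `iterate` caps the number of steps.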

3. On Providing a Cocktail Consisting of Certain Drugs Having Optimum Effect

Suppose there is a certain disease D. Next assume that there are a number of drugs

We are searching for the optimal solution where the cocktail consisting of certain daily amount

to minimize

and to maximize

keeping the constraint

Besides that, we usually have to consider limits on the daily use of each of the drugs, which are expressed by the following inequalities,

The above conditions are equivalent to optimizing the function F given by

together with the set of constraints

where

Finally the above system of optimization corresponds to the following energy function,

where

We can also take a simpler approach by formulating the energy function E as follows,

where we keep the set of constraints,

The problem with this approach is that the boundary points can now be candidates for the optimum point too.
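To illustrate how a boundary point can become the optimum once the daily limits are kept as explicit constraints, here is a minimal Python sketch of projected gradient descent on a box-constrained energy. The quadratic energy, the two-drug setting, the dose limits and the step size are all hypothetical choices made for illustration; the article's own energy function is not reproduced here.

```python
def clip(v, lo, hi):
    """Project a single dose back into its allowed interval [lo, hi]."""
    return max(lo, min(hi, v))

def projected_gradient_descent(grad, x0, lower, upper, step=0.05, steps=2000):
    """Minimize an energy by gradient steps, clipping each dose to its limits after every step."""
    x = [clip(v, lo, hi) for v, lo, hi in zip(x0, lower, upper)]
    for _ in range(steps):
        g = grad(x)
        x = [clip(v - step * gv, lo, hi)
             for v, gv, lo, hi in zip(x, g, lower, upper)]
    return x

# Hypothetical energy E(x) = (x0 - 1)^2 + (x1 - 3)^2 for two drugs.
# Its unconstrained minimum is (1, 3), but each daily dose is limited to [0, 2].
def energy_grad(x):
    return [2.0 * (x[0] - 1.0), 2.0 * (x[1] - 3.0)]

doses = projected_gradient_descent(
    energy_grad, x0=[0.0, 0.0], lower=[0.0, 0.0], upper=[2.0, 2.0]
)
```

In this toy setting the first dose converges to an interior value, while the second is pushed against its upper limit, so the constrained optimum lies on the boundary of the feasible region.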

Provided we have the values,

then, using the arguments in [3], we are able to immediately calculate the vector

This process, depending on the number of available patients and the duration of the trials, might take up to two years. At this point we have to mention that, although it seems very complicated, in the future simulation on neural networks might replace human trials, which would contribute immensely to the field of medical research.

Next we feed the vectors

Finally for a given small

Next let

Now when we give a new patient the optimum cocktail, at the end of the trial we measure the actual values of

4. Conclusion

There is a set of diseases which cannot be cured using the existing set of drugs, although some of these drugs can improve the state of health of an infected person. We suggest that it is possible to make a cocktail consisting of these drugs which provides the most effective treatment for such a person. We suggest that this method can be put to trial and examined.

Cite this paper

Bahman Mashood,Greg Milbank, (2015) Application of Neural Network to Medicine. Open Access Library Journal,02,1-4. doi: 10.4236/oalib.1102067

References

- 1. Hertz, J., Krogh, A. and Palmer, R. (1991) Introduction to the Theory of Neural Computation. Addison Wesley Company, Boston.

- 2. Haykin, S. (1999) Neural Networks: A Comprehensive Foundation. 3rd Edition, Prentice Hall Inc., Upper Saddle River.

- 3. Mashood, B. and Millbank, G. (2015) Advances in Theory of Neural Network and Its Application. Journal of Global Prospect of Artificial Intelligent, Preprint.

- 4. Takefuji, Y. Neural and Parallel Processing. The Kluwer International Series in Engineering and Computer Science, SECS 164.