Journal of Computer and Communications

Vol.03 No.11(2015), Article ID:61282,7 pages

10.4236/jcc.2015.311011

Discriminant Neighborhood Structure Embedding Using Trace Ratio Criterion for Image Recognition

Jing Wang1, Fang Chen1, Quanxue Gao1,2

1School of Telecommunications Engineering, Xidian University, Xi’an, China

2State Key Laboratory of Integrated Services Networks, Xidian University, Xi’an, China

Received September 2015

ABSTRACT

Dimensionality reduction is very important in pattern recognition, machine learning, and image recognition. In this paper, we propose a novel linear dimensionality reduction technique using trace ratio criterion, namely Discriminant Neighbourhood Structure Embedding Using Trace Ratio Criterion (TR-DNSE). TR-DNSE preserves the local intrinsic geometric structure, characterizing properties of similarity and diversity within each class, and enforces the separability between different classes by maximizing the sum of the weighted distances between nearby points from different classes. Experiments on four image databases show the effectiveness of the proposed approach.

Keywords:

Dimensionality Reduction, Manifold Learning, Variability, Trace Ratio

1. Introduction

Linear discriminant analysis (LDA) has been used widely in pattern recognition, machine learning, and image recognition [1] [2]. However, methods based on LDA techniques are optimal under Gaussian assumption [3] [4] and they effectively capture only the global Euclidean structure which may impair the local geometrical structure of data [5]-[7]. Recently, many approaches have shown the importance of local geometrical structure for dimensionality reduction and image classification. One of the most popular linear approaches is neighbourhoods preserving embedding (NPE) [8]. NPE aims to discover the local structure of the data and find projection directions along which the local geometric reconstruction relationship of data can be preserved.

Motivated by NPE, many discriminant approaches have been developed to further improve the data classification accuracy [9]-[11], such as margin fisher analysis (MFA) [12], locality sensitive discriminant analysis (LSDA) [13], locally linear discriminant embedding (LLDE) [14] and discriminative locality alignment (DLA) [15]. They preserve the intrinsic geometrical structure by minimizing a quadratic function.

The local variation of data characterizes the most important modes of variability of data and is important for data representation and classification [13] [16]-[19]. By maximizing the variance, we can unfold the manifold structure of data and may preserve the geometry of data. Structure of real-world data is complex and unknown, thus a single characterization may not be sufficient to represent the underlying intrinsic structure. It indicates that none of the aforementioned approaches can detect a stable and robust intrinsic structure representation.

In this paper, we propose a novel dimensionality reduction approach, namely discriminant neighbourhood structure embedding using trace ratio criterion (TR-DNSE) which explicitly considers the global variation, local variation, and local geometry. Experiments on four image databases indicate the effectiveness of TR-DNSE.

The remainder of this paper is organized as follows: Section 2 analyzes NPE. The idea of TR-DNSE is presented in Section 3. Section 4 describes some experimental results. Section 5 offers our conclusions.

2. Problem Statements

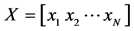

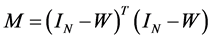

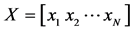

Given training data matrix , where

, where

denotes the i-th training data,

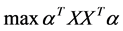

denotes the i-th training data,  is the number of training data. The objective function of NPE is [8]

is the number of training data. The objective function of NPE is [8]

(1)

(1)

where  denotes a projection matrix,

denotes a projection matrix, . The elements

. The elements  in weight matrix

in weight matrix  denote the coefficients for reconstructing

denote the coefficients for reconstructing  from its neighbours

from its neighbours , and can be calculated using [6].

, and can be calculated using [6].

The objective Function (1) can be decomposed into the following two objective functions:

(2)

(2)

(3)

(3)

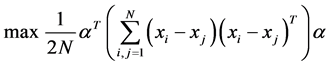

The objective Function (2) aims to preserve the intrinsic geometry of the local neighborhoods [6] [8]. Given that all data points are centered, i.e. , then the objective function (3) becomes

, then the objective function (3) becomes

(4)

(4)

Obviously, the objective function (4) which is equal to principal component analysis [2] aims to preserve the amount of variation of the values of data in the reduced space. However, it results in the following problems. It distorts the local geometry of data. As aforementioned analysis, the objective function (4) does not detect the local discriminating information among the nearby data points. Furthermore, NPE is an unsupervised approach, which does not make good use of the label information. It means that the generalization ability and stableness of NPE are not good enough.

3. Discriminant Neighborhood Structure Embedding Using Trace Ratio Criterion

3.1. The Objective Function for Dimensionality Reduction

Given training data matrix , where

, where

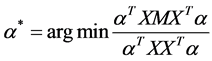

[6] [8] [18]-[20], we construct two adjacency graphs, namely geometry graph

local geometry and variation of data, respectively. The elements

Subject to two constraints: first, enforcing

From the viewpoint of statistics, if two points

where

Moreover, motivated by LDA [1], we construct two global graphs

responding Laplacian matrices are denoted as

The goal of TR-DNSE is to find projection directions such that both the amount of variation of values of data and local geometry can be preserved in the reduced space. A reasonable criterion for choosing a good map is to optimize the following four objective functions

where

The objective function (7) ensures that the weights, which reconstruct the point

3.2. Optimal Linear Mapping

Suppose

where

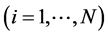

3.3. Discriminant Neighborhood Structure Embedding Using Trace Ratio Criterion

Generally, the objected function (13) can be solved by using generalized eigenvalue decomposition. Given that the low-dimensional data representation is

training data matrix

where

The above optimization problem in (14) is solved by Algorithm 1.

Explanation of Algorithm 1: With

From (16), it can be observed that

where

Algorithm 1. TR-DNSE algorithm.

In (18) we use the property

4. Experiments

In this section, we employ four widely used image databases (YALE, PIE, FERET and COLL20) to evaluate the performance of TR-DNSE and compare it with some classical approaches including Fisher face [22], MFA [12], LSDA [13], DLA [14] and LLDE [15] in the experiments. In classification stage, we use the Euclidean metric to measure the dissimilarity between two feature vectors and the nearest classifier for classification.

In our experiments, we first use the PCA to reduce the dimension of the training data by keep 80% - 97% energy of images. Likewise, we empirically determine a proper parameter

The CMU PIE database [23] contains 68 subjects with 41368 face images as a whole. We select pose-29 images as gallery that includes 24 samples per person. The training set is composed of the first 12 images per person, and the corresponding remaining images for testing. Moreover, each image is of the size

The Yale Face Database contains 165 grayscale images of 15 individuals. There are 11 images per subject. In our experiments the images are normalized to the size of

The FERET database [24] includes 1400 images of 200 individuals (each with seven images). All the images were cropped and resized to

The COIL20 image library contains 1440 gray scale images of 20 objects (72 images per object) [25]. Each image is of size

Table 1 shows the best results of six approaches on four databases. Figure 2 plot the curves of recognition accuracy vs. number of projected vectors on four databases.

TR-DNSE has the best recognition accuracy than the other approaches in all the experiments. This is probably due to the fact that TR-DNSE preserves both the local geometry and variation of data, especially the discriminating information embedded in nearby data from different classes. Different from other approaches, TR-DNSE approach has a trace ratio criterion in solution. Related work demonstrates that the projection matrix solved

Figure 1. Some sample images of one subject in the FERET database.

Table 1. Top recognition accuracy (%) of six approaches on four databases and the corresponding number of features.

Figure 2. Recognition accuracy vs. number of projection vectors on the four databases.

by using trace ratio criterion is generally better than the projection matrix solved by using generalized eigenvalue decomposition. By the trace ratio criterion, we can get an orthogonality projection matrix which helps to unfold the geometry and encode discriminating information of data. So the trace ratio criterion of TR-DNSE helps to get a better projection which results in better results.

5. Conclusion

Our method, TR-DNSE, which is proposed for dimensionality reduction, incorporates the intrinsic geometry, local variation, and global variation into the object function of dimensionality reduction. Geometry guarantees that nearby points can be mapped to a subspace in which they are still very close, which characterizes the similarity of data. Global variation and local variation characterize the most important modes of variability of patterns, and help to unfold the manifold structure of data and encode the discriminating information, especially the discriminating information embedded in nearby data from different classes. Experiments on four real-world image databases indicate the effectiveness of our TR-DNSE approach.

Cite this paper

Jing Wang,Fang Chen,Quanxue Gao, (2015) Discriminant Neighborhood Structure Embedding Using Trace Ratio Criterion for Image Recognition. Journal of Computer and Communications,03,64-70. doi: 10.4236/jcc.2015.311011

References

- 1. Fukunaga, K. (1990) Introduction to Statistical Pattern Recognition. 2nd Edition, Academic Press.

- 2. Jolliffe, T. (1986) Principal Component Analysis. Springer-Verlag, New York. http://dx.doi.org/10.1007/978-1-4757-1904-8

- 3. Strassen, V. (1969) Gaussian Elimination Is Not Optimal. Numer Math., 13, 54-356. http://dx.doi.org/10.1007/BF02165411

- 4. Tao, D., Li, X., Wu, X. and Maybank, S.J. (2007) General Tensor Discriminant Analysis and Gabor Features for Gait Recognition. IEEE Trans. Pattern Anal. Mach. Intell., 29, 1700-1714. http://dx.doi.org/10.1109/TPAMI.2007.1096

- 5. Saul, L.K. and Roweis, S.T. (2003) Think Globally, Fit Locally: Unsupervised Learning of Low Dimensional Manifolds. J. Mach. Learn. Res., 4, 119-155.

- 6. Roweis, S. and Saul, L. (2000) Nonlinear Dimensionality Reduction by Locally Linear Embedding. Science, 290, 2323-2326. http://dx.doi.org/10.1126/science.290.5500.2323

- 7. Belkin, M. and Niyogi, P. (2003) Laplacian Eigenmaps for Dimensionality Reduction and Data Representation. Neural Computation, 15, 1373-1396. http://dx.doi.org/10.1162/089976603321780317

- 8. He, X., Cai, D., Yan, S. and Zhang, H. (2005) Neighbourhood Preserving Embedding. Proc. ICCV.

- 9. Lai, Z., Wan, M., Jin, Z. and Yang, J. (2011) Sparse Two-Dimensional Local Discriminant Projections for Feature Extraction. Neurocomputing, 74, 629-637. http://dx.doi.org/10.1016/j.neucom.2010.09.010

- 10. Yu, J., Liu, D., Tao, D. and Seah, H.S. (2011) Complex Object Corresponding Construction in Two-Dimensional Animation. IEEE Trans. Image Processing, 20, 3257-3269. http://dx.doi.org/10.1109/TIP.2011.2158225

- 11. Xu, Y., Feng, G. and Zhao, Y. (2009) One Improvement to Two-Dimensional Locality Preserving Projection Method for Use with Face Recognition. Neurocomputing, 73, 245-249. http://dx.doi.org/10.1016/j.neucom.2009.09.010

- 12. Xu, D., Yan, S., Tao, D., et al. (2007) Marginal Fisher Analysis and Its Variants for Human Gait Recognition and Content-Based Image Retrieval. IEEE Transactions on Image Processing, 16, 2811-2821. http://dx.doi.org/10.1109/TIP.2007.906769

- 13. Gao, Q., Xu, H., Li, Y. and Xie, D. (2010) Two-Dimensional Supervised Local Similarity and Diversity Projection, Pattern Recognition, 43, 3359-3363. http://dx.doi.org/10.1016/j.patcog.2010.05.017

- 14. Zhang, T., Tao, D., Li, X. and Yang, J. (2009) Patch Alignment for Dimensionality Reduction. IEEE Trans. Knowl. Data Eng., 21, 1299-1313. http://dx.doi.org/10.1109/TKDE.2008.212

- 15. Li, B., Zheng, C.H. and Huang, D.S. (2008) Locally Linear Discriminant Embedding: An Efficient Method for Face Recognition. Pattern Recognition, 41, 3813-3821. http://dx.doi.org/10.1016/j.patcog.2008.05.027

- 16. Weinberger, K.Q. and Saul, L.K. (2004) Unsupervised Learning of Image Manifolds by Semi-Definite Programming. Proc. IEEE Con. Computer Vision and Pattern Recognition’2004, 988-995. http://dx.doi.org/10.1109/CVPR.2004.1315272

- 17. Weinberger, K.Q., Packer, B.D. and Saul, L.K. (2005) Nonlinear Dimensionality Reduction by Semi-Definite Programming and Kernel Matrix Factorization. Proc. the Tenth Workshop Artificial Intelligence and Statistics (AISTATS- 2005), 381-388.

- 18. Zhou, T., Tao, D. and Wu, X. (2011) Manifold Elastic Net: A Unified Framework for Sparse Dimension Reduction. Data Min. Knowl. Disc., 22, 340-371. http://dx.doi.org/10.1007/s10618-010-0182-x

- 19. Gao, Q., Zhang, H. and Liu, J. (2012) Two-Dimensional Margin, Similarity and Variation Embedding. Neurocomputing, 86, 179-183. http://dx.doi.org/10.1016/j.neucom.2012.01.023

- 20. Yan, S., Xu, D., Zhang, B., Zhang, H., Yang, Q. and Lin, S. (2007) Graph Embedding and Extensions: A General Framework for Dimensionality Reduction. IEEE Trans. Pattern Anal. Mach. Intell., 29, 40-51. http://dx.doi.org/10.1109/TPAMI.2007.250598

- 21. Nie, F., Xiang, S. and Zhang, C. (2007) Neighbourhood Minmax Projections. IJCAI-07, 993-998.

- 22. Belhumeur, P., Hespanha, P. and Kriegman, D. (1997) Eigenfaces vs. fisherfaces: Recognition Using Class Specific Linear Projection. IEE Trans. Pattern Anal. Mach. Intell., 19, 711-720. http://dx.doi.org/10.1109/34.598228

- 23. Gao, Q., Liu, J., Zhang, H., Hou, J. and Yang, X. (2012) Enhanced Fisher Discriminant Criterion for Image Recognition. Pattern Recognition, 45, 3717-3724. http://dx.doi.org/10.1016/j.patcog.2012.03.024

- 24. Phillips, P., Moon, H., Rizvi, S. and Rauss, P. (2000) The FERET Evaluation Methodology for Face-Recognition Algorithms. IEEE Trans. Pattern Anal. Mach. Intell., 22, 1090-1104. http://dx.doi.org/10.1109/34.879790

- 25. Nene, S.A., Nayar, S.K. and Murase, H. (1996) Columbia Object Image Library (COIL-20). Technical Report CUCS- 005-96.