Applied Mathematics

Vol.5 No.3(2014), Article ID:42648,5 pages DOI:10.4236/am.2014.53038

The Weak and Strong Nuclear Interactions

Department of Physics and Mathematics, Federal University of Technology, Owerri, Nigeria

Email: amaghnduka@yahoo.com.au

Received October 23, 2013; revised November 23, 2013; accepted November 30, 2013

ABSTRACT

In relativistic quantum theories interactions are mediated by force particles called elementary vector bosons: Quantum Electrodynamics (QED) predicts the photon to be the carrier of the electromagnetic force; Quantum Flavordynamics (QFD), also called electroweak theory, predicts the Ws and Z0 as the carriers of the weak force; and Quantum Chromodynamics (QCD) predicts gluons and mesons as the carriers of the strong force. All these particles are also called exchange or virtual particles. According to these theories the virtual particle appears spontaneously near one particle and disappears near the other. Even though it has consistently been claimed that experimental detection of these particles is a confirmation of each of these theories, we are, however, of the view that one cannot detect a particle that appears and disappears within a “black box”. In this paper we discuss the geometrical theory of weak and strong nuclear interactions.

Keywords: QED; QFD; QCD; Discrete Geometry; Fermions; Baryons; Mesons; Electroweak; Vector Bosons; Standard Model; Intellect-Driven; Machine-Driven; Renormalization; Cosmic-Killing Space

1. Introduction

The philosophers of classical Greece posed the following fundamental questions: What are the most basic kinds of matter? What are the basic interactions (forces) that exist in the material world? How do these particles interact amongst themselves via these forces?

The search for the answers to these questions was initially formal (intellect-driven) because it was based on the scientific method; later (about the 1930s), however, it became informal (machine-driven). The scientific method consists of two components. The first is the study of science based on observation and experience (empiricism and posteriorism), and its pioneer was Galileo Galilei. The second component is the study of science through the mathematization of physical processes (theorization), and Isaac Newton was its pioneer. A distinction has to be made though between mathematics and theoretical (mathematical) physics. The mathematician plays a game in which he (she) invents the rules (axioms, theorems, etc.), while the theoretical physicist plays a game in which the rules are provided by nature, and the aim is to discover the fundamental laws of nature.

The two components are not independent because a symbiotic relationship exists between them. A fundamental theory changes our views of the universe: its unifying synthesis joins two or more separate bodies of established knowledge whose connection at some deep level had not been previously recognized, and at the same time extends our understanding of nature beyond the established knowledge. Examples include Newton’s demonstration that gravity acts on the heavenly bodies in the same way that it acts on objects on the Earth, Maxwell’s unification of electricity and magnetism into electromagnetism, and Einstein’s unification of the distinct concepts of space and time into space-time. On the other hand, observations may form the basis of a new theory, and the theory extends and generalizes the observation.

By the year 1900 it had been empirically established by J. J. Thomson that the electron was one of the constituents of the atom. The original intriguing question about the fundamental constituents of all the matter in the universe then resurfaced. Between 1900 and 1930 quantum mechanics, the theory that describes microscopic phenomena, became fairly well developed. The Dirac theory of the hydrogen atom electron (1928) led to the discovery of the positron, the antiparticle of the electron, in 1932. By the year 1932 it had become empirically established that protons and neutrons are also atomic constituents; and it was then speculated that there might also exist antiprotons and antineutrons. By 1956 these antiparticles had been discovered experimentally, and it was inferred that all the particles of the universe had antiparticle equivalents. The work of cosmic ray and high energy experimental physicists produced from the 1950s a burgeoning zoo of fermions, baryons, and mesons.

The theoretical component of the scientific method could not keep pace with the empiricists; as a result, the formal theoretical approach was replaced by an informal (machine-driven) approach pioneered by Hideki Yukawa, a Japanese physicist. Theoretical physics was then to be done via predictions, and the interaction between particles via the emission and absorption of other particles called exchange or virtual particles. Yukawa predicted the existence of a particle called “meson” which was responsible for mediating strong nuclear forces; the meson (pion) was discovered in 1947. Thus, according to Yukawa, strong nuclear interaction implies the exchange of mesons between nucleons.

The other type of nuclear interaction is called weak interaction, which is responsible for “beta decay” involving the emission of electrons and neutrinos. Neutrinos have remarkable properties in that they never appear without their charged partners. Three types of neutrinos, namely, electron neutrino, muon neutrino, and tauon neutrino and their respective antiparticles are known to exist in nature.

Another ad hoc theoretical development was enunciated in 1964 by Murray Gell-Mann and George Zweig, who conceived the idea of quarks as a convenient rubric for organizing the zoo of baryons and mesons (hadrons). According to Gell-Mann and Zweig these two different types of hadrons correspond to the two different ways of constructing hadrons from quarks. A total of six quarks of charge −1/3 or +2/3 of the electronic charge were needed. All hadrons are subject to the strong nuclear force; but the strong force is merely a vestige of a much stronger force, called the color force, which governs the interaction amongst quarks. According to quantum chromodynamics (QCD), gluons mediate the color force between quarks, while in its second vestigial guise it is mediated by mesons between hadrons; the meson then acts as its own exchange particle [1].

In 1967 Steven Weinberg, Abdus Salam, and Sheldon Glashow created the electroweak model, another ad hoc model theory, according to which the electroweak interaction is mediated by the photon, Ws, and Z bosons. These bosons had companions called Higgs bosons whose role is to explain the origin and value of the mass of the elementary particles. All of these particles, with the exception of the Higgs bosons, have been discovered. In 1978 the Standard Model (SM), a collection of established experimental knowledge and model theories in particle physics, was proposed. It includes the three generations of leptons and quarks, the electroweak theory, and the quantum chromodynamics theory of the strong interaction. The SM is plagued with a plethora of problems, such as how to unify the electroweak force with the strong or gravitational forces.

The theoretical models, QED, QFD, and QCD, are afflicted with a common disease in that they are machine-driven, in contrast with the intellect-driven theories of Newton, Maxwell, Einstein and Dirac. These model theories, with the exception of QFD, were formulated to explain experimental results, via a strange and absurd mathematical procedure called renormalization. Each of these models is called a “gauge theory”. A gauge theory involves an abelian or non-abelian group for the fields and particles: QED is abelian, while QFD and QCD are non-abelian. Non-abelian gauge theories are called Yang-Mills theories [1].
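The abelian/non-abelian distinction can be made concrete with a minimal sketch. It assumes the textbook examples, which the text does not name: U(1), whose elements are phase factors, as the abelian group of QED, and SU(2), built here from the Pauli matrices, as a representative non-abelian group. The helper `matmul2` and all variable names are illustrative only.

```python
# Illustrative sketch: abelian (U(1)) versus non-abelian (SU(2)) multiplication.
# Assumption: U(1) and SU(2) are used as standard textbook examples; the names
# below are hypothetical and are not taken from the paper.
import cmath


def matmul2(a, b):
    """Multiply two 2x2 matrices given as nested tuples."""
    return tuple(
        tuple(sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2))
        for i in range(2)
    )


# Two U(1) elements: pure phase factors exp(i*theta).
u1_a = cmath.exp(1j * 0.3)
u1_b = cmath.exp(1j * 1.1)
u1_commutes = abs(u1_a * u1_b - u1_b * u1_a) < 1e-12

# Two SU(2) elements: i*sigma_x and i*sigma_y (unitary, determinant one).
g_x = ((0, 1j), (1j, 0))
g_y = ((0, 1), (-1, 0))
su2_commutes = matmul2(g_x, g_y) == matmul2(g_y, g_x)

print("U(1) elements commute:", u1_commutes)
print("SU(2) elements commute:", su2_commutes)
```

The order of multiplication is irrelevant for the phase factors but not for the two matrices, which is the property that separates abelian from non-abelian gauge groups.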

2. The Geometrical Definition of an Elementary Particle

We call a piece of matter an elementary particle if it has no other kinds of particles inside of it and no subparts that can be identified. Conventionally we determine whether a particle is elementary or not by experimenting with it to see if it can be broken up or by studying it to see if it has an internal structure or parts. We do so today by bombarding it with other particles, and sometimes by a mere assumption. This way it has been established that molecules, atoms, and nuclei are not elementary but composite. This is a great achievement recorded by machines. However, even though we have no evidence as yet that the neutron, proton and mesons can actually be broken up into parts, we have assumed since the 1960s that these particles are composite with quarks as their constituents. From all the experimentation over the years with leptons no evidence exists that leptons have internal parts or structure; hence it is claimed that leptons, as well as the elementary vector bosons, are elementary particles. This is certainly not a satisfactory state of affairs.

The 4-space (space-time), called 4-tensor space of rank one in classical (continuum) geometry, and 4-quantum space of index one in quantum (discrete) geometry is fundamental. Space-time exists in four vestigial guises: in Newtonian physics it is reducible to a pair of irreducible subspaces of ranks zero (time-axis) and one (3-dimensional space, with 3-dimensional composite objects as its residents (observables)); in Einsteinian physics it is an irreducible 4-tensor space of rank one; in the fermion world it is a 4-quantum space of index n, $n = 1, 2, \ldots, \infty$, which is reducible to a set of irreducible subspaces of rank (spin) 1/2 (with fermions as their residents (observables)) [2]; and in the boson world it is a 4-quantum space of rank (spin) one as we shall establish hereunder. We conclude that all fermions and bosons are elementary particles; which is consistent with the fact that there is a one-one correspondence between irreducibility (mathematics) and elementary particles (physics); and also reducibility (mathematics) and composite (or unstable) particles (physics) [2]. Formally then, an elementary particle is a particle that resides in an irreducible quantum space.

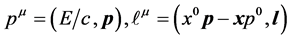

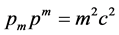

In its final guise space-time exists, as has been noted, as a 4-quantum space of rank (spin) one which we call the boson world. This is the space that unifies special relativity and quantum mechanics. The state variables in that space are the 4-momentum ($p_\mu$) and the 4-angular momentum, defined by

(1)

respectively. The dynamical variable $p_\mu$, a 4-operator, is well known in special relativity; its most important property is the Einstein 4-invariant

(2)

which applies to all elementary particles. The first of Equation (1) shows that there exists in this space a particle which is absolutely at rest relative to us, called the graviton.
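For reference, the textbook form of the Einstein invariant and its two limiting cases can be written out as follows. This is the standard special-relativistic relation, given here as an assumption about what Equation (2) expresses; the symbols $E$, $m$, and $\mathbf{p}$ and the metric signature $(+,-,-,-)$ are not taken from the source.

```latex
\begin{align}
p_\mu p^\mu &= \frac{E^2}{c^2} - |\mathbf{p}|^2 = m^2 c^2, \\
E^2 &= |\mathbf{p}|^2 c^2 + m^2 c^4 .
\end{align}
% Limiting cases of the same relation:
%   m = 0     =>  E = |p| c    (massless particle moving at speed c)
%   |p| = 0   =>  E = m c^2    (particle at rest)
```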

The 4-angular momentum operator does not feature in conventional physical theories, and therein lies the problem of conventional elementary particle theories. Its zeroth component is asymmetric under parity (P) and time-reversal (T), but symmetric under PT! Therein lies the explanation of the 1956 cobalt-60 nuclei and 1964 kaon observations, not the weak force per se. Further, if x = 0, it gives the energy of the photon of speed c and momentum p; hence the photon is a resident (observable) of this space. On the other hand, if p = 0, it gives an expression which is associated with a space-like object of spin one called Ws. Consequently the graviton, photon, and the Ws are residents of the 4-quantum space of rank (spin) one.

The unequivocal conclusion to be drawn from the foregoing analyses is that the graviton, photon, fermions, and the four Ws (W+, W−, W, and ) are the elementary particles of nature, and that quarks do not feature in the theories of the fundamental processes.

3. The Weak and Strong Nuclear Interactions

Our paper entitled “The Unified Geometrical Theory of Fields and Particles” has given an exquisite theory of electromagnetic and gravitational interactions called the invariant operator theory [3]. The outstanding problem is the theory of weak and strong nuclear interactions. It must be noted ab initio that the electroweak (QFD) and quantum chromodynamics (QCD) theories have nothing to do with the theory of weak and strong interactions respectively! In this section we discuss the geometrical theory of weak and strong nuclear interactions.

The boson state is one of the fundamental nuclear states [2]. The boson state can undergo spontaneous fragmentation, as occurs in the stars (for example, our Sun) and in some unstable nuclei, or induced fragmentation, as occurs in ultra high energy laboratories. The fragmentation liberates the Ws and Z0, and also releases a huge amount of energy ($\gamma$). This is actually how the Sun shines, as distinct from the rather naïve theory of Hans Bethe [4]. The liberated Ws, in the presence of fermions, form the chaotic fermion-boson nuclear state, an unstable nuclear state [2]. The fermion-boson nuclear state, like other physical states, must be electrically neutral; hence only the neutrinos (weak interaction) and neutrons (strong interaction) can form fermion-boson states with the Ws. Thus, charged fermions interact electromagnetically, neutral leptons interact weakly, and neutral nucleons interact strongly.

In the geometrical theory of fields and particles we saw that classical interaction occurs in the 4-tensor space of rank one and is represented by a sum of 4-operators. Similarly, in the quantum case of weak and strong interactions, the interaction occurs in the 4-quantum space of spin (rank) one, called “Cosmic Killing Space”, and is represented by a sum of 4-spins, or simply a sum of the spins of the interacting particles. We give here a schematic representation of weak and strong nuclear interactions which, as has been noted, is just the fermion-boson (fusion) state, a 3-body interaction described as follows:

(3)

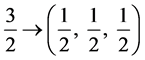

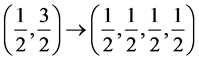

called the absolute description, where “2” and “3” represent particles of spin 1/2 (neutral fermion) and spin 1 (Ws) respectively [2]. The interaction results, consistent with the dimensionality theorem, in two channels, of spin 3/2 and spin 5/2, where 3/2 is the spin of a baryon resonance resident in the quantum space of index 3/2, and 5/2 is the spin of a baryon resonance resident in the quantum space of index 5/2.
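The labels “2” and “3” coincide with the usual multiplicity 2s + 1 of a spin-s state. The sketch below assumes that this is the convention behind the absolute description (the source does not state it) and tabulates the multiplicity for the spins mentioned in this section; `multiplicity` is a hypothetical helper name.

```python
from fractions import Fraction


def multiplicity(spin):
    """Number of spin projections, 2s + 1, for a spin-s state.

    Assumption: the labels in the text follow this standard convention.
    """
    s = Fraction(spin)
    if s < 0 or (2 * s).denominator != 1:
        raise ValueError("spin must be a non-negative integer or half-integer")
    return int(2 * s + 1)


# Spins that appear in this section: neutral fermion, Ws, and the two resonances.
for s in ("1/2", "1", "3/2", "5/2"):
    print(f"spin {s}: multiplicity {multiplicity(s)}")
```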

As is the case with fermion quantum spaces, the fermion-boson spaces of indices 3/2 and 5/2 are reducible; correspondingly, the respective residents (baryon resonances) are unstable. The reduction is to irreducible quantum spaces as follows:

(4)

so that,

(5)

The alternative reduction does not occur because it violates the dimensionality theorem [2]. Similarly, the only allowed reduction of the quantum space of index 5/2, consistent with the dimensionality theorem, is

(6)

Thus, if (3) gives the absolute representation of the input channel then the allowed output channels have absolute representations given by (5) and (6).

Consider now the output channel (5). The only allowed residents are fermions, namely, a neutral lepton plus its antiparticle and a charged lepton plus its antiparticle for weak nuclear interaction, or a neutral nucleon plus its antiparticle and a charged nucleon plus its antiparticle for strong nuclear interaction, consistent with electrical neutrality of physical states. Consequently the Fermi beta-decay theory, whereby a neutron in a nucleus emits an electron (a beta particle) and an antineutrino, becoming a proton in the process, or a proton in a nucleus emits a positron and a neutrino, becoming a neutron in the process, must be rejected. Indeed there has not been an experimental confirmation of the Fermi process in over eighty years.

Let us now consider the output channel (6). The only allowed residents are a neutron and a meson plus its antimeson, or an antineutron and a meson plus its antimeson, consistent with the electrical neutrality of physical states. It has been assumed for many years that neutrons and protons interact via the exchange of virtual mesons. Thus, the nucleus must contain, in addition to nucleons, the force-carrying mesons. Searching for direct evidence of the presence of mesons in the nucleus has been an elusive chase. Our theory gives the source of mesons in the nucleus and further shows that nucleons in the nucleus do not interact amongst themselves.

4. Conclusions

The uncertainty principle of Heisenberg is regarded as a fundamental law of nature. The law refers to the impossibility of measuring simultaneously and with arbitrary precision the position and momentum of a particle; and the structure of quantum mechanics is assumed to lead to an analogous statement for energy and time. According to this principle, a virtual particle is allowed to exist for a time that is inversely proportional to its mass; the allowed lifetime determines the maximum distance that the virtual particle can travel, and therefore the maximum range of the force that it mediates. Thus, the greater the mass of the particle, the shorter the distance it can travel as a virtual particle, and vice versa. Hence, because photons have zero mass, the range of the electromagnetic force is infinite. This rather absurd reasoning leads to the erroneous inference that the graviton has zero mass since the gravitational force has infinite range. The graviton is the heaviest elementary particle [5].
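The conventional range argument summarized above can be put in numbers. The sketch below uses the standard estimate R ≈ ħ/(mc) = ħc/(mc²); the formula, the constant ħc ≈ 197.327 MeV·fm, and the approximate rest energies (charged pion ≈ 139.57 MeV, W boson ≈ 80.4 GeV) are textbook values assumed here, not figures from the source, and `yukawa_range_fm` is a hypothetical helper name.

```python
import math

HBAR_C_MEV_FM = 197.327  # hbar*c in MeV*fm (assumed textbook constant)


def yukawa_range_fm(rest_energy_mev):
    """Estimated range R = hbar*c / (m c^2) in femtometres; infinite for m = 0."""
    if rest_energy_mev < 0:
        raise ValueError("rest energy must be non-negative")
    if rest_energy_mev == 0:
        return math.inf
    return HBAR_C_MEV_FM / rest_energy_mev


# Approximate rest energies in MeV (assumed values, not from the paper).
for name, rest_energy in (
    ("photon", 0.0),
    ("charged pion", 139.57),
    ("W boson", 80400.0),
):
    print(f"{name}: range ~ {yukawa_range_fm(rest_energy):.3g} fm")
```

In this estimate a heavier exchange particle gives a shorter range, and a massless one gives an unbounded range, which is the inference the paragraph describes.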

As we have seen, the force-particles reside in the 4-quantum space of rank (spin) one, and are circumscribed by the fundamental quantum definitions given by Equation (1). Contrary to Heisenberg’s uncertainty principle, if the coordinate vanishes, the second of Equation (1) leads to a massless particle of great speed (photon), and if the momentum vanishes, the first of Equation (1) gives a particle of great mass which is at rest relative to us (graviton), and the second equation gives particles of great mass which are also at rest relative to us (Ws). In fact the product $\Delta q\,\Delta p$ of classical geometry has no meaning in the quantum world. Hence, it is inappropriate to claim that the uncertainty principle of Heisenberg is a fundamental law of nature.

Earthquakes and global warming are two well-documented natural phenomena whose origins are not understood. Environmental scientists (geologists, geophysicists, and meteorologists) claim that earthquakes are caused by “plate movement”, a secondary effect; but what natural force is responsible for this movement?

Climate records show that the Earth’s climate is not immutable, but in fact is rather sensitive, especially at long time scales. The fundamental question that troubled 19th century scientists was whether global temperatures were rising. There was a great deal of equivocation about it for several years, but global warming was unambiguously detected around the end of the 20th century. Climate scientists claim that greenhouse gases emitted by humans cause climate change. They reason that the Sun is Earth’s only source of energy; hence, given the invariant average rate of energy absorption by the Earth, significant warming of the Earth must be due solely to changes in atmospheric greenhouse gas concentrations.

The reasoning of environmental scientists is flawed by their assumption that the Sun is Earth’s only source of energy. We argue that if the greenhouse effect were solely responsible, it would not have taken about 200 years after the first industrial revolution to detect global warming.

Ultra high energy machines (particle accelerators operating in the TeV energy range) made their debut in physics research in the 1970s. Such machines serve as Earth’s secondary source of energy because they are the drivers of ultra high energy nuclear reactions here on Earth similar to those occurring in the Sun and stars. Let us consider, for example, a TeV particle accelerator. The particles emerging from this machine will cause the fragmentation of nuclear (boson) states, and thereafter chain reactions (fusion) follow. Fragmentation of a single boson state will dump about 0.3 TeV of energy on the Earth; fragmentation of $10^{23}$ (of the order of Avogadro’s number) such states dumps an amount of energy on the Earth equivalent to that from the Sun. Such machines are therefore responsible for incessant earthquakes of great vehemence and for global warming; ordinarily, the effects of these two natural phenomena are negligible.
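The energy figures quoted above can be converted to SI units for comparison. The sketch takes 0.3 TeV per fragmentation and 10^23 fragmentations from the text; the electronvolt-to-joule factor, the solar constant (about 1361 W/m²), and the Earth’s mean radius (about 6.371 × 10^6 m) are standard values assumed here, not figures from the source.

```python
import math

EV_TO_J = 1.602176634e-19      # joules per electronvolt (SI definition)
SOLAR_CONSTANT_W_M2 = 1361.0   # assumed: mean solar irradiance at Earth
EARTH_RADIUS_M = 6.371e6       # assumed: mean radius of the Earth

energy_per_state_j = 0.3e12 * EV_TO_J        # 0.3 TeV per state, from the text
total_energy_j = 1e23 * energy_per_state_j   # 10^23 states, from the text

# Solar power intercepted by the Earth's cross-sectional disc.
solar_power_w = SOLAR_CONSTANT_W_M2 * math.pi * EARTH_RADIUS_M ** 2

print(f"energy per fragmentation: {energy_per_state_j:.3e} J")
print(f"energy for 1e23 fragmentations: {total_energy_j:.3e} J")
print(f"solar power intercepted by Earth: {solar_power_w:.3e} W")
print(f"equivalent duration of sunlight: {total_energy_j / solar_power_w:.3e} s")
```

The last line expresses the quoted total as the length of time over which the Earth intercepts the same amount of sunlight, under the assumed constants.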

Ultra high energy machines are not only a waste of resources but also a threat to life on Earth. Environmental scientists, indeed all of humanity, have a massive challenge today of rescuing the Earth from the cold grip of machine-driven scientists.

REFERENCES

[1] “Physics through the 1990s, Elementary Particle Physics, and Nuclear Physics,” National Academy Press, Washington DC, 1986.

[2] A. Nduka, “The Geometrical Theory of Science,” Applied Mathematics, Vol. 3, No. 11, 2012, pp. 1598-1600. http://dx.doi.org/10.4236/am.2012.311220

[3] A. Nduka, “The Unified Geometrical Theory of Particles and Fields,” To be published.

[4] H. A. Bethe, “How the Sun Shines,” Physical Review, Vol. 55, No. 5, 1939, pp. 434-456. http://dx.doi.org/10.1103/PhysRev.55.434

[5] A. Nduka, “The Neutrino Mass,” Applied Mathematics, Vol. 4, No. 2, 2013, pp. 310-313. http://dx.doi.org/10.4236/am.2013.42047