Open Journal of Optimization

Vol. 04, No. 03 (2015), Article ID: 60043, 30 pages

10.4236/ojop.2015.43012

A Maximum Principle for Smooth Infinite Horizon Optimal Control Problems with State Constraints and with Terminal Constraints at Infinity

Atle Seierstad

University of Oslo, Oslo, Norway

Email: atle.seierstad@econ.uio.no

Copyright © 2015 by author and Scientific Research Publishing Inc.

This work is licensed under the Creative Commons Attribution International License (CC BY).

http://creativecommons.org/licenses/by/4.0/

Received 14 May 2015; accepted 26 September 2015; published 29 September 2015

ABSTRACT

Necessary conditions for optimality are proved for smooth infinite horizon optimal control problems with unilateral state constraints (pathwise constraints) and with terminal conditions on the states at the infinite horizon. The aim of the paper is to obtain strong necessary conditions including transversality conditions at infinity, which in many cases lead to a set of candidates for optimality containing only a few elements, similar to what is the case in finite horizon problems. However, strong growth conditions are needed for the results to hold.

Keywords:

Infinite Horizon, Optimal Control, State Constraints

1. Introduction

The aim of this paper is, in a control problem with unilateral state constraints and terminal conditions at infinity, to obtain necessary conditions, with a full set of transversality conditions at infinity, which frequently make it possible to narrow down the set of candidates for optimality to only a few, or sometimes a single one. In infinite horizon problems without unilateral state constraints (pathwise constraints), with or without terminal conditions on the states at the infinite horizon, there exist various types of necessary conditions for optimality. Examples are [1] (without a transversality condition) and a number of results with certain limited types of transversality conditions, for example [2], slightly generalized in [3]. See the latter paper and [4] for several further references (see also [5]). In problems with several states, the limited types of transversality conditions mentioned are often insufficient if one wishes to avoid getting an infinite number of candidates. Under strong growth conditions, there exist necessary conditions with a full set of transversality conditions at infinity, which in many cases make it possible to narrow down the set of candidates to only a few, or sometimes a single one; see Theorem 16, p. 2441 in [5]. For nonsmooth problems with a full set of transversality conditions in the infinite horizon case, see [6]. For such problems, see also [7].

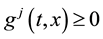

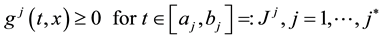

The novelty of the results in this paper is hence the establishment of necessary conditions that include a full set of transversality conditions at infinity in an infinite horizon problem with both terminal constraints at the infinite horizon and unilateral state constraints (constraints of the form

for all t). Strong growth conditions are needed for the results to hold.

For a Michel-type necessary condition in the case of unilateral state constraints, see [8].

The growth conditions used below ((11), (12), (13)) are more demanding than the conditions applied in [9] for the case of no unilateral state constraints and no terminal constraints (problems with a dominating discount). In later work, the authors use even more general conditions; see [10] (see also [11], and [12] for problems with a special structure).

The results below are of particular interest in the case where not all states are completely constrained at infinity. In the opposite case, generalizations of Halkin’s infinite horizon theorem in [1] to problems with unilateral state constraints, in which no transversality conditions appear (like Theorem 9, p. 381 in [6]), frequently yield enough information for determining one or a few candidates for optimality. When not all states are completely constrained at infinity, transversality conditions related to the terminal conditions are needed, unless one can accept the possibility of an infinite number of candidates for optimality.

In certain cases there is a danger of degeneracy of the multipliers; see the early review in [13] and [14]. We have added conditions that secure nondegeneracy of the multipliers in some such cases, in particular in the case where unilateral constraints are satisfied as equalities by the initial state (the state at time zero). See [15]-[17] for a presentation of similar conditions in the finite horizon case, as well as for a number of references for this case (see, for example, [18]-[22]).

2. The Control Problem, Necessary Conditions, and Examples

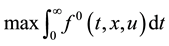

Consider the problem

(1)

(1)

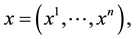

where

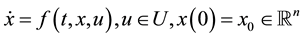

subject to

subject to

(2)

(2)

(3)

(3)

(4)

(4)

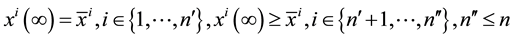

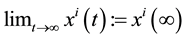

where we require that

exists for

exists for

and where

and where

Here,

Here,

and n are given natural numbers, and we allow for the case where there are no equality constraints or no inequality constraints in (4) (in which cases

and n are given natural numbers, and we allow for the case where there are no equality constraints or no inequality constraints in (4) (in which cases

and

and

so

so

and/or

and/or

are empty sets). Furthermore,

are empty sets). Furthermore,

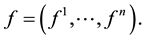

to (2)-(4). When the solution

We assume that

and that for any x,

assumptions. At various points, some strengthening of these assumptions is added.

The following definitions are needed: let

In Theorem 1, in addition to the basic assumptions, assumptions (5)-(15) below are needed. It is assumed that for all

We shall make use of some constraint qualifications, (6) and (8) below, related to

((6) holds vacuously if

Either²

The following growth conditions are also needed: For some

and there exist some positive constants

where

Assume finally that, for all j

Define

Theorem 1. (Necessary condition, infinite horizon) Assume (5)-(8), (11)-(15) and the basic smoothness assumptions. There exists a number

then

Moreover,

Finally,

Remark 1. For

In the sequel three trivial examples with rather obvious optimal controls will be presented, but to illustrate the use of the necessary conditions, we derive the form of the optimal controls from these conditions.

Example 1.

Solution:

Evidently

Consider first the case that we might have

see the expression for

the expression for

when

Remark 2. (Further non-triviality properties)

a) Replace (6) by the assumption that either

b) Assume in addition that, for any

For finite horizon normality conditions, see [23] and [24].

The main reason for including the next theorem is that it forms a basis for obtaining Theorem 1, but it has some interest of its own.

It contains necessary conditions for the case where (14) and/or (15) fail, in particular where

Theorem 2. In the situation of Theorem 1, with (5), (6), (8), (14), and (15) deleted, assume that the three conditions (25), (26), (27) below are satisfied. Then the following necessary conditions hold: for some

where

If (7) and (8) hold,

As before when

Assume, for some arbitrarily large

Let

Moreover, for any given number

As an example in which (25)-(27) hold, consider a case where

is concave in x, and where, for some positive

hand notation

where

Remark 3. For Theorem 2 to hold, we can weaken (7) and the basic assumptions on

Moreover, in the growth conditions (12) and (13), roughly speaking, the inequalities need not hold for states x that cannot possibly occur; more precisely, the conditions can be modified as follows. Define for each t,

Finally, U can be replaced by a time dependent subset

Example 2.

and

Let

argument would not work if we had replaced

stant on

possible, is impossible: Let

Example 3.

Again, assuming, for some

Remark 4. Assume in the problem (1)-(7), (11)-(15), that

negative finitely additive set functions

for

(

When for some vectors

mum condition (17) holds for

for all

and where, for some nonnegative

represented by a bounded nondecreasing right-continuous function

When, in addition, for some

3. Proofs of the Results

Proof of Theorem 2. To simplify the notation, instead of the criterion (1), we can and shall assume that

Overview of the proof. A rough outline of the proof is as follows. We are going to make a number of strong (needle-shaped) perturbations of
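The needle-shaped (strong) perturbations used in the proof can be illustrated numerically. The following is a minimal sketch and not part of the paper's argument: it assumes a hypothetical scalar system x' = f(x, u) = -x + u, a hypothetical reference control u*(t) = 0, and a needle that sets the control to a value v on [s - eps, s). It compares the actual change in x(T) with the standard first-order prediction eps * Phi(T, s) * [f(x*(s), v) - f(x*(s), u*(s))], where Phi is the transition function of the linearized equation (here Phi(T, s) = exp(-(T - s)), since the derivative of f with respect to x is -1). All names (`f`, `simulate`, `needle`, `compare`) are illustrative assumptions.

```python
import math

# Hypothetical scalar dynamics x' = f(x, u) = -x + u (illustration only, not the paper's system).
def f(x: float, u: float) -> float:
    return -x + u

def simulate(control, x0: float, T: float, n_steps: int) -> float:
    """Integrate x' = f(x, control(t)) on [0, T] with explicit Euler steps; return x(T)."""
    dt = T / n_steps
    x = x0
    for k in range(n_steps):
        x += dt * f(x, control(k * dt))
    return x

def needle(u_star, s: float, eps: float, v: float):
    """Control equal to v on the needle [s - eps, s) and to u_star elsewhere."""
    return lambda t: v if s - eps <= t < s else u_star(t)

def reference_control(t: float) -> float:
    return 0.0  # hypothetical reference control u*(t) = 0

def compare(eps: float, x0=1.0, T=3.0, s=1.0, v=2.0, n_steps=100_000):
    """Return (actual, predicted) change in x(T) caused by a needle of width eps."""
    base = simulate(reference_control, x0, T, n_steps)
    perturbed = simulate(needle(reference_control, s, eps, v), x0, T, n_steps)
    x_s = x0 * math.exp(-s)  # exact reference state at time s when u* = 0
    jump = f(x_s, v) - f(x_s, reference_control(s))  # first-order jump per unit eps at time s
    predicted = eps * math.exp(-(T - s)) * jump  # jump carried to T by the linearized flow
    return perturbed - base, predicted

if __name__ == "__main__":
    for eps in (0.1, 0.01):
        actual, predicted = compare(eps)
        print(f"eps={eps}: actual={actual:.6f}, predicted={predicted:.6f}, ratio={actual / predicted:.4f}")
```

For this linear example the exact response is v exp(-(T - s))(1 - exp(-eps)), so, up to Euler discretization error, the printed ratio approaches 1 roughly like 1 - eps/2 as eps shrinks. For nonlinear dynamics the same first-order formula holds only in the limit eps → 0, which is why such perturbations are combined with limit arguments in proofs of this kind.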

Detailed proof. To avoid certain problems connected with coinciding perturbation time points, the following construction is helpful (we then avoid coinciding perturbation time points). Let

such that for each

that

to

Let

and

where a sum over an empty set is put equal to zero. For

Let

where

The linear variations

3.1. Satisfaction of the Unilateral Constraints by Perturbed Solutions

Fix a pair

To see this, choose

For some positive

(

Now,

by (40) because

mula

is small when

Now, for

Because

Next, it is well-known that

uniformly in

for all

both for all

bine (27) and (26)). Then, by (45), for some positive

for some positive

Hence, (44) has been shown, and in particular, (see (42))

So far, we have only used the basic assumptions and the first of the three conditions on

We want to show that

So far, we have proved (44) for

Finally, let us prove (44) for

Then the right derivative

consider the subcase where

Consider next the subcase where

Thus, when

3.2. Local Controllability at Infinity

Observation 1. Define

the moment we allow the pairs in

indices on

single index, with the time points in the pairs in increasing order. Let

The following result should surprise nobody; a proof is nevertheless given in the Appendix.

Lemma 1. Assume that for

3.3. Separation Arguments That Yield the Multipliers

By optimality, for all

that for some

for some

Define

for all

finitely additive nonnegative set function

nishes on

where

Let us now choose a sequence

described for

(for some subsequence

equality holds). The cluster point

Now

Finally, let us extract an additional property. If

for (say)

3.4. Further Information on the Multipliers in Special Cases

Let us prove the results concerning the multipliers in the last three sentences of Theorem 2 in the case where

Define

Now, assume (9) and (10) (a), with

holds for this cluster point

Evidently,

When

i.e. (10) (g), see Appendix), we don’t need the assumption that

when (8) holds, in Theorem 2, we can assume

Define

(see the end of Theorem 2). For

Assume for the moment that

Can

Finally, assume that both (58) and (8) are satisfied. By contradiction assume now that

Proof of Theorem 1.

We still keep the assumption that

on

Now,

Let

and

In Theorem 1, we have written

Note that (6) implies that (58) holds, as

Proof of Remark 2. We give a proof for the case where

Proof of a)

Let

(the last term is

Proof of b) Assume by contradiction that

If

Using the vectors

Next, let

There exist

Proof of Remark 4. We construct an auxiliary problem: assume for given functions

The necessary conditions in Theorem 2 are now applied to this auxiliary system (they apply even when admissible controls are restricted as above, see the inequalities involving

where

for some constant Q, independent of t and

From now on assume

Let

cluster point of any given sequence

Let

(67) holds for all

The proof of

Using the inequality

(i.e. (67)), we get

Note that

represented by nonnegative functions

Let us finally show that

But then

4. Conclusion

The paper establishes necessary conditions for optimality in a smooth infinite horizon optimal control problem with unilateral state constraints and terminal constraints at the infinite horizon. The necessary conditions include a complete set of transversality conditions at infinity. The specific growth conditions placed upon the system in this paper can easily be modified, but strong growth conditions are in any case needed for the full set of necessary conditions to hold.

Acknowledgements

I am deeply grateful to the referees. Their detailed comments made it possible to correct omissions and improve the exposition.

Cite this paper

Atle Seierstad (2015) A Maximum Principle for Smooth Infinite Horizon Optimal Control Problems with State Constraints and with Terminal Constraints at Infinity. Open Journal of Optimization, 04, 100-130. doi: 10.4236/ojop.2015.43012

References

- 1. Halkin, H. (1974) Necessary Conditions for Optimal Control Problems with Infinite Horizons. Econometrica, 42, 267-272. http://dx.doi.org/10.2307/1911976

- 2. Michel, P. (1982) On the Transversality Condition in Infinite Horizon Problems. Econometrica, 50, 975-985. http://dx.doi.org/10.2307/1912772

- 3. Seierstad, A. and Sydsaeter, K. (2009) Conditions Implying the Vanishing of the Hamiltonian at Infinity in Optimal Control Problems. Optimization Letters, 3, 507-512. http://dx.doi.org/10.1007/s11590-009-0128-7

- 4. Aseev, S.M. and Veliov, V.M. (2011) Maximum Principles for Infinite-Horizon Optimal Control Problems with Dominating Discount. Research Report 2011-06, June, Operations Research and Control Systems, Institute of Mathematical Methods in Economics, Vienna University of Technology, Vienna.

- 5. Benveniste, L. and Scheinkman, J. (1982) Duality Theory for Dynamic Optimization Models in Economics. Journal of Economic Theory, 27, 1-19. http://dx.doi.org/10.1016/0022-0531(82)90012-6

- 6. Seierstad, A. and Sydsaeter, K. (1987) Optimal Control Theory with Economic Applications. Amsterdam, The Netherlands.

- 7. Seierstad, A. (1999) Necessary Conditions for Non-Smooth Infinite Horizon Control Problems. Journal of Optimization Theory and Applications, 103, 201-229. http://dx.doi.org/10.1023/A:1021733719020

- 8. Pereira, F.L. and Silva, G.N. (2011) A Maximum Principle for Constrained Infinite Horizon Dynamic Control Systems. Preprints of the 18th IFAC World Congress, Milano, 28 August-2 September 2011, 10207-10212.

- 9. de Oliveira, V.A. and Silva, G.N. (2009) Optimality Conditions for Infinite Horizon Control Problems with State Constraints. Nonlinear Analysis, 71, e1788-e1795.

- 10. Aseev, S.M. and Veliov, V.M. (2012) Needle Variations in Infinite-Horizon Optimal Control. Research Report 2012-4, September, Operations Research and Control Systems, Institute of Mathematical Methods in Economics, Vienna University of Technology, Vienna.

- 11. Aseev, S.M. and Kryazhimskii, A.V. (2004) The Pontryagin Maximum Principle and Transversality Conditions for a Class of Optimal Control Problems with Infinite Time Horizons. SIAM Journal on Control and Optimization, 43, 1094-1119.

- 12. Weber, T.A. (2006) An Infinite-Horizon Maximum Principle with Bounds on the Adjoint Variable. Journal of Economic Dynamics and Control, 30, 229-241. http://dx.doi.org/10.1016/j.jedc.2004.11.006

- 13. Arutyunov, A.V. and Aseev, S.M. (1997) Investigation of the Degeneracy Phenomenon of the Maximum Principle for Optimal Control with State Constraints. SIAM Journal on Control and Optimization, 35, 930-952. http://dx.doi.org/10.1137/S036301299426996X

- 14. Vinter, R.B. and Ferreira, M.M.A. (1994) When Is the Maximum Principle for State Constrained Problems Nondegenerate? Journal of Mathematical Analysis and Applications, 187, 438-467. http://dx.doi.org/10.1006/jmaa.1994.1366

- 15. Ferreira, M.M.A. and Fontes, F.A.C.C. (2004) Nondegeneracy and Normality in Necessary Conditions for Optimality: An Overview. Proceedings of the 6th Portuguese Conference on Automatic Control, CONTROLO, Faro, Portugal, 1-9 June 2004.

- 16. Vinter, R.B. (2000) Optimal Control. Birkhäuser, Boston.

- 17. Arutyunov, A.V., Karamzin, D.Y. and Pereira, F.L. (2011) The Maximum Principle for Optimal Control Problems with State Constraints by R.V. Gamkrelidze: Revisited. Journal of Optimization Theory and Applications, 149, 474-493. http://dx.doi.org/10.1007/s10957-011-9807-5

- 18. Arutyunov, A.V., Aseev, S.M. and Blagodatskikh, V.I. (1994) First Order Necessary Conditions in the Problem of Optimal Control of a Differential Inclusion with Phase Constraints. Russian Academy of Sciences Sbornik Mathematics, 79, 117-139. http://dx.doi.org/10.1070/sm1994v079n01abeh003493

- 19. Arutyunov, A.V. (2000) Optimality Conditions: Abnormal and Degenerate Problems. Kluwer Academic, Dordrecht. http://dx.doi.org/10.1007/978-94-015-9438-7

- 20. Arutyunov, A.V. and Aseev, S.M. (1995) State Constraints in Optimal Control: The Degeneracy Phenomenon. Systems & Control Letters, 26, 267-273. http://dx.doi.org/10.1016/0167-6911(95)00021-Z

- 21. Rampazzo, F. and Vinter, R.B. (1999) A Theorem on the Existence of a Neighbouring Feasible Trajectory Satisfying a State Constraint, with Application to Optimal Control. IMA Journal of Mathematical Control and Information, 16, 335-351. http://dx.doi.org/10.1093/imamci/16.4.335

- 22. Rampazzo, F. and Vinter, R.B. (2000) Degenerate Optimal Control Problems with State Constraints. SIAM Journal on Control and Optimization, 39, 989-1007. http://dx.doi.org/10.1137/S0363012998340223

- 23. Bettiol, P. and Frankowska, H. (2007) Normality of the Maximum Principle for Nonconvex Constrained Bolza Problems. Journal of Differential Equations, 243, 2565-2569. http://dx.doi.org/10.1016/j.jde.2007.05.005

- 24. Fontes, F.A.C.C. (2000) Normality in the Necessary Conditions of Optimality for Control Problems with State Constraints. Proceedings of the IASTED Conference on Control and Applications, Cancun, Mexico.

Appendix

Below, for any matrix

Lemma A. Let

where, in

Lemma B. Let

Note that

The proofs of Lemmas A and B are standard and are omitted to save space.

Let

Lemma C. Let

continuous in

that for any

Proof of Lemma C. Note that

Lemma D. Let

common Lipschitz rank

Proof of Lemma D. The proof of

for a.e. t. Dividing by d, we get

By Lebesgue’s dominated convergence theorem,

then

and then by Gronwall’s inequality,

where
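The proof of Lemma D concludes with an appeal to Gronwall's inequality, but the resulting estimate is lost in this version of the text. For the reader's convenience, one standard integral form of the inequality is recalled below; it is an assumption that this is the variant (with these hypotheses) used in the original argument.

```latex
\textbf{Gronwall's inequality (integral form).}
Let $\varphi$ be continuous on $[a,b]$, let $\beta \ge 0$ be integrable on $[a,b]$,
and let $\alpha$ be a constant. If
\[
  \varphi(t) \le \alpha + \int_a^t \beta(s)\,\varphi(s)\,ds \qquad \text{for all } t \in [a,b],
\]
then
\[
  \varphi(t) \le \alpha \exp\!\Big(\int_a^t \beta(s)\,ds\Big) \qquad \text{for all } t \in [a,b].
\]
```

In estimates of the type in Lemma D, this is typically applied with $\varphi$ a norm of the difference between two solutions, which turns an integral bound involving a Lipschitz rank into an exponential bound on that difference.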

Lemma E. In the situation of Lemma B, let

Proof of Lemma E. By Lemma D, for some term

By Lebesgue’s dominated convergence theorem, the conclusion in the lemma follows if we can prove that for each t,

where the second order term

Proof of Lemma 1.

Consider the map

for the existence of

Note that for

Observation A. On the space of continuous real-valued functions on

Note that if

Let

Proof of (10) (g) ⇒

Assume again that

Then, for some

and, for

Let

for any

Let

Consider the following auxiliary control problem on

where

Assume by contradiction both that

contradicting

The optimality of

and hence

So

From (74) and

Using

Then, by (72), (73), (76), for all

19. In case we have constraints

As

Now,

Let now

We can extend this result to problems that are nonautonomous on

NOTES

1. In that theorem, correct the inequality

2. A right Lebesgue point s of any integrable function

3.

4. For

5. Or even

6. One may consult Observation 1 below at this point.

7. Only when there is more than one perturbation

8. If

9. For simplicity, assume that

10. If U is replaced by

11. In case

12. If (8) holds only for a subset

13. Observation A in the Appendix may be consulted at this point.

14. Compare, e.g., Section 9.5 in [16].

15. As an alternative to the left continuity assumption on

16. Thus we don’t need (and often don’t have!) differentiability of

17. The growth conditions related to

18. We can again let