Applied Mathematics, 2011, 2, 914-917 doi:10.4236/am.2011.27124 Published Online July 2011 (http://www.SciRP.org/journal/am) Copyright © 2011 SciRes. AM Fourier Truncation Method for Fractional Numerical Differentiation Ailin Qian, Jianfeng Mao Department of Mathematics and Statistics, Xianning University, Xianning, China E-mail: junren751113@126.com Received February 13, 2011; revised May 31, 2011; accepted June 3, 2011 Abstract We consider an ill-posed problem-fractional numerical differentiation with a new method. We propose Fou- rier truncation method to compute fractional numerical derivatives. A Hölder-type stability estimate is ob- tained. A numerical implementation is described. Numerical examples show that the proposed method is ef- fective and stable. Keywords: Inverse Problems, Ill-Posed Problem, Fractional Numerical Differentiation, Fourier Regularization, Error Estimate 1. Introduction In this paper we shall consider the problem of stably calculating the fractional derivative of a function given in , p LR d 1d 1d x tt Dfx x t , (1.1) for . Such problem is frequently encountered in many practical contexts [1-3]. It is well known that is a formal solution of the Abel integral equa- tion. 0,1 Dfx 1 1d, . xut uxtf x xt x (1.2) That is very important in various areas of mechanics, spectroscopy, computerized tomography [2]. The process of numerical fractional differentiation is well known to be an ill-posed problem [1-3], and it has been discussed by many authors, and a large number of different solu- tion methods have been proposed. For references we refer the reader to [1-5] and references therein. Finite difference approaches for numerical differentiation have been used, for example, in [6-9]. However, these ap- proaches require knowledge of a bound of the second or third derivatives of the function under consideration that are not always available. Furthermore, there exist infi- nitely many functions that do not have bounded sec- ond derivatives at all. The same situation occurs also in numerical fractional differentiation [2]; one requires a high smoothness of the functions under consideration that does not always exist. In the present paper, as an alterna- tive way of dealing with fractional numerical differentia- tion, we introduce a new regularization method, i.e., Fourier truncation. We simply analyze the cause of ill- posedness of fractional numerical differentiation and then propose the method. The idea of Fourier truncation method is very simple and natural: since the ill-posed- ness of fractional numerical differentiation is caused by the high frequency components, we cut off them. Actu- ally, such a similar idea of solving numerical differentia- tion has occurred in [10,11]. We can easily find this fact by studying [10,11] in frequency space. However, Fou- rier truncation method is more direct, natural and simple. 2. Regularization in the Fourier Domain In this section we simply analyze the ill-posedness of fractional numerical differentiation and discuss how to stabilize the numerical derivaties. We set a function p xHR which is a Soblev space, p . Let be the Fourier transform of , i.e., 1 ˆd. 2π ix fxe x (2.1) Now we consider the -th order derivative of func- tion . Taking the Fourier transform, we have  A. L. QIAN ET AL.915 ^ˆ, fx if (2.2) i.e., 1ˆd. 2π ix fx ife (2.3) From the right hand side of (2.2) or (2.3), we know that i can be seen as an amplification factor of ˆ f . Therefore, when we consider our problem in LR 2, the exact data function ˆ f must decay rap- idly as . But in the applications the input data x can only be measured and never be exact. We assume, say that, the measured data function 2 xLR satisfies ,ff (2.4) where denotes - norm, the constant 2 L0 represents a noise level. Thus, if we try to obtain frac- tional numerical derivatives, high frequency components in the error are magnified and can destroy the solution. In this sense it is impossible to solve the problem using classical numerical methods and requires special tech- niques to be employed. In the following, we will propose our regularization strategy to deal with the ill-posed problem. However, before doing that, we impose a priori bound on the input data (this is necessary in solving ill-posed problems), i.e., , p fEp, (2.5) where is a constant, 0E denotes the norm in Soblev space p R defined by 1 22 2ˆ :1 d p p ff (2.6) Note that (2.2) or (2.3), since the ill-posedness of the problem is caused by the high frequency components, a natural way to stabilize the problem is to eliminate all high frequencies and instead consider (2.3) only for max , where max will be seen that it exists as a regularization parameter. Then we get a regularized frac- tional derivative max 1ˆ :d. 2π ix Rfxi fe (2.7) where max is the characteristic function of the interval max max , , i.e., max max max max. 1, , 0, In the following sections we will derive an error esti- mate for the approximate derivative (2.7) and discuss how to compute it numerically. 3. Error Estimate In this section, we derive a bound on the difference be- tween the derivatives (2.3) and (2.7). We assume that we have an a priori bound on the exact input data. p E (See (2.5)). The relation between any two regularized solutions (2.7) is given by the following lemma. Lemma 3.1. Suppose that we have two regularized derivatives 1 Rf x 1 and defined by (2.7) with data 2 Rf x and 2 , satisfying 12 .ff If we select 1 max , E 1p , then we get the error bound 1 () 12 . p Rf RfE (3.1) Proof. From the Parseval relation we get max max max max 2 () () 12 2 12 2 12 2 2 12 22 22 max2max 12 d d 1 Rf Rf Rf Rf iff ff ff ff Using 1 max E and12 ff , we obtain 1 12 ,. pp RfRfE p From Lemma 3.1 we see that the derivative defined by (2.7) depends continuously on the input data . Next, we will investigate the difference between the derivatives (2.3) and (2.7) with the same exact . Lemma 3.2. Let x and be the de- rivatives (2.3) and (2.7) with the same exact data Rf x , and let 1 max ,1 p Ep . Suppose that p E . Then 1 . p fRf E (3.2) Proof. As in Lemma 3.1 we start with the Parseval re- lation, and using the fact that the derivatives coincide for max max , we get Copyright © 2011 SciRes. AM  A. L. QIAN ET AL. 916 max max max max 2 2 2 2 22 2 2 22 22 2 max ˆd ˆd ˆ 1d 1 ˆˆ 1d p p p pp. fRfif f f ff Now we use the bound p E,and as before we have 1 max E which leads to the error bound 1 . p fRf E Now we are ready to formulate the main result of this section: Theorem 3.3. Suppose that x is given by (2.3) with exact data and that is given by Rf x (2.7) with measured data x . If we have a bound p E, and the measured function x satisfies ff , and if we choose 1 max E where 1p , then we get the error bound 1 2 pp fRf E. (3.3) Proof. Let be the derivative defined by (2.7) with exact data Rf x . Then by using the triangle inequality and the two previous Lemmas we get 1 2. pp fRf fRf Rf RfE From Theorem 3.3, we find that (2.7) is an approxima- tion of the exact derivative f . The approximation error depends continuously on the measurement error. Remark 3.4. In our application is usually un- known, therefore we have no the exact a priori bound E. However, if we select 1 max C C , where is a positive constant, we can also obtain 1 1 0, when 0,, p Rf Cp where the constant C depends on ,, pf . This choice is helpful in our realistic computation. 4. Numerical Implement (2.7) numerically. Given a vector containing samples from on an equidistant grid 1, nthen the unique 0 jj x trigon etric polynomial interpof om lating on the grid can be written 1 2 2 1 ˆˆ (), 2π, 2π j n ix jj n j fxfe k (4.1) where the sequence 1 2 2 ˆ n n jj f are the discrete Fourier coefficients, and we have assumed thatis even. The n pudiscrete Fourier coefficients can be comted by taking the FFT of the vector . Thus we can approximate the derivative operator (2.7s follow: ) a H RFL L ,F where is the Fourier matrix [9], and is a diago- L tri nal max corresponding to differentiation of trigono- metric interpolant, but where the frequency components with maxj are explicitly set to zero. Thus the di- agonas of l element are max max , , 0, , jj ij j i (4.2) where an are defined as in (4.1). The product of L and a vector c be computed using the Fast Fourier Transform (FFT) which leads to an efficient way to compute the derivative (2.7). When using the FFT algorithm we im- plicitly assume that the vector represents a periodic function. This is not realistic in our application, and thus we need to modify the algorithm. A discussion on how to make the function “periodic” can be found in [12]. 5. Numerical Examples For verifying the effect of the proposed algorithm, a smooth function and a non-smooth function will be tested in various cases. In the numerical experiment, we always fix 01x . For an exact data function x, its discrete noiion is sy vers f randn size f , (5.1) where 2 1 2 1 1 ,,,1,2, 1 1. Nj N jj lj j ,,fxfxxjN N ff fx fx N The function “ randn ” generates arrays of random numbers whose eare normally distributed with lements Here we discuss how to compute the regularized solution Copyright © 2011 SciRes. AM  A. L. QIAN ET AL. Copyright © 2011 SciRes. AM 917 mean 0, variance 21 , and standard deviation 1 , “(())randn sizef s an array of random s thize as “return entrie at is the same s . In our computations, we al- ways take 4097N (Ife take N = 100,513,1025,, we can also satisfactory result.)The derivative errors are measured by the weighted 2 L-norms defined as follows: in a w obta [3] A. K. Louis, “Inverse und Schlecht Gesteellte Problem,” Teubner Verlag, Wiesbaden, 1989. [4] D. A. Murio, “Automatic Numerical Differentiation by Discrete Molification,” Computers and Mathematics with Applications, Vol. 13, No. 4, 1987, pp. 381-386. doi:10.1016/0898-1221(87)90006-X 2 1 1N j N tion pa . (5.2) [5] D. A. Murio and L. Guo, “Discrete Stability Analysis of Molification Method for Numerical Differentiation,” Journal of Computational Applied Mathematics, Vol. 19, No. 6, 1990, pp. 15-25. jj x Rx eter ERf The regulariza f ram f [6] I. Daubechies, “Ten Lectures on Wavelets (CBMS-NSF Regional Conference Series in Applied Mathematics),” SIAM: Society for Industrial and Applied Mathematics, Philadelphia, 1992. max was selected ac- 4, , cording to Remark 3. i.e. 1 max c . The erro norms in r es- timates of Section 3 contain 2 LR,and the [7] J. N. Lyness, “Has Numerical Differentiation a Future?” Utilitas Mathematics, Winnipeg, 1978. numerical experiments are performed witite inter- val. However, the method for selecting max h a fin works well,also in the case where the norms are comed for a finite interval. put [8] J. Oliver, “An Algorithm for Numerical Differentiation of a Funcion of One Real Variable,” Journal of Computa- tional Applied Mathematics, Vol. 6, No. 2, 1980, pp. 145- 160. doi:10.1016/0771-050X(80)90008-X [9] T. Strom and J. N. Lyness, “On Numerical Differentia- tion,” BIT Numerical Mathematics, Vol. 15, No. 3, 1975, pp. 314-322. doi:10.1007/BF01933664 6. Acknowle The author wants to 7. References [1] J. Baumeist dgements ex er, “S press table So his thanks to the refe lution of Inverse Problems,” F. ral Equ ree for ations,” [10] D. N. Hao, “A Molification Method for Ill-Posed Prob- lems,” Numerische Mathematik, Vol. 68. No. 4, 1994, pp. 469-506. doi:10.1007/s002110050073 many valuable comments. This work is supported by Educational Commission of Hubei Province of China (Q20102804,T201009). [11] D. A. Murio, C. E. Mejia and S. Zhan, “Discrete Molifi- cation and Automatic Numerical Differentiation,” Com- puters and Mathematics Application, Vol. 35, No. 5, 1998, pp. 1-16. doi:10.1016/S0898-1221(98)00001-7 [12] L. Elden, F. Berntsson and T. Reginska, “Wavelet and Fourier Methods For Solving the Sideways Heat Equa- tion,” SIAM Journal on Scientific Computing, Vol. 21, No. 6, 2000, pp. 2187-2205. Vieweg and Sohn, Braunschweig, 1987. [2] R. Gorenflo and S. Vessela, “Abel Integ Springer-Verlag, Berlin, Heidelberg, New York, 1991.

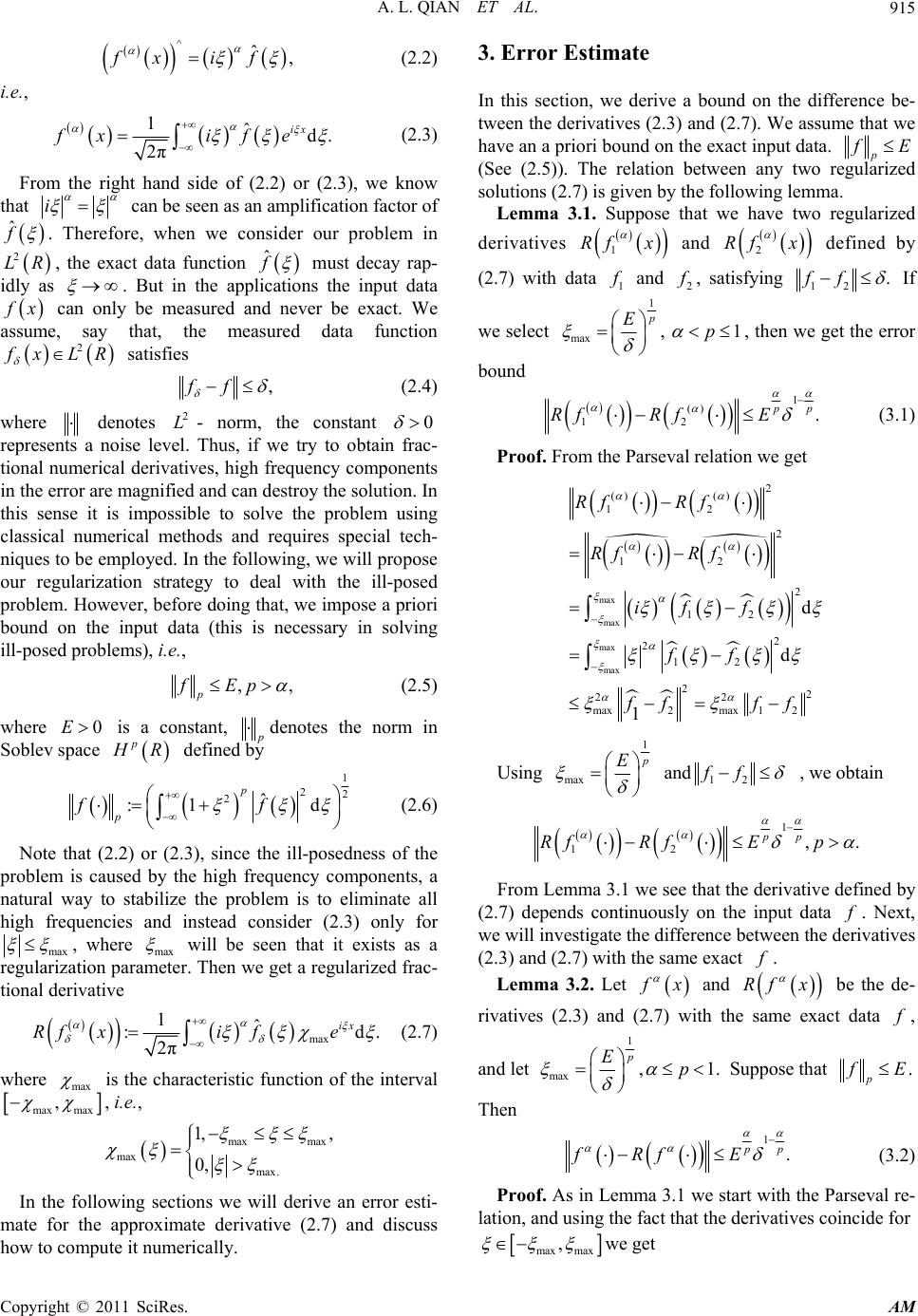

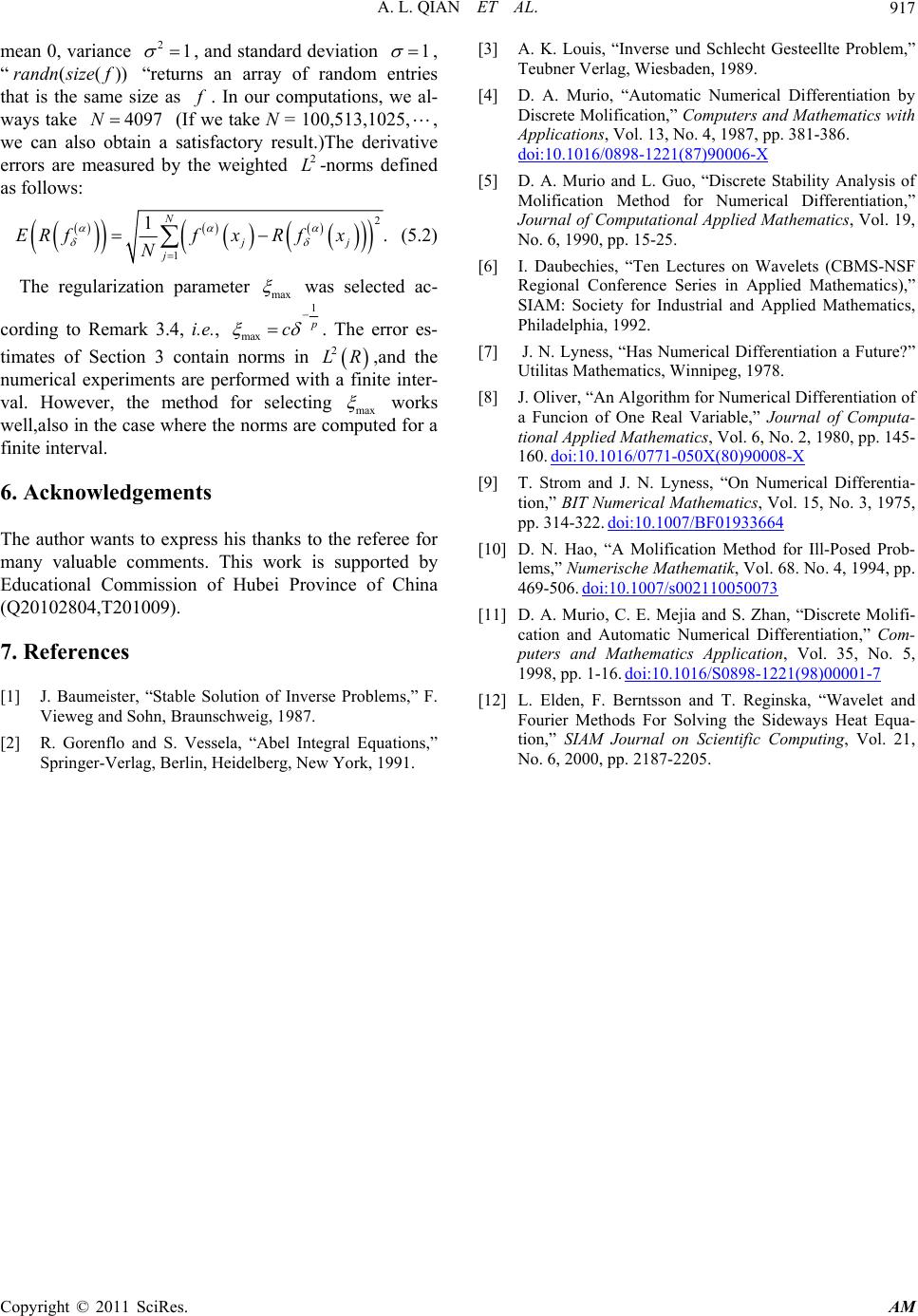

|