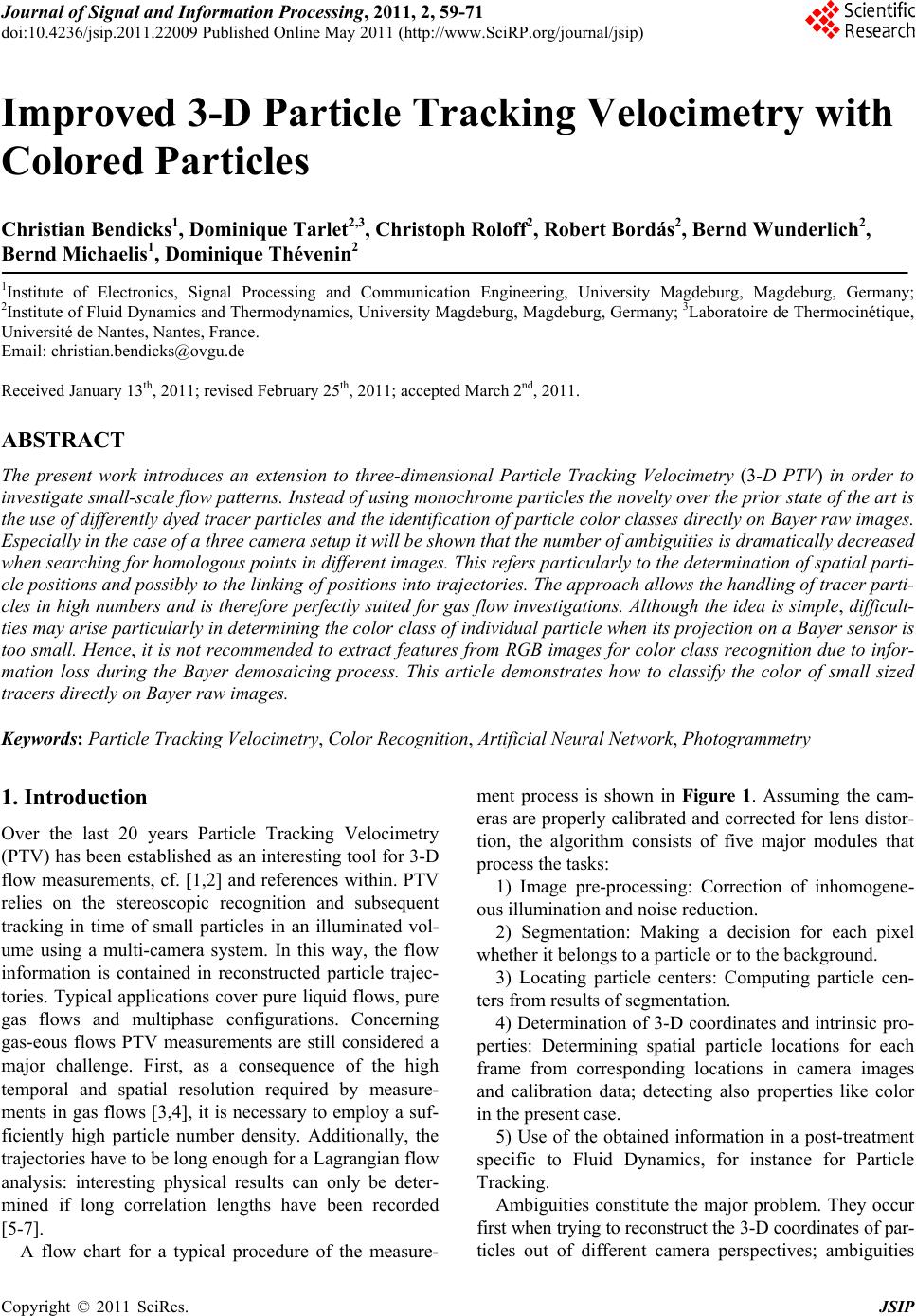

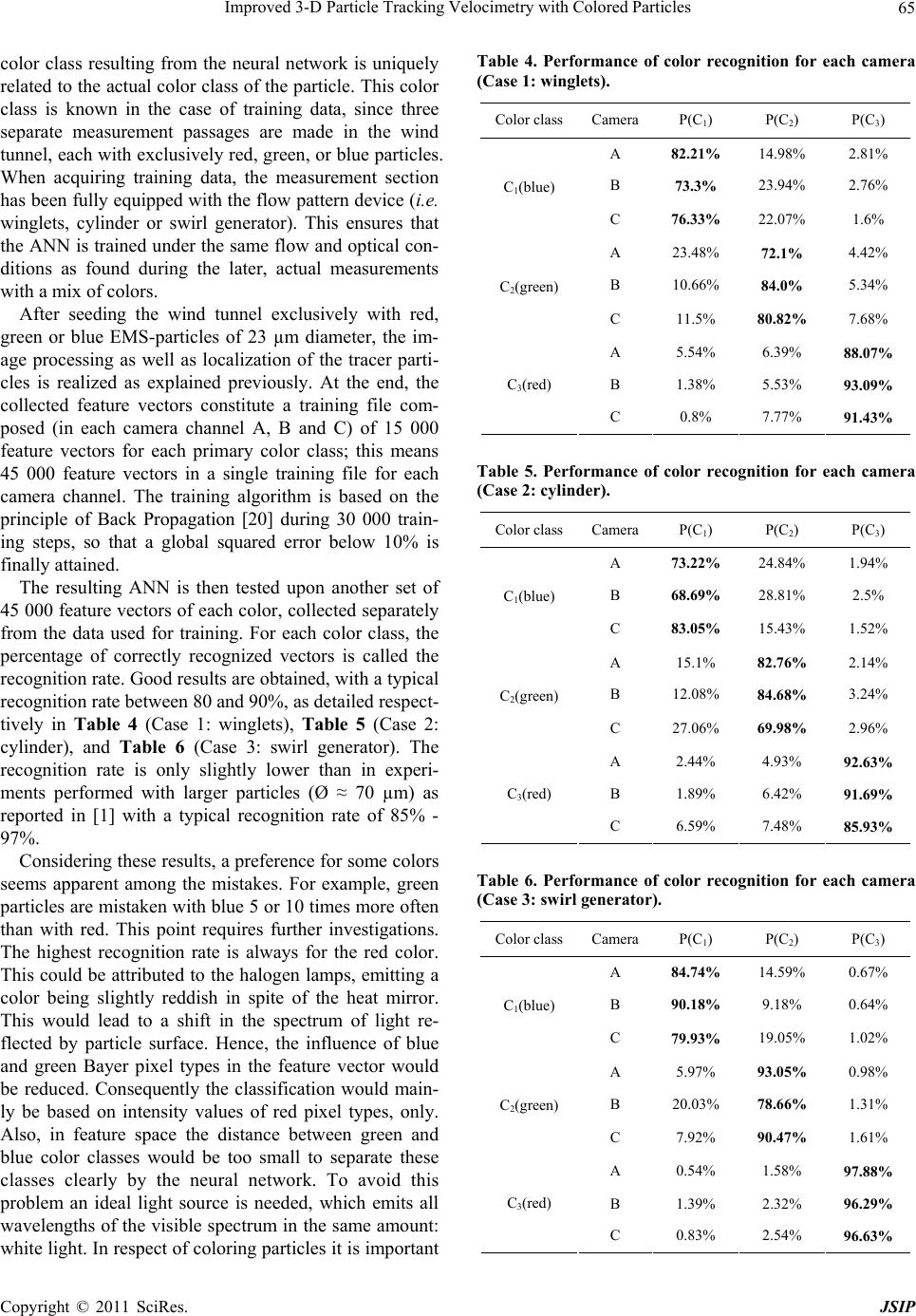

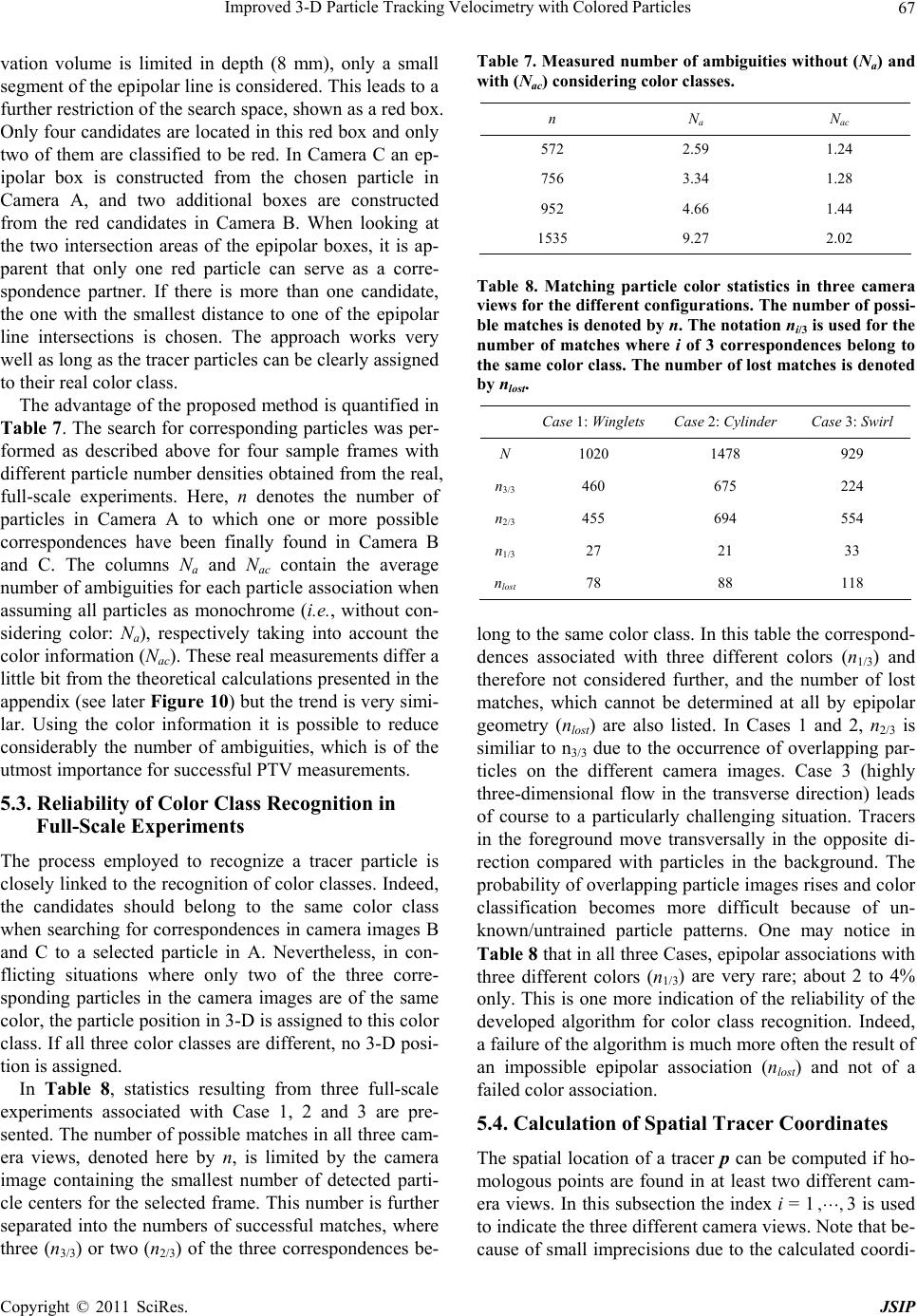

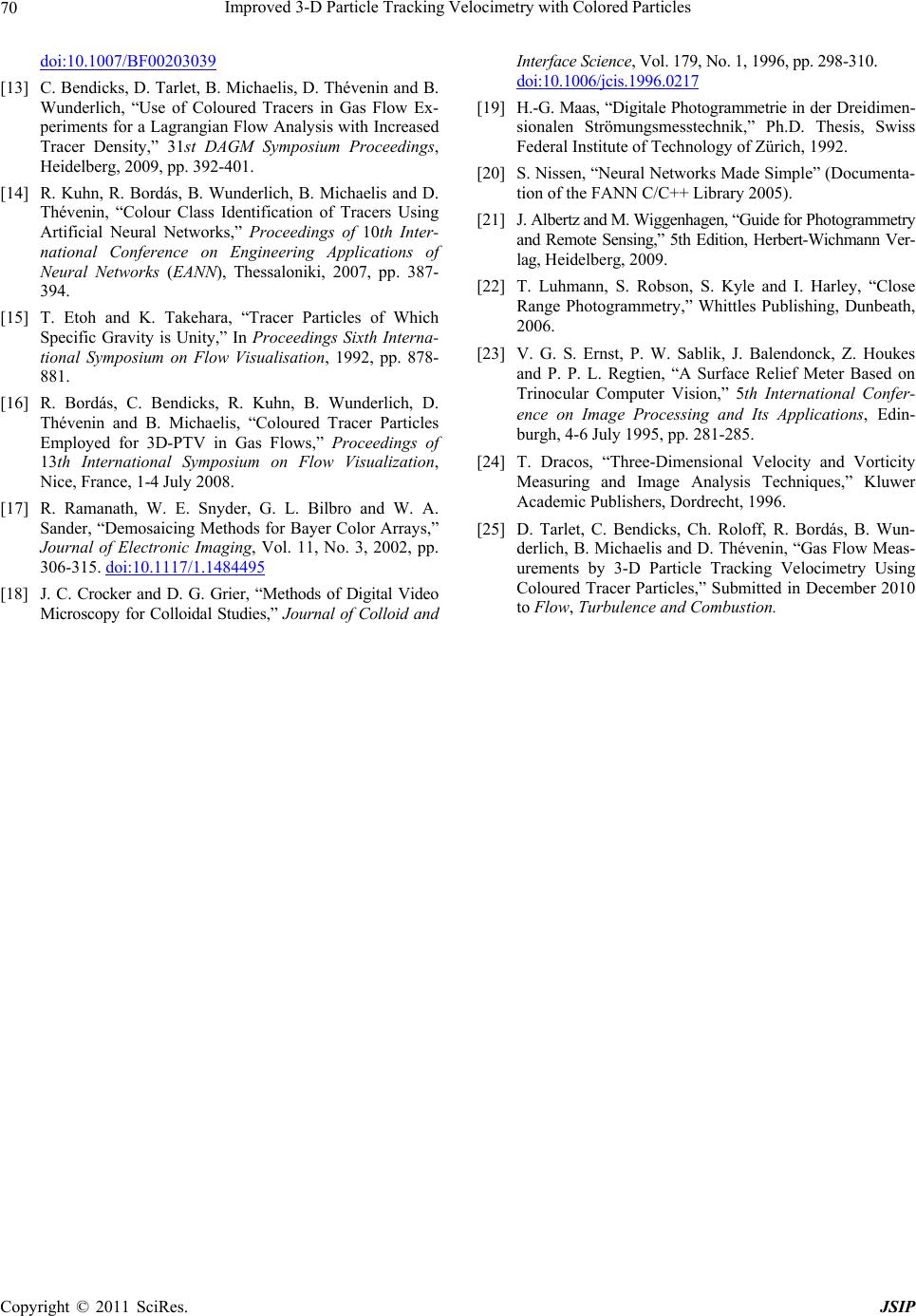

Journal of Signal and Information Processing, 2011, 2, 59-71
doi:10.4236/jsip.2011.22009 Published Online May 2011 (http://www.SciRP.org/journal/jsip)
Copyright © 2011 SciRes. JSIP

Improved 3-D Particle Tracking Velocimetry with Colored Particles

Christian Bendicks¹, Dominique Tarlet²,³, Christoph Roloff², Robert Bordás², Bernd Wunderlich², Bernd Michaelis¹, Dominique Thévenin²

¹Institute of Electronics, Signal Processing and Communication Engineering, University Magdeburg, Magdeburg, Germany; ²Institute of Fluid Dynamics and Thermodynamics, University Magdeburg, Magdeburg, Germany; ³Laboratoire de Thermocinétique, Université de Nantes, Nantes, France.
Email: christian.bendicks@ovgu.de

Received January 13th, 2011; revised February 25th, 2011; accepted March 2nd, 2011.

ABSTRACT

The present work introduces an extension to three-dimensional Particle Tracking Velocimetry (3-D PTV) in order to investigate small-scale flow patterns. Instead of monochrome particles, the novelty over the prior state of the art is the use of differently dyed tracer particles and the identification of particle color classes directly on Bayer raw images. Especially in the case of a three-camera setup, it will be shown that the number of ambiguities is dramatically decreased when searching for homologous points in different images. This refers particularly to the determination of spatial particle positions and possibly to the linking of positions into trajectories. The approach allows the handling of tracer particles in high numbers and is therefore well suited for gas flow investigations. Although the idea is simple, difficulties may arise, particularly in determining the color class of an individual particle when its projection on a Bayer sensor is too small. Hence, it is not recommended to extract features from RGB images for color class recognition, because information is lost during the Bayer demosaicing process.
This article demonstrates how to classify the color of small-sized tracers directly on Bayer raw images.

Keywords: Particle Tracking Velocimetry, Color Recognition, Artificial Neural Network, Photogrammetry

1. Introduction

Over the last 20 years, Particle Tracking Velocimetry (PTV) has become an established tool for 3-D flow measurements, cf. [1,2] and references therein. PTV relies on the stereoscopic recognition and subsequent tracking in time of small particles in an illuminated volume using a multi-camera system. In this way, the flow information is contained in the reconstructed particle trajectories. Typical applications cover pure liquid flows, pure gas flows and multiphase configurations. For gaseous flows, PTV measurements are still considered a major challenge. First, because measurements in gas flows require a high temporal and spatial resolution [3,4], a sufficiently high particle number density must be employed. Additionally, the trajectories have to be long enough for a Lagrangian flow analysis: interesting physical results can only be determined if long correlation lengths have been recorded [5-7].

A flow chart for a typical procedure of the measurement process is shown in Figure 1. Assuming the cameras are properly calibrated and corrected for lens distortion, the algorithm consists of five major modules that process the following tasks:

1) Image pre-processing: correction of inhomogeneous illumination and noise reduction.
2) Segmentation: deciding for each pixel whether it belongs to a particle or to the background.
3) Locating particle centers: computing particle centers from the results of segmentation.
4) Determination of 3-D coordinates and intrinsic properties: determining spatial particle locations for each frame from corresponding locations in camera images and calibration data; in the present case, properties such as color are also detected.
5) Use of the obtained information in a post-treatment specific to Fluid Dynamics, for instance for Particle Tracking.

Ambiguities constitute the major problem. They occur first when reconstructing the 3-D coordinates of particles from different camera perspectives; ambiguities are found again when assigning successive particle positions in time with the tracking algorithm (cf. Maas et al. in [8]). The simplest way to reduce the number of ambiguities would be a low seeding density, but this would also reduce the spatial resolution of the system and the basis for an accurate analysis. A more sophisticated way of reducing ambiguities is to build classes of particles with distinctive properties, such as size [9], shape [10] or color (Figure 2). The first two criteria are not useful here, because particles suitable for seeding gases have to be very small to follow the flow. Using color as a distinctive criterion has already been proposed and investigated in detail by the research group of Prof. Brodkey [11,12]. Colored particles with a diameter of 38-44 µm were recorded on 16 mm film, and the pictures were digitized with considerable effort in several steps. To identify the color of a particle, its pixels were averaged into an RGB intensity vector, which was compared to signature RGB vectors corresponding to the applied colors. Because of the associated problems, using color information with this post-processing strategy was found to be very difficult, if not impossible [12].

Figure 1. Usual design of a measurement system to perform particle tracking velocimetry.

Instead of digitizing analog film material, digital high-speed cameras based on a single CMOS or CCD sensor are commonly used today to image the flow of particles. But since such a sensor responds to all wavelengths in the visible spectrum, it is unable to produce color images by itself.
Therefore, in single-chip color cameras a color filter array is placed in front of the sensor, allowing only light of a specific range of wavelengths to reach each photodetector. The most commonly used color filter array is the Bayer filter array, whose design is based on the human visual system. It consists of three kinds of filters, one for each primary color (red, green and blue). Green is sampled at twice the frequency of the other colors, since the human visual system is most sensitive to the green range of the spectrum. In addition, to obtain full image resolution, the missing samples must be reconstructed through a process called demosaicing. This leads to significant color artifacts, especially in high-spatial-frequency and/or small image structures. As a consequence, assigning a particle to its color class simply on the basis of reconstructed RGB images is not reliable when the particle projection covers only a few pixels.

Figure 2. Reducing the apparent particle number density with the help of color classes.

In the following, the practical application of colored particles for digital PTV is demonstrated using classification of color classes prior to 3-D localization by the method of epipolar lines, based on a three-camera setup. Note that the idea shown in Figure 2 is in general also applicable to PTV experiments with two or more than three cameras. First, the experimental setup is described, including the choice of appropriate tracers and their coloring. An important step consists of image pre-processing and localization of particle centers on camera images. This information is subsequently used for color classification and feeds the correspondence solver that yields 3-D localizations. The classification of small-sized colored particles (Ø ≈ 20 µm) is obtained by means of Artificial Neural Networks (ANNs). For larger particles (Ø ≈ 70 µm), successful application of ANNs has been reported in [1].
The use of other classifiers such as Support Vector Machines (SVM) is also possible and has been investigated by Bendicks et al. [13]. Here, ANNs have been retained because of the considerable experience of our group with this technique and the large amount of data collected for training and testing [14]. ANNs are highly flexible and robust against slight variations in feature space. After the description of particle assignment to color classes, camera calibration is presented for the experimental setup. Then, the advantage of using color classes is shown in combination with the epipolar constraint for particle assignment among camera views. This is used as a basis for computing 3-D particle locations in each time frame. Quantitative measurement results (for example, the proportion of successful correspondences with the same color) are finally discussed for three cases involving 3-D PTV.

2. Experimental Setup: Particles and Imaging Method

2.1. Choosing and Dyeing Tracer Particles

The material of the tracer particles is important, since it determines their density ρp and their agglomeration properties. Ideally, neutrally buoyant tracer particles are preferred. This is relatively easy to realize in liquids [5,6,15]. In gases, smaller particle diameters are needed to preserve the ability to follow the flow. However, the minimum particle diameter is limited by the production process and by optical considerations. A minimum number of pixels is needed on the camera sensor for each tracer particle to allow proper localization. In the present situation involving color recognition, this minimum is about nine pixels. Thus, with the present setup, the particles must have a diameter of at least 20 µm. The physical properties of the tracer particles finally retained for the present study are reported in Table 1.
The choice of suitable tracers is discussed in detail in other publications (see [1,16]). Expanded Micro Spheres (EMS) have finally been identified as the most suitable material due to their appropriate size, low density, acceptable price, and good dyeing properties with low electrostatic charging. Dyeing of the particles follows a standard manual protocol. A small quantity (70 mg) of EMS particles with a diameter of 20 µm is introduced into a polyethylene container with a volume of 4 cm³. Then, 250 mg of a mix of 50% dye by mass (in the present case Edding T25 liquid ink of color red, green or blue) and 50% ethanol is added to the particles. The ink is diluted with ethanol in order to obtain a brighter particle color than with undiluted dye. Five minutes of careful mixing with a laboratory spatula ensures a homogeneous distribution of the dye through transport and diffusion within this porous medium. The dyed particles are then spread onto a cardboard layer for a day, during which they are turned three times. After drying, the colored particles are sieved through a metal filter with 25 µm openings in order to remove agglomerated particles. A microscopic check confirms that the particles have kept their original structure and that their diameter has increased by at most 3 µm. This dyeing process finally results in a density of 320 kg/m³ for the colored EMS particles, with a diameter of 23 µm. Only the three primary colors (red, green and blue) are used in the present study, allowing an easier recognition with a Bayer-pattern sensor composed of pixels of these three primary colors. As further tests have shown, the same method is nevertheless applicable to other colors as well.

2.2. Imaging of Particles in the Flow

For suitable test measurements, small-sized (typical scale: mm) flow patterns seeded with colored tracer particles have to be generated within the focal depth of the cameras.
Figure 3 shows a drawing of the employed wind tunnel and the arrangement of cameras and lighting in front of the observation window (an anti-reflective glass). The depth of the wind tunnel is 8 mm and is tailored to match the possible measurement depth (z coordinate). The air flow is seeded with the tracer particles at its open extremity on the left (air intake). Beyond the right extremity (outflow), an electric fan draws the air flow through a filter tissue with a pore size of 11 µm. This filter tissue captures the tracer particles and regularizes the flow induced by the fan in the wind tunnel. The optical setup is discussed in detail in the next section. Lamps and cameras are typically placed 20 to 30 cm away from the window giving optical access to the measurement section.

Table 1. Properties of employed tracer particles.

| Name | Density, available (kg/m³) | Density, used here (kg/m³) | Mean diameter, available (µm) | Mean diameter, used here (µm) |
|---|---|---|---|---|
| Expancel® (EMS) | 24-70 | 70 | 20-120 | 20 |
| Colored EMS | | 320 | | 23 |

Figure 3. Perspective view of the Eiffel wind tunnel used for all measurements.

On top of the mainstream velocity, suitably tailored bluff bodies are placed in the measurement section to create a variety of flow properties. In Case 1, a set of three polyethylene winglets (length: 17 mm, see Figure 4) simultaneously induces large streamline curvature, continuous flow acceleration and small-scale recirculations in the measurement volume. In Case 2, a transverse horizontal cylinder with a diameter of 6 mm creates a large recirculation zone downstream. These two flow patterns are mostly two-dimensional. To complete this experimental campaign of 3-D PTV, Case 3 relies on a rotating asymmetric device (width: 5 mm, 10 rotations per second) leading to spiraling flow structures in the measurement section. In what follows, all aspects pertaining to color recognition and 3-D localization of the tracer particles are discussed.
To acquire the images, three cameras (CMOS sensor with 1280 × 1024 pixels) are focused on the measurement section by means of a LINOS Apo-Rodagon D-2x object lens with a focal length of 75 mm, used at an f-number of 11 and located at a distance between 20 and 23 cm from the measurement section. For the later calculation of 3-D coordinates, synchronization of the camera images (always acquired at 500 fps during this project) is of high importance. This synchronization is checked by simultaneously imaging a blinking light diode and verifying that illuminated states appear on the same frame. The cameras are set up to obtain a close-up image of the employed tracer particles with a diameter of 23 µm. Recording in monochrome mode makes it possible to capture the raw information from the CMOS sensor (Bayer pattern of type G-R/B-G), which has a resolution of 1.3 megapixels (1280 × 1024). As discussed later, color recognition is based on these Bayer raw data. Table 2 sums up the properties of the employed optical systems. It is important to note that the measurement section, of size 25 × 25 × 8 mm, is smaller than the field of view of a 2-D image, since the areas covered by all 2-D images (especially near their edges) have to intersect for 3-D localization.

Figure 4. Set of three winglets (Case 1).

For illumination, four light heads are employed, based on halogen lamps emitting light with a color temperature between 3000 and 3400 K. They are located at a distance of about 30 cm from the measurement section. Additionally, the light heads are equipped with hot mirrors and daylight filters, which are actively air-cooled to prevent loss of filtering properties resulting from excessive heat. In this way, the daylight filters and the measuring volume are protected against the heat produced by the illumination. Furthermore, the resulting illumination spectrum is similar to that of a light source with a color temperature of 5300 K (daylight). Therefore, the Bayer sensor elements should not be affected by near-infrared or reddish light, which would hinder color recognition. Properties of the lamps for different working distances are given in Table 2. Using three light heads placed between the cameras would lead to a more homogeneous illumination. However, tests revealed that the particle and color class recognition rate is higher with four light heads arranged as in Figure 3.

Table 2. Properties of the DEDOCOOL lamps and the BASLER A504kc cameras.

| 4 DEDOCOOL lamps | | | |
|---|---|---|---|
| Color temperature (K) | 5300 (halogen + daylight filter) | | |
| Distance (cm) | 20 | 30 | 40 |
| Light intensity (lux) | 2.4 × 10⁶ | 1.1 × 10⁶ | 0.58 × 10⁶ |
| Footcandle | 222 000 | 105 000 | 54 000 |
| Illuminated area Ø (cm) | 6.5 | 7 | 9 |

| 3 synchronized BASLER A504kc cameras | |
|---|---|
| Pixel size (µm) | 12 × 12 |
| Bayer pattern | G-R/B-G |
| Sensor size (mm) | 15.36 × 12.29 |
| Resolution (pixels) | 1280 × 1024 |
| f-number | 11 |
| Object distance (cm) | 20-23 |
| Focal length (mm) | 75 |
| Depth of field (mm) | ≈ 6 |
| Recording rate (fps) | 500 |
| Exposure time (ms) | 0.8-1.0 |

3. Image Pre-Processing and Locating 2-D Particle Centers on an Image

3.1. Image Pre-Processing

For each camera channel, an averaged image is first created from 20 consecutive images of the measurement section without seeding; it is later used to perform background subtraction on the entire Bayer image sequence. This ensures the removal of irrelevant structures such as the winglets or dust deposited on the window. Since the algorithm employed to detect particle centers takes grayscale images as input, the Bayer images first need to be converted in an intermediate step to RGB color images using bilinear interpolation. This is performed with a demosaicing algorithm [17]. The RGB images are then converted to grayscale images, using equal weights for the red, green and blue components to compute the intensity value.
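A minimal pure-Python sketch of this pre-processing chain is given below. It is not the demosaicing code of [17], and several details are assumptions: images are stored as lists of rows indexed `img[y][x]`, the G-R/B-G pattern is taken to start with a green pixel at (0, 0) and to carry red in even rows, negative differences after background subtraction are clipped to zero, and bilinear demosaicing is written as "keep the measured channel, fill each missing channel with the mean of its samples in the 3 × 3 neighborhood". All function names are hypothetical.

```python
def mean_background(frames):
    """Pixel-wise mean of particle-free frames (20 in the text)."""
    n = len(frames)
    h, w = len(frames[0]), len(frames[0][0])
    return [[sum(f[y][x] for f in frames) / n for x in range(w)] for y in range(h)]


def subtract_background(raw, background):
    """Remove static structures; clipping negative values to 0 is an assumption."""
    return [[max(0.0, r - b) for r, b in zip(row, brow)]
            for row, brow in zip(raw, background)]


def bayer_channel(x, y):
    """Channel of pixel (x, y): 0 = R, 1 = G, 2 = B, assuming a G-R/B-G
    pattern whose (0, 0) pixel is green and whose even rows hold red."""
    if (x + y) % 2 == 0:
        return 1
    return 0 if y % 2 == 0 else 2


def demosaic_bilinear(raw):
    """Bilinear demosaicing: keep the measured channel and fill each missing
    channel with the mean of that channel's samples in the 3 x 3 neighborhood.
    Requires an image of at least 2 x 2 pixels."""
    h, w = len(raw), len(raw[0])
    rgb = []
    for y in range(h):
        row = []
        for x in range(w):
            own = bayer_channel(x, y)
            pixel = []
            for c in range(3):
                if c == own:
                    pixel.append(float(raw[y][x]))
                    continue
                samples = [raw[yy][xx]
                           for yy in range(max(0, y - 1), min(h, y + 2))
                           for xx in range(max(0, x - 1), min(w, x + 2))
                           if bayer_channel(xx, yy) == c]
                pixel.append(sum(samples) / len(samples))
            row.append(pixel)
        rgb.append(row)
    return rgb


def to_gray(rgb):
    """Grayscale image with equal weights for the red, green and blue components."""
    return [[sum(p) / 3.0 for p in row] for row in rgb]
```

With a particle image only about 3 × 3 pixels wide, every interpolated value at its rim mixes particle and background samples, so the reconstructed color depends on where the particle falls on the pattern; this is consistent with the motivation given in Section 4 for classifying the raw Bayer values instead.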
The following pre-processing stage on grayscale images (introduced by Crocker and Grier [18]) deals with two problems complicating particle detection: 1) long-wavelength modulations of the background intensity due to non-uniform sensitivity of the camera pixels or uneven illumination, and 2) unavoidable digitization noise in the camera. To solve the first problem, the background is estimated by a boxcar average (ba) over a square region with a side length of (2w + 1) pixels and subsequently subtracted:

$$I_{ba}(x,y) = \frac{1}{(2w+1)^2}\sum_{j=-w}^{w}\sum_{i=-w}^{w} I(x+i,\,y+j) \qquad (1)$$

The user-defined parameter w is a number of pixels larger than the apparent particle radius but smaller than the smallest interparticle separation. Digitization noise is modeled as random (Gaussian) noise with a correlation length of λ = 1 pixel. Thus, convolving the image I with a Gaussian surface suppresses this noise without excessively blurring the image:

$$I_{\lambda}(x,y) = \frac{1}{b}\sum_{j=-w}^{w}\sum_{i=-w}^{w} I(x+i,\,y+j)\,\exp\!\left(-\frac{i^2+j^2}{4\lambda^2}\right) \qquad (2)$$

with normalization

$$b = \left[\sum_{i=-w}^{w}\exp\!\left(-\frac{i^2}{4\lambda^2}\right)\right]^2. \qquad (3)$$

The application of Equations (1) and (2) to image I can be combined into one single convolution with the kernel

$$K(i,j) = \frac{1}{K_0}\left[\frac{1}{b}\exp\!\left(-\frac{i^2+j^2}{4\lambda^2}\right) - \frac{1}{(2w+1)^2}\right]. \qquad (4)$$

The normalization constant

$$K_0 = \frac{1}{b}\left[\sum_{i=-w}^{w}\exp\!\left(-\frac{i^2}{2\lambda^2}\right)\right]^2 - \frac{b}{(2w+1)^2} \qquad (5)$$

can be used to compare filtered images obtained with different values of w. The improved image is given by:

$$I_f(x,y) = \sum_{j=-w}^{w}\sum_{i=-w}^{w} I(x+i,\,y+j)\,K(i,j). \qquad (6)$$

3.2. Locating 2-D Particle Centers

To estimate particle center positions in a filtered image I_f, a connectivity analysis is performed as described by Maas [19]. This method can also handle overlapping particles. The goal is to find local maxima and to assign the surrounding pixels above a defined threshold to one maximum. Assigning a pixel to a maximum means assigning the pixel to an individual particle. The algorithm searches the image pixel by pixel.
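To make the filter of Equations (3)-(6) and the seed search, region growing and center-of-mass steps of Section 3.2 concrete, here is a minimal pure-Python sketch. It is not the authors' implementation, and it simplifies several points by assumption: images are lists of rows indexed `img[y][x]`, pixels closer than w to the border are left at zero after filtering, seeds are visited in raster order, and a contested pixel goes to whichever particle reaches it first (a simplification of rule 4). The names `cg_kernel`, `cg_filter` and `locate_particles` are hypothetical.

```python
import math

NEIGHBORS = [(dx, dy) for dy in (-1, 0, 1) for dx in (-1, 0, 1) if (dx, dy) != (0, 0)]


def cg_kernel(w, lam=1.0):
    """Kernel K(i, j) of Eqs. (3)-(5), stored as k[j + w][i + w]."""
    g = [math.exp(-i * i / (4.0 * lam * lam)) for i in range(-w, w + 1)]
    b = sum(g) ** 2                                                    # Eq. (3)
    k0 = (sum(math.exp(-i * i / (2.0 * lam * lam)) for i in range(-w, w + 1)) ** 2 / b
          - b / (2 * w + 1) ** 2)                                      # Eq. (5)
    box = 1.0 / (2 * w + 1) ** 2
    return [[(gi * gj / b - box) / k0 for gi in g] for gj in g]        # Eq. (4)


def cg_filter(img, w, lam=1.0):
    """Eq. (6); pixels closer than w to the border are left at 0."""
    k = cg_kernel(w, lam)
    h, wd = len(img), len(img[0])
    out = [[0.0] * wd for _ in range(h)]
    for y in range(w, h - w):
        for x in range(w, wd - w):
            out[y][x] = sum(img[y + j][x + i] * k[j + w][i + w]
                            for j in range(-w, w + 1) for i in range(-w, w + 1))
    return out


def locate_particles(img, threshold, d):
    """Local maxima above threshold seed a region growing controlled by the
    discontinuity threshold D; each region yields a centroid via Eq. (7)."""
    h, w = len(img), len(img[0])

    def inside(x, y):
        return 0 <= x < w and 0 <= y < h

    label = [[0] * w for _ in range(h)]
    centers = []
    for y in range(h):
        for x in range(w):
            v = img[y][x]
            if v <= threshold or label[y][x]:
                continue
            if any(inside(x + dx, y + dy) and img[y + dy][x + dx] > v for dx, dy in NEIGHBORS):
                continue  # not a local maximum
            lab = len(centers) + 1
            label[y][x] = lab
            stack, pixels = [(x, y)], [(x, y)]
            while stack:
                x0, y0 = stack.pop()
                for dx, dy in NEIGHBORS:
                    x1, y1 = x0 + dx, y0 + dy
                    if not inside(x1, y1) or label[y1][x1] or img[y1][x1] <= threshold:
                        continue
                    if img[y1][x1] > img[y0][x0] + d:  # condition 1
                        continue
                    others = [(x1 + ex, y1 + ey) for ex, ey in NEIGHBORS
                              if inside(x1 + ex, y1 + ey) and (x1 + ex, y1 + ey) != (x0, y0)]
                    if not any(img[y0][x0] >= img[yn][xn] - d for xn, yn in others):
                        continue  # condition 2, as stated in the text
                    label[y1][x1] = lab
                    stack.append((x1, y1))
                    pixels.append((x1, y1))
            s = sum(img[py][px] for px, py in pixels)
            centers.append((sum(px * img[py][px] for px, py in pixels) / s,
                            sum(py * img[py][px] for px, py in pixels) / s))
    return centers
```

Because the kernel entries sum to zero, a uniform background is mapped to zero by `cg_filter`; in use, one would call `locate_particles(cg_filter(gray, w), threshold, D)` with w chosen as described after Equation (1).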
When the pixel value is greater than a given threshold, the pixel is tested to check whether it is a local maximum, i.e., whether its value is greater than or equal to the values of its eight neighboring pixels. If this is not the case, the search continues. A local maximum is used as the seed point of a region-growing operation that associates and labels all pixels belonging to the particle. For this purpose, a so-called discontinuity threshold D is introduced to decide whether a pixel is assigned to a maximum or not. Suppose a pixel at location p0 with intensity I(p0) is already assigned to a maximum, and the exploration continues at one of its unlabeled neighbors, p1, with intensity I(p1). Then, pixel p1 is assigned to the current maximum if the following two conditions are true:

1) I(p1) ≤ I(p0) + D, and
2) there are one or more pixels pn1 that satisfy I(p0) ≥ I(pn1) − D, where pn1 denotes one of the other seven neighbors of p1.

The operator described above follows these rules:

1) All pixels belonging to a particle have values greater than the threshold.
2) A particle has exactly one local maximum.
3) The grayscale gradient within a particle projection is continuous.
4) A pixel that represents a local minimum and could be assigned to several neighboring pixels is assigned to the neighbor pixel with the largest value.

The second and third rules can be weakened or strengthened by changing the discontinuity threshold D: discontinuities of up to D grayscale values are tolerated within a single particle. During the region-growing search, the n pixels belonging to the current local maximum are stored in a pixel list. Finally, the particle coordinates are computed by the center-of-mass method:

$$\bar{x} = \frac{\sum_{i=0}^{n-1} x_i\, I(x_i,y_i)}{\sum_{i=0}^{n-1} I(x_i,y_i)}, \qquad \bar{y} = \frac{\sum_{i=0}^{n-1} y_i\, I(x_i,y_i)}{\sum_{i=0}^{n-1} I(x_i,y_i)}. \qquad (7)$$

4. Color Classification by an Artificial Neural Network (ANN)

In the present application, color classification is needed.
Directly using RGB images created by a Bayer conversion algorithm and working with thresholds is not possible, since this conversion leads to artifacts (see Figure 5). For our purpose, when attributing, for instance, the color "blue", the exact shade or brightness of the observed colored particle should not be taken into account, since these vary in time and space and differ slightly from particle to particle. As a consequence, an Artificial Neural Network (ANN) is an appropriate solution for attributing the particle color class [14]. ANNs are robust and able to learn and adapt according to training data associated with a given context. Other adequate classifiers such as Support Vector Machines have been tested as well [13] but could not outperform ANNs for the present application. The proposed network design is a fully connected back-propagation network, illustrated in Figure 6. It consists of an input layer with 10 neurons, a first hidden layer with 8 neurons, a second hidden layer with 8 neurons, and an output layer with 3 neurons (corresponding to the number of applied color classes). The number of output neurons is determined by the number of color classes used in the measurement experiment; hence, the network has to be adapted when more or fewer colors are employed. All neurons in the hidden layers and the output layer use sigmoid activation functions. In this way, the network makes a graded (fuzzy) prediction about the color class of a particle. The output neuron with the largest response value is taken as the indicator of the particle color. In Figure 6, the outputs are denoted C1, C2 and C3, corresponding respectively to the blue, green and red color classes. This notation reminds the reader that these are only color classes, determined without considering the exact shade and brightness.
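The forward pass of this 10-8-8-3 network can be sketched in a few lines of pure Python. The sketch is illustrative only: bias terms and inputs rescaled to roughly [0, 1] are assumptions (neither is specified in the text), the weights are random rather than trained, so the printed label carries no meaning, and training by back-propagation [20] is not reproduced. It shows how sigmoid neurons produce graded outputs and how the largest response selects C1, C2 or C3. All names are hypothetical.

```python
import math
import random


def sigmoid(z):
    """Numerically stable logistic activation."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def dense_layer(n_in, n_out, rng):
    """Random weights (n_out x n_in) and biases for one fully connected layer."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    biases = [rng.uniform(-0.5, 0.5) for _ in range(n_out)]
    return weights, biases


def forward(layers, features):
    """Propagate one feature vector; every hidden and output neuron is sigmoidal."""
    a = list(features)
    for weights, biases in layers:
        a = [sigmoid(sum(wt * ai for wt, ai in zip(row, a)) + b)
             for row, b in zip(weights, biases)]
    return a


COLOR_CLASSES = ("C1 (blue)", "C2 (green)", "C3 (red)")


def classify(layers, features):
    """Pick the class whose output neuron responds most strongly."""
    outputs = forward(layers, features)
    best = max(range(len(outputs)), key=lambda k: outputs[k])
    return COLOR_CLASSES[best], outputs


rng = random.Random(0)
network = [dense_layer(10, 8, rng), dense_layer(8, 8, rng), dense_layer(8, 3, rng)]
# Hypothetical input: f1 = Phi / 3, f2..f10 = Bayer intensities / 255
features = [0 / 3] + [v / 255 for v in (120, 80, 60, 70, 90, 110, 75, 65, 85)]
label, outputs = classify(network, features)
print(label, [round(o, 3) for o in outputs])
```

Only the output layer size depends on the number of color classes, so adding a class amounts to changing the last `dense_layer(8, 3, rng)` and extending `COLOR_CLASSES`.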
In the present work, all experiments are performed only with blue, green and red particles. Nevertheless, other tracer colors such as cyan, magenta or yellow have been tested as well and are in principle suitable for further measurements.

The features used as network input are obtained directly from Bayer raw images ("input data", see Figure 6). Once a particle has been localized on the grayscale image (as described in Section 3.2), the values of the red, green and blue pixels (integer brightness values between 0 and 255, 8 bit) of the Bayer pattern around its center are used to build the feature vector. For the center of the tracer particle, given in integer coordinates (x0, y0), it is recorded whether it lies on a red, a blue or a green pixel of the Bayer pattern. Green pixels are subdivided into two categories, due to their different locations between red or blue pixels in the Bayer pattern (cf. Figure 5). Finally, the feature vector includes ten components, the first one being a function Φ of the color associated with the center of the particle (x0, y0):

$$\Phi(x_0,y_0) = \begin{cases} 0, & \text{if } (x_0,y_0) \text{ is Green 1}\\ 1, & \text{if } (x_0,y_0) \text{ is Red}\\ 2, & \text{if } (x_0,y_0) \text{ is Blue}\\ 3, & \text{if } (x_0,y_0) \text{ is Green 2} \end{cases} \qquad (8)$$

The nine others are the intensity values I(x, y) at the particle center pixel and at the eight neighboring pixels, in the clockwise scheme reported in Table 3. As explained previously, the image of the tracer particle must cover a minimum area of 3 × 3 pixels in a Bayer pattern in order to recognize particle color classes.

Figure 5. Appearance of different colored tracer particles (23 µm) in Bayer images (upper row) and in the resulting RGB images created by bilinear demosaicing (lower row). On the right, the arrangement of color filters (Bayer pattern) on the CMOS chip is shown. Color distortions appear in the RGB images as a consequence of demosaicing; therefore, the RGB color space cannot be used for color classification.

Figure 6. Principle of color classification with an Artificial Neural Network based on particle center position and surrounding Bayer data. Here, the network is designed for three particle colors (therefore 3 output neurons).

Table 3. Feature vector definition.

| Feature vector component | Value |
|---|---|
| f1 | Φ(x0, y0) |
| f2 | I(x0, y0) |
| f3 | I(x0 + 1, y0) |
| f4 | I(x0 + 1, y0 − 1) |
| f5 | I(x0, y0 − 1) |
| f6 | I(x0 − 1, y0 − 1) |
| f7 | I(x0 − 1, y0) |
| f8 | I(x0 − 1, y0 + 1) |
| f9 | I(x0, y0 + 1) |
| f10 | I(x0 + 1, y0 + 1) |

The training procedure consists of adjusting the weights of the neural network (the connections between neurons of adjacent layers, cf. Figure 6) in such a way that the color class output by the network is uniquely related to the actual color class of the particle. This color class is known for the training data, since three separate measurement runs are made in the wind tunnel, each with exclusively red, green, or blue particles. When acquiring training data, the measurement section is fully equipped with the flow pattern device (i.e., winglets, cylinder or swirl generator). This ensures that the ANN is trained under the same flow and optical conditions as during the later, actual measurements with a mix of colors.

After seeding the wind tunnel exclusively with red, green or blue EMS particles of 23 µm diameter, the image processing and localization of the tracer particles are performed as explained previously. The collected feature vectors constitute, for each camera channel (A, B and C), a training file of 15 000 feature vectors per primary color class, i.e., 45 000 feature vectors in a single training file per camera channel. The training algorithm is based on the principle of back-propagation [20] with 30 000 training steps, so that a global squared error below 10% is finally attained.
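Equation (8) and Table 3 translate into a short feature-extraction routine. This sketch assumes that pixel (0, 0) of the sensor is a green site in a red row ("Green 1"), so that Φ reduces to 2·(y0 mod 2) + (x0 mod 2), and that (x0, y0) is obtained by rounding the sub-pixel center from Section 3.2 half up; both are assumptions, and the function names are hypothetical. The offsets follow Table 3 literally.

```python
import math

# (dx, dy) offsets of components f2..f10, in the order of Table 3
TABLE3_OFFSETS = [(0, 0), (1, 0), (1, -1), (0, -1), (-1, -1),
                  (-1, 0), (-1, 1), (0, 1), (1, 1)]


def phi(x0, y0):
    """Eq. (8): 0 = Green 1, 1 = Red, 2 = Blue, 3 = Green 2, for a G-R/B-G
    pattern whose (0, 0) pixel is Green 1 (assumed)."""
    return 2 * (y0 % 2) + (x0 % 2)


def feature_vector(raw, xc, yc):
    """Ten-component classifier input taken from the raw Bayer image.
    (xc, yc) is the sub-pixel particle center; rounding half up is assumed."""
    x0, y0 = math.floor(xc + 0.5), math.floor(yc + 0.5)
    h, w = len(raw), len(raw[0])
    if not (1 <= x0 < w - 1 and 1 <= y0 < h - 1):
        raise ValueError("the 3 x 3 neighborhood leaves the image")
    return [phi(x0, y0)] + [raw[y0 + dy][x0 + dx] for dx, dy in TABLE3_OFFSETS]
```

Because f1 encodes which filter sits under the center, the same nine intensities mean different things for a red-centered and a blue-centered particle, which is what lets the network interpret raw Bayer values without demosaicing.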
The resulting ANN is then tested on another set of 45 000 feature vectors of each color, collected separately from the training data. For each color class, the percentage of correctly recognized vectors is called the recognition rate. Good results are obtained, with a typical recognition rate between 80 and 90%, as detailed in Table 4 (Case 1: winglets), Table 5 (Case 2: cylinder) and Table 6 (Case 3: swirl generator). The recognition rate is only slightly lower than in experiments performed with larger particles (Ø ≈ 70 µm), for which [1] reports a typical recognition rate of 85%-97%.

Considering these results, some confusions appear to be systematically more frequent than others. For example, green particles are mistaken for blue ones considerably more often than for red ones (between about 1.5 and 15 times more often, depending on the case and camera). This point requires further investigation. The highest recognition rate is always obtained for the red color. This could be attributed to the halogen lamps, which emit slightly reddish light in spite of the hot mirror. This would lead to a shift in the spectrum of light reflected by the particle surface. Hence, the influence of blue and green Bayer pixel types in the feature vector would be reduced, and the classification would mainly be based on the intensity values of red pixel types. Also, in feature space the distance between the green and blue color classes would be too small for the neural network to separate these classes clearly. To avoid this problem, an ideal light source is needed, which emits all wavelengths of the visible spectrum in the same amount: white light. When coloring particles, it is important to choose inks that can be distinguished well under the prevailing light conditions. This becomes all the more difficult as more color classes are used.

Table 4. Performance of color recognition for each camera (Case 1: winglets).

| Color class | Camera | P(C1) | P(C2) | P(C3) |
|---|---|---|---|---|
| C1 (blue) | A | 82.21% | 14.98% | 2.81% |
| | B | 73.3% | 23.94% | 2.76% |
| | C | 76.33% | 22.07% | 1.6% |
| C2 (green) | A | 23.48% | 72.1% | 4.42% |
| | B | 10.66% | 84.0% | 5.34% |
| | C | 11.5% | 80.82% | 7.68% |
| C3 (red) | A | 5.54% | 6.39% | 88.07% |
| | B | 1.38% | 5.53% | 93.09% |
| | C | 0.8% | 7.77% | 91.43% |

Table 5. Performance of color recognition for each camera (Case 2: cylinder).

| Color class | Camera | P(C1) | P(C2) | P(C3) |
|---|---|---|---|---|
| C1 (blue) | A | 73.22% | 24.84% | 1.94% |
| | B | 68.69% | 28.81% | 2.5% |
| | C | 83.05% | 15.43% | 1.52% |
| C2 (green) | A | 15.1% | 82.76% | 2.14% |
| | B | 12.08% | 84.68% | 3.24% |
| | C | 27.06% | 69.98% | 2.96% |
| C3 (red) | A | 2.44% | 4.93% | 92.63% |
| | B | 1.89% | 6.42% | 91.69% |
| | C | 6.59% | 7.48% | 85.93% |

Table 6. Performance of color recognition for each camera (Case 3: swirl generator).

| Color class | Camera | P(C1) | P(C2) | P(C3) |
|---|---|---|---|---|
| C1 (blue) | A | 84.74% | 14.59% | 0.67% |
| | B | 90.18% | 9.18% | 0.64% |
| | C | 79.93% | 19.05% | 1.02% |
| C2 (green) | A | 5.97% | 93.05% | 0.98% |
| | B | 20.03% | 78.66% | 1.31% |
| | C | 7.92% | 90.47% | 1.61% |
| C3 (red) | A | 0.54% | 1.58% | 97.88% |
| | B | 1.39% | 2.32% | 96.29% |
| | C | 0.83% | 2.54% | 96.63% |

5. Locating Particle Centers in 3-D

5.1. Camera Calibration

The purpose of calibration is the determination of all extrinsic (6 unknowns: location and orientation of the camera) and intrinsic (5 unknowns: principal point, calibrated focal length, lens distortion parameters) parameters of the camera model used. The camera model is expressed mathematically by the collinearity equations, which describe the transformation of 3-D world coordinates into 2-D image coordinates [21]. To compute all unknowns, a set of known 3-D coordinates (reference points) is needed, which can be mapped to their corresponding positions in the camera images. For each reference point, two equations are set up (one for each image coordinate). This leads to an overdetermined system of equations, which is solved by the least-squares method. For calibration, a two-level calibration target (shown in Figure 7) with 25 reference points is used.
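The collinearity equations themselves are not written out in the text. For orientation only, here is a minimal sketch of their standard distortion-free photogrammetric form; the authors' model additionally contains lens-distortion terms, and the rotation convention used here (camera coordinates obtained with the transpose of R, camera looking along its negative z axis) is an assumption. With the 25 targets, a single image already yields 2 × 25 = 50 observation equations against the 11 unknowns of one camera, hence the overdetermined least-squares problem. Function names are hypothetical.

```python
def collinearity_project(point, center, rot, c, x0=0.0, y0=0.0):
    """Image coordinates (x, y) of a world point via the standard collinearity
    equations, without lens distortion.

    point, center : (X, Y, Z) of the object point and of the projection center
    rot           : 3 x 3 rotation matrix, rot[row][col]
    c             : principal distance (calibrated focal length)
    x0, y0        : principal point
    """
    d = [p - q for p, q in zip(point, center)]
    # k-th camera coordinate = sum_i r_(i,k) * d_i, i.e. R^T (P - P0)
    xc, yc, zc = (sum(rot[i][k] * d[i] for i in range(3)) for k in range(3))
    return x0 - c * xc / zc, y0 - c * yc / zc


def redundancy(n_points, n_unknowns=11):
    """Number of equations beyond the unknowns: two per reference point."""
    return 2 * n_points - n_unknowns


IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
print(collinearity_project((1.0, 2.0, 0.0), (0.0, 0.0, 10.0), IDENTITY, c=75.0))  # (7.5, 15.0)
print(redundancy(25))  # 39
```

In a calibration, these two functions of the 11 parameters would be linearized and the residuals between projected and measured target positions minimized; that solver is not shown here.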
In contrast to a planar target field, the reference points are spatially distributed in all three dimensions, which makes the calibration more reliable [22]. First, the target is imaged in eight different orientations to determine the intrinsic parameters of each camera precisely. Then, the target is placed in the center of the observation volume so that it faces the observation window. One snapshot is taken by each camera and used to calibrate the extrinsic parameters while keeping the intrinsic parameters fixed. This position of the calibration target defines the origin of the world coordinate system.

Figure 7. Two-level calibration target (dimensions: 20 mm × 20 mm × 5 mm; level height: 2 mm) with 25 reference points of known coordinates (Ø = 0.5 mm).

5.2. Particle Assignment among Camera Views

Figure 8. Reducing the number of ambiguities using epipolar geometry and color information.

Figure 8 illustrates the whole process of solving the spatial correspondence problem for a single particle. The particle of interest is marked by a surrounding red square in Camera A, meaning that it has been classified as red. Now, one corresponding partner should be found in Camera B and in Camera C. There are eight possible candidates near the epipolar line (red, from Camera A) constructed in Camera B, i.e., eight particles whose distance to the line is smaller than the tolerance value ε. Because the observation volume is limited in depth (8 mm), only a small segment of the epipolar line is considered. This leads to a further restriction of the search space, shown as a red box. Only four candidates are located in this red box, and only two of them are classified as red. In Camera C, an epipolar box is constructed from the chosen particle in Camera A, and two additional boxes are constructed from the red candidates in Camera B.
When looking at the two intersection areas of the epipolar boxes in Camera C, it is apparent that only one red particle can serve as a correspondence partner. If more than one candidate remains, the one with the smallest distance to one of the epipolar line intersections is chosen. The approach works very well as long as the tracer particles are assigned to their true color class.

The advantage of the proposed method is quantified in Table 7. The search for corresponding particles was performed as described above for four sample frames with different particle number densities, taken from the real, full-scale experiments. Here, n denotes the number of particles in Camera A for which one or more possible correspondences were finally found in Cameras B and C. The columns Na and Nac contain the average number of ambiguities per particle association when all particles are treated as monochrome (i.e., without considering color: Na) and when the color information is taken into account (Nac), respectively. These measurements differ slightly from the theoretical predictions presented in the Appendix (Figure 10), but the trend is very similar. Using the color information considerably reduces the number of ambiguities (for the densest frame, from 9.27 to 2.02 per particle), which is of the utmost importance for successful PTV measurements.

5.3. Reliability of Color Class Recognition in Full-Scale Experiments

The procedure used to identify a tracer particle in all three views is closely linked to the recognition of color classes. Indeed, when searching in camera images B and C for correspondences to a selected particle in A, the candidates should belong to the same color class. Nevertheless, in conflicting situations where only two of the three corresponding particles are of the same color, the 3-D particle position is assigned to this color class. If all three color classes are different, no 3-D position is assigned.
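The color-class rule for a matched triplet amounts to a simple majority vote over the three camera views. A minimal sketch, assuming that each camera delivers one color label per matched particle (the function name `assign_color_class` is hypothetical):

```python
from collections import Counter
from typing import Optional, Sequence


def assign_color_class(classes: Sequence[str]) -> Optional[str]:
    """Color class of a 3-D position from the classes found in cameras A, B and C.

    three agree -> that class; two of three agree -> the majority class;
    all three differ -> None (no 3-D position is assigned).
    """
    label, votes = Counter(classes).most_common(1)[0]
    return label if votes >= 2 else None


print(assign_color_class(["red", "red", "red"]))       # red
print(assign_color_class(["blue", "green", "green"]))  # green
print(assign_color_class(["red", "green", "blue"]))    # None
```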
Table 8 presents statistics from three full-scale experiments corresponding to Cases 1, 2 and 3. The number of possible matches in all three camera views, denoted here by n, is limited by the camera image containing the smallest number of detected particle centers in the selected frame. This number is subdivided into the numbers of successful matches in which three (n3/3) or two (n2/3) of the three correspondences belong to the same color class. The table also lists the correspondences associated with three different colors (n1/3), which are therefore not considered further, and the number of lost matches that cannot be determined at all by epipolar geometry (nlost).

Table 7. Measured number of ambiguities without (Na) and with (Nac) considering color classes.

| n | Na | Nac |
|---|---|---|
| 572 | 2.59 | 1.24 |
| 756 | 3.34 | 1.28 |
| 952 | 4.66 | 1.44 |
| 1535 | 9.27 | 2.02 |

Table 8. Matching particle color statistics in three camera views for the different configurations. The number of possible matches is denoted by n. The notation ni/3 is used for the number of matches where i of 3 correspondences belong to the same color class. The number of lost matches is denoted by nlost.

| | Case 1: Winglets | Case 2: Cylinder | Case 3: Swirl |
|---|---|---|---|
| n | 1020 | 1478 | 929 |
| n3/3 | 460 | 675 | 224 |
| n2/3 | 455 | 694 | 554 |
| n1/3 | 27 | 21 | 33 |
| nlost | 78 | 88 | 118 |

In Cases 1 and 2, n2/3 is similar to n3/3 because particles overlap in the different camera images. Case 3 (a highly three-dimensional flow in the transverse direction) is particularly challenging: tracers in the foreground move transversally in the direction opposite to the particles in the background. The probability of overlapping particle images therefore rises, and color classification becomes more difficult because of unknown (untrained) particle patterns. Table 8 also shows that in all three cases, epipolar associations with three different colors (n1/3) are very rare, amounting to only about 1.4% to 3.6% of n.
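The counts in Table 8 are internally consistent: in each case the four categories add up to n. The short sketch below is an illustrative re-computation from the published counts, not part of the authors' processing chain. It checks this property and derives the percentages quoted in the text (the dictionary layout and the function name `summarize` are arbitrary choices).

```python
# Counts from Table 8: (n, n3/3, n2/3, n1/3, nlost).
TABLE_8 = {
    "Case 1: Winglets": (1020, 460, 455, 27, 78),
    "Case 2: Cylinder": (1478, 675, 694, 21, 88),
    "Case 3: Swirl": (929, 224, 554, 33, 118),
}


def summarize(counts):
    """Return (% of n with three different colors, % of n yielding a 3-D position)."""
    n, n33, n23, n13, nlost = counts
    if n33 + n23 + n13 + nlost != n:
        raise ValueError("the four categories should add up to n")
    return 100.0 * n13 / n, 100.0 * (n33 + n23) / n


for case, counts in TABLE_8.items():
    three_colors, located = summarize(counts)
    print(f"{case}: n1/3 = {three_colors:.1f}% of n, located = {located:.1f}% of n")
```

For the published counts, n1/3 amounts to about 2.6%, 1.4% and 3.6% of n, and a 3-D position is obtained for about 90%, 93% and 84% of the possible matches.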
The rarity of such three-color associations is a further indication of the reliability of the developed color class recognition algorithm. Indeed, a failed match is much more often caused by an impossible epipolar association (nlost) than by a failed color association (n1/3).

5.4. Calculation of Spatial Tracer Coordinates

The spatial location of a tracer $p$ can be computed if homologous points are found in at least two different camera views. In this subsection the index $i = 1, 2, 3$ denotes the three camera views. Because of small inaccuracies in the calculated coordinates of the homologous points $s_i$ and in the camera parameters, the viewing rays do not necessarily intersect exactly in 3-D space. Accuracy and reliability increase when correspondences are found in all three camera views. The viewing ray of camera $i$ starts at the homologous point $s_i$, given in world coordinates on the sensor plane, and points in the direction $d_i = o_i - s_i$ towards the projection center $o_i$. All viewing rays should almost intersect at the sought spatial point $p$, where $s_i + t_i d_i = p$, i.e.:

$$\begin{pmatrix} x_{si} \\ y_{si} \\ z_{si} \end{pmatrix} + t_i \begin{pmatrix} x_{di} \\ y_{di} \\ z_{di} \end{pmatrix} = \begin{pmatrix} x \\ y \\ z \end{pmatrix} \tag{9}$$

Since each viewing ray contributes three equations but only one additional unknown $t_i$, the system is already overdetermined for a triangulation between two cameras only (6 equations for 5 unknowns: $x$, $y$, $z$, $t_1$ and $t_2$). If the solution is computed by adjustment of direct observations [23], this approach can easily be extended to three or more cameras. Rearranging (9) to

$$\begin{pmatrix} x \\ y \\ z \end{pmatrix} - t_i \begin{pmatrix} x_{di} \\ y_{di} \\ z_{di} \end{pmatrix} = \begin{pmatrix} x_{si} \\ y_{si} \\ z_{si} \end{pmatrix} \tag{10}$$

separates the unknowns, so that (10) can be written in matrix form:

$$\begin{pmatrix} 1 & 0 & 0 & x_{di} \\ 0 & 1 & 0 & y_{di} \\ 0 & 0 & 1 & z_{di} \end{pmatrix} \begin{pmatrix} x \\ y \\ z \\ -t_i \end{pmatrix} = \begin{pmatrix} x_{si} \\ y_{si} \\ z_{si} \end{pmatrix} \tag{11}$$

Combining all viewing rays results in an overdetermined system of equations of the form

$$\mathbf{A}\mathbf{x} = \mathbf{y} \tag{12}$$

The design matrix is denoted by $\mathbf{A}$; the vector $\mathbf{x}$ contains all unknowns $x$, $y$, $z$ and the negative scaling parameters $-t_i$, and the vector $\mathbf{y}$ contains all observations $x_{si}$, $y_{si}$ and $z_{si}$. For three corresponding points the system becomes

$$\begin{pmatrix}
1 & 0 & 0 & x_{d1} & 0 & 0 \\
0 & 1 & 0 & y_{d1} & 0 & 0 \\
0 & 0 & 1 & z_{d1} & 0 & 0 \\
1 & 0 & 0 & 0 & x_{d2} & 0 \\
0 & 1 & 0 & 0 & y_{d2} & 0 \\
0 & 0 & 1 & 0 & z_{d2} & 0 \\
1 & 0 & 0 & 0 & 0 & x_{d3} \\
0 & 1 & 0 & 0 & 0 & y_{d3} \\
0 & 0 & 1 & 0 & 0 & z_{d3}
\end{pmatrix}
\begin{pmatrix} x \\ y \\ z \\ -t_1 \\ -t_2 \\ -t_3 \end{pmatrix}
=
\begin{pmatrix} x_{s1} \\ y_{s1} \\ z_{s1} \\ x_{s2} \\ y_{s2} \\ z_{s2} \\ x_{s3} \\ y_{s3} \\ z_{s3} \end{pmatrix} \tag{13}$$

Finally, the unknowns in $\mathbf{x}$ are obtained by the least squares method:

$$\mathbf{x} = \left(\mathbf{A}^{\mathsf{T}}\mathbf{A}\right)^{-1}\mathbf{A}^{\mathsf{T}}\mathbf{y} \tag{14}$$

5.5. Experimental Determination of the Localization Uncertainty

To obtain a reliable order of magnitude for the measurement error, a reference plate with 49 circular marks was fixed on a manually adjusted translation stage with micrometer accuracy. It was placed inside the observation volume so that all marks could be imaged by each camera, and the 3-D coordinates of the marks were calculated as described above. The plate was then moved 5.98 mm towards the cameras using the translation stage, and the new 3-D positions of the marks were determined. Ideally, the shift should be identical for all 49 marks. The measured shifts had a mean value of 5.98 mm and a standard deviation of 0.0069 mm; the maximum measured shift was 5.9953 mm and the minimum 5.9677 mm, a spread of only 0.0276 mm. This spread corresponds to about 0.46% of the 5.98 mm displacement, indicating that the localization uncertainty is below 0.5% of the measured distance.

To track the particles in time, a three-frame algorithm based on the minimum-acceleration criterion is employed [2,24]. The computation of trajectories is described in detail in a separate publication [25].
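The least-squares intersection of Section 5.4 (equations (11) to (14)) and the three-frame linking criterion mentioned above can both be sketched in a few lines. This is a hedged illustration, not the authors' code: it uses plain Python with a small hand-written Gaussian elimination instead of a linear-algebra library, all function names are hypothetical, and the linking cost is one common formulation of the minimum-acceleration criterion [2] with a unit time step, without the search-volume gating and conflict handling a real tracker needs.

```python
"""Sketch: least-squares intersection of viewing rays (eqs. 11-14) and
three-frame minimum-acceleration linking. Pure Python, no external libraries."""


def solve_linear(m, v):
    """Solve the square system m u = v by Gaussian elimination with partial pivoting."""
    size = len(v)
    aug = [list(row) + [v[i]] for i, row in enumerate(m)]
    for col in range(size):
        pivot = max(range(col, size), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, size):
            f = aug[r][col] / aug[col][col]
            for k in range(col, size + 1):
                aug[r][k] -= f * aug[col][k]
    u = [0.0] * size
    for r in range(size - 1, -1, -1):
        tail = sum(aug[r][k] * u[k] for k in range(r + 1, size))
        u[r] = (aug[r][size] - tail) / aug[r][r]
    return u


def triangulate(sensor_points, centers):
    """Return (p, t): the point p = (x, y, z) solving eq. (14) for all rays
    s_i + t_i d_i, and the ray parameters t_i.

    sensor_points -- homologous points s_i in world coordinates (sensor plane)
    centers       -- projection centres o_i; ray direction d_i = o_i - s_i
    """
    k = len(sensor_points)
    cols = 3 + k                      # unknowns x, y, z, -t_1 ... -t_k
    design, obs = [], []              # rows of A and entries of y (eq. 13)
    for i, (s, o) in enumerate(zip(sensor_points, centers)):
        d = [oc - sc for oc, sc in zip(o, s)]
        for axis in range(3):
            row = [0.0] * cols
            row[axis] = 1.0           # identity block for x, y, z
            row[3 + i] = d[axis]      # direction component multiplies -t_i
            design.append(row)
            obs.append(s[axis])
    # Normal equations (A^T A) x = A^T y.
    ata = [[sum(r[a] * r[b] for r in design) for b in range(cols)] for a in range(cols)]
    aty = [sum(r[a] * y for r, y in zip(design, obs)) for a in range(cols)]
    u = solve_linear(ata, aty)
    return tuple(u[:3]), [-ui for ui in u[3:]]


def link_min_acceleration(x_prev, x_curr, candidates):
    """Pick the next position minimizing |x_next - 2 x_curr + x_prev|^2
    (a finite-difference acceleration with unit time step)."""
    def cost(c):
        return sum((cn - 2.0 * xc + xp) ** 2 for cn, xc, xp in zip(c, x_curr, x_prev))
    return min(candidates, key=cost)


# Synthetic rays through p = (1, 2, 3): each s_i lies behind o_i on the same ray.
p_true = (1.0, 2.0, 3.0)
centers = [(0.0, 0.0, 10.0), (10.0, 0.0, 10.0), (0.0, 10.0, 10.0)]
sensors = [tuple(o + 0.1 * (o - q) for o, q in zip(c, p_true)) for c in centers]
p, t = triangulate(sensors, centers)
print([round(v, 6) for v in p], [round(v, 6) for v in t])
```

With exact synthetic data the rays meet at (1, 2, 3) with every t_i equal to 11. Solving the normal equations as in (14) is adequate for such small, well-conditioned systems; for poorly conditioned camera geometries a QR- or SVD-based least-squares solver is generally the more robust choice.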
Figure 9 demonstrates that PTV data can be obtained within the whole three-dimensional observation volume around three winglets (Case 1). The highest and lowest measured velocities differ by a factor of 20, which already represents a challenge for 3-D PTV [2].

Figure 9. Visualization of trajectories in gas flow for Case 1 (winglets).

6. Conclusions and Perspectives

Dense trajectory bundles must be reconstructed to resolve small flow patterns in PTV experiments, which requires a high number of tracer particles. However, a high seeding density increases the number of ambiguities when searching for corresponding particle locations in different camera views. If particles can be distinguished, e.g., by color, the effective number density within each distinguishable subset is reduced. An original procedure has been developed to dye small tracer particles, localize them in 3-D and identify their color class on Bayer raw images; it has been used to investigate three different flow patterns by Particle Tracking Velocimetry. A post-processing strategy using Artificial Neural Networks, combined with a standardized particle dyeing procedure, results in high color recognition rates, typically between 80 and 90%. Furthermore, thanks to the large amount of available training data, the Artificial Neural Networks are robust enough for the procedure to be adapted rapidly to different conditions. The described method for classifying particle color is recommended when particle projections cover only a few pixels; in that case, classifying the color in RGB or HSI color space is error-prone because of artifacts introduced by the demosaicing process. Finally, the feasibility of particle recognition in 3-D gas flows has been demonstrated. Typically, 90% of all tracer particles can finally be localized with high precision (spatial uncertainty below 0.5%) using 3-D photogrammetry.
When color information is used, more tracers can be seeded into the flow, as required for PTV to resolve small-scale flow patterns. In future measurements, further color classes (starting with yellow) will be added. First tests with smaller fluorescent tracer particles also appear promising, although challenging. While the EMS particles employed here are suitable for the present conditions, i.e., slow gas flows at low Reynolds number, smaller particles are needed to investigate real turbulent flows at higher velocities. Furthermore, fluorescence is expected to eliminate the effect of specular reflection on the particle surface, which may be one cause of deteriorated color classification.

7. Acknowledgements

The authors would like to thank the DFG (Deutsche Forschungsgemeinschaft, Schwerpunktprogramm 1147) for the financial support of this project. Interesting discussions with R. V. Ayyagari and T. Ruskowski are gratefully acknowledged.

REFERENCES

[1] C. Bendicks, D. Tarlet, B. Michaelis, D. Thévenin and B. Wunderlich, “Coloured Tracer Particles Employed for 3-D Particle Tracking Velocimetry in Gas Flows,” In: W. Nitsche and C. Dobriloff, Eds., Imaging Measurement Methods for Flow Analysis, Springer, Heidelberg, 2009, pp. 93-102.

[2] N. T. Ouellette, H. Xu and E. Bodenschatz, “A Quantitative Study of Three-Dimensional Lagrangian Particle Tracking Algorithms,” Experiments in Fluids, Vol. 40, No. 2, 2006, pp. 301-313. doi:10.1007/s00348-005-0068-7

[3] T. Netzsch and B. Jähne, “Ein Schnelles Verfahren zur Lösung des Stereokorrespondenz-Problems bei der 3D-Particle Tracking Velocimetry,” Proceedings 15. DAGM Symposium, Lübeck, September 1993, pp. 27-29.

[4] H.-G. Maas, “Complexity Analysis for the Determination of Image Correspondences in Dense Spatial Target Fields,” International Archives of Photogrammetry and Remote Sensing, XXIX Part B5, 1992, pp. 102-107.

[5] G. A. Voth, A. La Porta, A. M. Crawford, J. Alexander and E. Bodenschatz, “Measurement of Particle Accelerations in Fully Developed Turbulence,” Journal of Fluid Mechanics, Vol. 469, 2002, pp. 121-160. doi:10.1017/S0022112002001842

[6] B. Lüthi, “Some Aspects of Strain, Vorticity and Material Element Dynamics as Measured with 3D Particle Tracking Velocimetry in a Turbulent Flow,” Ph.D. Thesis, Swiss Federal Institute of Technology of Zürich, 2002.

[7] Y. Suzuki and N. Kasagi, “Turbulent Air-Flow Measurement with the Aid of 3-D Particle Tracking Velocimetry in a Curved Square Bend,” Flow, Turbulence and Combustion, Vol. 63, No. 1-4, 1999, pp. 415-442.

[8] H.-G. Maas, T. Putze and P. Westfeld, “Recent Developments in 3D-PTV and Tomo-PIV,” In: W. Nitsche and C. Dobriloff, Eds., Imaging Measurement Methods for Flow Analysis, Springer, Heidelberg, 2009, pp. 53-62. doi:10.1007/978-3-642-01106-1_6

[9] A. V. Mikheev and V. M. Zubtsov, “Enhanced Particle Tracking Velocimetry (EPTV) with a Combined Two-Component Pair-Matching Algorithm,” Measurement Science and Technology, Vol. 19, No. 8, 2008, pp. 1-16. doi:10.1088/0957-0233/19/8/085401

[10] H. S. Tapia, J. A. G. Aragon, D. M. Hernandez and B. B. Garcia, “Particle Tracking Velocimetry (PTV) Algorithm for Non-Uniform and Non-Spherical Particles,” Electronics, Robotics and Automotive Mechanics Conference, Cuernavaca, September 2006, pp. 325-330. doi:10.1109/CERMA.2006.118

[11] L. Economikos, C. Shoemaker, K. Russ, R. S. Brodkey and D. Jones, “Toward Full-Field Measurements of Instantaneous Visualizations of Coherent Structures in Turbulent Shear Flows,” Experimental Thermal and Fluid Science, Vol. 3, No. 1, 1990, pp. 74-86. doi:10.1016/0894-1777(90)90102-D

[12] Y. G. Guezennec, R. S. Brodkey, N. Trigui and J. C. Kent, “Algorithms for Fully Automated Three-Dimensional Particle Tracking Velocimetry,” Experiments in Fluids, Vol. 17, No. 4, 1994, pp. 209-219. doi:10.1007/BF00203039

[13] C. Bendicks, D. Tarlet, B. Michaelis, D. Thévenin and B. Wunderlich, “Use of Coloured Tracers in Gas Flow Experiments for a Lagrangian Flow Analysis with Increased Tracer Density,” 31st DAGM Symposium Proceedings, Heidelberg, 2009, pp. 392-401.

[14] R. Kuhn, R. Bordás, B. Wunderlich, B. Michaelis and D. Thévenin, “Colour Class Identification of Tracers Using Artificial Neural Networks,” Proceedings of 10th International Conference on Engineering Applications of Neural Networks (EANN), Thessaloniki, 2007, pp. 387-394.

[15] T. Etoh and K. Takehara, “Tracer Particles of Which Specific Gravity is Unity,” In Proceedings Sixth International Symposium on Flow Visualisation, 1992, pp. 878-881.

[16] R. Bordás, C. Bendicks, R. Kuhn, B. Wunderlich, D. Thévenin and B. Michaelis, “Coloured Tracer Particles Employed for 3D-PTV in Gas Flows,” Proceedings of 13th International Symposium on Flow Visualization, Nice, France, 1-4 July 2008.

[17] R. Ramanath, W. E. Snyder, G. L. Bilbro and W. A. Sander, “Demosaicing Methods for Bayer Color Arrays,” Journal of Electronic Imaging, Vol. 11, No. 3, 2002, pp. 306-315. doi:10.1117/1.1484495

[18] J. C. Crocker and D. G. Grier, “Methods of Digital Video Microscopy for Colloidal Studies,” Journal of Colloid and Interface Science, Vol. 179, No. 1, 1996, pp. 298-310. doi:10.1006/jcis.1996.0217

[19] H.-G. Maas, “Digitale Photogrammetrie in der Dreidimensionalen Strömungsmesstechnik,” Ph.D. Thesis, Swiss Federal Institute of Technology of Zürich, 1992.

[20] S. Nissen, “Neural Networks Made Simple” (Documentation of the FANN C/C++ Library 2005).

[21] J. Albertz and M. Wiggenhagen, “Guide for Photogrammetry and Remote Sensing,” 5th Edition, Herbert-Wichmann Verlag, Heidelberg, 2009.

[22] T. Luhmann, S. Robson, S. Kyle and I. Harley, “Close Range Photogrammetry,” Whittles Publishing, Dunbeath, 2006.
[23] V. G. S. Ernst, P. W. Sablik, J. Balendonck, Z. Houkes and P. P. L. Regtien, “A Surface Relief Meter Based on Trinocular Computer Vision,” 5th International Conference on Image Processing and Its Applications, Edinburgh, 4-6 July 1995, pp. 281-285.

[24] T. Dracos, “Three-Dimensional Velocity and Vorticity Measuring and Image Analysis Techniques,” Kluwer Academic Publishers, Dordrecht, 1996.

[25] D. Tarlet, C. Bendicks, Ch. Roloff, R. Bordás, B. Wunderlich, B. Michaelis and D. Thévenin, “Gas Flow Measurements by 3-D Particle Tracking Velocimetry Using Coloured Tracer Particles,” Submitted in December 2010 to Flow, Turbulence and Combustion.

Appendix: Theoretical Prediction of Ambiguities

A significant problem when using imaging measurement methods in 3-D flows is the occurrence of ambiguities during the spatial correspondence analysis. This is particularly true when a high number density of tracer particles is required, i.e., when resolving small-scale flow structures. The matching of particles between different images at a given time is mainly based on geometrical conditions such as the epipolar geometry. The plane formed by the object point and the perspective centers of two cameras intersects each image plane in a line (the epipolar line), and a corresponding tracer can only be found along this line. This reduces the search area from 2-D (the whole image) to 1-D (a line in the image). With more than two cameras, the search space shrinks further. Nevertheless, ambiguities cannot be completely avoided in practice.

The same problem arises when the correspondence analysis is performed in time to obtain a Lagrangian description of the flow field, where trajectories should be as long as possible without any interruption [2].
A restriction of the search space for successive time steps can be derived from physical considerations, taking into account only acceptable variations of velocity and acceleration and the local correlations between velocity vectors.

In what follows, the spatial correspondence problem is analyzed in greater detail. The expected number of ambiguities per particle, Na, in a three-camera arrangement is given by (15) [4] and is also illustrated in Figure 10:

$$N_a = \frac{4\,(n^2 - n)\,\varepsilon^2}{F\,\sin\alpha}\left(1 + \frac{b_{12}}{b_{23}} + \frac{b_{12}}{b_{13}}\right) \tag{15}$$

with

- $n$: total number of tracers in the image
- $F$: image size (in pixels)
- $\alpha$: intersecting angle between the epipolar lines in the third image
- $\varepsilon$: tolerance of the epipolar band
- $b_{xy}$: distance between camera x and camera y

The number of ambiguities becomes minimal when the cameras are arranged in an equilateral triangle, so that $b_{12} = b_{13} = b_{23}$ and $\alpha = 60°$. Under this condition, which holds for the present experimental setup, the term in parentheses reduces to the factor 3. Note that the number of ambiguities grows with the square of the number of tracers.

Introducing color classes for the tracers acts like a reduction of the tracer number density: the set of particles is separated by color into individual subsets, which reduces the number of ambiguities. Assuming that the colored particles are uniformly distributed in the camera images, the number of tracers $n_c$ of a particular color in each subset is $n/c$, where $c$ is the number of colors used. Since the correspondence problem is solved separately for each subset, replacing $n$ by $n_c = n/c$ in (15) shows that the number of ambiguities Nac decreases approximately with the square of the number of color classes:

$$N_{ac} = \frac{12\,(n_c^2 - n_c)\,\varepsilon^2}{F\,\sin\alpha}, \qquad n_c = \frac{n}{c} \tag{16}$$

Results obtained from this equation are listed in Table 9 and plotted in Figure 10 for the parameters of the present experiments: F = 1280 × 1024 pixels, α = 60° and ε = 1 pixel, with up to six color classes c and three different numbers of tracers n (500, 1000 and 1500). Theoretically, using three color classes reduces the number of ambiguities by almost 90% (e.g., from 10.56 to 1.17 for n = 1000), close to the factor c² = 9.

Figure 10. Theoretical reduction of the number of ambiguities when using up to six color classes (cf. Table 9). For comparison, the actual measured number of ambiguities for different numbers of particles (n = 572, 952, 1535) when using three color classes is also plotted (cf. Table 7).

Table 9. Number of ambiguities predicted by (16), see also Figure 10.

| c | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|
| Nac(c, n = 500) | 2.64 | 0.66 | 0.29 | 0.16 | 0.10 | 0.07 |
| Nac(c, n = 1000) | 10.56 | 2.64 | 1.17 | 0.66 | 0.42 | 0.29 |
| Nac(c, n = 1500) | 23.77 | 5.94 | 2.64 | 1.48 | 0.95 | 0.66 |
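Equations (15) and (16) are simple enough to evaluate directly. The sketch below does so for the parameters stated above. The function name `expected_ambiguities` is hypothetical, and equal baselines (an equilateral camera triangle) are assumed by default; since only baseline ratios enter (15), their absolute value (set to 1 here) does not matter.

```python
import math

# Parameters of the present experiments, as given in the Appendix.
F = 1280 * 1024            # image size in pixels
ALPHA = math.radians(60)   # intersecting angle of the epipolar lines
EPS = 1.0                  # tolerance of the epipolar band in pixels


def expected_ambiguities(n, c=1, b12=1.0, b13=1.0, b23=1.0):
    """Expected ambiguities per particle from eq. (15), with n replaced by
    n_c = n / c as in eq. (16) when c color classes are used."""
    nc = n / c
    baselines = 1 + b12 / b23 + b12 / b13   # = 3 for an equilateral camera triangle
    return 4 * (nc ** 2 - nc) * EPS ** 2 / (F * math.sin(ALPHA)) * baselines


for n in (500, 1000, 1500):
    row = [expected_ambiguities(n, c) for c in range(1, 7)]
    print(f"n = {n}: " + "  ".join(f"{v:.2f}" for v in row))
```

Rounded to two decimals, the printed rows reproduce Table 9.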
