Journal of Information Security

Vol.5 No.2(2014), Article ID:45019,7 pages DOI:10.4236/jis.2014.52007

Illegal Access Detection in the Cloud Computing Environment

Rasim Alguliev, Fargana Abdullaeva

Institute of Information Technology ANAS, Baku, Azerbaijan

Email: director@iit.ab.az, farqana@iit.ab.az

Copyright © 2014 by authors and Scientific Research Publishing Inc.

This work is licensed under the Creative Commons Attribution International License (CC BY).

http://creativecommons.org/licenses/by/4.0/

Received 15 December 2013; revised 10 January 2014; accepted 18 January 2014

ABSTRACT

In this paper, a detection method for illegal access to the cloud infrastructure is proposed. The detection process is based on a collaborative filtering algorithm constructed on the cloud model. First, the normal behavior of the user is formed as a cloud model; then these models are compared with each other using the cosine similarity method, and the deviations from the normal behavior are evaluated by applying the collaborative filtering method. If the deviation value is above the threshold, the user who gained access to the system is evaluated as illegal; otherwise he is evaluated as a real user.

Keywords: Cloud Computing; Masquerade Attack; Cloud Model; User Similarity; Collaborative Filtering

1. Introduction

The emergence of cloud computing technologies has significantly changed the way we use computing resources as well as the way we access and store our personal and business information. The new computing and communication paradigms based on these technologies give rise to new security problems. More precisely, in comparison with traditional technologies, the nature of the threats that cloud technologies pose to an organization’s infrastructure has changed. The Cloud Security Alliance (CSA), which is actively engaged in standardizing the security issues of cloud technologies, classifies the main threats brought by this technology as follows [1] :

• Threat 1. Abuse and Nefarious Use of Cloud Computing;

• Threat 2. Insecure Interfaces and APIs;

• Threat 3. Malicious Insiders;

• Threat 4. Shared Technology Issues;

• Threat 5. Data Loss or Leakage;

• Threat 6. Account or Service Hijacking;

• Threat 7. Unknown Risk Profile.

Among these threats, the 1st and the 3rd can be carried out in each layer of the cloud computing SPI (Software, Platform, Infrastructure) model. In both of these threats, in order to use the resources illegally, the attacker tries to present himself to the system as a legitimate user by capturing the legitimate user’s identity information. Such threats are known in the literature as masquerade attacks [2] . Moreover, according to the Cybersecurity Watch Survey [3] conducted by CERT in 2010, first place among the top 5 electronic crimes belongs to viruses, worms and other malicious code, and second place to unauthorized access.

The Twitter incident committed by a French hacker is an example of this type of attack. Several Twitter corporate and personal documents were leaked to the technology website TechCrunch [4] [5] , and customers’ accounts, including the account of US President Barack Obama, were illegally accessed [6] [7] . The attacker used a Twitter administrator’s password to gain access to Twitter’s corporate documents hosted on Google’s infrastructure as Google Docs. The damage was significant both for Twitter and for its customers.

A number of research works are devoted to the discovery of masquerade attacks. One of these studies [8] presents an approach that compares the command sequences of two users, based on the similarity measured between the 10 most recent commands and a user’s profile. This approach is not suitable for cloud infrastructure, however, because cloud systems are heterogeneous [9] [10] : the virtual machines that form a cloud system run under different operating systems (Windows, Linux, UNIX, network operating systems), and different commands are likely to be used for the same operation. For this reason, it is impossible to evaluate similar users by their command sequences.
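The heterogeneity argument can be illustrated with a small sketch. It assumes a hand-written lookup table, `OPERATION_MAP`, and a helper, `normalize`, both hypothetical and not part of the original work, that translate platform-specific commands into platform-neutral operation names. Two sessions that look entirely different at the command level then turn out to perform the same operations.

```python
# Hypothetical mapping from (platform, command) to a platform-neutral operation.
# The table is an illustration only; a real deployment would need a far larger one.
OPERATION_MAP = {
    ("linux", "ls"): "list_directory",
    ("windows", "dir"): "list_directory",
    ("linux", "rm"): "delete_file",
    ("windows", "del"): "delete_file",
    ("linux", "cp"): "copy_file",
    ("windows", "copy"): "copy_file",
}


def normalize(platform, command):
    """Return the abstract operation, or 'unknown' if the pair is unmapped."""
    return OPERATION_MAP.get((platform.lower(), command.lower()), "unknown")


# The raw command sequences share no command names at all ...
linux_session = [normalize("linux", c) for c in ["ls", "cp", "rm"]]
windows_session = [normalize("windows", c) for c in ["dir", "copy", "del"]]

# ... yet the sequences of operations are identical.
print(linux_session == windows_session)
```

Comparing raw command strings would treat these two sessions as unrelated, which is why a representation based on operations rather than commands is preferable in a heterogeneous environment.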

In another approach, a sequence alignment method, which is broadly used in bioinformatics, is applied to discover illegal accesses [11] [12] . As can be seen, most of the studies focus on detecting unusual or varying command sequences issued by the users.

Generally, the legitimate users of a computer system are familiar with the files on that system and are aware of their location, so any search for specific files is likely to be targeted and limited. A masquerader who gains access to the victim’s system illegitimately, however, is unlikely to be familiar with the structure and contents of the file system. His search is therefore likely to be widespread and untargeted, and the type and sequence of the commands he issues differ from those of the legitimate user. Taking this into account, a user profile reflecting search behavior is created in [13] [14] , and a one-class modeling technique is developed to detect illegal intrusion.

However, it should be noted that the more familiar the attacker is with the genuine user’s behavior, the more accurately he can imitate that user in the system. In this case, the above-mentioned methods lose their effectiveness and do not yield good results in detecting illegal accesses.

Typically, a user who gains access to the cloud system by masquerading as the genuine user has his own particular interest in the selected target resources. In this case, detecting illegal accesses by modeling the user’s specific interest may yield significant results.

In this paper we create a profile that reflects the interest behavior of each user, and this profile is represented as a cloud model. The cloud model reflects not the command sequence used by the user but the statistical characteristics of the operations the user performs. According to this method, in order to discover an illegal access, the normal behavior of a user is first modeled as a cloud model; then these models are compared and the deviations from this behavior are evaluated. If the deviation value exceeds the threshold, the user who gained access to the system is evaluated as illegal.

2. Masquerade Attack Detection System

Masquerade attacks are attacks that use a fake identity to gain unauthorized access to personal computer information through legitimate access identification.

The information most commonly used to detect masquerade attacks is contained in the actions the masquerader performs. This set of actions is known as a behavioral profile. Masquerade detection techniques are based on the premise that when a masquerader attacks the system, his behavior will deviate noticeably from that of the user. In other words, the normal behavior of the user is first modeled as a profile; this profile is then compared with the current behavior, and the deviations found are considered masquerade attacks.

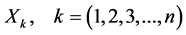

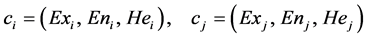

In this paper we propose a new approach for detecting a masquerader in the cloud environment. This approach is schematically described in Figure 1.

According to the architecture the masquerade detection is implemented in two phases:

1) Profile creation phase. The genuine user’s data are collected, and on the basis of the collected information a behavioral profile is created for each user. This phase consists of two components. The Event logs component collects information on all events during the session. The Feature Extraction Tool generates the cloud-model-based feature vector for the user.

2) Detection phase. A new user profile is generated on the basis of the new user records and compared with the genuine user profile. The similarity value calculation block computes the cosine similarity value for each input vector. The similarity value varies between 0 and 1.

If the similarity value is high, the input data are very close to the user profile; if it is very low, they are regarded as very different. According to the input data, the detection module classifies all users as genuine or imposter. For this purpose, it first calculates the deviation value using the filtering formula and then, on the basis of the threshold value accepted in the system, decides whether the user is genuine or an imposter.

3. User Profile Generation

In order to recognize anomalous behavior, the detection system must first form a user profile that characterizes normal behavior. In this section we describe the model underlying our approach to user profiling and discuss the implementation details of how profiles are formed from the user’s interest data.

3.1. Data Collection

The detection of the masquerade attacks is carried out on the basis of operations performed by the masqueraders in the system. This set of operations forms a user’s behavior profile.

Based on these real data, it is possible to construct the interest profile in terms of the cloud model. This interest profile also can be called the feature vector of the user.

3.2. Feature Vector Construction

Every action of the user has a score, which can be regarded as a drop of the interest cloud, while the score values of all operations together are regarded as the interest cloud. The cloud uses the expectation $Ex$, the entropy $En$ and the excess entropy $He$ to represent one digital value. Together these three digital characteristics of the $i$-th user’s interest cloud form the $i$-th characteristic cloud vector. When computing the similarity between users $i$ and $j$, we can judge from the similarity between the vectors $V_i$ and $V_j$. The key innovation here is obtaining the characteristic vector $V_i = (Ex_i, En_i, He_i)$ of the user’s interest cloud.

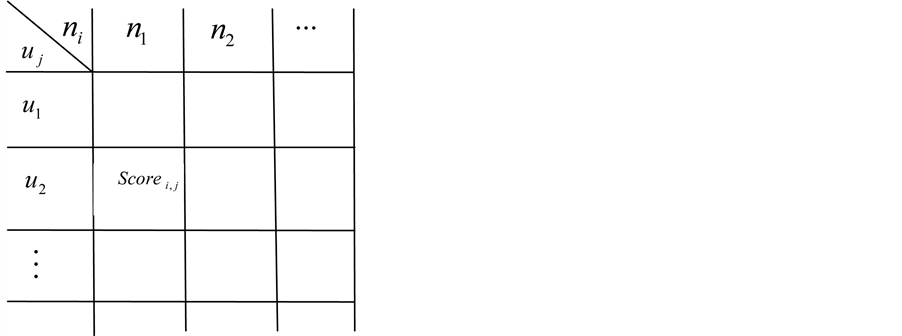

Assume that the matrix $R$ represents the score data set of the operations used by the users. Here, the rows correspond to the users, the columns to the operations used by the users, and the entry $r_{ik}$ is the score value of the $k$-th operation used by the $i$-th user. This assumption is represented in Figure 2.
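As an illustration, a score matrix of this kind could be assembled from per-session event logs. The sketch below assumes, purely for illustration, that the score of an operation is the number of times a user invoked it; the text does not fix how scores are assigned, and the names `build_score_matrix`, `events`, `users` and `operations` as well as the sample data are hypothetical.

```python
from collections import Counter


def build_score_matrix(events, users, operations):
    """Return a list of rows; row i holds the scores of user i per operation.

    Assumption: the score of an operation is its invocation count.
    """
    counts = Counter(events)  # (user, operation) -> number of occurrences
    return [[counts[(u, op)] for op in operations] for u in users]


# Hypothetical event log: (user, operation) pairs collected during sessions.
events = [
    ("alice", "read"), ("alice", "read"), ("alice", "write"),
    ("bob", "read"), ("bob", "delete"), ("bob", "delete"), ("bob", "delete"),
]
users = ["alice", "bob"]
operations = ["read", "write", "delete"]

R = build_score_matrix(events, users, operations)
for user, row in zip(users, R):
    print(user, row)
```

Each row of `R` is the raw material from which one user's cloud feature vector is later derived.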

In order to get the cloud feature vector $V_i = (Ex_i, En_i, He_i)$ of the user, we need a backward cloud generator.

Assume that the set of score values of the operations used is denoted as $X = \{x_1, x_2, \ldots, x_n\}$. Then an algorithm to determine the digital values $(Ex, En, He)$ can be constructed as follows:

Input: a set of score values of operations

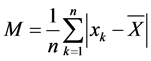

Output: the numerical characteristics $(Ex, En, He)$

1) The calculation of the average score value over the score values of the $n$ operations applied by the user

(1)

Figure 1. Masquerade attack detection system.

Figure 2. Score data matrix.

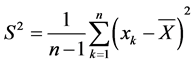

2) Absolute central moment calculation

(2)

3) The calculation of the variance of the event

(3)

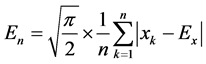

4) The calculation of the mean operation value

(4)

5) The calculation of the dispersion of operations

(5)

6) The calculation of the entropy

(6)

In this work the characteristic vector $V_i = (Ex_i, En_i, He_i)$ of the user cloud is determined by the above-mentioned algorithm.
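A backward cloud generator of this kind can be sketched as follows. The sketch assumes the moment-based variant commonly described in the cloud-model literature (sample mean, first-order absolute central moment and sample variance, without certainty degrees); it is not confirmed to match the formulas of this paper term by term. The function name `backward_cloud` and the clamping of a negative difference to zero are choices made here.

```python
import math


def backward_cloud(scores):
    """Estimate (Ex, En, He) from a list of score values.

    Assumption: the commonly used moment-based backward cloud generator
    without certainty degrees; not necessarily the paper's exact form.
    """
    n = len(scores)
    if n < 2:
        raise ValueError("at least two score values are required")

    mean = sum(scores) / n                                      # sample mean
    abs_moment = sum(abs(x - mean) for x in scores) / n         # first absolute central moment
    variance = sum((x - mean) ** 2 for x in scores) / (n - 1)   # sample variance

    ex = mean                                    # expectation
    en = math.sqrt(math.pi / 2) * abs_moment     # entropy
    # The difference can be slightly negative for small samples,
    # so it is clamped to zero before taking the square root.
    he = math.sqrt(max(variance - en ** 2, 0.0)) # excess (hyper) entropy
    return ex, en, he


# Hypothetical score values of five operations used by one user.
ex, en, he = backward_cloud([1, 2, 3, 4, 5])
print(ex, en, he)
```

Applying `backward_cloud` to each row of the score matrix yields one three-component feature vector per user, whatever the number of operations in that row.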

4. Detecting Anomalous Behavior

For the detection of the anomalous behavior in the cloud infrastructure first of all let us provide comparison of the cloud vectors. Let the vector  present the cloud of

present the cloud of  -th user, then the similarity between the

-th user, then the similarity between the  -th and the

-th and the  -th users is labeled as

-th users is labeled as  and is calculated as follows:

and is calculated as follows:

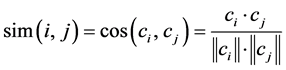

(7)

(7)

where .

.
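The cosine similarity between two cloud vectors can be sketched in a few lines. The function name `cloud_similarity`, the sample vectors and the convention of returning 0 for an all-zero vector are assumptions made for illustration.

```python
import math


def cloud_similarity(v_i, v_j):
    """Cosine similarity between two cloud vectors (Ex, En, He)."""
    dot = sum(a * b for a, b in zip(v_i, v_j))
    norm_i = math.sqrt(sum(a * a for a in v_i))
    norm_j = math.sqrt(sum(b * b for b in v_j))
    if norm_i == 0 or norm_j == 0:
        return 0.0  # convention chosen here for an all-zero vector
    return dot / (norm_i * norm_j)


profile = (3.0, 1.5, 0.5)  # hypothetical stored profile of a user
session = (6.0, 3.0, 1.0)  # same direction as the profile
other = (0.5, 3.0, 2.0)    # differently shaped interest cloud

print(cloud_similarity(profile, session))
print(cloud_similarity(profile, other))
```

Because the three characteristics are non-negative, the result stays between 0 and 1, in line with the range stated for the similarity value calculation block. The measure compares only the direction of the vectors, so `session`, which is twice `profile`, is treated as fully similar.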

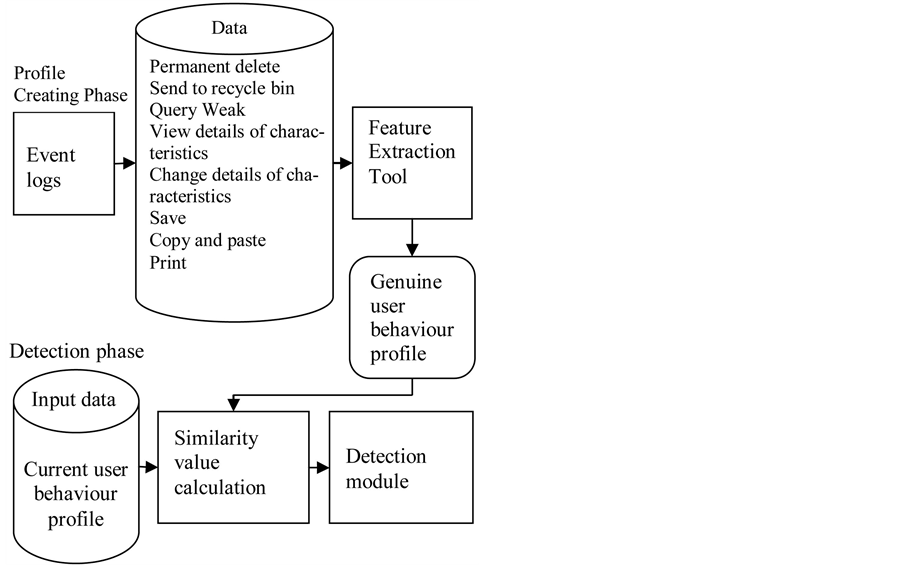

After the calculation of the similarity measure the detection module in Figure 1 classifies the input vector and identifies the access as normal or anomalous. For the implementation of this process the following method is proposed.

5. Classifying User Behavior

It should be noted that deviations can occur even between the input data of a user and his own profile. In order to decide whether an access is normal or anomalous, it is necessary to calculate the degree of this deviation. The deviation value can be calculated via the following filtering formula:

(8)

Here $sim(i, j)$ is a weight ratio, which represents the similarity value between the $i$-th and the $j$-th users, and $r_{jk}$ is the score value of the $k$-th operation used by the $j$-th user.

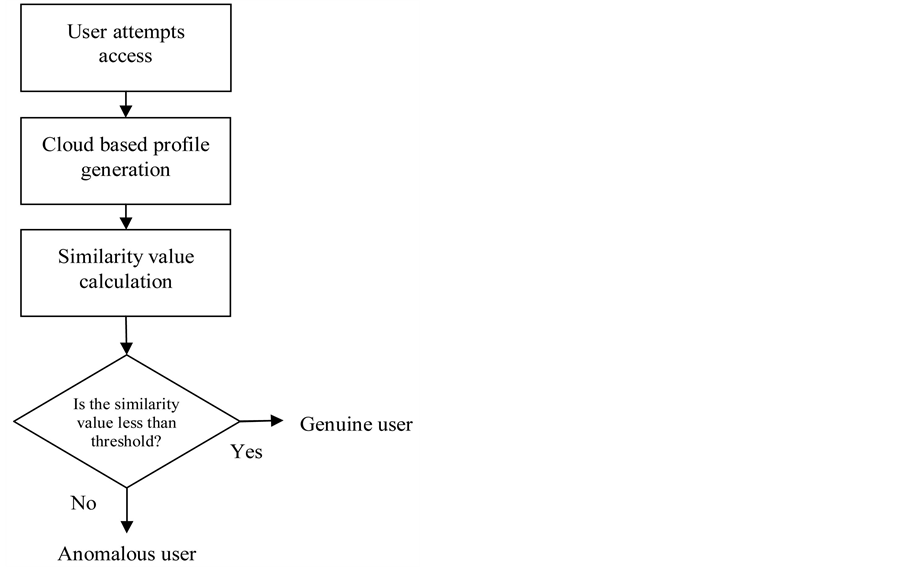

The classification of the users is carried out on the basis of the threshold value accepted in the system. If the deviation value is less than the threshold value, the system evaluates the user as genuine; otherwise he is evaluated as anomalous.
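The threshold decision can be sketched as follows. Since the exact filtering formula is not reproduced here, the sketch assumes one plausible collaborative-filtering form: the expected score of each operation is the similarity-weighted average of the stored users' scores, and the deviation is the mean absolute gap between the observed and the expected scores. The functions `predicted_score`, `deviation` and `classify`, the sample data and the threshold are all hypothetical.

```python
def predicted_score(similarities, scores_for_operation):
    """Similarity-weighted average of the stored users' scores for one operation."""
    total_weight = sum(similarities)
    if total_weight == 0:
        return 0.0
    weighted = sum(w * r for w, r in zip(similarities, scores_for_operation))
    return weighted / total_weight


def deviation(observed, similarities, score_matrix):
    """Mean absolute gap between observed scores and weighted predictions.

    observed[k] is the session's score for operation k;
    score_matrix[j][k] is the score of stored user j for operation k.
    This form is an assumption, not the paper's formula.
    """
    gaps = []
    for k, obs in enumerate(observed):
        column = [row[k] for row in score_matrix]
        gaps.append(abs(obs - predicted_score(similarities, column)))
    return sum(gaps) / len(gaps)


def classify(deviation_value, threshold):
    """Below the threshold: genuine; otherwise anomalous."""
    return "genuine" if deviation_value < threshold else "anomalous"


score_matrix = [[2, 1, 0], [4, 1, 0]]  # hypothetical stored scores of two users
similarities = [0.5, 0.5]              # hypothetical similarity weights
THRESHOLD = 1.0                        # hypothetical system threshold

normal = deviation([3, 1, 0], similarities, score_matrix)
odd = deviation([0, 0, 9], similarities, score_matrix)
print(classify(normal, THRESHOLD), classify(odd, THRESHOLD))
```

The choice of threshold governs the trade-off between false alarms and missed masqueraders and would have to be tuned on real audit data.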

The operation flow (Figure 3) that covers the above cases can be constructed as follows:

6. Existing Masquerade Datasets

There are a number of datasets that make it possible to evaluate the performance of masquerade attack detection techniques.

SEA dataset. Most papers about the masquerader detection use this dataset [14] with its associated configuration. The SEA consists of the commands collected from the UNIX account audit data. Among all fields of audit data provided by account only the username and the command were taken. The data describe 50 different users each issuing 15,000 commands. The first 5000 commands are considered as genuine. The remaining 10,000 commands of each user are divided into 100 blocks consisting of 100 commands each.
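The SEA partitioning described above can be sketched for a single user. The command stream below is a synthetic placeholder, not real SEA data, and the function name `split_sea_user` is hypothetical.

```python
def split_sea_user(commands, train_size=5000, block_size=100):
    """Split one user's command list into training data and test blocks."""
    training = commands[:train_size]
    rest = commands[train_size:]
    blocks = [rest[i:i + block_size] for i in range(0, len(rest), block_size)]
    return training, blocks


# Placeholder command stream standing in for one user's 15,000 commands.
commands = [f"cmd{i % 7}" for i in range(15000)]

training, blocks = split_sea_user(commands)
print(len(training), len(blocks), len(blocks[0]))  # prints: 5000 100 100
```

A detector is then trained on `training` and asked to label each of the 100 test blocks as genuine or masquerade.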

Greenberg dataset. This dataset [15] contains the data from 168 UNIX users. Users are classified into four groups: novice programmers, experienced programmers, computer scientists and non-programmers. The data are stored in the plain text files that record the following information: the session start and end times, the command line as entered by the user, the current working directory, any alias expansion of the previous command, an indication whether the line entered has a history expansion or not, and any error detected in the command line.

Figure 3. Flowchart for illegal access detection in cloud computing environment.

Purdue University dataset. The Purdue (PU) dataset [16] consists of the UNIX command histories of 4 users of the Purdue Millennium Lab, collected over four months. Few works use this dataset, which may be due to its small number of users.

RUU dataset. This dataset was collected by the Columbia IDS group [17] and consists of Windows commands. It was collected from 34 normal volunteer users and 14 masquerade users. The authors model how normal users typically search a file system and use these models to detect unusual searches that may be considered masquerades. The dataset includes records of the searches conducted by masqueraders, each of whom had to perform a specific task, finding any data useful for financial gain on a previously unknown file system, within 15 minutes.

These datasets suffer, partially or fully, from several deficiencies that prevent their adoption for cloud environments. From this perspective, their most significant weakness is the lack of real masquerade data: no command sequences were issued by real attackers, and only the RUU dataset includes real masquerades, but these follow a predefined, limited scenario. The main shortcomings of these datasets are:

• Cloud systems are heterogeneous, and the user audits may be distributed among VMs running distinct (e.g., Windows, Linux, and network) operating systems.

• Command arguments and other useful details are absent, such as when the user commands were issued, the duration of each user’s session and the type of operations performed by the users in the system.

• They are not suitable for training or testing any cloud detection system because of their small size.

• Any efficient cloud dataset should provide coverage of attacks in all cloud service models (SaaS, PaaS, and IaaS).

At present, there is no standard dataset for testing masquerade attack detection techniques in the cloud infrastructure. This is related to the need for special tools and special permissions to access the cloud infrastructure, to the distribution of audit data across different environments (e.g., Windows, Linux, and network), and to the huge size of the audit data required for cloud systems (more than 20 GB). In the future, it would be valuable to carry out investigations in this area in order to create such datasets.

7. Conclusion

In this paper, a collaborative filtering algorithm based on the cloud model is proposed for the detection of masquerade attacks in the cloud infrastructure. One of the advantages of this model is that the similarity between users is identified on the basis of the cloud model. The similarity measurement method based on the cloud model does not require a strict comparison between the score values of the operations used by different users. Instead, we calculate the statistical features of the score values of all operations used by the user at the access point, compare the statistical features of the input data, and on this basis determine the similarity between the input data.

8. Future Work

Future work will focus on conducting experiments to demonstrate the effectiveness of applying the collaborative filtering algorithm constructed on the cloud model to the illegal access detection problem in the cloud computing environment.

References

[1] Cloud Security Alliance (2010) Top Threats to Cloud Computing. https://cloudsecurityalliance.org/topthreats/csathreats.v1.0.pdf

[2] Salem, M.B. and Stolfo, S.J. (2011) Data Collection and Analysis for Masquerade Attack Detection: Challenges and Lessons Learned. Columbia University Computer Science Technical Reports, Columbia University, 8 p.

[3] (2010) 2010 Cybersecurity Watch Survey: Cybercrime Increasing Faster than Some Company Defenses. CERT, 17 p.

[4] Arrington, M. (2009) In Our Inbox: Hundreds of Confidential Twitter Documents. http://techcrunch.com/2009/07/14/in-our-inbox-hundreds-of-confidential-twitter-documents/

[5] Takahashi, D. (2010) French Hacker Who Leaked Twitter Documents to TechCrunch Is Busted. http://venturebeat.com/2010/03/24/french-hacker-who-leaked-twitter-documents-to-techcrunch-is-busted/

[6] Danchev, D. (2009) ZDNET: French Hacker Gains Access to Twitter’s Admin Panel. http://www.zdnet.com/blog/security/french-hacker-gains-access-totwitters-admin-panel/3292

[7] Allen, P. (2010) Obama’s Twitter Password Revealed after French Hacker Arrested for Breaking into U.S. President’s Account. http://www.dailymail.co.uk/news/article-1260488/Barack-Obamas-Twitter-password-revealed-French-hacker-arrested.html

[8] Lane, T. and Brodley, C.E. (1997) Sequence Matching and Learning in Anomaly Detection for Computer Security. Proceedings of the AAAI Workshop on AI Approaches to Fraud Detection and Risk Management, AAAI Press, 43-49.

[9] Alguliev, R.M. and Abdullayeva, F.C. (2013) Identity Management Based Security Architecture of Cloud Computing on Multi-Agent Systems. Proceedings of the Third International Conference on Innovative Computing Technology, London, 29-31 August, 123-126. http://dx.doi.org/10.1109/INTECH.2013.6653643

[10] Əliquliyev, R.M. and Abdullayeva, F.C. (2013) Bulud texnologiyalarının təhlükəsizlik problemlərinin tədqiqi və analizi. İnformasiya Texnologiyaları Problemləri, 1, 3-14.

[11] Coull, S.E., Branch, J., Szymanski, B. and Breimer, E. (2003) Intrusion Detection: A Bioinformatics Approach. Proceedings of the 19th Annual Computer Security Applications Conference, Las Vegas, 8-12 December, 24-33.

[12] Coull, S.E. and Szymanski, B.K. (2008) Sequence Alignment for Masquerade Detection. Computational Statistics and Data Analysis, 52, 4116-4131. http://dx.doi.org/10.1016/j.csda.2008.01.022

[13] Stolfo, S.J., Salem, M.B. and Keromytis, A.D. (2012) Fog Computing: Mitigating Insider Data Theft Attacks in the Cloud. Proceedings of the IEEE Symposium on Security and Privacy Workshops, San Francisco, 125-128.

[14] http://www.schonlau.net/intrusion.html

[15] Greenberg, S. (1988) Using Unix: Collected Traces of 168 Users. Report, University of Calgary.

[16] Lane, T. and Brodley, C.E. (1997) An Application of Machine Learning to Anomaly Detection. Proceedings of the 20th National Information Systems Security Conference, 14 February, 366-380.

[17] Salem, M.B. and Stolfo, S.J. (2011) Modeling User Search Behavior for Masquerade Detection. Proceedings of the 14th International Conference on Recent Advances in Intrusion Detection, 181-200.