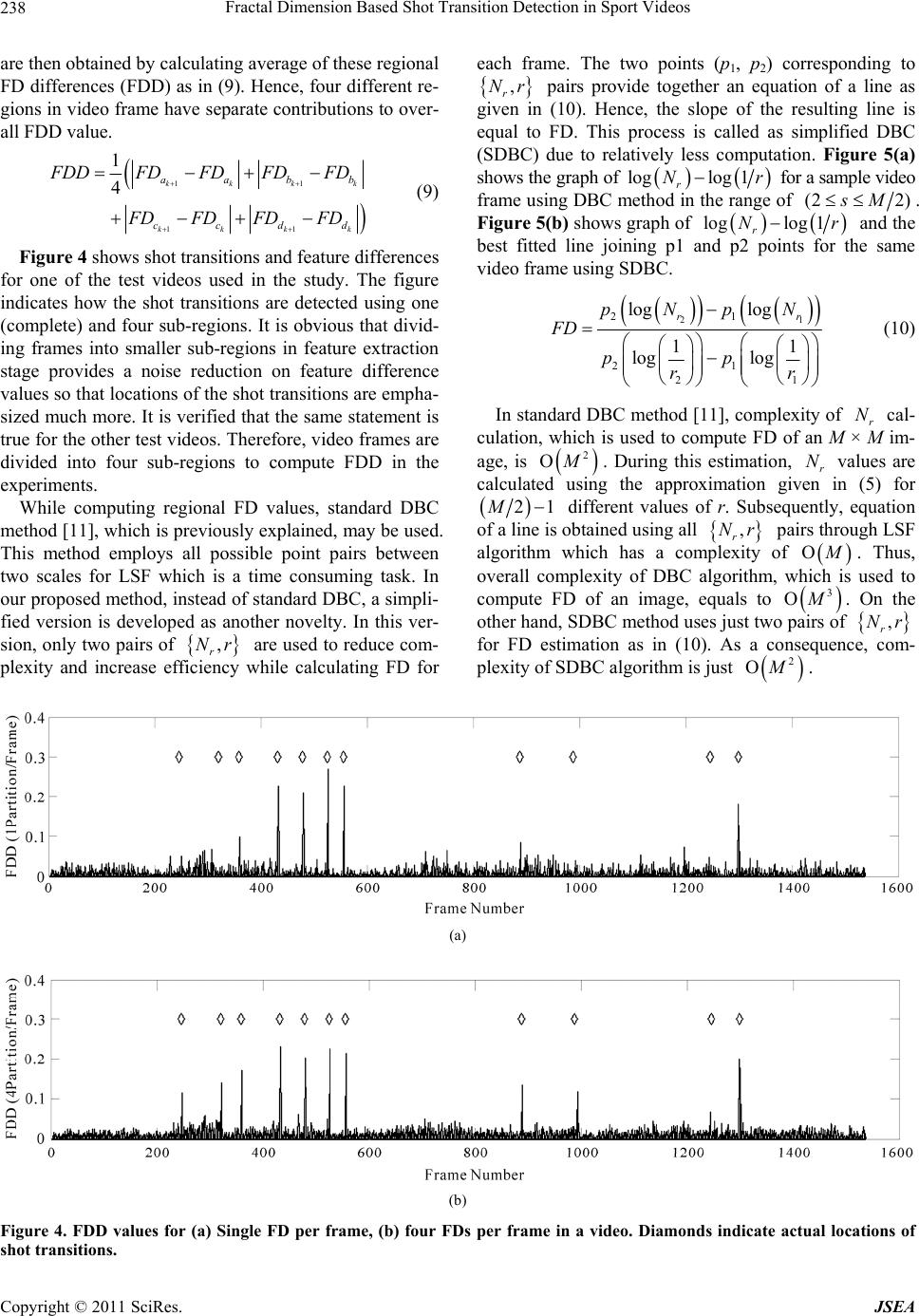

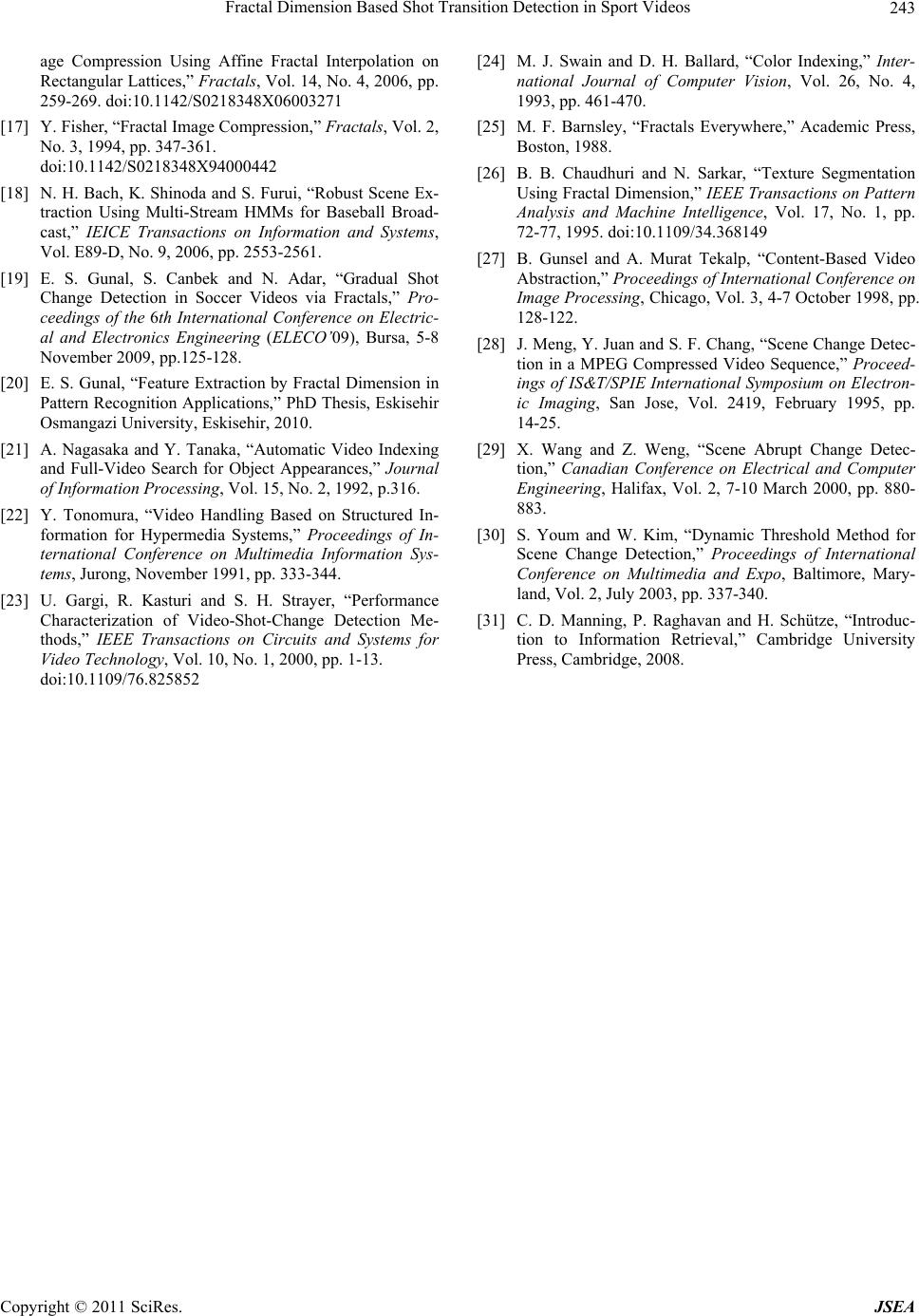

Journal of Software Engineering and Applications, 2011, 4, 235-243
doi:10.4236/jsea.2011.44026 Published Online April 2011 (http://www.SciRP.org/journal/jsea)
Copyright © 2011 SciRes. JSEA

Fractal Dimension Based Shot Transition Detection in Sport Videos

Efnan Sora Gunal¹, Selcuk Canbek², Nihat Adar²

¹Department of Electrical and Electronics Engineering, Eskisehir Osmangazi University, Eskisehir, Turkiye; ²Department of Computer Engineering, Eskisehir Osmangazi University, Eskisehir, Turkiye.
Email: {esora, selcuk, nadar}@ogu.edu.tr

Received March 23rd, 2011; revised March 28th, 2011; accepted April 2nd, 2011.

ABSTRACT

The growing number of application fields for video has boosted the demand to analyze and organize video libraries for efficient scene analysis and information retrieval. This paper addresses the detection of shot transitions, which plays a crucial role in scene analysis, using a novel method based on fractal dimension (FD), which carries information on the roughness of the image intensity surface and on textural structure. The proposed method is tested on sport videos, including soccer and tennis matches, that contain a considerable number of abrupt and gradual shot transitions. Experimental results indicate that the FD based shot transition detection method offers promising performance with respect to the pixel and histogram based methods available in the literature.

Keywords: Scene Analysis, Shot Transition, Fractal Dimension, Differential Box Counting

1. Introduction

Video can be simply defined as an audio-visual information type. With the rapid advances in communication technology, computer performance and storage media, video is now available in various applications such as internet conferencing, multimedia authoring systems, e-education and video-on-demand systems. As the application fields of video increase, the demand for organizing video scenes and retrieving the desired information from huge databases increases as well.
As a consequence, scene analysis on video has become an important research topic [1,2]. Scenes are formed by semantically related individual shots, which are uninterrupted segments of a video frame sequence with static or continuous camera motion [3]. Accurate shot transition detection therefore plays a crucial role in organizing video content into meaningful parts for scene analysis [4]. Shot transitions may be either abrupt or gradual. In an abrupt shot transition, the change of video content occurs over a single frame. In a gradual shot transition, however, the content change takes place gradually through a short sequence of frames. Gradual transitions are further divided into several subgroups such as fading, dissolving, wiping, noise and camera movements (pan, tilt, zoom) [5].

The performance of shot transition detection methods depends directly on the features that are used to represent the video content. Existing shot transition detection techniques utilize differences in features over consecutive video frames. Pixel differences and histogram differences are the most widely used approaches [6-8].

In our study, we propose a novel shot transition detection approach which employs fractal dimension (FD) information. FD, which was introduced by Mandelbrot [9], is an important tool to extract the roughness of textural features from images. Most real-world objects have complex and irregular structures that cannot be described by using ideal mathematical shapes such as cubes, cones and cylinders defined in Euclidean geometry. It is, however, easier to characterize these objects by their FD. The use of FD in image feature extraction and segmentation is motivated by the observation that FD has a strong correlation with human judgment of surface roughness and is also relatively insensitive to image deformation [10]. FD has been previously applied to many areas in image processing and pattern recognition.
FD, which is calculated from gray level images [11], can be used as a feature in the pattern recognition process. Textural segmentation, classification and shape analysis are applicable research areas of FD in satellite, medical and natural images [12,13]. Image zooming [14], video coding [15] and compression [16,17] are other research topics where FD is used as a feature. Reference [18] is one of the few studies where FD is used for scene analysis. There, FD is employed as one of the features for the scene extraction process rather than for shot transition detection. In that study, shot transition detection, which is an important part of scene extraction, is carried out by a pixel-difference based motion intensity method with a simple threshold approach. Furthermore, it is reported that the performance of shot transition detection is poor, especially for gradual transitions.

In our study, the proposed shot transition detection method utilizes FD difference (FDD) values of consecutive video frames. Using FD rather than pixel or histogram information better captures the roughness of the image intensity surface and the textural information of video frames, so that the locations of shot transitions can be detected with higher precision. The benefits of using FD for shot transition detection were previously shown in [19], particularly for gradual transitions. In addition to exploiting the FD information of video frames in shot transition detection, this study (originating from [20]) offers further novelties and improvements with respect to [19]. FD values are now estimated from sub-regions of video frames. In this way, the locations of the shot transitions are emphasized much more clearly. Another novelty of the study concerns the computation of FD information itself.
The standard FD estimation method is simplified so that computational complexity is drastically reduced with just a slight deviation in the obtained FD value. The FD based shot transition detection method is tested on sport videos, including soccer and tennis matches, which contain a considerable number of shot transitions compared with other videos. The proposed method is compared with well-known pixel and histogram based methods. Experimental results reveal that the proposed method not only offers comparable performance with respect to the abovementioned detection methods but also surpasses them in some cases.

The rest of the paper is organized as follows: Section 2 summarizes the pixel and histogram based shot transition detection approaches that are available in the literature. In Section 3, the computation of FD is briefly described. Section 4 introduces the proposed shot transition detection method based on FD. Section 5 presents the experimental work and results. Finally, conclusions are given in Section 6.

2. Pixel and Histogram Based Shot Transition Detection

In the literature, as mentioned before, pixel differences and histogram differences are the most widely used approaches for shot transition detection. Sum of Absolute Differences (SAD) is the fundamental pixel difference method. In this approach, the difference between two frames is obtained by calculating a value that represents the overall change in the pixel intensities of the images [4,6]. The sum of absolute pixel-wise intensity differences between two frames is used as the frame difference as shown in (1), where $f_1$ and $f_2$ are the intensity values of consecutive frames, $X$ and $Y$ are the height and width, and $x$ and $y$ represent pixel coordinates of the frames, respectively. All pixel difference methods are very sensitive to noise and camera motion [4].
$$SAD(f_1, f_2) = \frac{1}{XY} \sum_{x=0}^{X-1} \sum_{y=0}^{Y-1} \left| f_1(x, y) - f_2(x, y) \right| \tag{1}$$

The histogram difference method is another widely used approach in shot transition detection. A histogram represents the distribution of pixel values in an image. Gray level and color histograms are very useful tools to measure the difference between two frames [4,8,21,22]. There exist various histogram difference methods such as the bin to bin difference (B2B) and histogram intersection (INT) methods [23,24]. B2B is computed as in (2), where $h_1$ and $h_2$ are the histograms of consecutive frames and $N$ is the number of pixels in a single frame.

$$D_{B2B}(h_1, h_2) = \frac{1}{2N} \sum_{i} \left| h_1(i) - h_2(i) \right| \tag{2}$$

The histogram intersection of two consecutive frames can be obtained using

$$\mathrm{Intersection}(h_1, h_2) = \frac{1}{N} \sum_{i} \min\left( h_1(i), h_2(i) \right) \tag{3}$$

The histogram difference ($D_{INT}$) is then computed using

$$D_{INT}(h_1, h_2) = 1 - \mathrm{Intersection}(h_1, h_2) \tag{4}$$

In [6,8], pixel and histogram based methods are compared and it is observed that the histogram methods provide a good trade-off between accuracy and speed. All these methods, however, have several disadvantages in determining shot transitions. They are sensitive to camera motion, large-sized object motion, noise, sudden object appearance, pan and zoom, which makes it particularly difficult to determine gradual transitions.

3. Fractal Dimension

Several FD calculation methods have been developed following the increased use of fractal geometry in different research fields. The choice of method depends on the application field and on algorithmic complexity. All these FD computation methods, however, follow the same principles of the Hausdorff-Besicovitch (D) dimension [25]. The D dimension of a bounded set $A$ in $\mathbb{R}^n$ is a real number used to characterize the geometrical complexity of $A$. Here, the set $A$ is called a fractal set if its D dimension is strictly greater than its topological dimension [26].
The D dimension of a subset $X$ of Euclidean space can be replaced by a count of the open balls needed to cover $X$. The box counting dimension ($D_B$) of a set $A$ is defined as

$$D_B = \lim_{r \to 0} \frac{\log(N_r)}{\log(1/r)} \tag{5}$$

where $N_r$ is the number of boxes of size $r$ needed to cover $A$ [9].

According to [11], gray level values are assumed to form a 3-D surface in order to calculate the FD of an image. The three dimensional view of the gray scale image given in Figure 1(a) is shown in Figure 1(b).

FD can be estimated by using the well-known Differential Box Counting (DBC) method, also known as the Blanket method. To calculate $N_r$, the image of size $M \times M$ pixels is scaled down to a size $s \times s$ (Figure 2), where $1 < s \le M/2$ and $s$ is an integer. $r$ is the scaling ratio and is calculated using

$$r = s / M \tag{6}$$

The image is considered as a 3-D space with $(x, y, z)$ axes. While $(x, y)$ denotes the 2-D position, the $z$ axis denotes the gray level. After the $(x, y)$ space is partitioned into grids of size $s \times s$, each grid contains a column of boxes of size $s \times s \times h$. If the total number of gray levels is $G$, then $G/h$ is equal to $M/s$. Let the minimum and maximum gray levels of the image in the $(i, j)$th grid fall in box numbers $k$ and $l$, respectively. For each $(i, j)$th grid, $n_r(i, j)$ is the contribution to $N_r$ as shown in (7). Considering the contributions from all grids, $N_r$ is then obtained as in (8).

$$n_r(i, j) = l - k + 1 \tag{7}$$

$$N_r = \sum_{i, j} n_r(i, j) \tag{8}$$

$N_r$ is obtained for different values of $r$, i.e., different values of $s$. Then, using (5), FD is estimated by a linear least square fitting (LSF) of $\log(N_r)$ against $\log(1/r)$.

4. FD Based Shot Transition Detection

In this study, video frames, which will be segmented into shots, are first converted to gray scale before computing FD. Here, gray level values are assumed to form a 3-D surface just as in the standard DBC method. Instead of computing a single FD value for the complete frame, each frame is first partitioned into four regions of equal size as illustrated in Figure 3.
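As an illustration of the DBC procedure summarized in Section 3, the following Python sketch counts boxes as in (7) and (8) and fits the slope of log(N_r) against log(1/r) by least squares. It is a minimal sketch under stated assumptions, not the implementation used in the experiments: the image is assumed to be a square list of rows of integer gray levels, the box height is taken as h = sG/M so that G/h equals M/s, and the names `count_boxes`, `least_squares_slope` and `dbc_fd` are hypothetical.

```python
import math


def count_boxes(image, s, gray_levels=256):
    """Return N_r for grid size s (differential box counting)."""
    m = len(image)                     # assumption: image is square, M x M
    h = s * gray_levels / m            # box height chosen so that G/h == M/s
    total = 0
    for top in range(0, m, s):
        for left in range(0, m, s):
            block = [image[y][x]
                     for y in range(top, min(top + s, m))
                     for x in range(left, min(left + s, m))]
            k = int(min(block) // h)   # box holding the minimum gray level
            l = int(max(block) // h)   # box holding the maximum gray level
            total += l - k + 1         # n_r(i, j) as in (7)
    return total                       # N_r as in (8)


def least_squares_slope(xs, ys):
    """Slope of the least-squares line through the points (xs, ys)."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    num = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    den = sum((x - mean_x) ** 2 for x in xs)
    return num / den


def dbc_fd(image, sizes, gray_levels=256):
    """Estimate FD as the slope of log(N_r) against log(1/r), with r = s/M."""
    m = len(image)
    xs = [math.log(m / s) for s in sizes]
    ys = [math.log(count_boxes(image, s, gray_levels)) for s in sizes]
    return least_squares_slope(xs, ys)


if __name__ == "__main__":
    flat = [[100] * 16 for _ in range(16)]   # perfectly smooth surface
    print(dbc_fd(flat, [2, 4, 8]))
```

For a perfectly flat image every grid cell contributes a single box, so the estimate equals 2, the topological dimension of a plane; rougher intensity surfaces tend to push the estimate towards 3.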
The features used in shot transition detection are then obtained by averaging these regional FD differences (FDD) as in (9), where $k$ and $k+1$ index consecutive frames and $a$, $b$, $c$, $d$ denote the four regions. Hence, the four regions of a video frame make separate contributions to the overall FDD value.

$$FDD = \frac{1}{4} \left( \left| FD_a^{k} - FD_a^{k+1} \right| + \left| FD_b^{k} - FD_b^{k+1} \right| + \left| FD_c^{k} - FD_c^{k+1} \right| + \left| FD_d^{k} - FD_d^{k+1} \right| \right) \tag{9}$$

Figure 1. (a) 100 × 100 gray scale image; (b) Corresponding 3-D intensity surface.

Figure 2. Image intensity surface.

Figure 3. Regions in consecutive frames in (a) soccer video (not shot transition), (b) tennis video (shot transition).

Figure 4 shows shot transitions and feature differences for one of the test videos used in the study. The figure indicates how the shot transitions are detected using one (complete) region and four sub-regions. Dividing frames into smaller sub-regions in the feature extraction stage reduces the noise in the feature difference values, so that the locations of the shot transitions are emphasized much more. The same observation was verified for the other test videos. Therefore, video frames are divided into four sub-regions to compute FDD in the experiments.

While computing regional FD values, the standard DBC method [11], explained previously, may be used. This method employs all possible point pairs between two scales for LSF, which is a time consuming task. In our proposed method, a simplified version is developed instead of standard DBC as another novelty. In this version, only two $(N_r, r)$ pairs are used to reduce complexity and increase efficiency while calculating FD for each frame. The two points $(p_1, p_2)$ corresponding to these $(N_r, r)$ pairs together define a line as given in (10). Hence, the slope of the resulting line is equal to FD. This process is called simplified DBC (SDBC) because it requires relatively less computation.
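The following Python sketch illustrates how the regional feature in (9) might be combined with a two-point SDBC estimate. It is a hedged sketch rather than the implementation used in the experiments: frames are assumed to be square lists of rows of integer gray levels with an even side length, the box height is taken as h = sG/M, the two grid sizes `s1` and `s2` are supplied by the caller, and the names `count_boxes`, `sdbc_fd`, `quadrants` and `fdd` are hypothetical.

```python
import math


def count_boxes(region, s, gray_levels=256):
    """Return N_r for grid size s (differential box counting)."""
    m = len(region)                    # assumption: region is square, M x M
    h = s * gray_levels / m            # box height chosen so that G/h == M/s
    total = 0
    for top in range(0, m, s):
        for left in range(0, m, s):
            block = [region[y][x]
                     for y in range(top, min(top + s, m))
                     for x in range(left, min(left + s, m))]
            total += int(max(block) // h) - int(min(block) // h) + 1
    return total


def sdbc_fd(region, s1, s2, gray_levels=256):
    """Two-point FD estimate: slope of the line through the
    (log(1/r), log(N_r)) points obtained for grid sizes s1 and s2."""
    m = len(region)
    n1 = count_boxes(region, s1, gray_levels)
    n2 = count_boxes(region, s2, gray_levels)
    return (math.log(n2) - math.log(n1)) / (math.log(m / s2) - math.log(m / s1))


def quadrants(frame):
    """Split a square frame into four equal regions (a, b, c, d)."""
    half = len(frame) // 2
    return [[row[c:c + half] for row in frame[r:r + half]]
            for r in (0, half) for c in (0, half)]


def fdd(frame_k, frame_k1, s1, s2):
    """Average absolute regional FD difference between two frames, as in (9)."""
    diffs = [abs(sdbc_fd(a, s1, s2) - sdbc_fd(b, s1, s2))
             for a, b in zip(quadrants(frame_k), quadrants(frame_k1))]
    return sum(diffs) / 4


if __name__ == "__main__":
    flat = [[100] * 16 for _ in range(16)]
    rough = [[255 * ((x + y) % 2) for x in range(16)] for y in range(16)]
    print(fdd(flat, flat, 4, 2), fdd(flat, rough, 4, 2))
```

In this toy example, two identical frames give an FDD of zero, whereas a smooth frame followed by a maximally rough checkerboard frame gives a large FDD, which is the kind of peak a threshold would flag as a transition.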
Figure 5(a) shows the graph of $\log(N_r)$ versus $\log(1/r)$ for a sample video frame using the DBC method in the range $2 \le s \le M/2$. Figure 5(b) shows the graph of $\log(N_r)$ versus $\log(1/r)$ and the line joining the $p_1$ and $p_2$ points for the same video frame using SDBC.

$$FD = \frac{\log N_r(p_2) - \log N_r(p_1)}{\log\left(1/r_{p_2}\right) - \log\left(1/r_{p_1}\right)} \tag{10}$$

In the standard DBC method [11], the complexity of the $N_r$ calculation used to compute the FD of an $M \times M$ image is $O(M^2)$. During this estimation, $N_r$ values are calculated using the approximation given in (5) for $M/2 - 1$ different values of $r$. Subsequently, the equation of a line is obtained from all $(N_r, r)$ pairs through the LSF algorithm, which has a complexity of $O(M)$. Thus, the overall complexity of the DBC algorithm used to compute the FD of an image equals $O(M^3)$. On the other hand, the SDBC method uses just two $(N_r, r)$ pairs for FD estimation as in (10). As a consequence, the complexity of the SDBC algorithm is just $O(M^2)$.

Figure 4. FDD values for (a) Single FD per frame, (b) four FDs per frame in a video. Diamonds indicate actual locations of shot transitions.

Figure 5. Graph of log(N_r) versus log(1/r) for a single video frame using (a) DBC for $2 \le s \le M/2$, (b) DBC and the line fitted using the SDBC method through points $p_1$, $p_2$, where $r_{p_1} = 1/4$, $r_{p_2} = 1/40$.

FD values computed by SDBC are close to the ones obtained by the standard DBC method. Moreover, in the shot transition detection process, achieving a low processing time in computing the FD differences of consecutive video frames is much more important than computing the FD values precisely. Figure 6 shows the similarity between SDBC and standard DBC for the features of a test video. Shot transition locations can be clearly observed in both cases (Figure 6(a) and (b)). Figure 6(c) shows the absolute error between the FDD values obtained by the DBC and SDBC methods.
The low error values indicate that SDBC provides FD values quite similar to those of the DBC method. As explained before, when the FD of an image is computed using the DBC method, the image is divided into $s \times s$ grids, where $1 < s \le M/2$. Since the grids contain few pixels for small values of $s$, $n_r$ would be close to 1. Hence, $N_r$ gets close to $(1/r)^2$. Figure 5 shows that the slope of the line starts changing for small values of $s$. To overcome this problem, the minimum value of $s$ is set to 6. Thus, the low limit of the $r$ value at point $p_2$ is computed as 1/40 using (6). In the DBC method, $N_r$ is an integer between $(1/r)^2$ and $(1/r)^3$. For $s = M/2$, $N_r$ is in the range (4, 8). On the other hand, for $s = M/4$, $N_r$ would have 49 possible values in the range (16, 64). In this sense, the high limit of the $r$ value at point $p_1$ is defined as 1/4. Consequently, the $N_r$ computation becomes more accurate. Additionally, the minimum average absolute error is achieved with this choice when the FDD features obtained for different values of $p_1$, $p_2$ using the DBC and SDBC methods are compared (Figure 6(c)).

After the computation of FDD values, shot transitions can be located using a threshold approach. Thresholding is an important tool in video shot transition detection. Among different threshold techniques [27-29], the typical approach is to define a fixed threshold value. Nevertheless, this approach may not provide good detection performance because every video has different characteristics. While a high threshold value increases the number of missed detections, a lower threshold increases the number of false detections. In our study, therefore, a dynamic threshold method (DTM) is used, in which the threshold is dynamically changed during the shot transition detection process [30]. The threshold in DTM is computed by considering the presence of a shot transition and the variation of frame contents. DTM consists of two threshold stages: fixed and dynamic. Initially, a fixed threshold is used.
After a shot transition occurs, a dynamic threshold takes the place of the fixed one. The dynamic value is determined as given in (11), where $T_{low}$ is a fixed threshold, $T_{high}$ is a dynamically raised threshold, and $t$ is the elapsed time after a shot transition occurrence.

$$T_{dyn} = T_{low} + \left( T_{high} - T_{low} \right) f(t), \quad T_{high} > T_{low} \tag{11}$$

In (11), the time varying function $f(t)$ can be linearly defined as

$$f(t) = 1 - \frac{t}{t_{max}}, \quad 0 \le t \le t_{max} \tag{12}$$

where $t_{max}$ is calculated by

1 max 0 max 1 nk nk t tn . (13)

In all shot transition detection methods tested in the study, DTM is used as the thresholding tool.

5. Experimental Study

In the experimental work, soccer and tennis match videos containing various types of shot transitions are used to evaluate the proposed method. Sport videos are used for this study in particular because this type of video contains many object movements and shot transitions. A total of seven videos consisting of 17,985 frames are used in testing. The frame size is 240 × 320 pixels. Before testing, the actual locations of shot boundaries in the test videos were identified by a thorough manual analysis. Thus, the total numbers of gradual (dissolve, pan, wipe, zoom) and abrupt shot transitions were determined as 89 and 95, respectively. Figures 7-11 contain sample video frames from the test set displaying abrupt, dissolve, pan, wipe, and zoom type shot transitions.

Figure 6. FDD values of a test video, (a) SDBC method (4 Partitions/Frame); (b) DBC method; (c) Absolute error between DBC and SDBC ($r_{p_1} = 1/4$, $r_{p_2} = 1/40$). Diamonds indicate actual locations of shot transitions.

After manual detection of the shot transition locations in the test videos, the method proposed in the current study (FDDnew), the previously proposed FDD method (FDDold) [19], and the classic methods (SAD, B2B, INT) are applied to detect the previously tagged shot transition locations.
The detection performances of the methods are measured and compared using the popular F1 measure [31], which uses both recall and precision information. The recall and precision parameters are obtained as in (14) and (15). In these formulas, $N_M$, $N_C$ and $N_F$ represent the numbers of missed, correct and false detections, respectively.

$$RECALL = \frac{N_C}{N_C + N_M} \tag{14}$$

$$PRECISION = \frac{N_C}{N_C + N_F} \tag{15}$$

In our study, recall is the ratio of the number of shot transitions correctly detected by a particular method to the total number of actual shot transitions. Similarly, precision is the ratio of the number of correctly detected shot transitions to the total number of correctly and incorrectly detected shot transitions. The F1 measure is then computed using

$$F1 = \frac{2 \times precision \times recall}{precision + recall} \tag{16}$$

Tables 1 and 2 show the detection results for the abrupt and gradual shot transitions, respectively, for all videos. Additionally, Table 3 provides an overall analysis without considering distinct types of shot transitions. In the case of abrupt shot transitions, INT has the best performance whereas B2B and FDDnew are the runners-up. Moreover, FDDnew offers a considerable improvement over FDDold thanks to a smaller number of false detections. In the case of gradual shot transitions, the FDDnew and FDDold methods offer the best performance. Again, the number of false detections is smaller in the FDDnew method than in FDDold.

Figure 7. Abrupt shot transition in two consecutive frames.

Figure 8. Dissolve-type shot transition in consecutive frames.

Figure 9. Pan-type shot transition in consecutive frames.

Figure 10. Wipe-type shot transition in consecutive frames.

Figure 11. Zoom-type shot transition in consecutive frames.

Table 1. Detection results of abrupt shot transitions.

| Method | NC | NF | NM | Total Transitions | F1 |
|---|---|---|---|---|---|
| FDDnew | 75 | 69 | 20 | 95 | 0.63 |
| FDDold | 69 | 139 | 26 | 95 | 0.46 |
| SAD | 53 | 33 | 42 | 95 | 0.59 |
| B2B | 85 | 81 | 10 | 95 | 0.65 |
| INT | 61 | 5 | 34 | 95 | 0.76 |

Table 2. Detection results of gradual shot transitions.

| Method | NC | NF | NM | Total Transitions | F1 |
|---|---|---|---|---|---|
| FDDnew | 41 | 45 | 48 | 89 | 0.47 |
| FDDold | 49 | 64 | 40 | 89 | 0.49 |
| SAD | 9 | 15 | 80 | 89 | 0.16 |
| B2B | 35 | 49 | 54 | 89 | 0.41 |
| INT | 10 | 7 | 79 | 89 | 0.19 |

Table 3. Detection results of all types of shot transitions.

| Method | NC | NF | NM | Total Transitions | F1 |
|---|---|---|---|---|---|
| FDDnew | 116 | 114 | 68 | 184 | 0.56 |
| FDDold | 118 | 203 | 66 | 184 | 0.47 |
| SAD | 62 | 48 | 122 | 184 | 0.42 |
| B2B | 120 | 130 | 64 | 184 | 0.55 |
| INT | 71 | 12 | 113 | 184 | 0.53 |

If the results of the overall analysis in Table 3 are examined, the proposed method (FDDnew) achieves the highest F1 value among all the approaches. This analysis indicates that the proposed method offers promising performance in the detection of shot transitions with respect to the classical detection methods. Moreover, it surpasses the classical methods (SAD, B2B, INT) in the detection of gradual transitions, which is a more challenging task than detecting abrupt transitions.

6. Conclusions

In this work, an FD based shot transition detection method is proposed as an alternative to the classical pixel and histogram based methods available in the literature. The proposed method employs the FD differences of consecutive video frames to detect shot transitions, so that the roughness of the image intensity surface and the textural information of video frames are taken into consideration. The difference of FD values for consecutive frames depends on the variations of frame contents. Since these variations are very large during shot transitions, FD can be employed as an alternative tool in the detection of these transitions. The study also contains novel approaches other than the use of FD information for shot transition detection.
The first novelty is to utilize regional FD values instead of a single FD per video frame in detecting the difference between consecutive frames. Using regional FD based feature differences reduces the noise that may cause false alarms in the detection process. Thus, the locations of shot transitions are strongly emphasized and detection performance is improved. Another novel approach of the study is the development of SDBC, which reduces the computational complexity of standard DBC in the computation of FD values. Because of the high processing cost of the DBC algorithm, the use of FD information in the detection of shot transitions might otherwise not be suitable. In our study, however, the complexity of FD calculation is significantly reduced so that FD can be easily employed for detection. Moreover, the reduction in FD computation time would speed up not only the shot transition detection process but also other processes using fractal information in different research fields. The proposed shot transition detection method is tested on sport videos, including soccer and tennis matches, and the efficacy of the method is compared with the classical pixel and histogram based methods. Experimental results verify that the proposed method not only offers comparable performance with respect to the abovementioned detection methods but also surpasses them in the case of gradual shot transitions. As future work, a hybrid shot transition detection algorithm combining classical and FDD based methods is planned.

REFERENCES

[1] W. A. C. Fernando, C. N. Canagarajah and D. R. Bull, “A Unified Approach to Scene Change Detection in Uncompressed and Compressed Video,” IEEE Transactions on Consumer Electronics, Vol. 46, No. 3, 2000, pp. 769-779. doi:10.1109/30.883445

[2] W. A. C. Fernando, C. N. Canagarajah and D. R. Bull, “Scene Change Detection Algorithms for Content Based Video Indexing and Retrieval,” Electronics & Communication Engineering Journal, Vol. 13, No. 3, 2001, pp. 117-126. doi:10.1049/ecej:20010302

[3] O. Marques and B. Furht, “Content-Based Image and Video Retrieval,” Kluwer Academic Publishers, Massachusetts, 2002.

[4] J. Korpi-Anttilla, “Automatic Color Enhancement and Scene Change Detection of Digital Video,” Licentiate Thesis, Helsinki University of Technology, Finland, 2002.

[5] H. Lu and Y. P. Tan, “An Effective Post-Refinement Method for Shot Boundary Detection,” IEEE Transactions on Circuits and Systems for Video Technology, Vol. 15, No. 11, 2005, pp. 1407-1421.

[6] J. S. Boreczky and L. A. Rowe, “A Comparison of Video Shot Boundary Detection Techniques,” Journal of Electronic Imaging, Vol. 5, No. 2, 1996, pp. 122-128. doi:10.1117/12.238675

[7] A. Ekin, “Sports Video Processing for Description, Summarization and Search,” PhD Thesis, University of Rochester, Rochester, 2003.

[8] H. J. Zhang, A. Kankanhalli and S. W. Smoliar, “Automatic Partitioning of Full-Motion Video,” Multimedia Systems, Vol. 1, No. 1, 1993, pp. 10-28. doi:10.1007/BF01210504

[9] B. Mandelbrot, “Fractals: Form, Chance and Dimension,” Freeman, San Francisco, 1977.

[10] Y. Liu and Y. Li, “Image Feature Extraction and Segmentation Using Fractal Dimension,” Proceedings of International Conference on Information, Communication and Signal Processing, Singapore, Vol. 2, 9-12 September 1997, pp. 975-979.

[11] N. Sarkar and B. B. Chaudhuri, “An Efficient Differential Box-Counting Approach to Compute Fractal Dimension of Image,” IEEE Transactions on Systems, Man, and Cybernetics, Vol. 24, No. 1, 1994, pp. 115-120. doi:10.1109/21.259692

[12] J. L. Véhel and P. Mignot, “Multifractal Segmentation of Images,” Fractals, Vol. 2, No. 3, 1994, pp. 371-377.

[13] T. Sato, M. Matsuoka and H. Takayasu, “Fractal Image Analysis of Natural Scenes and Medical Images,” Fractals, Vol. 4, No. 4, 1996, pp. 463-468. doi:10.1142/S0218348X96000571

[14] K. Revathy, G. Raju and S. R. Prabhakaran Nayar, “Image Zooming by Wavelets,” Fractals, Vol. 8, No. 3, 2000, pp. 247-253.

[15] C. Hufnagl and A. Uhl, “Fractal Block-Matching in Motion-Compensated Video Coding,” Fractals, Vol. 8, No. 1, 2000, pp. 35-48.

[16] V. Drakopoulos, P. Bouboulis and S. Theodoridis, “Image Compression Using Affine Fractal Interpolation on Rectangular Lattices,” Fractals, Vol. 14, No. 4, 2006, pp. 259-269. doi:10.1142/S0218348X06003271

[17] Y. Fisher, “Fractal Image Compression,” Fractals, Vol. 2, No. 3, 1994, pp. 347-361. doi:10.1142/S0218348X94000442

[18] N. H. Bach, K. Shinoda and S. Furui, “Robust Scene Extraction Using Multi-Stream HMMs for Baseball Broadcast,” IEICE Transactions on Information and Systems, Vol. E89-D, No. 9, 2006, pp. 2553-2561.

[19] E. S. Gunal, S. Canbek and N. Adar, “Gradual Shot Change Detection in Soccer Videos via Fractals,” Proceedings of the 6th International Conference on Electrical and Electronics Engineering (ELECO’09), Bursa, 5-8 November 2009, pp. 125-128.

[20] E. S. Gunal, “Feature Extraction by Fractal Dimension in Pattern Recognition Applications,” PhD Thesis, Eskisehir Osmangazi University, Eskisehir, 2010.

[21] A. Nagasaka and Y. Tanaka, “Automatic Video Indexing and Full-Video Search for Object Appearances,” Journal of Information Processing, Vol. 15, No. 2, 1992, p. 316.

[22] Y. Tonomura, “Video Handling Based on Structured Information for Hypermedia Systems,” Proceedings of International Conference on Multimedia Information Systems, Jurong, November 1991, pp. 333-344.

[23] U. Gargi, R. Kasturi and S. H. Strayer, “Performance Characterization of Video-Shot-Change Detection Methods,” IEEE Transactions on Circuits and Systems for Video Technology, Vol. 10, No. 1, 2000, pp. 1-13. doi:10.1109/76.825852

[24] M. J. Swain and D. H. Ballard, “Color Indexing,” International Journal of Computer Vision, Vol. 26, No. 4, 1993, pp. 461-470.

[25] M. F. Barnsley, “Fractals Everywhere,” Academic Press, Boston, 1988.

[26] B. B. Chaudhuri and N. Sarkar, “Texture Segmentation Using Fractal Dimension,” IEEE Transactions on Pattern Analysis and Machine Intelligence, Vol. 17, No. 1, 1995, pp. 72-77. doi:10.1109/34.368149

[27] B. Gunsel and A. Murat Tekalp, “Content-Based Video Abstraction,” Proceedings of International Conference on Image Processing, Chicago, Vol. 3, 4-7 October 1998, pp. 128-122.

[28] J. Meng, Y. Juan and S. F. Chang, “Scene Change Detection in a MPEG Compressed Video Sequence,” Proceedings of IS&T/SPIE International Symposium on Electronic Imaging, San Jose, Vol. 2419, February 1995, pp. 14-25.

[29] X. Wang and Z. Weng, “Scene Abrupt Change Detection,” Canadian Conference on Electrical and Computer Engineering, Halifax, Vol. 2, 7-10 March 2000, pp. 880-883.

[30] S. Youm and W. Kim, “Dynamic Threshold Method for Scene Change Detection,” Proceedings of International Conference on Multimedia and Expo, Baltimore, Maryland, Vol. 2, July 2003, pp. 337-340.

[31] C. D. Manning, P. Raghavan and H. Schütze, “Introduction to Information Retrieval,” Cambridge University Press, Cambridge, 2008.