L. YU ET AL.

228

problem, so many research papers have all expounded.

Most of the existing heuristic algorithm is based on an

independent task, and dependent task is researched in a

homogeneous environment with the same structure, which

composed of uniformity machine. For example, Shang [1]

and others adopt a new list of scheduling technology to

control dependence task scheduling in environment with

the same structure. Wu [2] and others based on topo-

logical sort have proposed a fast local search algorithm

to enhance scheduling of quality and efficiency. Oliveira

[3] and others have proposed a task of scheduling algo-

rithm with fixed priority under a distributed computer

system. Above of algorithms are too many constrains,

and not the general, Carter [4] and others have developed

Generation Scheduling (GS) to control dependent task in

heterogeneous system. The main steps of GS algorithm

can be described as follows:

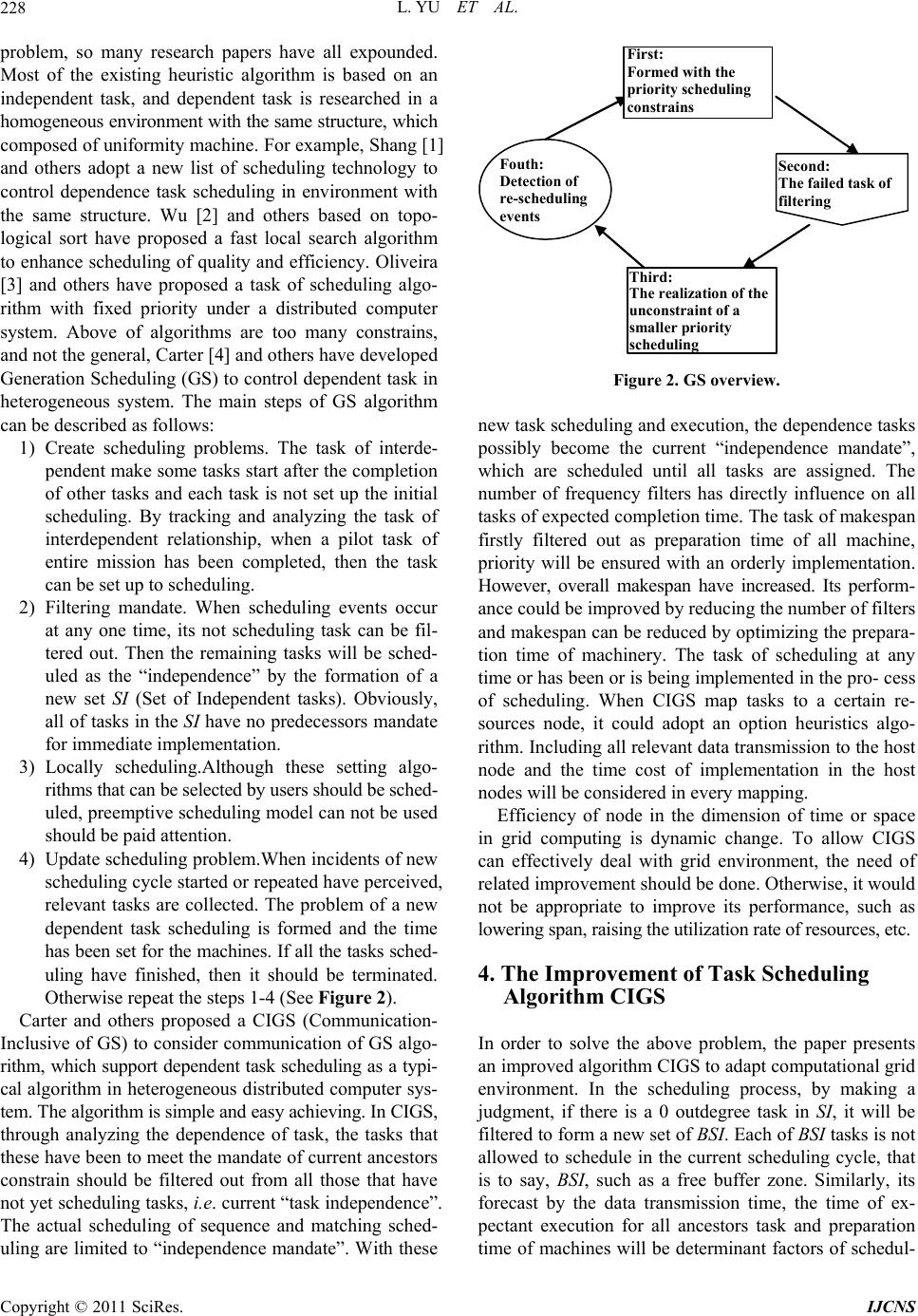

1) Create scheduling problems. The task of interde-

pendent make some tasks start after the completion

of other tasks and each task is not set up the initial

scheduling. By tracking and analyzing the task of

interdependent relationship, when a pilot task of

entire mission has been completed, then the task

can be set up to scheduling.

2) Filtering mandate. When scheduling events occur

at any one time, its not scheduling task can be fil-

tered out. Then the remaining tasks will be sched-

uled as the “independence” by the formation of a

new set SI (Set of Independent tasks). Obviously,

all of tasks in the SI have no predecessors mandate

for immediate implementation.

3) Locally scheduling.Although these setting algo-

rithms that can be selected by users should be sched-

uled, preemptive scheduling model can not be used

should be paid attention.

4) Update scheduling problem.When incidents of new

scheduling cycle started or repeated have perceived,

relevant tasks are collected. The problem of a new

dependent task scheduling is formed and the time

has been set for the machines. If all the tasks sched-

uling have finished, then it should be terminated.

Otherwise repeat the steps 1-4 (See Figure 2).

Carter and others proposed a CIGS (Communication-

Inclusive of GS) to consider communication of GS algo-

rithm, which support dependent task scheduling as a typi-

cal algorithm in heterogeneous distributed computer sys-

tem. The algorithm is simple and easy achieving. In CIGS,

through analyzing the dependence of task, the tasks that

these have been to meet the mandate of current ancestors

constrain should be filtered out from all those that have

not yet scheduling tasks, i.e. current “task independence”.

The actual scheduling of sequence and matching sched-

uling are limited to “independence mandate”. With these

First:

Formed with the

prior ity scheduling

constrains

Second:

The failed tas k of

filtering

Third:

The re al iz at i on of the

unconstraint of a

smal ler pr iority

scheduling

Fouth:

Detecti on o f

re-scheduling

events

Figure 2. GS overview.

new task scheduling and execution, the dependence tasks

possibly become the current “independence mandate”,

which are scheduled until all tasks are assigned. The

number of frequency filters has directly influence on all

tasks of expected completion time. The task of makespan

firstly filtered out as preparation time of all machine,

priority will be ensured with an orderly implementation.

However, overall makespan have increased. Its perform-

ance could be improved by reducing the number of filters

and makespan can be reduced by optimizing the prepara-

tion time of machinery. The task of scheduling at any

time or has been or is being implemented in the pro- cess

of scheduling. When CIGS map tasks to a certain re-

sources node, it could adopt an option heuristics algo-

rithm. Including all relevant data transmission to the host

node and the time cost of implementation in the host

nodes will be considered in every mapping.

Efficiency of node in the dimension of time or space

in grid computing is dynamic change. To allow CIGS

can effectively deal with grid environment, the need of

related improvement should be done. Otherwise, it would

not be appropriate to improve its performance, such as

lowering span, raising the utilization rate of resources, etc.

4. The Improvement of Task Scheduling

Algorithm CIGS

In order to solve the above problem, the paper presents

an improved algorithm CIGS to adapt computational grid

environment. In the scheduling process, by making a

judgment, if there is a 0 outdegree task in SI, it will be

filtered to form a new set of BSI. Each of BSI tasks is not

allowed to schedule in the current scheduling cycle, that

is to say, BSI, such as a free buffer zone. Similarly, its

forecast by the data transmission time, the time of ex-

pectant execution for all ancestors task and preparation

time of machines will be determinant factors of schedul-

Copyright © 2011 SciRes. IJCNS