Open Access
Open Journal of Social Sciences, 2014, 2, 12-21
Published Online February 2014 in SciRes. http://www.scirp.org/journal/jss
http://dx.doi.org/10.4236/jss.2014.22003

How to cite this paper: Du, Y.N., Li, H.L., Dewar, D. and Han, C. (2014) An Assessment System for the Pediatrics Milestone Project. Open Journal of Social Sciences, 2, 12-21. http://dx.doi.org/10.4236/jss.2014.22003

An Assessment System for the Pediatrics Milestone Project

Yina Du¹, Hailong Li², David Dewar³, Chia Han¹
¹Department of Electrical Engineering and Computing Systems, University of Cincinnati, Cincinnati, USA
²Division of Biomedical Informatics, Cincinnati Children’s Hospital Medical Center, Cincinnati, USA
³Department of Neonatology, Cincinnati Children’s Hospital Medical Center, Cincinnati, USA
Email: duy3@mail.uc.edu, hailong.li@cchmc.org, dewardav@gmail.com, han@ucmail.uc.edu
Received October 2013

Abstract
This paper presents PMEAS, a portable assessment system based on the Pediatrics Milestone project, a joint initiative launched by the Accreditation Council for Graduate Medical Education (ACGME) and the American Board of Pediatrics (ABP) to assess resident physicians. The system uses mobile technology, specifically the iPad, as its platform. It provides a simple, easy-to-use interface with which teachers can specify competency categories, evaluate residents, and give immediate feedback on their learning progress after daily rounds. In this way, the system enables the implementation of the medical education assessment strategy.

Keywords
Portable Assessment System; Medical Education; Mobile App; the Pediatrics Milestone Project; User Interface Design; User View Design; iPad; Xcode

1. Introduction
Assessment of learning in any educational setting is challenging because it requires a well-defined expected learning outcome and an objective evaluation procedure, executed by the educator against an accepted benchmark or standard.
Medical education differs in many respects from other fields of higher education because of the demand for high-quality outcomes, which rest not only on knowledge and clinical skills but also on other qualities. Assessing the clinical performance of medical students is important for confirming appropriate progress through the curriculum [1-3]. Clinical observation is the most common and effective scheme for assessing medical students or resident physicians, despite issues such as subjectivity. During clinical observation, supervising physicians assess students over a specific period by observing the interaction between students and patients [4]. Traditionally, each medical institution has had its own assessment criteria, and no standards have been set for the medical education community as a whole.

Y. N. Du et al.

As early as 1999, the Accreditation Council for Graduate Medical Education (ACGME) initiated the 10-year Outcome Project, which defined six competencies and offered an Assessment Toolbox to the community. However, institutions of medical education still find it difficult to measure the quality of resident physicians efficiently [5]. The Pediatrics Milestone project was therefore launched to advance outcome-based assessment.

The Pediatrics Milestone Project (PMP) is a joint initiative launched by ACGME and the American Board of Pediatrics (ABP) to assess the clinical performance of resident physicians [6]. To assess a resident physician effectively, the PMP document defines both competencies and sub-competencies that reflect the general attributes of a qualified doctor. It also defines developmental milestones as benchmarks of knowledge, skills, and attitudes that measure where resident physicians are in the learning process. Milestones are a series of observable developmental phases that place a resident between novice and expert.
The Pediatrics Milestone Working Group contributed countless hours to assessment design, seeking not only to establish a standardized system for assessing resident physicians but also to make assessment results beneficial to both resident physicians and their supervisors. The significance of the PMP lies in a fundamental change: instead of evaluating only whether a resident physician is qualified, the assessment comprehensively describes the current state of the resident's attributes [7]. The assessment results are meaningful descriptions that help residents understand both their strengths and their weaknesses, which score-based results barely provide.

In the Pediatrics Milestone project, clinical observation remains one of the most important assessment schemes. Supervising physicians can assess the students and fill out the standardized milestone-based assessment forms after the observation, or carry a form with them and complete it during the observation. In theory, this milestone-based scheme is efficient and effective for assessing students. However, the milestone-based assessment forms are extensive: printed on paper in a common font size (say, Calibri, 11 points), they would take 32 pages, and that is for only one student. In a fast-paced clinical environment, the applicability of a traditional paper-form assessment method is highly doubtful. It is impractical for a supervising physician to carry a stack of assessment forms while observing several resident physicians at the same time. Furthermore, summarizing an assessment report for students from those forms after the assessment would be a very time-consuming routine task. These issues motivated us to develop an assessment system based on mobile information technology as a more practical method for collecting the input data needed for the assessment process.
Today, mobile technology, such as smartphones and tablet devices, has become an integral part of our lives and an indispensable tool for our daily work. The proliferation of applications (apps) testifies to this fact. More and more effort goes into portable device design, mobile computing, and wireless networking. According to Apple announcements [8], over 900,000 applications were available in the App Store as of June 2013. Although these applications cover almost all kinds of needs, such as entertainment, education, news, finance, and medicine, one can still argue that data acquisition for educational assessment is in its infancy. We believe that improving this step, although a small change, would be critical in advancing the entire educational assessment system.

All educational institutions face the problem of how best to inform students about their performance on a routine basis. Assessment tasks are very challenging, especially in a clinical setting, where a supervising physician has only a short time in which to observe a number of different resident physicians. Here the advantages of portable devices for medical education are clear. Implemented on a mobile device, our portable assessment system offers portability, timeliness, and intelligence that traditional methods cannot provide. One mobile device is enough for all assessment-related tasks. Users can quickly locate and edit assessments for different students instead of handling a stack of forms. With mobile computing techniques, assessment results can be summarized for students rapidly and automatically, without human intervention. Over a wireless network, assessment results can be sent to students promptly by e-mail. Therefore, although our portable system is only a data collection tool within the whole education and assessment procedure, we believe it helps unlock the full usefulness of the Pediatrics Milestone project.
Implementing the Pediatrics Milestone assessment system on portable devices would show not only that using portable devices is a critical practice for assessment, but also that it is a significant step toward establishing a practical assessment system for medical education.

In this paper, we present the development of a portable assessment system based on the Pediatrics Milestone project. The system is named PMEAS, for portable pediatrics medical education assessment system. Specifically, we implemented PMEAS with current portable device technology. The iPad was chosen as the system platform. The user interface and database were designed in Xcode, the designated integrated development environment. The framework of PMEAS could be used in any educational assessment setting.

In the rest of this paper, we first elaborate on the Pediatrics Milestone assessment system. We then describe the implementation of PMEAS on an Apple device platform. Finally, we give a brief summary of the work and directions for future work.

2. The Pediatrics Milestone Assessment System
2.1. Competencies and Sub-Competencies
Competencies and sub-competencies were defined in the PMP to assess the general attributes of a resident physician [7]. Competencies are defined in very general terms and reflect the general objectives of medical education. Each competency requires a qualified physician to be competent in a particular area. For example, the first competency category is “Patient Care”, which sets the objective that a physician be competent to provide patient care. In a sense, competencies are simply separate domains for assessing a student's ability. Such general competencies lack the practicality needed to assess a resident physician, so sub-competencies were also defined in the project. Sub-competencies were designed as the specific knowledge and skills needed to achieve the competencies.
Unlike competencies, sub-competencies are practical and feasible to assess. For example, competency C, “Practice-based Learning and Improvement”, only defines areas of practice-based learning, whereas its sub-competencies give specific requirements such as “set improvement goals”, “participate in the education of patients”, and “incorporate feedback into daily practice”. These sub-competencies clearly define activities of resident physicians that supervisors can observe, so whether a resident physician has a sub-competency can be decided by observation. Sub-competencies therefore make the assessment practical, while competencies organize the assessment areas.

2.2. Developmental Milestones
The merit of the PMP is that it assesses not only whether a student is qualified, but also where the student is in his or her specialty training process. Competencies and sub-competencies are already specific enough to decide whether resident physicians are qualified by observing the state of their attributes. In addition, developmental milestones were defined as benchmarks of knowledge, skills, and attitudes that place a resident physician between novice and expert. The milestones turn a simple evaluation into a comprehensive assessment by providing meaningful, guidance-oriented feedback. From the assessment results generated from milestones, the resident physicians being assessed can understand their strengths and weaknesses and which aspects they should improve.

We illustrate how milestones help assess the progression of a resident physician with the milestones of one sub-competency, B1, which states: “Demonstrate sufficient knowledge of the basic and clinically supportive sciences appropriate to pediatrics”.
To reflect the different phases of learning progression, the milestones for sub-competency B1 start the scale of knowledge-related ability at “B1.1 Does not know or remember knowledge”, which is certainly not the state of a qualified doctor. The progression then goes through four middle phases: “B1.2 Understand knowledge, but still learning to apply”, “B1.3 Understand knowledge, and apply”, “B1.4 Analyze knowledge for diagnosis”, and “B1.5 Evaluate knowledge and use it appropriately”. Finally, the B1.6 milestone for a physician is to “learn from experience and apply information for new situations”. In terms of educational objectives, B1.4 can be thought of as the minimum requirement for a medical student to be considered a qualified doctor, B1.5 is a phase at which we can rate the doctor as good, and at milestone B1.6 the resident physician being assessed is an excellent doctor in terms of this specific sub-competency. In this way, those being assessed can understand where they are in the learning process and in which direction they should work. More information on the milestones can be found in the Pediatrics Milestone project document on the ACGME website [9].

3. Implementation of the Assessment System
In this section, we describe the implementation of our portable assessment system. The physician community that we surveyed designated Apple’s iPad as the portable device of choice for the assessment system. As a pioneer of tablet technology, the iPad offers advanced hardware and stable software performance. Most importantly, its 10-inch touch screen is well suited to this application: milestone descriptions are relatively long, and displaying them in a clearly legible font size would take up too much screen space on smaller devices. On mobile devices, a software application is referred to as an App, and our assessment system is likewise developed as an App for the iPad.
We developed the assessment App with Xcode, the integrated development environment (IDE) that Apple provides to software developers for building Apps for its operating systems. We used Xcode 4.2 on a MacBook Pro running Mac OS X 10.6.8. With this configuration of development tools, the assessment App was successfully developed for an iPad running iOS 5.1.1. Although we encountered many technical difficulties during App development, this paper concentrates on the basic design of the database and the user interface of the system.

3.1. Database Design
Database design is an important part of the App development process. The system is essentially a data acquisition tool with which supervising physicians collect performance data on resident physicians, so data management is the core of the system. Figure 1 is an Entity-Relationship diagram showing the relations in our database. There are four entities in total: Student, Assessment, User, and Assessment Category.
• Student entity: A key attribute, Student ID, and four other attributes, Active, Name, Email, and Current Year, hold the information needed to identify a medical student or resident physician.
• User entity: This entity has User ID as its key attribute and User Name, Phone, Active, and Email as other attributes. It is defined for administrators, who coordinate the assessment in the medical institution, and for supervising physicians, who observe students during assessment.
• Assessment Category entity: This entity maintains the competency, sub-competency, and milestone information. Category ID is the key attribute. Other attributes include Section ID, Section Name, Objective, and Active. This entity works as a pool of all categories that can be assessed.
• Assessment entity: The key attribute is Assessment ID. Other attributes include Data, Active, and Context Info. This entity represents a specific assessment task. Its Context Info holds the assigned competencies, sub-competencies, and milestones, which are a subset of the items in the Assessment Category entity.

The relationships between these entities are shown in Figure 1. Two relations, Assessment Result and Assigned Category, connect all the entities. Assessment Result connects three entities: one User, one Assessment, and multiple Students. Assigned Category involves one User and multiple Assessment Categories. In the practical implementation, multiple database tables store information about users, students, and assessment categories (competencies, sub-competencies, and milestones). Other result tables are generated from the relationships between entities in our Entity-Relationship diagram.

Figure 1. Database relationship.

3.2. User Interface Design
In this section, we illustrate the user interface design with several screenshots. The user interface of our system is designed as a set of views in the App, each for specific tasks. A view may contain buttons, context input windows, sliders, or pickers. These items are user interface objects defined in Xcode. On the one hand, these objects are development tools designed by Apple to facilitate App development. On the other hand, they help guarantee the quality of Apps on Apple devices. Some of these objects are described in more detail in the following paragraphs.

A hierarchical tree of views is shown in Figure 2 to illustrate the relationship between the views. After an initial login view, the views fall into two categories: Administrator View and User View. In the Administrator View, administrators of the App can add, delete, or edit information for users and students. Administrators can also assign an assessment scope to a user from the assessment pool and export the assessment results.
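The administrator operations just described act on the entities of the database design in Section 3.1. As a minimal sketch, assuming a SQLite store (the paper does not state which storage engine the App uses), the four entities and two relations could be laid out as tables as follows. The table and column names, the `category_id` and `score` columns of the result table, and the helper functions `create_database`, `assign_scope`, and `scope_for` are illustrative assumptions, not the App's actual schema or code.

```python
import sqlite3

# Hypothetical relational layout of the four entities and two relations in
# Figure 1. All names are assumptions made for illustration.
SCHEMA = """
CREATE TABLE student (
    student_id   INTEGER PRIMARY KEY,
    name         TEXT NOT NULL,
    email        TEXT,
    current_year INTEGER,
    active       INTEGER NOT NULL DEFAULT 1
);
CREATE TABLE app_user (
    user_id   INTEGER PRIMARY KEY,
    user_name TEXT NOT NULL,
    phone     TEXT,
    email     TEXT,
    active    INTEGER NOT NULL DEFAULT 1
);
CREATE TABLE assessment_category (
    category_id  INTEGER PRIMARY KEY,
    section_id   TEXT,
    section_name TEXT,
    objective    TEXT,
    active       INTEGER NOT NULL DEFAULT 1
);
CREATE TABLE assessment (
    assessment_id INTEGER PRIMARY KEY,
    data          TEXT,
    context_info  TEXT,
    active        INTEGER NOT NULL DEFAULT 1
);
-- Assigned Category relation: one user, many assessment categories.
CREATE TABLE assigned_category (
    user_id     INTEGER NOT NULL REFERENCES app_user(user_id),
    category_id INTEGER NOT NULL REFERENCES assessment_category(category_id),
    PRIMARY KEY (user_id, category_id)
);
-- Assessment Result relation: one user, one assessment, many students.
-- category_id and score are assumed columns for per-item results.
CREATE TABLE assessment_result (
    assessment_id INTEGER NOT NULL REFERENCES assessment(assessment_id),
    user_id       INTEGER NOT NULL REFERENCES app_user(user_id),
    student_id    INTEGER NOT NULL REFERENCES student(student_id),
    category_id   INTEGER NOT NULL REFERENCES assessment_category(category_id),
    score         INTEGER,
    PRIMARY KEY (assessment_id, student_id, category_id)
);
"""


def create_database():
    """Create an in-memory database containing the sketch schema."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    return conn


def assign_scope(conn, user_id, category_ids):
    """Record an assessment scope: the categories assigned to one user."""
    conn.executemany(
        "INSERT OR IGNORE INTO assigned_category (user_id, category_id) "
        "VALUES (?, ?)",
        [(user_id, category_id) for category_id in category_ids],
    )
    conn.commit()


def scope_for(conn, user_id):
    """Return the section names of the active categories assigned to a user."""
    rows = conn.execute(
        "SELECT c.section_name FROM assigned_category a "
        "JOIN assessment_category c ON c.category_id = a.category_id "
        "WHERE a.user_id = ? AND c.active = 1 "
        "ORDER BY c.category_id",
        (user_id,),
    ).fetchall()
    return [row[0] for row in rows]
```

Keeping the Assigned Category relation in its own table means that changing a user's scope touches only that table, while the pool of assessment categories stays unchanged.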
In the User View, App users can start a new assessment session or review past assessments. Please note that all screenshots were obtained with iOS Simulator 5.1, a tool in the Xcode IDE that shows how an App appears on portable devices, so minor differences may exist between our screenshots and the App views on an iPad.

Figure 3 shows the Administrator Home View. Its left part offers three major tasks: user/student management, assessment assignment, and data export. Its right part lists all existing users in the system. In the system configuration phase, the administrators must set up the designated users (supervising physicians) and the students to be assessed (resident physicians). While the Pediatrics Milestone project defines a whole framework for assessing all aspects of resident physicians, only some of the competencies or sub-competencies need to be included for a given group of students during a round or in a specific assessment task. We call this subset an assessment scope. The administrators must set the assessment scope at the beginning of the session. The Assessment scope assignment view, shown in Figure 4, lets administrators assign a subset of competencies, sub-competencies, and the corresponding milestones to the designated users for assessing the students' performance. In this view, two buttons (green and red) mark the selection of sub-competencies, and a small flag shows which sub-competencies are selected.

Figure 2. Hierarchical tree of user views.
Figure 3. Administrator home view.
Figure 4. Assessment scope assignment view.

Once the assessment is completed, the administrator can log into the system and export all the assessment results. The results are generated automatically as a CSV file, which can be viewed easily in office suite tools such as MS Excel.
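The export step can be sketched as follows. This is an illustration in Python rather than the App's own code: the function name `export_results_csv` and the column names are assumptions, chosen to match the kinds of exported information named in this section (user name, student name, assessment result score).

```python
import csv
import io

# Assumed column set; the paper says the administrator can choose which
# attributes to export.
DEFAULT_FIELDS = ["user_name", "student_name", "category", "score"]


def export_results_csv(results, fields=DEFAULT_FIELDS):
    """Serialize assessment results (a list of dicts) to CSV text.

    Keys that are not in `fields` are ignored, and missing keys are written
    as empty cells, so the caller controls which attributes are exported.
    """
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fields, extrasaction="ignore")
    writer.writeheader()
    for row in results:
        writer.writerow(row)
    return buffer.getvalue()
```

Because the `csv` module quotes values that contain commas or quotation marks, free-text fields such as names survive the round trip into a spreadsheet.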
Since the iPad provides no USB or other common hardware interface, we chose to e-mail the CSV file to the administrator's e-mail address. The exported data can include information such as user name, student name, assessment result score, and any other attribute the administrator would like to export.

Next, we describe the design of the user views. After a user logs into the system, the App leads directly to the User home view, where the user can choose to start a new assessment session or review past assessment results. Figure 5 shows an example of starting a new assessment. A description of the assessment can optionally be added for future reference. Students must then be added to the new assessment. Assessment configuration is performed in this view. A picker, a standard user interface object in Xcode for choosing from predefined items, is shown at the bottom of Figure 5. With the picker, users can add any student from the list that administrators entered in the user/student management task. Please note that we also show each student's year beside the name so that the assessment can be performed with this prior knowledge.

Figure 6 illustrates the most important view of the App, the Assessment view. When assessment configuration is finished, the App leads the user to this view for all assessment tasks. Users do not need to leave this page until the whole assessment session is done. The view is divided into multiple blocks. Each block is designed for one sub-competency, with the descriptions of the competency and sub-competency at the top of the block. Within a block, each student is listed separately, with the student's year in brackets after the name. A milestone description and a slider are placed beside each student's name. A slider is a user interaction object provided in Xcode; users move it with a finger within a fixed range.
To keep the content of each milestone visible without taking up too much screen space, we designed a dynamic view driven by the slider. Both the milestone description and the score next to the slider bar are dynamic: as users move a slider, the milestone and score change accordingly. For example, the first block of Figure 6 shows a fictitious student, Mike Smith, in his second year. With the slider at its current position, his score is 43 and the corresponding milestone is shown. Note that the second student is Tim Swan, whose slider is set at score 76, so his milestone description is entirely different from Mike's. With this design, users can move the slider back and forth during the assessment to find the proper milestone for each student, while the view is not filled with descriptive milestone text. We also chose to put all the sub-competencies on this one Assessment view, since switching between views is undesirable. To continue with more competencies, users only need to swipe the page upwards, and the view scrolls down to further assessment items. Because portable devices have constrained memory, most Apps use an object reuse technique. We also apply this technique, since one huge view cannot be created when many assessment items are assigned. Behind the scenes, once users scroll down to new assessment items, some of the user interaction objects that have scrolled out of view, such as sliders, are released and reused for the incoming items that are about to appear.

Figure 5. Assessment scope assignment view.
Figure 6. Assessment view.

Once users finish the session and press the save button, the App leads them to an e-mail selection view listing all the student names.
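The slider-driven dynamic view needs a rule that turns a slider position into a milestone. The paper does not give this rule, so the sketch below assumes the simplest one: the 0 to 100 score range is split into equal bands, one per milestone. It uses the six B1 milestones from Section 2.2 purely as sample data; `milestone_for_score` is a hypothetical name, and Figure 6 does not necessarily show sub-competency B1.

```python
# Sample data: the B1 milestone descriptions quoted in Section 2.2.
B1_MILESTONES = [
    "B1.1 Does not know or remember knowledge",
    "B1.2 Understand knowledge, but still learning to apply",
    "B1.3 Understand knowledge, and apply",
    "B1.4 Analyze knowledge for diagnosis",
    "B1.5 Evaluate knowledge and use it appropriately",
    "B1.6 Learn from experience and apply information for new situations",
]


def milestone_for_score(score, milestones, max_score=100):
    """Return the milestone whose band contains the slider score.

    Assumption: bands are equal in width; the App's real mapping is not
    described in the paper.
    """
    if not milestones:
        raise ValueError("at least one milestone is required")
    if not 0 <= score <= max_score:
        raise ValueError("score must lie between 0 and max_score")
    # Scale the score to a band index; the top score belongs to the last band.
    index = min(int(score * len(milestones) / max_score), len(milestones) - 1)
    return milestones[index]
```

Under this assumed banding, the scores 43 and 76 from the example fall into different bands, which is consistent with the two students seeing different milestone text. Calling the function each time the slider value changes is enough to keep the description in step with the score.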
One goal of the system is to return descriptive, meaningful assessments to students in a timely manner, so the App includes an e-mail function that sends the results to students right after the assessment. An assessment result mail view is shown in Figure 7. Users can edit the assessment result e-mail if necessary after the assessment. We designed the evaluation result page for the e-mail based on users' feedback. It includes student and assessment information, and a detailed table of assessment results is generated automatically. Supervisors no longer need to create the reports manually. This saves time for the supervisors of the resident physicians, and the descriptive reports let the resident physicians under evaluation understand their current performance from the description for each assessment category.

Figure 7. Assessment result mail view.

4. Summary and Future Works
In this paper, we developed an assessment system, PMEAS, to enable the application of the guidelines set forth by the Pediatrics Milestone project to the assessment of pediatrics residents. Our efforts focused on developing a framework and on designing the user interface and database of the assessment system. We implemented the system on the iPad from Apple Inc. The system, intended to be user-friendly and error-proof, was coded with Xcode on a MacBook Pro laptop.

Currently, the PMEAS App is at version 1.0. The system has been delivered to fellows and faculty members, but whether supervisors of resident physicians can put it to efficient use is not yet known. Field tests and user feedback are needed to improve future versions. Once the system is widely used and data are collected, the next phase can begin: data analysis that tracks the progress of each individual resident physician along the milestone timeline. Furthermore, analysis of group assessment data can indicate the overall quality of the program and its resident physicians.

References
[1] Barrows, H.S. and Tamblyn, R.M. (1980) Problem-based learning: An approach to medical education. Springer Publishing Company.
[2] Ende, J. (1983) Feedback in clinical medical education. JAMA, 250, 777-781. http://dx.doi.org/10.1001/jama.1983.03340060055026
[3] Epstein, R.M. (2007) Assessment in medical education. New England Journal of Medicine, 356, 387-396. http://dx.doi.org/10.1056/NEJMra054784
[4] Papadakis, M.A. (2004) The Step 2 clinical-skills examination. New England Journal of Medicine, 350, 1703-1704. http://dx.doi.org/10.1056/NEJMp038246
[5] Lurie, S.J., Mooney, C.J. and Lyness, J.M. (2009) Measurement of the general competencies of the Accreditation Council for Graduate Medical Education: A systematic review. Academic Medicine, 84, 301-309. http://dx.doi.org/10.1097/ACM.0b013e3181971f08
[6] Hicks, P.J., Schumacher, D.J., Benson, B.J., Burke, A.E., Englander, R., Guralnick, S., et al. (2010) The pediatrics milestones: Conceptual framework, guiding principles, and approach to development. Journal of Graduate Medical Education, 2, 410-418. http://dx.doi.org/10.4300/JGME-D-10-00126.1
[7] Hicks, P.J., Englander, R., Schumacher, D.J., Burke, A., Benson, B.J., Guralnick, S., et al. (2010) Pediatrics Milestone Project: Next steps toward meaningful outcomes assessment. Journal of Graduate Medical Education, 2, 577-584. http://dx.doi.org/10.4300/JGME-D-10-00157.1
[8] Apple Inc. (2013) URL (Last checked on 15 June 2013). http://www.apple.com/
[9] ACGME (2013) URL (Last checked on 30 Sept 2013). http://www.acgme.org

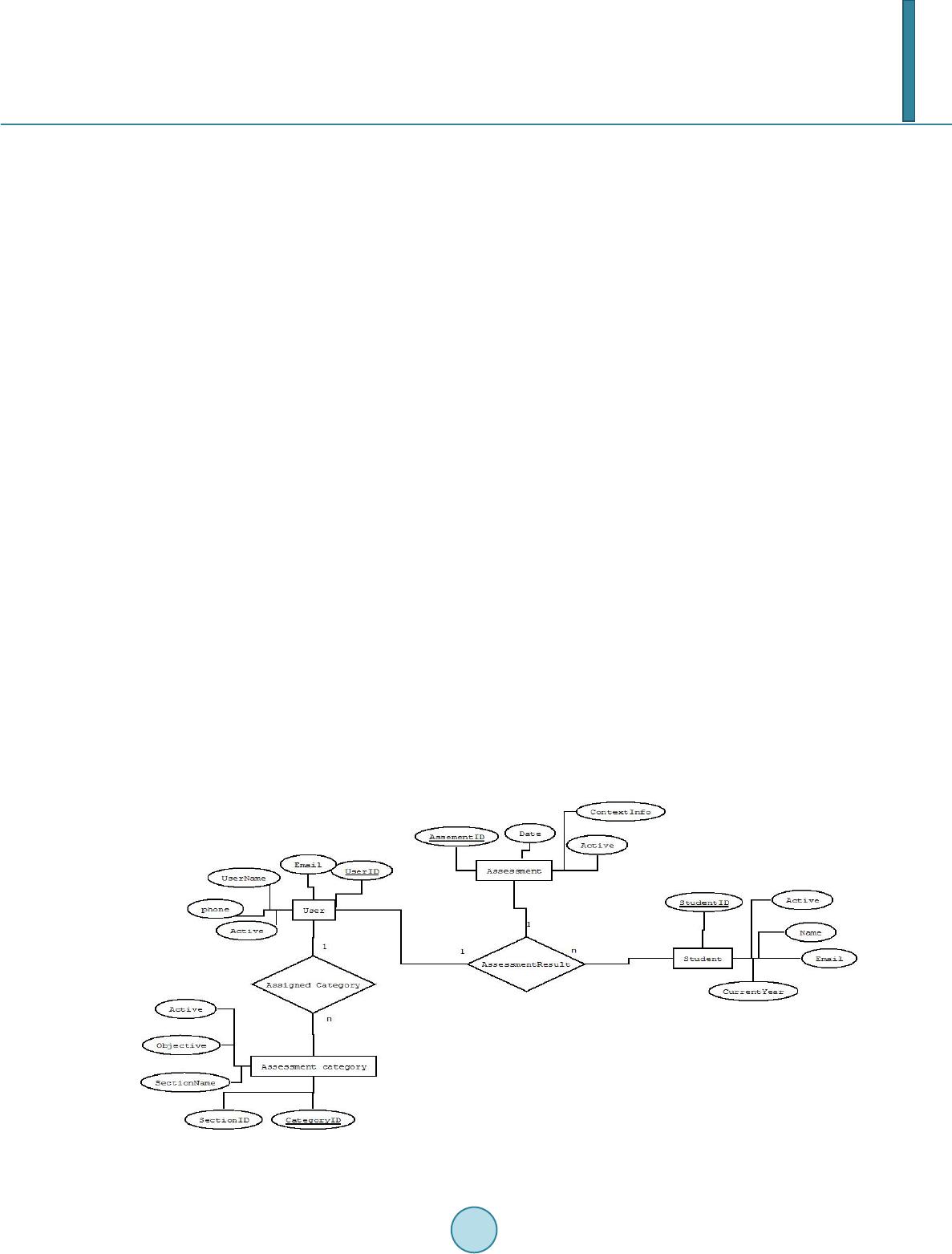

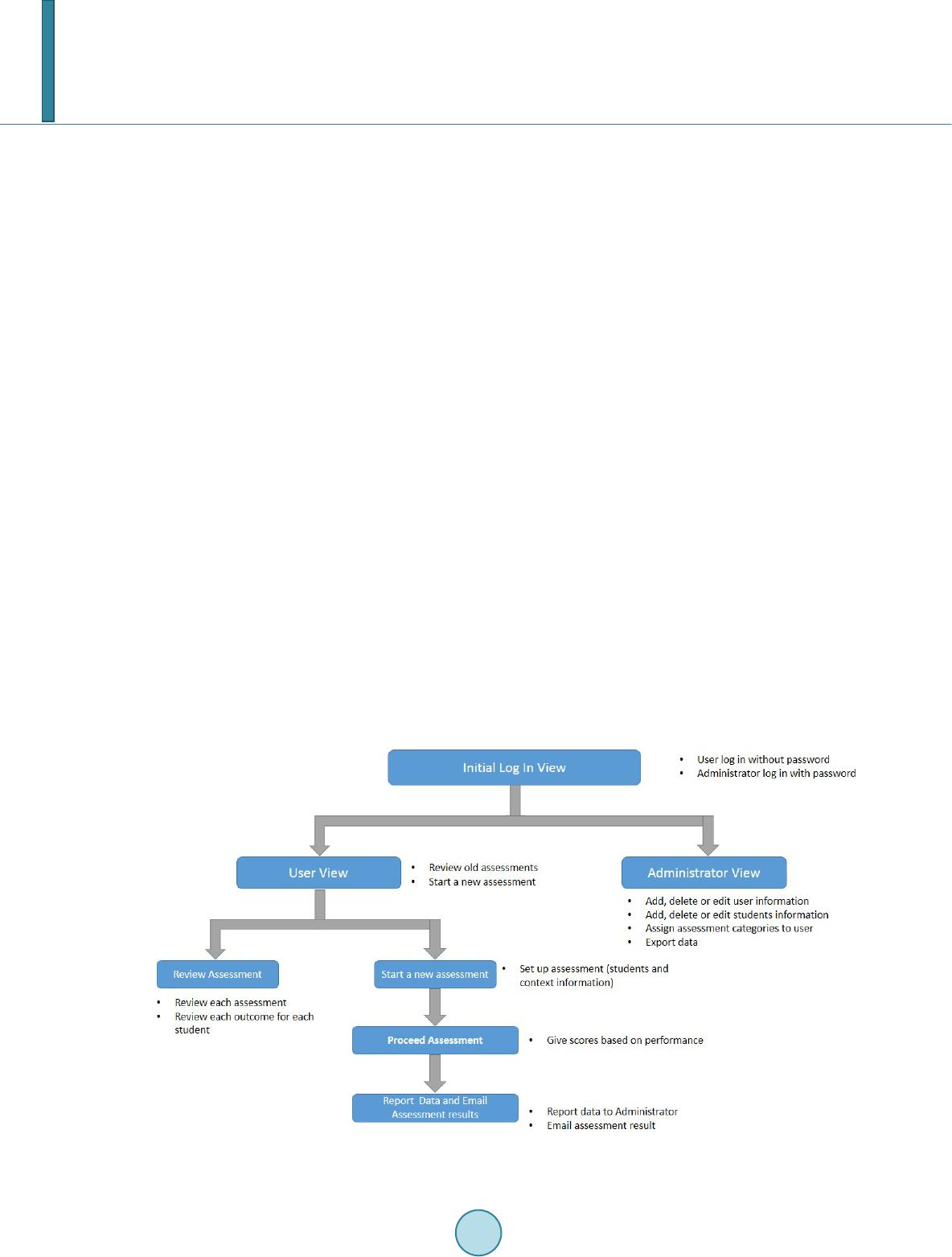

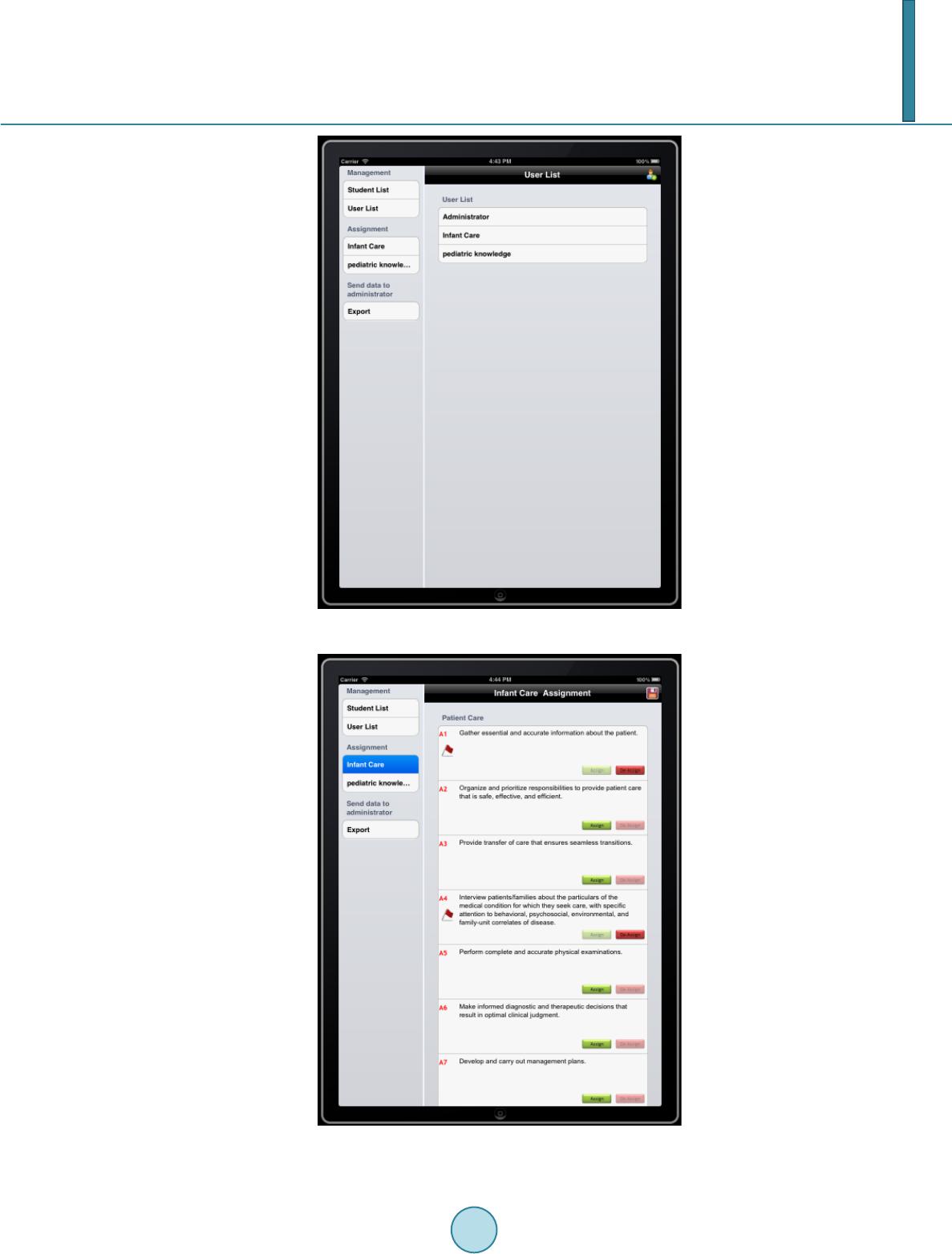

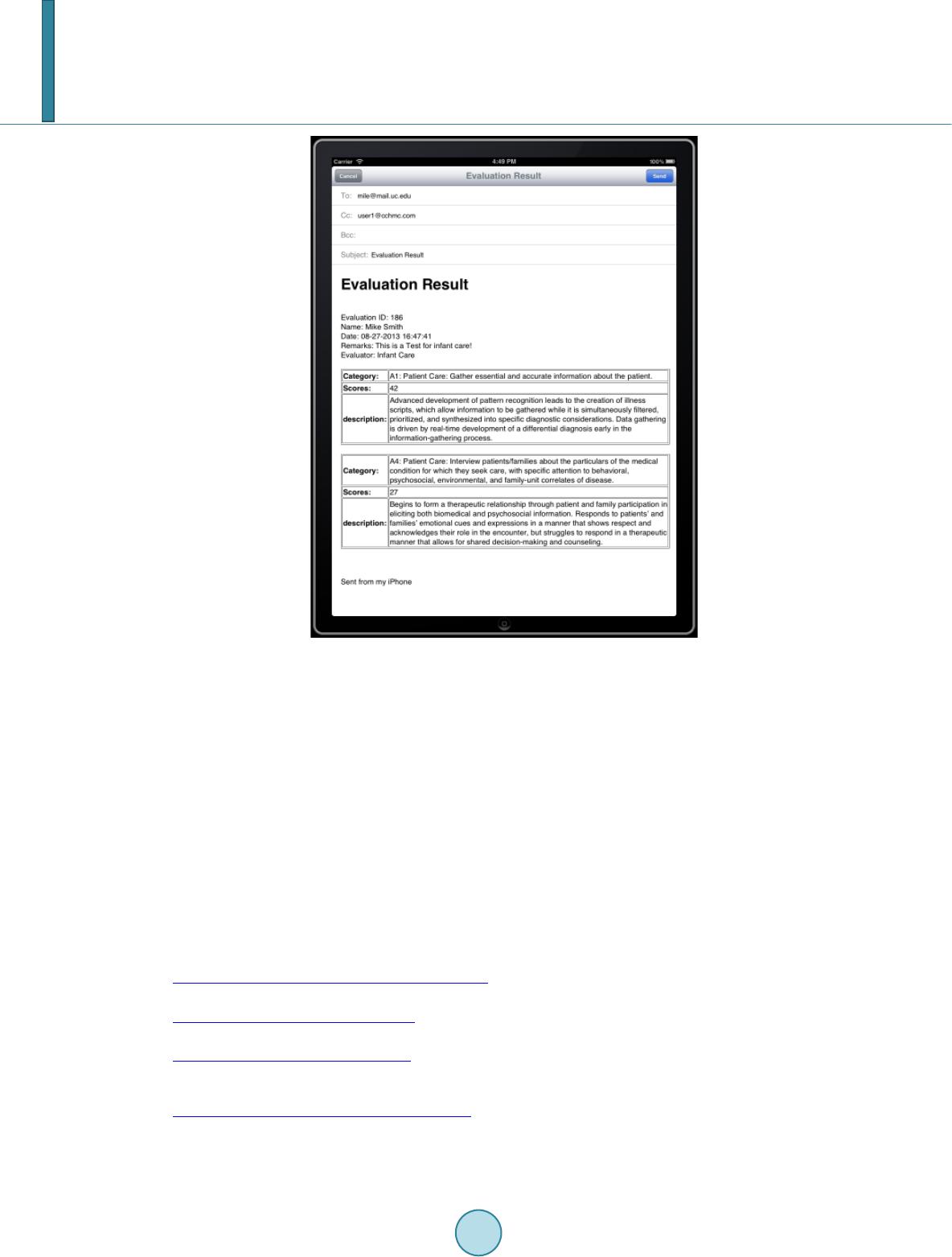
