M. E. SALIH ET AL.

Copyright © 2013 SciRes. ENG

the diagonal of S, i.e. $s_1 > s_2 > s_3 > \cdots > s_n$.

V is a matrix whose columns are the eigenvectors of the $A^{T}A$ matrix. These are termed the right eigenvectors.

When computing the SVD of a matrix, it is desirable to reduce its dimensions by keeping only its first k singular values.

This process is termed dimensionality reduction, and $A_k$ is referred to as the rank-k approximation of A, or the “reduced SVD” of A. For example, if we eliminate dimensions by keeping only the three largest singular values, the result is a rank-3 approximation [10]. The top k singular values are selected as a means of developing a “latent semantics” representation of A that is free from noisy dimensions.
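The rank-k truncation described above can be sketched in a few lines. This is only an illustration, assuming NumPy; the function name `rank_k_approximation` and the random test matrix are hypothetical and do not come from the paper.

```python
import numpy as np

def rank_k_approximation(A, k):
    """Return A_k, built from the k largest singular values of A."""
    # Thin SVD: A = U @ diag(s) @ Vt, with s sorted in non-increasing order.
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    return U[:, :k] @ np.diag(s[:k]) @ Vt[:k, :]

rng = np.random.default_rng(0)
A = rng.standard_normal((8, 6))      # hypothetical data matrix
A3 = rank_k_approximation(A, 3)      # keep the three largest singular values

s = np.linalg.svd(A, compute_uv=False)
rank_A3 = np.linalg.matrix_rank(A3)
spectral_error = np.linalg.norm(A - A3, 2)
print(rank_A3, spectral_error, s[3])
```

The spectral-norm error of the truncation equals the first discarded singular value, which is why dropping the small singular values changes A only slightly.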

This “latent semantics” representation is a specific data structure in a low-dimensional space in which documents, terms and queries are embedded and compared. This hidden or “latent” data structure is masked by noisy dimensions and becomes evident after the SVD. We replaced the distances

$$-\frac{1}{h^{2}}\left\| u(N_d(x)) - u(N_d(y)) \right\|^{2} \tag{3}$$

by

$$-\frac{1}{h^{2}}\left\| u(P_d(x)) - u(P_d(y)) \right\|^{2} \tag{4}$$

where $P_d$ represents the projection of $N_d$ onto the lower-dimensional space determined by the SVD. If $N_d$ is a particular row of A, then the weights for $N_d$ are just the corresponding row of U multiplied by the diagonal elements of S. These weights are referred to as the projection of $N_d$ into the k-dimensional space. Better denoising is obtained when the similarity between pixels is computed using the dimension reduction introduced by the SVD.
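The projection step can be illustrated with a short sketch. It assumes NumPy, treats each row of A as one flattened patch, and uses synthetic data. The names `project_rows` and `scaled_sq_distance`, the 7 × 7 patch size, and the values of k and h are all hypothetical choices for illustration, not the authors' settings.

```python
import numpy as np

def project_rows(A, k):
    """Project every row of A onto the k leading SVD dimensions.

    Row d of the result is row d of U scaled by the k largest
    singular values, i.e. U[d, :k] * s[:k].
    """
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    return U[:, :k] * s[:k], Vt[:k, :]

def scaled_sq_distance(a, b, h):
    """Squared Euclidean distance between two vectors, divided by h**2."""
    return float(np.sum((a - b) ** 2)) / h ** 2

rng = np.random.default_rng(0)
# Hypothetical data: 200 flattened 7 x 7 patches (one per row) that share a
# 3-dimensional structure, plus additive Gaussian noise.
basis = rng.standard_normal((3, 49))
clean = rng.standard_normal((200, 3)) @ basis
A = clean + 0.1 * rng.standard_normal(clean.shape)

k, h = 3, 10.0
P, Vk = project_rows(A, k)

d_full = scaled_sq_distance(A[0], A[1], h)   # distance between full patches
d_proj = scaled_sq_distance(P[0], P[1], h)   # distance between projections
print(d_full, d_proj)
```

Because the rows of `Vk` are orthonormal, the same projection can be obtained as `A @ Vk.T`, and the projected distance never exceeds the full-space distance; the difference is the contribution of the discarded dimensions.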

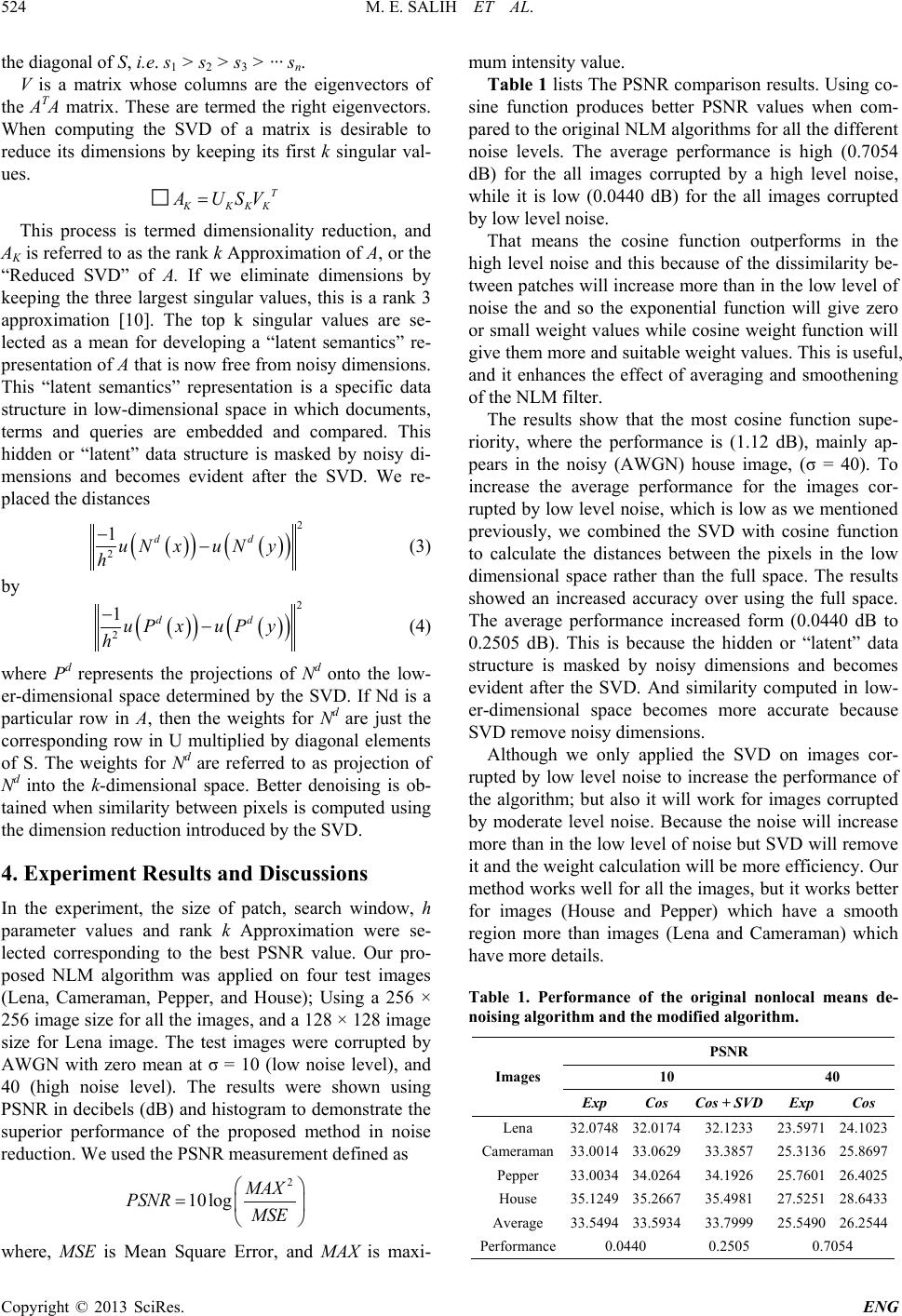

4. Experimental Results and Discussion

In the experiments, the patch size, search window size, h parameter value and rank-k approximation were selected to give the best PSNR value. Our proposed NLM algorithm was applied to four test images (Lena, Cameraman, Pepper, and House), using a 256 × 256 image size for all the images and a 128 × 128 image size for the Lena image. The test images were corrupted by zero-mean AWGN at σ = 10 (low noise level) and σ = 40 (high noise level). The results are reported using PSNR in decibels (dB) and histograms to demonstrate the superior performance of the proposed method in noise reduction. We used the PSNR measurement defined as

$$\mathrm{PSNR} = 10 \log_{10} \frac{\mathrm{MAX}^{2}}{\mathrm{MSE}}$$

where MSE is the mean square error and MAX is the maximum intensity value.
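As a rough sketch of this measurement, the snippet below computes the PSNR of an image corrupted by zero-mean Gaussian noise at the two noise levels used here. It assumes NumPy and 8-bit intensities (MAX = 255). The constant grey image is a hypothetical stand-in for the test images, chosen so that no clipping is needed.

```python
import numpy as np

def psnr(reference, test, max_value=255.0):
    """PSNR in dB: 10 * log10(MAX^2 / MSE)."""
    reference = np.asarray(reference, dtype=np.float64)
    test = np.asarray(test, dtype=np.float64)
    mse = np.mean((reference - test) ** 2)
    if mse == 0:
        return float("inf")   # identical images
    return 10.0 * np.log10(max_value ** 2 / mse)

rng = np.random.default_rng(0)
# Hypothetical 256 x 256 image (assumption: constant mid-grey, not a real test image).
image = np.full((256, 256), 128.0)

results = {}
for sigma in (10, 40):
    noisy = image + rng.normal(0.0, sigma, size=image.shape)  # zero-mean AWGN
    results[sigma] = psnr(image, noisy)
    print(sigma, round(results[sigma], 2))
```

Since the MSE of the noisy image is close to σ², the values should fall near 20 log10(255/σ), roughly 28.1 dB for σ = 10 and 16.1 dB for σ = 40, which gives a baseline against which the denoised PSNR values can be read.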

Table 1 lists the PSNR comparison results. On average, the cosine function produces better PSNR values than the original NLM algorithm at both noise levels. The average performance gain is high (0.7054 dB) for the images corrupted by high-level noise, while it is low (0.0440 dB) for the images corrupted by low-level noise.

This means that the cosine function performs better at the high noise level. The reason is that the dissimilarity between patches increases more at high noise than at low noise, so the exponential function gives zero or very small weights, while the cosine weight function gives larger and more suitable weights. This is useful because it enhances the averaging and smoothing effect of the NLM filter.

The results show that the superiority of the cosine function, with a performance gain of 1.12 dB, appears mainly in the noisy (AWGN) House image at σ = 40. To increase the average performance for the images corrupted by low-level noise, which is low as mentioned previously, we combined the SVD with the cosine function to calculate the distances between pixels in the low-dimensional space rather than the full space. The results showed increased accuracy over using the full space: the average performance increased from 0.0440 dB to 0.2505 dB. This is because the hidden or “latent” data structure is masked by noisy dimensions and becomes evident after the SVD, and similarity computed in the lower-dimensional space becomes more accurate because the SVD removes noisy dimensions.

Although we applied the SVD only to images corrupted by low-level noise to increase the performance of the algorithm, it will also work for images corrupted by moderate-level noise: the noise is stronger than in the low-noise case, but the SVD will remove it and the weight calculation will be more efficient. Our method works well for all the images, but it works better for images with smooth regions (House and Pepper) than for images with more detail (Lena and Cameraman).

Table 1. Performance of the original nonlocal means denoising algorithm and the modified algorithm (PSNR in dB).

| Images | σ = 10, Exp | σ = 10, Cos | σ = 10, Cos + SVD | σ = 40, Exp | σ = 40, Cos |
|---|---|---|---|---|---|
| Lena | 32.0748 | 32.0174 | 32.1233 | 23.5971 | 24.1023 |
| Cameraman | 33.0014 | 33.0629 | 33.3857 | 25.3136 | 25.8697 |
| Pepper | 33.0034 | 34.0264 | 34.1926 | 25.7601 | 26.4025 |
| House | 35.1249 | 35.2667 | 35.4981 | 27.5251 | 28.6433 |
| Average | 33.5494 | 33.5934 | 33.7999 | 25.5490 | 26.2544 |
| Performance | | 0.0440 | 0.2505 | | 0.7054 |
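The σ = 40 figures quoted in the discussion can be re-derived from the table with a few lines of arithmetic. The sketch below uses plain Python with the values copied from the σ = 40 columns of Table 1; the variable names are illustrative only.

```python
# PSNR values (dB) copied from the sigma = 40 columns of Table 1.
exp_40 = {"Lena": 23.5971, "Cameraman": 25.3136, "Pepper": 25.7601, "House": 27.5251}
cos_40 = {"Lena": 24.1023, "Cameraman": 25.8697, "Pepper": 26.4025, "House": 28.6433}

# Per-image gain of the cosine weight over the exponential weight.
gains = {name: cos_40[name] - exp_40[name] for name in exp_40}

avg_gain = sum(gains.values()) / len(gains)   # average performance gain
best = max(gains, key=gains.get)              # image with the largest gain

print(f"average gain: {avg_gain:.4f} dB")
print(f"largest gain: {best} ({gains[best]:.2f} dB)")
```

The average agrees with the reported 0.7054 dB up to rounding, and the largest per-image gain is obtained for the House image, consistent with the 1.12 dB quoted in the text.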