T. B. SHAHI, A. YADAV

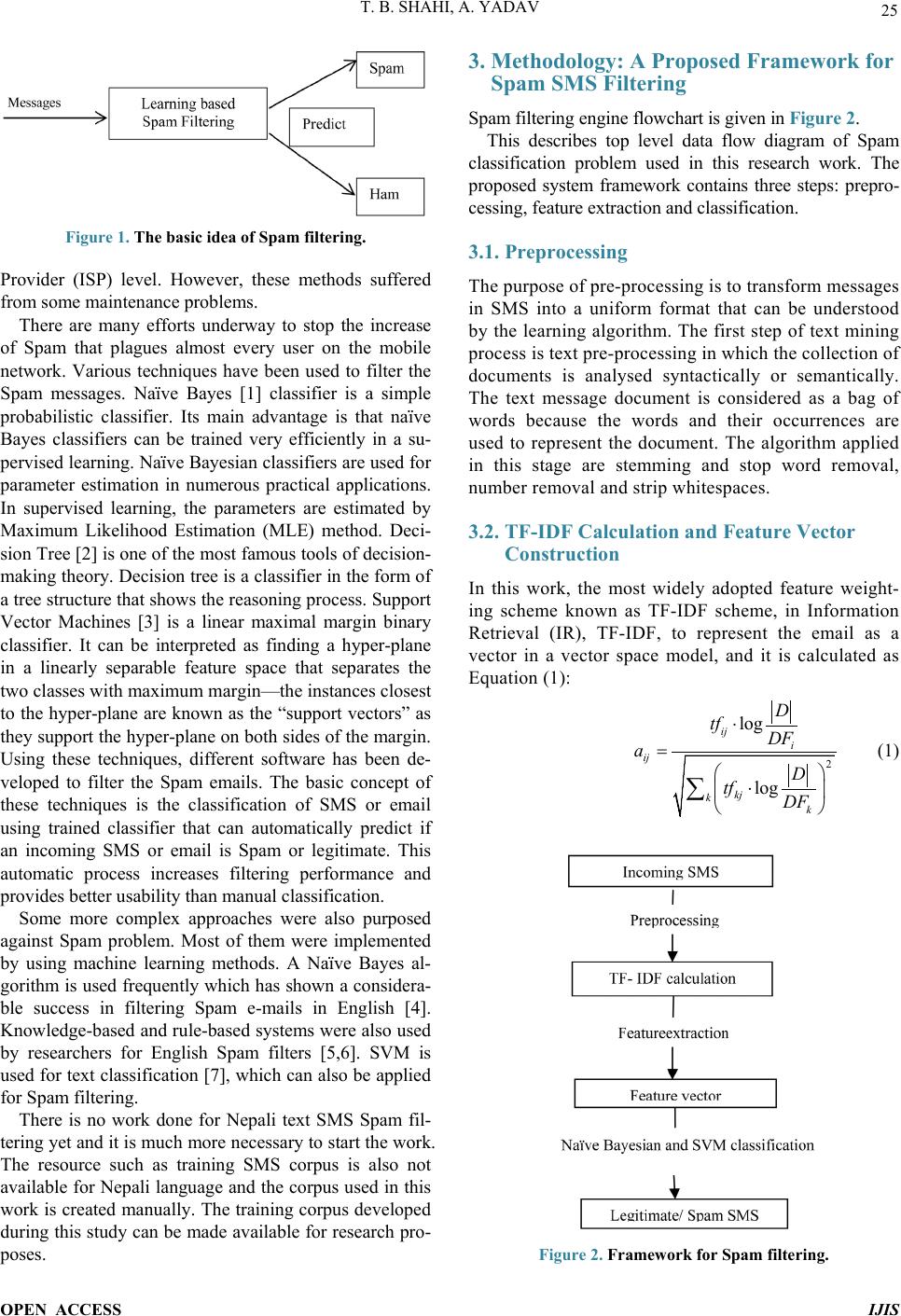

Figure 1. The basic idea of Spam filtering.

Provider (ISP) level. However, these methods suffered

from some maintenance pro blems.

There are many efforts underway to stop the increase

of Spam that plagues almost every user on the mobile

network. Various techniques have been used to filter the

Spam messages. Naïve Bayes [1] classifier is a simple

probabilistic classifier. Its main advantage is that naïve

Bayes classifiers can be trained very efficiently in a su-

pervised learning. Naïve Bayesian classifiers are used for

parameter estimation in numerous practical applications.

In supervised learning, the parameters are estimated by

Maximum Likelihood Estimation (MLE) method. Deci-

sion Tree [2] is one of the most famous tools of decision-

making theory. Decision tr ee is a classifier in the form of

a tree structure that show s the reasoning proc ess. Suppor t

Vector Machines [3] is a linear maximal margin binary

classifier. It can be interpreted as finding a hyper-plane

in a linearly separable feature space that separates the

two classes with maximum margin—the instances closest

to the hyper-plane are known as the “support vectors” as

they support the h yper-plane on both sides of the margin.

Using these techniques, different software has been de-

veloped to filter the Spam emails. The basic concept of

these techniques is the classification of SMS or email

using trained classifier that can automatically predict if

an incoming SMS or email is Spam or legitimate. This

automatic process increases filtering performance and

provides better usability than manu al c las s ification.

Some more complex approaches were also purposed

against Spam problem. Most of them were implemented

by using machine learning methods. A Naïve Bayes al-

gorithm is used frequently which has shown a considera-

ble success in filtering Spam e-mails in English [4].

Knowledge-based and rule-based systems were also used

by researchers for English Spam filters [5,6]. SVM is

used for text classification [7], which can also be applied

for Spam filtering.

There is no work done for Nepali text SMS Spam fil-

tering yet and it is much more necessary to start the work.

The resource such as training SMS corpus is also not

available for Nepali language and the corpus used in this

work is created manually. The training corpus developed

during this study c an be made available for res earch pro-

poses.

3. Methodology: A Proposed Framework for

Spam SMS Filtering

Spam filtering engine fl o wc hart is gi ve n in Figure 2.

This describes top level data flow diagram of Spam

classification problem used in this research work. The

proposed system framework contains three steps: prepro-

cessing, feature extraction and classification.

3.1. Preprocessing

The purpose of pre-processing is to transform messages

in SMS into a uniform format that can be understood

by the learning algorithm. The first step of text mining

process is text pre-processing in which the collection of

documents is analysed syntactically or semantically.

The text message document is considered as a bag of

words because the words and their occurrences are

used to represent the document. The algorithm applied

in this stage are stemming and stop word removal,

number removal and strip whitespaces.

3.2. TF-IDF Calculation and Feature Vector

Construction

In this work, the most widely adopted feature weight-

ing scheme known as TF-IDF scheme, in Information

Retrieval (IR), TF-IDF, to represent the email as a

vector in a vector space model, and it is calculated as

Equation (1):

2

log

log

ij i

ij

kj

kk

D

tf DF

aD

tf DF

⋅

=

⋅

∑

(1)

Figure 2. Framework for Spam filtering.

OPEN ACCESS IJIS