Intelligent Control and Automation

Vol. 06 No. 04 (2015), Article ID: 61055, 8 pages

10.4236/ica.2015.64023

Temporal Prediction of Aircraft Loss-of-Control: A Dynamic Optimization Approach

Chaitanya Poolla¹, Abraham K. Ishihara²

¹Electrical and Computer Engineering, Carnegie Mellon University (SV), Moffett Field, CA, USA

²Research Faculty, Electrical and Computer Engineering, Carnegie Mellon University (SV), Moffett Field, CA, USA

Copyright © 2015 by authors and Scientific Research Publishing Inc.

This work is licensed under the Creative Commons Attribution International License (CC BY).

Received 25 August 2015; accepted 10 November 2015; published 13 November 2015

ABSTRACT

Loss of Control (LOC) was the largest single contributor to fatal air accidents during the past decade. LOC is characterized by the pilot's inability to control the aircraft and is typically associated with unpredictable behavior, potentially leading to loss of the aircraft and of life. In this work, the problem of finding the minimum time to LOC is posed as a dynamic optimization problem and treated using Pontryagin's Maximum Principle (PMP). The resulting two point boundary value problem is solved using stochastic shooting point methods via a differential evolution (DE) scheme. The minimum time until LOC is computed for given spatial control limits. Simulations are performed using a linearized longitudinal aircraft model to illustrate the concept.

Keywords:

Pilot Assistance, Loss of Control, Aircraft, Dynamic Optimization, Temporal Prediction, Pontryagin Maximum Principle, Differential Evolution, Stochastic Shooting Point Methods

1. Introduction

Air crash analyses during the past decade have concluded that about 40 percent of fatal air accidents in civil aviation occur due to Aircraft Loss of Control (LOC), the largest contributing factor among all causes [1] [2]. During this period, LOC-related research has received increased attention in the aviation safety community [3]. Several investigations have been carried out to understand the nature and characteristics of loss-of-control regimes [3]-[5]. In particular, a collaborative effort between Boeing and NASA Langley [6] provided a flight-envelope-based method to quantify LOC from air accident data. Similar envelopes are used in this work to quantify LOC boundaries.

Quantifying LOC boundaries is a first step toward addressing the larger issue of LOC prevention. While aircraft have envelope protection features, these are of little use in LOC flight regimes because normal control modes degrade [7]. An alternative approach is to provide useful LOC information to pilots using flight states and pilot input data. Although LOC envelopes provide operating limits for aircraft states and other auxiliary variables, they are not readily usable by pilots. This is because envelope data are expressed in the flight state space, whereas pilot decisions are executed in the control space. Furthermore, the mapping from control-space inputs to state-space responses becomes unpredictable close to LOC regimes, which makes it difficult for humans to interpret, unlike in flight regimes close to trim conditions. However, it is possible to warn the pilot about potential LOC scenarios with intelligent algorithms that extract accurate spatio-temporal information from the available LOC envelopes for direct pilot use. In this connection, recent experiments carried out at NASA Ames Research Center demonstrated that pilots are favorably disposed toward using pilot-friendly LOC tools [8]. A data-based predictive control (DBPC) algorithm [9] was used to compute spatial control bounds for pilot use. However, in that work the time associated with the spatial bounds was considered fixed. This work complements the DBPC-based spatial bounds by providing temporal bound information in the framework of optimal control theory using Pontryagin's Maximum Principle.

The remainder of the paper is structured as follows. Section 2 formulates the minimum time problem. Section 3 treats the optimal control problem using Pontryagin's Maximum Principle and describes the resulting two point boundary value problem (TPBVP). The solution to the TPBVP using differential evolution (DE)-based methods is described in Section 4. Simulation results based on the linear longitudinal model from [4] are presented and discussed in Section 5, followed by concluding remarks in Section 6.

2. Problem Formulation

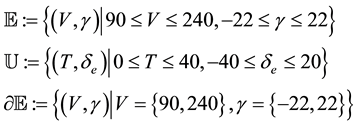

We consider the problem of obtaining the minimum time to exit the flight envelope for a linearized longitudinal aircraft model. Let the operating envelope be defined in the  state space for control limits in

state space for control limits in  space as specified below [4] .

space as specified below [4] .

where,  denotes the flight envelope in (fps, deg) and

denotes the flight envelope in (fps, deg) and  denotes the envelope for the control bound in (lbf, deg). The boundary of the envelope is denoted by

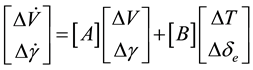

denotes the envelope for the control bound in (lbf, deg). The boundary of the envelope is denoted by . The system is assumed to follow the dynamics [4] as shown below:

. The system is assumed to follow the dynamics [4] as shown below:

(1)

(1)

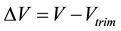

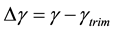

where,  ,

,  ,

,  and

and . For convenience of notation, let

. For convenience of notation, let  denote the state of the system trajectory at time t starting at

denote the state of the system trajectory at time t starting at  under the action of the control input

under the action of the control input  on

on .

.
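When the control input is held constant over an interval, as it is between the switches of a bang-bang law, the state of a linear model such as (1) at time $t$ starting from $x_0$ (denoted $\phi(t; x_0, u)$ here) can be evaluated in closed form with a matrix exponential. The Python sketch below illustrates this under that assumption, using SciPy's `scipy.linalg.expm` and the standard augmented-state construction. The function name `propagate_constant_control` and the double-integrator matrices are hypothetical illustrations, not the aircraft model of [4].

```python
import numpy as np
from scipy.linalg import expm


def propagate_constant_control(A, B, x0, u, t):
    """Return phi(t; x0, u) for dx/dt = A x + B u with u held constant on [0, t].

    Augmented-state construction: with z = [x; 1], dz/dt = M z where
    M = [[A, B u], [0, 0]], so z(t) = expm(M t) z(0).
    """
    A = np.asarray(A, dtype=float)
    B = np.asarray(B, dtype=float)
    x0 = np.asarray(x0, dtype=float)
    u = np.asarray(u, dtype=float)
    n = A.shape[0]
    M = np.zeros((n + 1, n + 1))
    M[:n, :n] = A
    M[:n, n] = B @ u              # constant forcing term as an extra column
    z0 = np.append(x0, 1.0)
    return (expm(M * t) @ z0)[:n]


# Illustrative double integrator (hypothetical, not the model of [4]).
A = np.array([[0.0, 1.0], [0.0, 0.0]])
B = np.array([[0.0], [1.0]])
print(propagate_constant_control(A, B, [0.0, 0.0], [1.0], 2.0))  # approximately [2, 2]
```

Chaining such calls over the intervals between control switches gives the full trajectory for a piecewise-constant input without a numerical ODE solver.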

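The optimal control problem is posed as follows: given an initial point $x_0$ in the state space, find an admissible control that drives the system (1) from $x_0$ to a prescribed point on the envelope boundary in minimum time.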

3. Minimum Time Problem

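Consider the linear dynamical system given by:

$$\dot{x}(t) = A x(t) + B u(t), \qquad x(0) = x_0,$$

where the admissible controls take values in the box $\mathcal{U} = \{u : u_i^{\min} \le u_i \le u_i^{\max}\}$, for each control input $i$, defined by the thrust and elevator limits. The cost functional for the minimum time problem then becomes

$$J = \int_{0}^{T} 1 \, dt = T,$$

where $T$ is the free final time at which the trajectory reaches a prescribed end point $x_f$ on the envelope boundary.

In the framework of PMP [10], the existence of an optimal control also mandates the existence of co-states (denoted by $\lambda(t)$) which, together with the states, define the Hamiltonian

$$H(x, u, \lambda) = -1 + \lambda^{\top}\left(A x + B u\right).$$

The optimal control law is the one that maximizes the Hamiltonian at every instant from $t = 0$ to $t = T$, and so the control law can be expressed as

$$u_i^{*}(t) = \begin{cases} u_i^{\max}, & b_i^{\top}\lambda(t) > 0, \\ u_i^{\min}, & b_i^{\top}\lambda(t) < 0, \end{cases}$$

where $b_i$ is the $i$-th column of $B$. The states and co-states then evolve according to

$$\dot{x} = A x + B u^{*}, \qquad \dot{\lambda} = -\frac{\partial H}{\partial x} = -A^{\top}\lambda,$$

subject to the boundary conditions

$$x(0) = x_0, \qquad x(T) = x_f, \qquad H\left(x(T), u^{*}(T), \lambda(T)\right) = 0,$$

where the last condition arises because the final time $T$ is free. The resulting two point boundary value problem (TPBVP) is solved by matching the initial conditions of the unknown variables, $\lambda(0)$ and $T$. In other words, the solution to the TPBVP is obtained by solving for the zeros of

$$e\left(\lambda(0), T\right) = \begin{bmatrix} x(T) - x_f \\ H(T) \end{bmatrix}.$$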
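A minimal Python sketch of such a shooting residual for a linear minimum-time problem follows. It assumes box control limits, the Hamiltonian $H = -1 + \lambda^{\top}(Ax + Bu)$, co-state dynamics $\dot{\lambda} = -A^{\top}\lambda$ and a fixed-step RK4 integrator; singular arcs (where a switching function stays at zero) are not handled. The function names and the double-integrator test case are hypothetical illustrations, not the aircraft model of [4] or the authors' MATLAB code.

```python
import numpy as np


def bang_bang(lam, B, u_min, u_max):
    """PMP control: maximize lam^T B u over the box u_min <= u <= u_max."""
    s = B.T @ lam                          # one switching function per input
    return np.where(s > 0.0, u_max, u_min)


def shooting_residual(z, A, B, x0, xf, u_min, u_max, steps=2000):
    """Residual e(lam0, T) = [x(T) - xf; H(T)] of the minimum-time TPBVP.

    z = [lam0 (n entries), T]. Integrates states and co-states jointly with
    fixed-step RK4, re-evaluating the bang-bang control at every stage.
    """
    n = A.shape[0]
    lam0, T = np.asarray(z[:n], dtype=float), float(z[n])
    if T <= 0.0:
        return np.full(n + 1, 1e6)         # penalize non-positive final times

    def rhs(y):
        x, lam = y[:n], y[n:]
        u = bang_bang(lam, B, u_min, u_max)
        return np.concatenate([A @ x + B @ u, -A.T @ lam])

    y = np.concatenate([np.asarray(x0, dtype=float), lam0])
    h = T / steps
    for _ in range(steps):
        k1 = rhs(y)
        k2 = rhs(y + 0.5 * h * k1)
        k3 = rhs(y + 0.5 * h * k2)
        k4 = rhs(y + h * k3)
        y = y + (h / 6.0) * (k1 + 2.0 * k2 + 2.0 * k3 + k4)

    x, lam = y[:n], y[n:]
    u = bang_bang(lam, B, u_min, u_max)
    H = -1.0 + lam @ (A @ x + B @ u)       # free final time requires H(T) = 0
    return np.concatenate([x - xf, [H]])


# Hypothetical double integrator, |u| <= 1, from (1, 0) to the origin.
A = np.array([[0.0, 1.0], [0.0, 0.0]])
B = np.array([[0.0], [1.0]])
r = shooting_residual(np.array([-1.0, -1.0, 2.0]), A, B,
                      x0=[1.0, 0.0], xf=np.zeros(2),
                      u_min=np.array([-1.0]), u_max=np.array([1.0]))
print(np.linalg.norm(r))                   # close to zero at the known optimum
```

For this test case the time-optimal control is $u = -1$ on $[0, 1]$ and $u = +1$ on $[1, 2]$, which corresponds to $\lambda(0) = (-1, -1)$ and $T = 2$; any other guess leaves a nonzero residual, whose norm is what a search method such as DE would drive toward zero.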

4. Differential Evolution for TPBVP

Differential Evolution (DE) is a metaheuristic iterative optimization strategy that improves a set of candidate solutions over successive generations. Since its inception in the mid-1990s, DE has attracted growing interest owing to its simple yet powerful approach to solving engineering optimization problems [11]-[13]. In the framework of DE, each generation consists of a population of candidate solutions, also known as agents. During every generation, each agent is moved to a different position in the search space by a combination of update operations. If the new position of the agent is better (based on a fitness measure), it is carried into the next generation; otherwise, the original agent is retained. In this manner, the fitness of the candidate solutions improves over generations. In this work, the DE algorithm was implemented as described below.

During the first generation, the population of guess vectors was initialized. Mutation was then applied to each agent to generate mutants. During the mutation step, a “local best” candidate was used along with the “global best” and “random” candidates to update the search direction, similar to Particle Swarm Optimization (PSO). These mutants were crossed over with the existing agents based on a crossover probability (CR). In this implementation, a binomial crossover was performed to generate trial solutions, which were compared with their counterparts from the original population to populate the next generation.
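The text does not give the exact mutation formula, so the following Python sketch assumes one plausible form of the described scheme: each mutant is built from the agent itself, the best agent in a ring neighborhood of the agent (“local best”), the best agent in the population (“global best”) and a scaled difference of two random agents, followed by binomial crossover and one-to-one selection against the original population. The function name `de_local_global`, the ring-neighborhood definition of the local best, and the parameter values (F, CR, radius, population size) are assumptions for illustration only.

```python
import numpy as np


def de_local_global(f, bounds, pop_size=30, generations=200, F=0.5, CR=0.9,
                    radius=2, seed=0):
    """Minimize f over a box with a DE variant whose mutation mixes a
    neighborhood ("local") best, the population ("global") best and a random
    difference vector. Crossover is binomial; selection compares each trial
    with its parent from the current (original) population."""
    rng = np.random.default_rng(seed)
    lo, hi = np.asarray(bounds, dtype=float).T
    dim = lo.size
    pop = lo + rng.random((pop_size, dim)) * (hi - lo)
    fit = np.array([f(p) for p in pop])

    for _ in range(generations):
        g = int(np.argmin(fit))                         # global best index
        new_pop, new_fit = pop.copy(), fit.copy()
        for i in range(pop_size):
            # local best within a ring neighborhood of the given radius
            nbrs = [(i + k) % pop_size for k in range(-radius, radius + 1)]
            lb = nbrs[int(np.argmin(fit[nbrs]))]
            r1, r2 = rng.choice([j for j in range(pop_size) if j != i], 2,
                                replace=False)
            mutant = (pop[i]
                      + F * (pop[lb] - pop[i])
                      + F * (pop[g] - pop[i])
                      + F * (pop[r1] - pop[r2]))
            # binomial crossover; at least one component comes from the mutant
            mask = rng.random(dim) < CR
            mask[rng.integers(dim)] = True
            trial = np.clip(np.where(mask, mutant, pop[i]), lo, hi)
            f_trial = f(trial)
            if f_trial <= fit[i]:                       # greedy selection
                new_pop[i], new_fit[i] = trial, f_trial
        pop, fit = new_pop, new_fit

    best = int(np.argmin(fit))
    return pop[best], fit[best]


x_best, f_best = de_local_global(lambda x: float(np.sum(x ** 2)), [(-5.0, 5.0)] * 3)
print(x_best, f_best)
```

In the setting of this paper, `f` would be the norm of the final shooting error, with the decision vector holding the unknown initial conditions; the sphere function above is only a smoke test.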

The objective function to be minimized is the final error, which depends on the initial conditions of the unknown variables.

5. Results and Discussion

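The solution to the TPBVP yields the initial conditions (IC) for forward simulation of the minimum time trajectories. The optimal control problems from an initial state to the corners of the flight envelope were solved, and the resulting minimum time paths and trajectories are shown in Figure 2 and Figure 4.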

It can also be observed from Figure 4 that some trajectories cross the envelope boundary before reaching their end points. In such cases, the minimum time to the boundary is estimated from the first intersection with the boundary. Thus, the minimum time to the envelope can be computed as the infimum of the minimum times over all exit points under consideration. Table 1 lists the minimum exit time for each trajectory. The infimum obtained is 0.1 s, along path OI (Figure 4). Although such a short minimum time is of limited practical use, it can be argued that restricting the control bounds would yield more practically viable minimum times.

The convergence of the optimal solution using the Differential Evolution algorithm is shown in Figure 3, wherein the errors converge to zero within a finite number of generations. The corresponding optimal controls, namely thrust and elevator deflection, are shown in Figure 5 and Figure 6, respectively; both exhibit a bang-bang structure due to the affine nature of the Hamiltonian with respect to the control inputs.
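The first-intersection rule and the infimum over exit points described in this section can be sketched in Python as follows, assuming each trajectory is available as sampled times and states and the envelope is given as a membership test. The rectangular envelope, its limits and the function names are hypothetical, and the exit time is only resolved to the sampling interval; a finer estimate could interpolate between the last inside sample and the first outside sample.

```python
import numpy as np


def first_exit_time(t, X, inside):
    """Earliest sample time at which trajectory X (one row per sample in t)
    is no longer inside the envelope; np.inf if it never leaves."""
    for tk, xk in zip(t, X):
        if not inside(xk):
            return float(tk)
    return np.inf


def min_time_to_envelope(trajectories, inside):
    """Infimum (here a minimum over finitely many paths) of first exit times."""
    return min(first_exit_time(t, X, inside) for t, X in trajectories)


# Hypothetical rectangular envelope in a 2-D state space (illustrative only).
LO = np.array([-1.0, -1.0])
HI = np.array([1.0, 1.0])


def inside(x):
    return bool(np.all((x >= LO) & (x <= HI)))


t = np.linspace(0.0, 1.0, 101)
paths = [(t, np.outer(t, [2.2, 0.0])), (t, np.outer(t, [0.0, 3.3]))]
print(min_time_to_envelope(paths, inside))   # approximately 0.31 (second path)
```

Because each trajectory is checked for its first crossing, a path that leaves the envelope before reaching its intended end point contributes its earlier exit time, as described above.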

6. Conclusion

This work investigated the issue of LOC prediction using tools from optimal control theory to develop spatio-temporal pilot aids. The time-optimal problem of reaching the loss-of-control boundary was considered. The minimum time to reach each boundary point under consideration was computed using PMP, and the resulting TPBVP was solved using Differential Evolution. Simulations of the linear longitudinal model were carried out in MATLAB, and the optimal trajectories were found not necessarily to be the paths of minimum phase-space distance. The minimum time to the envelope was computed as the infimum of the minimum times to the various boundary points. Future work could investigate minimum time trajectory generation over a space of initial conditions (IC), which would expedite optimal trajectory generation by leveraging the principle of optimality. Alternative strategies for solving the TPBVP, such as hybrid optimization schemes, could be explored to reduce the computational load, and restricted control bounds could be considered to obtain practically viable minimum times. Further, the solution methodology employed here (PMP with DE) can be readily extended to nonlinear models, which better characterize the dynamics near LOC boundaries away from local trim conditions. In conclusion, this work provides an initial step toward augmenting spatial pilot aids with minimum time temporal information aimed at LOC prevention.

Table 1. Minimum time to end points in Figure 4.

Figure 1. Differential evolution for solving TPBVP.

Figure 2. Minimum time paths to corners.

Figure 3. Error convergence profile.

Figure 4. Minimum time trajectories to corners.

Figure 5. Optimal thrust.

Figure 6. Optimal elevator deflection.

Acknowledgements

The authors thank the NASA Ames Research Center for their support.

Cite this paper

Chaitanya Poolla, Abraham K. Ishihara (2015) Temporal Prediction of Aircraft Loss-of-Control: A Dynamic Optimization Approach. Intelligent Control and Automation, 06, 241-248. doi: 10.4236/ica.2015.64023

References

- 1. Boeing (2012) Statistical Summary of Commercial Jet Airplane Accidents: Worldwide Operations. Technical Report, Boeing.

- 2. Civil Aviation Authority (2013) Global Fatal Accident Review 2002-2011. TSO.

- 3. Jacobson, S. and Edwards, C.A. (2010) Aircraft Loss of Control Study. NASA Internal Report.

- 4. Kwatny, H.G., et al. (2012) Nonlinear Analysis of Aircraft Loss of Control. Journal of Guidance, Control, and Dynamics, 36, 149-162.

- 5. Michales, A.S. (2012) Contributing Factors among Fatal Loss of Control Accidents in Multiengine Turbine Aircraft.

- 6. Wilborn, J.E. and Foster, J.V. (2004) Defining Commercial Transport Loss-of-Control: A Quantitative Approach. AIAA Atmospheric Flight Mechanics Conference and Exhibit.

- 7. Brooks, R.L. (2012) LOC-I Training Foundations and Solutions. Technical Report, Boeing.

- 8. Krishnakumar, K., et al. (2014) Initial Evaluations of LoC Prediction Algorithms using the NASA Vertical Motion Simulator. SciTech 2014.

- 9. Barlow, J., Stepanyan, V. and Krishnakumar, K. (2012) Estimating Loss-of-Control: A Data Based Predictive Approach.

- 10. Pontryagin, L.S., et al. (1962) The Mathematical Theory of Optimal Processes. International Series of Monographs in Pure and Applied Mathematics, Interscience, New York.

- 11. Qin, A.K., Huang, V.L. and Suganthan, P.N. (2009) Differential Evolution Algorithm with Strategy Adaptation for Global Numerical Optimization. IEEE Transactions on Evolutionary Computation, 13, 398-417.

- 12. Okdem, S. (2004) A Simple and Global Optimization Algorithm for Engineering Problems: Differential Evolution Algorithm. Turkish Journal of Electrical Engineering and Computer Sciences, 12.

- 13. Bersini, H., et al. (1996) Results of the First International Contest on Evolutionary Optimisation (1st ICEO). Proceedings of IEEE International Conference on Evolutionary Computation, 20-22 May 1996, 611-615.