Journal of Applied Mathematics and Physics

Vol.02 No.10(2014), Article ID:50264,4 pages

10.4236/jamp.2014.210107

Empirical Likelihood Diagnosis of Modal Linear Regression Models

Shuling Wang¹, Lin Zheng², Jiangtao Dai³

¹Department of Fundamental Course, Air Force Logistics College, Xuzhou, China

²School of Statistics and Applied Mathematics, Anhui University of Finance and Economics, Bengbu, China

³Fundamental Science Department, North China Institute of Astronautic Engineering, Langfang, China

Email: 155328313@qq.com

Copyright © 2014 by authors and Scientific Research Publishing Inc.

This work is licensed under the Creative Commons Attribution International License (CC BY).

http://creativecommons.org/licenses/by/4.0/

Received 10 August 2014; revised 10 September 2014; accepted 17 September 2014

ABSTRACT

In this paper, we investigate the empirical likelihood diagnosis of modal linear regression models. The empirical likelihood ratio function for the regression coefficients, based on the modal regression estimation method, is introduced. First, the estimating equation based on the empirical likelihood method is established. Then, some diagnostic statistics are proposed. Finally, we examine the finite-sample performance of the proposed method through a simulation study.

Keywords:

Modal Linear Regression Model, Empirical Likelihood, Outliers, Influence Analysis

1. Introduction

The mode of a distribution is regarded as an important feature of data. Several authors have made efforts to identify the modes of population distributions for low-dimensional data. See, for example, Muller and Sawitzki [1]; Scott [2]; Friedman and Fisher [3]; Chaudhuri and Marron [4]; Fisher and Marron [5]; Davies and Kovac [6]; Hall, Minnotte and Zhang [7]; Ray and Lindsay [8]; Yao and Lindsay [9]. For high-dimensional data, it is common to impose some model structure, such as assumptions on the conditional distributions. Thus, it is of great interest to study mode hunting for conditional distributions.

Given a random sample , where

, where  is a p-dimension column vector,

is a p-dimension column vector,  is the conditional density function. For the conventional regression models, the mean of

is the conditional density function. For the conventional regression models, the mean of  is usually used to investigate the relationship between

is usually used to investigate the relationship between  and

and  and the linear regression assumes that the mean of

and the linear regression assumes that the mean of  is a linear function of

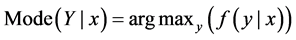

is a linear function of . Yao and Li [10] proposed a new regression model called modal linear regression that assumes the mode of

. Yao and Li [10] proposed a new regression model called modal linear regression that assumes the mode of  is a linear function of the predictor

is a linear function of the predictor . Modal linear regression measures the center using the “most likely” conditional values rather than the conditional average used by the traditional linear regression.

. Modal linear regression measures the center using the “most likely” conditional values rather than the conditional average used by the traditional linear regression.

Lee [11] used the uniform kernel and the Epanechnikov kernel to estimate the modal regression. However, these estimators are of little practical use because the objective function is non-differentiable and the distribution of the estimators is intractable. Scott [2] mentioned modal regression, but gave little methodology on how to implement it in practice. Recently, Yao et al. [12] investigated the estimation problem in nonparametric regression using the method of modal regression, and obtained a robust and efficient estimator for the nonparametric regression function. Yao and Li [10] suggested using the Gaussian kernel and developed the modal expectation-maximization (MEM) algorithm to compute modal estimators for linear models. Their estimation procedure is convenient for practitioners to implement, and the results are encouraging for many non-normal error distributions. Yu and Aristodemou [13] studied modal regression from a Bayesian perspective. In addition, Zhao, Zhang and Liu [14] considered how to obtain a robust empirical likelihood estimator for regression models.

The empirical likelihood method originates from Thomas and Grunkemeier [15]. Owen [16] first proposed the definition of empirical likelihood and gave a systematic account of the method. Zhu et al. [17] applied the method to statistical diagnostics: they developed diagnostic measures for assessing the influence of individual observations when using empirical likelihood with general estimating equations, and used these measures to construct goodness-of-fit statistics for testing possible misspecification in the estimating equations. Xue and Zhu [18] summarized the applications of the method.

Over the last several decades, the diagnosis and influence analysis of linear regression models have been fully developed (Cook and Weisberg [19]; Wei, Lu and Shi [20]). So far, statistical diagnostics for modal linear regression models based on the empirical likelihood method have not appeared in the literature. This paper attempts to study them.

The rest of the paper is organized as follows. In Section 2, we review modal regression. In Section 3, the empirical likelihood and the estimating equation are presented. The main results are given in Section 4. A simulation study illustrating our results is given in Section 5.

2. Modal Linear Regression

Suppose a response variable $y$ given a set of predictors $x$ is distributed with a probability density function (PDF) $f(y \mid x)$. Yao and Li [10] proposed to use the mode of $f(y \mid x)$, denoted by

$$\mathrm{Mode}(y \mid x) = \arg\max_{y} f(y \mid x),$$

to investigate the relationship between $y$ and $x$. Modal linear regression assumes that this mode is a linear function of $x$:

$$\mathrm{Mode}(y \mid x) = x^{T}\beta. \qquad (1)$$

The idea of modal linear regression can be easily generalized to other models such as nonlinear regression, nonparametric regression, and varying coefficient partially linear regression. To include the intercept term in (1), we assume that the first element of $x$ is 1.

Yao and Li [10] proposed to estimate the modal regression parameter $\beta$ by maximizing

$$Q_h(\beta) = \frac{1}{n}\sum_{i=1}^{n} \phi_h\left(y_i - x_i^{T}\beta\right),$$

where $\phi_h(t) = h^{-1}\phi(t/h)$, $\phi(t)$ is a kernel density function symmetric about 0, and $h$ is a bandwidth.
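As an illustration, the MEM iteration of Yao and Li [10] for the Gaussian kernel can be sketched as follows. The sketch maximizes the kernel objective $Q_h(\beta) = n^{-1}\sum_{i}\phi_h(y_i - x_i^{T}\beta)$ by alternating between kernel weights (E-step) and a weighted least-squares update (M-step). The function names, the least-squares starting value, the fixed bandwidth `h`, and the stopping rule are assumptions made for illustration, not details taken from the paper.

```python
import numpy as np

def kernel_objective(X, y, beta, h):
    """Q_h(beta): average Gaussian kernel value of the residuals."""
    r = (y - X @ beta) / h
    return np.mean(np.exp(-0.5 * r ** 2) / (h * np.sqrt(2.0 * np.pi)))

def mem_modal_regression(X, y, h, beta0=None, max_iter=500, tol=1e-8):
    """Sketch of the MEM iteration for modal linear regression (Gaussian kernel).

    X is an (n, p) design matrix whose first column is 1 (intercept),
    y is an (n,) response vector, h is the bandwidth (assumed given).
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    # Assumed starting value: ordinary least squares.
    if beta0 is None:
        beta = np.linalg.lstsq(X, y, rcond=None)[0]
    else:
        beta = np.asarray(beta0, dtype=float)
    for _ in range(max_iter):
        r = y - X @ beta
        # E-step: weights proportional to phi_h(residual), normalized to sum to 1.
        log_w = -0.5 * (r / h) ** 2
        w = np.exp(log_w - log_w.max())
        w /= w.sum()
        # M-step: weighted least squares with the current weights.
        XtW = X.T * w
        beta_new = np.linalg.solve(XtW @ X, XtW @ y)
        converged = np.linalg.norm(beta_new - beta) < tol
        beta = beta_new
        if converged:
            break
    return beta
```

Because each iteration is an EM-type step, the kernel objective does not decrease from one iterate to the next; the result can still depend on the starting value, so several starts may be worth trying in practice.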

3. Empirical Likelihood and Estimation Equation

In this section, we review the empirical likelihood based on modal regression for the regression coefficients, and then establish the estimating equations.

Similarly to Zhao, Zhang and Liu [14] , we define an auxiliary random vector

Note that

By the method of Lagrange multipliers, similar to that used in Owen [16],

where

Motivated by Zhu and Ibrahim [17] , we regard

Obviously, the maximum empirical likelihood estimates
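To make the Lagrange-multiplier step concrete, the following is a minimal sketch of how an empirical likelihood ratio can be evaluated at a fixed $\beta$. It assumes an auxiliary vector of the form $\xi_i(\beta) = x_i\,(y_i - x_i^{T}\beta)\,\phi_h(y_i - x_i^{T}\beta)$, which is proportional to the derivative of the Gaussian kernel objective; this form, the function names, and the damped Newton solver are assumptions for illustration. The multiplier $\lambda$ solves $\sum_i \xi_i / (1 + \lambda^{T}\xi_i) = 0$, as in Owen [16], and the statistic returned is $2\sum_i \log(1 + \lambda^{T}\xi_i)$.

```python
import numpy as np

def modal_aux(X, y, beta, h):
    """Assumed auxiliary vectors xi_i = x_i * r_i * phi_h(r_i), r_i = y_i - x_i' beta."""
    r = y - X @ beta
    phi_h = np.exp(-0.5 * (r / h) ** 2) / (h * np.sqrt(2.0 * np.pi))
    return X * (r * phi_h)[:, None]

def neg2_log_el_ratio(xi, max_iter=100, tol=1e-10):
    """Return (-2 log EL ratio, lambda) for the constraint sum_i p_i xi_i = 0.

    Maximizes f(lam) = sum_i log(1 + lam' xi_i), which is concave, by a
    damped Newton iteration that keeps every 1 + lam' xi_i positive.
    """
    lam = np.zeros(xi.shape[1])
    for _ in range(max_iter):
        d = 1.0 + xi @ lam
        z = xi / d[:, None]
        grad = z.sum(axis=0)              # sum_i xi_i / (1 + lam' xi_i)
        if np.linalg.norm(grad) < tol:
            break
        step = np.linalg.solve(z.T @ z, grad)   # Newton direction for a concave f
        f_old = np.log(d).sum()
        t = 1.0
        while t > 1e-12:                  # step halving
            d_new = 1.0 + xi @ (lam + t * step)
            if np.all(d_new > 1e-8) and np.log(d_new).sum() >= f_old - 1e-12:
                break
            t /= 2.0
        lam = lam + t * step
    return 2.0 * np.log(1.0 + xi @ lam).sum(), lam
```

The implied weights are $p_i = 1 / \{n(1 + \lambda^{T}\xi_i)\}$. The sketch presumes that the zero vector lies inside the convex hull of the $\xi_i$; otherwise the maximization has no finite solution.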

4. Local Influence Analysis of Model

We consider the local influence method for a case-weight perturbation

likelihood function

1 vector with all elements equal to 1, represents no perturbation to the empirical likelihood, because

where

influence graph

We consider two local influence measures based on the normal curvature

The most popular local influence measures include

as

the most influential perturbation to the empirical likelihood function, whereas the

Following the discussion of Zhu et al. [17], for the varying-coefficient density-ratio model, we can deduce that

where
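For readers who want to experiment with curvature-based measures, the following generic sketch computes two standard local influence quantities in the sense of Cook's normal curvature: the direction of largest curvature and the curvature along each single-case direction $e_j$. It takes as inputs the second-derivative matrix of an objective with respect to the parameters and the matrix of mixed derivatives with respect to the parameters and the case weights, both evaluated at the estimate with no perturbation. The names `hessian`, `delta`, `v_max`, and `c_e` are assumptions, and the sketch is not tied to the specific empirical likelihood expressions of this section.

```python
import numpy as np

def local_influence(hessian, delta):
    """Curvature-based local influence measures (generic sketch).

    hessian : (p, p) second derivative of the objective in the parameters,
              assumed negative definite at the estimate.
    delta   : (p, n) mixed derivative of the objective in the parameters
              and the n case weights.
    Returns (v_max, c_e, c_max): the unit direction of largest normal
    curvature, the curvature along each coordinate direction e_j, and the
    largest curvature.
    """
    # Normal curvature in direction d is d' B d with B = -2 Delta' H^{-1} Delta.
    B = -2.0 * delta.T @ np.linalg.solve(hessian, delta)
    B = 0.5 * (B + B.T)                   # symmetrize against rounding error
    eigval, eigvec = np.linalg.eigh(B)    # eigenvalues in ascending order
    v_max = eigvec[:, -1]                 # sign of this vector is arbitrary
    c_e = np.diag(B).copy()
    return v_max, c_e, eigval[-1]
```

Plotting the absolute entries of `v_max`, or the values of `c_e`, against the case index gives an index plot in which unusually large values flag potentially influential observations.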

5. Numerical Study

We generate data sets from the following model

where the covariates

Figure 1. The influence value of

To check the validity of the proposed methodology, we change the values of the 1st, 125th, 374th, 789th and 999th observations. For every case, it is easy to obtain

Consequently, it is easy to calculate the value of

From the figure, we can see that in most cases, the value of

6. Discussion

In this paper, we considered statistical diagnostics for modal linear regression models based on empirical likelihood. The simulation study illustrates that the proposed method works fairly well.

References

1. Muller, D.W. and Sawitzki, G. (1991) Excess Mass Estimates and Tests for Multimodality. Journal of the American Statistical Association, 86, 738-746.

2. Scott, D.W. (1992) Multivariate Density Estimation: Theory, Practice and Visualization. Wiley, New York. http://dx.doi.org/10.1002/9780470316849

3. Friedman, J.H. and Fisher, N.I. (1999) Bump Hunting in High-Dimensional Data. Statistics and Computing, 9, 123-143. http://dx.doi.org/10.1023/A:1008894516817

4. Chaudhuri, P. and Marron, J.S. (1999) SiZer for Exploration of Structures in Curves. Journal of the American Statistical Association, 94, 807-823. http://dx.doi.org/10.1080/01621459.1999.10474186

5. Fisher, N.I. and Marron, J.S. (2001) Mode Testing via the Excess Mass Estimate. Biometrika, 88, 499-517. http://dx.doi.org/10.1093/biomet/88.2.499

6. Davies, P.L. and Kovac, A. (2004) Densities, Spectral Densities and Modality. Annals of Statistics, 32, 1093-1136.

7. Hall, P., Minnotte, M.C. and Zhang, C. (2004) Bump Hunting with Non-Gaussian Kernels. Annals of Statistics, 32, 2124-2141. http://dx.doi.org/10.1214/009053604000000715

8. Ray, S. and Lindsay, B.G. (2005) The Topography of Multivariate Normal Mixtures. Annals of Statistics, 33, 2042-2065. http://dx.doi.org/10.1214/009053605000000417

9. Yao, W. and Lindsay, B.G. (2009) Bayesian Mixture Labeling by Highest Posterior Density. Journal of the American Statistical Association, 104, 758-767. http://dx.doi.org/10.1198/jasa.2009.0237

10. Yao, W. and Li, L. (2013) A New Regression Model: Modal Linear Regression. Scandinavian Journal of Statistics, 41, 656-671. http://dx.doi.org/10.1111/sjos.12054

11. Lee, M.J. (1989) Mode Regression. Journal of Econometrics, 42, 337-349. http://dx.doi.org/10.1016/0304-4076(89)90057-2

12. Yao, W., Lindsay, B. and Li, R. (2012) Local Modal Regression. Journal of Nonparametric Statistics, 24, 647-663. http://dx.doi.org/10.1080/10485252.2012.678848

13. Yu, K. and Aristodemou, K. (2012) Bayesian Mode Regression. Technical Report. arXiv: 1208.0579v1.

14. Zhao, W.H., Zhang, R.Q., Liu, Y.K. and Liu, J.C. (2014) Empirical Likelihood Based Modal Regression. Statistical Papers.

15. Thomas, D.R. and Grunkemeier, G.L. (1975) Confidence Interval Estimation of Survival Probabilities for Censored Data. Journal of the American Statistical Association, 70, 865-871. http://dx.doi.org/10.1080/01621459.1975.10480315

16. Owen, A. (2001) Empirical Likelihood. Chapman and Hall, New York. http://dx.doi.org/10.1201/9781420036152

17. Zhu, H.T., Ibrahim, J.G., Tang, N.S. and Zhang, H.P. (2008) Diagnostic Measures for Empirical Likelihood of Generalized Estimating Equations. Biometrika, 95, 489-507. http://dx.doi.org/10.1093/biomet/asm094

18. Xue, L.G. and Zhu, L.X. (2010) Empirical Likelihood in Nonparametric and Semiparametric Models. Science Press, Beijing.

19. Cook, R.D. and Weisberg, S. (1982) Residuals and Influence in Regression. Chapman and Hall, New York.

20. Wei, B.C., Lu, G.B. and Shi, J.Q. (1990) Statistical Diagnostics. Publishing House of Southeast University, Nanjing.