Combining Multiple Cues for Pedestrian Detection in Crowded Situations

Copyright © 2013 SciRes. JSIP


6. Acknowledgements

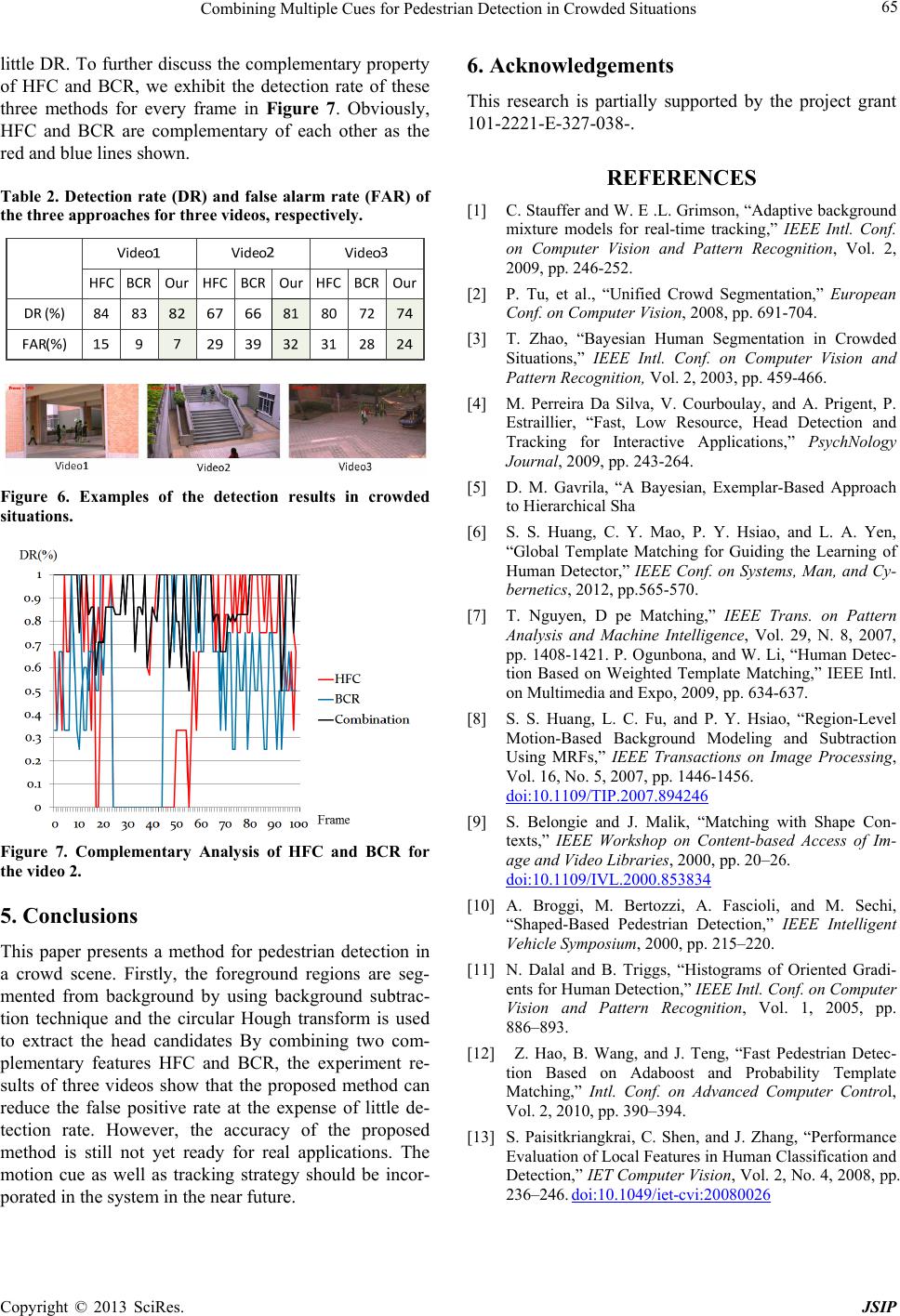

little DR. To further discuss the comple mentary property

of HFC and BCR, we exhibit the detection rate of these

three methods for every frame in Figure 7. Obviously,

HFC and BCR are complementary of each other as the

red and blue lines shown.

This research is partially supported by the project grant

101-2221-E-327-038-.

Table 2. Detection rate (DR) and false alarm rate (FAR) of the three approaches for the three videos.

Figure 6. Examples of the detection results in crowded situations.

REFERENCES

[1] C. Stauffer and W. E. L. Grimson, “Adaptive Background Mixture Models for Real-Time Tracking,” IEEE Intl. Conf. on Computer Vision and Pattern Recognition, Vol. 2, 1999, pp. 246–252.

[2] P. Tu, et al., “Unified Crowd Segmentation,” European Conf. on Computer Vision, 2008, pp. 691–704.

[3] T. Zhao, “Bayesian Human Segmentation in Crowded Situations,” IEEE Intl. Conf. on Computer Vision and Pattern Recognition, Vol. 2, 2003, pp. 459–466.

[4] M. Perreira Da Silva, V. Courboulay, A. Prigent, and P. Estraillier, “Fast, Low Resource, Head Detection and Tracking for Interactive Applications,” PsychNology Journal, 2009, pp. 243–264.

[5] D. M. Gavrila, “A Bayesian, Exemplar-Based Approach to Hierarchical Shape Matching,” IEEE Trans. on Pattern Analysis and Machine Intelligence, Vol. 29, No. 8, 2007, pp. 1408–1421.

[6] S. S. Huang, C. Y. Mao, P. Y. Hsiao, and L. A. Yen, “Global Template Matching for Guiding the Learning of Human Detector,” IEEE Conf. on Systems, Man, and Cybernetics, 2012, pp. 565–570.

[7] T. Nguyen, D. P. Ogunbona, and W. Li, “Human Detection Based on Weighted Template Matching,” IEEE Intl. Conf. on Multimedia and Expo, 2009, pp. 634–637.

[8] S. S. Huang, L. C. Fu, and P. Y. Hsiao, “Region-Level Motion-Based Background Modeling and Subtraction Using MRFs,” IEEE Transactions on Image Processing, Vol. 16, No. 5, 2007, pp. 1446–1456. doi:10.1109/TIP.2007.894246

[9] S. Belongie and J. Malik, “Matching with Shape Contexts,” IEEE Workshop on Content-based Access of Image and Video Libraries, 2000, pp. 20–26. doi:10.1109/IVL.2000.853834

[10] A. Broggi, M. Bertozzi, A. Fascioli, and M. Sechi, “Shape-Based Pedestrian Detection,” IEEE Intelligent Vehicle Symposium, 2000, pp. 215–220.

Figure 7. Complementarity analysis of HFC and BCR for video 2.

5. Conclusions

This paper presents a method for pedestrian detection in crowded scenes. First, the foreground regions are segmented from the background using a background subtraction technique, and the circular Hough transform is used to extract head candidates. Experimental results on three videos show that, by combining two complementary features, HFC and BCR, the proposed method reduces the false positive rate at the expense of a small drop in detection rate. However, the accuracy of the proposed method is not yet sufficient for real applications. Motion cues and a tracking strategy should be incorporated into the system in future work.
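To make the first two steps summarized above concrete, the following is a minimal sketch of foreground segmentation followed by a fixed-radius circular Hough transform. It is an illustration under stated assumptions, not the implementation evaluated in this paper: the background model is replaced by simple thresholded differencing against a static background image, the head radius is assumed to be known, images are plain nested lists of grey values, and all function names and parameters (`foreground_mask`, `boundary_points`, `hough_circle_accumulator`, `head_candidates`, `thresh`, `min_votes`) are hypothetical. The HFC and BCR verification step is not sketched because those features are not defined in this section.

```python
import math


def foreground_mask(frame, background, thresh=30):
    """Binary mask: 1 where the frame differs from the background by more than thresh.

    Assumption: plain frame differencing stands in for the paper's background model.
    """
    return [[1 if abs(f - b) > thresh else 0 for f, b in zip(frow, brow)]
            for frow, brow in zip(frame, background)]


def boundary_points(mask):
    """Foreground pixels that touch background (or the image border) in a 4-neighbourhood."""
    h, w = len(mask), len(mask[0])
    points = []
    for y in range(h):
        for x in range(w):
            if not mask[y][x]:
                continue
            for dx, dy in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nx, ny = x + dx, y + dy
                if not (0 <= nx < w and 0 <= ny < h) or not mask[ny][nx]:
                    points.append((x, y))
                    break
    return points


def hough_circle_accumulator(points, shape, radius, n_angles=72):
    """Fixed-radius circular Hough transform.

    Each boundary point votes once for every cell on a circle of the given
    radius around it, so true circle centres collect many votes.
    """
    h, w = shape
    acc = [[0] * w for _ in range(h)]
    for x, y in points:
        voted = set()  # one vote per cell per boundary point
        for k in range(n_angles):
            theta = 2.0 * math.pi * k / n_angles
            cx = int(round(x - radius * math.cos(theta)))
            cy = int(round(y - radius * math.sin(theta)))
            if 0 <= cx < w and 0 <= cy < h and (cx, cy) not in voted:
                voted.add((cx, cy))
                acc[cy][cx] += 1
    return acc


def head_candidates(acc, min_votes):
    """Return (x, y, votes) for cells with at least min_votes, strongest first."""
    cells = [(x, y, v) for y, row in enumerate(acc)
             for x, v in enumerate(row) if v >= min_votes]
    return sorted(cells, key=lambda c: -c[2])
```

In such a sketch, each returned candidate would then be passed to a verification stage. In a crowded scene several head radii would normally be tried, since apparent head size varies with distance from the camera.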

[11] N. Dalal and B. Triggs, “Histograms of Oriented Gradients for Human Detection,” IEEE Intl. Conf. on Computer Vision and Pattern Recognition, Vol. 1, 2005, pp. 886–893.

[12] Z. Hao, B. Wang, and J. Teng, “Fast Pedestrian Detection Based on Adaboost and Probability Template Matching,” Intl. Conf. on Advanced Computer Control, Vol. 2, 2010, pp. 390–394.

[13] S. Paisitkriangkrai, C. Shen, and J. Zhang, “Performance Evaluation of Local Features in Human Classification and Detection,” IET Computer Vision, Vol. 2, No. 4, 2008, pp. 236–246. doi:10.1049/iet-cvi:20080026