Psychology 2013. Vol.4, No.8, 663-668
Published Online August 2013 in SciRes (http://www.scirp.org/journal/psych)
http://dx.doi.org/10.4236/psych.2013.48094
Copyright © 2013 SciRes.

Static and Dynamic Presentation of Emotions in Different Facial Areas: Fear and Surprise Show Influences of Temporal and Spatial Properties*

Holger Hoffmann¹, Harald C. Traue¹, Kerstin Limbrecht-Ecklundt¹, Steffen Walter¹, Henrik Kessler²
¹Medical Psychology, University of Ulm, Ulm, Germany
²Medical Psychology, University of Bonn, Bonn, Germany
Email: holger.hoffmann@uni-ulm.de

Received November 29th, 2012; revised January 6th, 2013; accepted February 6th, 2013

Copyright © 2013 Holger Hoffmann et al. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

When facially expressed emotions are presented in experimental settings, sound knowledge of stimulus properties is pivotal. We therefore conducted two experiments to investigate how temporal properties (static versus dynamic) and spatial properties (upper versus lower half of the face) of facial emotion stimuli influence recognition accuracy. In the first experiment, results differed across the six emotions examined (anger, disgust, fear, happiness, sadness and surprise): fear and surprise were recognized more accurately when dynamic stimuli were used. The second experiment used only dynamic presentations. Here, recognition rates differed significantly between the upper and lower face for most emotions; fear was detectable only in the upper half and happiness only in the lower half. The results suggest that the importance of each facial area is emotion-specific.

Keywords: Emotion Recognition; Facial Expressions; Static vs. Dynamic

Introduction

The recognition of emotions is an essential element of social interaction (Buck, 1984; Ekman, 1993).
A common paradigm for studying emotion recognition ability is to present photographs of different emotional expressions, usually taken at the moment of strongest expression. The disadvantage of this approach is that such stimuli have limited ecological validity, because in real-world interactions people must recognize emotions from dynamically changing faces. Emotions observed over time arise at a specific moment, reach their peak and subside again (onset, apex, offset; Hess & Kleck, 2005). In interactions, emotions can also appear in only some areas of the face. Static stimuli therefore risk missing actual recognition accuracy, because they lack dynamics, and risk underestimating the importance of specific facial areas. Hence, it can be concluded that dynamic sequences better reproduce real-life emotion recognition and thus allow more accurate results, reflected for example in better estimates of recognition accuracy. As early as 1982, Ekman & Friesen suspected that static and dynamic stimulus material may produce performance differences. Another argument for using dynamic stimuli is that different brain areas are active when static versus dynamic emotional expressions are presented (Trautmann, Fehr, & Herrmann, 2009; Kessler, Doyen-Waldecker, Hofer, Hoffmann, Traue, & Abler, 2011). Additionally, if natural processes are to be examined (which is not part of this study), dynamic stimuli should be used (e.g., Kilts, Egan, Gideon, Ely, & Hoffman, 2003; LaBar, Crupain, Voyvodic, & McCarthy, 2003; Sato, Kochiyama, Yoshikawa, Naito, & Matsumara, 2004). However, these dynamic stimuli should be standardized so that results can be compared between studies.
A number of studies have already examined the influence of dynamic emotion expressions in detail, with inconsistent results. Harwood, Hall, & Shinkfield (1999), for example, showed that anger and sadness were recognized more readily when the emotion was presented dynamically. Two classic studies by Bassili (1978, 1979), however, found that dynamic stimuli improved recognition for all emotions. Ambadar, Schooler, & Cohn (2005) used single-static, multi-static and dynamic stimuli. They demonstrated a robust effect of motion and suggested that it was due to the dynamic property of the expression. Additionally, Trautmann, Fehr, & Herrmann (2009) found different brain activation patterns when comparing the neural processing of static and dynamic stimuli; in their study, dynamic stimuli were more recognizable than static ones. A 2013 review by Krumhuber, Kappas, & Manstead emphasizes the limitations of static stimuli and underlines the dynamic nature of facial activity. In contrast, Wehrle, Kaiser, Schmidt, & Scherer (2000) and Fiorentini & Viviani (2011) found no statistically significant differences between static and dynamic facial expressions.

*This research was supported in part by grants from the Transregional Collaborative Research Centre SFB/TRR 62 "Companion-Technology for Cognitive Technical Systems" funded by the German Research Foundation (DFG).

It is not always the entire face that provides important clues about the expressed emotion. One way to discover which parts of the face are analyzed more closely during emotion recognition is to divide the face into an upper and a lower half and present an emotional expression in only one of them.
Previous studies (Calder, Young, Keane, & Dean, 2000; Bassili, 1978; Bassili, 1979) suggest that emotion recognition does not work the same way for all basic emotions. Each emotion appears to have specific key stimuli that provide the basis for classification. For example, recognition of surprise is associated with seeing wide-open eyes, whereas a disgusted face is characterized by a wrinkled nose and a raised upper lip. It can thus be assumed that the relevance of each half of the face depends on the basic emotion and its key stimuli. Our study therefore has two aims: 1) to evaluate possible differences between dynamic and static stimulus material, and 2) to assess how much the upper and lower halves of a facial expression each contribute to recognition accuracy.

General Methods

The stimuli used in this study were pictures from the JACFEE/JACNeuF (Japanese and Caucasian Facial Expressions of Emotion and Neutral Faces) picture set (Matsumoto & Ekman, 1988). This set consists of 56 actors, each portraying one of seven emotions (anger, contempt, disgust, fear, happiness, sadness and surprise). Half of the actors are male and half female; half are of Japanese and half of Caucasian origin. For our experiments, we used a subset of 42 actors and six emotions, evenly distributed among all subsets of stimuli. Contempt was excluded because the vast majority of studies in the field do not consider this emotion. In our picture set, each actor displays only one emotional and one neutral expression. Several studies have shown that the JACFEE/JACNeuF picture set reliably and validly displays the intended emotions (e.g., Biehl, Matsumoto, Ekman, Hearn, Heider, Kudoh, & Ton, 1997).
The FEMT (Facial Expression Morphing Tool) was used to create the subtle facial expressions employed in these experiments (Kessler, Hoffmann, Bayerl, Neumann, Basic, Deighton, & Traue, 2005; see also Hoffmann, Kessler, Eppel, Rukavina, & Traue, 2010). This software uses different morphing algorithms to produce intermediate frames between two images, and the method was optimized with additional techniques. Sequences were generated using multiple layers, which minimized distracting facial information by morphing only the important features of the face. The multiple layers and special smoothing algorithms made it possible, for example, to create realistic transitions from closed to open mouths. The FEMT can generate images at any intensity between 0% (neutral face) and 100% (full-blown emotion). All stimuli used in the study were in color and were presented on a computer screen.

Experiment 1

This experiment compared recognition accuracy for static and dynamic stimuli. The hypothesis was that static and dynamic stimuli differ significantly, and that these differences vary across the six basic emotions.

Methods

Participants

The study included N = 220 healthy participants. Participants in the experimental group (EG; N = 110) were aged 18 to 28 years (M = 20.5; SD = 2.0). Seventy participants were female (63.6%) and 40 male (36.4%). All subjects in the experimental group gave written consent to participate. A control group (CG; N = 110) was then matched from the FEEL database. Participants in the control group were aged 19 to 29 years (M = 21.5; SD = 2.3), and 63.6% of them were female.

The FEEL Test (Facially Expressed Emotion Labeling)

The FEEL test is a computer-based method for measuring individual emotion recognition ability (Kessler, Bayerl, Deighton, & Traue, 2002).
It consists of pictures of 42 different actors portraying the six basic emotions (happiness, sadness, disgust, fear, surprise, anger). These images were taken from the JACFEE/JACNeuF picture set (Matsumoto & Ekman, 1988) described above. First a neutral facial expression is shown. Then the emotional facial expression appears on the computer screen for 300 ms, after which it must be assigned to a category. For this, a choice box appears and the subject clicks on one of the six emotions (forced-choice format). A total of 48 images are presented, because each emotion is shown once in a trial run beforehand so that subjects can familiarize themselves with the task. With a Cronbach's alpha of .77, the test has satisfactory reliability. Since the FEEL test came into use, data from 600 healthy subjects of different age, sex and education have been collected, so that user-defined control groups can be drawn from this database. Different issues have been examined with this approach (e.g., Hoffmann et al., 2010; Kessler, Roth, von Wietersheim, Deighton, & Traue, 2007).

Procedure

The subjects selected from the FEEL database had seen static images with a full-blown emotional expression. The experimental group, by contrast, saw video sequences created with the FEMT from the corresponding neutral and emotional images. Because the quality of the picture material allowed only 36 video sequences to be created, the control group data were adjusted to account for the missing stimuli. All subjects completed six trial runs before the actual test to ensure familiarity with the procedure. As Figure 1 shows, the test procedure was designed to be as similar as possible for the two groups. First, all participants saw the neutral expression of an actor. The control group saw the neutral face for 1500 ms, whereas the experimental group saw it for 1300 ms to 2100 ms.
The neutral face was shown for different lengths of time because the dynamic sequences that followed it lasted between 400 ms (surprise) and 1200 ms (sadness), depending on the emotion shown. For the development of an emotion to be perceived as natural, a particular temporal course is required. For surprise, for example, a much shorter onset is considered natural than for sadness (Hoffmann, Traue, Bachmayr, & Kessler, 2010). While the experimental group saw the dynamic sequence, the control group saw a white screen. Both groups then saw the full-blown emotion for 300 ms, so that each trial lasted 2500 ms in total for all participants. After the emotional image disappeared from the screen, six choice boxes appeared after 500 ms, each with one emotion label. Subjects clicked on the emotion they had just seen and had ten seconds to decide before the next trial started. The images with the emotional expressions and the six choice boxes were presented at different times. Presentation of the images was randomized.

Figure 1. Static vs. dynamic presentation of facial expressions. The left side represents the experimental group (dynamic condition); the right side represents the FEEL group (static condition).

Results

Experiment 1 had a 6 × 2 × 2 mixed design. The within-subject factor was emotion (anger, disgust, fear, happiness, sadness or surprise). The between-subject factors were participants' gender (female or male) and the type of stimulus material presented (static or dynamic). Analyses were performed with the SPSS 20 software package. Overall, recognition accuracy did not differ significantly between static (M = 82.5%; SD = 9.3) and dynamic (M = 83.7%; SD = 8.2) stimulus material.
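The overall static-versus-dynamic comparison reported above and the per-emotion comparisons summarized in Table 1 can be roughly re-checked from the published means and spreads. The sketch below is not the authors' analysis, which was a 6 × 2 × 2 mixed design run in SPSS 20. It rests on three assumptions. First, the values in parentheses in Table 1 are standard deviations, as the overall SD = 9.3 reported above suggests. Second, each group contributed n = 110 participants. Third, a normal approximation to the t distribution is used for the two-sided p value, which is close here because the degrees of freedom exceed 200. The function name `welch_from_summary` is illustrative only.

```python
import math

# Table 1 values: emotion -> ((static mean, static SD), (dynamic mean, dynamic SD)).
# Assumption: parenthesized values are standard deviations; n = 110 per group.
TABLE_1 = {
    "Anger": ((91.4, 14.4), (90.5, 14.7)),
    "Disgust": ((74.4, 25.8), (70.0, 23.7)),
    "Fear": ((67.9, 25.8), (75.8, 22.9)),
    "Happiness": ((96.4, 7.3), (94.1, 8.3)),
    "Sadness": ((80.9, 20.4), (81.8, 20.3)),
    "Surprise": ((83.9, 15.0), (90.2, 14.0)),
    "Total": ((82.5, 9.3), (83.7, 8.2)),
}
N_PER_GROUP = 110


def welch_from_summary(m1, sd1, n1, m2, sd2, n2):
    """Return (t, two-sided p) for mean2 - mean1 using Welch's standard error.

    The p value uses a normal approximation to the t distribution,
    which is close for large samples such as these.
    """
    se = math.sqrt(sd1 ** 2 / n1 + sd2 ** 2 / n2)
    t = (m2 - m1) / se
    p = math.erfc(abs(t) / math.sqrt(2))  # two-sided standard normal tail
    return t, p


if __name__ == "__main__":
    for emotion, ((m_s, sd_s), (m_d, sd_d)) in TABLE_1.items():
        t, p = welch_from_summary(m_s, sd_s, N_PER_GROUP, m_d, sd_d, N_PER_GROUP)
        print(f"{emotion:<10} t = {t:+.2f}  p = {p:.3f}")
```

Under these assumptions the sketch gives p < .05 for fear and happiness, p < .01 for surprise, and p > .05 for anger, disgust, sadness and the total. This is the same pattern as the Effect column of Table 1. It is only a consistency check, since the control-group data were adjusted for missing stimuli and the reported tests came from the mixed-design analysis.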
Recognition rates differed significantly between the six emotions (F(5,213) = 47.92; p < .001) and are shown in Table 1. The interaction between emotion and type of stimulus material was also significant (F(5,213) = 5.17; p < .001). This means that the type of stimulus material affects recognition accuracy differently for each of the six emotions. We therefore analyzed the results separately for each basic emotion. Recognition accuracy increased significantly with dynamic rather than static presentation for surprise (Mstat = 83.9%; Mdyn = 90.2%; p < .01) and fear (Mstat = 67.9%; Mdyn = 75.8%; p < .05). In contrast, recognition accuracy for happiness was significantly higher with static than with dynamic stimulus material (Mstat = 96.4%; Mdyn = 94.1%; p < .05). For anger, disgust and sadness, the experimental and control groups did not differ significantly. Female (M = 83.2%) and male (M = 82.9%) participants performed equally.

Discussion

The experiment partially confirmed the hypothesis that dynamic and static presentations produce significant differences for individual emotions, although static and dynamic picture material did not differ significantly overall. The absence of a general effect of display condition across all emotions is consistent with Ambadar et al. (2005), who found that dynamic sequences did not significantly increase recognition accuracy compared with a first-last condition. An emotion-by-emotion comparison (dynamic versus static) showed that subjects recognized fear and surprise more readily when they saw dynamic sequences. This contradicts Harwood et al. (1999), who reported that anger and sadness in particular profited from dynamic presentation.
The discrepancy might be explained by the use of different stimuli. Happiness was recognized less well when presented dynamically, which contradicts previous studies. Fear and surprise, on the other hand, were recognized more accurately with dynamic sequences. Fear and surprise are often confused, presumably because their facial expressions are very similar (wide-open eyes). The additional information provided while the emotion emerges dynamically appears to reduce these confusions. We assume that information from movement of the mouth and eyes provides particularly important clues for correct recognition (Jack, Garrod, Yu, Caldara, & Schyns, 2012). The opposite seems to be the case for happiness: when quantifying confusions, it was striking that in the dynamic condition this emotion was frequently confused with disgust. Subjects may focus so strongly on the raising of the lip, which occurs in both happiness and disgust, that they do not sufficiently consider other differentiating information. Examples are the wrinkling of the nose in disgust or the activation of the M. orbicularis oculi in happiness. In contrast, other work has shown that spontaneous and deliberate smiles can be distinguished on the basis of dynamic but not static displays (Krumhuber & Manstead, 2009). This indicates that dynamic presentation increases the ability to distinguish happiness from other emotional states. Fiorentini and Viviani (2011) found no advantage for dynamic stimulus material, and here the method used to create dynamic stimuli should be considered. Those authors used high-speed recordings of actors' facial expressions rather than morphing sequences between a neutral and a full-blown expression.
This may explain the differences in results, and it raises the question of how dynamic stimulus material should be created: from natural expressions or derived from static material. Both options have advantages and disadvantages, which cannot be discussed here. In conclusion, although the results are not clear-cut, dynamic facial expressions might, according to Trautmann et al. (2009), improve models of how humans process facial expressions.

Table 1. Recognition accuracy for the different emotion categories by stimulus type.

| Emotion | Static | Dynamic | Effect |
|---|---|---|---|
| Anger | 91.4 (14.4) | 90.5 (14.7) | n.s. |
| Disgust | 74.4 (25.8) | 70.0 (23.7) | n.s. |
| Fear | 67.9 (25.8) | 75.8 (22.9) | p < .05 |
| Happiness | 96.4 (7.3) | 94.1 (8.3) | p < .05 |
| Sadness | 80.9 (20.4) | 81.8 (20.3) | n.s. |
| Surprise | 83.9 (15.0) | 90.2 (14.0) | p < .01 |
| Total | 82.5 (9.3) | 83.7 (8.2) | n.s. |

Note: Standard deviations are in parentheses. Recognition accuracy values are in percent.

Experiment 2

The second experiment compared emotion recognition accuracy when information came only from the lower or only from the upper part of the face. We assumed that recognition accuracy would differ significantly between these two conditions.

Methods

Participants

The participants were N = 57 students at Ulm University who gave written consent to take part in the study. They were aged 18 to 25 years (M = 20.4; SD = 1.6). Forty-two participants were female (73.7%) and 15 male (26.3%). None of them had been tested in Experiment 1.

Stimuli

In Experiment 2, the dynamic sequences were synthesized so that the transformation was visible only in the upper or only in the lower half of the face. To do this, the face was divided into two halves. For each image pair, video sequences were generated in which the change from a neutral to an emotional expression took place either in the upper or in the lower half of the face. This resulted in 72 sequences (6 emotions × 6 sequences × 2 areas of the face).
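The stimulus construction described above, a neutral-to-emotional transition confined to one half of the face, can be illustrated with a simplified sketch. It is not the FEMT algorithm, which uses morphing with multiple layers and smoothing. Instead, it assumes a plain linear cross-dissolve of pixel intensities between an aligned neutral image and an aligned full-blown image. The change is restricted to one half by a hypothetical horizontal split row, whereas the study divided the face along anatomical regions (Figure 2). Images are nested lists of grayscale values to keep the example dependency-free. The names `blend_half`, `half_face_sequence` and `split_row` are illustrative only. The last line checks the 6 × 6 × 2 = 72 condition count.

```python
import itertools
from typing import List

Image = List[List[float]]  # grayscale image stored as rows of pixel values

# Hypothetical design constants mirroring the counts given in the text.
EMOTIONS = ["anger", "disgust", "fear", "happiness", "sadness", "surprise"]
SEQUENCES_PER_EMOTION = 6
FACE_HALVES = ["upper", "lower"]


def blend_half(neutral: Image, emotional: Image, intensity: float,
               half: str, split_row: int) -> Image:
    """Cross-dissolve toward `emotional` only in one half of the image.

    intensity 0.0 returns the neutral face and 1.0 the full-blown expression
    in the chosen half; rows in the other half are copied from `neutral`.
    """
    if not 0.0 <= intensity <= 1.0:
        raise ValueError("intensity must lie between 0 and 1")
    if half not in FACE_HALVES:
        raise ValueError("half must be 'upper' or 'lower'")
    frame: Image = []
    for row_index, (row_n, row_e) in enumerate(zip(neutral, emotional)):
        in_region = row_index < split_row if half == "upper" else row_index >= split_row
        if in_region:
            frame.append([(1.0 - intensity) * n + intensity * e
                          for n, e in zip(row_n, row_e)])
        else:
            frame.append(list(row_n))
    return frame


def half_face_sequence(neutral: Image, emotional: Image, n_frames: int,
                       half: str, split_row: int) -> List[Image]:
    """Return n_frames frames rising linearly from 0% to 100% intensity."""
    if n_frames < 2:
        raise ValueError("need at least two frames")
    return [blend_half(neutral, emotional, i / (n_frames - 1), half, split_row)
            for i in range(n_frames)]


# 6 emotions x 6 sequences x 2 face halves = 72 conditions.
CONDITIONS = list(itertools.product(EMOTIONS, range(SEQUENCES_PER_EMOTION), FACE_HALVES))
```

For a randomized presentation order, `random.sample(CONDITIONS, len(CONDITIONS))` returns a shuffled copy of the 72 conditions. A pure pixel cross-dissolve produces ghosting on real photographs whose features are not aligned, which is one reason dedicated morphing tools also warp facial geometry.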
The division of the face into an upper and a lower part was based on facial anatomy and is shown in Figure 2.

Figure 2. Division of the face into an upper and a lower part. The upper area includes the following anatomical regions of the face: Regio frontalis, Regio orbitalis, Regio nasalis. The lower area includes: Regio oralis, Regio buccalis, Regio infraorbitalis, Regio zygomatica, Regio mentalis & Regio temporalis.

Procedure

As in Experiment 1, subjects first completed six trial runs before the actual experiment began. They then saw the 72 sequences in randomized order, 12 per emotion. Each emotion's six sequences were shown in both versions, so that 50% of the presentations showed a change in the lower half of the face and 50% a change in the upper half. Each trial followed the same course as in Experiment 1, and subjects evaluated and selected the emotion shown in the same way. One difference is that Experiment 2 included a seventh choice box labeled "not recognized." Subjects could select it when they were unable to assign the facial expression to one of the six emotions. This was intended to prevent random assignment to an emotion due to a lack of choices.

Results

For the statistical analysis we used a generalized linear model that takes the dependency structure of our data into account. Experiment 2 had a 6 × 2 × 2 mixed design. Emotion (happiness, disgust, anger, fear, surprise or sadness) and type of stimulus presentation (upper or lower part of the face) were within-subject factors, and participants' gender (male or female) was a between-subject factor. Recognition rates differed significantly between the six emotions (Wald χ²(5, N = 57) = 110.50; p < .001) and are shown in Table 2.
As hypothesized, recognition accuracy differed between the two presentation types, Wald χ²(1, N = 57) = 29.2; p < .001. The interaction between emotion and type of stimulus presentation was also significant (Wald χ²(5, N = 57) = 139.16; p < .001). Disgust, happiness and sadness were recognized better when the emotional expression was shown in the lower part of the face (p < .001). Surprise (p < .05) and fear (p < .001), in contrast, were recognized better when the dynamic change was presented in the upper part of the face. Recognition accuracy for anger appears to be independent of presentation mode. Subjects could not recognize fear above chance when changes were presented only in the lower half of the face. Likewise, they could not recognize happiness above chance when changes were presented only in the upper half. Surprise, on the other hand, produced high hit rates in both conditions (72.5% in the lower face and 83.0% in the upper face). Female and male participants performed equally; no gender effect was observed.

Discussion

Our hypothesis that dynamic changes in the upper versus the lower part of the face affect emotion recognition differently was partially confirmed. Fear was reliably detected only when the change was presented in the upper half of the face. For happiness the opposite was true. Surprise was recognized almost equally well in both conditions.

Table 2. Recognition accuracy for the different emotion categories by part of the face.

| Emotion | Upper | Lower | Effect |
|---|---|---|---|
| Anger | 59.1 (28.8) | 67.3 (35.3) | n.s. |
| Disgust | 41.5 (32.9) | 62.0 (28.5) | p < .001 |
| Fear | 50.9 (31.6) | 15.2 (28.2) | p < .001 |
| Happiness | 18.1 (22.8) | 97.1 (11.4) | p < .001 |
| Sadness | 43.9 (34.6) | 63.7 (27.7) | p < .001 |
| Surprise | 83.0 (24.5) | 72.5 (29.0) | p < .05 |

Note: Standard deviations are in parentheses. Recognition accuracy values are in percent.
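The statement in the Results that fear (lower half) and happiness (upper half) were not recognized above chance depends on how chance is defined. With six emotion labels plus the "not recognized" box, a subject guessing uniformly among all seven boxes would score about 14.3%, and one guessing among the six emotions about 16.7%. The paper does not state which test was used. The sketch below therefore makes several assumptions. It compares each group mean with each chance level in a one-sample test. It treats the values in parentheses in Table 2 as standard deviations across the N = 57 participants. It uses a normal approximation for the one-sided p value. The function name `above_chance_p` is illustrative only.

```python
import math

N = 57
CHANCE_LEVELS = {"7 boxes": 100 / 7, "6 emotions": 100 / 6}  # percent

# Selected Table 2 cells: (emotion, face half) -> (mean %, SD %).
# Assumption: parenthesized values are standard deviations.
CELLS = {
    ("fear", "lower"): (15.2, 28.2),
    ("happiness", "upper"): (18.1, 22.8),
    ("surprise", "lower"): (72.5, 29.0),
    ("surprise", "upper"): (83.0, 24.5),
}


def above_chance_p(mean, sd, n, chance):
    """One-sided p value for the hypothesis mean > chance (normal approximation)."""
    z = (mean - chance) / (sd / math.sqrt(n))
    return 0.5 * math.erfc(z / math.sqrt(2))  # upper-tail probability of z


if __name__ == "__main__":
    for (emotion, half), (mean, sd) in CELLS.items():
        for label, chance in CHANCE_LEVELS.items():
            p = above_chance_p(mean, sd, N, chance)
            print(f"{emotion:<9} {half:<5} vs {label:<10}: p = {p:.3f}")
```

Under these assumptions, neither fear in the lower half nor happiness in the upper half exceeds either chance level (one-sided p > .05 in all four cases). Both surprise conditions lie far above chance. With df = 56 the normal approximation is slightly liberal, so an exact t test would make these two cells even less likely to reach significance.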
For happiness and surprise, these results are largely consistent with the studies by Bassili (1978, 1979) and Calder et al. (2000). In those studies, surprise was always recognized at a rate above 70%, whether the emotional expression was presented in the upper or the lower half of the face. For anger and sadness, the results are contradictory. The present study and the studies by Bassili (1978, 1979) found no difference between presenting anger in the upper or the lower part of the face. Calder et al. (2000), however, found significantly better recognition accuracy for anger presented in the upper half. In the study by Calder et al., sadness was recognized when presented in the lower half of the face; in the other studies, it was recognized when presented in the upper half. The differences might be explained by different sample sizes (considerably larger in our study) or by how the face was divided. The present research used a division based on anatomical features, whereas the above-mentioned studies used a geometric division. Furthermore, the older studies presented only one half of the face and hid the other half, which does not correspond to real-life conditions; the present study showed the entire face. Overall, certain areas of the face were shown to be of different relevance for recognizing basic emotions, though further research is required.

General Discussion

The results provide new information on the ecological validity of stimulus material in the study of emotion recognition. Experiment 1 showed no difference between dynamic and static stimulus material across all emotions, but it did show interesting effects for individual emotions. Experiment 2 showed that presenting the expression in the upper or lower half of the face affects recognition differently for different emotions.
Hence, dynamic stimuli are not necessary for assessing emotion recognition in general. Nevertheless, dynamic information appears to improve recognition rates for some emotions. Our results for happiness contradict this finding, since static stimuli produced higher recognition accuracy for this emotion. However, recognition rates for happiness tend to be very high, so this result could be due to ceiling effects. Dynamic stimuli represent the natural occurrence of facial emotions more closely. In addition, different brain areas are active when static versus dynamic stimuli are perceived, which also argues for using dynamic stimuli.

Furthermore, emotion recognition appears to depend on perceiving different areas of the face. The information in the relevant half seems to be sufficient for assigning an emotion correctly. This opens opportunities for therapeutic use in people with deficits in emotion recognition. Adolphs (2002) showed that patients with amygdala lesions recognized fear more accurately when they were prompted to attend to the eyes of the presented stimulus. Further research, such as eye-tracking studies, could improve our understanding of how emotional facial expressions are analyzed.

Limitations

One limitation of the study concerns its methodology. To ensure a natural course, different durations were chosen for the different emotions in the dynamic presentation condition. These durations, however, are based on subjects' assessments (Hoffmann et al., 2010), in which participants rated how realistic dynamic sequences appeared.
It was implicitly assumed that these ratings reflect the course of actual emotion patterns under natural conditions. From an epistemological perspective, however, such a link between the perception and the production of facial expressions need not exist.

REFERENCES

Adolphs, R. (2002). Recognizing emotion from facial expressions: Psychological and neurological mechanisms. Behavioral and Cognitive Neuroscience Reviews, 1, 21-61. doi:10.1177/1534582302001001003

Ambadar, Z., Schooler, J. W., & Cohn, J. F. (2005). Deciphering the enigmatic face: The importance of facial dynamics in interpreting subtle facial expressions. Psychological Science, 16, 403-410. doi:10.1111/j.0956-7976.2005.01548.x

Bassili, J. N. (1978). Facial motion in perception of faces and of emotional expression. Journal of Experimental Psychology: Human Perception and Performance, 4, 373-379. doi:10.1037/0096-1523.4.3.373

Bassili, J. N. (1979). Emotion recognition: The role of facial movement and the relative importance of upper and lower areas of the face. Journal of Personality and Social Psychology, 37, 2049-2068. doi:10.1037/0022-3514.37.11.2049

Biehl, M., Matsumoto, D., Ekman, P., Hearn, V., Heider, K., Kudoh, T., & Ton, V. (1997). Matsumoto and Ekman's Japanese and Caucasian facial expressions of emotion (JACFEE): Reliability data and cross-national differences. Journal of Nonverbal Behavior, 21, 3-21. doi:10.1023/A:1024902500935

Buck, R. (1984). The communication of emotion. New York: Guilford Press.

Calder, A. J., Young, A. W., Keane, J., & Dean, M. (2000). Configural information in facial expression perception. Journal of Experimental Psychology: Human Perception and Performance, 26, 527-551. doi:10.1037/0096-1523.26.2.527

Ekman, P. (1993). Facial expression and emotion. American Psychologist, 48, 384-392. doi:10.1037/0003-066X.48.4.384

Ekman, P., & Friesen, W. (1982). Felt, false and miserable smiles. Journal of Nonverbal Behavior, 6, 238-252. doi:10.1007/BF00987191

Fiorentini, C., & Viviani, P. (2011). Is there a dynamic advantage for facial expressions? Journal of Vision, 11, 17. doi:10.1167/11.3.17

Harwood, N. K., Hall, L. J., & Shinkfield, A. J. (1999). Recognition of facial emotional expressions from moving and static displays by individuals with mental retardation. American Journal of Mental Retardation, 104, 270-278. doi:10.1352/0895-8017(1999)104<0270:ROFEEF>2.0.CO;2

Hess, U., & Kleck, R. E. (2005). Differentiating emotion elicited and deliberate emotional facial expressions. In P. Ekman, & E. L. Rosenberg (Eds.), What the face reveals: Basic and applied studies of spontaneous expression using the facial action coding system (FACS) (pp. 271-286). New York: Oxford University Press.

Hoffmann, H., Kessler, H., Eppel, T., Rukavina, S., & Traue, H. C. (2010). Expression intensity, gender and facial emotion recognition: Women recognize only subtle facial emotions better than men. Acta Psychologica, 135, 278-283. doi:10.1016/j.actpsy.2010.07.012

Hoffmann, H., Traue, H. C., Bachmayr, F., & Kessler, H. (2010). Perceived realism of dynamic facial expressions of emotion: Optimal durations for the presentation of emotional onsets and offsets. Cognition and Emotion, 24, 1369-1376. doi:10.1080/02699930903417855

Jack, R. E., Garrod, O. G. B., Yu, H., Caldara, R., & Schyns, P. G. (2012). Facial expressions of emotion are not culturally universal. Proceedings of the National Academy of Sciences, 109, 7241-7244. doi:10.1073/pnas.1200155109

Kessler, H., Bayerl, P., Deighton, R. M., & Traue, H. C. (2002). Facially expressed emotion labeling (FEEL): A computer test for emotion recognition. Verhaltenstherapie und Verhaltensmedizin, 23, 297-306.

Kessler, H., Hoffmann, H., Bayerl, P., Neumann, H., Basic, A., Deighton, R. M., & Traue, H. C. (2005). Measuring emotion recognition with computer morphing: New methods for research and clinical practice. Nervenheilkunde, 24, 611-614.

Kessler, H., Roth, J., von Wietersheim, J., Deighton, R. M., & Traue, H. C. (2007). Emotion recognition patterns in patients with panic disorder. Depression and Anxiety, 24, 223-226. doi:10.1002/da.20223

Kessler, H., Doyen-Waldecker, C., Hofer, C., Hoffmann, H., Traue, H. C., & Abler, B. (2011). Neural correlates of the perception of dynamic versus static facial expressions of emotion. GMS Psycho-Social Medicine, 8.

Kilts, C. D., Egan, G., Gideon, D. A., Ely, T. D., & Hoffman, J. M. (2003). Dissociable neural pathways are involved in the recognition of emotion in static and dynamic facial expressions. NeuroImage, 18, 156-168. doi:10.1006/nimg.2002.1323

Krumhuber, E. G., Kappas, A., & Manstead, A. S. R. (2013). Effects of dynamic aspects of facial expressions: A review. Emotion Review, 5, 41-46. doi:10.1177/1754073912451349

Krumhuber, E., & Manstead, A. S. R. (2009). Can Duchenne smiles be feigned? New evidence on felt and false smiles. Emotion, 9, 807-820. doi:10.1037/a0017844

LaBar, K. S., Crupain, M. J., Voyvodic, J. T., & McCarthy, G. (2003). Dynamic perception of facial affect and identity in the human brain. Cerebral Cortex, 13, 1023-1033. doi:10.1093/cercor/13.10.1023

Matsumoto, D., & Ekman, P. (1988). Japanese and Caucasian Facial Expressions of Emotion (JACFEE) and Neutral Faces (JACNeuF) [Slides]. Dr. Paul Ekman, Department of Psychiatry, University of California, San Francisco.

Sato, W., Kochiyama, T., Yoshikawa, S., Naito, E., & Matsumara, M. (2004). Enhanced neural activity in response to dynamic facial expressions of emotion: An fMRI study. Cognitive Brain Research, 20, 81-91. doi:10.1016/j.cogbrainres.2004.01.008

Trautmann, S. A., Fehr, T., & Herrmann, M. (2009). Emotions in motion: Dynamic compared to static facial expressions of disgust and happiness reveal more widespread emotion-specific activations. Brain Research, 1284, 100-115. doi:10.1016/j.brainres.2009.05.075

Wehrle, T., Kaiser, S., Schmidt, S., & Scherer, K. R. (2000). Studying the dynamics of emotional expression using synthesized facial muscle movements. Journal of Personality and Social Psychology, 78, 105-119. doi:10.1037/0022-3514.78.1.105

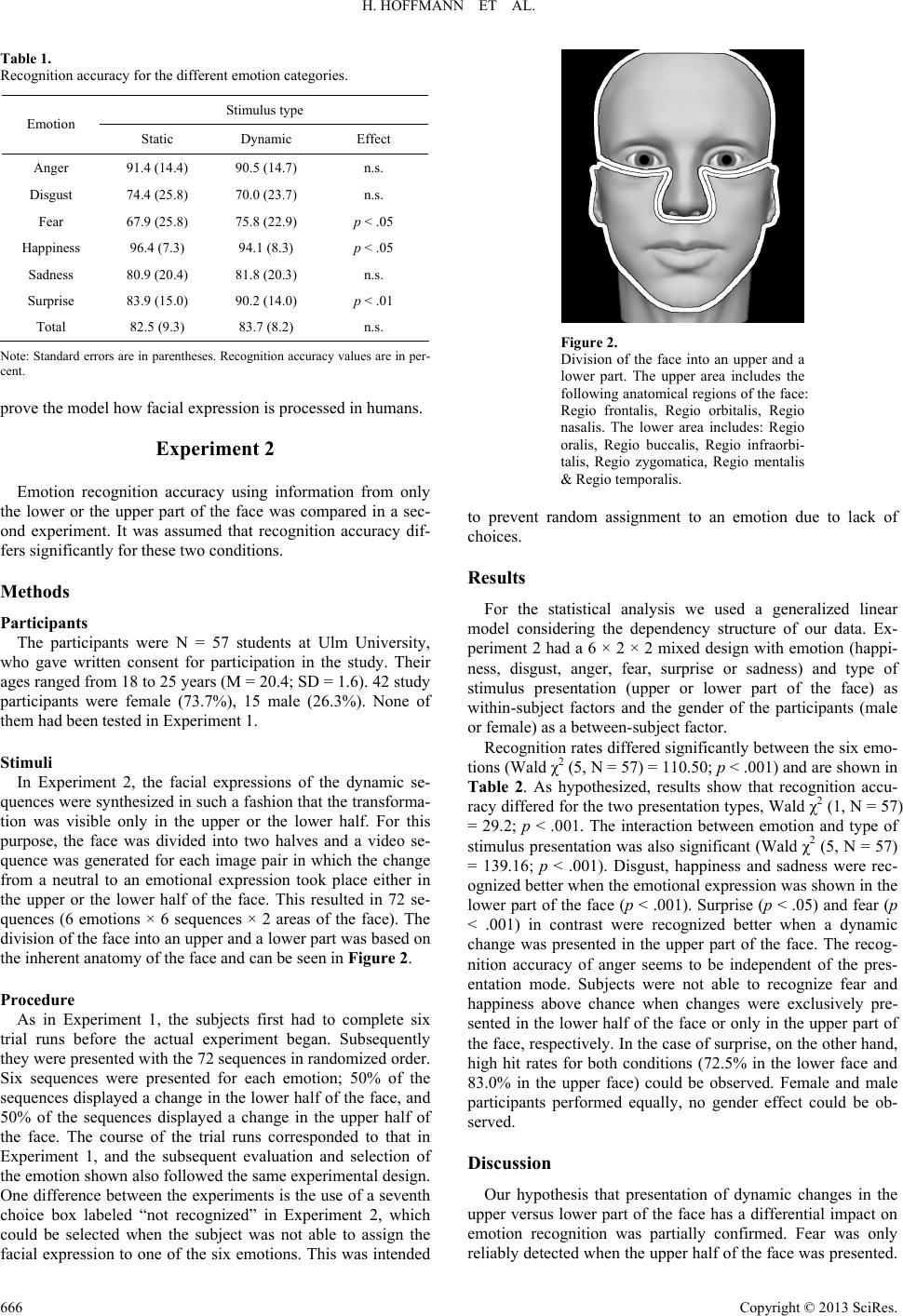

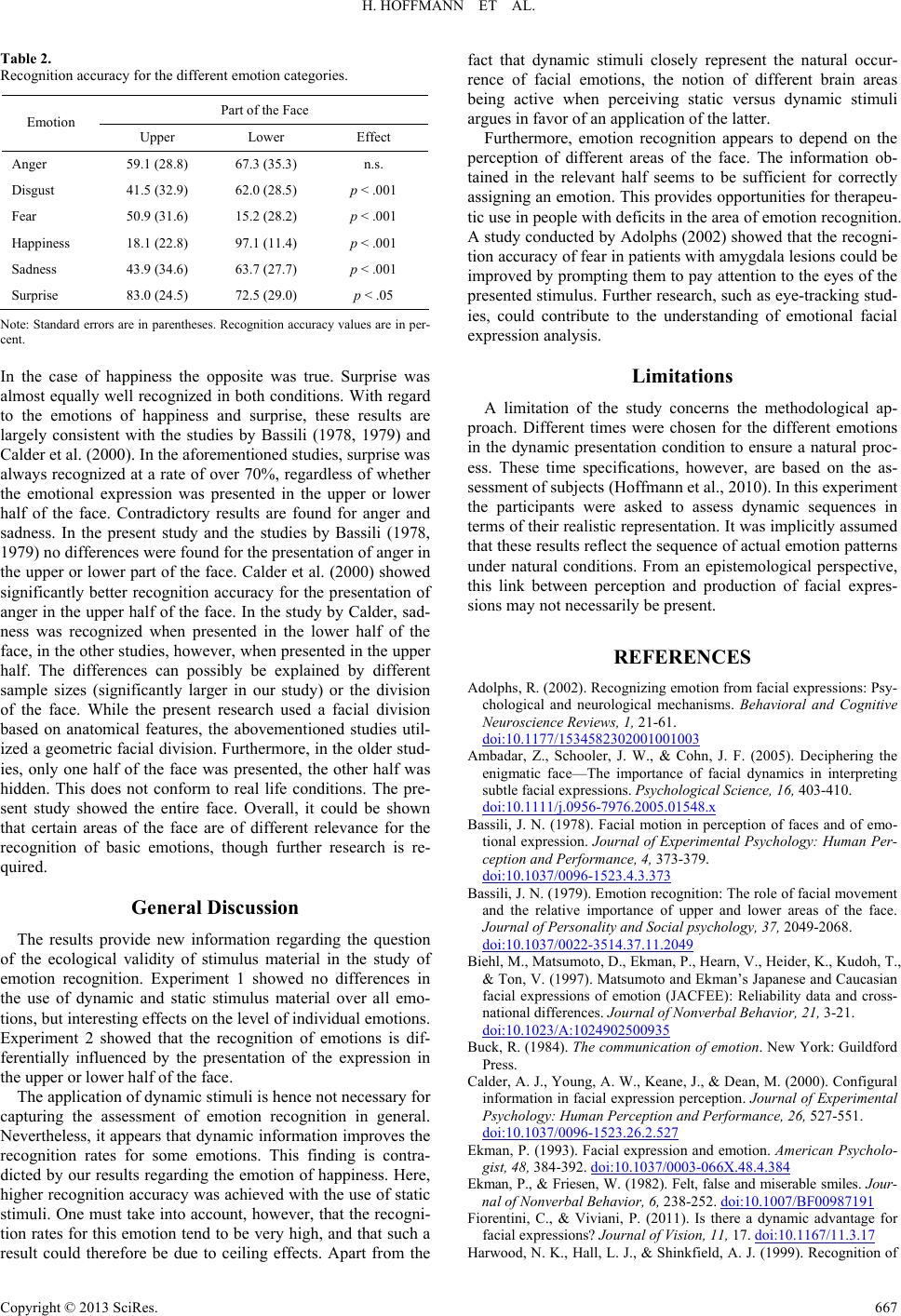
