Y. AHN ET AL.

Copyright © 2013 SciRes. CS

303

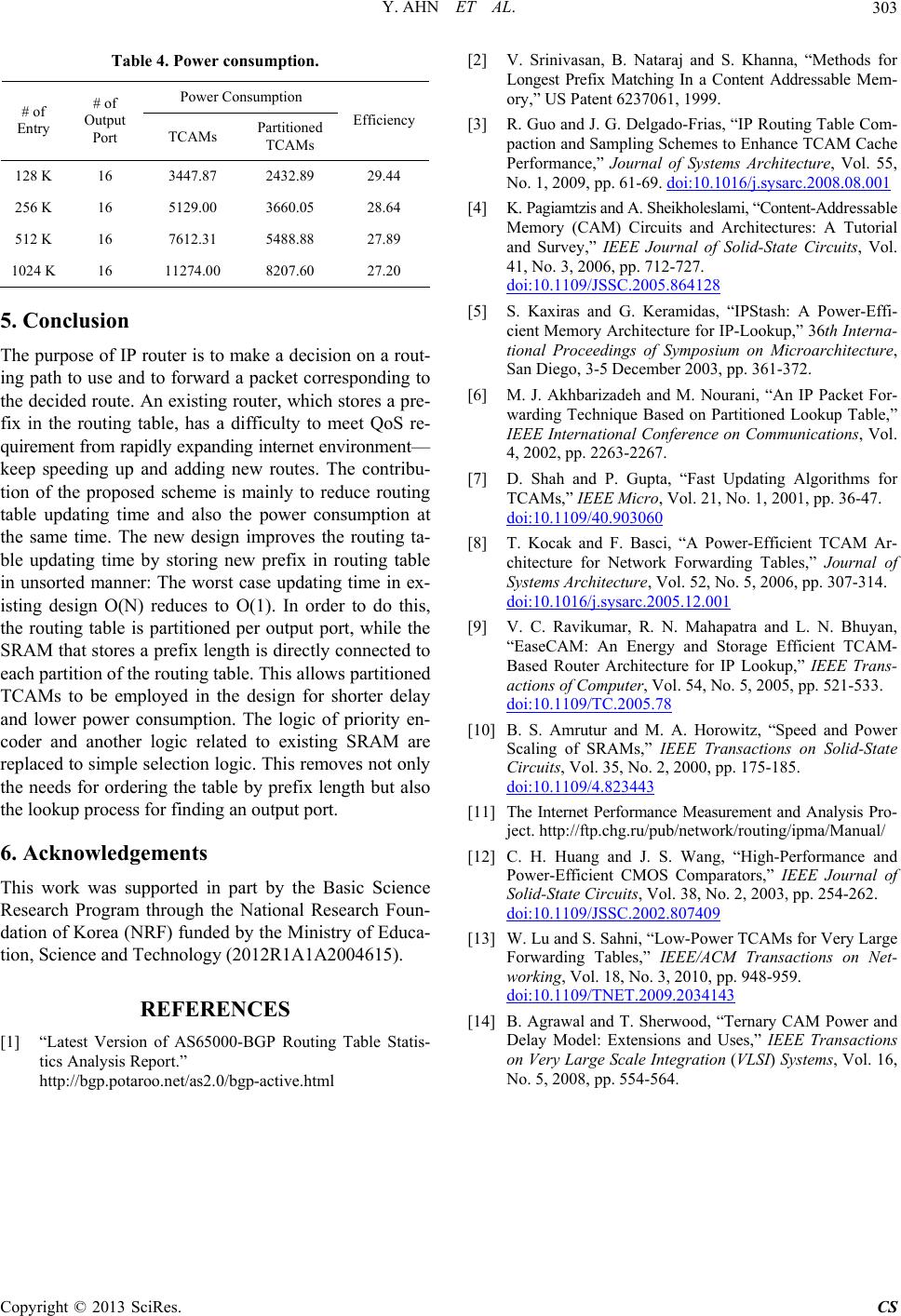

Table 4. Power consumption.

Power Consumption

# of

Entry

# of

Output

Port TCAMs Partitioned

TCAMs

Efficiency

128 K 16 3447.87 2432.89 29.44

256 K 16 5129.00 3660.05 28.64

512 K 16 7612.31 5488.88 27.89

1024 K 16 11274.00 8207.60 27.20

5. Conclusion

The purpose of IP router is to make a decision on a rout-

ing path to use and to forward a packet corresponding to

the decided route. An existing router, which stores a pre-

fix in the routing table, has a difficulty to meet QoS re-

quirement from rapidly expanding internet environmen t—

keep speeding up and adding new routes. The contribu-

tion of the proposed scheme is mainly to reduce routing

table updating time and also the power consumption at

the same time. The new design improves the routing ta-

ble updating time by storing new prefix in routing table

in unsorted manner: The worst case updating time in ex-

isting design O(N) reduces to O(1). In order to do this,

the routing table is partitioned per output port, while the

SRAM that stores a prefix length is directly connected to

each partition of the routing table. This allows partitioned

TCAMs to be employed in the design for shorter delay

and lower power consumption. The logic of priority en-

coder and another logic related to existing SRAM are

replaced to simple selection logic. This removes not only

the needs for ordering the table by prefix length but also

the lookup process for finding an output port.

6. Acknowledgements

This work was supported in part by the Basic Science

Research Program through the National Research Foun-

dation of Korea (NRF) funded by the Ministry of Educa-

tion, Science an d Technology (2012R1A1A2004615).

REFERENCES

[1] “Latest Version of AS65000-BGP Routing Table Statis-

tics Analysis Report.”

http://bgp.potaroo.net/as2.0/bgp-active.html

[2] V. Srinivasan, B. Nataraj and S. Khanna, “Methods for

Longest Prefix Matching In a Content Addressable Mem-

ory,” US Patent 6237061, 1999.

[3] R. Guo and J. G. Delgado-Frias, “IP Routing Table Com-

paction and Sampling Schemes to Enhance TCAM Cache

Performance,” Journal of Systems Architecture, Vol. 55,

No. 1, 2009, pp. 61-69. doi:10.1016/j.sysarc.2008.08.001

[4] K. Pagiamtzis and A. Sheikholeslami, “Content-Addressable

Memory (CAM) Circuits and Architectures: A Tutorial

and Survey,” IEEE Journal of Solid-State Circuits, Vol.

41, No. 3, 2006, pp. 712-727.

doi:10.1109/JSSC.2005.864128

[5] S. Kaxiras and G. Keramidas, “IPStash: A Power-Effi-

cient Memory Architecture for IP-Lookup,” 36th Interna-

tional Proceedings of Symposium on Microarchitecture,

San Diego, 3-5 December 2003, pp. 361-372.

[6] M. J. Akhbarizadeh and M. Nourani, “An IP Packet For-

warding Technique Based on Partitioned Lookup Table,”

IEEE International Conference on Communications, Vol.

4, 2002, pp. 2263-2267.

[7] D. Shah and P. Gupta, “Fast Updating Algorithms for

TCAMs,” IEEE Micro, Vol. 21, No. 1, 2001, pp. 36-47.

doi:10.1109/40.903060

[8] T. Kocak and F. Basci, “A Power-Efficient TCAM Ar-

chitecture for Network Forwarding Tables,” Journal of

Systems Architecture, Vol. 52, No. 5, 2006, pp. 307-314.

doi:10.1016/j.sysarc.2005.12.001

[9] V. C. Ravikumar, R. N. Mahapatra and L. N. Bhuyan,

“EaseCAM: An Energy and Storage Efficient TCAM-

Based Router Architecture for IP Lookup,” IEEE Trans-

actions of Computer, Vol. 54, No. 5, 2005, pp. 521-533.

doi:10.1109/TC.2005.78

[10] B. S. Amrutur and M. A. Horowitz, “Speed and Power

Scaling of SRAMs,” IEEE Transactions on Solid-State

Circuits, Vol. 35, No. 2, 2000, pp. 175-185.

doi:10.1109/4.823443

[11] The Internet Performance Measurement and Analysis Pro-

ject. http://ftp.chg.ru/pub/network/routing/ipma/Manual/

[12] C. H. Huang and J. S. Wang, “High-Performance and

Power-Efficient CMOS Comparators,” IEEE Journal of

Solid-State Circuits, Vol. 38, No. 2, 2003, pp. 254-262.

doi:10.1109/JSSC.2002.807409

[13] W. Lu and S. Sahni, “Low-Power TCAMs for Very Large

Forwarding Tables,” IEEE/ACM Transactions on Net-

working, Vol. 18, No. 3, 2010, pp. 948-959.

doi:10.1109/TNET.2009.2034143

[14] B. Agrawal and T. Sherwood, “Ternary CAM Power and

Delay Model: Extensions and Uses,” IEEE Transactions

on Very Large Scale Integration (VLSI) Systems, Vol. 16,

No. 5, 2008, pp. 554-564.