Applied Mathematics

Vol.06 No.02(2015), Article ID:53771,6 pages

10.4236/am.2015.62023

Global Convergence of a Modified Tri-Dimensional Filter Method

Bei Gao, Ke Su, Zixing Rong

Department of Mathematics and Information Science, Hebei University, Baoding, China

Email: shuiguogaobei@163.com, pigeonsk@163.com, rongzixingcn@163.com

Copyright © 2015 by authors and Scientific Research Publishing Inc.

This work is licensed under the Creative Commons Attribution International License (CC BY).

http://creativecommons.org/licenses/by/4.0/

Received 9 January 2015; accepted 27 January 2015; published 3 February 2015

ABSTRACT

In this paper, a tri-dimensional filter method for nonlinear programming was proposed. We add a parameter into the traditional filter for relaxing the criterion of iterates. The global convergent properties of the proposed algorithm are proved under some appropriate conditions.

Keywords:

Tri-Dimensional, NCP Function, Global Convergence, QP-Free

1. Introduction

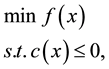

This paper is concerned with finding a solution of a Nonlinear Programming (NLP) problem, as following

(1)

(1)

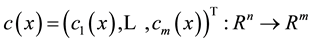

where ,

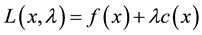

,  are second-order continuously differentiable. The Lagrangian function associated with problem (1) is the function

are second-order continuously differentiable. The Lagrangian function associated with problem (1) is the function

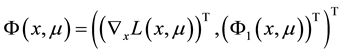

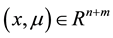

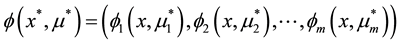

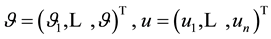

where  is the multiplier vector. For simplicity, we denote the column vector

is the multiplier vector. For simplicity, we denote the column vector  as

as . A point

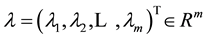

. A point  is called a Karush-Kuhn-Tucker (KKT) point if it satisfies the following conditions:

is called a Karush-Kuhn-Tucker (KKT) point if it satisfies the following conditions:

(2)

(2)

we also say that  is a KKT point of problem (1) if there exists a

is a KKT point of problem (1) if there exists a  such that

such that  satisfied (2).

satisfied (2).

Traditionally, this question has been answered by using penalty function. But it is difficult to find a suitable penalty parameter. In order to avoid the pitfalls of penalty function, Nonlinear programming problems (NLP) filter methods were first proposed by Fletcher in a plenary talk at the SIAM Optimization Conference in Victoria in May 1996; the methods are described in [1] . And soon, Global convergence proof of filter method was given in [2] . Because of good global convergence and numerical results, filter methods have quickly become popular in other areas such as nonsmooth optimization, nonlinear equations and so on [3] [4] .

Motivated by the ideas of filter methods above, a tri-dimensional filter method for nonliner programming was proposed as acceptance criterion to judge whether to accept a trial step in our algorithm. We have following advantages:

1) By enhancing the flexibility of filter, motivated by [5] , we increase a dimension by introducing a parameter to relax the criterion of iterates.

2) The Maratos effect that makes good progress toward the solution may be rejected and has been avoided by using tri-dimensional filter method as acceptance criterion.

3) Tri-dimensional filter method can make full use of the information we get along the algorithm process.

This paper is divided into 4 sections. The next section introduces the concept of a Modified tri-dimensional filter and the NCP function. In Section 3, an algorithm of line search filter is given. The global convergence properties are proved in the last section.

2. Preliminaries

2.1. NCP Function

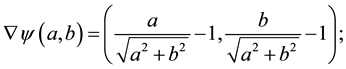

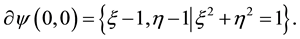

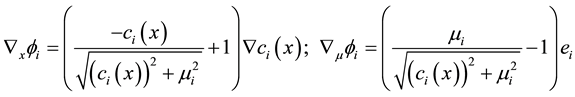

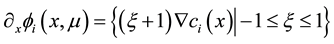

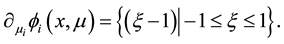

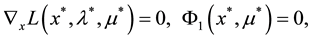

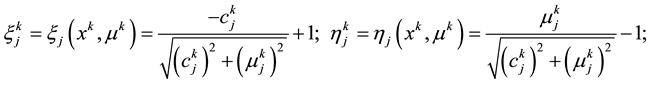

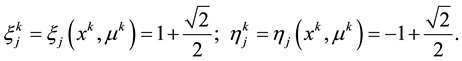

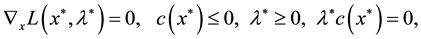

The method that based on the Fischer-Burmeister NCP function are efficient, both theoretical results and computational experience. The Fischer-Burmeister function has a very simple structure

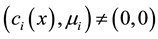

We know that:  is continuously differentiable everywhere except at the origin, but it is strongly semismooth at the origin. i.e. if

is continuously differentiable everywhere except at the origin, but it is strongly semismooth at the origin. i.e. if  or

or , then

, then

if

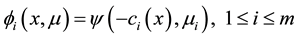

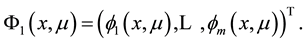

Let

We denote

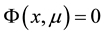

Clearly, the KKT optimality conditions (2) can be equivalently reformulated as the nonsmooth equations

If

where

If

and

We may reformulated the KKT (at point

where

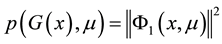

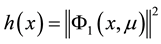

Replace the violation constrained function

violation constrained function

If

otherwise we denote

Let

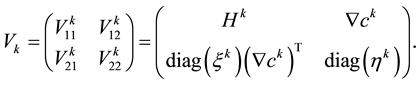

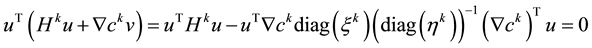

where Hk is a positive matrix which may be modified by BFGS update.

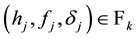

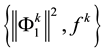

Definition 1.1 [1] A pair

Definition 1.2 [1] A filter is a list of pairs

Definition 1.3 NCP pair and NCP functions [6] We call a pair

Denote

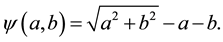

2.2. Tri-Dimensional Filter

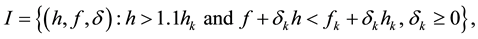

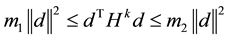

A two dimensional filter is often used in traditional filter method, some information about convergent like the positions of iterates are neglected. Therefore, we aim to enhance its flexibility of filter. Motivated by [5] , we adopt

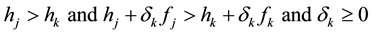

We use pairs

If

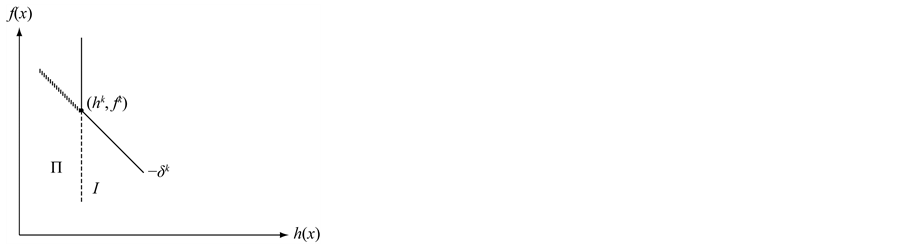

Figure 1. Distinct regions defined by the current iterate.

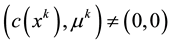

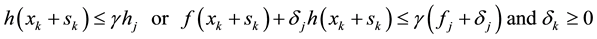

We say that the algorithm does not make good improvement since we do not want to accept points with larger constraint violation. Thus, we try to impose stricter acceptance criterion. Meanwhile, we do not permit

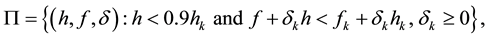

If sk moved into region P which is defined as

We say that the algorithm makes good improvement since it reduces not only the constraint violation, but also the penalty function value. So, we may loosen the acceptance criterion to wish more improvement. Here, we achieve this goal by reducing

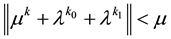

In our algorithm, the trial step sk is accepted by filter if

For all

then we say

3. Description of the Algorithm

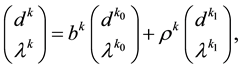

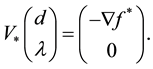

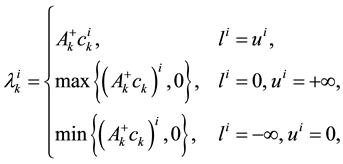

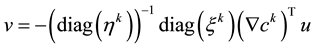

In this section we hope that the Lagrange multiplier

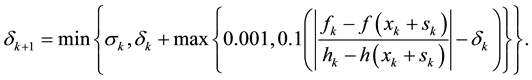

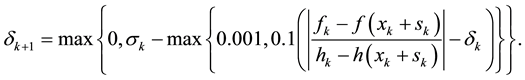

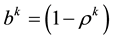

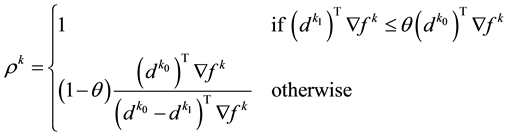

Now, we consider how to update the penalty parameter. Let

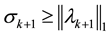

satisfied, and the second order sufficient conditions are satisfied. Then when

minimizer of penalty function. So we force the condition at each iteration:

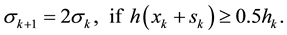

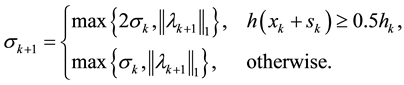

And also, since the penalty term aims to reduce the constraint violation we double the penalty parameter if the constraint violation could not reduce by half, that is

To summarize, we update the penalty parameter in the following formula:

The improved algorithm is presented as following.

Algorithm

Step 0. Initialization: Give a starting point

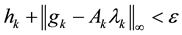

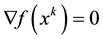

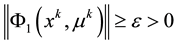

Step 1. Terimination test. If

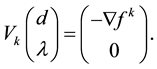

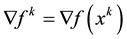

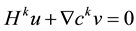

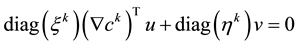

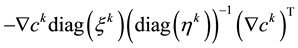

Step 2. Computation of the search direction. compute

where

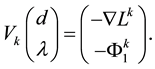

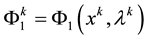

If

where

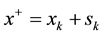

Step3. Liner search with filter

If

and let

Step 4. Acceptance criterion of the trial step

Let

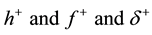

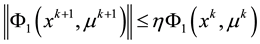

Step 5. Paramenters update

Update

4. The Convergence Properties

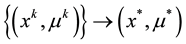

To present a proof of global convergence of algorithm, in this section, we always assume that the following conditions hold.

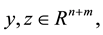

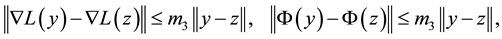

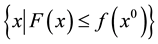

A1 The level set

A2

where

A3

for all

Lemma 1. If

Proof. If

and

From the definition of

Putting (14) into (12), we have

The fact that

(14).

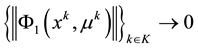

The lemma 2 hold (see [8] Lemma 2)

Lemma 2. If

Lemma 3. Consider an infinite sequence iterations on which

Proof. Suppose the theorem is not true, then exists an

The following lemma 4 - 5 hold (see [9] )

Lemma 4.

Lemma 5. If

and

Theorem 1. If

It is obviously to prove the conclusion holds according to the above lemmas.

Acknowledgements

We thank the Editor and the referee for their comments. This work is supported by the National Natural Science Foundation of China (No. 11101115), the Natural Science Foundation of Hebei Province (No. 2014201033) and the Science and Technology project of Hebei province (No. 13214715).

References

- Fletcher, R. and Leyyfer, S. (2002) Nonlinear Programming without a Penalty Function. Mathematical Programming, 91, 239-269. http://dx.doi.org/10.1007/s101070100244

- Fletcher, R., Leyffer, S. and Toint, P.L. (1998) On the Global Convergence of an SLP-Filter Algorithm. Numerical Analysis Report NA/183, University of Dundee, Dundee.

- Fletcher, R., Leyffer, S., et al. (2006) A Brief History of Filter Methods. Mathematics and Computer Science Division, Preprint ANL/MCSP1372-0906, Argonne National Laboratory.

- Chin, C.M., Rashid, A.H.A. and Nor, K.M. (2007) Global and Local Convergence of a Filter Line Search Method for Nonlinear Programming. Optimization Method Software, 22, 365-390. http://dx.doi.org/10.1080/10556780600565489

- Wang, X. (2010) A New Filter Trust Region Method for Nonlinear Programming. Journal of the Operations Research of China, 10, 133-140.

- Zhou, Y. and Pu, D. (2007) A New QP-Free Feasible Method for Inequality Constrained Optimization. OR Transactions, 11, 31-43.

- Curtis, F.E. and Nocedal, J.(2008) Flexible Penalty Function for Nonlinear Constrained Optimization. IMA Journal of Numerical Analysis, 28, 749-769. http://dx.doi.org/10.1093/imanum/drn003

- Su, K. (2008) A New Globally and Superlinearly Convergent QP-Free Method for Inequality Constrained Optimization. Journal of Tongji University, 36, 265-272.

- Pu, D.G., Li, K. and Xue, W. (2005) Convergence of QP-Free Infeasible Methods for Nonlinear Inequality Constrained Optimization Problems. Journal of Tongji University, 33, 525-529.