Applied Mathematics

Vol.4 No.12A(2013), Article ID:40970,12 pages DOI:10.4236/am.2013.412A003

On Solving Centrosymmetric Linear Systems

Mathematics Department, Faculty of Science, Mansoura University, Mansoura, Egypt

Email: *m_elmikkawy@yahoo.com, faizatlan11@yahoo.com

Copyright © 2013 Moawwad El-Mikkawy, Faiz Atlan. This is an open access article distributed under the Creative Commons Attribution License, which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited. In accordance of the Creative Commons Attribution License all Copyrights © 2013 are reserved for SCIRP and the owner of the intellectual property Moawwad El-Mikkawy, Faiz Atlan. All Copyright © 2013 are guarded by law and by SCIRP as a guardian.

Received November 2, 2013; revised December 2, 2013; accepted December 9, 2013

Keywords: Centrosymmetric Matrix; Exchange Matrix; Rotate Matrix; Linear System; DETGTRI Algorithm; Computer Algebra Systems (CAS); MAPLE

ABSTRACT

The current paper is mainly devoted for solving centrosymmetric linear systems of equations. Formulae for the determinants of tridiagonal centrosymmetric matrices are obtained explicitly. Two efficient computational algorithms are established for solving general centrosymmetric linear systems. Based on these algorithms, a MAPLE procedure is written. Some illustrative examples are given.

1. Introduction

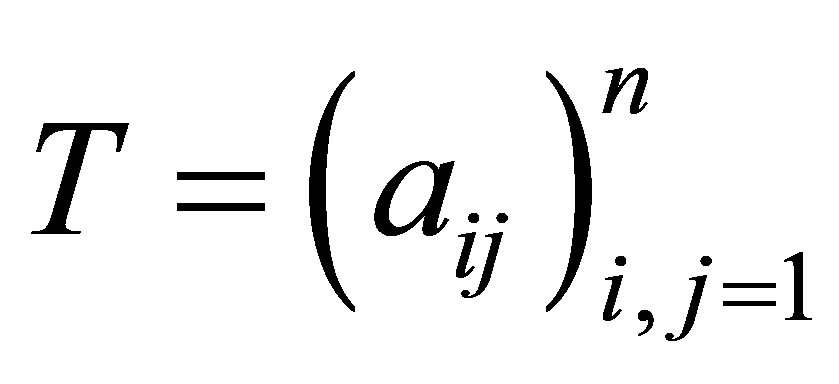

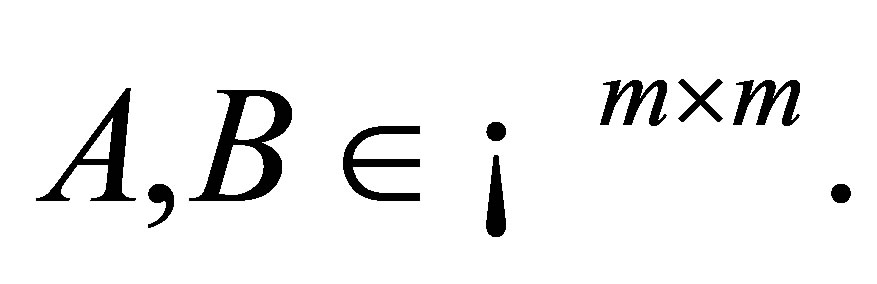

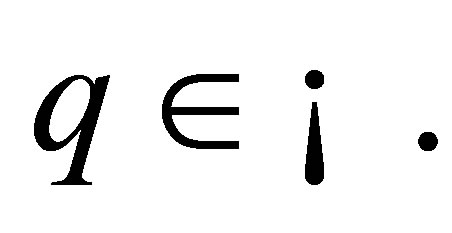

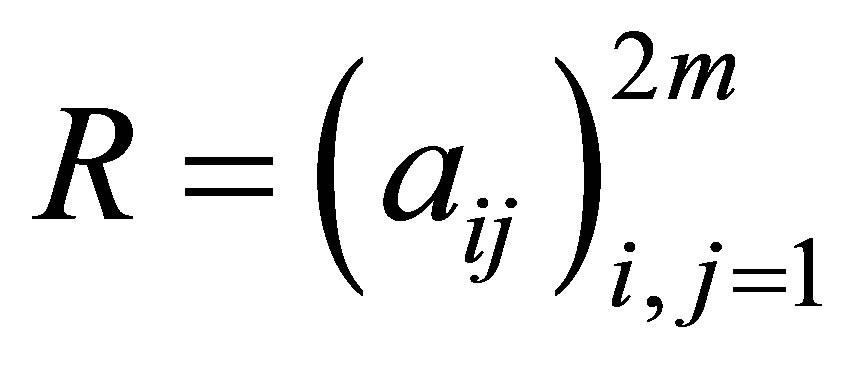

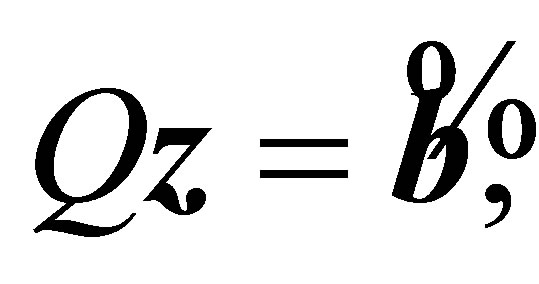

Throughout this paper,  and

and  denote the determinant and the transpose of the matrix

denote the determinant and the transpose of the matrix  respectively. Also

respectively. Also  denotes the greatest integer less than or equal to

denotes the greatest integer less than or equal to  Centrosymmetric matrices have practical applications in numerical analysis, information theory, statistics, physics, harmonic differential quadrature, differential equations, engineering, sinc methods, magic squares, linear system theory and pattern recognition. The interested readers may refer to [1-12].

Centrosymmetric matrices have practical applications in numerical analysis, information theory, statistics, physics, harmonic differential quadrature, differential equations, engineering, sinc methods, magic squares, linear system theory and pattern recognition. The interested readers may refer to [1-12].

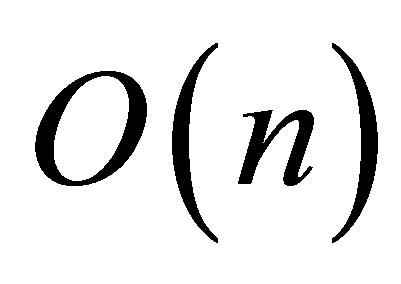

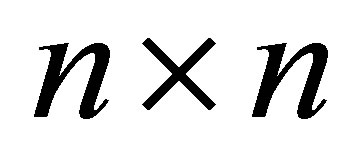

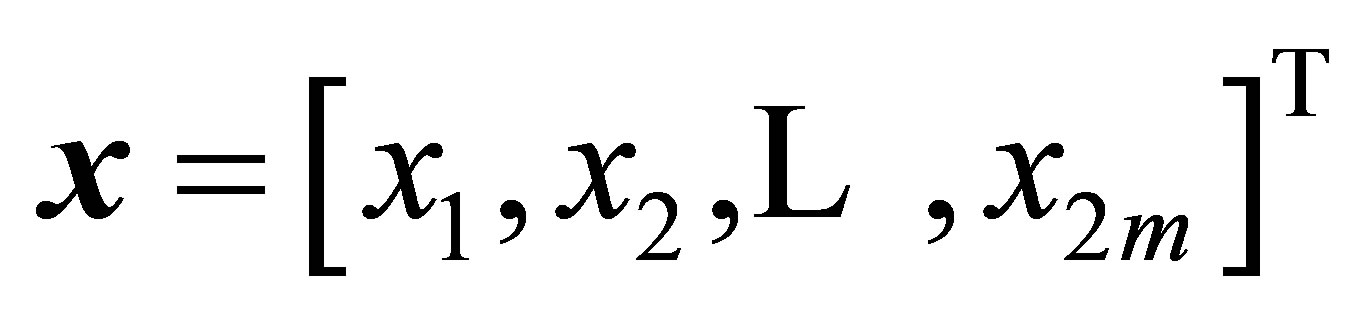

Solving and analyzing linear systems of equations is a fundamental problem in science and engineering applications. The cost of solving any linear system using Gauss or Gauss-Gordan algorithms is  The motivation of the current paper is to develop efficient algorithms for solving any centrosymmetric linear system having

The motivation of the current paper is to develop efficient algorithms for solving any centrosymmetric linear system having  equations and

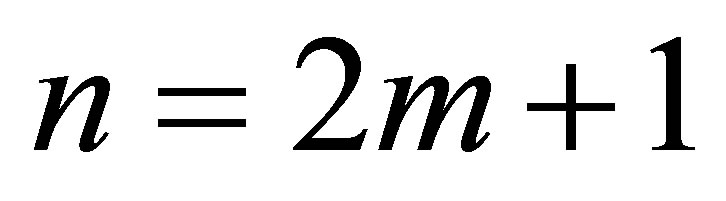

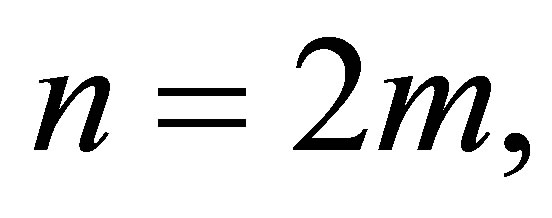

equations and  unknowns provided that the coefficient matrix of the system is nonsingular. The cost of each algorithm depends on the solvers of two associated linear systems having smaller sizes than n. More precisely, if n = 2m, then each of the two associated linear systems consists of m equations. If n = 2m + 1, then we have one system having m equations and the other has (m + 1) equations. Consequently, if the two associated linear systems have special structures, then the cost of the centrosymmetric algorithm could be considerably reduced, in particular for large values of n.

unknowns provided that the coefficient matrix of the system is nonsingular. The cost of each algorithm depends on the solvers of two associated linear systems having smaller sizes than n. More precisely, if n = 2m, then each of the two associated linear systems consists of m equations. If n = 2m + 1, then we have one system having m equations and the other has (m + 1) equations. Consequently, if the two associated linear systems have special structures, then the cost of the centrosymmetric algorithm could be considerably reduced, in particular for large values of n.

The paper is organized as follows. In Section 2, some properties of the exchange and the rotate matrices are presented. Formulae for centrosymmetric tridiagonal determinants are obtained in Section 3. In Section 4, two computational algorithms for solving centrosymmetric linear systems are given. A MAPLE procedure is given in Section 5. Some illustrative examples are presented in Section 6.

Definition 1.1. The  matrix with 1’s on the northeast-southeast diagonal and 0’s elsewhere

matrix with 1’s on the northeast-southeast diagonal and 0’s elsewhere

(1)

(1)

is called the exchange matrix of order  The subscript on

The subscript on  is neglected whenever the size is obvious from the context.

is neglected whenever the size is obvious from the context.

Definition 1.2. Let  be an

be an  matrix.

matrix.

The rotate of  denoted

denoted  is defined by

is defined by

(2)

(2)

Definition 1.3 [9]. Let  be an

be an  matrix. Then

matrix. Then

•  is said to be centrosymmetric if

is said to be centrosymmetric if

•  is said to be persymmetric if

is said to be persymmetric if

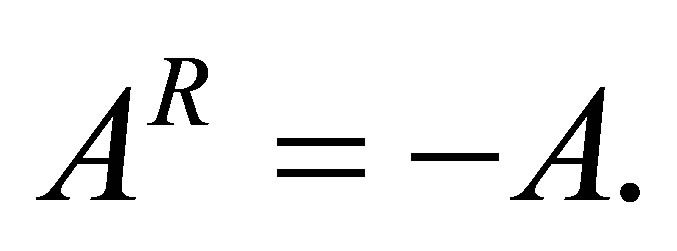

•  is said to be centrogonal if

is said to be centrogonal if  provided

provided

•  is said to be skew-centrosymmetric if

is said to be skew-centrosymmetric if

•  is said to be bisymmetric if

is said to be bisymmetric if

Note that the bisymmetric matrix is both symmetric and centrosymmetric. It is also both symmetric and presymmetric.

2. Some Properties of The Exchange and The Rotate Matrices

Let us begin this section by giving some helpful results concerning the exchange and rotate matrices. For more details see [1,3,8,9,12-21].

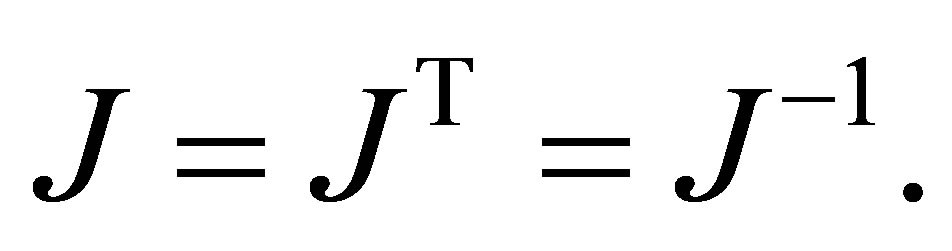

The exchange matrix  enjoys the following properties:

enjoys the following properties:

•

where  is the identity matrix of order

is the identity matrix of order

•

• The matrix product  is a version of the matrix

is a version of the matrix  that has been reflected in line at 0 degree to the horizontal measured counter-clockwise.

that has been reflected in line at 0 degree to the horizontal measured counter-clockwise.

• The matrix product  is a version of the matrix

is a version of the matrix  that has been reflected in line at 90 degrees to the horizontal measured counter-clockwise.

that has been reflected in line at 90 degrees to the horizontal measured counter-clockwise.

• The matrix product  is a version of the matrix

is a version of the matrix  that has been rotated counter-clockwise or clockwise by 180 degrees.

that has been rotated counter-clockwise or clockwise by 180 degrees.

Note that the exchange matrix J is equal to the identity matrix I but with the columns in reverse order. More precisely,  where

where

is the Kronecker symbol which is equal to 1 if

is the Kronecker symbol which is equal to 1 if  and zero if

and zero if .

.

It is also worth mentioned that the matrix  is sometimes called the counter-identity matrix or contra-identity matrix or per-identity matrix or the reflection matrix or the reversal matrix.

is sometimes called the counter-identity matrix or contra-identity matrix or per-identity matrix or the reflection matrix or the reversal matrix.

Exchange matrices are simple to construct in software platforms. For example, to construct  in MAPLE, a single line of code can be used as follows:

in MAPLE, a single line of code can be used as follows:

n := 5: J := array(1..n,1..n,sparse): for i to n do J[i, n + 1 - i] := 1 od: J n := op(J);

n := op(J);

or n := 5: J := matrix(n, n, 0): for i to n do J[i, n + 1 - i] := 1 od: J n := op(J);

n := op(J);

The rotate of

of order

of order  satisfies:

satisfies:

•

•

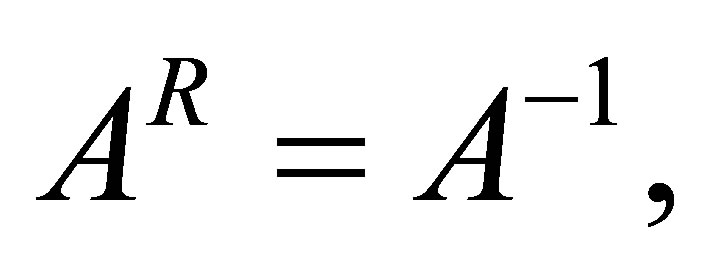

•  provided

provided  exists.

exists.

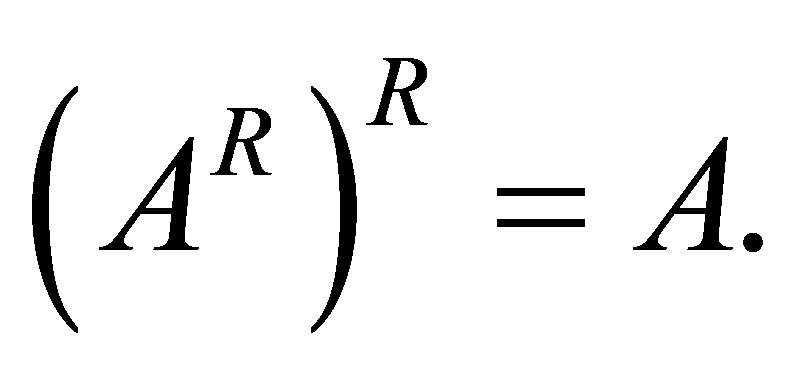

•

•

•

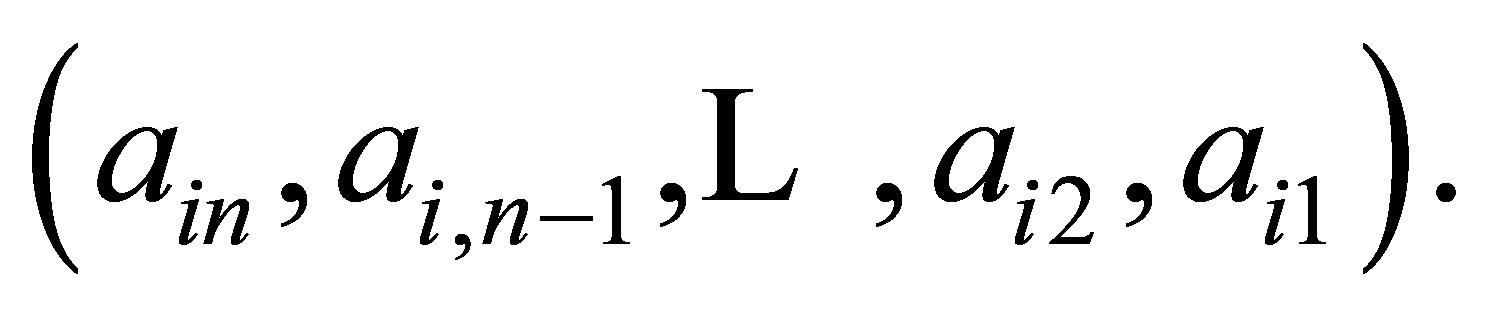

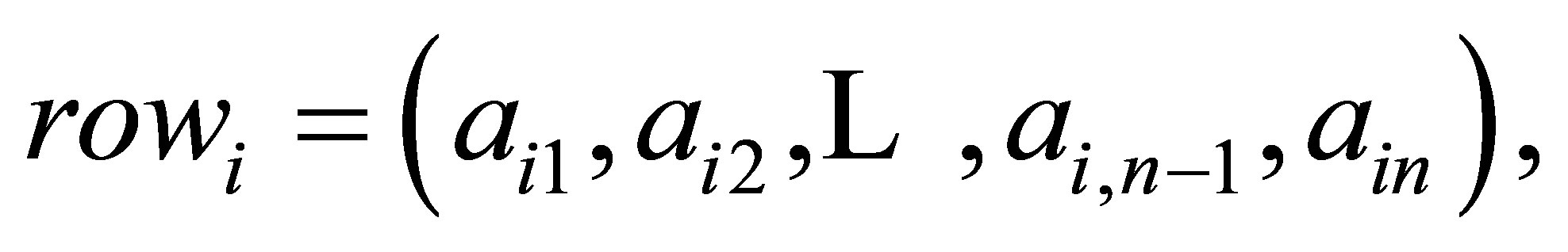

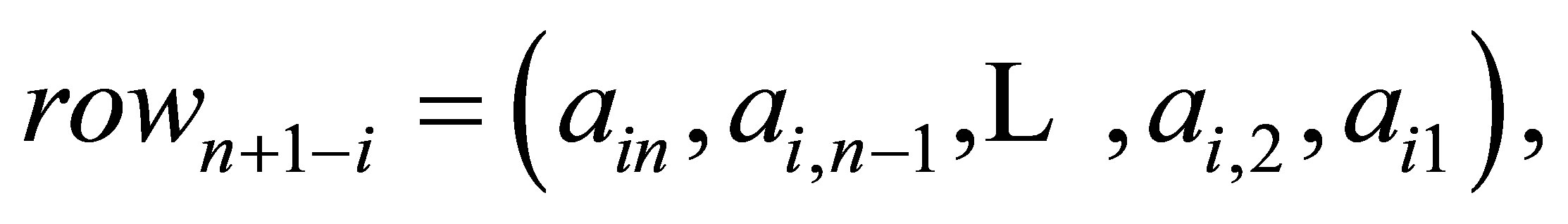

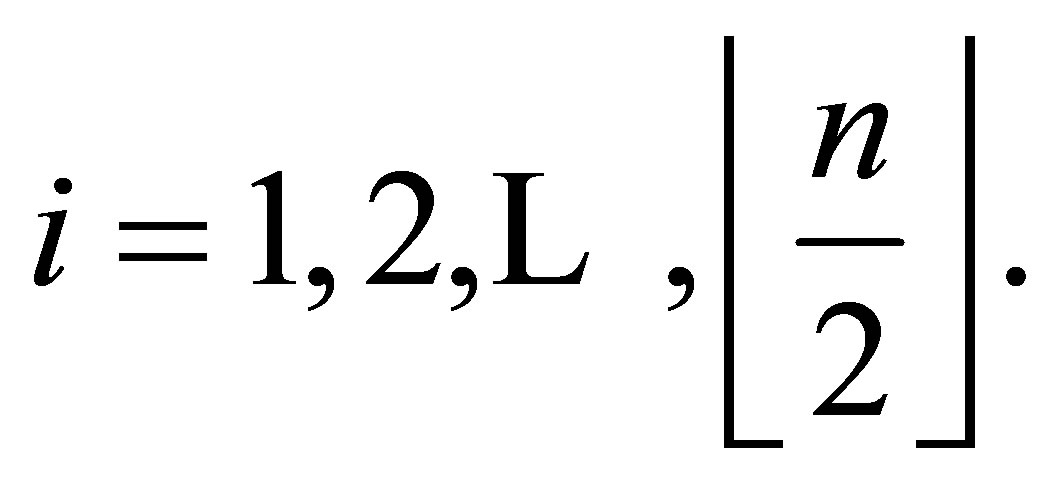

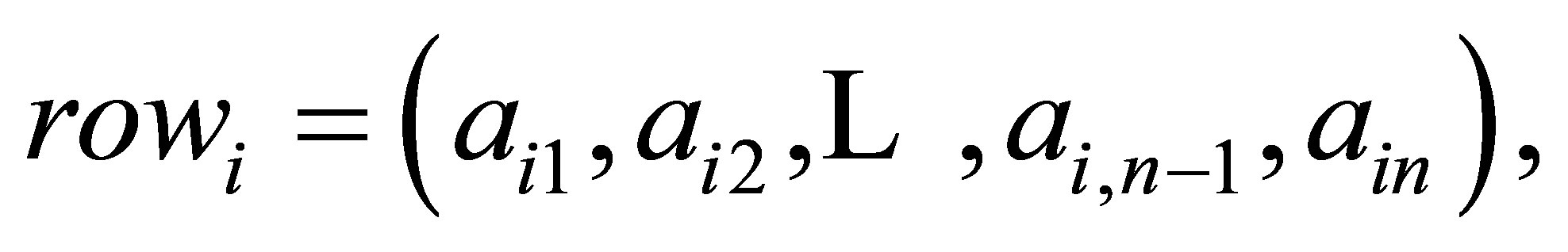

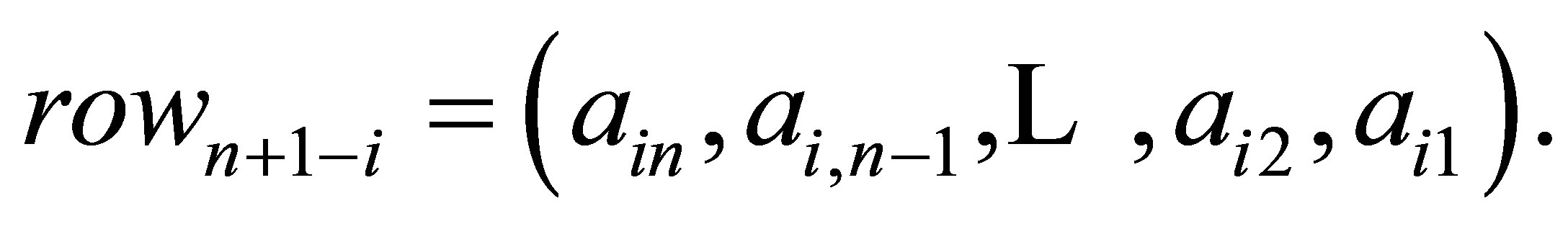

• If  of A is

of A is  then

then

of  is

is  In other words, the centrosymmetric matrix

In other words, the centrosymmetric matrix  is the same when read backwards as when read forwards.

is the same when read backwards as when read forwards.

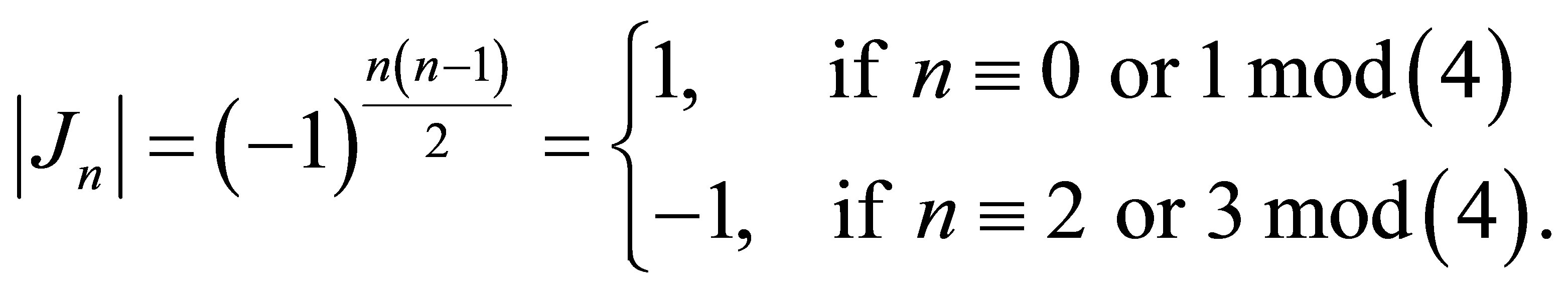

3. Centrosymmetric Determinants

Centrosymmetric determinants take the form:

(3)

(3)

in which

and

for each

In particular, centrosymmetric tridiagonal determinants are of special importance. For convenience of the reader, we present some definitions, notations and properties associated with tridiagonal matrices.

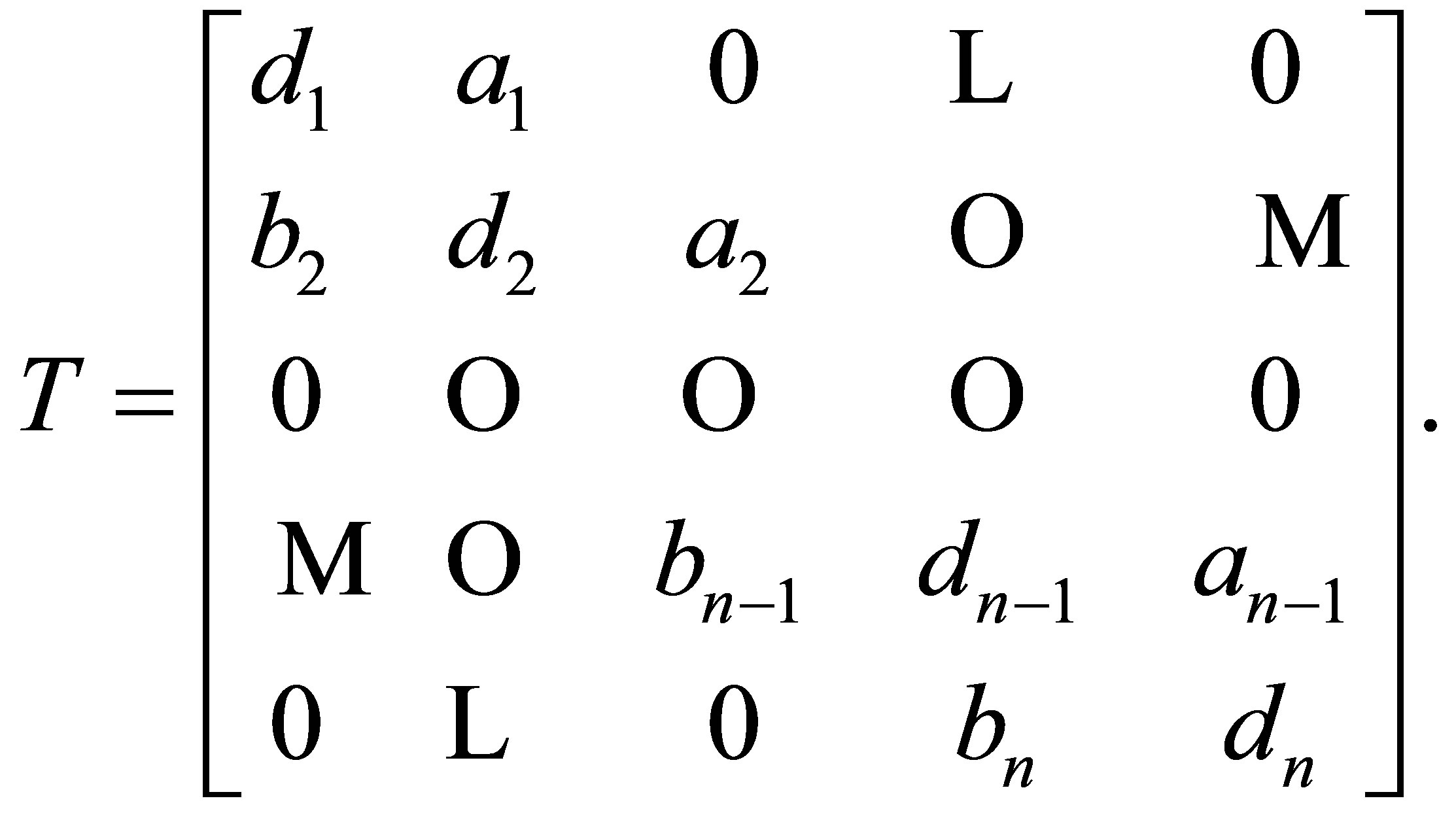

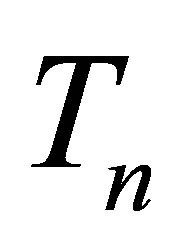

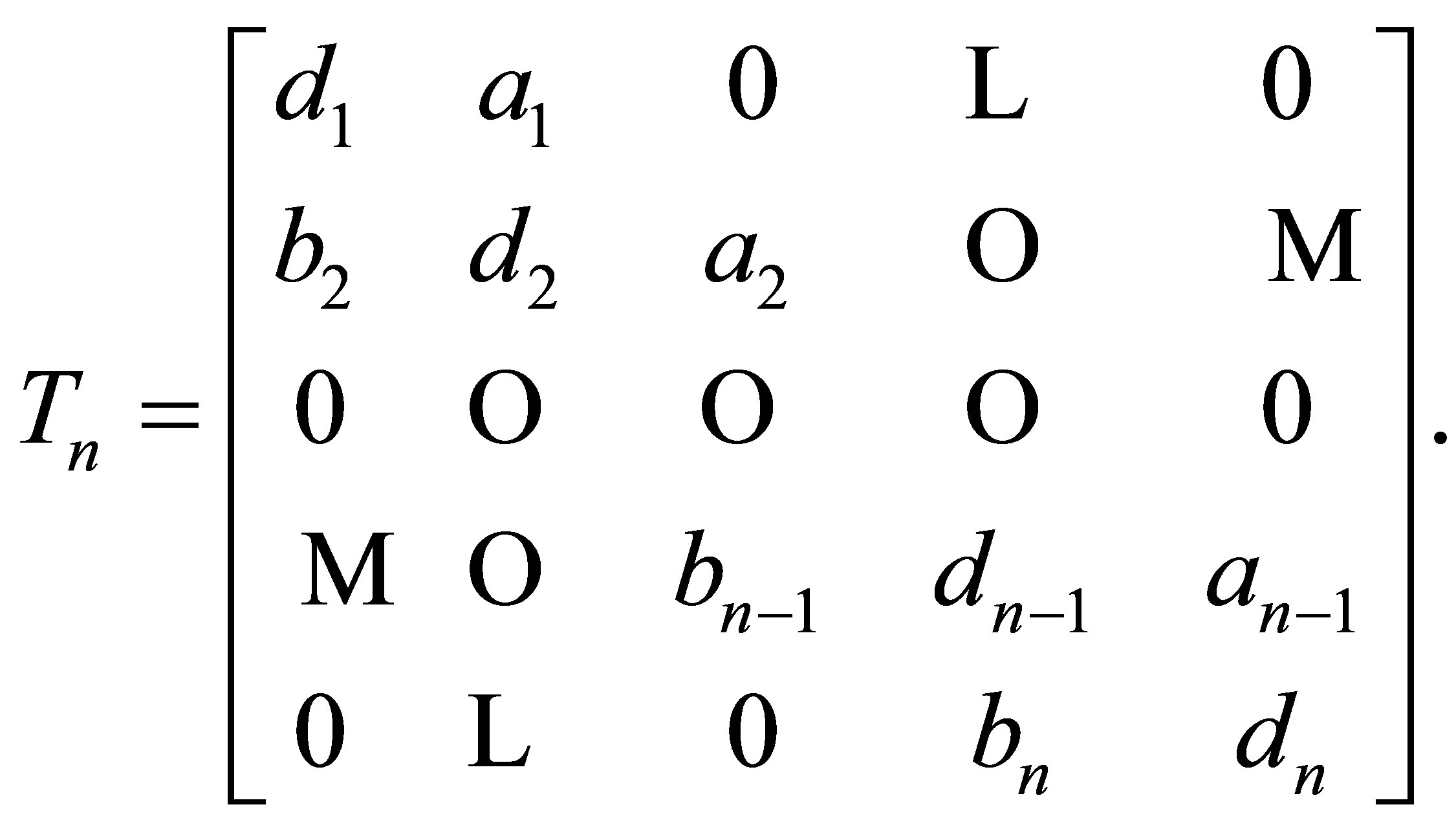

A tridiagonal matrix  takes the form:

takes the form:

(4)

(4)

These types of matrices frequently appear in many areas of science and engineering. For example in parallel computing, telecommunication system analysis and in solving differential equations using finite differences, see [22,23]. A general  tridiagonal matrix of the form (4) can be stored in

tridiagonal matrix of the form (4) can be stored in  memory locations, rather than

memory locations, rather than  memory locations for a full matrix, by using three vectors

memory locations for a full matrix, by using three vectors

and

and  with

with  This is always a good habit in computation in order to save memory space. To study tridiagonal matrices it is very convenient to introduce a vector

This is always a good habit in computation in order to save memory space. To study tridiagonal matrices it is very convenient to introduce a vector  in the following way [24]:

in the following way [24]:

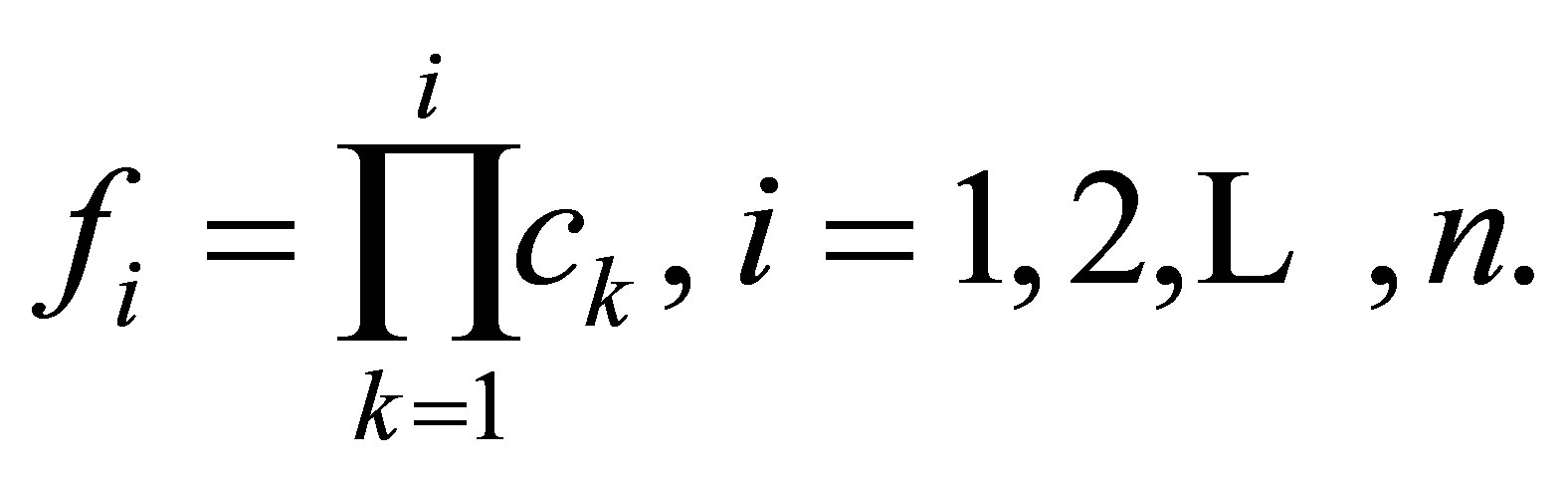

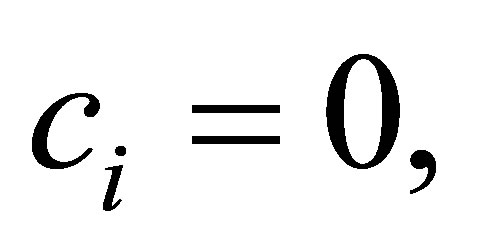

(5)

(5)

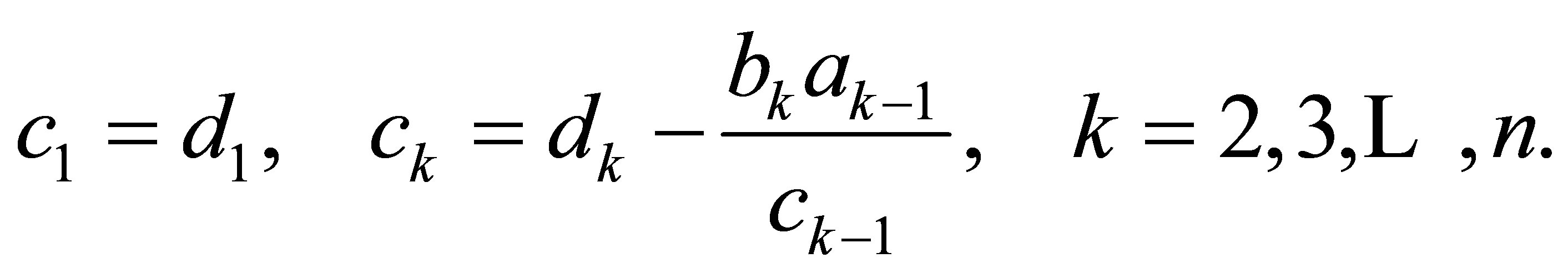

where

(6)

(6)

It is helpful to restate some important results concerning tridiagonal matrices of the form (4). For more details the interested reader may refer to [24-28].

Let  and

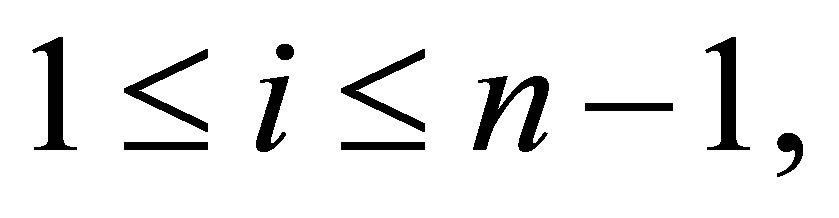

and  are positive integers such that

are positive integers such that  and

and

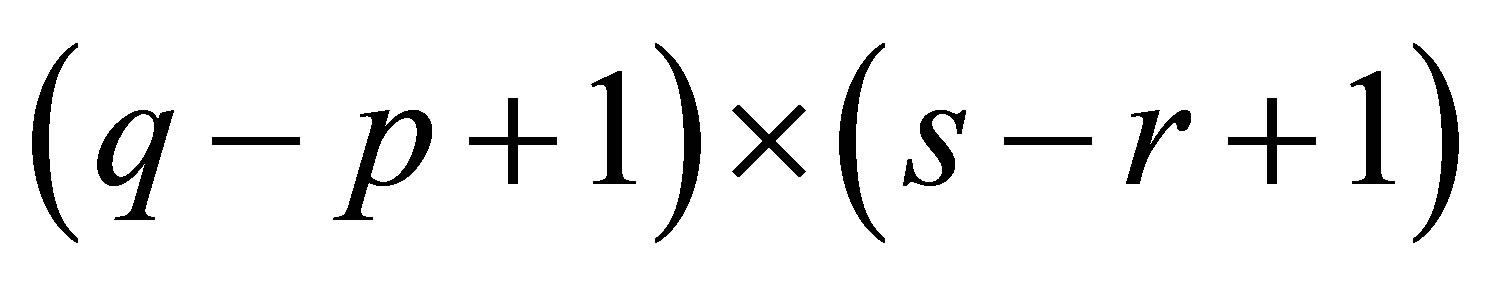

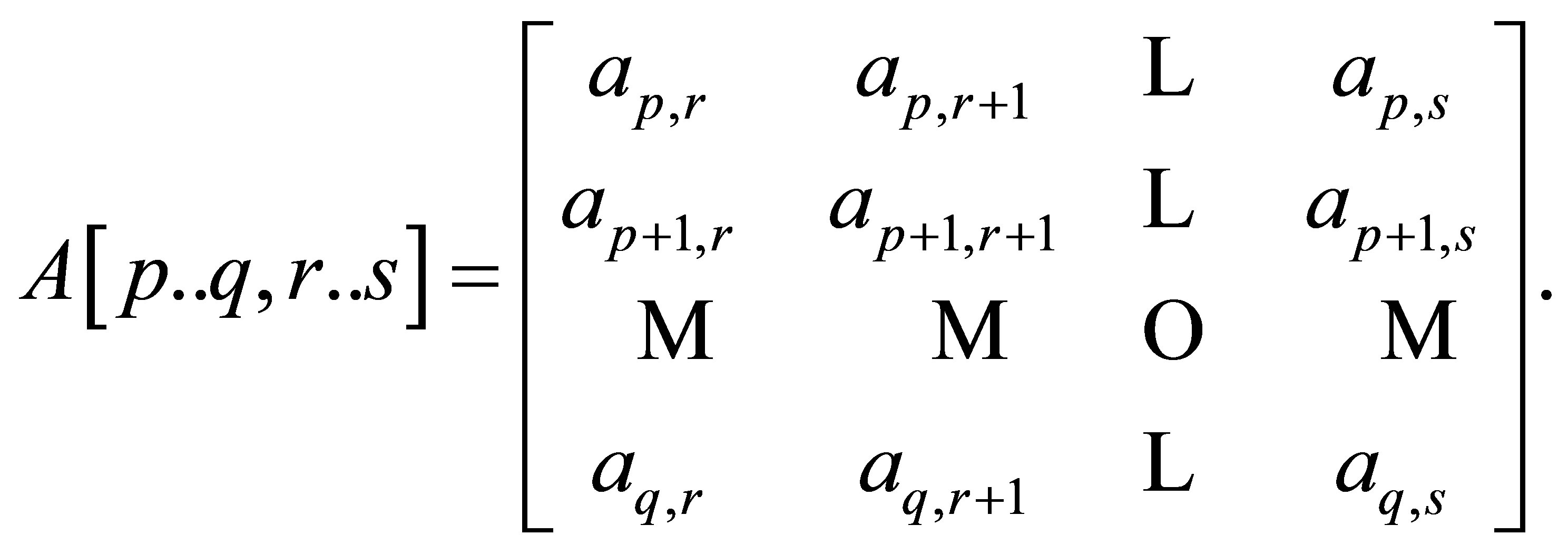

Define the submatrix of  denoted

denoted  of order

of order  by

by

(7)

(7)

In particular, let

and

and  the leading principal minor of

the leading principal minor of

Theorem 3.1 [24]. Consider

(8)

(8)

Then the determinants in (8) satisfy a three-term recurrence

(9)

(9)

where the initial values for  are

are  and

and

Lemma 3.2 [29]. If the  factorization of the matrix

factorization of the matrix  in (4) is possible, then we have

in (4) is possible, then we have

(10)

(10)

where  are given by (6). Meanwhile, the Doolittle

are given by (6). Meanwhile, the Doolittle  factorization [30] of

factorization [30] of  is given by:

is given by:

(11)

(11)

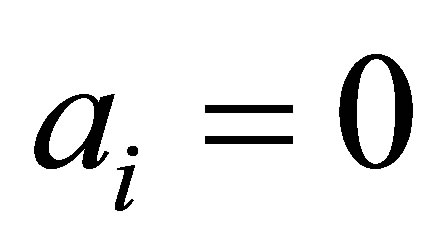

Lemma 3.3 [26]. If  then

then

(12)

(12)

Lemma 3.4 [26]. If

and either

and either  or

or  then

then  is a singular matrix.

is a singular matrix.

Lemma 3.5 [24]. If  for each

for each  then the three-term recurrence (9) reduces to the twoterm recurrence

then the three-term recurrence (9) reduces to the twoterm recurrence

(13)

(13)

Algorithm 3.1 (DETGTRI [31]).

The determinant of the matrix in (4) can be computed using the following symbolic algorithm.

INPUT: Order of the matrix  and the components,

and the components,

OUTPUT: The determinant of the matrix  in (4).

in (4).

Step 1: Use (6) to compute the simplest forms of the  components of the vector

components of the vector .

.

If  for any

for any , set

, set  (

( is just a symbolic name) and continue to compute

is just a symbolic name) and continue to compute  in terms of

in terms of  by using (6).

by using (6).

Step 2: The simplest rational form of the product

(this product is a polynomial in

(this product is a polynomial in ) evaluated at

) evaluated at  is equal to the determinant of the matrix

is equal to the determinant of the matrix  in (4), i.e.,

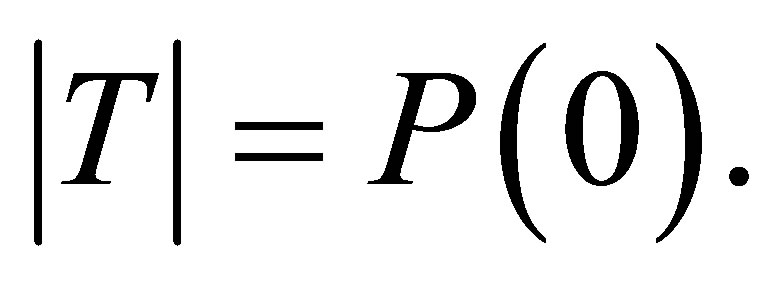

in (4), i.e.,

The cost of the DETGTRI algorithm is . The algorithm is easy to implement in all Computer Algebra Systems (CAS) such as MACSYMA, MATHEMATICA and MAPLE.

. The algorithm is easy to implement in all Computer Algebra Systems (CAS) such as MACSYMA, MATHEMATICA and MAPLE.

Lemma 3.6. Consider the tridiagonal matrix  given by:

given by:

(14)

(14)

Let

and

and  be

be  matrices defined respectively as follows:

matrices defined respectively as follows:

(15)

(15)

where  is a scalar quantity. Then by applying the DETGTRI algorithm, we see that:

is a scalar quantity. Then by applying the DETGTRI algorithm, we see that:

(16)

(16)

(17)

(17)

having used (12) and (13).

Let  is an

is an  centrosymmetric matrix, then the three following facts are useful when we deal with such matrices:

centrosymmetric matrix, then the three following facts are useful when we deal with such matrices:

Fact (1): If  then

then

In other words, the centrosymmetric matrix  is the same when read backwards as when read forwards.

is the same when read backwards as when read forwards.

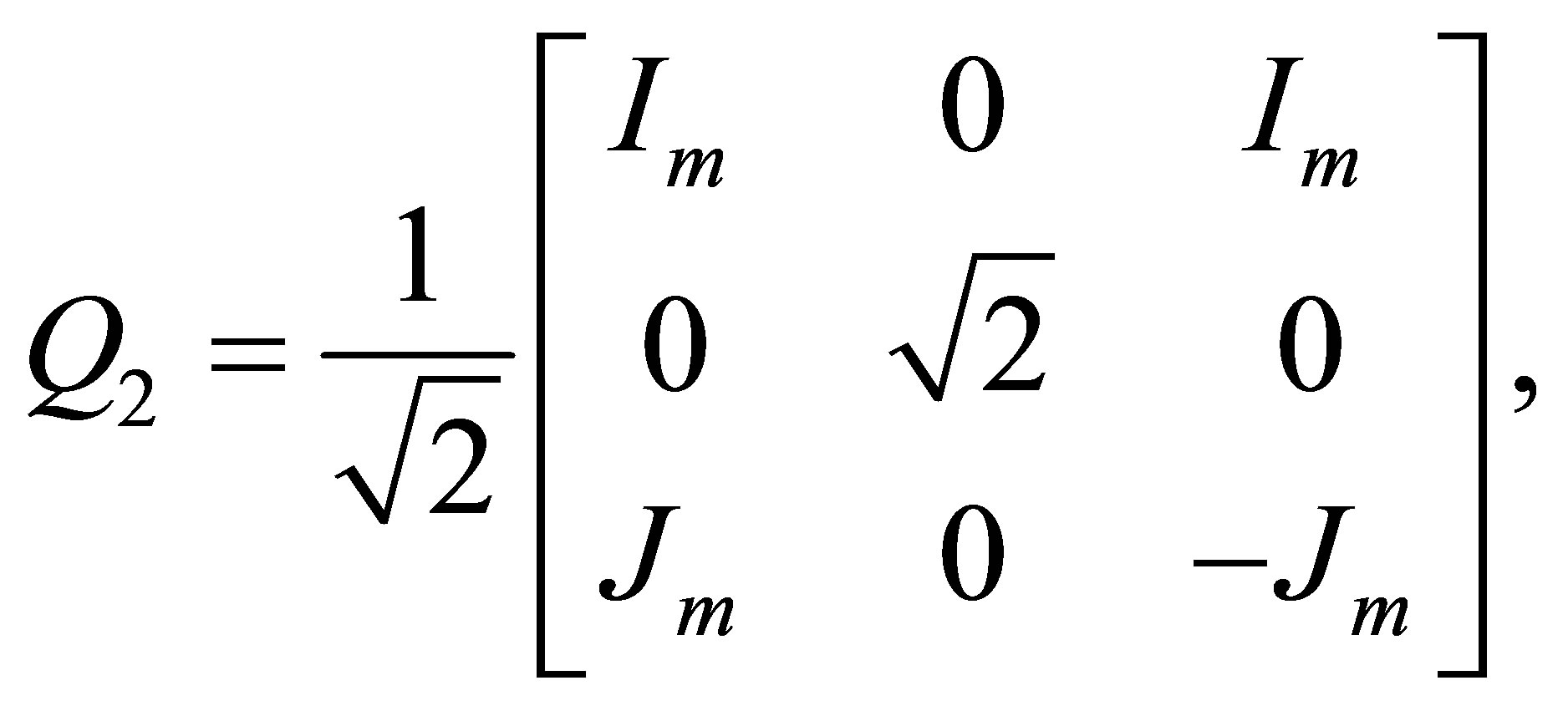

Fact (2): If  is positive even number, then

is positive even number, then

is orthogonal.

Fact (3): If  is positive odd number then

is positive odd number then

is orthogonal.

Armed with the above facts, we may formulate the following result whose proof will be omitted.

Theorem 3.7. Let  be an

be an  of even order say

of even order say  then

then  can be written in the form:

can be written in the form:

(18)

(18)

where

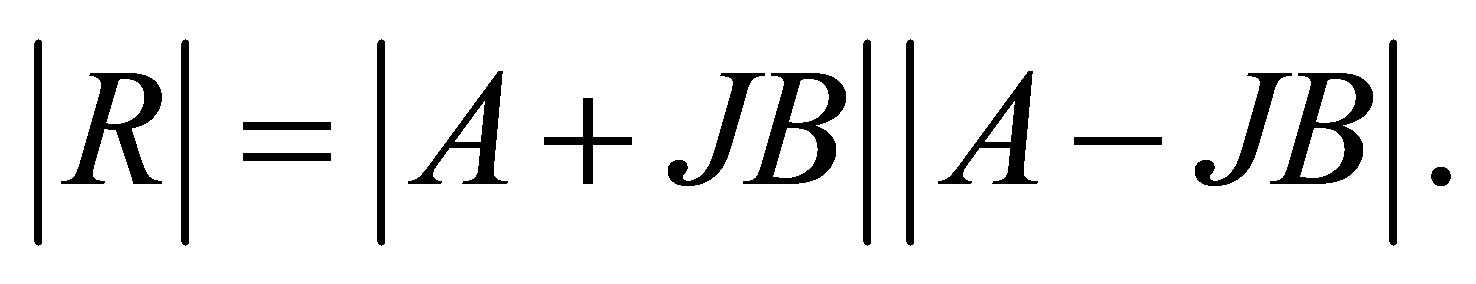

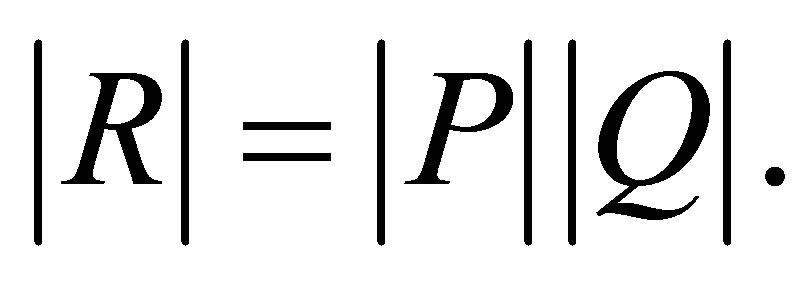

The determinant of the matrix  in (18) is given by:

in (18) is given by:

(19)

(19)

If  is odd, i.e.,

is odd, i.e.,  then we have:

then we have:

(20)

(20)

where

and

and

The determinant of the matrix  in (20) is given by

in (20) is given by

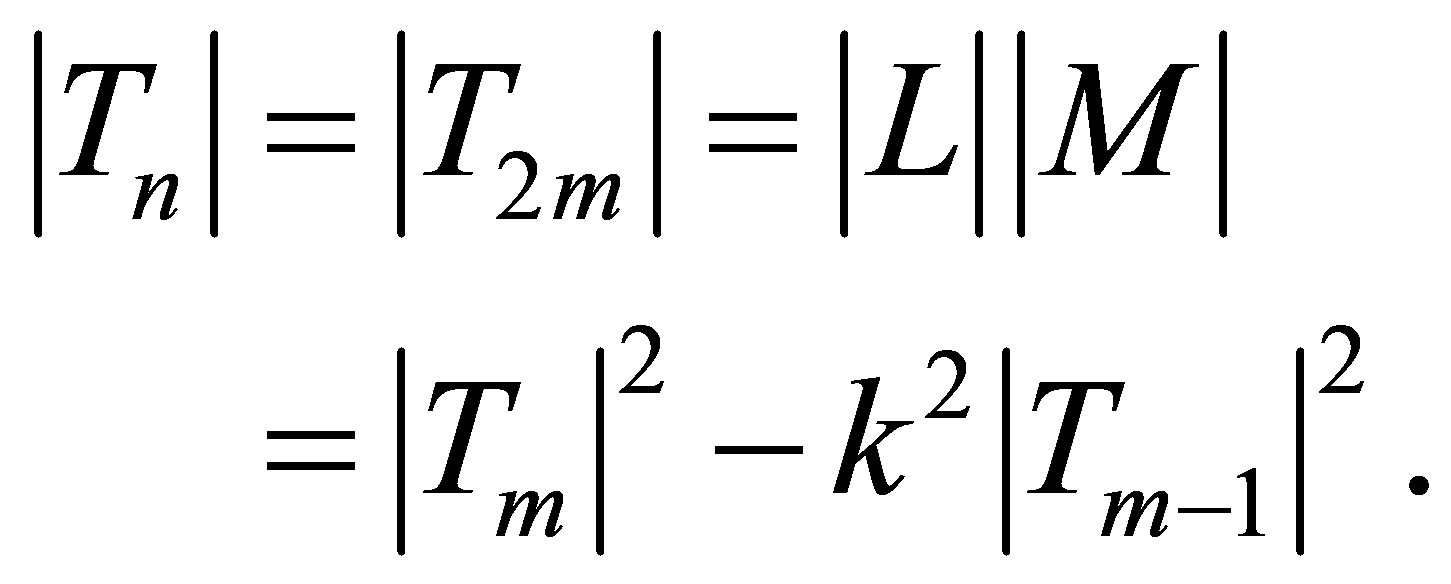

(21)

(21)

Concerning the inverse of centrosymmetric matrices the reader may refer to [8].

As an interesting special case of the Theorem 3.7, we give the following result.

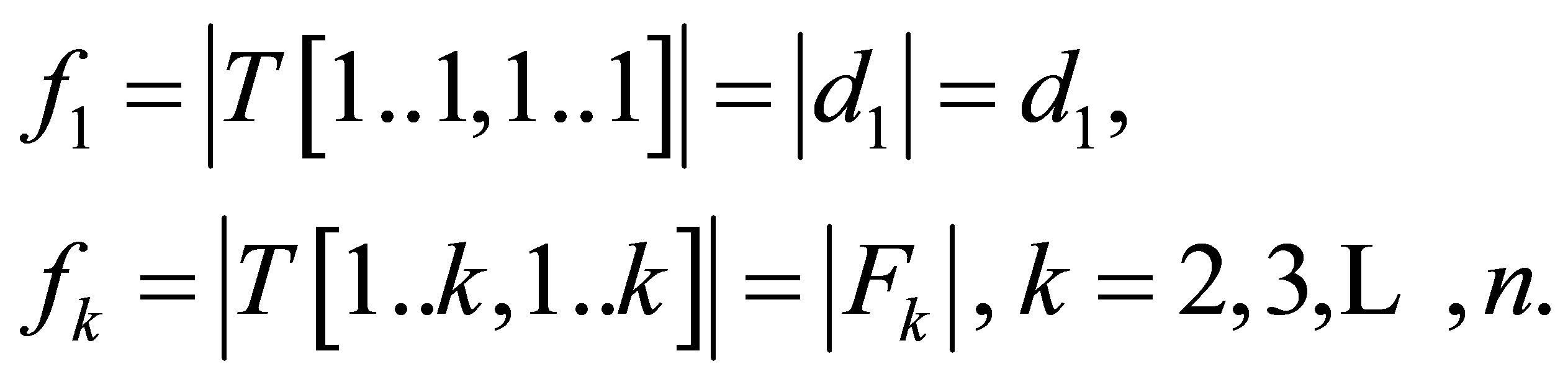

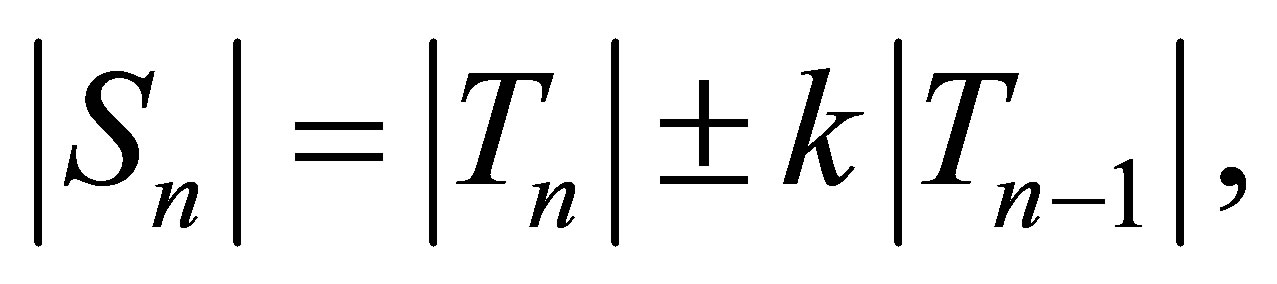

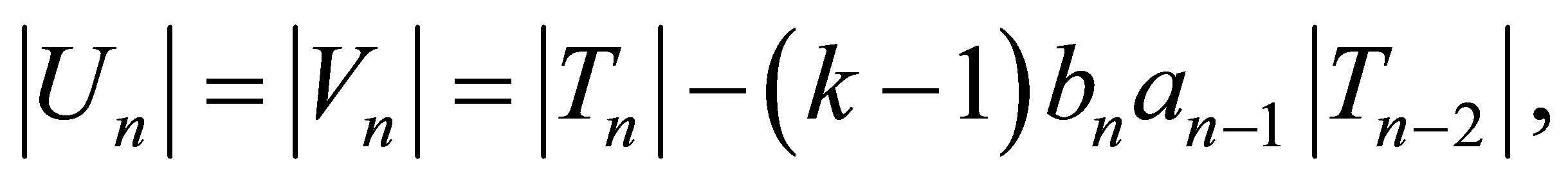

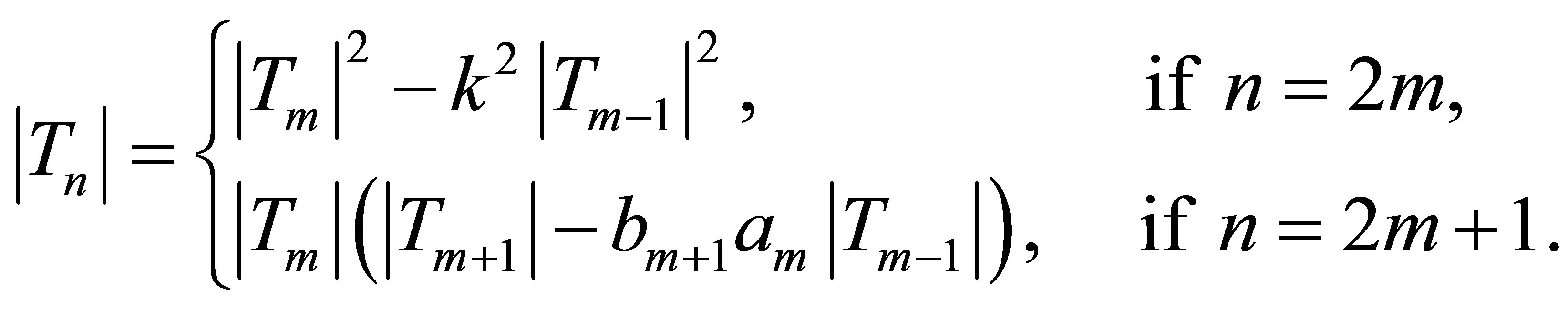

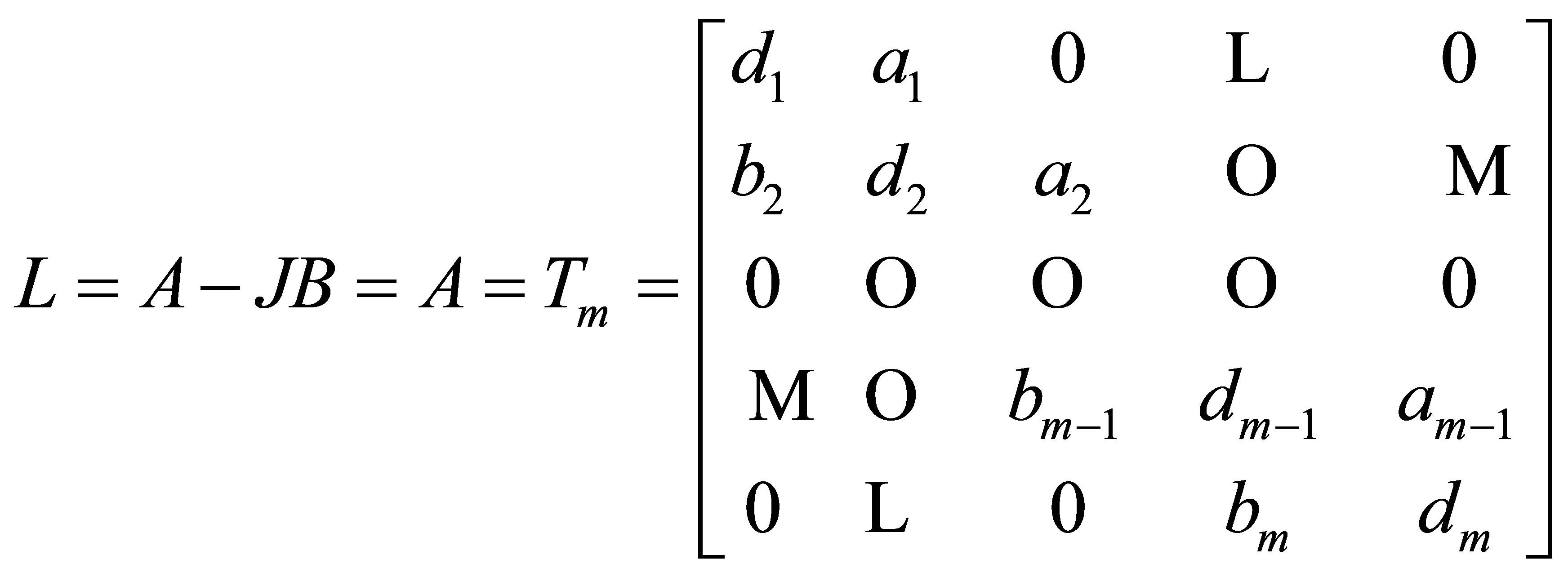

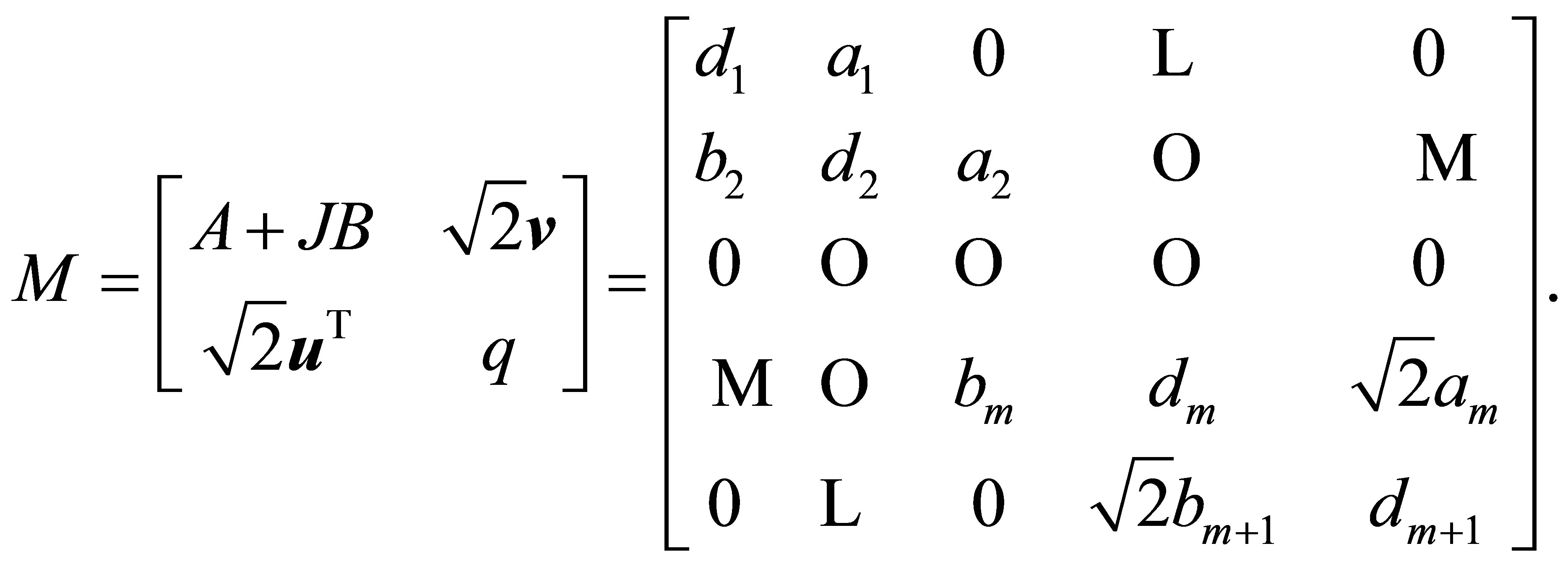

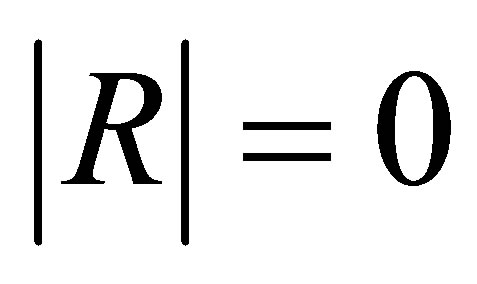

Corollary 3.8. In Theorem 3.7, if R = Tn is centrosymmetric tridiagonal matrix, then we have

where k is the common value of the elements in positions (m, m+1) and (m+1, m) of the matrix R = Tn when n = 2m.

Proof. To compute the centrosymmetric tridiagonal determinant of order  Two cases will be considered:

Two cases will be considered:

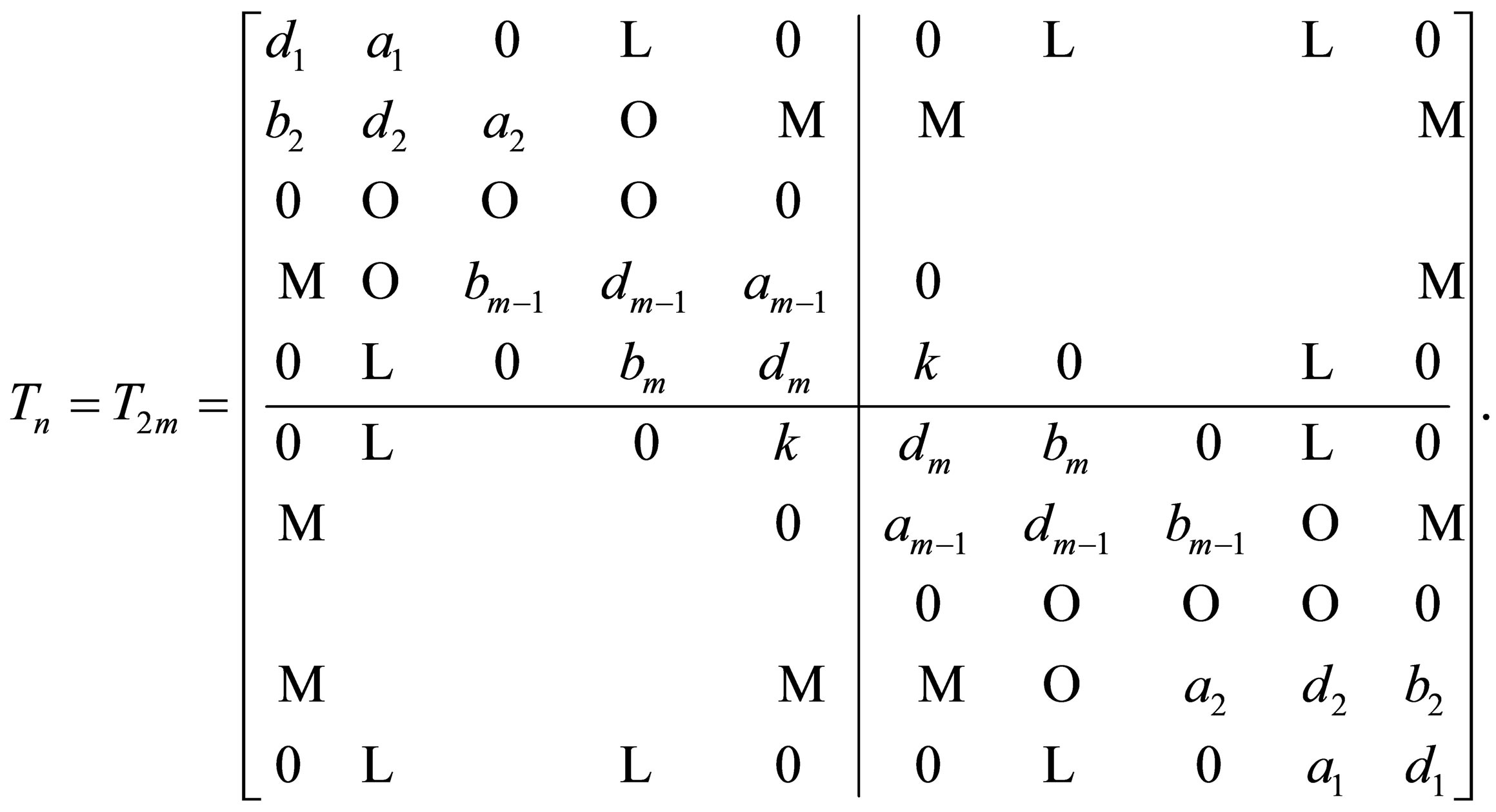

Case (1):  In this case, the centrosymmetric tridiagonal matrix takes the form:

In this case, the centrosymmetric tridiagonal matrix takes the form:

(22)

(22)

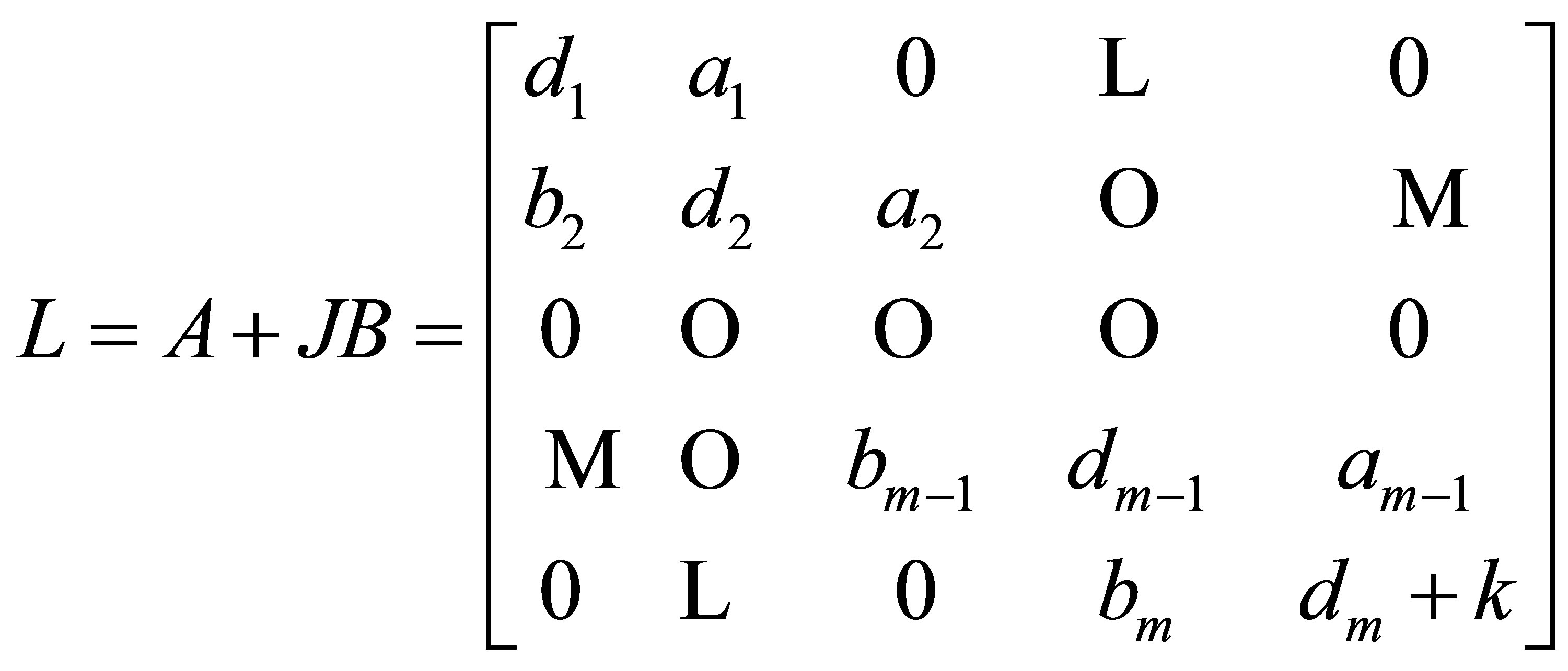

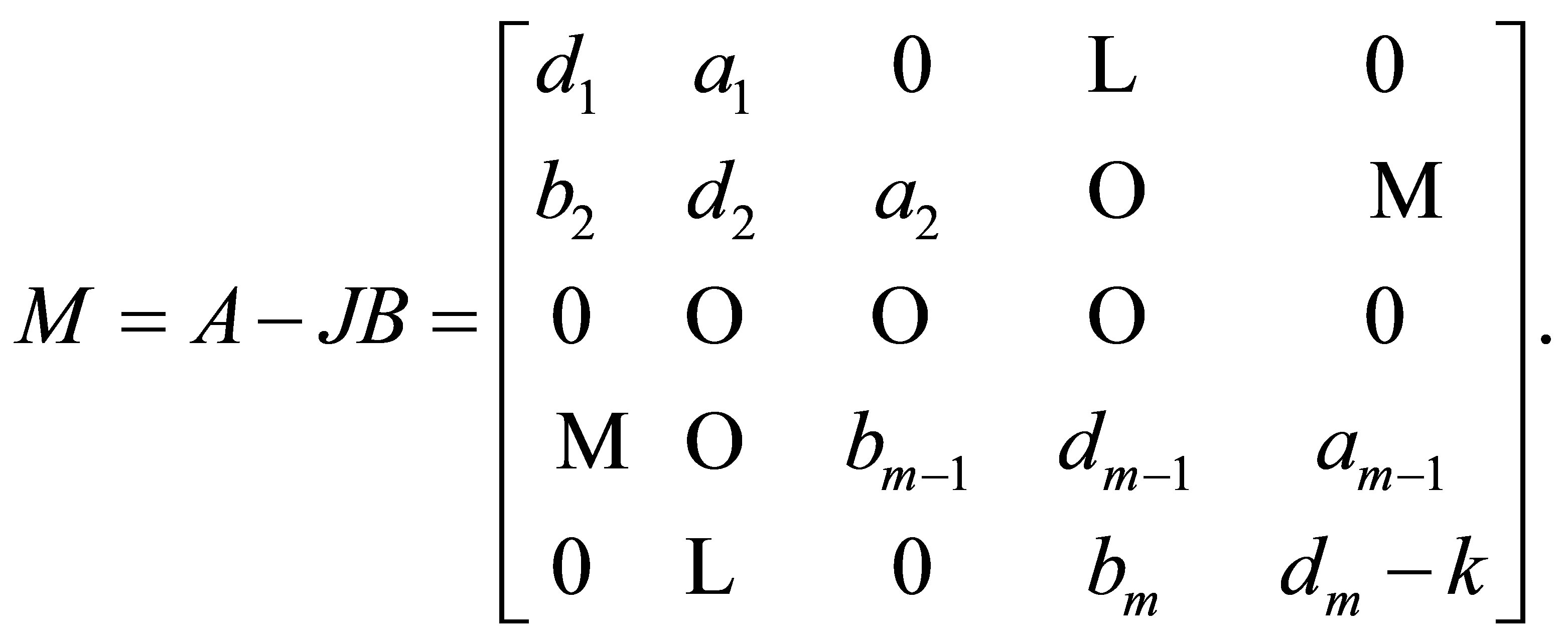

Applying Theorem 3.7, we have  where

where

and

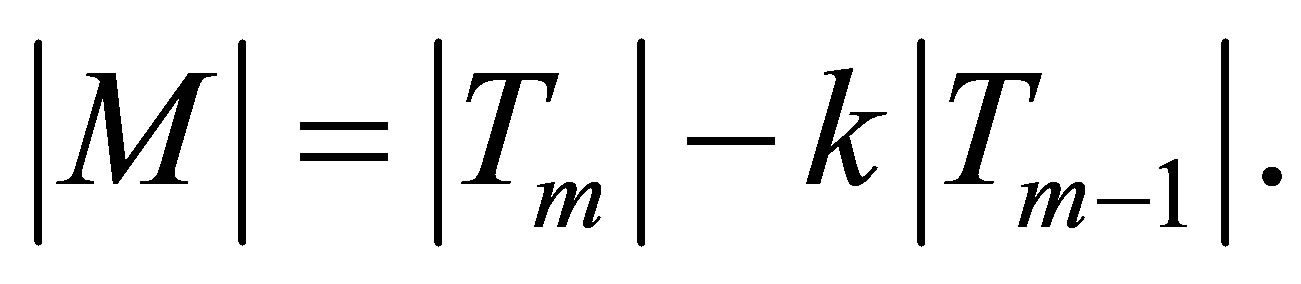

By using Lemma 3.6, we obtain

and

Therefore we get

(23)

(23)

Case (2):  In this case, the centrosymmetric tridiagonal matrix takes the form:

In this case, the centrosymmetric tridiagonal matrix takes the form:

(24)

(24)

Applying Theorem 3.7, we obtain  here

here

and

By using Lemma 3.6, we obtain  and

and

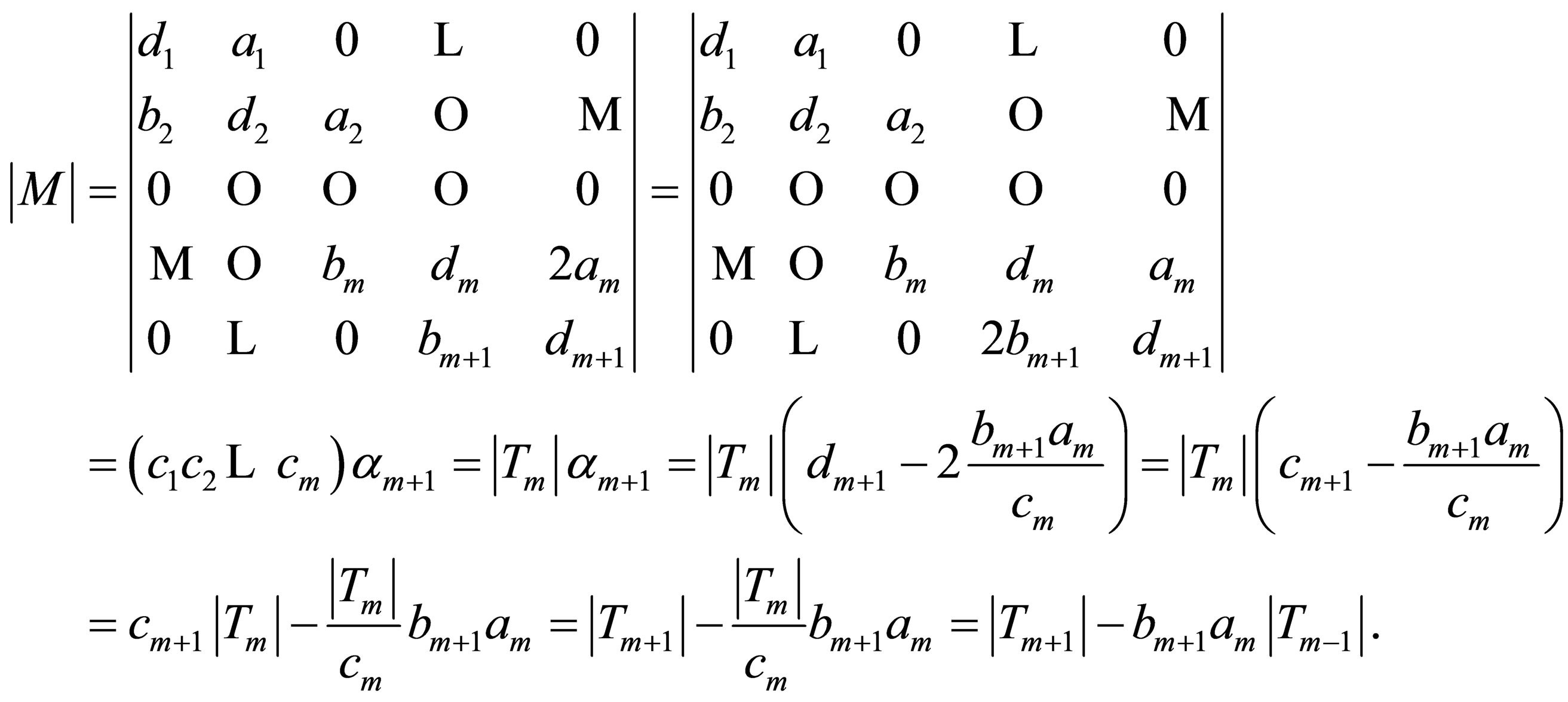

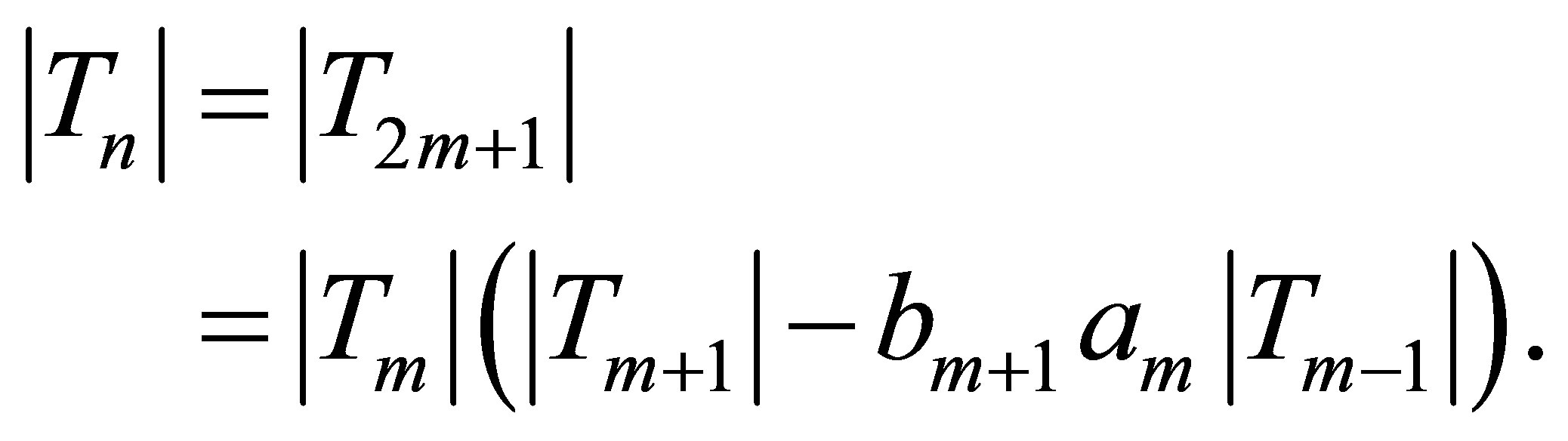

By using the DETGTRI algorithm [31] together with (12) and (13), then we have

Therefore

(25)

(25)

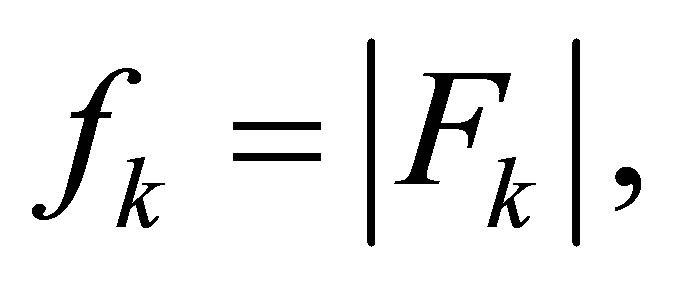

From (23) and (25), we see that in order to compute the determinant of a centrosymmetric tridiagonal matrix of order , then all we need is to compute

, then all we need is to compute  if

if  and

and  if

if

4. Algorithms for Solving Centrosymmetric Linear Systems

Solving linear systems practically dominates scientific computing. In the present section, we focus on solving linear systems of centrosymmetric type. Two cases will be considered:

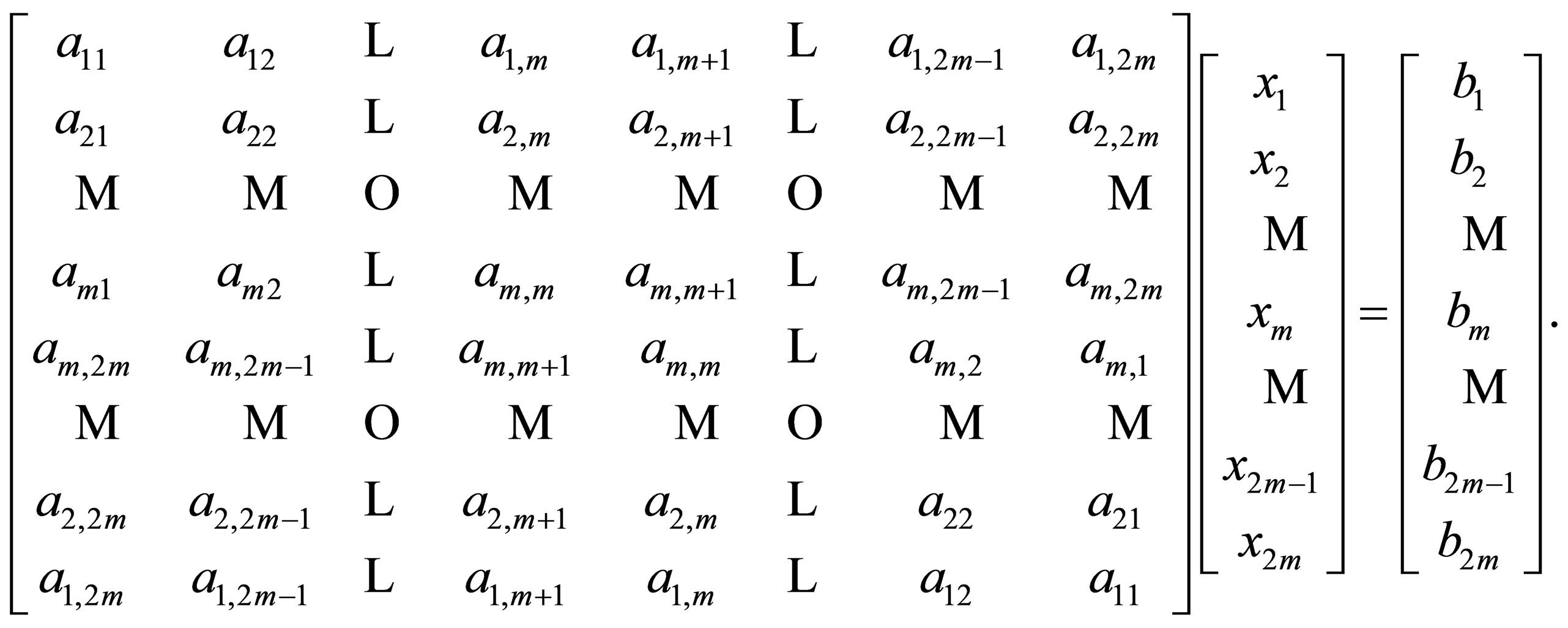

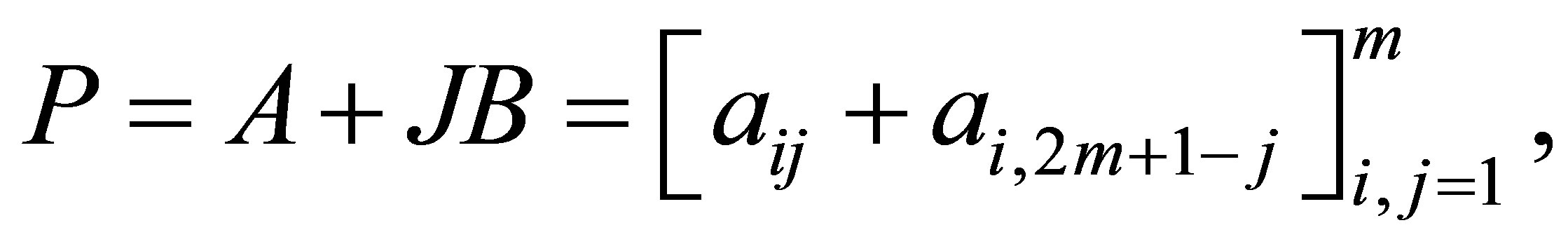

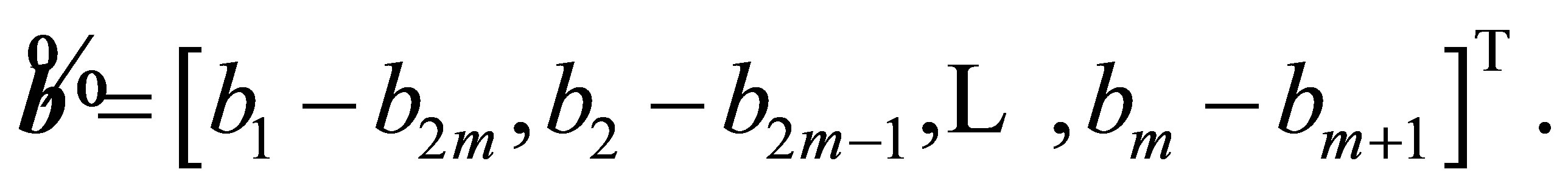

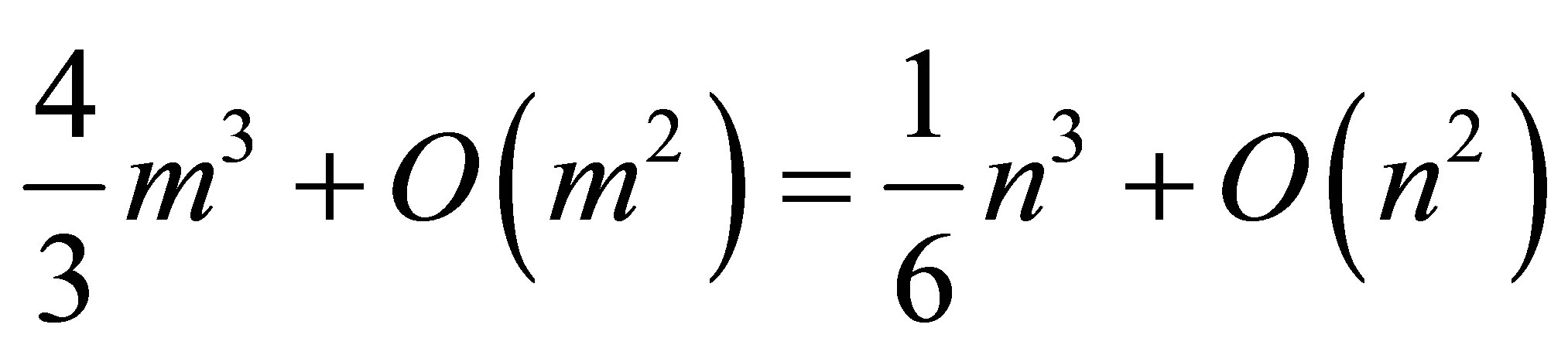

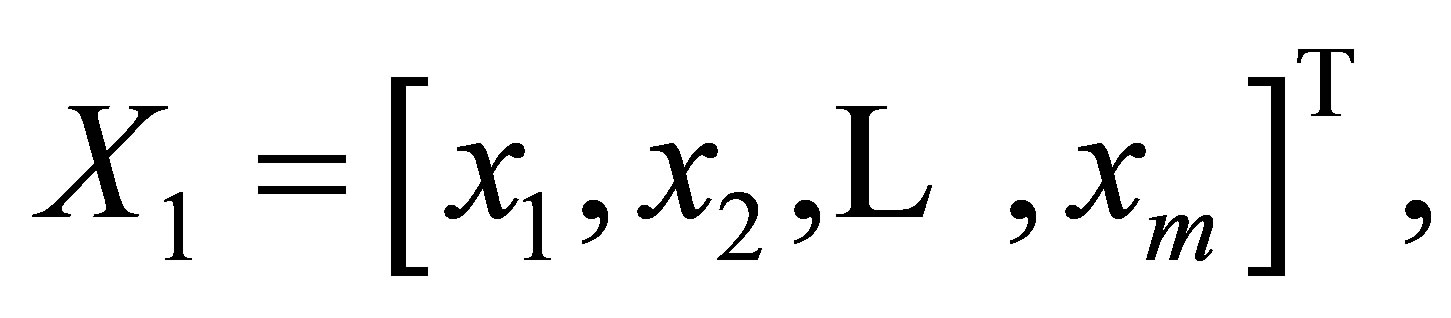

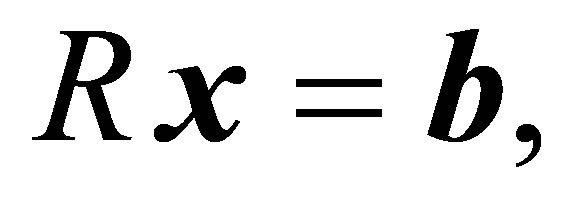

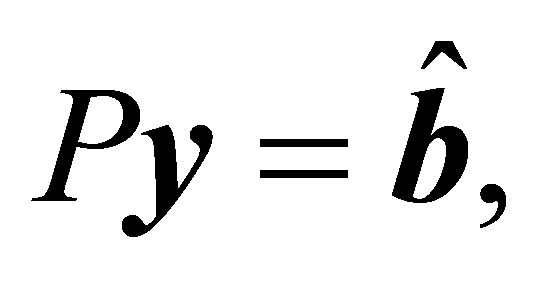

Case (i):  For this case we are going to construct an algorithm for solving centrosymmetric linear systems of the form:

For this case we are going to construct an algorithm for solving centrosymmetric linear systems of the form:

(26)

(26)

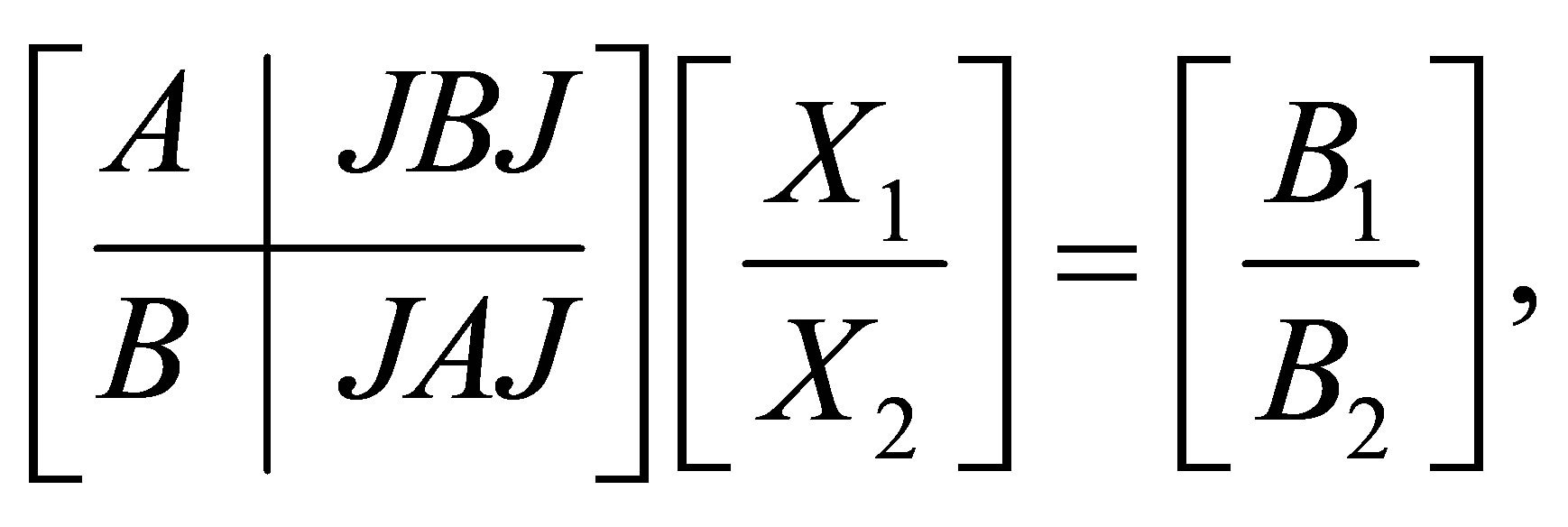

Block multiplication is particularly useful when there are patterns in the matrices to be multiplied. Therefore it is convenient to rewrite (26) in the partitioned form

(27)

(27)

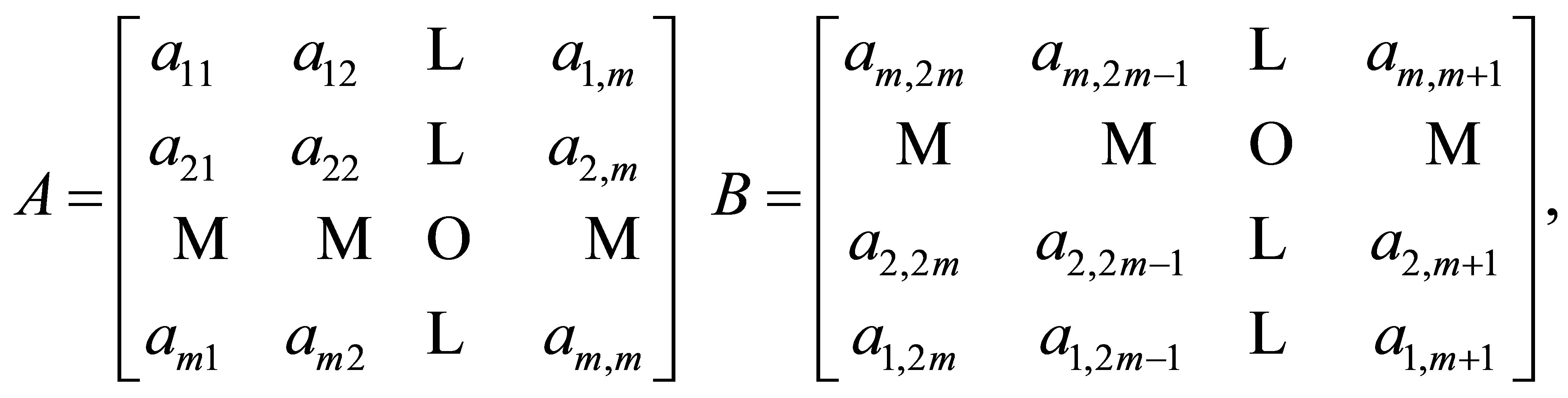

where

and

and

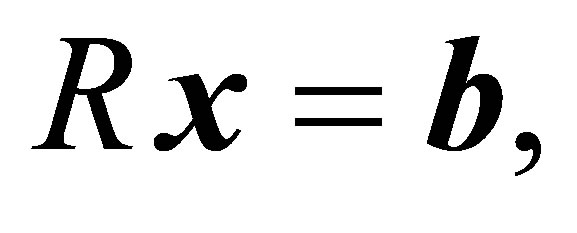

The system in (26) can also be written in matrix form as follows:

(28)

(28)

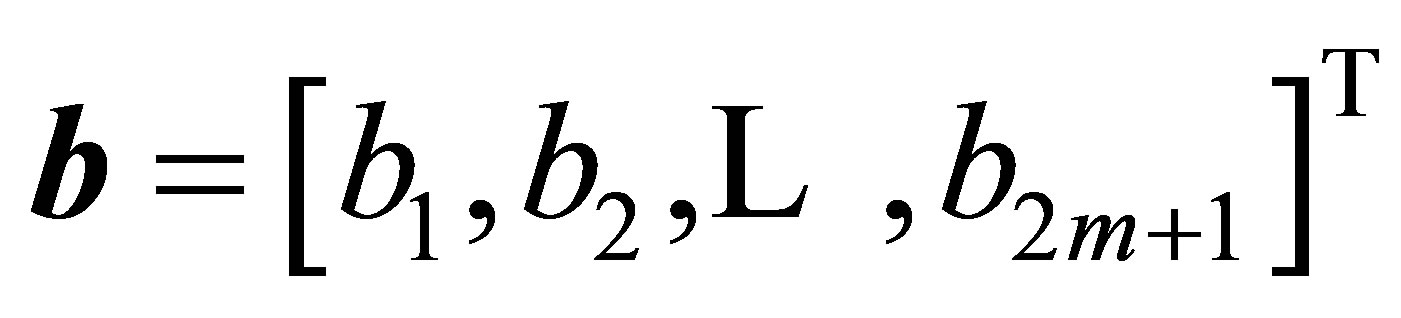

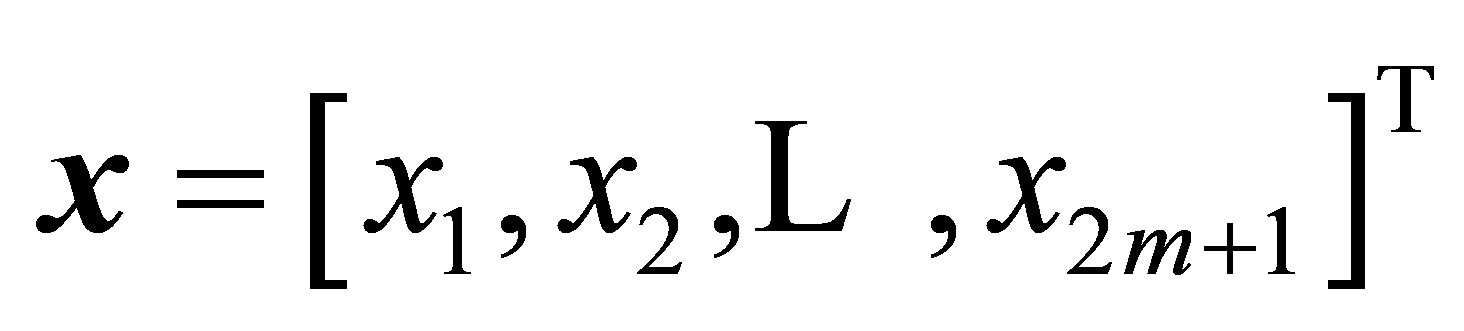

where  is the coefficient matrix of the system (26),

is the coefficient matrix of the system (26),  and

and

is the constant vector.

Algorithm 4.1. An algorithm for solving centrosymmetric linear system of even order.

To solve the linear system of the form (26), we may proceed as follows:

INPUT: The entries of the coefficient matrix  and the constant vector

and the constant vector  in (28).

in (28).

OUTPUT: Solution vector .

.

Step 1: Construct the  matrices

matrices

and the m-vectors

and the m-vectors  and

and  as follows:

as follows:

and

Step 2: Compute  If

If  then Exiterror(‘No solutions’) end if.

then Exiterror(‘No solutions’) end if.

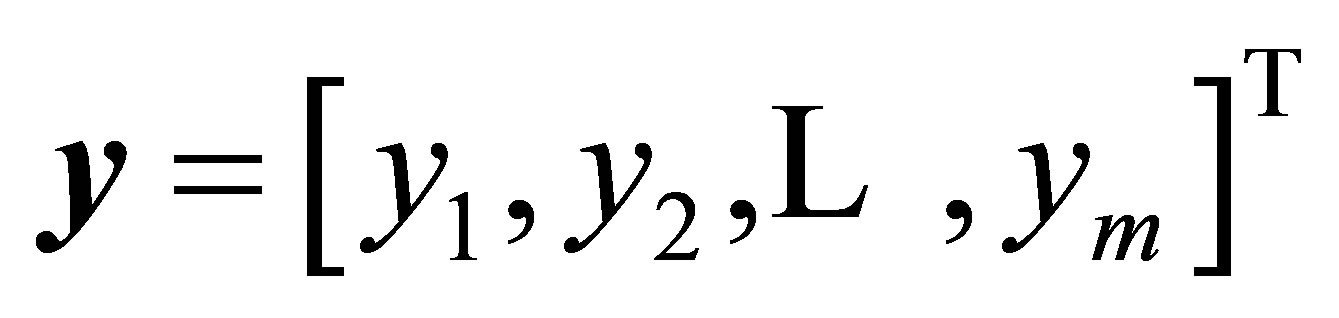

Step 3: Solve the two linear systems:

and

and  for

for  and

and

respectively.

respectively.

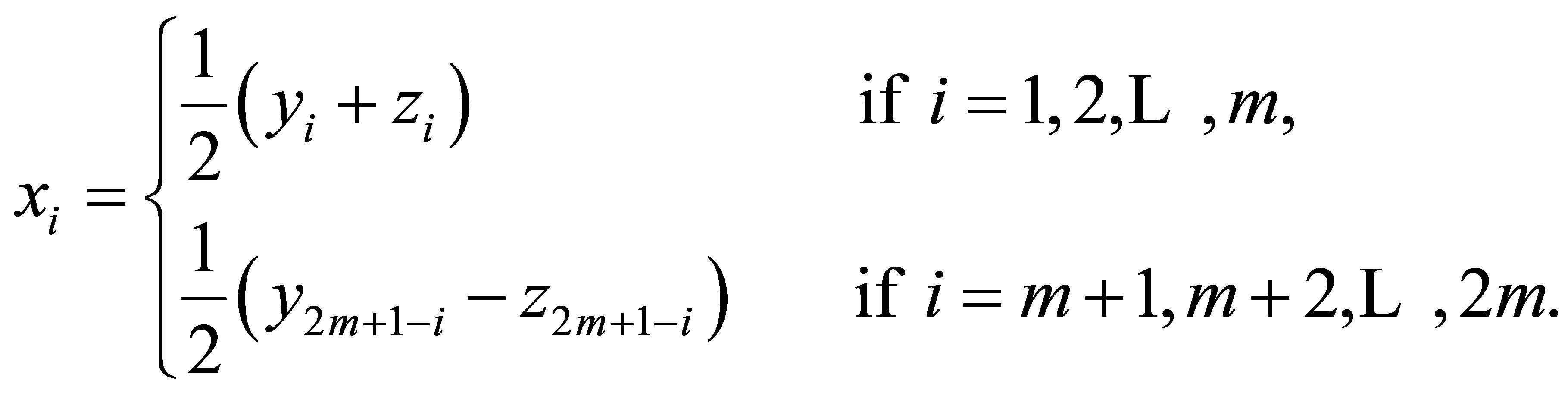

Step 4: The solution vector  is given by

is given by

The Algorithm 4.1, will be refereed to as CENTROSYMM-I algorithm.

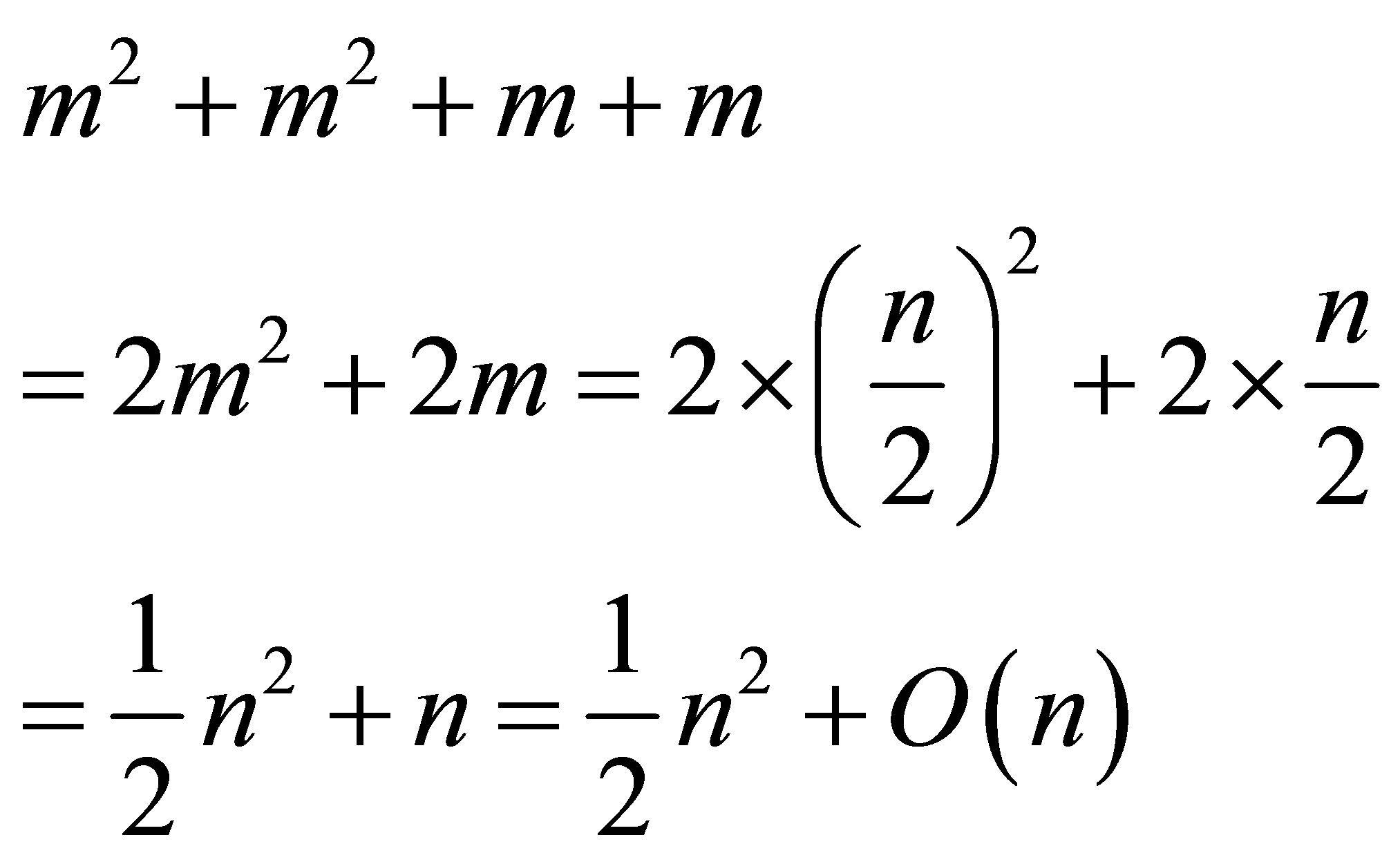

Concerning the computational cost of the CENTROSYMM-I algorithm:

• The time complexity of Step 1 is

( additions/subtractions and no multiplications/divisions).

additions/subtractions and no multiplications/divisions).

• The time complexity of Step 3 depends on the solvers of the two linear systems. For example, tridiagonal linear systems can be solved in linear time (see [25]).

• The time complexity of Step 4 is  (

( additions/subtractions and

additions/subtractions and  multiplications).

multiplications).

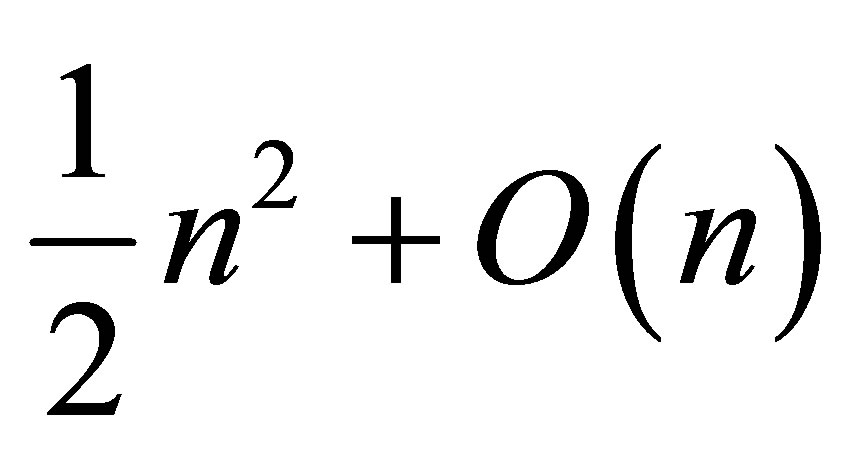

Step 3 is the step that leads to the reduction of the time complexity, because instead of solving a linear system of  equations, we end up with two linear systems half the size of the original one. If the original system is solved with Gaussian elimination (GE) method, then the time complexity will be

equations, we end up with two linear systems half the size of the original one. If the original system is solved with Gaussian elimination (GE) method, then the time complexity will be . But, if GE method is used to solve the two systems in the third step, then the time complexity of our algorithm will be

. But, if GE method is used to solve the two systems in the third step, then the time complexity of our algorithm will be

which is a significant reduction. If a method more efficient than the GE method is used, then the time complexity of our algorithm will be less (see also [16]).

which is a significant reduction. If a method more efficient than the GE method is used, then the time complexity of our algorithm will be less (see also [16]).

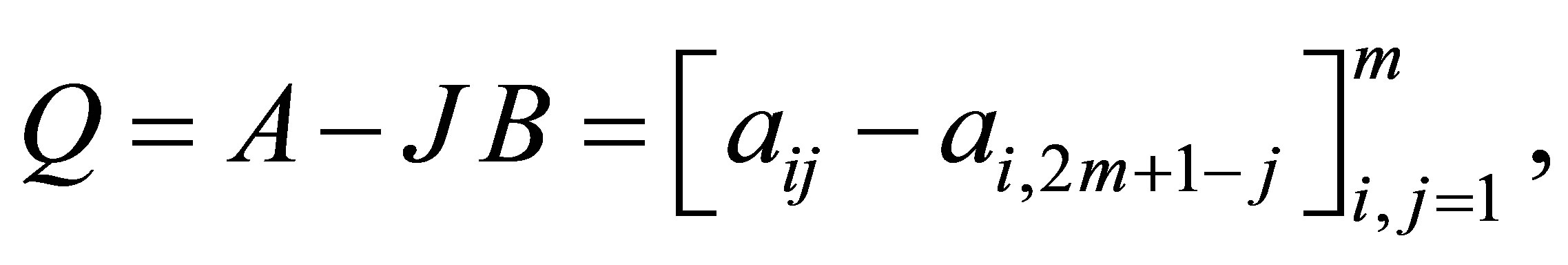

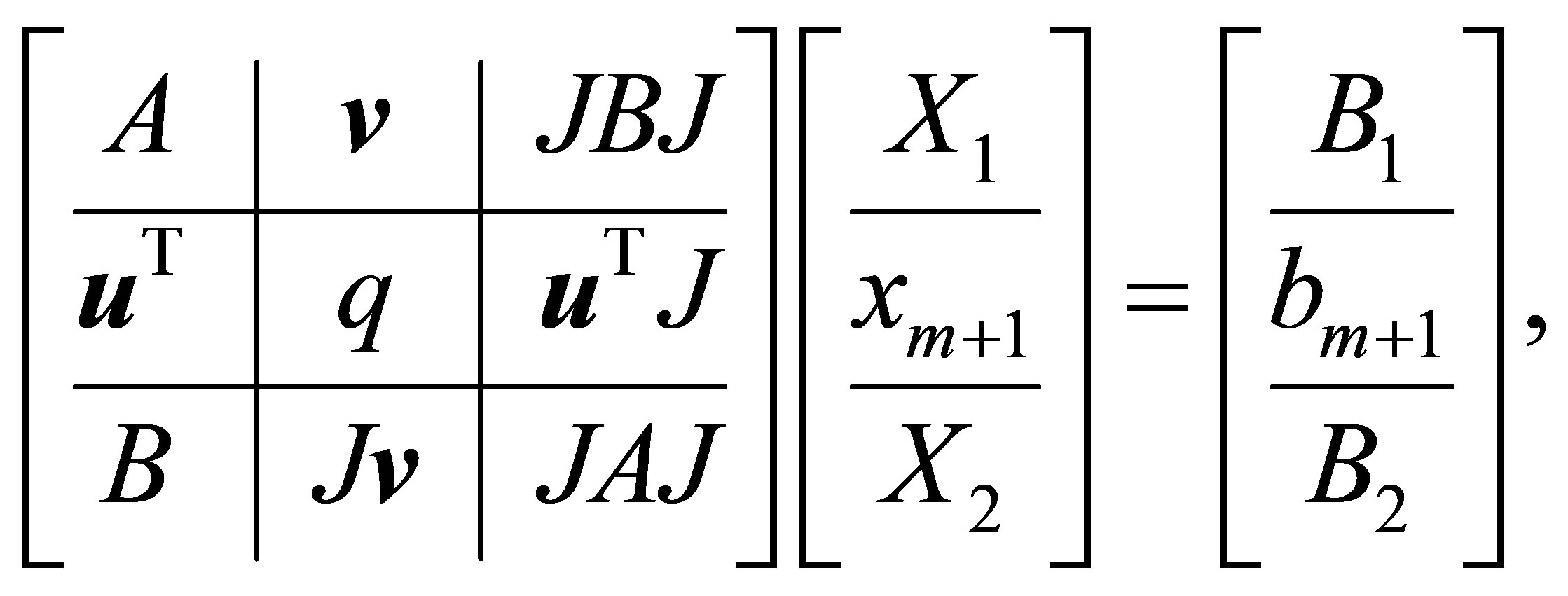

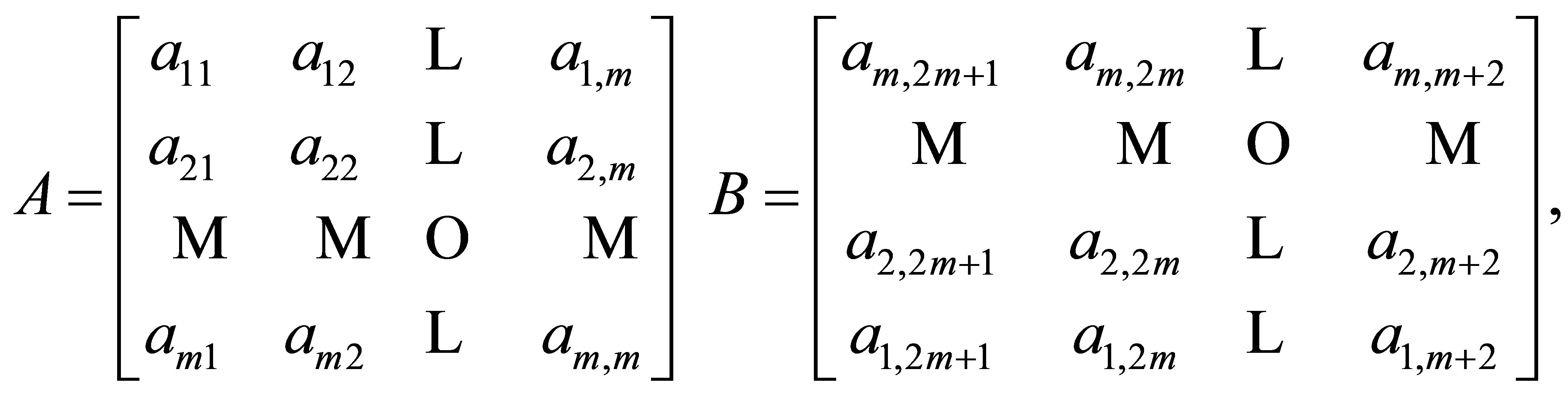

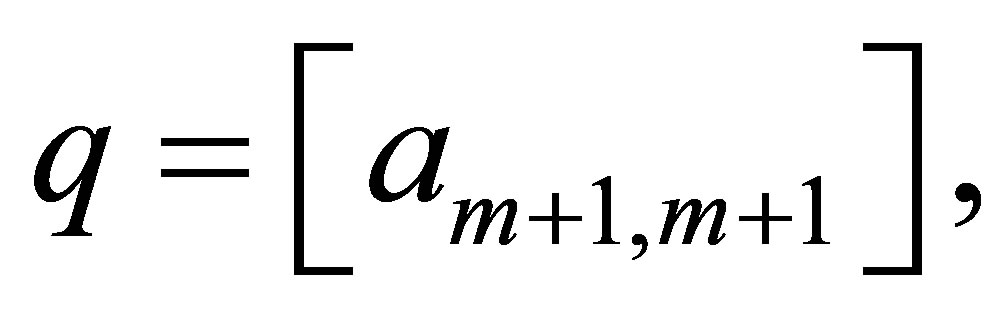

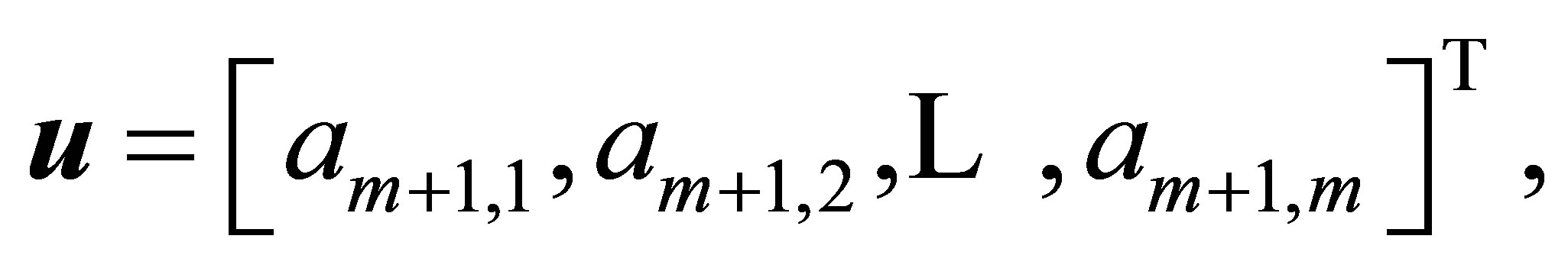

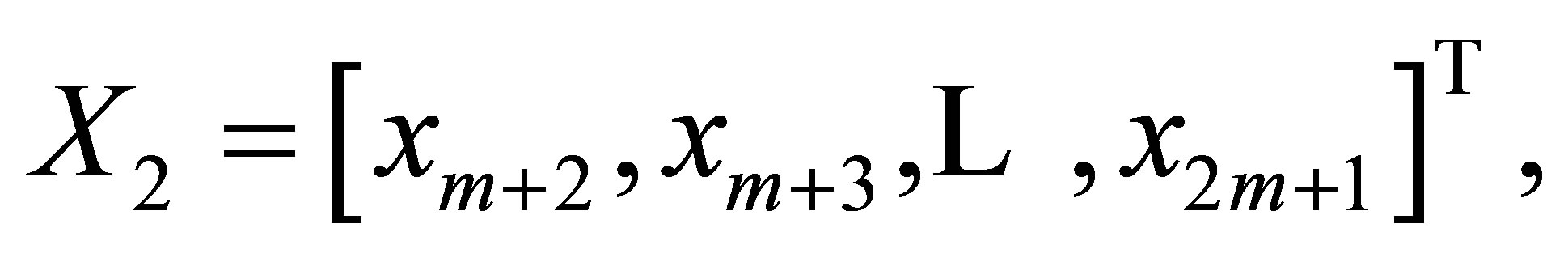

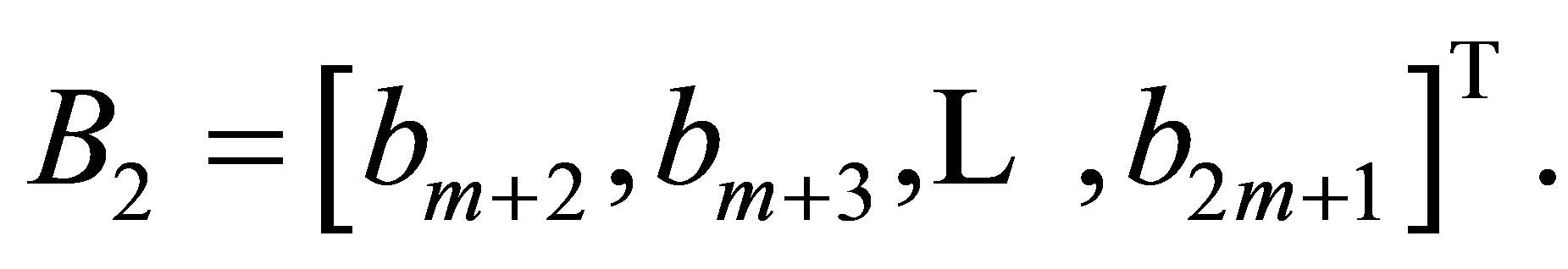

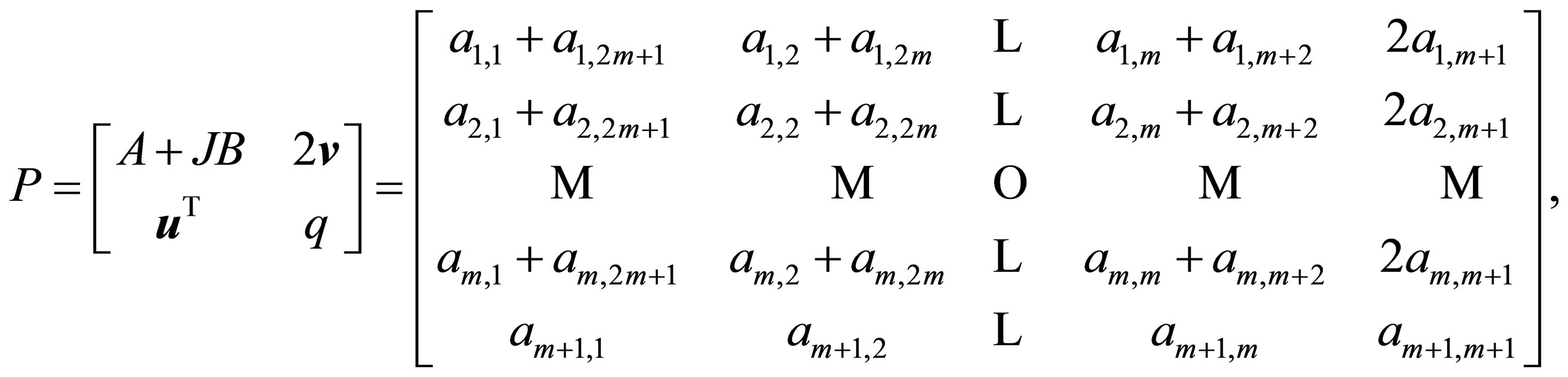

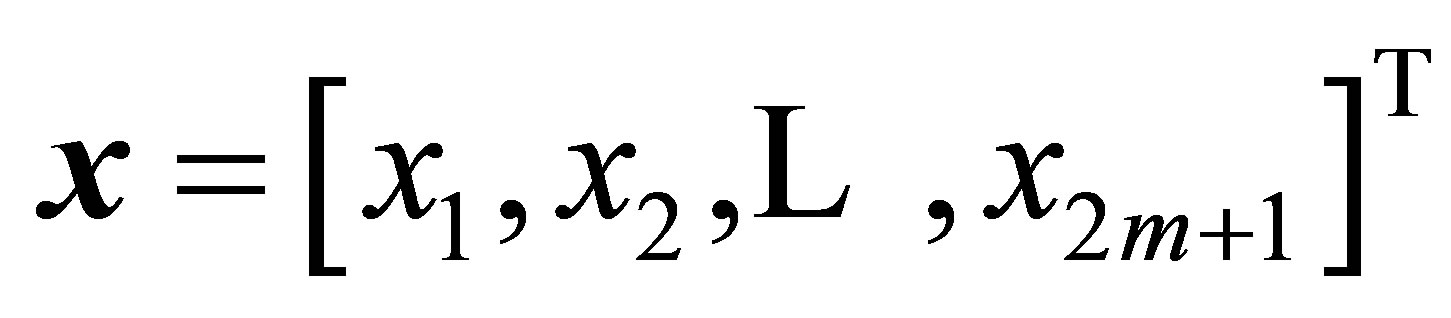

Case (ii):  In this case the linear system to be considered has the form:

In this case the linear system to be considered has the form:

(29)

(29)

or equivalently,

(30)

(30)

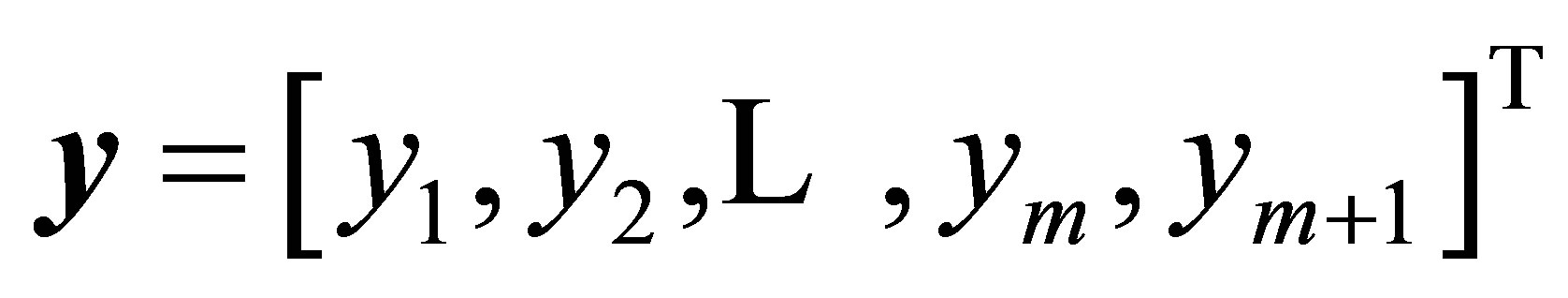

where

and

and

The linear system (30) can also be written as:

(31)

(31)

where  is the coefficient matrix of the system (29),

is the coefficient matrix of the system (29),

and

and

is the constant vector.

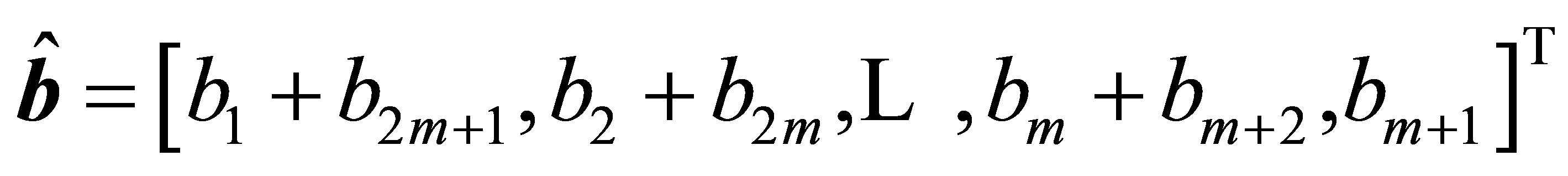

Algorithm 4.2. An algorithm for solving centrosymmetric linear system of odd order To solve the linear system of the form (29), we may proceed as follows:

INPUT: The entries of the coefficient matrix R and the constant vector  in (31).

in (31).

OUTPUT: Solution vector .

.

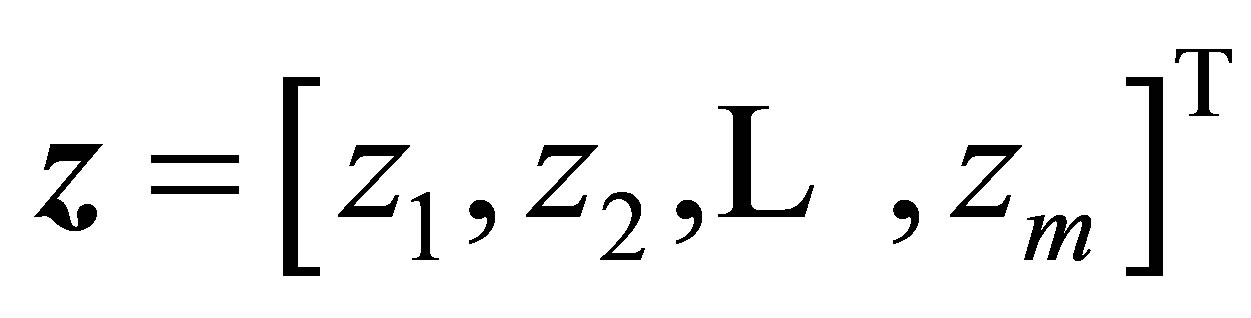

Step 1: Construct the matrices

of orders

of orders  and

and  respectively and the vectors

respectively and the vectors  and

and  of dimensions

of dimensions  and

and  respectively as follows:

respectively as follows:

and

Step 2: Compute  If

If  then Exiterror(“No solutions”) end if.

then Exiterror(“No solutions”) end if.

Step 3: Solve the two linear systems  and

and  for

for

and

and

respectively.

Step 4: The solution vector  is given by

is given by

The Algorithm will be refereed to as CENTROSYMM-II algorithm.

Concerning the computational cost of the CENTROSYMM-II algorithm:

• The time complexity of Step 1 is .

.

• The time complexity of Step 3 depends on the solvers of the two linear systems.

• The time complexity of Step 4 is  (see also [16]).

(see also [16]).

It may be convenient to finish this section by giving the following result, whose proof will be omitted.

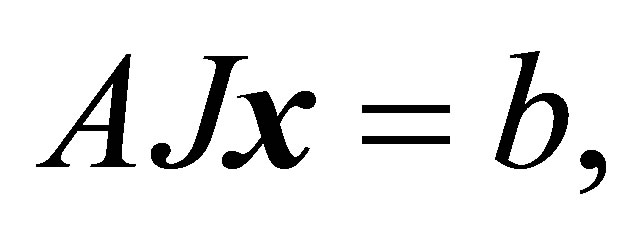

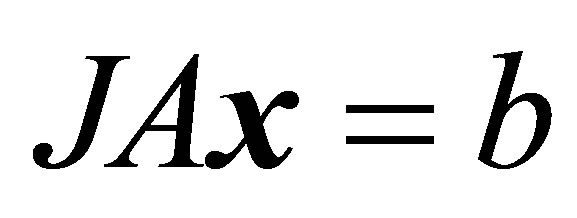

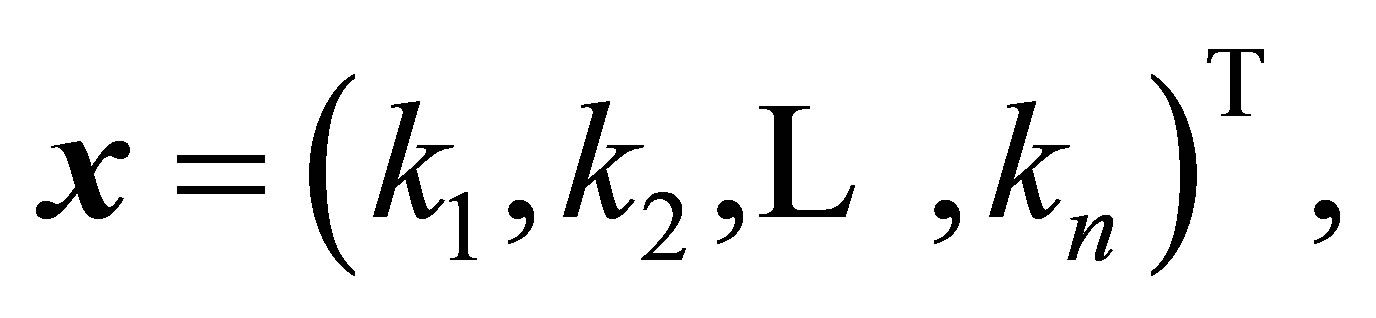

Theorem 4.1. Let  be a non-singular centrosymmetric square matrix of order

be a non-singular centrosymmetric square matrix of order  Consider the four linear systems of centrosymmetric type:

Consider the four linear systems of centrosymmetric type:

(32)

(32)

(33)

(33)

(34)

(34)

and

(35)

(35)

Then the two linear systems (32) and (35) are equivalent. The same is true for the linear systems (33) and (34). Moreover, if the common solution of the systems (32) and (35) is  then the common solution of the systems (33) and (34) is

then the common solution of the systems (33) and (34) is

5. Computer Program

In this section, we are going to introduce a MAPLE procedure for solving centrosymmetric linear systems (26) and (29). This procedure is based on the CENTROSYMM-I and CENTROSYMM-II Algorithms.

restart:

restart:

centrosymm:=proc(R::array,f::vector,n::posint)

local i, r,m,f1,f2,A,Jm,J,H,y,x,B,Y,Z,X:

global xsoln,detR,detP,detQ,P,Q;

X:= vector(n): m:=floor(n/2): J:=array(1..m, 1..m, sparse):

for i to m do J[m+1-i,i]:=1 od:

A:= linalg[submatrix](R,1..m,1..m):

if n=2*m then

Case(1): n is even

Case(1): n is even

Step 1 in CENTROSYMM-I Algorithm.

Step 1 in CENTROSYMM-I Algorithm.

B:= linalg[submatrix](R,m+1..n,1..m):

P:=evalm( A + evalm(J&*B)):

Q:=evalm( A - evalm(J&*B)):

Step 2 in CENTROSYMM-I Algorithm.

Step 2 in CENTROSYMM-I Algorithm.

detP:=linalg[det](P): detQ:=linalg[det](Q): detR:=detP *detQ:

if detR = 0 then ERROR("Singular Matrix !!!! ") fi;

Step 3 in CENTROSYMM-I Algorithm.

Step 3 in CENTROSYMM-I Algorithm.

f1:=array(1..m): f2:=array(1..m):

for i to m do f1[i]:=f[i]+f[2*m+1-i];

f2[i]:=f[i]-f[2*m+1-i];

od;

Y:=array(1..m): Z:=array(1..m):

Y:= linalg[linsolve](P,f1): Z:=linalg[linsolve] (Q,f2):

Step 4 in CENTROSYMM-I Algorithm.

Step 4 in CENTROSYMM-I Algorithm.

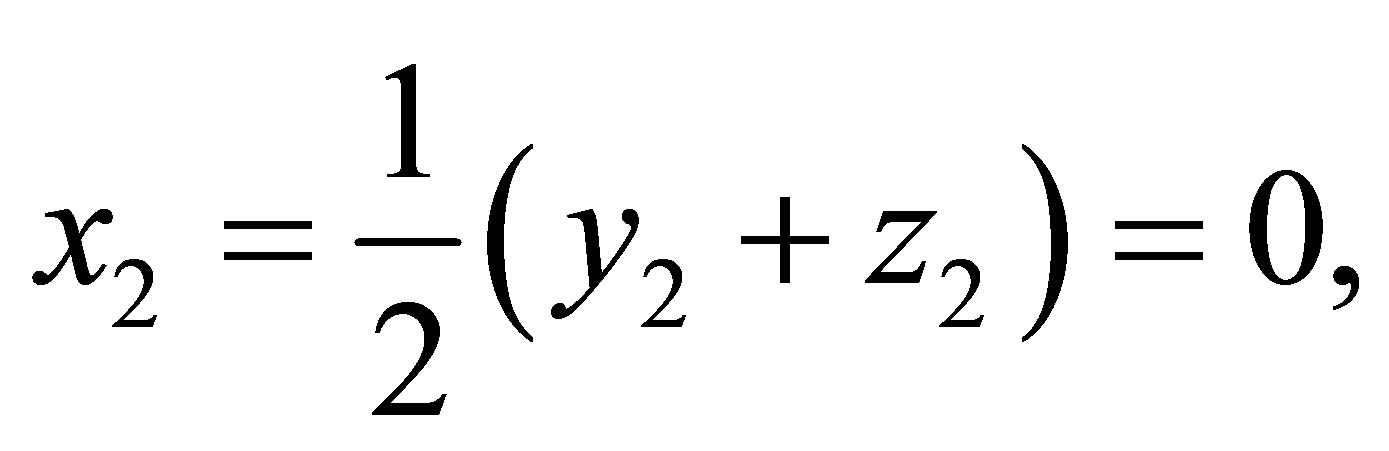

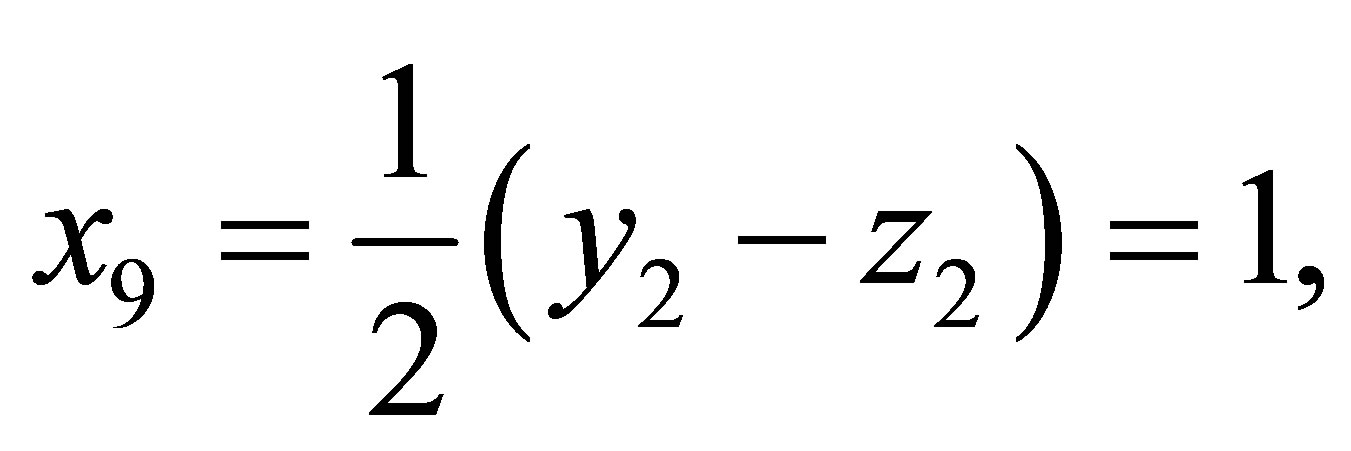

for i to m do X[i]:=1/2*(Y[i]+Z[i]);

od;

for i from m+1 to n do X[i]:=1/2*(Y[n+1-i]-Z[n+1-i]);

od;

xsoln:=simplify([seq(X[r],r=1..n)]):

else

Case(2): n is odd

Case(2): n is odd

Step 1 in CENTROSYMM-II Algorithm.

Step 1 in CENTROSYMM-II Algorithm.

B:= linalg[submatrix](R,m+2..n,1..m):

H := evalm( A + evalm(J&*B)):

y:=linalg[submatrix](R,1..m,m+1..m+1):

x:=linalg[submatrix](R,m+1..m+1,1..m):

P:=linalg[blockmatrix](2,2,[H,2*y,x,[2]]):op(P);

Q:=evalm( A - evalm(J&*B)):

Step 2 in CENTROSYMM-II Algorithm.

Step 2 in CENTROSYMM-II Algorithm.

detP:=linalg[det](P): detQ:=linalg[det](Q): detR:= detP *detQ:

if detR = 0 then ERROR("Singular Matrix !!!!") fi;

Step 3 in CENTROSYMM-II Algorithm.

Step 3 in CENTROSYMM-II Algorithm.

f1:=array(1..m+1): f2:=array(1..m):

for i to m do f1[i]:=f[i]+f[2*m+2-i];

f2[i]:=f[i]-f[2*m+2-i];

od;

f1[m+1]:=f[m+1];

Y:=array(1..m+1):

Y:= linalg[linsolve](P,f1): Z:=linalg[linsolve] (Q,f2):

Step 4 in CENTROSYMM-II Algorithm.

Step 4 in CENTROSYMM-II Algorithm.

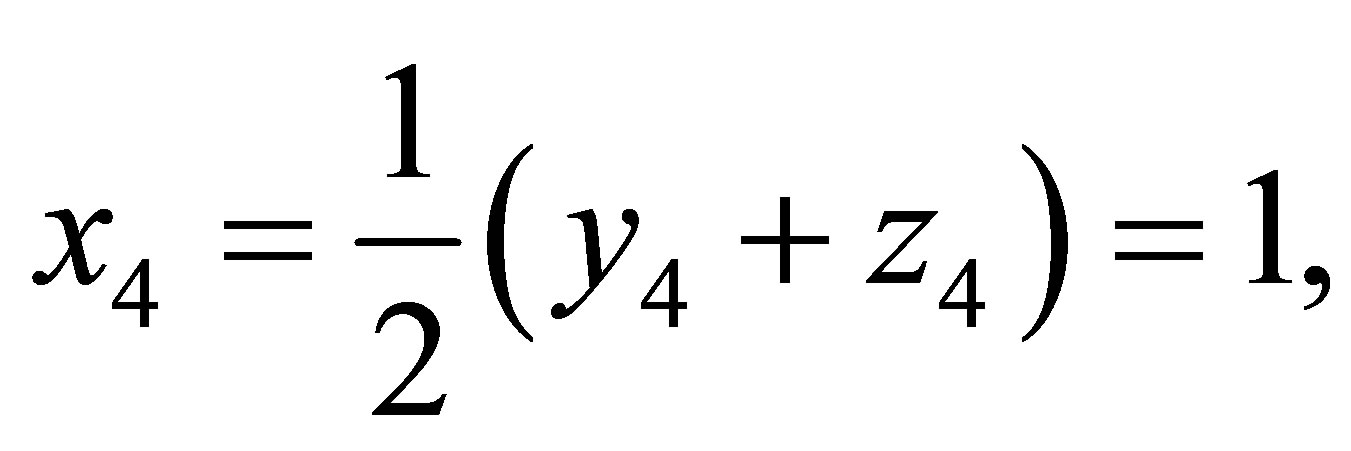

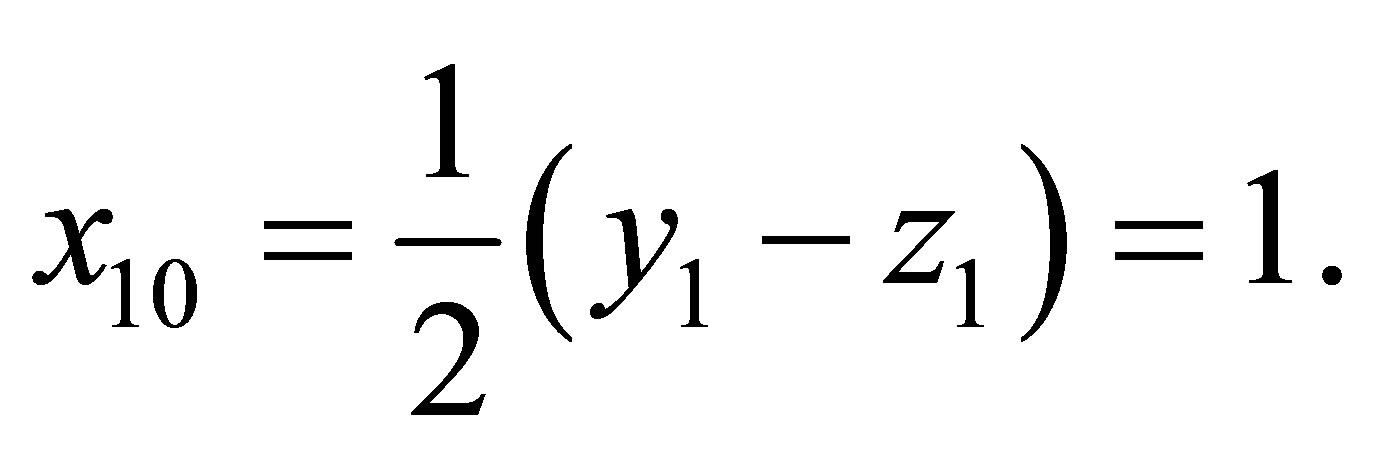

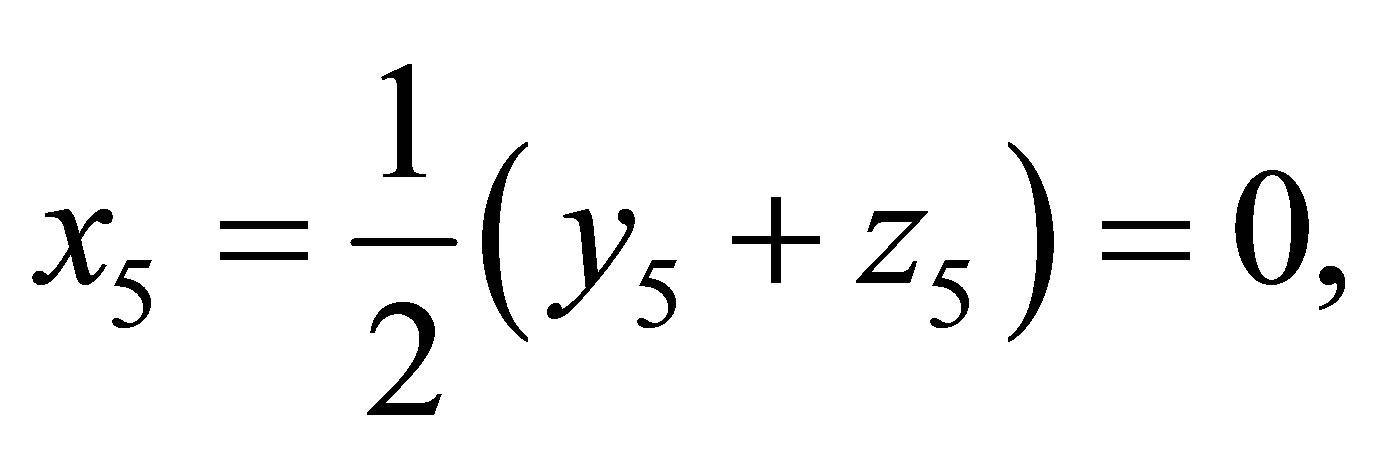

for i to m do X[i]:=1/2*(Y[i]+Z[i]);

od;

X[m+1]:=Y[m+1];

for i from m+2 to n do X[i]:=1/2*(Y[n+1-i]-Z[n+1-i]);

od;

xsoln:=simplify([seq(X[r],r=1..n)]);

fi:

end proc:

6. Illustrative Examples

All results in this section are obtained with the help of the MAPLE procedure centrosymm.

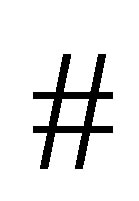

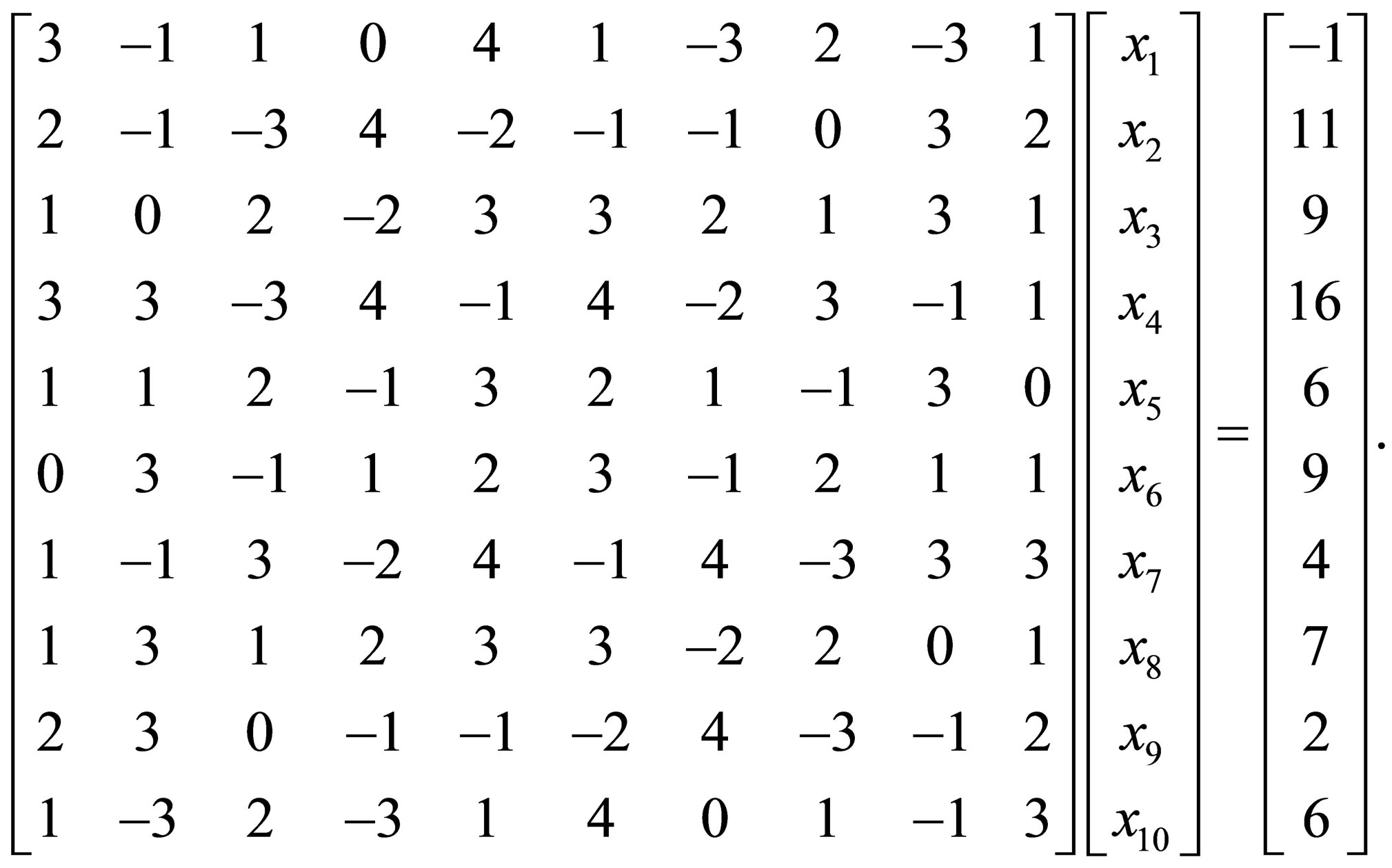

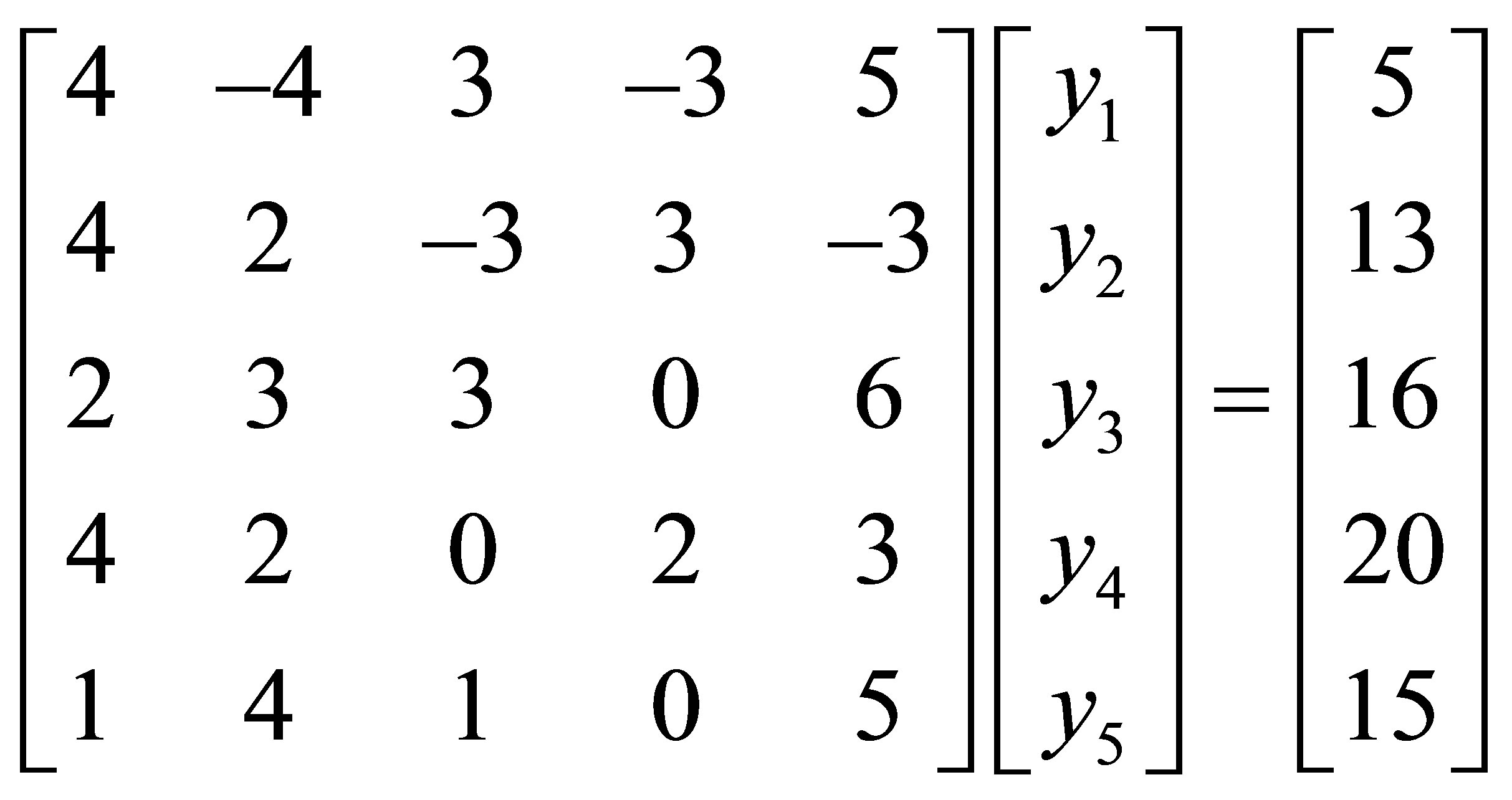

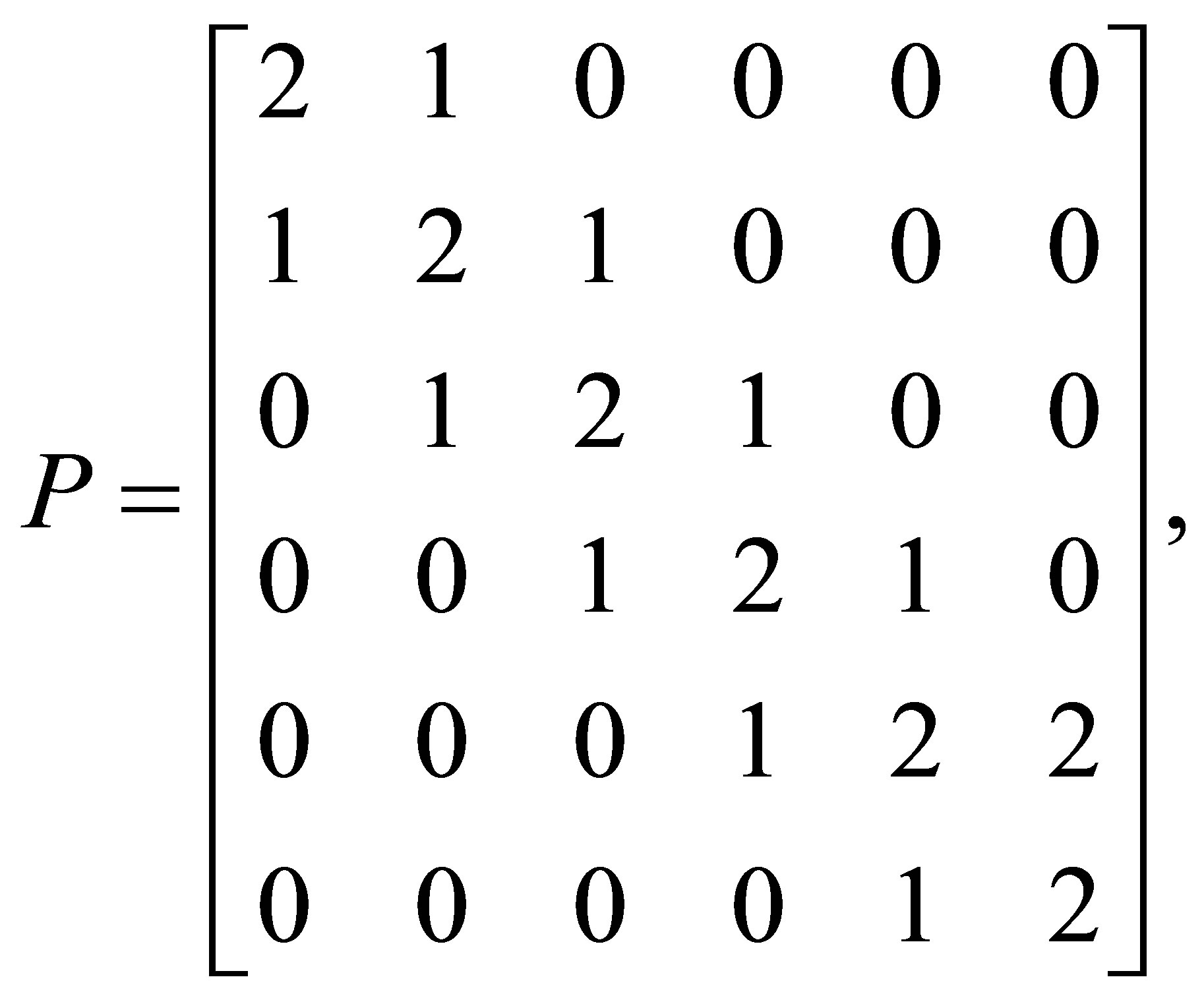

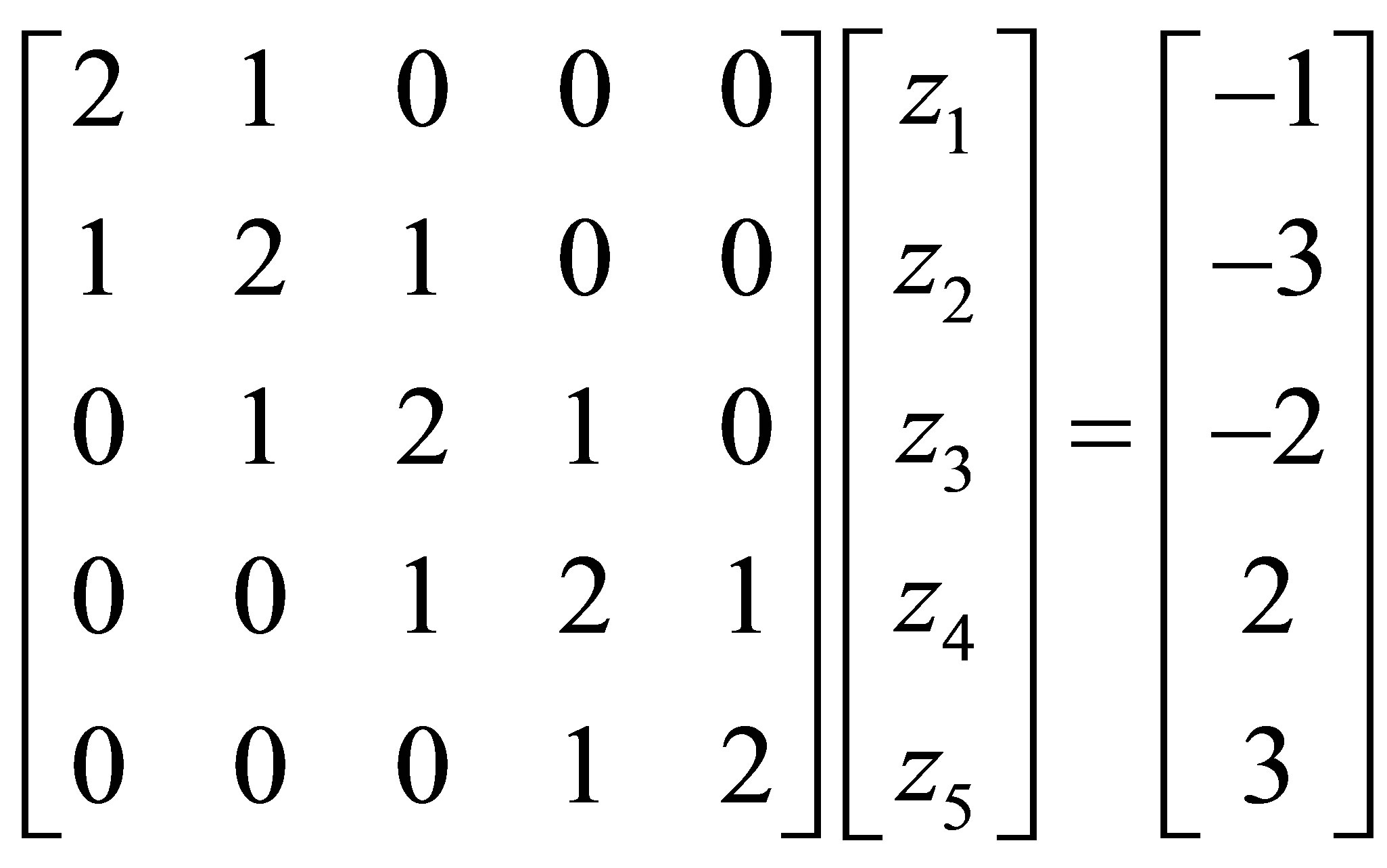

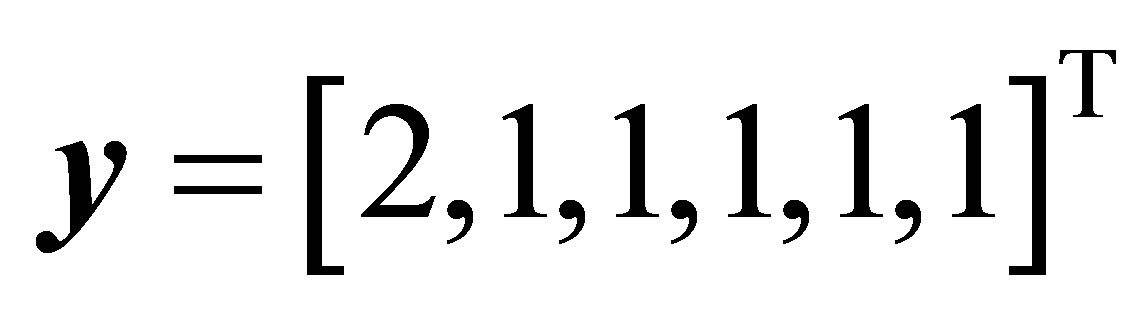

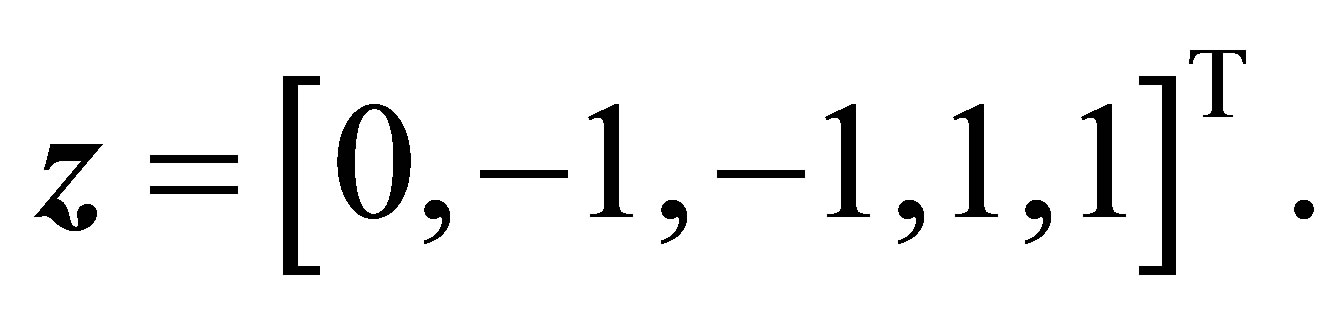

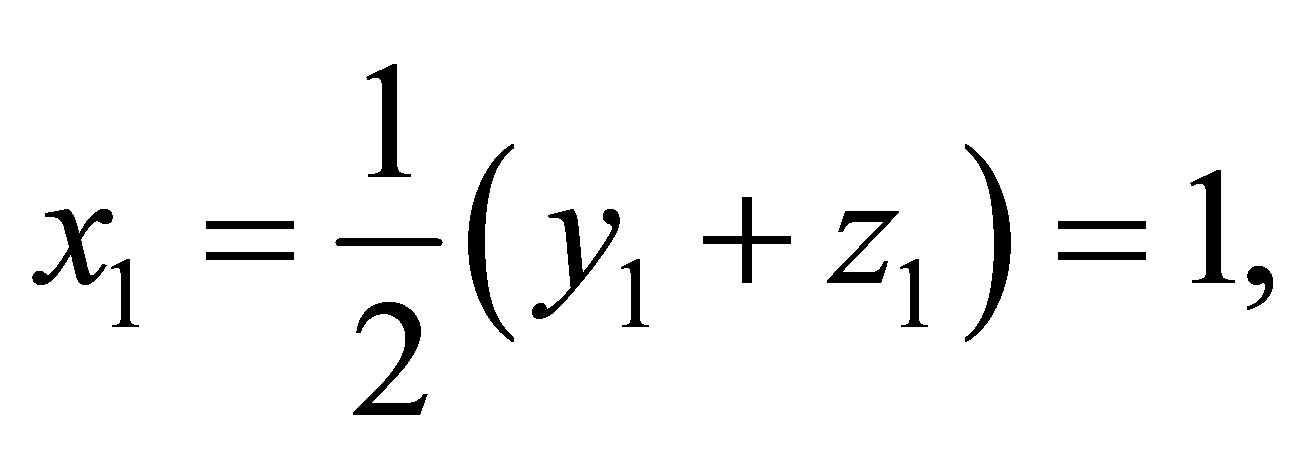

Example 6.1. Solve the centrosymmetric linear system

Solution:

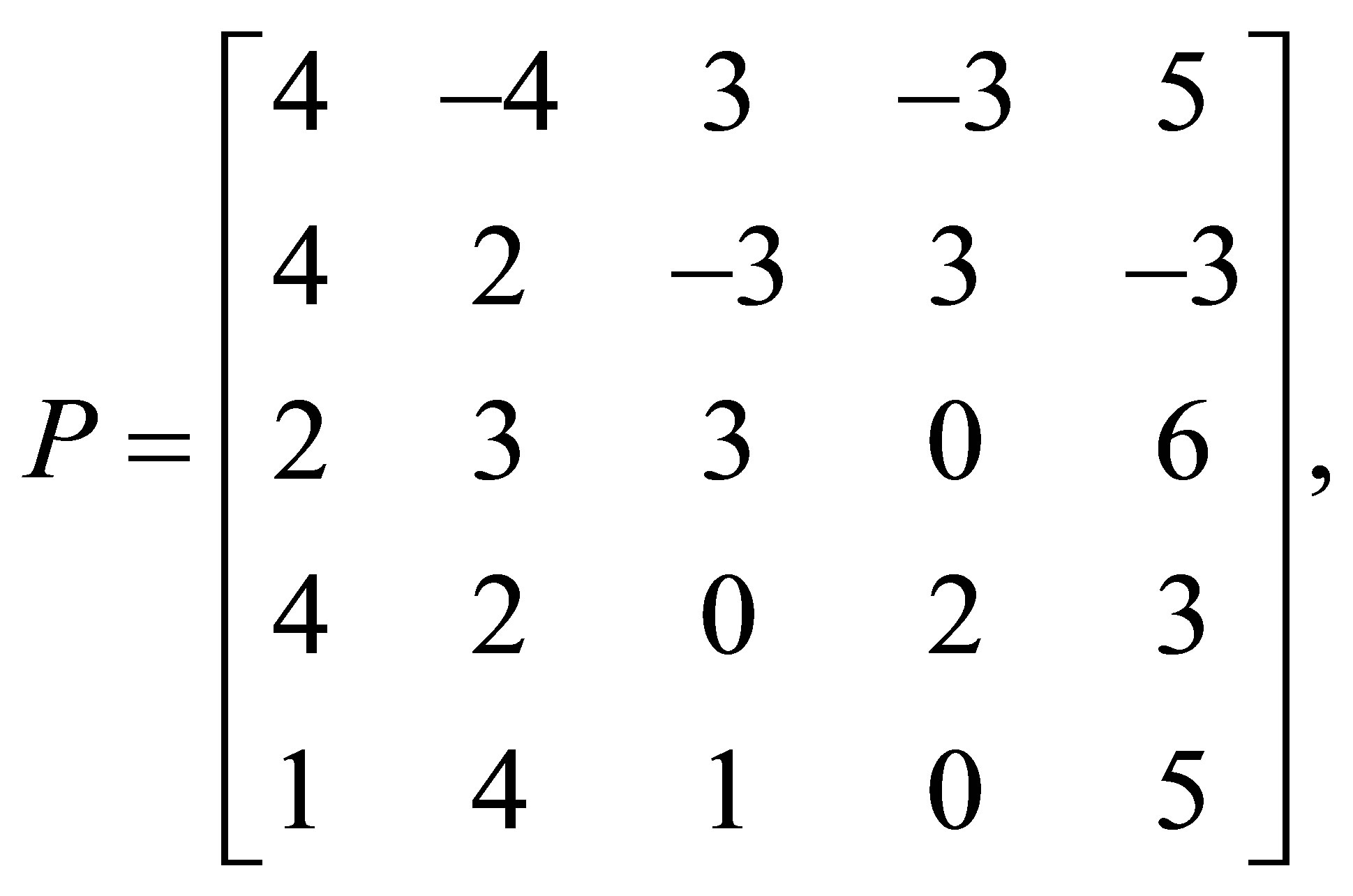

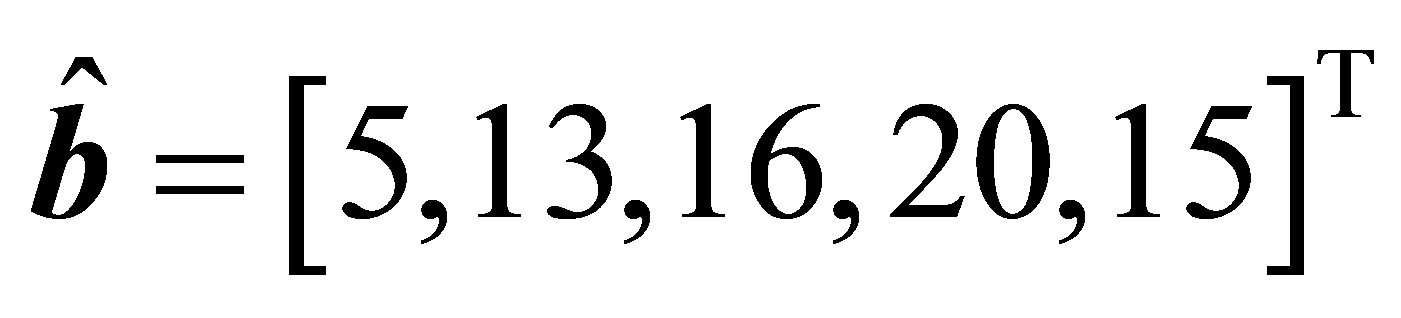

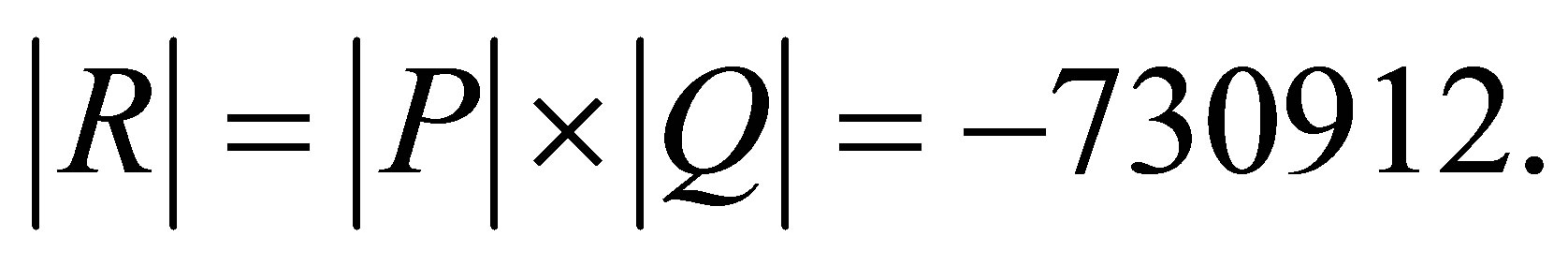

Here  and

and  Using the procedure centrosymm, we get the following results:

Using the procedure centrosymm, we get the following results:

and

and

The solutions of the systems

and

are  and

and

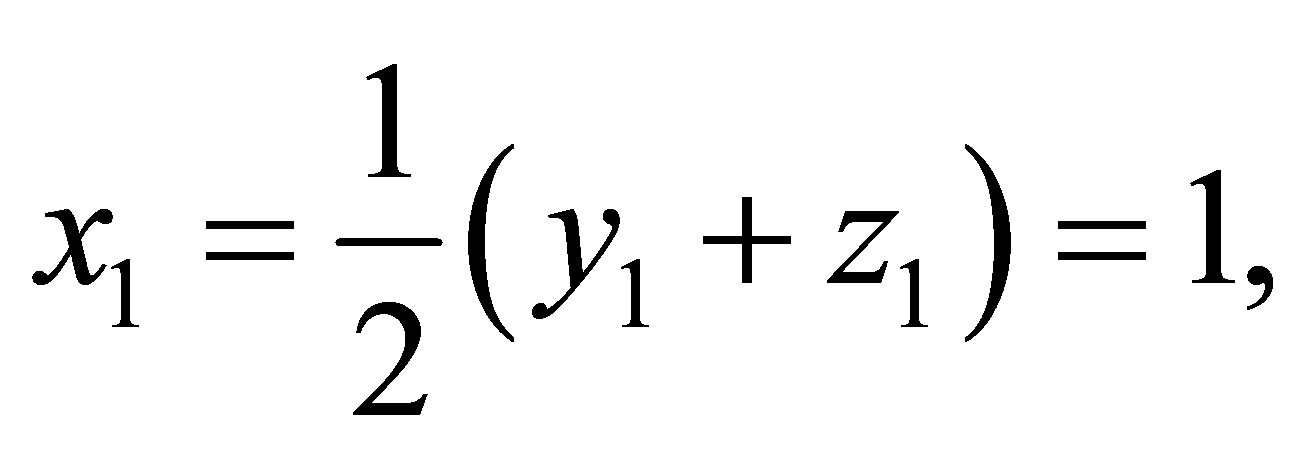

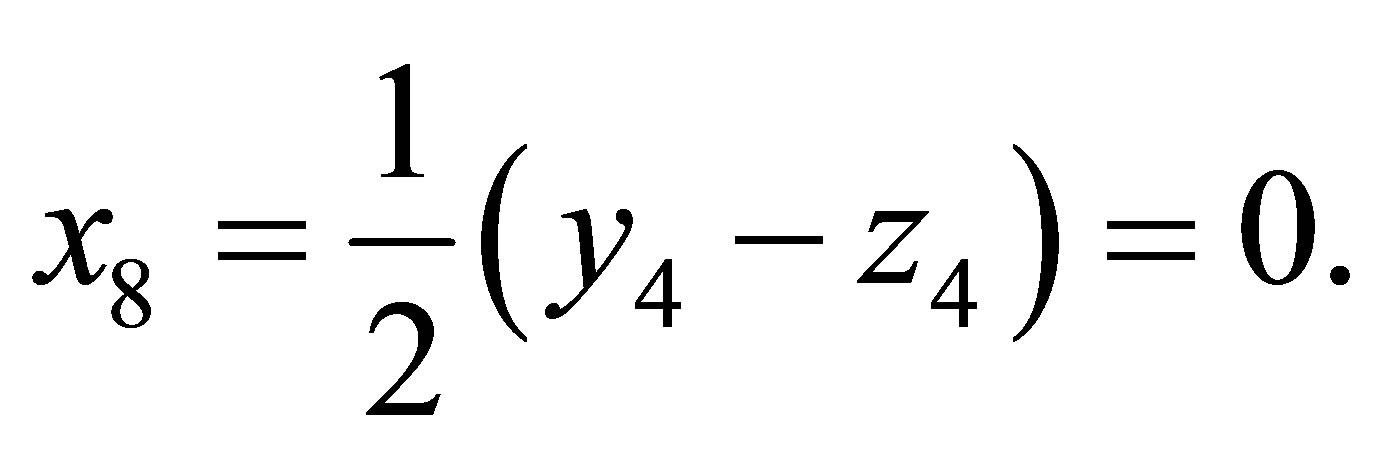

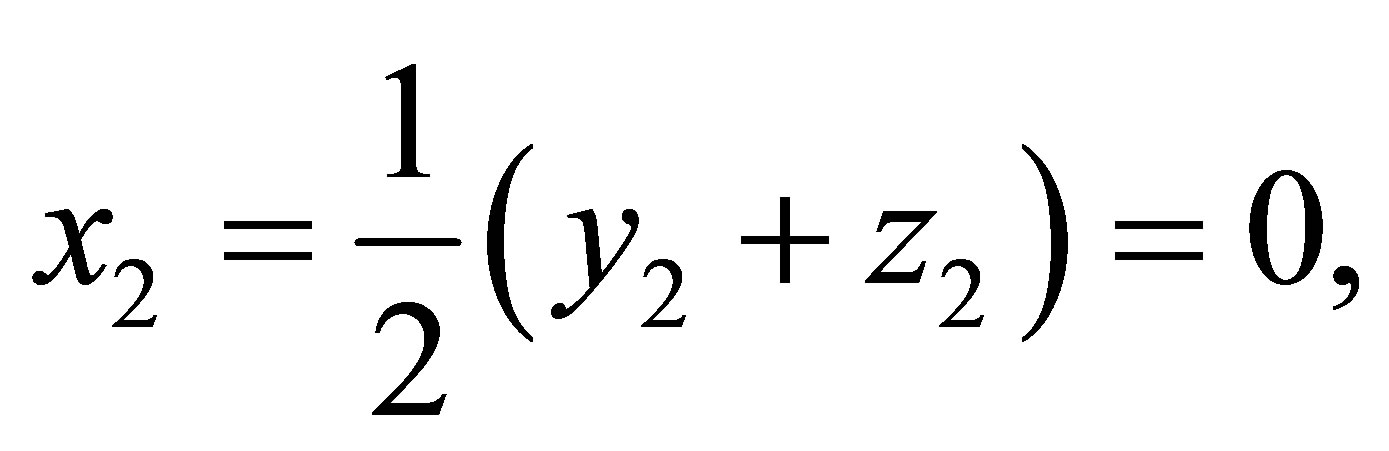

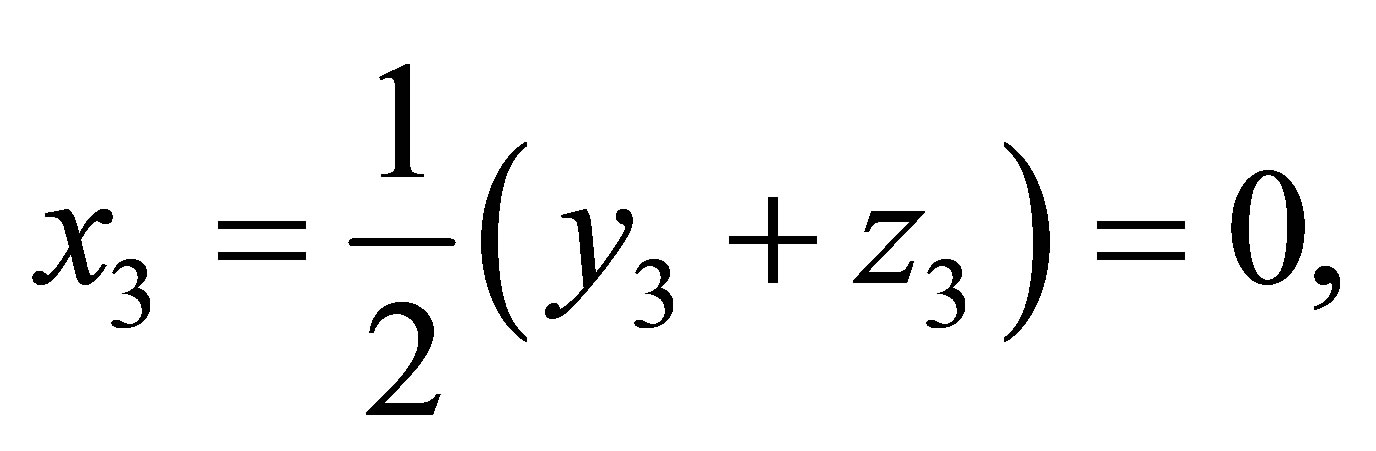

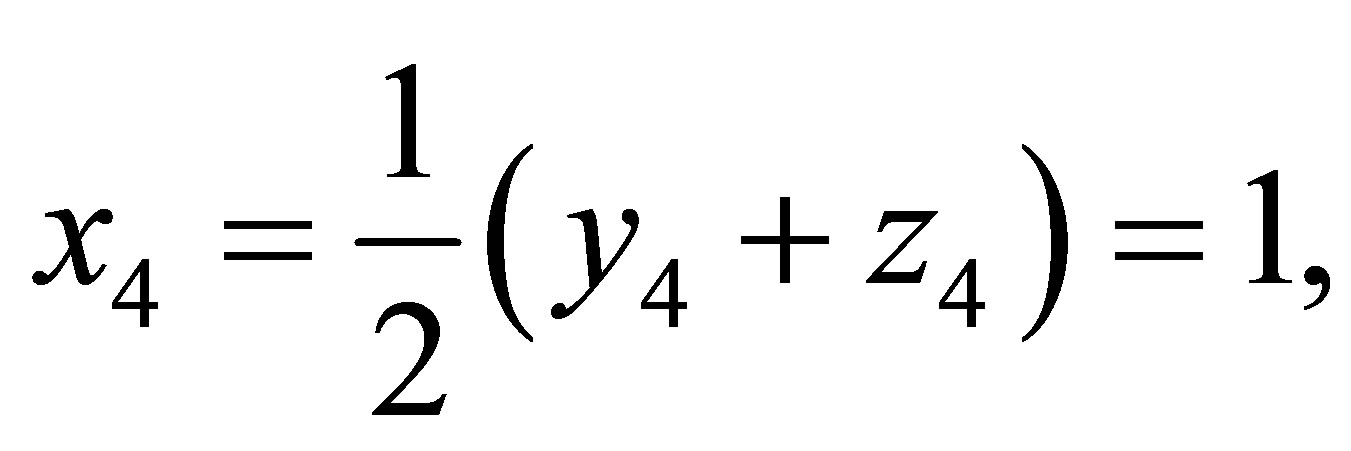

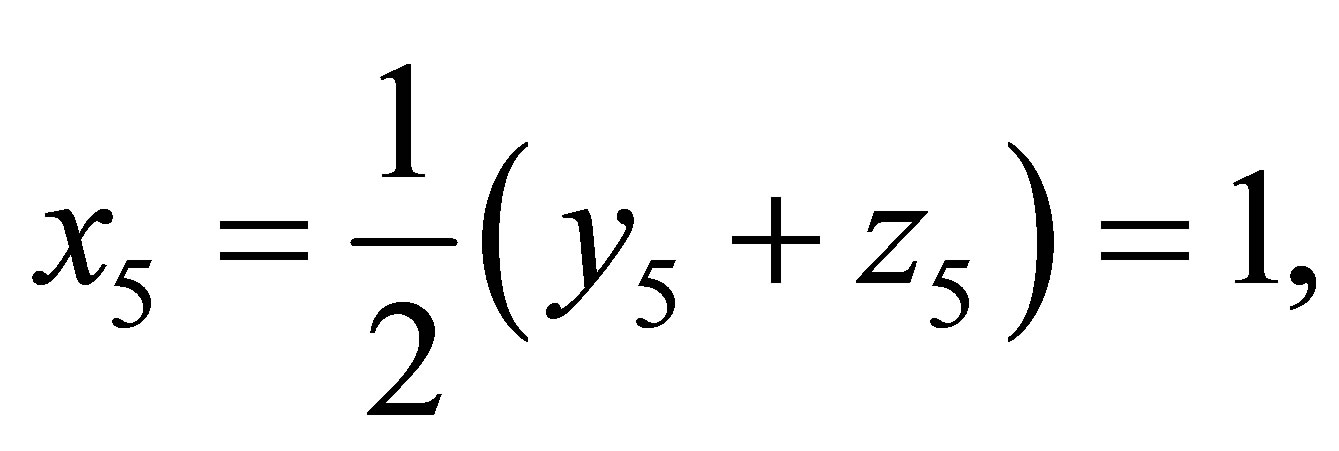

Therefore, we have

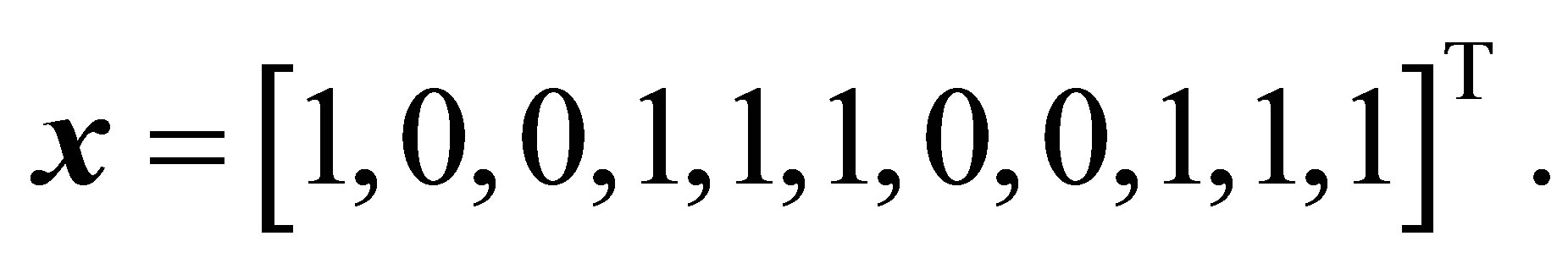

Hence the solution vector is

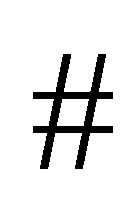

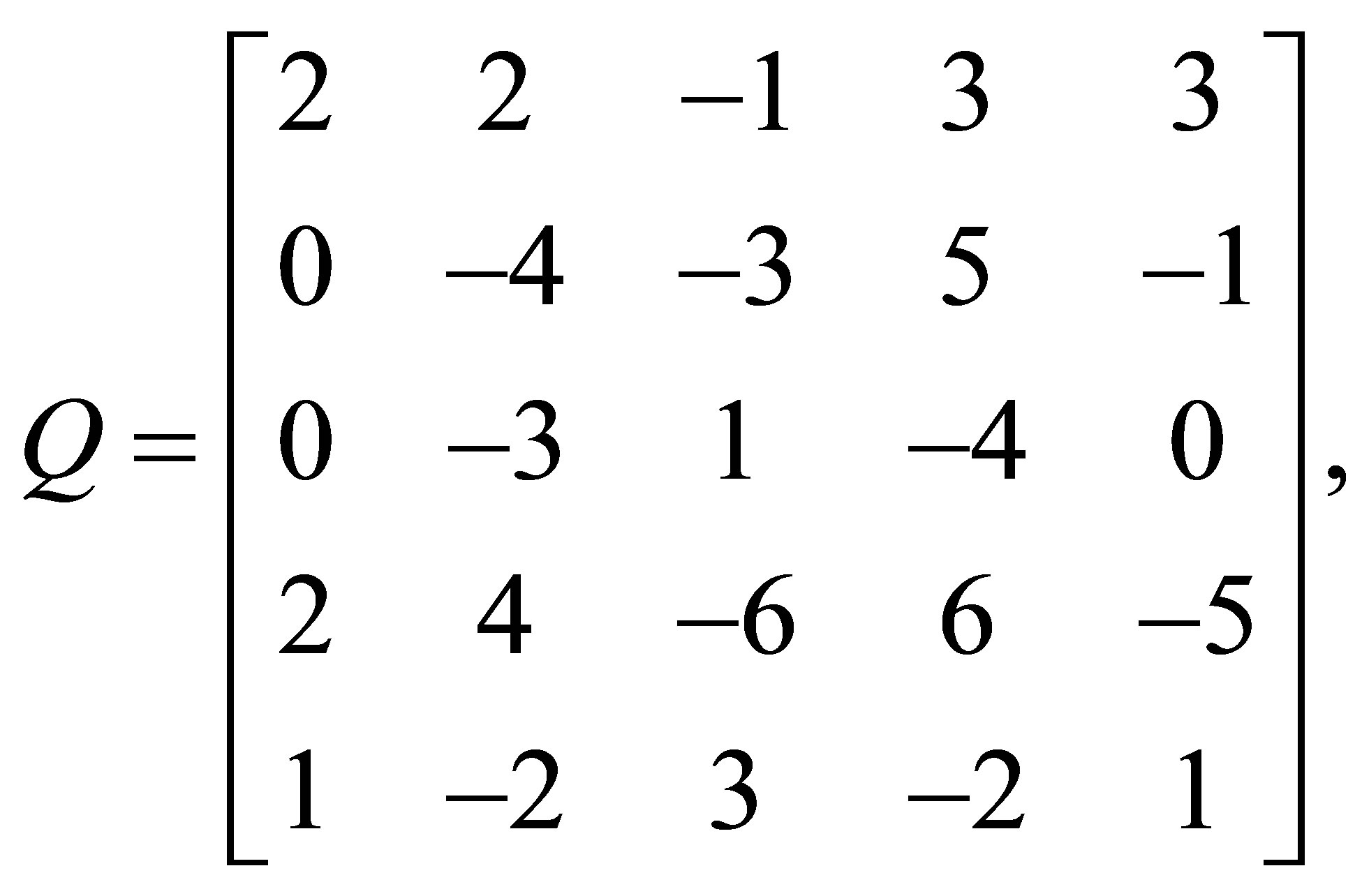

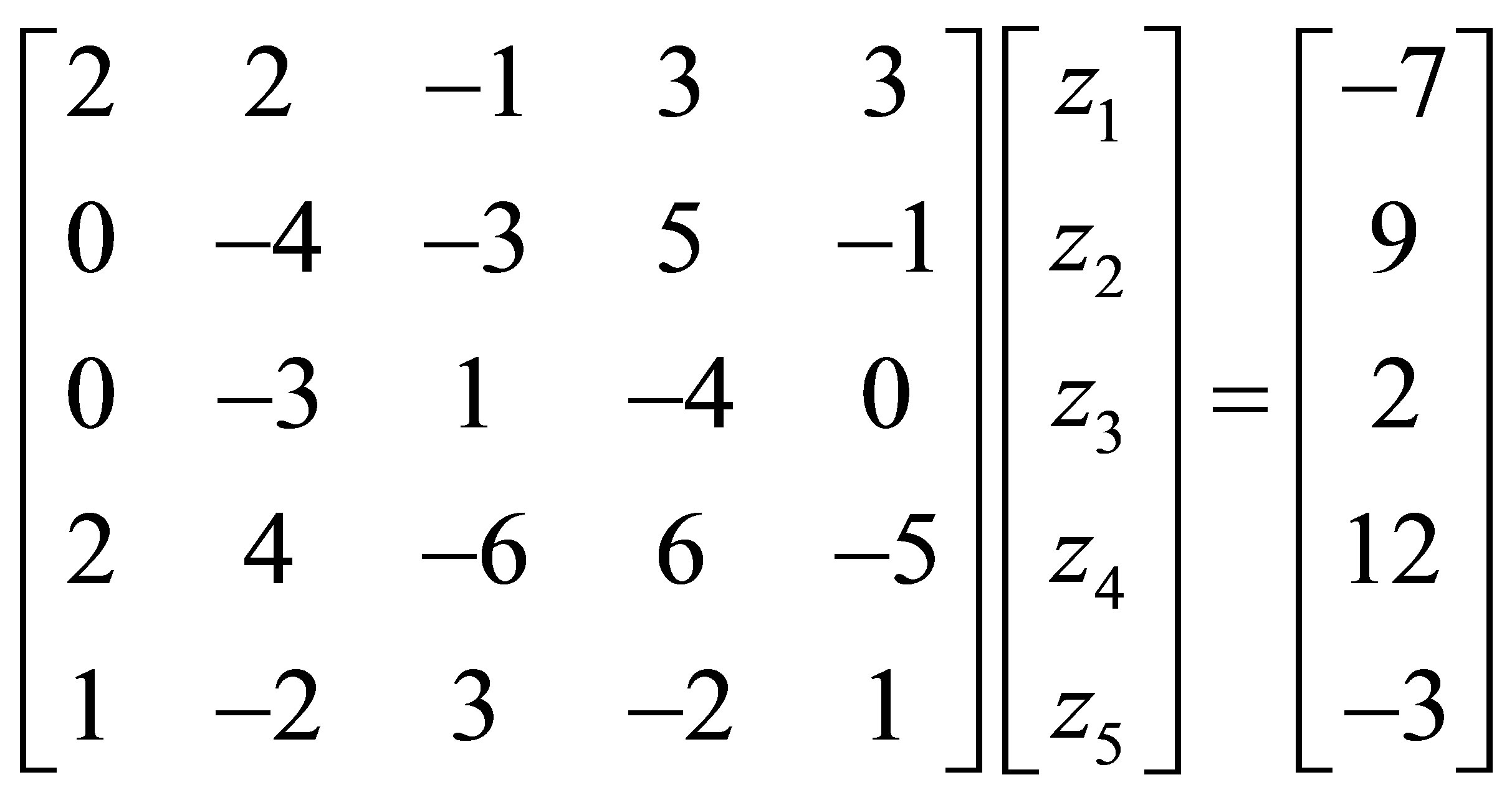

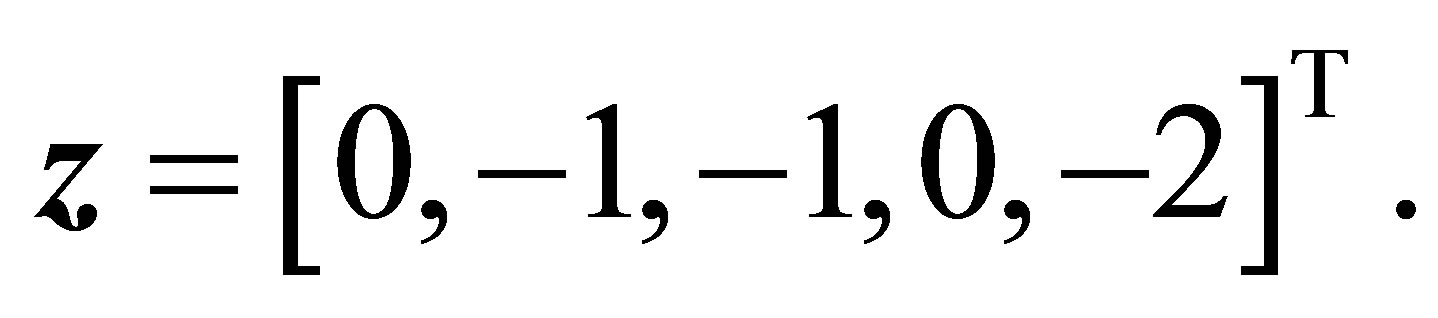

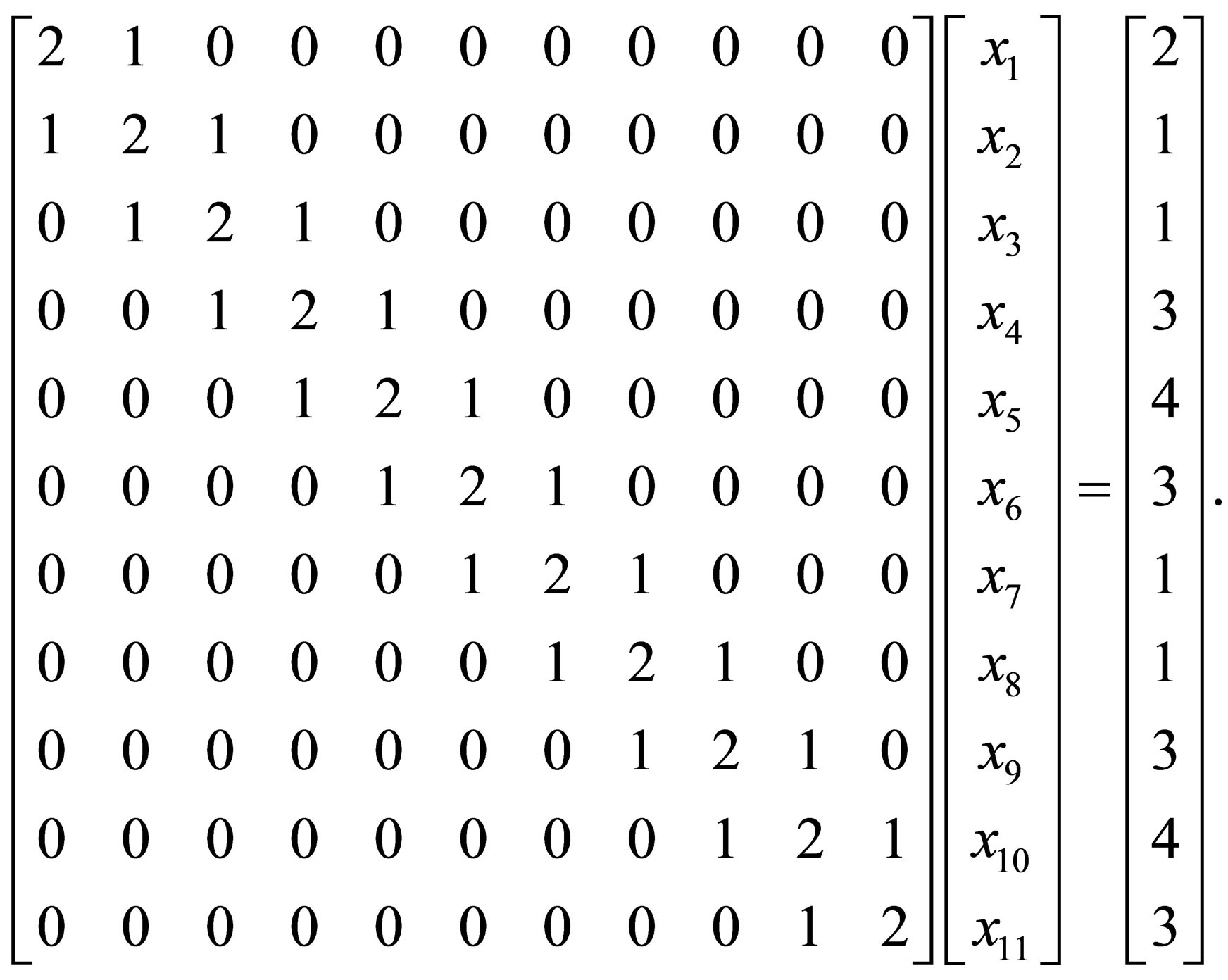

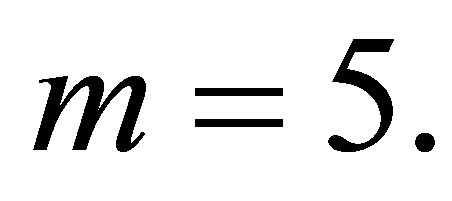

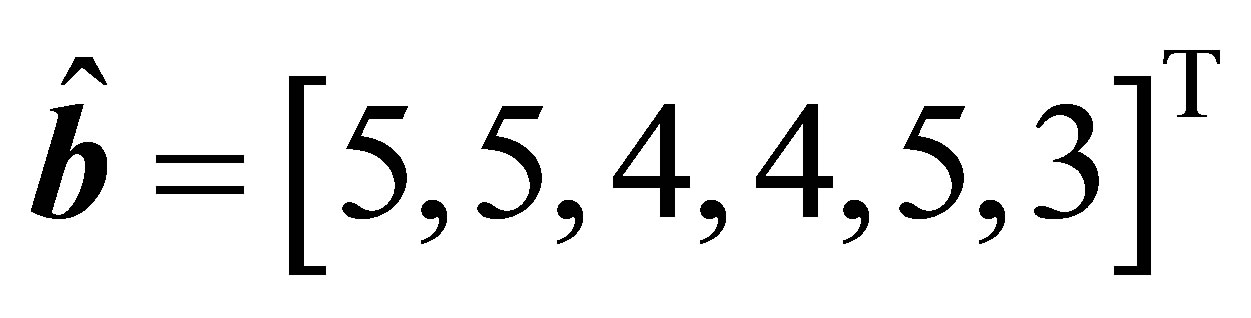

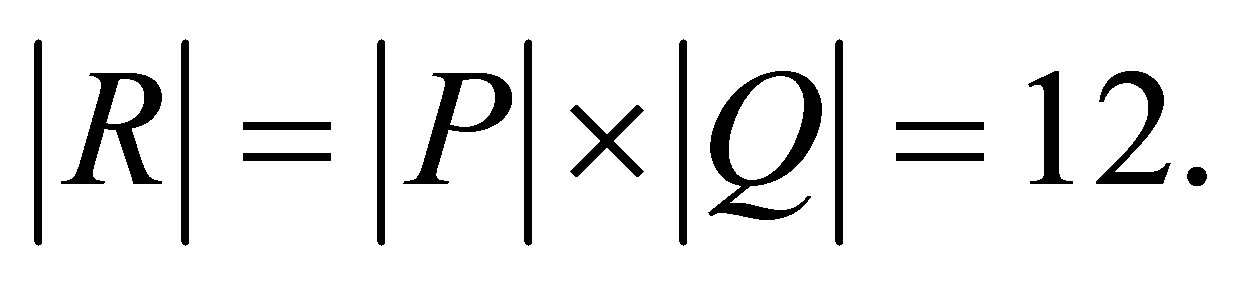

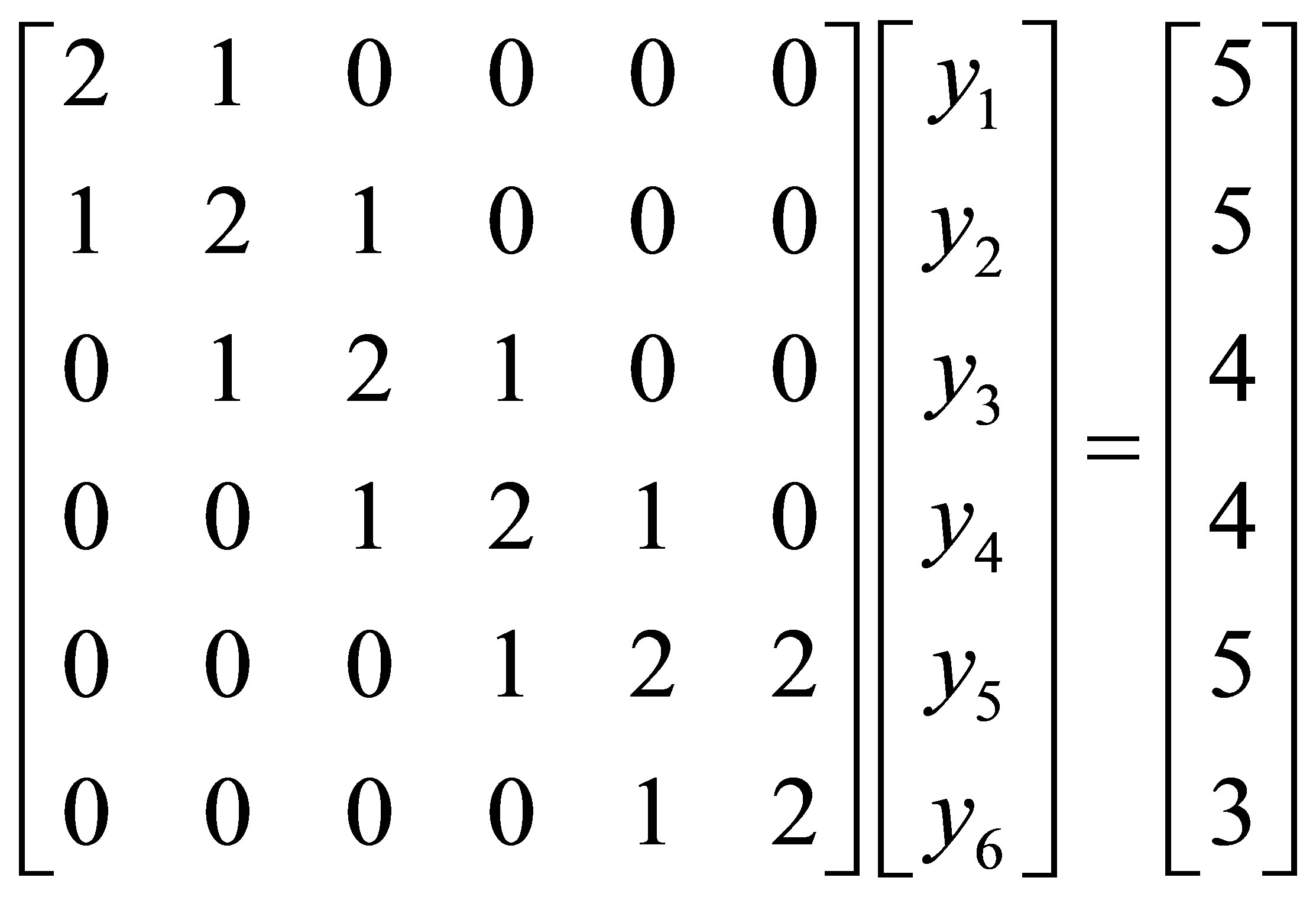

Example 6.2. Solve the centrosymmetric linear system

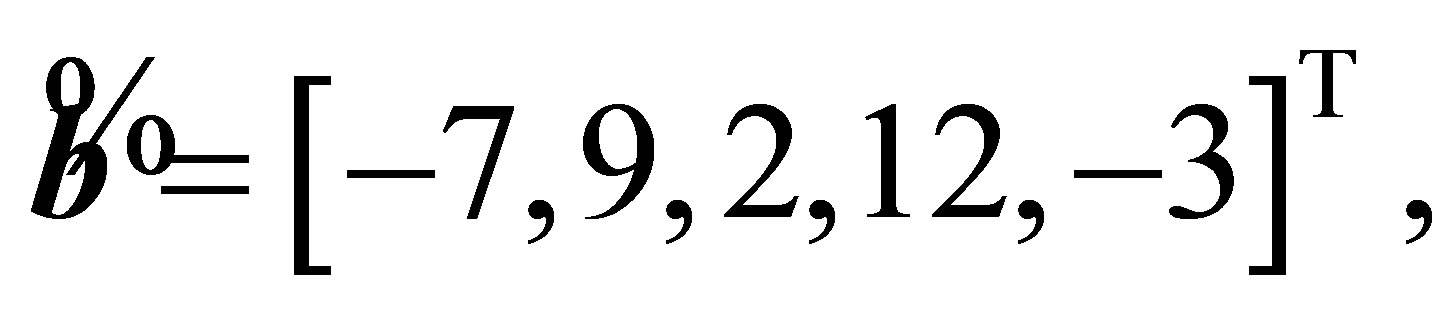

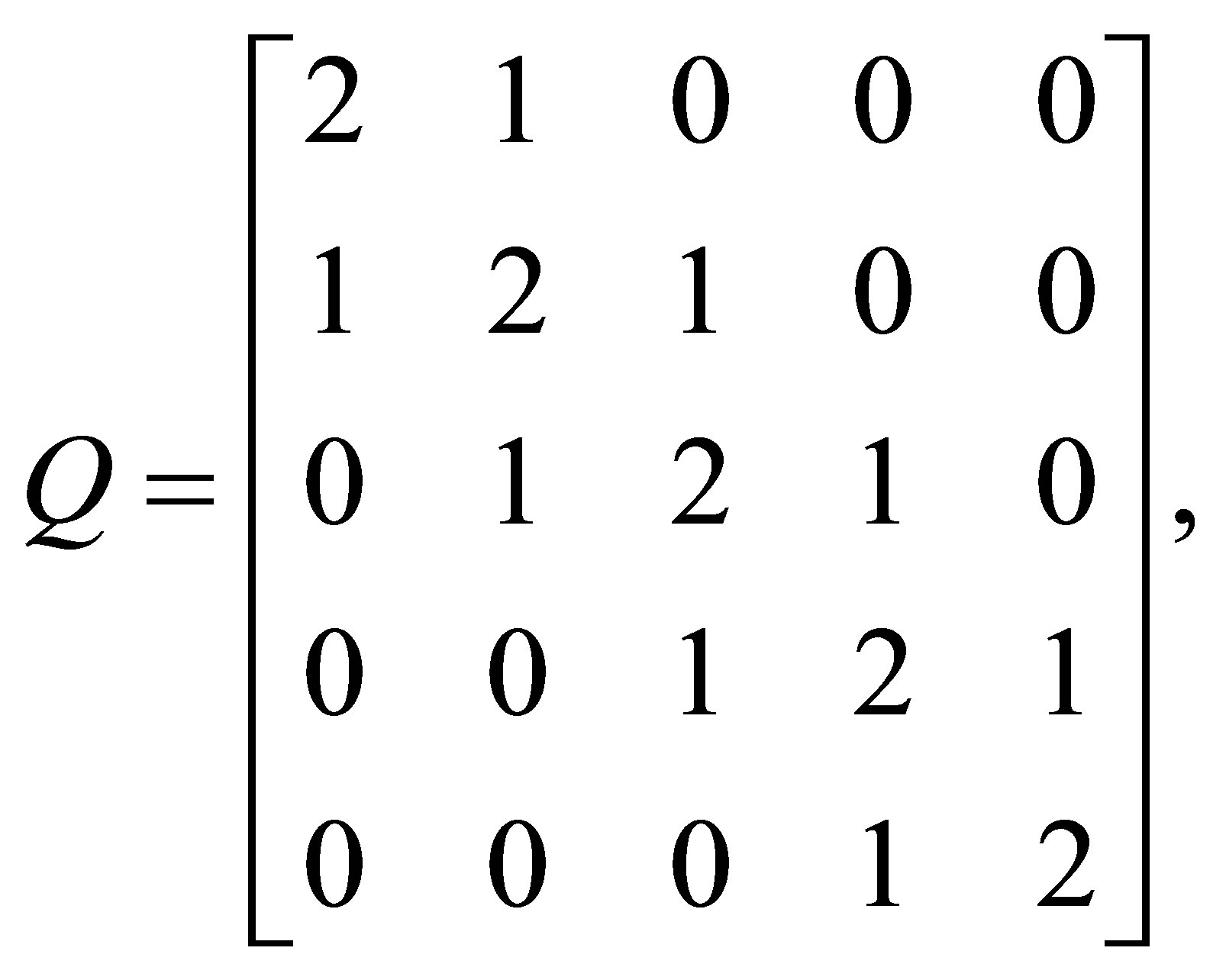

Solution: Here  and

and  Using the procedure centrosymm, we obtain the following results:

Using the procedure centrosymm, we obtain the following results:

and

and

The solutions of the systems

and

are  and

and

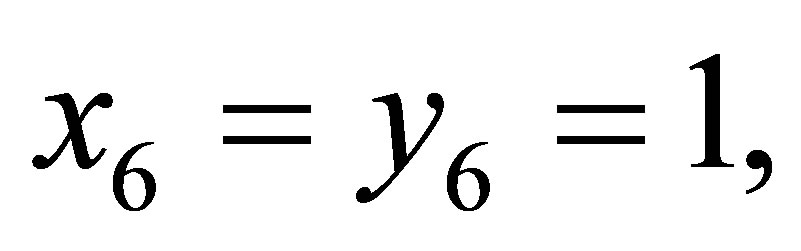

Therefore, we get

Hence the solution vector is

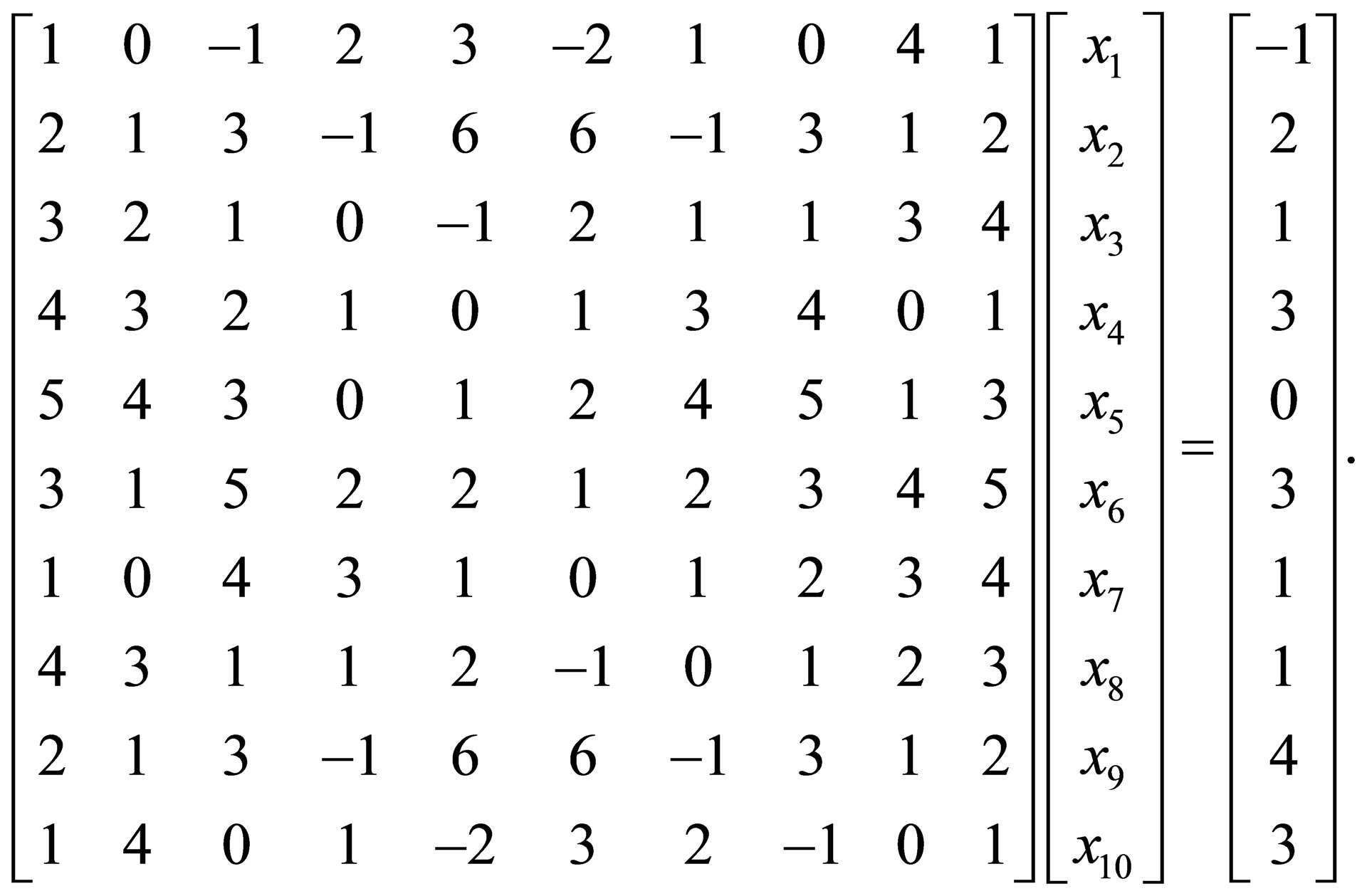

Example 6.3. Solve the centrosymmetric linear system

Solution:

Here  and

and  Using the procedure centrosymm, we obtain the following results:

Using the procedure centrosymm, we obtain the following results:

Error Singular Matrix !!!! This means that the given system has no solutions.

REFERENCES

- A. L. Andrew, “Centrosymmetric Matrices,” SIAM Review, Vol. 40, No. 3, 1998, pp. 697-699. http://dx.doi.org/10.1137/S0036144597328341

- A. Cantoni and P. Butler, “Eigenvalues and Eigenvectors of Symmetric Centrosymmetric Matrices,” Linear Algebra and Its Applications, Vol. 13, No. 3, 1976, pp. 275- 288.

- A. Cantoni and P. Butler, “Properties of the Eigenvectors of Persymmetric Matrices with Applications to Communication Theory,” IEEE Transactions on Communications, Vol. 24, No. 8, 1976, pp. 804-809. http://dx.doi.org/10.1109/TCOM.1976.1093391

- W. Chen, Y. Yu and X. Wang, “Reducing the Computational Requirement of Differential Quadrature Method,” Numerical Methods for Partial Differential Equations, Vol. 12, 1996, pp. 565-577.

- L. Datta and S. Morgera, “Some Results on Matrix Symmetries and a Pattern Recognition Application,” IEEE Transactions on Signal Processing, Vol. 34, No. 4, 1986, pp. 992-994.

- L. Datta and S. Morgera, “On the Reducibility of Centrosymmetric Matrices—Applications in Engineering Problems,” Circuits, Systems and Signal Processing, Vol. 8, No. 1, 1989, pp. 71-96.

- J. Delmas, “On Adaptive EVD Asymptotic Distribution of Centro-Symmetric Covariance Matrices,” IEEE Transactions on Signal Processing, Vol. 47, No. 5, 1999, pp. 1402-1406.

- I. J. Good, “The Inverse of a Centrosymmetric Matrix,” Technometrics, Vol. 12, No. 4, 1970, pp. 925-928.

- Z.-Y. Liu, “Some Properties of Centrosymmetric Matrices,” Applied Mathematics and Computation, Vol. 141, No. 2-3, 2003, pp. 297-306.

- R. B. Mattingly, “Even Order Regular Magic Squares Are Singular,” The American Mathematical Monthly, Vol. 107, No. 9, 2000, pp. 777-782.

- F. Stenger, “Matrices of Sinc Methods,” Journal of Computational and Applied Mathematics, Vol. 86, No. 1, 1997, pp. 297-310.

- J. Weaver, “Centrosymmetric (Cross-Symmetric) Matrices, Their Basic Properties, Eigenvalues, and Eigenvectors,” The American Mathematical Monthly, Vol. 92, No. 10, 1985, pp. 711-717.

- A. C. Aitken, “Determinants and Matrices,” Oliver and Boyd, Edinburgh, 1956.

- H.-T. Gao, C.-H. You and Y. Yang, “An Iterative Method for Generalized Centro-Symmetric Solution of Matrix Equation

” 2008. http://www.paper.edu.cn/en_releasepaper/content/21924.

” 2008. http://www.paper.edu.cn/en_releasepaper/content/21924. - A. Graovac, O. Ori, M. Faghani and A. R. Ashrafi, “Distance Property of Fullerenes,” Iranian Journal of Mathematical Chemistry, Vol. 2, No. 1, 2011, pp. 99-107.

- I. T. Abu-Jeib, “Algorithms for Centrosymmetric and Skew-Centrosymmetric Matrices,” Missouri Journal of Mathematical Sciences, Vol. 18, No. 1, 2006, pp. 46-53.

- O. Krafft and M. Schaefer, “Centrogonal Matrices,” Linear Algebra and its Applications, Vol. 306, No., 2000, pp. 145-154.

- Z.-Y. Liu, “Some Properties of Centrosymmetric Matrices and Its Applications,” Numerical Mathematics: A Journal of Chinese Universities, Vol. 14, No. 2, 2005, pp. 136-148.

- Z. Tian and C. Gu, “The Iterative Methods for Centrosymmetric Matrices,” Applied Mathematics and Computation, Vol. 187, No. 2, 2007, pp. 902-911.

- R. Vein and P. Dale, “Determinants and Their Applications in Mathematical Physics,” Springer, New York, 1999.

- A. M. Yasuda, “Some Properties of Commuting and AntiCommuting m-Involutions,” Acta Mathematica Scientia, Series B (English Edition), Vol. 32, No. 2, 2012, pp. 631-644.

- M. E. A. El-Mikkawy, “A Generalized Symbolic Thomas algoriThm,” Applied Mathematics, Vol. 3, No. 4, 2012, pp. 342-345. http://dx.doi.org/10.4236/am.2012.34052

- T. Sugimoto, “On an Inverse Formula of a Tridiagonal Matrix,” Operators and Matrices, Vol. 6, No. 3, 2012, pp. 465-480. http://dx.doi.org/10.7153/oam-06-30

- M. E. A. El-Mikkawy, “A Note on a Three-Term Recurrence for a Tridiagonal Matrix,” Journal of Applied Mathematics and Computing, Vol. 139, No. 2-3, 2003, pp. 503-511.

- M. E. A. El-Mikkawy, “An Algorithm for Solving Tridiagonal Systems,” Journal of Institute of Mathematics and Computer Science, Vol. 4, No. 2, 1991, pp. 205-210.

- M. E. A. El-Mikkawy, “On the Inverse of a General Tridiagonal Matrix,” Applied Mathematics and Computation, Vol. 150, No. 3, 2004, pp. 669-679.

- M. E. A. El-Mikkawy, “A New Computational Algorithm for Solving Periodic Tri-Diagonal Linear Systems,” Applied Mathematics and Computation, Vol. 161, No. 2, 2005, pp. 691-696.

- M. E. A. El-Mikkawy and A. Karawia, “Inversion of General Tridiagonal Matrices,” Applied Mathematics Letters, Vol. 19, No. 8, 2006, pp. 712-720.

- M. E. A. El-Mikkawy and E.-D. Rahmo, “A New Recursive Algorithm for Inverting General Tridiagonal and Anti-Tridiagonal Matrices,” Applied Mathematics and Computation, Vol. 204, No. 1, 2008, pp. 368-372.

- R. L. Burden and J. D. Faires, “Numerical Analysis,” 7th ed., Books & Cole Publishing, Pacific Grove, 2001.

- M. E. A. El-Mikkawy, “A Fast Algorithm for Evaluating nth Order Tri-Diagonal Determinants,” Journal of Computational and Applied Mathematics, Vol. 166, No. 2, 2004, pp. 581-584.

NOTES

*Corresponding author.