Journal of Electromagnetic Analysis and Applications

Vol.06 No.14(2014), Article ID:52439,13 pages

10.4236/jemaa.2014.614044

Semi-Analytical Solution of the 1D Helmholtz Equation, Obtained from Inversion of Symmetric Tridiagonal Matrix

Serigne Bira Gueye

Département de Physique, Faculté des Sciences et Techniques, Université Cheikh Anta Diop, Dakar-Fann, Sénégal

Email: sbiragy@gmail.com

Copyright © 2014 by author and Scientific Research Publishing Inc.

This work is licensed under the Creative Commons Attribution International License (CC BY).

http://creativecommons.org/licenses/by/4.0/

Received 2 October 2014; revised 1 November 2014; accepted 25 November 2014

ABSTRACT

A semi-analytical solution of the 1D Helmholtz equation is given. It is obtained from a rigorous discussion of the regularity and the inversion of the symmetric tridiagonal matrix. Applications are then given, showing very good accuracy. This work also provides the analytical inverse of the skew-symmetric tridiagonal matrix.

Keywords:

Helmholtz Equation, Tridiagonal Matrix, Linear Homogeneous Recurrence Relation

1. Introduction

We focus on the inverse of the matrix (M) defined in Equation (1) below. We are interested in applications of this matrix because it allows solving many important differential equations in science and technology, in particular in mathematics, physics, engineering, chemistry, and biology. The formula for the inverse of (M) was determined in [1] . In this study, a different approach is presented, with a rigorous and complete discussion of the regularity of the matrix. In addition, the inverse of the antisymmetric tridiagonal matrix is determined analytically.

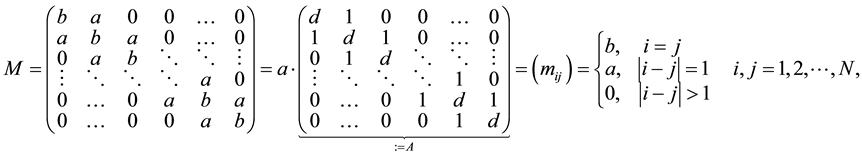

(1)

where  ,  , and . In the case where  is zero, the inversion of the matrix (M) presents no difficulty.

So we will focus on the matrix (A) and we will determine the exact form of its inverse, (B). We proceed as follows: first, we determine the determinant of (A) and give a very detailed discussion of its invertibility. Therefore, we formulate its inverse analytically and exactly. Then, we solve the Helmholtz equation, with the finite difference method, using the obtained inverse matrix. Additionally, we treat the skew-symmetric tridiagonal matrix and give the formula of its inverse.

2. Determinant of the Matrix (A)

2.1. Characteristic Equation and Discriminant

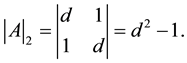

The calculation of the determinant of (A) and the discussion of the existence of its inverse constitute a very important part of this work. The determinant of (A) depends on N and is denoted . We define  and . We also define  as follows:

(2)

By expanding the determinant  along the first row, one finds that it follows a second-order linear homogeneous recurrence relation with constant coefficients [2] [3] :

(3)

The term  is the determinant of the submatrix of (A) obtained by eliminating its first row and its first column.  denotes the determinant of the submatrix of order N − 2, obtained by deleting the first two rows and first two columns of (A).

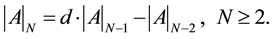

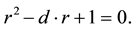
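The recurrence described above can be checked numerically. The following Python sketch assumes that (A) has a constant diagonal entry `d` and unit off-diagonal entries (the sign convention of the original matrix is an assumption here), so that the recurrence reads det_n = d·det_{n−1} − det_{n−2}, with det_0 = 1 and det_1 = d. The function names are illustrative only.

```python
def tridiag_det_recurrence(d, n):
    """Determinant of the n x n tridiagonal matrix with diagonal d and
    off-diagonals 1 (assumed form), via det_k = d*det_{k-1} - det_{k-2}."""
    prev, curr = 1.0, float(d)  # det_0 = 1, det_1 = d
    if n == 0:
        return prev
    for _ in range(n - 1):
        prev, curr = curr, d * curr - prev
    return curr


def det_by_elimination(d, n):
    """Reference determinant from Gaussian elimination with partial pivoting."""
    a = [[float(d) if i == j else (1.0 if abs(i - j) == 1 else 0.0)
          for j in range(n)] for i in range(n)]
    det = 1.0
    for c in range(n):
        # pick the largest pivot in column c
        p = max(range(c, n), key=lambda r: abs(a[r][c]))
        if a[p][c] == 0.0:
            return 0.0
        if p != c:
            a[c], a[p] = a[p], a[c]
            det = -det  # a row swap flips the sign
        det *= a[c][c]
        for r in range(c + 1, n):
            factor = a[r][c] / a[c][c]
            for k in range(c, n):
                a[r][k] -= factor * a[c][k]
    return det
```

The recurrence costs O(N) operations, whereas the elimination used here as a reference costs O(N³) on the dense matrix.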

The characteristic equation of the recurrence relation, given by the Equation (3), is:

(4)

Solving Equation (4) yields the expression of

The solutions of the characteristic equation are determined by the sign of the discriminant

2.2. Case

The discriminant is zero for two values of

where A' and B' are two constants which are determined taking into account the first two terms of the sequence

The constant B' is determined in the following manner:

So the determinant of the matrix (A), in the case where

The Equation (8) is the exact formula of

2.3. Case

It corresponds to the case where

The general expression of the determinant is for this case:

The constants A' and B' can be determined, considering the values of

It holds:

Thus, the determinant of (A) is obtained:

This equation is equivalent to:

The determinant of (A) is different from zero, for the considered case

Inspecting the determinant for this case suggests another formulation of Equation (14): the determinant is a polynomial and can be expanded. It holds:

From the analysis of these polynomial expressions, one can demonstrate using mathematical induction that the determinant given by the Equation (14) can be formulated as follows:

where [N/2] is equivalent to (N div 2). The formula in Equation (15) is given in [1] . However, we prefer the formulation of Equation (14), for two reasons.

The first is that we are looking for a matrix inverse, so it is important to know where the determinant vanishes. With Equation (15), one has to find the zeros of the polynomial to decide whether the matrix is invertible, whereas Equation (14) shows clearly that the determinant does not vanish and thus that the matrix (A) is regular.

The second reason to prefer Equation (14) to Equation (15) is that Equation (14) is more convenient to program: Equation (15) needs loops for the sum and recursions for the binomial coefficients.
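The two formulations can be compared in a short Python sketch. It assumes, as an illustration, a matrix with constant diagonal `d` and unit off-diagonals with |d| > 2; the sign convention and the exact notation of Equations (14) and (15) may differ from what is written here. Under this assumption the closed form is sinh((N+1)λ)/sinh(λ) with |d| = 2 cosh(λ), and the polynomial form is a sum over k = 0..[N/2] with binomial coefficients.

```python
import math


def det_closed_form(d, n):
    """Hyperbolic closed form, valid for |d| > 2 (assumed matrix: diagonal d,
    off-diagonals 1): sign(d)**n * sinh((n+1)*lam) / sinh(lam)."""
    if abs(d) <= 2:
        raise ValueError("this closed form assumes |d| > 2")
    lam = math.acosh(abs(d) / 2.0)  # |d| = 2*cosh(lam), lam > 0
    sign = 1.0 if d > 0 else -1.0
    return sign ** n * math.sinh((n + 1) * lam) / math.sinh(lam)


def det_polynomial(d, n):
    """Polynomial form: sum over k of (-1)**k * C(n-k, k) * d**(n-2k)."""
    return sum((-1) ** k * math.comb(n - k, k) * d ** (n - 2 * k)
               for k in range(n // 2 + 1))
```

Since λ > 0 whenever |d| > 2, both sinh factors are strictly positive, which makes the non-vanishing of the determinant visible directly in the closed form; the polynomial form gives the same value but hides this property.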

2.4. Case

It corresponds to the case where

These solutions

with

Then, the general expression of

The constants A' and B' are determined considering

One obtains the following relation that gives the determinant of (A), in the case where

Regularity of the Matrix (A) for

In this case, the regularity of (A) has to be studied. One solves:

This Equation (21) admits N solutions

For these values of

In the treated case

clude sub-cases where

Special Case d = 0

This corresponds to

The Equation (24) is a very interesting result. First, it gives the exact formula of determinant for the case where

This closes the discussion of the determinant of a symmetric tridiagonal matrix similar to (M). All cases were studied in detail. In each case, the exact value of the determinant of (A) is given and its regularity is discussed thoroughly.
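The singular cases can be illustrated with a small Python sketch. It assumes a matrix with constant diagonal `d` and unit off-diagonals, with |d| < 2 and d = 2 cos(θ); under this assumption the determinant is sin((N+1)θ)/sin(θ), which vanishes for the N values d_k = 2 cos(kπ/(N+1)), k = 1..N. The parametrization and the function names are assumptions made for this example and may differ from the notation of the text.

```python
import math


def det_recurrence(d, n):
    """det_k = d*det_{k-1} - det_{k-2}, det_0 = 1, det_1 = d (assumed matrix:
    diagonal d, off-diagonals 1)."""
    prev, curr = 1.0, float(d)
    if n == 0:
        return prev
    for _ in range(n - 1):
        prev, curr = curr, d * curr - prev
    return curr


def det_trig(d, n):
    """Trigonometric closed form for |d| < 2, with d = 2*cos(theta)."""
    if abs(d) >= 2:
        raise ValueError("this closed form assumes |d| < 2")
    theta = math.acos(d / 2.0)
    return math.sin((n + 1) * theta) / math.sin(theta)


def singular_diagonals(n):
    """The n diagonal values for which the n x n matrix is singular."""
    return [2.0 * math.cos(k * math.pi / (n + 1)) for k in range(1, n + 1)]
```

For d = 0 the recurrence gives 1, 0, −1, 0, 1, ..., so under the same assumption the matrix is singular for odd N and has determinant ±1 for even N.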

3. Inverse of the Matrix (A)

Before starting the determination of the inverse of the matrix (A), it is appropriate to discuss its properties. The symmetries of (A) will be found in its inverse (B).

First, the matrix (A) is symmetric:

These two properties show that the matrix (B) is determined when one fourth of its elements is known.

Determining (B) amounts to determining the cofactor matrix of (A). Since these cofactors are obtained from determinants of submatrices of (A), they are not difficult to compute: the previous section treated the determinant of (A) and of its submatrices in detail.

Thus, it is easy to see that:

So the first and last rows, and also the first and last columns, of the matrix (B) are known exactly, using the symmetry and the persymmetry of the matrix (A).

Recall that the previous section gives the formulas of all the determinants

The matrix (B) being the inverse of (A), its components satisfy the following relations:

where

From the first line of Equation (27), it is possible to obtain the second column of the matrix (B) from the first column, which is already known. Then, with the symmetry of the matrix (B), we have:

With the symmetry and the persymmetry of (B), we also have:

The second line of Equation (27) allows one to find the elements of the third row and the third column of the matrix (B):

Considering the Equation (28), the following relation is obtained:

The second line of Equation (27) also allows one to find the elements of the fourth row and the fourth column of (B):

Considering the Equations (28) and (31), the following relation is obtained:

The analysis of each element of the matrix (B) leads to the complete and exact formulation of this remarkable matrix:

The Equation (34), combined with the Equation (26), gives:

Equation (35) determines all the elements of the upper triangle of the matrix (B). The symmetry of the matrix then yields the closed form of (B), the inverse of the symmetric tridiagonal matrix (A). Thus, (B) is known and each of its components is given by the following equation:

This relation is very important. It is a useful result for solving any differential equation whose discretization leads to algebraic equations of the form

However, it should be noted that the formula of Equation (36) is not new: it has already been determined in [1] . The present study follows another approach and additionally provides a deeper and complete discussion of the regularity of (A), so that this work completes the study in [1] .
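The construction of the inverse from sub-determinants can be sketched in Python as follows. The sketch assumes a matrix with constant diagonal `d` and unit off-diagonals, and uses the standard determinant-product form of the inverse of a tridiagonal matrix, B_ij = (−1)^(i+j) Δ_(i−1) Δ_(N−j) / Δ_N for i ≤ j, completed by symmetry. This form may differ in notation and sign convention from Equation (36); the names `sub_determinants` and `tridiag_inverse` are illustrative.

```python
def sub_determinants(d, n):
    """dets[k] = determinant of the k x k matrix (diagonal d, off-diagonals 1),
    for k = 0..n, from the three-term recurrence."""
    dets = [1.0, float(d)]
    for _ in range(n - 1):
        dets.append(d * dets[-1] - dets[-2])
    return dets[: n + 1]


def tridiag_inverse(d, n):
    """Inverse of the assumed n x n matrix, built entry by entry from
    products of sub-determinants (indices i, j are 1-based in the formula)."""
    dets = sub_determinants(d, n)
    if dets[n] == 0.0:
        raise ZeroDivisionError("the matrix is singular for this d and n")
    b = [[0.0] * n for _ in range(n)]
    for i in range(1, n + 1):
        for j in range(i, n + 1):  # upper triangle, i <= j
            value = (-1) ** (i + j) * dets[i - 1] * dets[n - j] / dets[n]
            b[i - 1][j - 1] = value
            b[j - 1][i - 1] = value  # symmetry of the inverse
    return b
```

Only the N + 1 sub-determinants are needed, so each entry of the inverse is available in constant time once they are stored.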

As an application, we will solve the Helmholtz equation, a very important equation in physics, using the matrix (B). We could also take the heat diffusion equation or the Poisson equation, but we prefer the former, which corresponds to the wave equation for harmonic excitation.

4. Application to the Solution of the Helmholtz Equation

Knowing the matrix (B) allows one to solve all boundary value problems posed in the following manner:

We consider a one-dimensional mesh with N + 2 discrete points

The application of the finite difference method to Equation (37), with the centered difference approximation

where

Thus, one gets in matrix form

where the vector

Thus, it holds

This can be expressed in the following form

which gives finally:

This Equation (42) gives the solution

One can also define

Then, each element

Thus, each solution

The Equations (42) and (45) are two forms of solution of the Helmholtz equation. Each of these two forms can be implemented simply and elegantly in a source code.
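A minimal Python sketch of such an implementation is given below. It rests on several assumptions that are not taken from the text: the boundary value problem is written as u'' + k²u = f(x) on [a, b] with Dirichlet values `ua` and `ub`; the centered difference gives u_(i−1) + (k²h² − 2)u_i + u_(i+1) = h²f_i; and the inverse is built from sub-determinants of the matrix with diagonal d = k²h² − 2 and unit off-diagonals. The helper `average_relative_error` is likewise a hypothetical definition (mean of |u_i − exact_i| / |exact_i|).

```python
import math


def solve_helmholtz_1d(f, k, a, b, ua, ub, n):
    """Solve u'' + k^2 u = f on [a, b], u(a) = ua, u(b) = ub, on n interior
    points, by multiplying the right-hand side with the analytical inverse."""
    h = (b - a) / (n + 1)
    d = (k * h) ** 2 - 2.0  # diagonal entry of the assumed matrix
    # dets[m] = determinant of the m x m matrix, m = 0..n
    dets = [1.0, d]
    for _ in range(n - 1):
        dets.append(d * dets[-1] - dets[-2])
    if dets[n] == 0.0:
        raise ZeroDivisionError("singular system for this k, h and n")
    x = [a + i * h for i in range(n + 2)]
    # right-hand side, with the boundary values moved to the known side
    rhs = [h * h * f(x[i]) for i in range(1, n + 1)]
    rhs[0] -= ua
    rhs[-1] -= ub
    u = [ua] + [0.0] * n + [ub]
    for i in range(1, n + 1):
        total = 0.0
        for j in range(1, n + 1):
            lo, hi = (i, j) if i <= j else (j, i)
            b_ij = (-1) ** (i + j) * dets[lo - 1] * dets[n - hi] / dets[n]
            total += b_ij * rhs[j - 1]
        u[i] = total
    return x, u


def average_relative_error(u, exact):
    """Mean of |u_i - exact_i| / |exact_i| (requires exact_i != 0)."""
    return sum(abs(p - q) / abs(q) for p, q in zip(u, exact)) / len(u)
```

As a usage example under the same assumptions, the test problem u(x) = exp(x) with k = 1 corresponds to f(x) = 2 exp(x), u(0) = 1 and u(1) = e; calling `solve_helmholtz_1d(lambda t: 2.0 * math.exp(t), 1.0, 0.0, 1.0, 1.0, math.e, 99)` and comparing with `math.exp` on the returned mesh gives the error of the second-order scheme.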

5. Numerical Results for the Different Cases

The different cases studied are considered to illustrate the efficiency of the proposed approach, which is based on the exact inversion of the matrix (A). The method is stable, robust, and very accurate, because the inversion does not use the right-hand side (RHS) of the differential equation.

For applications the value

The relative error

The average relative error

5.1. Results for

Sub-Case

The sub-case where

The results are presented in the Table 1.

It holds, for the considered case:

Sub-Case

For this sub-case, we have

equation can be a Helmholtz equation, a heat diffusion equation, or any other differential equation described by Equation (37).

The results are presented in the Table 2, with

These results are very accurate, and the average relative error is:

5.2. Results for

The Equation (14) gives the formula of the determinant

The average relative error is:

Table 1. Results for sub-case

Table 2. Results for sub-case

Table 3. Results for

5.3. Results for

Results for

As discussed previously, an even

The average relative error is:

Results for

We have chosen

The average relative error is:

Results for

Here, we chose

The average relative error is:

Table 4. Results for

Table 5. Results for

Table 6. Results for

6. Inverse of the Tridiagonal Antisymmetric (Skew-Symmetric) Matrix

We give here, additionally, the inverse of the tridiagonal antisymmetric matrix:

Arguing as we did with the matrix (A), we get the characteristic equation to obtain the determinant of

The discriminant

One can remark that

Then, the inverse of

The corresponding applications for the inverse matrix
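The same determinant-product construction can be sketched for a skew-symmetric variant. The Python example below assumes, purely for illustration, a tridiagonal matrix with constant diagonal `d`, superdiagonal +1 and subdiagonal −1; the matrix actually treated in this section may be defined differently. Under this assumption the sub-determinants follow det_n = d·det_(n−1) + det_(n−2), and the standard inverse formula for tridiagonal matrices gives the entries below.

```python
def skew_sub_determinants(d, n):
    """dets[k] for the assumed k x k matrix (diagonal d, superdiagonal +1,
    subdiagonal -1): det_k = d*det_{k-1} + det_{k-2}."""
    dets = [1.0, float(d)]
    for _ in range(n - 1):
        dets.append(d * dets[-1] + dets[-2])
    return dets[: n + 1]


def skew_tridiag_inverse(d, n):
    """Inverse of the assumed matrix from products of sub-determinants
    (indices i, j are 1-based in the formulas)."""
    dets = skew_sub_determinants(d, n)
    if dets[n] == 0.0:
        raise ZeroDivisionError("the matrix is singular for this d and n")
    c = [[0.0] * n for _ in range(n)]
    for i in range(1, n + 1):
        for j in range(1, n + 1):
            if i <= j:
                # upper triangle: alternating sign from the +1 superdiagonal
                value = (-1) ** (i + j) * dets[i - 1] * dets[n - j] / dets[n]
            else:
                # lower triangle: the -1 subdiagonal cancels the alternation
                value = dets[j - 1] * dets[n - i] / dets[n]
            c[i - 1][j - 1] = value
    return c
```

With this assumed form the determinant vanishes for d = 0 and odd N (the recurrence then gives 1, 0, 1, 0, ...), so the sketch raises an error in that case.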

7. Conclusion

This study has given the semi-analytical solution of every differential equation whose discretization leads to algebraic equations of the form

References

- Hu, G.Y. and O’Connell, R.F. (1996) Analytical Inversion of Symmetric Tridiagonal Matrices. Journal of Physics A, 29, 1511-1513. http://dx.doi.org/10.1088/0305-4470/29/7/020

- Rosen, K.H. (2010) Handbook of Discrete and Combinatorial Mathematics. 2nd Edition, Chapman & Hall/CRC, UK, 179.

- Epp, S.S. (2011) Discrete Mathematics with Applications. 4th Edition, Brooks/Cole Cengage Learning, Boston, 317-327.

- Gueye, S.B. (2014) The Exact Formulation of the Inverse of the Tridiagonal Matrix for Solving the 1D Poisson Equation with the Finite Difference Method. Journal of Electromagnetic Analysis and Applications, 6, 303-308. http://dx.doi.org/10.4236/jemaa.2014.610030

- Engeln-Muellges, G. and Reutter, F. (1991) Formelsammlung zur Numerischen Mathematik mit QuickBasic-Programmen. Dritte Auflage, BI-Wissenschaftsverlag, 472-481.

- LeVeque, R.J. (2007) Finite Difference Method for Ordinary and Partial Differential Equations, Steady State and Time Dependent Problems. SIAM, Philadelphia. http://dx.doi.org/10.1137/1.9780898717839