International Journal of Modern Nonlinear Theory and Application

Vol. 1 No. 3 (2012), Article ID: 23080, 6 pages. DOI: 10.4236/ijmnta.2012.13009

On Over-Relaxed Proximal Point Algorithms for Generalized Nonlinear Operator Equation with (A,η,m)-Monotonicity Framework

Department of Mathematics, Sichuan University of Science and Engineering, Zigong, China

Email: lifang1687@163.com

Received July 12, 2012; revised August 12, 2012; accepted September 12, 2012

Keywords: New Over-Relaxed Proximal Point Algorithm; Nonlinear Operator Equation with (A,η,m)-Monotonicity Framework; Generalized Resolvent Operator Technique; Solvability and Convergence

ABSTRACT

In this paper, a new class of over-relaxed proximal point algorithms for solving nonlinear operator equations with (A,η,m)-monotonicity framework in Hilbert spaces is introduced and studied. Further, by using the generalized resolvent operator technique associated with the (A,η,m)-monotone operators, the approximation solvability of the operator equation problems and the convergence of iterative sequences generated by the algorithm are discussed. Our results improve and generalize the corresponding results in the literature.

1. Introduction

Nonlinear variational (operator) inclusion problems, complementarity problems and equilibrium problems provide a general and unified framework for studying a wide range of interesting and important problems arising in mathematics, physics, engineering sciences, economics, finance and related optimization problems. Motivated by the increasing interest in these problems, many authors have studied proximal point algorithms; see, for example, [1-10] and the references therein. Recently, Verma [9] developed a general framework for a hybrid proximal point algorithm using the notion of (A,η)-monotonicity and explored the convergence analysis of this algorithm in the context of solving a class of nonlinear inclusion problems, along with some results on the resolvent operator corresponding to (A,η)-monotonicity. Furthermore, Verma [10] introduced a general framework for the over-relaxed A-proximal point algorithm based on A-maximal monotonicity and pointed out that “the over-relaxed A-proximal point algorithm is of interest in the sense that it is quite application-oriented, but nontrivial in nature”.

On the other hand, Lan [4] first introduced the concept of (A,η)-monotone (so-called (A,η,m)-maximal monotone [6]) operators, which generalizes (H,η)-monotonicity, A-monotonicity and other existing monotone operators as special cases, studied some properties of (A,η)-monotone operators and defined the resolvent operators associated with them.

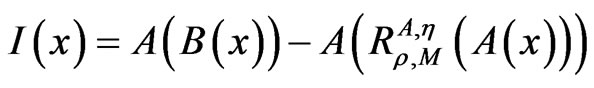

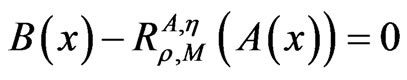

Motivated and inspired by the above works, the purpose of this paper is to introduce and study a new class of over-relaxed proximal point algorithms for the approximation solvability of the following nonlinear operator equation in a Hilbert space H, based on the (A,η,m)-monotonicity framework:

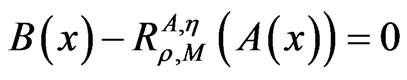

Find x ∈ H such that

, (1)

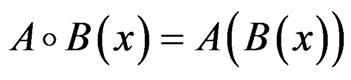

where A,B:H → H and η:H × H → H are three nonlinear operators, M:H → 2H is an (A,η,m)-monotone operator with B(H) ∩ domM(·) ≠ and B(H) ∩ domA(·) ≠

and B(H) ∩ domA(·) ≠ , 2H denotes the family of all the nonempty subsets of H,

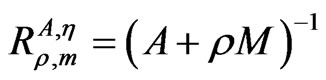

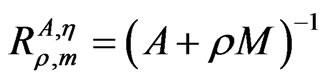

, 2H denotes the family of all the nonempty subsets of H,  is the resolvent operator associated with the multi-valued operator M and ρ > 0 is a constant.

is the resolvent operator associated with the multi-valued operator M and ρ > 0 is a constant.

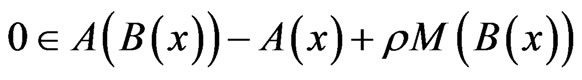

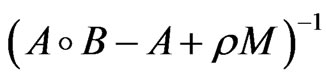

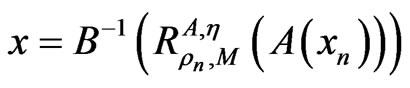

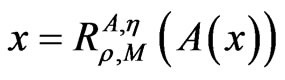

Based on the definition of the resolvent operator, Equation (1) can be written as

, (2)

which was studied by Verma [9,10] when B ≡ I, the identity operator.

We remark that, for suitable choices of A, B, M, η and H, problems (1) and (2) include as special cases a general class of problems of variational character, such as minimization or maximization of functions (whether constrained or not), variational problems, and minimax problems. For more details, see [1-13] and the references therein, and the following example:

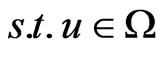

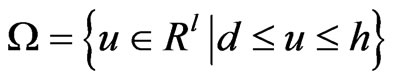

Example 1.1. Consider the following convex optimization problem with bound constraints:

Min f(u),

, (3)

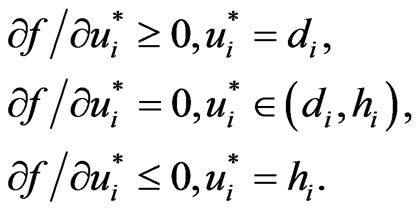

where Ω denotes the feasible set given by the bound constraints, R = (−∞, +∞) and f: Ω → R is convex and continuously differentiable. From the Karush-Kuhn-Tucker conditions, we see that u* is an optimal solution to problem (3) if and only if u* satisfies

(4)

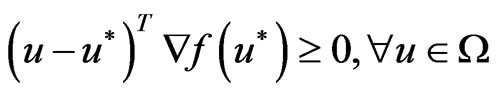

The problem (4) is equivalent to the following variational inequality: , where

, where

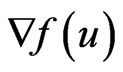

is the gradient of f.

is the gradient of f.
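The link between Example 1.1 and a variational inequality can be illustrated numerically. The following is only a minimal sketch under stated assumptions: Ω is taken to be a box {u : l ≤ u ≤ h} (the exact constraint set is not reproduced in the text), f is a simple quadratic, and the names `project_box`, `projected_gradient` and `vi_residual` are hypothetical. Projected gradient is used here purely as a stand-in solver; it is not the algorithm studied in this paper.

```python
import numpy as np

def project_box(u, lower, upper):
    """Euclidean projection onto the box {u : lower <= u <= upper}."""
    return np.clip(u, lower, upper)

def projected_gradient(grad, u0, lower, upper, step=0.1, iters=500):
    """Fixed-step projected gradient iteration (assumed stand-in solver)."""
    u = project_box(np.asarray(u0, dtype=float), lower, upper)
    for _ in range(iters):
        u = project_box(u - step * grad(u), lower, upper)
    return u

def vi_residual(grad, u, lower, upper):
    """Minimum over v in the box of <grad f(u), v - u>.

    The minimum is attained coordinate-wise at a bound, and it equals 0
    exactly when u satisfies the variational inequality.
    """
    g = grad(u)
    v = np.where(g > 0, lower, upper)
    return float(g @ (v - u))

# Assumed test problem: f(u) = 0.5 * ||u - c||^2 on the box [-1, 1]^3.
c = np.array([2.0, -3.0, 0.5])
lower = -np.ones(3)
upper = np.ones(3)
grad = lambda u: u - c

u_star = projected_gradient(grad, np.zeros(3), lower, upper)
print(u_star, vi_residual(grad, u_star, lower, upper))
```

For this particular f the minimizer is simply the projection of c onto the box, so the computed point can be checked by hand; the residual being (numerically) zero is the variational inequality of the example.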

2. Preliminaries

In the sequel, we give some concepts and lemmas needed later.

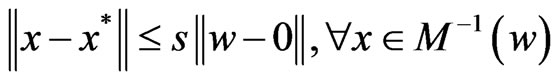

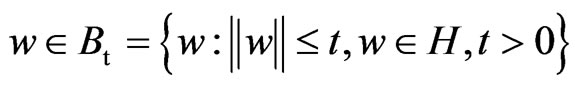

Definition 2.1. An operator M^{-1}, the inverse of M: H → 2^H, is (s,t)-Lipschitz continuous at 0 if for any t ≥ 0, there exist a constant s ≥ 0 and a solution x* of 0 ∈ M(x) (equivalently x* ∈ M^{-1}(0)) such that

$$\|x - x^*\| \le s \|w\|, \quad \forall x \in M^{-1}(w),$$

where $w \in B_t = \{w \in H : \|w\| \le t\}$.

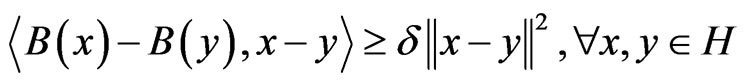

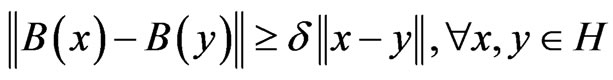

Definition 2.2. Let A, B: H → H and η: H × H → H be single-valued operators, and M: H → 2^H be a multi-valued operator. Then

i) B is δ-strongly monotone, if there exists a constant δ > 0 such that

$$\langle B(x) - B(y), x - y \rangle \ge \delta \|x - y\|^2, \quad \forall x, y \in H,$$

which implies that B is δ-expanding, i.e.,

$$\|B(x) - B(y)\| \ge \delta \|x - y\|, \quad \forall x, y \in H;$$

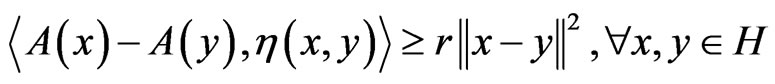

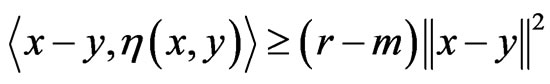

ii) A is r-strongly η-monotone, if there exists a positive constant r such that

$$\langle A(x) - A(y), \eta(x, y) \rangle \ge r \|x - y\|^2, \quad \forall x, y \in H;$$

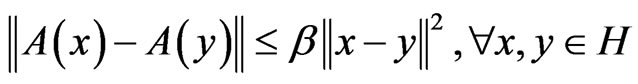

iii) A is β-Lipschitz continuous, if there exists a constant β > 0 such that

$$\|A(x) - A(y)\| \le \beta \|x - y\|, \quad \forall x, y \in H;$$

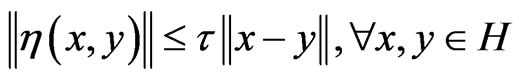

iv) η is τ-Lipschitz continuous if there exists a constant τ > 0 such that

$$\|\eta(x, y)\| \le \tau \|x - y\|, \quad \forall x, y \in H;$$

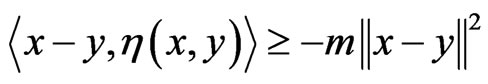

v) M is m-relaxed η-monotone if there exists a constant m > 0 such that for all x, y ∈ H, u ∈ M(x) and v ∈ M(y),

$$\langle u - v, \eta(x, y) \rangle \ge -m \|x - y\|^2;$$

vi) M is said to be (A,η,m)-maximal monotone if M is m-relaxed η-monotone and R(A + ρM) = H for every ρ > 0.

Remark 2.1. 1) If m = 0 or A = I or η(x, y) = x − y for all x, y ∈ H, then (A,η,m)-maximal monotonicity (so-called (A,η)-monotonicity [4] or (A,η)-maximal relaxed monotonicity [3]) reduces to (H,η)-monotonicity, H-monotonicity, A-monotonicity, maximal η-monotonicity or classical maximal monotonicity (see [1-10]). Further, we note that the idea of this extension is close to the idea of extending convexity to invexity introduced by Hanson in [11], and the problem studied in this paper can be used in invex optimization and for solving variational-like inequalities as a direction for further applied research; see related works in [12,13] and the references therein.

2) Moreover, operator M is said to be generalized maximal monotone (in short GMM-monotone) if:

i) M is monotone; ii) A + ρM is maximal monotone or pseudomonotone for ρ > 0.

Example 2.1. ([3]) Suppose that A: H → H is r-strongly η-monotone, and f: H → R is locally Lipschitz such that ∂f, the subdifferential, is m-relaxed η-monotone with r − m > 0. Clearly, we have

$$\langle u - v, \eta(x, y) \rangle \ge (r - m) \|x - y\|^2,$$

where u ∈ A(x) + ∂f(x) and v ∈ A(y) + ∂f(y) for all x, y ∈ H. Thus, A + ∂f is η-pseudomonotone, which is, indeed, η-maximal monotone. This is equivalent to stating that A + ∂f is (A,η,m)-maximal monotone.

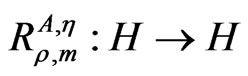

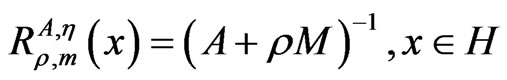

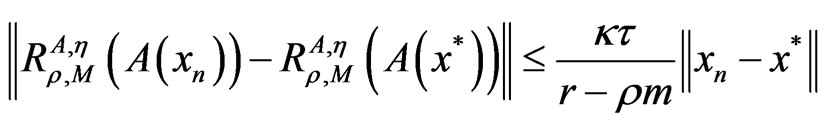

Lemma 2.1. ([4]) Let η:H × H → H be τ-Lipschitz continuous, A:H → H be a r-strongly η-monotone operator and M:H → 2H be an (A,η,m)-maximal monotone operator. Then the resolvent operator  defined by

defined by

.

.

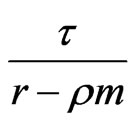

is  -Lipschitz continuous.

-Lipschitz continuous.
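To make the Lipschitz bound of Lemma 2.1 concrete, the following sketch evaluates a resolvent of the form (A + ρM)^{-1} on the real line. Everything specific here is an assumption chosen for illustration: H = R, η(x, y) = x − y (so τ = 1), A(u) = a·u (so r = a), and M(u) = u³ − m·u, which is m-relaxed monotone. The function name `resolvent` is hypothetical, and bisection is used only because A + ρM is strictly increasing when a > ρm.

```python
def resolvent(x, a=2.0, m=0.5, rho=1.0, tol=1e-12):
    """Solve (A + rho*M)(u) = x by bisection.

    Assumed operators: A(u) = a*u and M(u) = u**3 - m*u on the real line,
    so (A + rho*M)(u) = (a - rho*m)*u + rho*u**3, strictly increasing
    whenever a > rho*m.
    """
    g = lambda u: (a - rho * m) * u + rho * u ** 3 - x
    # The root lies in [-bound, bound]: g(-bound) < 0 < g(bound).
    bound = abs(x) / (a - rho * m) + 1.0
    lo, hi = -bound, bound
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if g(mid) > 0:
            hi = mid
        else:
            lo = mid
    return 0.5 * (lo + hi)

# Lipschitz constant predicted by the lemma: tau / (r - rho*m) with tau = 1, r = a.
a, m, rho = 2.0, 0.5, 1.0
lipschitz_bound = 1.0 / (a - rho * m)

points = [-3.0, -1.0, 0.0, 0.5, 2.0, 4.0]
worst_ratio = max(
    abs(resolvent(x) - resolvent(y)) / abs(x - y)
    for x in points for y in points if x != y
)
print(worst_ratio, lipschitz_bound)
```

In this scalar setting the observed ratio stays below τ/(r − ρm), which is what the lemma asserts; the example says nothing about the general Hilbert space case beyond illustrating the statement.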

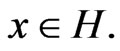

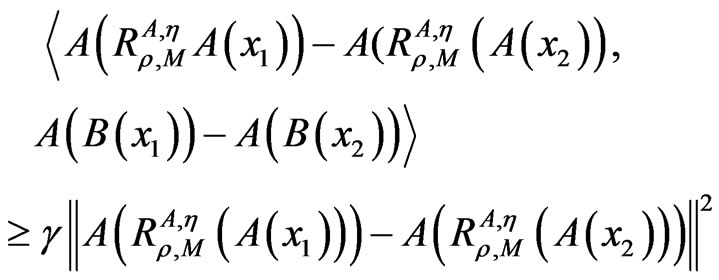

Lemma 2.2. Let A, B, η, M and H be the same as in the problem (1). If

for , and for all , ρ > 0 and

then

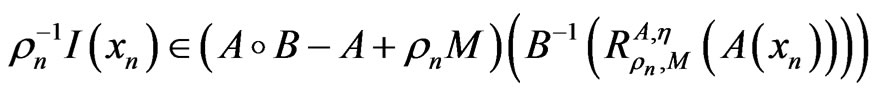

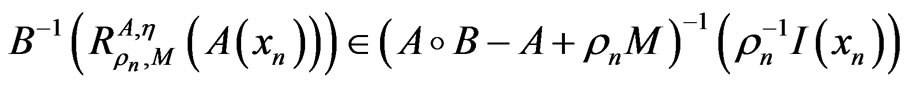

Proof. By the assumption, now we know

.

This completes the proof.

3. Algorithms and Approximation-Solvability

In this section, we introduce a new class of over-relaxed (A,η,m)-proximal point algorithms for the approximation solvability of the nonlinear operator Equation (1).

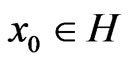

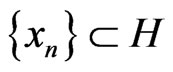

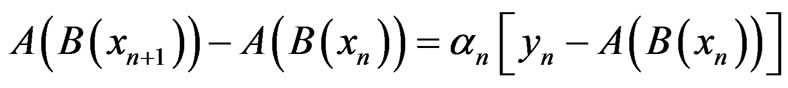

Algorithm 3.1. Step 1. Choose an arbitrary initial point x0 ∈ H.

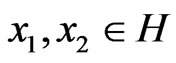

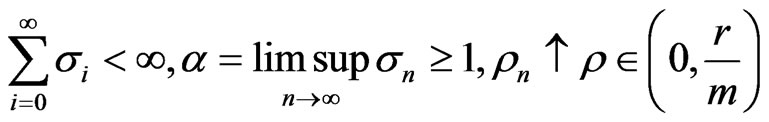

Step 2. Choose three sequences {αn}, {σn} and {ρn} in [0, ∞), n ≥ 0, satisfying

.

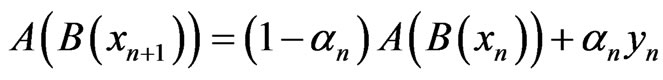

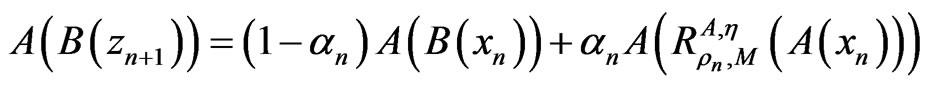

Step 3. Let {xn} be generated by the following iterative procedure

, (5)

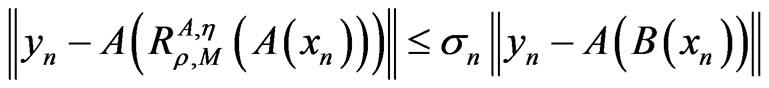

and yn satisfies

where n ≥ 0,  and ρ > 0 is a constant.

Step 4. If xn and yn (n = 0, 1, 2, ···) satisfy (5) to sufficient accuracy, stop; otherwise, set n := n + 1 and return to Step 2.
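Since the update (5) is not reproduced in the text above, the following sketch does not implement Algorithm 3.1 itself. It shows the classical relaxed proximal point iteration x_{n+1} = (1 − α) x_n + α J_ρ(x_n), which corresponds to the assumed special case A = B = I, η(x, y) = x − y, exact resolvent evaluations (no error sequence σn) and constant α and ρ. The operator M(x) = Qx − b with Q symmetric positive definite, and the name `relaxed_proximal_point`, are likewise assumptions made for illustration.

```python
import numpy as np

def relaxed_proximal_point(Q, b, x0, rho=1.0, alpha=1.5, tol=1e-10, max_iter=1000):
    """Relaxed proximal point iteration for 0 = M(x) := Q x - b.

    The resolvent J = (I + rho*M)^{-1} is evaluated exactly by solving
    (I + rho*Q) z = x + rho*b. Values alpha > 1 give over-relaxation.
    """
    x = np.asarray(x0, dtype=float)
    lhs = np.eye(len(x)) + rho * Q
    for n in range(max_iter):
        z = np.linalg.solve(lhs, x + rho * b)   # z = J(x)
        x_next = (1.0 - alpha) * x + alpha * z  # relaxed update
        if np.linalg.norm(x_next - x) <= tol:
            return x_next, n + 1
        x = x_next
    return x, max_iter

# Assumed test data: a small symmetric positive definite system.
Q = np.array([[2.0, 1.0], [1.0, 2.0]])
b = np.array([1.0, 0.0])
x_hat, n_steps = relaxed_proximal_point(Q, b, x0=np.zeros(2))
print(x_hat, n_steps)
```

With these data the zero of M is the solution of Qx = b, so the result can be compared against a direct linear solve. The stopping rule on successive iterates plays the role of Step 4.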

Remark 3.1. We note that Algorithm 3.1 reduces to the algorithm of Theorem 3.2 associated with A-maximal monotonicity in [10] when B ≡ I.

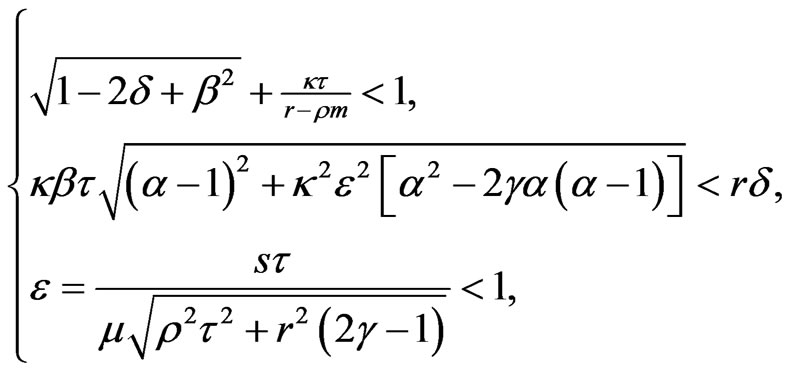

Theorem 3.1. Let A, B, M, η and H be the same as in problem (1). If, in addition,

i) η is τ-Lipschitz continuous, A is κ-Lipschitz continuous and r-strongly η-monotone, B is β-Lipschitz continuous and δ-strongly monotone, and the inverse B^{-1} is μ-expanding with μδ ≤ 1;

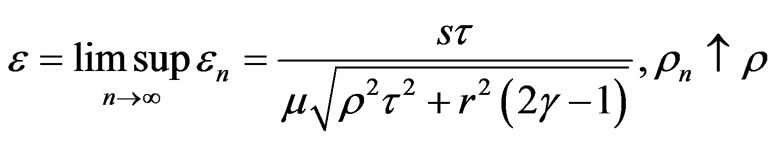

ii)  is (s,t)-Lipschitz continuous at 0, where  is defined by  for x ∈ H;

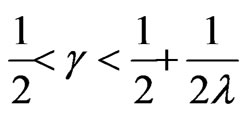

iii) for

and λ > 0,

iv) the iterative sequence {xn} generated by Algorithm 3.1 is bounded;

v) there exists a constant ϱ > 0 such that

(6)

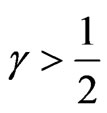

then 1) the nonlinear resolvent operator Equation (1) has a unique solution x* in H.

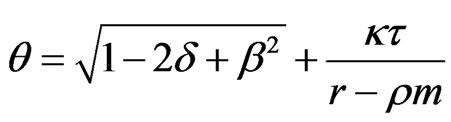

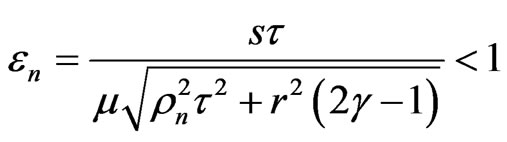

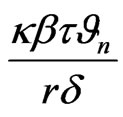

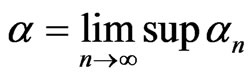

2) the sequence {xn} converges linearly to the solution x* with convergence rate

.

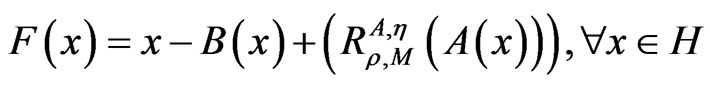

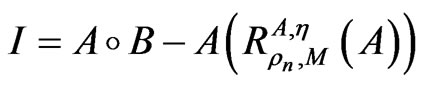

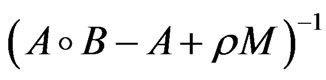

Proof. Firstly, for any given ρ > 0, define F:H → H by

.

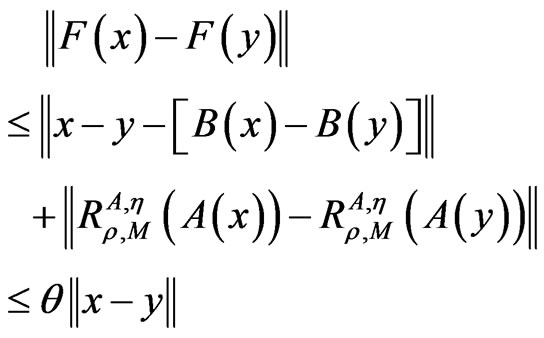

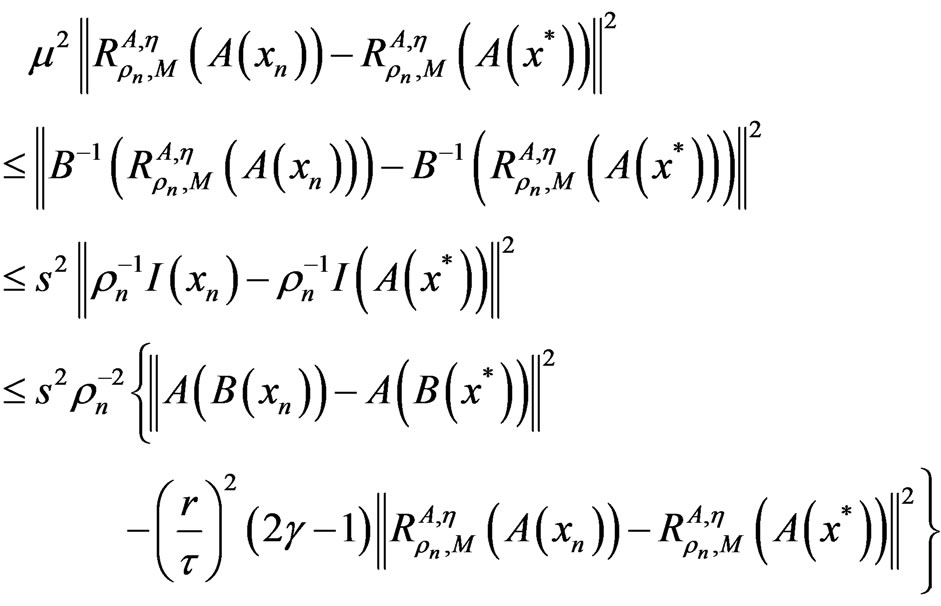

By the assumptions of the theorem and Lemma 2.1, for all x, y ∈ H we have

where .

It follows from condition (6) that the contraction constant of F lies in (0, 1), and so F is a contractive mapping, which shows that F has a unique fixed point in H.
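The existence and uniqueness step rests on the Banach contraction principle: a contractive self-map has exactly one fixed point, and Picard iteration reaches it from any starting point. The following sketch illustrates only that principle. The map F(x) = 0.5·cos(x) on the real line (contraction constant 0.5) is an assumed toy example, not the operator F of the proof, and `picard_iteration` is a hypothetical helper name.

```python
import math

def picard_iteration(F, x0, tol=1e-12, max_iter=10_000):
    """Iterate x <- F(x) until successive iterates differ by at most tol."""
    x = x0
    for n in range(1, max_iter + 1):
        x_next = F(x)
        if abs(x_next - x) <= tol:
            return x_next, n
        x = x_next
    raise RuntimeError("no convergence within max_iter iterations")

# Assumed toy contraction: |F'(x)| = 0.5*|sin(x)| <= 0.5 < 1 for all x.
F = lambda x: 0.5 * math.cos(x)

x_star, steps = picard_iteration(F, x0=0.0)
x_other, _ = picard_iteration(F, x0=3.0)
print(x_star, steps, x_other)
```

Both starting points lead to the same limit, which mirrors the uniqueness claim for the fixed point of F.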

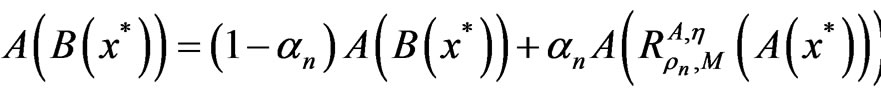

Next, we prove conclusion 2). Let x* be a solution of problem (1). Then for all ρn > 0 and n ≥ 0, we have

, (7)

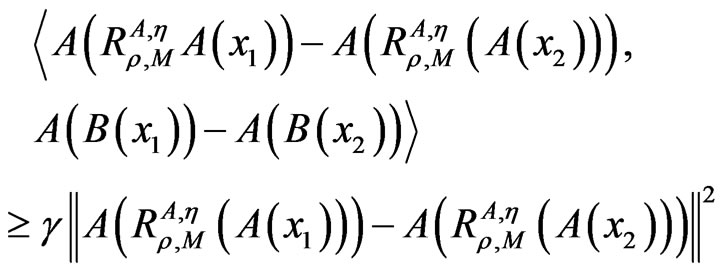

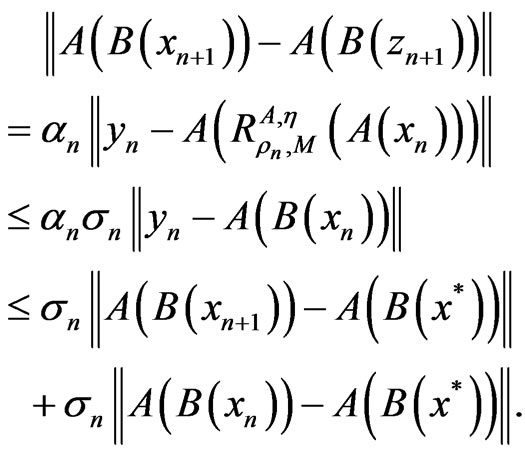

For  and under the assumptions, it follows that I(xn) → 0 (n → ∞). Since

this implies

.

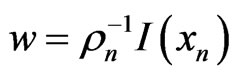

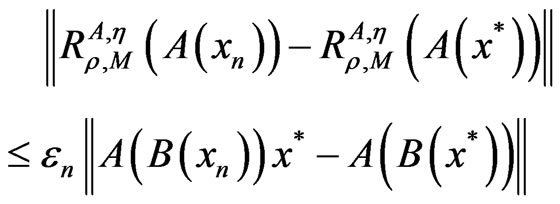

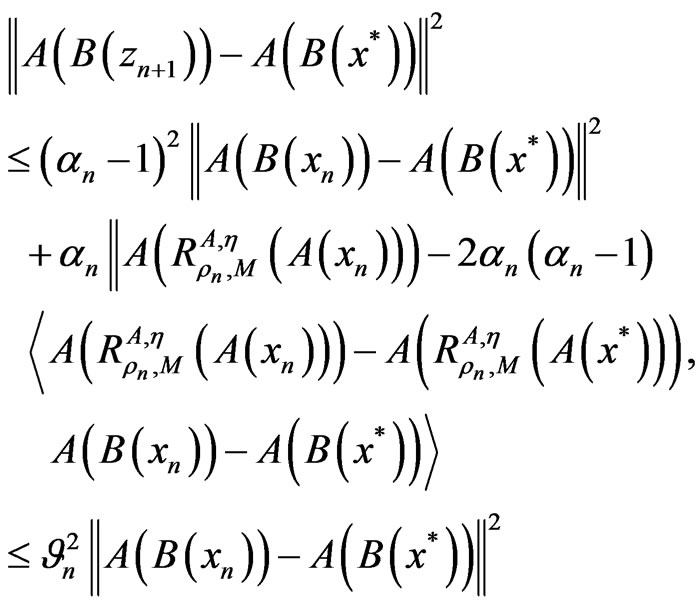

Then, applying Lemma 2.2, the strong monotonicity of A, the Lipschitz continuity of A and η (and hence, A being expanding), and the Lipschitz continuity at 0 of  by setting  and

we know

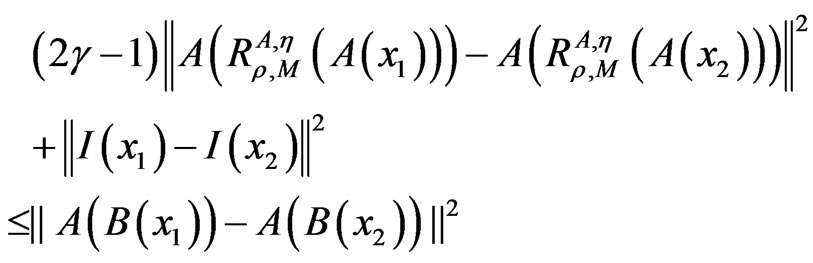

which implies

, (8)

where .

For n ≥ 0, let

.

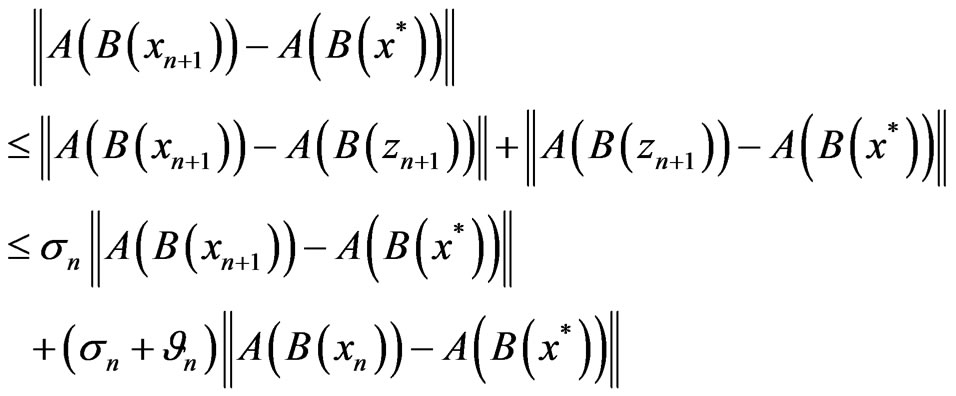

By the assumptions of the theorem, (7) and (8), now we find the estimate,

(9)

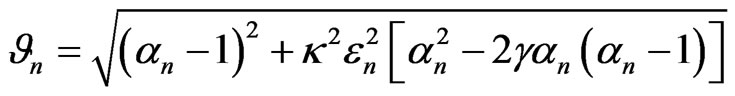

where

.

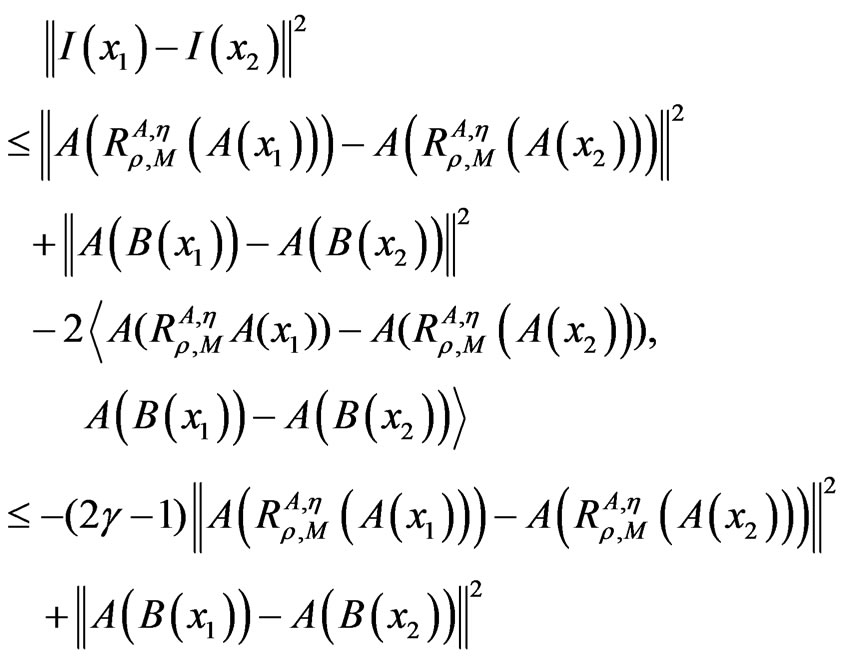

Since

we have

and

(10)

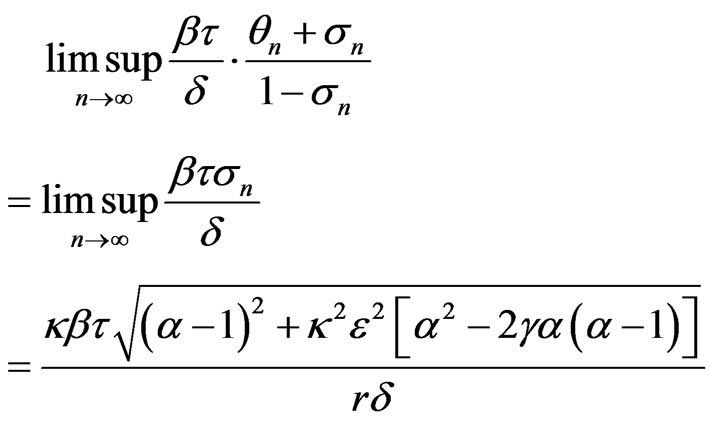

In the sequel, we estimate using (9) and (10) that

which implies

(11)

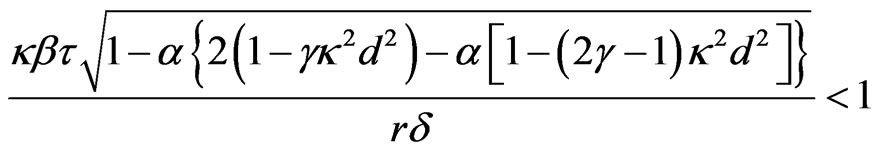

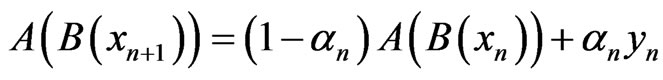

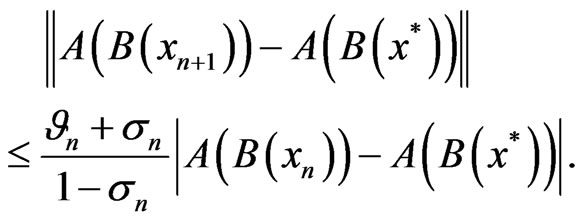

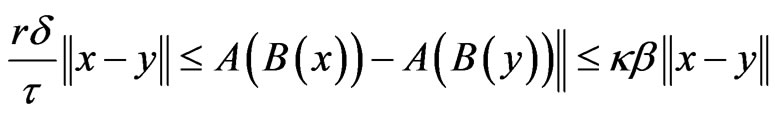

It follows from (11), the strong monotonicity of A and B, and the Lipschitz continuity of A, B and η that for all x, y ∈ H,

and

(12)

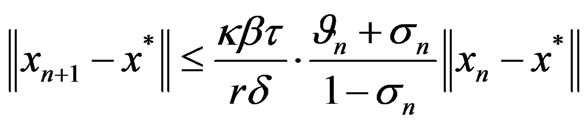

From (12), we now know that the sequence {xn} converges linearly to a solution x* for

.

Hence, we have

where

,

. This completes the proof.

Remark 3.2. 1) If B ≡ I or κ = 1 (namely, A is nonexpansive), we obtain the corresponding results of Theorem 3.1 for the nonlinear equation .

2) By using Lemma 2.1, the convergence analysis of the iterative sequence {xn} generated by Algorithm 3.1 can be established when the conditions (ii), (iii) and others are not satisfied, that is, the inequality (8) can be replaced by

3) The corresponding results can be shown when M is (H,η)-monotone, H-monotone, A-monotone, maximal η-monotone or classical maximal monotone, respectively. That is, the results presented in this paper improve and generalize the corresponding results of [1,2,9,10].

4. Conclusions

In this paper, we introduce and study a new class of over-relaxed proximal point algorithms for solving the following nonlinear operator equations with (A,η,m)-monotonicity framework in Hilbert spaces: Find x ∈ H such that

.

Further, by using the generalized resolvent operator technique associated with the (A,η,m)-monotone operators, we investigate the existence of solutions for the operator equation problem and the convergence of iterative sequences generated by the algorithm. The results presented in this paper improve and generalize the corresponding results in the literature.

5. Acknowledgements

This work was supported by the Scientific Research Fund of Sichuan Provincial Education Department (10ZA136) and the Cultivation Project of Sichuan University of Science and Engineering (2011PY01).

REFERENCES

[1] R. P. Agarwal and R. U. Verma, “Role of Relative A-Maximal Monotonicity in Overrelaxed Proximal Point Algorithm with Applications,” Journal of Optimization Theory and Applications, Vol. 143, No. 1, 2009, pp. 1-15. doi:10.1007/s10957-009-9554-z

[2] R. P. Agarwal and R. U. Verma, “General Implicit Variational Inclusion Problems Based on A-Maximal (m)-Relaxed Monotonicity (AMRM) Frameworks,” Applied Mathematics and Computation, Vol. 215, No. 1, 2009, pp. 367-379. doi:10.1016/j.amc.2009.04.078

[3] R. P. Agarwal and R. U. Verma, “General System of (A,η)-Maximal Relaxed Monotone Variational Inclusion Problems Based on Generalized Hybrid Algorithms,” Communications in Nonlinear Science and Numerical Simulation, Vol. 15, No. 2, 2010, pp. 238-251. doi:10.1016/j.cnsns.2009.03.037

[4] H. Y. Lan, “A Class of Nonlinear (A,η)-Monotone Operator Inclusion Problems with Relaxed Cocoercive Mappings,” Advances in Nonlinear Variational Inequalities, Vol. 9, No. 2, 2006, pp. 1-11.

[5] H. Y. Lan, “Approximation Solvability of Nonlinear Random (A,η)-Resolvent Operator Equations with Random Relaxed Cocoercive Operators,” Computers & Mathematics with Applications, Vol. 57, No. 4, 2009, pp. 624-632. doi:10.1016/j.camwa.2008.09.036

[6] H. Y. Lan, “Sensitivity Analysis for Generalized Nonlinear Parametric (A,η,m)-Maximal Monotone Operator Inclusion Systems with Relaxed Cocoercive Type Operators,” Nonlinear Analysis: Theory, Methods & Applications, Vol. 74, No. 2, 2011, pp. 386-395.

[7] R. U. Verma, “Generalized Over-Relaxed Proximal Algorithm Based on A-Maximal Monotonicity Framework and Applications to Inclusion Problems,” Mathematical and Computer Modelling, Vol. 49, No. 7-8, 2009, pp. 1587-1594.

[8] R. U. Verma, “General Over-Relaxed Proximal Point Algorithm Involving A-Maximal Relaxed Monotone Mappings with Applications,” Nonlinear Analysis: Theory, Methods & Applications, Vol. 71, No. 12, 2009, pp. e1461-e1472. doi:10.1016/j.na.2009.01.184

[9] R. U. Verma, “A Hybrid Proximal Point Algorithm Based on the (A,η)-Maximal Monotonicity Framework,” Applied Mathematics Letters, Vol. 21, No. 2, 2008, pp. 142-147. doi:10.1016/j.aml.2007.02.017

[10] R. U. Verma, “A General Framework for the Over-Relaxed A-Proximal Point Algorithm and Applications to Inclusion Problems,” Applied Mathematics Letters, Vol. 22, No. 5, 2009, pp. 698-703. doi:10.1016/j.aml.2008.05.001

[11] M. A. Hanson, “On Sufficiency of Kuhn-Tucker Conditions,” Journal of Mathematical Analysis and Applications, Vol. 80, No. 2, 1981, pp. 545-550. doi:10.1016/0022-247X(81)90123-2

[12] M. Soleimani-Damaneh, “Generalized Invexity in Separable Hilbert Spaces,” Topology, Vol. 48, No. 2-4, 2009, pp. 66-79. doi:10.1016/j.top.2009.11.004

[13] M. Soleimani-Damaneh, “Infinite (Semi-Infinite) Problems to Characterize the Optimality of Nonlinear Optimization Problems,” European Journal of Operational Research, Vol. 188, No. 1, 2008, pp. 49-56. doi:10.1016/j.ejor.2007.04.026