Journal of Computer and Communications

Vol.07 No.06(2019), Article ID:93325,9 pages

10.4236/jcc.2019.76002

Improved CoSaMP Reconstruction Algorithm Based on Residual Update

Dongxue Lu, Guiling Sun*, Zhouzhou Li, Shijie Wang

College of Electronic Information and Optical Engineering, Nankai University, Tianjin, China

Copyright © 2019 by author(s) and Scientific Research Publishing Inc.

This work is licensed under the Creative Commons Attribution International License (CC BY 4.0).

http://creativecommons.org/licenses/by/4.0/

Received: March 20, 2019; Accepted: June 25, 2019; Published: June 28, 2019

ABSTRACT

A large number of sparse signal reconstruction algorithms have been continuously proposed, but almost all greedy algorithms add a fixed number of indices to the support set in each iteration. Although the mechanism of selecting the fixed number of indexes improves the reconstruction efficiency, it also brings the problem of low index selection accuracy. Based on the full study of the theory of compressed sensing, we propose a dynamic indexes selection strategy based on residual update to improve the performance of the compressed sampling matching pursuit algorithm (CoSaMP). As an extension of CoSaMP algorithm, the proposed algorithm adopts a residual comparison strategy to improve the accuracy of backtracking selected indexes. This backtracking strategy can efficiently select backtracking indexes. And without increasing the computational complexity, the proposed improvement algorithm has a higher exact reconstruction rate and peak signal to noise ratio (PSNR). Simulation results demonstrate the proposed algorithm significantly outperforms the CoSaMP for image recovery and one-dimensional signal.

Keywords:

Compressed Sensing, Residual Descent, Reconstruction Algorithm, Backtracking

1. Introduction

Compressed Sensing (CS) is a new theory of signal processing, proposed by [1] [2] . If the sampled signal has sparsity or compressibility, the original signal can be recovered well by sampling only a small number of data points and selecting the reconstruction algorithm reasonably. There are a variety of sparse signals in nature, which brings broad prospects for the use of compressed sensing [3] [4] [5] . In order to efficiently reconstruct the original signal, a lot of algorithms have been proposed to solve this problem. They are mainly divided into three categories: greedy pursuit, convex optimization and combination algorithm. Greedy pursuit has been widely used owing to its low computing complexity and high reconstruction speed. Tropp and Gilbert propose Orthogonal Matching Pursuit (OMP) in 2006. Later, some modified algorithms are proposed, such as Stagewise Orthogonal Matching Pursuit (StOMP) [6] , Stagewise Weak Orthogonal Matching Pursuit (SWOMP) [7] ; these algorithms have low reconstruction complexity but require more measurements to perfect reconstruction. Subspace Pursuit (SP) [8] [9] [10] [11] introduced the idea of backtracking which can offer better reconstruction quality and low reconstruction complexity, but it needs the sparsity level K as a prior information. Bayesian Group Matching Pursuit (GMP) [12] [13] , whose group coefficients are modeled by a multivariate Gaussian distribution, and solved by a maximum a posteriori probability estimate, has a better reconstruction in solving the reconstruction problem.

The reconstruction algorithm is the core of CS theory. How to efficiently recover low-dimensional signals to high-dimensional signals is the goal pursued by many researchers. The difficulty with this problem is that reconstruction of high-dimensional signals requires solving an underdetermined equation. So the problem can be solved by the l0 norm minimization, which is an NP-hard problem [14] that requires exhaustively listing all possibilities of the original signal and is difficult to achieve by the traditional algorithm.

In this paper, the improved CoSaMP reconstruction algorithm based on residual update was proposed, which is an improved algorithm in view of the CoSaMP. We propose a dynamic indexes selection strategy based on residual update to improve the performance of the compressed sampling matching pursuit algorithm (CoSaMP). This backtracking strategy which is based on this residual descent can flexibly select backtracking indexes. And this improvement can improve the reconstruction performance. Different simulation results show that the improved algorithm has better reconstruction performance for both one-dimensional signals and two-dimensional signals compared with other classical algorithms.

The rest of this paper is organized as follows. Section 2 introduces the background and the improved algorithm. We discuss the performance of proposed algorithm in Section 3 and conclude the paper in Section 4.

2. Compressive Sensing Model and Greedy Algorithm

2.1. Compressive Sensing Model

We suppose that x is an sparse signal. CS suggests that one can recover certain signal from far fewer samplings or measurements than traditional methods via the following optimization problem:

(1)

where indicates the number of nonzero elements in x. The denotes the random measurement matrix . But, zero-norm minimization problem is NP-hard. The solution to the one-norm problem is convex relaxation of zero-norm. As the computational intractability, this problem could be replaced by one-norm optimization problem:

(2)

where represents the sum of absolute value of non-zeros components of x. And linear programming methods have shown to be effective in solving such problems (2) with high accuracy. Such as BP. Theoretically, Candes also proved that when the random measurement matrix satisfies the restricted isometry property (RIP) [15] [16] , Equation (2) is equivalent to Equation (1). The following definition of restricted isometry property (RIP) of sensing matrix plays an important role on the analysis of recovery algorithm in CS.

Definition 1. For the sensing matrix , each integer , the satisfies the restricted isometry property of order s if

(3)

holds for all s-sparse vectors and some . The restricted isometry constant (RIC) is the smallest value.

But minimizing the l1 norm is very complicated and requires a lot of calculations. However, the greedy reconstruction algorithm has a simple geometry and a very low computational complexity. So, greedy algorithm is widely used for sparse reconstruction.

2.2. Compressed Sampling Matching Pursuit Algorithm

As we all know, the OMP algorithm first proposed the idea of orthogonality. Many subsequent algorithms follow this idea. OMP algorithm selects only one index which is corresponding to the largest magnitude. Through this method, index selection is inefficient. And OMP does not adopt backtracking mechanism. Once an error occurs in the support set selection process, it will result in poor reconstruction. For CoSaMP algorithm, it selects multi-indexes in each iteration, which can promote the reconstruction speed. However, if the selected indexes is not appropriate for signal reconstruction, it would remove fixed indexes from temporary support sets to get high reconstruction quality and accuracy. The CoSaMP algorithm is summarized in Table 1.

2.3. The Proposed Algorithm

Since the CoSaMP algorithm returns a fixed number of indexes in the backtracking phase, it is added to the support set. These indexes may be errors with a high probability. Once a high probability error occurs, it will have a great impact on the reconstruction results. We propose a backtracking index dynamic selection strategy based on residual descent. We can dynamically adjust the number of backtracking indexes based on changes in the residuals. The proposed algorithm is summarized in Table 2.

Table 1. CoSaMP algorithm.

Table 2. The proposed algorithm.

3. Simulations and Analysis

In this part, we verify the performance of the proposed sparse signal reconstruction algorithm based on residual update and compare it with several most advanced reconstruction algorithms. We comprehensively verify and contrast the proposed algorithm from one-dimensional random sparse signals and two-dimensional image signals. And we have detailed each experiment.

3.1. One-Dimensional Signal

In this section, in order to demonstrate the superiority of the proposed algorithm, we compare the proposed algorithm with other similar classical algorithms for signals with nonzero elements drawn from the Gaussian and uniform distributions. Gaussian sparse signals are used with length N = 256 and sparsity level K = (5, 20). The sensing matrix , which is different for each test signal, is drawn from the Gaussian distribution with mean 0 and standard deviation 1/N. Then, we normalize each column norm to unity. Experimental platform was MATLAB R2013b.

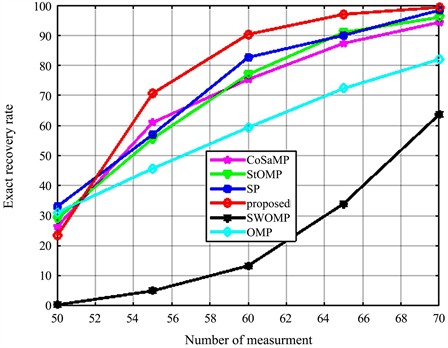

As shown in Figure 1, we provide the rate of exact reconstruction performance as a function of the measurement number M. Figure 1 shows the curves of the probability of exact recovery signal with the proposed algorithm. 500s simulations were conducted for the different number of measurements M. The exact recovery rate of the proposed algorithm at M = 60 increases by 90%. And overall, the algorithm proposed in this paper is also superior to other classical algorithms in Figure 1.

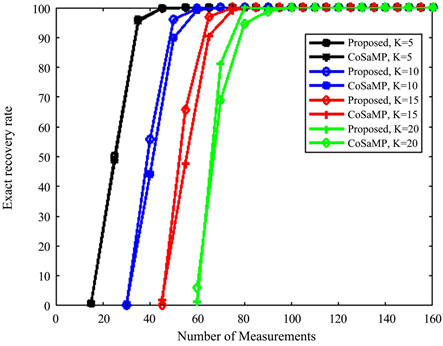

Figure 2 shows the curves of the probability of exact recovery signal with the proposed algorithm. It shows the comparison of the exact reconstruction rate of the proposed algorithm and CoSaMP algorithm under different sparsity level (i.e., 5, 10, 15, 20 non-zero entries). 500 simulations were conducted for each pair of sparsity k and the number of measurements M. Figure 2 also shows the proposed algorithm has higher probability of exact recovery signal.

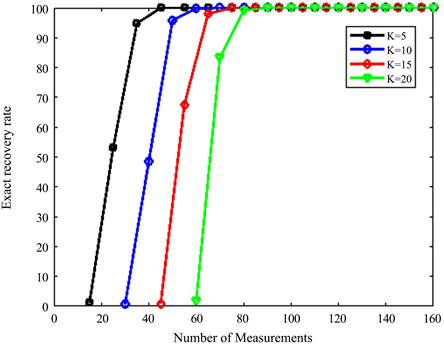

Figure 3 shows the curves of the probability of exact recovery signal with proposed algorithm. The number of measurements (M), given a fixed signal sparsity K. 500 simulations were conducted for each pair of sparsity K and the number of measurements M. Figure 3 shows that the proposed algorithm has higher stability and reliability in terms of exact recovery of the signal.

Figure 1. Rate of exact reconstruction performance as a function of measurement number.

Figure 2. Rate of exact reconstruction performance as a function of measurement number.

Figure 3. Probability of exact recovery signal with proposed algorithm. The original signal has length of N = 256 with K = 5, 10, 15, 20 non-zero entries.

3.2. Two-Dimensional Signal

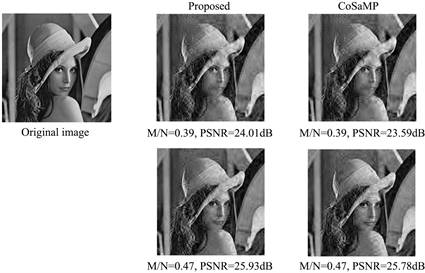

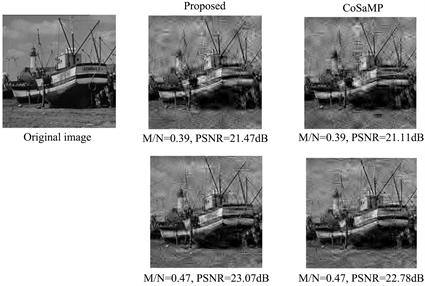

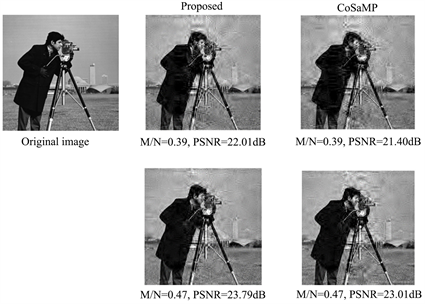

In the two-dimensional image experiment, the wavelet base is used as the sparse basis and the random Gauss matrix is used as the measurement matrix . We use three international standard, i.e. Lena image, Camera image and Barche image, as the input to conduct the comparison analysis of different algorithms. And the peak signal-to-noise ratio (PSNR) is used to evaluate the performance of the improved algorithm and the original algorithm, which is defined as follows

(4)

where x is the original image, is the reconstruction image. Sampling ratio is M/N. The size of each image is 256 × 256.

From Figure 4, we can know that the proposed algorithm is superior to the original algorithms CoSaMP. And we can see that the proposed algorithm has a better visual effect for different types of images. The improved algorithm can accurately recover the original signal.

Figure 4. Visual comparison of image reconstruction by different algorithms.

4. Conclusion

Based on a comprehensive study of the sparse signal reconstruction algorithm, we find that CoSaMP cannot dynamically select the support set to efficiently reconstruct the original signal. To solve this problem, we use the residual update strategy to improve the backtracking index selection. Thereby the number of backtracking indexes is adjusted according to the direction in which the residual is decreasing. The proposed algorithm can significantly improve the performance of CoSaMP by adjusting dynamically the number of indexes which are added to the support set in each iteration. Different simulation results show that the improved algorithm has better reconstruction performance for both one-dimensional signals and two-dimensional signals compared with other classical algorithms.

Acknowledgements

This research has been supported by National Natural Science Foundation of China under Grant No. 61771262, and Science and Technology Major Project and Engineering of Tianjin China under Grant No. 2017ZXHLNC00100.

Conflicts of Interest

The authors declare no conflicts of interest regarding the publication of this paper.

Cite this paper

Lu, D.X., Sun, G.L., Li, Z.Z. and Wang, S.J. (2019) Improved CoSaMP Reconstruction Algorithm Based on Residual Update. Journal of Computer and Communications, 7, 6-14. https://doi.org/10.4236/jcc.2019.76002

References

- 1. Donoho, D.L. (2006) Compressed Sensing. IEEE Transactions on Information Theory, 52, 1289-1306. https://doi.org/10.1109/TIT.2006.871582

- 2. Candes, E.J. and Wakin, M.B. (2008) An Introduction to Compressive Sampling. IEEE Signal Processing, 25, 21-30. https://doi.org/10.1109/MSP.2007.914731

- 3. Ghahremani, M. and Ghassemian, H. (2015) Remote Sensing Image Fusion Using Ripplet Transform and Compressed Sensing. IEEE Geoscience and Remote Sensing Letters, 12, 502-506. https://doi.org/10.1109/LGRS.2014.2347955

- 4. Wei, J., Huang, Y., Lu, K., et al. (2016) Nonlocal Low-Rank-Based Compressed Sensing for Remote Sensing Image Reconstruction. IEEE Geoscience and Remote Sensing Letters, 13, 1557-1561. https://doi.org/10.1109/LGRS.2016.2595863

- 5. Zheng, H., Yang, F., Tian, X., et al. (2015) Data Gathering with Compressive Sensing in Wireless Sensor Networks: A Random Walk Based Approach. IEEE Transactions on Parallel and Distributed Systems, 26, 35-44. https://doi.org/10.1109/TPDS.2014.2308212

- 6. Ning, K. (2014) Compressed Sensing Image Processing Based on Stagewise Orthogonal Matching Pursuit. Sensors & Transducers, 181, 134.

- 7. Shi, M., Li, L. and Xu, J. (2018) Backtracking Stagewise Weak Orthogonal Matching Pursuit Algorithm Based on Fuzzy Threshold. Video Engineering, No. 2, 2.

- 8. Goyal, P. and Singh, B. (2018) Subspace Pursuit for Sparse Signal Reconstruction in Wireless Sensor Networks. Procedia Computer Science, 125, 228-233. https://doi.org/10.1016/j.procs.2017.12.031

- 9. Kim, K.S. and Chung, S.Y. (2019) Greedy Subspace Pursuit for Joint Sparse Recovery. Journal of Computational and Applied Mathematics, 352, 308-327. https://doi.org/10.1016/j.cam.2018.11.027

- 10. Becerra, J.A., Herrera, D., Madero-Ayora, M.J., et al. (2018) Sparse Model Selection of Digital Predistorters Using Subspace Pursuit. 13th European Microwave Integrated Circuits Conference, Madrid, 24-26 September 2018, 190-193. https://doi.org/10.23919/EuMIC.2018.8539910

- 11. Dai, W. and Milenkovic, O. (2009) Subspace Pursuit for Compressive Sensing Signal Reconstruction. IEEE Transactions on Information Theory, 55, 2230-2249. https://doi.org/10.1109/TIT.2009.2016006

- 12. Han, N. and Song, Z. (2018) Bayesian Multiple Measurement Vector Problem with Spatial Structured Sparsity Patterns. Digital Signal Processing, 75, 184-201. https://doi.org/10.1016/j.dsp.2018.01.015

- 13. Li, W., Liu, F., Jiao, L., Hao, H. and Yang, S. (2016) A Group Matching Pursuit for Image Reconstruction. Image Communication, 49, 47-62. https://doi.org/10.1016/j.image.2016.10.002

- 14. Peng, J., Wu, A. and Zhu, L. (2018) A New Quadratic Optimization Approach to Beam Angle Optimization for Fixed-Field Intensity Modulated Radiation Therapy Using Compressed Sensing.

- 15. Dirksen, S., Lecué, G. and Rauhut, H. (2018) On the Gap between Restricted Isometry Properties and Sparse Recovery Conditions. IEEE Transactions on Information Theory, 64, 5478-5487. https://doi.org/10.1109/TIT.2016.2570244

- 16. Cohen, A., Dahmen, W. and DeVore, R. (2017) Orthogonal Matching Pursuit under the Restricted Isometry Property. Constructive Approximation, 45, 113-127. https://doi.org/10.1007/s00365-016-9338-2