Applied Mathematics

Vol.07 No.09(2016), Article ID:66925,26 pages

10.4236/am.2016.79086

Proximal Methods for Elliptic Optimal Control Problems with Sparsity Cost Functional

Andreas Schindele, Alfio Borzì

Institut für Mathematik, Universität Würzburg, Würzburg, Germany

Copyright © 2016 by authors and Scientific Research Publishing Inc.

This work is licensed under the Creative Commons Attribution International License (CC BY).

http://creativecommons.org/licenses/by/4.0/

Received 17 March 2016; accepted 27 May 2016; published 30 May 2016

ABSTRACT

First-order proximal methods that solve linear and bilinear elliptic optimal control problems with a sparsity cost functional are discussed. In particular, fast convergence of these methods is proved. For benchmarking purposes, inexact proximal schemes are compared to an inexact semismooth Newton method. Results of numerical experiments are presented to demonstrate the computational effectiveness of proximal schemes applied to infinite-dimensional elliptic optimal control problems and to validate the theoretical estimates.

Keywords:

Optimal Control, Elliptic PDE, Nonsmooth Optimization, Proximal Method, Semismooth Newton Method

1. Introduction

In recent years, a great research effort has been made to solve optimization problems governed by Partial Differential Equations (PDEs); see, e.g., [1] - [3] and references therein. In many cases, this research has focused on objective functionals with differentiable  terms and non-smoothness resulted from the presence of control and state constraints. However, more recently, the investigation of

terms and non-smoothness resulted from the presence of control and state constraints. However, more recently, the investigation of  cost functionals has become a central topic in PDE-based optimization [4] - [6] , because they give rise to sparse controls that are advantageous in many applications like optimal actuator placement [4] or impulse control [7] .

cost functionals has become a central topic in PDE-based optimization [4] - [6] , because they give rise to sparse controls that are advantageous in many applications like optimal actuator placement [4] or impulse control [7] .

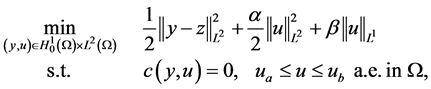

A representative formulation of optimal control problems with  control costs is the following

control costs is the following

(1.1)

(1.1)

where  represents a PDE for the state y including the control u. This problem has been discussed in [4] [5] for the case where

represents a PDE for the state y including the control u. This problem has been discussed in [4] [5] for the case where  represents a linear elliptic operator. Nonlinear PDE constraints have been considered in [6] . However, in these references a linear control mechanism is discussed. Concerning the optimization methodology for (1.1), the semi-smooth Newton method has been the solver of choice in [4] - [6] .

represents a linear elliptic operator. Nonlinear PDE constraints have been considered in [6] . However, in these references a linear control mechanism is discussed. Concerning the optimization methodology for (1.1), the semi-smooth Newton method has been the solver of choice in [4] - [6] .

On the other hand, in the field of signal acquisition and reconstruction, l1-based optimization and sparsity have been exploited to successfully recover “functions” from few samples; see, e.g., [8] - [10] .

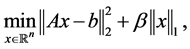

In this framework, it was shown [11] that l1-based inverse problems in signal recovery can be very efficiently solved by proximal methods. Nowadays, these iterative schemes are the method of choice in magnetic resonance imaging and a special proximal method called “Fast Iterative Soft Thresholding Algorithm” (FISTA) [12] is con- sidered the state-of-the-art method for solving finite-dimensional optimization problems of the following form

where the rectangular matrix A represents a blur operator.

We remark that the research and successful application of proximal schemes are attracting attention of many scientists and practitioners, which result in many new developments in this field. We refer to, e.g., [13] for recent results and additional references.

The purpose of our work is to contribute to the field of PDE-based optimization with  control costs by investigating proximal methods in this infinite-dimensional setting. In particular, we aim at implementing and analysing proximal schemes for solving (1.1) that exploit first-order optimality conditions. Our investigation is motivated by the fact that proximal methods may have a computational performance that is comparable to that of semismooth Newton methods. However, in contrast to the latter, proximal schemes do not require the con- struction of second-order derivatives and the implementation of, e.g., a Krylov solver.

control costs by investigating proximal methods in this infinite-dimensional setting. In particular, we aim at implementing and analysing proximal schemes for solving (1.1) that exploit first-order optimality conditions. Our investigation is motivated by the fact that proximal methods may have a computational performance that is comparable to that of semismooth Newton methods. However, in contrast to the latter, proximal schemes do not require the con- struction of second-order derivatives and the implementation of, e.g., a Krylov solver.

For our investigation, we consider (1.1) with elliptic operators and linear and bilinear control mechanisms. Notice that the latter case has been a much less investigated problem. One of our main contributions is to prove convergence for all variants of the proximal schemes that we discuss in this paper. In particular, we prove an  convergence rate of the value of reduced cost functional, where k is the number of proximal iterations. This notion of convergence is used in l1-based optimization and in some application fields [14] .

convergence rate of the value of reduced cost functional, where k is the number of proximal iterations. This notion of convergence is used in l1-based optimization and in some application fields [14] .

We remark that many arguments in our analysis are similar to those presented in the finite-dimensional case. However, some additional arguments are necessary in infinite dimensions, especially regarding the structure of our differential constraints and the discussion of our inexact proximal schemes. We refer to [13] for further results concerning the formulation of proximal schemes for infinite-dimensional optimization problems from a different perspective.

In the next section, we discuss linear and bilinear elliptic optimal control problems, where for completeness, some conditions for the existence of a unique control-to-state operators are considered. Section 3 is devoted to optimal control problems with sparsity costs and governed by elliptic equations with linear and bilinear control mechanisms. We discuss conditions for convexity of the bilinear problem and state the optimality conditions. In Section 4, we present a Fast Inexact Proximal method (FIP) that represents an infinite-dimensional extension of the FISTA method. In Section 5, the convergence rate of this method is proven to be . In Section 6, an inexact semismooth Newton method in function spaces is presented as the state of the art method for comparison purposes. For completeness, the theory of this method is extended to the case of elliptic bilinear control pro- blems. A numerical comparison of the FIP and Semismooth-Newton methods is presented in Section 7. A section of conclusion completes this work.

. In Section 6, an inexact semismooth Newton method in function spaces is presented as the state of the art method for comparison purposes. For completeness, the theory of this method is extended to the case of elliptic bilinear control pro- blems. A numerical comparison of the FIP and Semismooth-Newton methods is presented in Section 7. A section of conclusion completes this work.

2. Elliptic Models with Linear and Bilinear Control Mechanisms

In this section, we discuss elliptic PDE models with linear and bilinear control structures. Notice that these models are already discussed in many references; see, e.g., [2] [15] - [17] . However, in this section, we report the main results required for our analysis of convergence of the proposed proximal methods.

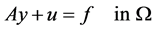

Consider the following boundary value problem

(2.1)

(2.1)

(2.2)

(2.2)

where , with

, with , is a bounded domain and

, is a bounded domain and . The operator

. The operator

such that

for some

Further, we consider the following bilinear elliptic control problem

In both linear and bilinear control settings, we require

where

Now, we discuss the existence of a unique weak solution to (2.3)-(2.4). For this purpose, we need the Poincaré-Friedrichs lemma and denote with

We denote

Theorem 2.1. Let

Then, there exists a unique weak solution

Proof. The proof is immediate using the Lemma of Lax-Milgram and the following result

With

line the Poincaré-Friedrichs was used. Hence,

Remark 2.1. In the case of

Remark 2.2. In order to ensure a unique solution, we require condition (2.6) for the choice of

Next, we recall the following theorem stating higher regularity of solutions to (2.3)-(2.4); see ( [18] , Theorem 4.3.1.4).

Theorem 2.2. Let

for some appropriate constants

Remark 2.3. Because

Theorem 2.1 and Theorem 2.2 ensure the existence of a unique control-to-state operator

where in the linear case

Remark 2.4. The control-to-state operator

Definition 2.1. (Q-differentiability). Let

In the following, we omit the index U and write

The Q-derivatives of

Lemma 2.3. The control-to-state-operator

i)

ii)

iii) The following inequalities hold

Proof. Part (i) and (ii) can be shown by direct calculation (see ( [16] , Lemma 2.9). So part (iii) is left to be proved. If

for

where the constants depend on the measure of

for

Therefore, we obtain (2.14), which completes the proof. □

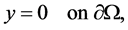

3. Elliptic Optimal Control Problems with Sparsity Cost Functional

In this section, we discuss optimal control problems governed by the linear- and bilinear-control elliptic systems discussed in the previous section. We consider the following cost functional

where

The nondifferentiable part

functional

We have

In particular, in the linear case, we have

We conclude that the reduced functional is strictly convex in the linear case.

In the bilinear case, we have a non-convex optimization problem. However, local convexity can be guar- anteed under some conditions. To be specific, we chose the sufficient condition stated in the following theorem.

Lemma 3.1. Let

then the reduced functional

Proof. Since

convex in u. Therefore we show that the reduced Hessian is positive definite in

and thus

We remark that the result of Lemma 3.1 is well known. It expresses local convexity of the reduced objective when the state function is sufficiently close to the target and the weight of the quadratic

Assumption 1. We assume that (3.4) holds for all

Because of Lemma 2.3, this assumption holds if the regularization parameter

In the next step, the first-order optimality conditions for (3.2) are derived. First, we need the definition of the subdifferential.

Definition 3.1. Let H be a Hilbert space and

the subdifferential of F in u.

From ( [20] , Remark 3.2), we obtain that

where

Theorem 3.2. The optimal solution

If one introduces the parameter

where

where

In the linear-control case, the Equation (3.13) becomes the following

where

We summarize the previous considerations into the following theorem.

Theorem 3.3. (Linear optimality conditions) The optimal solution

Furthermore, the reduced gradient and the reduced Hessian of

Notice that with an abuse of notation, we denote the reduced Hessian with

For the bilinear-control system, we have

By setting

We summarize the previous considerations into the following theorem.

Theorem 3.4. (Bilinear optimality system) The optimal solution

Furthermore, the explicit reduced gradient and the reduced Hessian of

and

4. Proximal Methods for Elliptic Control Problems

In this section, we discuss first-order proximal methods to solve our linear and bilinear optimal control problems. The starting point to discuss proximal methods consists of identifying a smooth and a nonsmooth part in the reduced objective

where, we assume

where

appropriate conditions discussed in the previous section, and it has Lipschitz-continuous gradient that we prove in the following lemma.

Lemma 4.1. The functional

Proof. For the linear-control case, we have

such that we have the Lipschitz-constant

For the bilinear-control case, we use the mean value theorem. There exists a

for the last inequality, we use (2.7), (2.13), (2.14), which completes the proof. □

The following lemma is essential in the formulation of proximal methods.

Lemma 4.2. Let

Proof.

□

Notice that

Further, notice that also in the case of choosing

The strategy of the proximal scheme is to minimize an upper bound of the objective functional at each iteration, instead of minimizing the functional directly. Lemma 4.2 gives us the following upper bound for all

where, we have equality if

Now, consider (4.6) and recall that

Lemma 4.3. The following equation holds

where the projected soft thresholding function is defined as follows

Proof. There exists a

Now, we show that

・

It follows that

・

It follows that

・

It follows that

・

It follows that

・

It follows that

□

Based on this lemma, we conclude that the solution to (4.6) is given by

thus obtaining an approximation to the optimal u sought. Therefore we can use this result to define an iterative scheme as follows

starting from a given

This scheme is discussed in [9] [12] for the case of finite-dimensional optimization problems. Notice that the iterated thresholding scheme discussed in [9] coincides with Algorithm 1 for the special case

Theorem 4.4. Let

In [22] , an acceleration strategy for proximal methods applied to convex optimization problems fulfilling (4.4) is formulated, which improves the rate of convergence of these schemes from

and

Correspondingly, the optimization variable

This procedures is summarized in the following algorithm

The following convergence result represents an extension of ( [12] , Theorem 4.4). We have

Theorem 4.5. Let

Algorithm 1 and Algorithm 2 require the calculation of

However, the exact inversion of a discretized elliptic differential operator A may become too expensive. Therefore one has to use iterative methods; e.g., the conjugate gradient method [23] . For this reason, we discuss an inexact version of the proximal scheme, where the equality constraints and the corresponding adjoint equa- tions are solved up to a given tolerance quantified by

Hence, there exists an

We denote the inexact inversion method for the problem

With this preparation, we formulate our inexact proximal (IP) scheme with Algorithm 4.

We also formulate the accelerated (fast) version of our IP scheme in Algorithm 5. We refer to it as the FIP method.

5. Convergence Analysis of Inexact Proximal Methods

In this section, we investigate the convergence of our IP and FIP schemes. Notice that our analysis differs from that presented in [12] where finite-dimensional problems and exact inversion are considered. We start investigat- ing the error of the inexact gradient

Lemma 5.1. The following estimate holds

for some c > 0.

Proof. In the linear case, we have

where

In the bilinear case, we have

2.1 implies that the solution

We also have

Using (4.10) there exist the errors

where

For the three last inequalities, we use (5.3), (2.7), and

We refer to the estimation error of the inexact gradient in step k as follows

Now, we define

and

such that one step of Algorithm 4, resp. Algorithm 5, can be written as follows

In order to prove the convergence of the IP method, we need the following two lemmas.

Lemma 5.2. For any

Proof. This is immediate from the variational inequality of (5.5). For a proof see, e.g., [20] . □

Lemma 5.3. Let

Proof. From (4.5), we have

and therefore

Now since

Summing the above inequalities gives

so using (5.6), (5.8), and the definition of

□

Now, we prove a

Theorem 5.4. Let

Proof. Using Lemma 5.3 with

Summing this inequality over

Using again Lemma 5.3 with

Multiplying this inequality by n and summing again over

which simplifies to the following

Adding (5.10) and (5.11) together, we get

and hence with

□

Next, we present a convergence result for the FIP method. For this purpose, we need the following lemma.

Lemma 5.5. Let

with

Proof. We apply Lemma 4.2 at the points

where we used the fact that

Multiplying this inequality by

Applying the Pythagoras relation

Therefore, with

it follows that

□

We also have the following lemmas.

Lemma 5.6. The positive sequence

Proof. The proof is immediate by mathematical induction. □

Lemma 5.7. Let

Then,

Proof. The proof is immediate by mathematical induction. □

Now, we can prove a convergence rate of

Theorem 5.8. Let

Proof. Let us define the quantities

As in Lemma 5.5, we define

and hence assuming that

which combined with

Furthermore with Lemma 5.6 and

which combined with (5.13) and

What remains to be proved is the validity of the relation

Applying Lemma 4.2 to the points

that is

Remark 5.1. The IP and FIP methods converge also replacing L with an upper bound of it. In particular, we can prove

We complete this section formulating a fast inexact proximal scheme where the Lipschitz constant L is obtained by forward tracking, (nevertheless we call it backtracking as in [12] ), thus avoiding any need to compute the reduced Hessian. Our fast inexact proximal backtracking (FIPB) method is presented in Algorithm 6.

6. The Inexact Semismooth Newton Method

We consider the semismooth Newton method as a benchmark scheme for solving elliptic non-smooth optimal control problems; see, e.g., [4] - [6] . This method is proven to be equivalent to the primal-dual active set method in [24] . The inexact semismooth Newton (ISSN) method is presented in [25] for finite-dimensional problems. In this section, we discuss the ISSN method for infinite-dimensional optimization problems and use it for compari- son with our inexact proximal schemes. To support our use of the ISSN scheme to solve bilinear control pro- blems, we extend two theoretical results in [3] [4] . For the analysis that follows, we need the following defini- tion.

Definition 6.1. Let X and Y be Banach spaces,

for every

This definition is similar to the semismoothness stated in [3] and also known under the name “slant differentiability”; see, e.g., [24] . Now, we discuss the solution of the following nonlinear equation

Theorem 6.1. ( [24] , Theorem 1.1) Suppose that

invertible for all

converges superlinearly to

An inexact version of the SSN scheme discussed in this theorem is formulated in ( [3] , Algorithm 3.19), where the update

However, this procedure is difficult to realize in practice. For this reason, in our ISSN scheme, the “exact”

update step

with

Our ISSN scheme is given in Algorithm 7.

On the basis of the proof of Theorem 3.20 in [3] , we prove the following theorem that states convergence of Algorithm 7. We have

Theorem 6.2. Suppose that

invertible for all

Proof. Let

Next, using

This result, the generalized differentiability of

Hence, for sufficiently small

This gives

clude from (6.6) the following

Our purpose is to solve the nonlinear and nonsmooth equation system (3.13)-(3.14) by the semismooth Newton iteration. We introduce the operator

where

The function

where

Using Theorem 6.2, the following theorem guarantees the superlinear convergence of the semismooth Newton method applied to our problems. To prove this we extend the proof of Theorem 4.3 in [4] .

Theorem 6.3. If (3.4) is fulfilled, then

bounded.

Proof. The linear-control case is investigated in [4] , so we focus on the bilinear case. Define

Now, we define

for

to see that (6.9) is equivalent to

Using

Lax-Milgram-Lemma can be applied to show that (6.9) admits a unique solution

solution satisfies

we use the fact that

7. Numerical Experiments

In this section, we present results of numerical experiments to validate the computational performance of our inexact proximal methods and to demonstrate the convergence rate of

Procedure 1. (Linear case)

1) Choose

2) Set

3) Set

Lemma 7.1. Procedure 1 provides a solution

Proof. We show that the optimality conditions (3.16)-(3.19) in Theorem 3.3 are fulfilled. (3.16)-(3.18) are obviously fulfilled because of 3) in Procedure 1. Now, we consider different cases to show (3.19):

・

・

*

*

・

*

*

□

Procedure 2. (Bilinear case)

1) Choose

2) Set

3) Set

Lemma 7.2. Procedure 2 provides a solution

Proof. The proof is similar to the one of the linear case. □

Next, we specify the elliptic operator, the domain of computation, the choice of

Case 1. (1 dimensional)

Case 2. (2 dimensional)

differences. Then we have

We compare the FIP, FIPB and ISSN schemes in terms of computational time. In the FIP method, we estimate an approximation to the Lipschitz constant

Table 1. Example 1: Comparison of the FIP, FIPB and ISSN methods.

Table 2. Example 2: Comparison of the FIP, FIPB, and ISSN methods.

In order to validate the convergence rate of

We conclude this section considering challenging linear- and a bilinear-control cases. However, the exact solutions are not known. In these cases, the target function is not attainable. We have

Case 3. (Linear case)

Case 4. (Bilinear case)

In Figure 2, we present the optimal controls obtained for the Examples 3 and 4, respectively. Notice that the controls obtained with the FIP, FIPB, and ISSN schemes are indistinguishable. We observe that in the case of a small

8. Conclusion

Inexact proximal schemes for solving linear- and bilinear elliptic optimal control problems were discussed. A complete analysis of these methods was presented and a convergence rate of

Figure 1. Validation of the theoretical upper bound (Theorem 58).

Figure 2. Optimal controls u for the Case 3 (top) and Case 4 (bottom).

Acknowledgements

Supported in part by the Interdisziplinäres Zentrum für Klinische Forschung der Universität Würzburg (IZKF); Project F-254: Parallel Multigrid Imaging and Compressed Sensing for Dynamic 3D Magnetic Resonance Imaging. This publication was supported by the Open Access Publication Fund of the University of Würzburg.

Cite this paper

Andreas Schindele,Alfio Borzì, (2016) Proximal Methods for Elliptic Optimal Control Problems with Sparsity Cost Functional. Applied Mathematics,07,967-992. doi: 10.4236/am.2016.79086

References

- 1. Borzì, A. and Schulz, V. (2011) Computational Optimization of Systems Governed by Partial Differential Equations. SIAM, Philadelphia.

http://dx.doi.org/10.1137/1.9781611972054 - 2. Troltzsch, F. (2009) Optimale Steuerung partieller Differentialgleichungen. Theorie, Verfahren und Anwendungen. Vieweg.

http://dx.doi.org/10.1007/978-3-8348-9357-4 - 3. Ulbrich, M. (2011) Semismooth Newton Methods for Variational Inequalities and Constrained Optimization Problems in Function Spaces. SIAM, Philadelphia.

http://dx.doi.org/10.1137/1.9781611970692 - 4. Stadler, G. (2009) Elliptic Optimal Control Problems with -Control Cost and Applications for the Placement of Control Devices. Computational Optimization and Applications, 44, 159-181.

http://dx.doi.org/10.1007/s10589-007-9150-9 - 5. Wachsmuth, G. and Wachsmuth, D. (2010) Convergence and Regularization Results for Optimal Control Problems with Sparsity Function. ESAIM: Control Optimisation and Calculus of Variations, 17, 858-886.

http://dx.doi.org/10.1051/cocv/2010027 - 6. Casas, E., Herzog, R. and Wachsmuth, G. (2012) Optimality Conditions and Error Analysis of Semilinear Elliptic Control Problems with Cost Functional. SIAM Journal on Optimization, 22, 795-820.

http://dx.doi.org/10.1137/110834366 - 7. Ciaramella, G. and Borzì, A. (2016) A LONE Code for the Sparse Control of Quantum Systems. Computer Physics Communications, 200, 312-323.

http://dx.doi.org/10.1016/j.cpc.2015.10.028 - 8. Candes, E., Romberg, J. and Tao, T. (2006) Stable Signal Recovery from Incomplete and Inaccurate Measurements. Communications on Pure and Applied Mathematics, 59, 1207-1223.

http://dx.doi.org/10.1002/cpa.20124 - 9. Defrise, M., Daubechies, I. and Mol, C.D. (2004) An Iterative Thresholding Algorithm for Linear Inverse Problems with a Sparsity Constraint. Communications on Pure and Applied Mathematics, 57, 1413-1457.

http://dx.doi.org/10.1002/cpa.20042 - 10. Donoho, D.L. and Elad, M. (2003) Maximal Sparsity Representation via Minimization. Proceedings of the National Academy of Sciences of the United States of America, 100, 2197-2202.

http://dx.doi.org/10.1073/pnas.0437847100 - 11. Combettes, P.L. and Wajs, V.R. (2005) Signal Recovery by Proximal Forward-Backward Splitting. Multiscale Modeling & Simulation, 4, 1168-1200.

http://dx.doi.org/10.1137/050626090 - 12. Beck, A. and Teboulle, M. (2009) A Fast Iterative Shrinkage-Thresholding Algorithm for Linear Inverse Problems. SIAM Journal on Imaging Sciences, 2, 183-202.

http://dx.doi.org/10.1137/080716542 - 13. Lorenz, A., Bredies, K. and Reiterer, S. (2015) Minimization of Non-Smooth, Non-Convex Functionals by Iterative Thresholding. Journal of Optimization Theory and Applications, 165, 78-112.

http://dx.doi.org/10.1007/s10957-014-0614-7 - 14. Wech, T., Seiberlich, N., Schindele, A., Grau, V., Diffley, L., Gyngell, M.L., Borzì, A., Kostler, H. and Schneider, J.E. (2016) Development of Real-Time Magnetic Resonance Imaging of Mouse Hearts at 9.4 Tesla—Simulations and First Application. IEEE Transactions on Medical Imaging, 35, 912-920.

http://dx.doi.org/10.1109/TMI.2015.2501832 - 15. Evans, L.C. (2010) Partial Differential Equations. 2nd Edition, Department of Mathematics, University of California, Berkeley, American Mathematical Society.

http://dx.doi.org/10.1090/gsm/019 - 16. Kr?ner, A. and Vexler, B. (2009) A Priori Error Estimates for Elliptic Optimal Control Problems with a Bilinear State Equation. Journal of Computational and Applied Mathematics, 230, 781-802.

http://dx.doi.org/10.1016/j.cam.2009.01.023� - 17. Lions, J. (1971) Optimal Control of Systems Governed by Partial Differential Equations. Springer, Berlin.

- 18. Grisvard, P. (1985) Elliptic Problems in Nonsmooth Domains. Pitman Publishing, Boston.

- 19. Adams, R.A. (1975) Sobolev Spaces. Pure and Applied Mathematics. Academic Press, Inc., Cambridge.

- 20. Ekeland, I. and Témam, R. (1999) Convex Analysis and Variational Problems. Society for Industrial and Applied Mathematics, Philadelphia.

http://dx.doi.org/10.1137/1.9781611971088 - 21. Wilkinson, J.H. (1988) The Algebraic Eigenvalue Problem. Oxford University Press, Oxford.

- 22. Nesterov, Y.E. (1983) A Method for Solving the Convex Programming Problem with Covergence Rate . Doklady Akademii Nauk SSSR, 269, 543-547.

- 23. Hestenes, M.R. and Stiefel, E. (1952) Methods of Conjugate Gradients for Solving Linear Systems. Journal of Research of the National Bureau of Standards, 49, 409-436.

http://dx.doi.org/10.6028/jres.049.044 - 24. Hintermüller, M., Ito, K. and Kunisch, K. (2002) The Primal-Dual Active Set Strategy as a Semismooth Newton Method. SIAM Journal on Optimization, 13, 865-888.

http://dx.doi.org/10.1137/S1052623401383558 - 25. Martínez, J. and Qi, L. (1995) Inexact Newton Methods for Solving Nonsmooth Equations. Journal of Computational and Applied Mathematics, 60, 127-145.