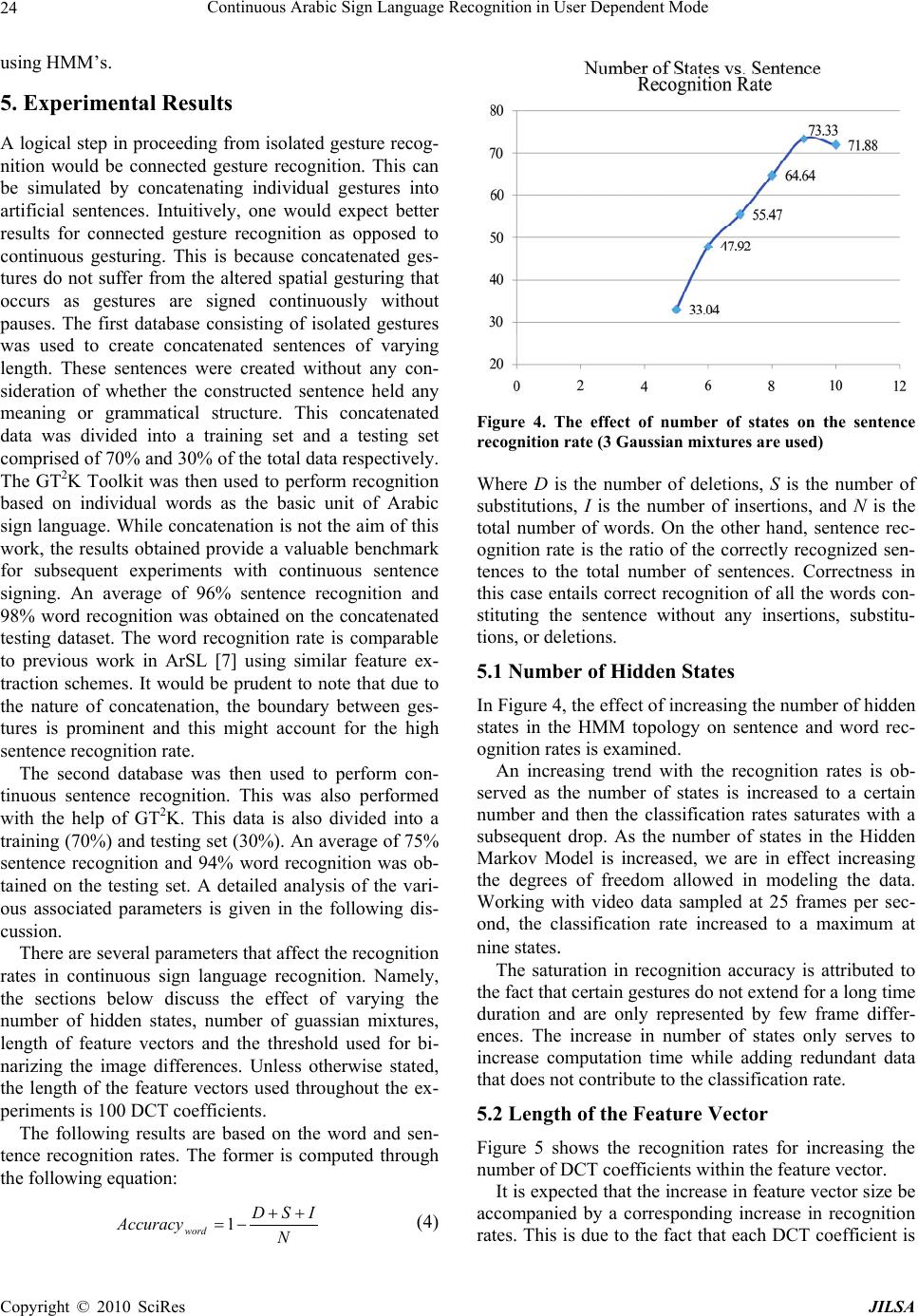

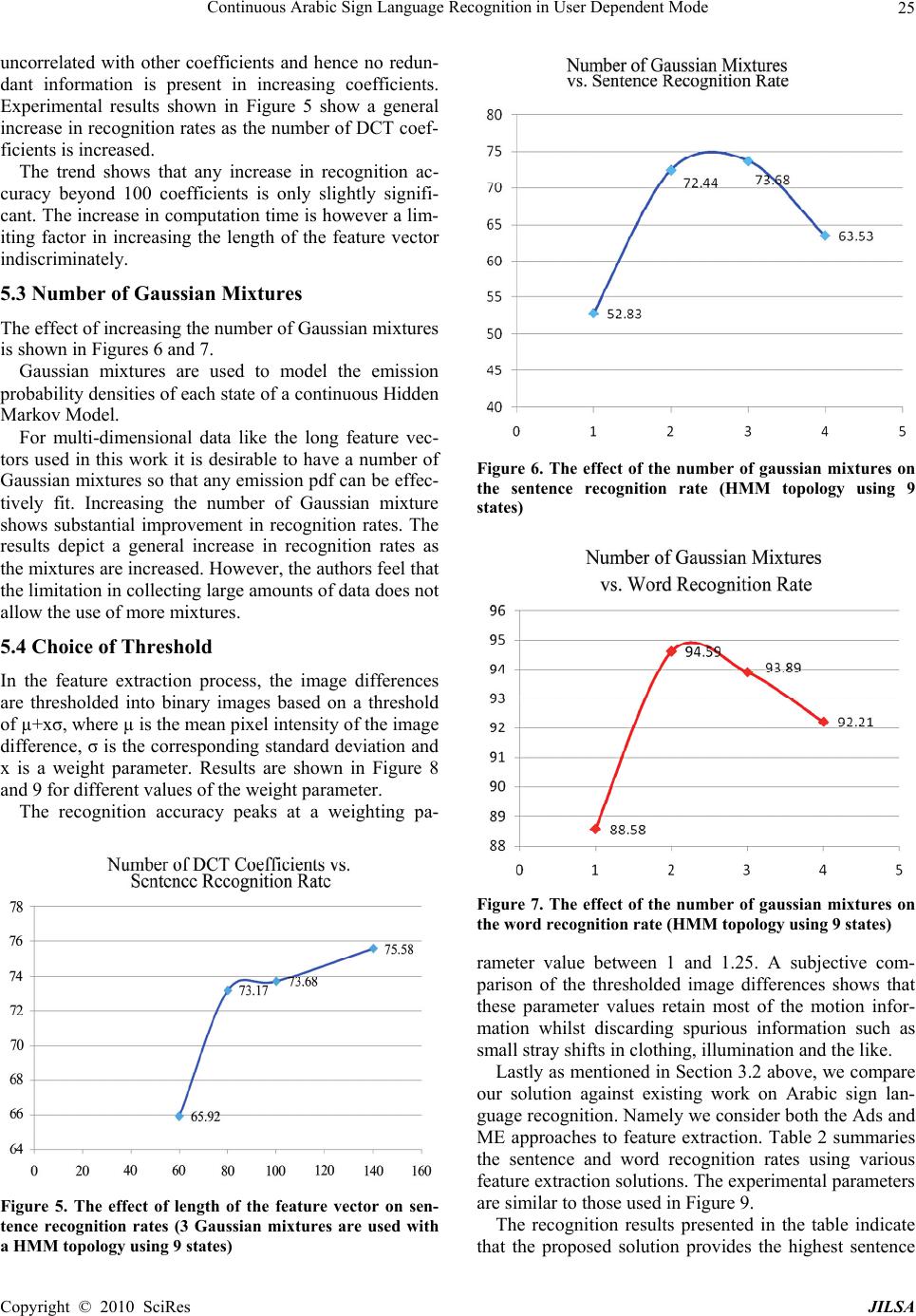

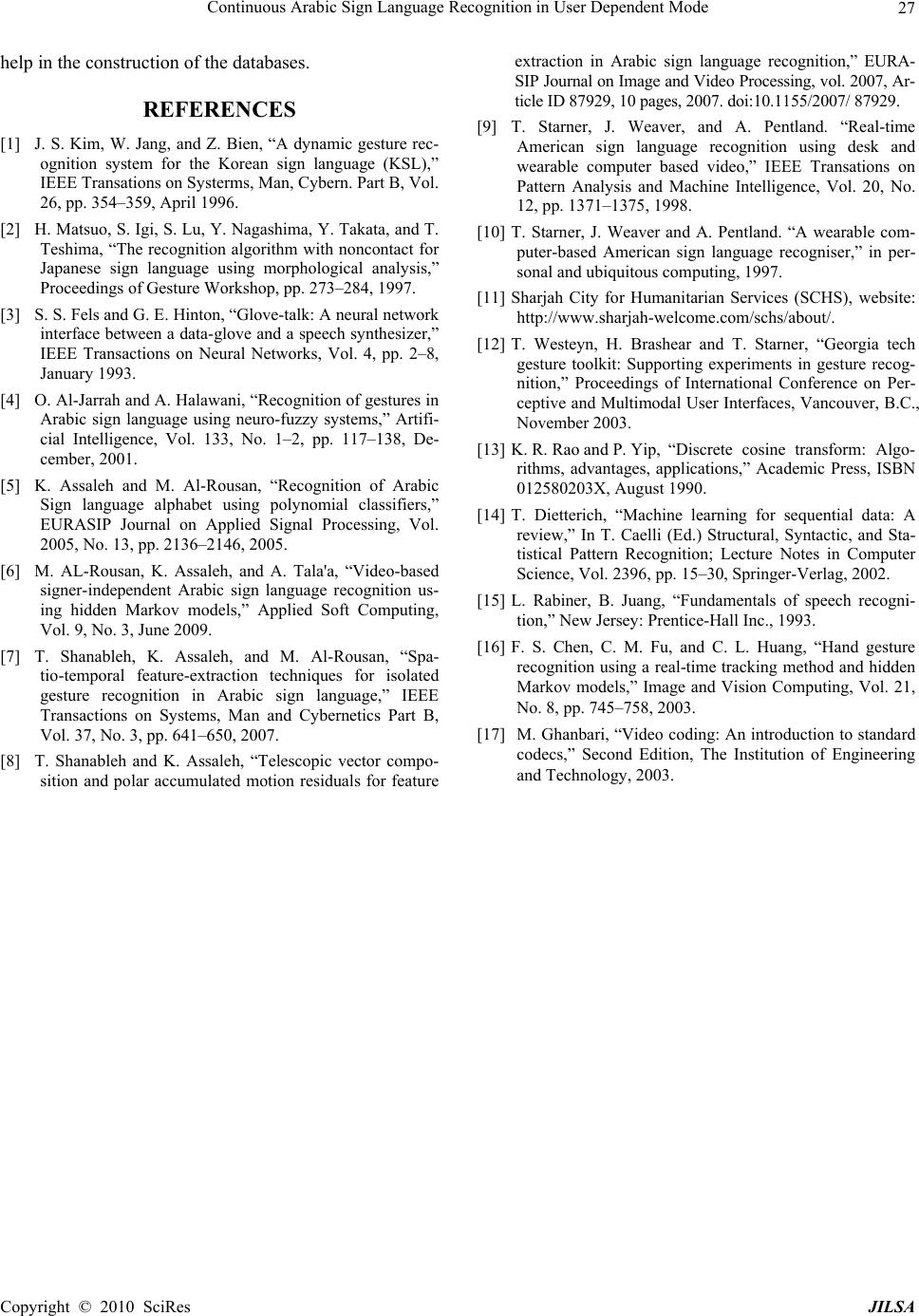

Journal of Intelligent Learning Systems and Applications, 2010, 2: 19-27
doi:10.4236/jilsa.2010.21003 Published Online February 2010 (http://www.SciRP.org/journal/jilsa)
Copyright © 2010 SciRes JILSA

Continuous Arabic Sign Language Recognition in User Dependent Mode

K. Assaleh1, T. Shanableh2, M. Fanaswala1, F. Amin1, H. Bajaj1

1Department of Electrical Engineering, American University of Sharjah, Sharjah, UAE; 2Department of Computer Science and Engineering, American University of Sharjah, Sharjah, UAE.
Email: kassaleh@aus.edu

Received October 4, 2009; accepted January 13, 2010.

ABSTRACT

Arabic Sign Language recognition is an emerging field of research. Previous attempts at automatic vision-based recognition of Arabic Sign Language mainly focused on finger spelling and on recognizing isolated gestures. In this paper we report the first continuous Arabic Sign Language recognition system, which builds on existing research in feature extraction and pattern recognition. The development of the presented work required collecting a continuous Arabic Sign Language database, which we designed and recorded in cooperation with a sign language expert. We intend to make the collected database available to the research community. Our system, which is based on spatio-temporal feature extraction and hidden Markov models, achieves an average word recognition rate of 94% despite the use of a high-perplexity vocabulary and an unrestricted grammar. We compare our proposed work against existing sign language techniques based on accumulated image differences and motion estimation. The experimental results show that the proposed work outperforms existing solutions in terms of recognition accuracy.

Keywords: Pattern Recognition, Motion Analysis, Image/Video Processing and Sign Language

1. Introduction

The growing popularity of vision-based systems has led to a revolution in gesture recognition technology.
Vision-based gesture recognition systems are primed for applications such as virtual reality, multimedia gaming and hands-free interaction with computers. Another popular application is sign language recognition, which is the focus of this paper.

There are two main directions in sign language recognition. Glove-based systems use motion sensors to capture gesture data [1–3]. While this data is more attractive to work with, the gloves are expensive and cumbersome devices that detract from the naturalness of the human-computer interaction. Vision-based systems, on the other hand, provide a more natural environment within which to capture the gesture data. The drawback of this approach is that working with images requires intelligent feature extraction techniques in addition to image processing techniques such as segmentation, which may add to the computational complexity of the system.

Note that while respectable results have been obtained in the domains of isolated gesture recognition and finger spelling, research on continuous Arabic sign language recognition is non-existent.

The work in [4] developed a recognition system for the Arabic Sign Language (ArSL) alphabet using a collection of Adaptive Neuro-Fuzzy Inference Systems (ANFIS), a form of supervised learning. The authors used images of bare hands instead of colored gloves to allow the user to interact with the system conveniently. The feature set comprised the lengths of vectors selected to span the fingertip region, and training was accomplished with a hybrid learning algorithm, resulting in a recognition accuracy of 93.55%. Likewise, [5] reported classification results for the Arabic sign language alphabet using polynomial classifiers. That work reported superior results when compared with the authors' previous work using ANFIS on the same dataset and feature extraction techniques.
Marked advantages of polynomial classifiers include their computational scalability, lower storage requirements and the absence of any need for iterative training. This work required the participants to wear gloves with colored tips while performing the gestures in order to simplify the image segmentation stage. The authors extracted 30 features involving the relative position and orientation of the fingertips with respect to the wrist and to each other. The resulting system achieved 98.4% recognition accuracy on training data and 93.41% on test data.

Sign language recognition of words or gestures, as opposed to alphabets, depends on analyzing a sequence of still images with temporal dependencies. Hence HMMs are a natural choice for model training and recognition, as reported in [6]. Nonetheless, the work in [7] presented an alternative technique for feature extraction from sequential data. Working with isolated ArSL gestures, the authors eliminate the temporal dependency of the data by accumulating successive prediction errors into one image that represents the motion information. This removal of temporal dependency allows for simple classification methods with lower computational and storage requirements. Experiments using k-Nearest Neighbors and Bayesian classifiers resulted in 97 to 100% isolated gesture recognition. Variations of the work in [7] include the use of block-based motion estimation in the feature extraction process. The resultant motion vectors are used to represent the intensity and directionality of the gestures' motion, as reported in [8].

Other sign languages, such as American Sign Language, have been researched and documented more thoroughly. A common approach in American Sign Language Recognition (ASLR) of continuous gestures is to use Hidden Markov Models as classifier models. Hidden Markov Models are an ideal choice because they allow modeling of the temporal evolution of the gesture.
In part, the success of HMMs in speech recognition has made them an obvious choice for gesture recognition. Research by Starner and Pentland [9] uses HMMs to recognize continuous sentences in American Sign Language, achieving a word accuracy of 99.2%. Users were required to wear colored gloves, and an 8-element feature set was extracted, comprising the hands' positions, the angle of the axis of least inertia, and the eccentricity of the bounding ellipse. Lastly, linguistic rules and grammar were used to reduce the number of misclassifications.

Another research study by Starner and Pentland [10] dealt with developing a real-time ASLR system that uses a camera to detect bare hands and recognize continuous sentence-level sign language. Experimentation involved two systems: the first used a desk-mounted camera to acquire video and attained 92% recognition, and the second mounted the camera in the user's cap and achieved an accuracy of 98%. This work was based on limited vocabulary data, employing a 40-word lexicon. The authors do not present sentence recognition rates for comparison. Only word recognition and accuracy rates are reported.

This paper is organized as follows. Section 2 describes the Arabic sign language database constructed and used in the work. The methodology followed is described in Section 3. The classification tool used is discussed in Section 4. The results are discussed in Section 5. Concluding remarks, along with a primer on future work, are presented in Section 6.

2. The Dataset

Arabic Sign Language is the language of choice amongst the hearing and speech impaired community in the Middle East and most Arabic speaking countries. This work involves two different databases: one for isolated gesture recognition and another for continuous sentence recognition. Both datasets were collected in collaboration with Sharjah City for Humanitarian Services (SCHS) [11]. No restriction on clothing or background was imposed.
The first database was compiled for isolated gesture recognition, as reported in [7]. The dataset consists of 3 signers performing 23 gestures. Each signer was asked to repeat each gesture a total of 50 times over 3 different sessions, resulting in a total of 150 repetitions of each gesture. The gestures are chosen from the greeting section of the Arabic sign language.

The second database is of relatively high perplexity, consisting of an 80-word lexicon from which 40 sentences were created. No restrictions are imposed on grammar or sentence length. The sentences and words pertain to common situations in which handicapped people might find themselves. The dataset itself consists of 19 repetitions of each of the 40 sentences performed by only one user. The frame rate was set to 25 Hz with a spatial resolution of 720×528. The list of sentences is given in Table 1. Note that this database is the first fully labeled and segmented dataset for continuous Arabic Sign Language. The entire database can be made available on request.

A required step in all supervised learning problems is the labeling stage, where the classes are explicitly marked for the classifier training stage. For continuous sentence recognition, not only do the sentences have to be labeled, but the individual boundaries of the gestures that make up each sentence have to be explicitly demarcated. This is a time-consuming and repetitive task. Conventionally, a portion of the data is labeled and used as 'bootstrap' data for the classifier, which can then learn the remaining boundaries. For the purposes of creating a usable database, a segmented and fully labeled dataset was created in the Georgia Tech Gesture Recognition Toolkit (GT2K) format [12]. The output of this stage is a single master label file (MLF) that can be used with the GT2K and HTK toolkits.

3. Feature Extraction

In this section we introduce a feature extraction technique suitable for continuous signing.
We also examine some of the existing techniques and adapt them to our application for comparison purposes.

Table 1. List of Arabic sentences with English translations used in the recognition system

| No. | Arabic sentence | English translation |
|---|---|---|
| 1 | ذهبت الى نادي كرة القدم | I went to the soccer club |
| 2 | انا احب سباق السيارات | I love car racing |
| 3 | اشتريت كرة ثمينة | I bought an expensive ball |
| 4 | يوم السبت عندي مباراة كرة قدم | On Saturday I have a soccer match |
| 5 | في النادي ملعب كرة قدم | There is a soccer field in the club |
| 6 | غدا سيكون هناك سباق دراجات | There will be a bike race tomorrow |
| 7 | وجدت كرة جديدة في الملعب | I found a new ball in the field |
| 8 | كم عمر اخيك؟ | How old is your brother? |
| 9 | اليوم ولدت امي بنتا | My mom had a baby girl today |
| 10 | اخي لا يزال رضيعا | My brother is still breast feeding |
| 11 | ان جدي في بيتنا | My grandfather is at our home |
| 12 | اشترى ابني كرة رخيصة | My kid bought an inexpensive ball |
| 13 | قرأت اختي كتابا | My sister read a book |
| 14 | ذهبت امي الى السوق في الصباح | My mother went to the market this morning |
| 15 | هل اخوك في البيت؟ | Is your brother home? |
| 16 | بيت عمي كبير | My uncle's house is big |
| 17 | سيتزوج اخي بعد شهر | In one month my brother will get married |
| 18 | سيطلق اخي بعد شهرين | In two months my brother will get divorced |
| 19 | اين يعمل صديقك؟ | Where does your friend work? |
| 20 | اخي يلعب كرة سلة | My brother plays basketball |
| 21 | عندي أخوين | I have two brothers |
| 22 | ما اسم ابيك؟ | What is your father's name? |
| 23 | كان جدي مريضا في الامس | Yesterday my grandfather was sick |
| 24 | مات ابي في الامس | Yesterday my father died |
| 25 | رأيت بنتا جميلة | I saw a beautiful girl |
| 26 | صديقي طويل | My friend is tall |
| 27 | انا لا آكل قبل النوم | I do not eat close to bedtime |
| 28 | اكلت طعاما لذيذا في المطعم | I ate delicious food at the restaurant |
| 29 | انا احب شرب الماء | I like drinking water |
| 30 | انا احب شرب الحليب في المساء | I like drinking milk in the evening |
| 31 | انا احب اكل اللحم اكثر من الدجاج | I like eating meat more than chicken |
| 32 | اكلت جبنة مع عصير | I ate cheese and drank juice |
| 33 | يوم الاحد القادم سيرتفع سعر الحليب | Next Sunday the price of milk will go up |
| 34 | أكلت زيتونا صباح الامس | Yesterday morning I ate olives |
| 35 | ساشتري سيارة جديدة بعد شهر | I will buy a new car in a month |
| 36 | هو توضأ ليصلي الصبح | He washed for morning prayer |
| 37 | ذهبت الى صلاة الجمعة عند الساعة العاشرة | I went to Friday prayer at 10:00 o'clock |
| 38 | شاهدت بيتا كبيرا بالتلفاز | I saw a big house on TV |
| 39 | في الامس نمت عند الساعة العاشرة | Yesterday I went to sleep at 10:00 o'clock |
| 40 | ذهبت الى العمل في الصباح بسيارتي | I went to work this morning in my car |

3.1 Proposed Feature Extraction

The most crucial stage of any recognition task is the selection of good features. Features that are representative of the individual gestures are desired. Shanableh et al. [7] demonstrated in their earlier work on isolated gesture recognition that a two-tier spatial-temporal feature extraction scheme results in a high word recognition rate, close to 98%. Similar extraction techniques are used in our continuous recognition solution.

First, to represent the motion that takes place as the expert signs a given sentence, pixel-based differences of successive images are computed. Because successive images share a similar background, the difference between two images preserves only the motion between them. These image differences are then converted into binary images by applying an appropriate threshold. A threshold value of µ+xσ is used, where µ is the mean pixel intensity of the image difference, σ is the corresponding standard deviation and x is a weighting parameter. The value of x was determined empirically through subjective evaluation, the criterion being to retain enough motion information while discarding noisy data. Figure 1 shows an example sentence with thresholded image differences. Note that the example sentence is temporally downsampled for illustration purposes.

Figure 1. An example sentence and its motion representation. (a) An image sequence denoting the sentence 'I do not eat close to bed time'. (b) Thresholded image differences of the image sequence in part (a).

Next, a frequency domain transformation such as the Discrete Cosine Transform (DCT) is performed on the binary image differences. The 2-D DCT is given by [13]:

$$F(u,v) = \frac{2}{\sqrt{MN}}\, C(u)\, C(v) \sum_{i=0}^{M-1} \sum_{j=0}^{N-1} f(i,j) \cos\left(\frac{\pi u}{2M}(2i+1)\right) \cos\left(\frac{\pi v}{2N}(2j+1)\right) \qquad (1)$$

where N×M are the dimensions of the input image f and F(u,v) is the DCT coefficient at row u and column v of the DCT matrix. C(u) is a normalization factor equal to $1/\sqrt{2}$ for u=0 and 1 otherwise.

Figure 2. Discrete cosine transform coefficients of a thresholded image difference

In Figure 2, it is apparent that the DCT of a thresholded image difference results in energy compaction, where most of the image information is represented in the top left corner of the transformed image. Subsequently, zig-zag scanning is used to select only a required number of frequency coefficients. This process is also known as zonal coding. The number of coefficients to retain, or the DCT cutoff, is elaborated upon in the experimental results section.

The coefficients obtained in this zig-zag manner make up the feature vector that is used in training the classifier.
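The feature extraction steps of this section (thresholded frame difference, 2-D DCT, zig-zag selection) can be sketched in a few lines of plain Python. This is an illustrative sketch only, not the implementation used in the experiments: the function names, the use of the absolute pixel difference, the strict `>` comparison against the threshold, and the JPEG-style zig-zag ordering are all assumptions made for the example. Images are represented as lists of rows, and the direct DCT sum is only practical for small images.

```python
import math

def threshold_difference(prev, curr, x=1.0):
    """Binarize |curr - prev| using the threshold mu + x * sigma."""
    diff = [[abs(c - p) for p, c in zip(prow, crow)]
            for prow, crow in zip(prev, curr)]
    flat = [v for row in diff for v in row]
    mu = sum(flat) / len(flat)
    sigma = math.sqrt(sum((v - mu) ** 2 for v in flat) / len(flat))
    t = mu + x * sigma
    return [[1 if v > t else 0 for v in row] for row in diff]

def dct2(f):
    """Direct evaluation of the 2-D DCT in Equation (1); f has M rows, N columns."""
    M, N = len(f), len(f[0])

    def c(k):
        # Normalization factor C(k): 1/sqrt(2) for k = 0 and 1 otherwise.
        return 1 / math.sqrt(2) if k == 0 else 1.0

    F = [[0.0] * N for _ in range(M)]
    for u in range(M):
        for v in range(N):
            s = sum(f[i][j]
                    * math.cos(math.pi * u * (2 * i + 1) / (2 * M))
                    * math.cos(math.pi * v * (2 * j + 1) / (2 * N))
                    for i in range(M) for j in range(N))
            F[u][v] = 2 / math.sqrt(M * N) * c(u) * c(v) * s
    return F

def zigzag(F, k):
    """Return the first k coefficients in zig-zag order from the top-left corner."""
    M, N = len(F), len(F[0])
    # Sort by anti-diagonal; alternate the traversal direction on each diagonal.
    order = sorted(((u, v) for u in range(M) for v in range(N)),
                   key=lambda p: (p[0] + p[1],
                                  p[0] if (p[0] + p[1]) % 2 else p[1]))
    return [F[u][v] for u, v in order[:k]]

def feature_vector(prev, curr, k, x=1.0):
    """Zonal-coded DCT features of one thresholded frame difference."""
    return zigzag(dct2(threshold_difference(prev, curr, x)), k)
```

Calling `feature_vector(prev, curr, k=100)` on two successive grayscale frames would give a 100-element vector for that frame pair, matching the feature length used as the default in the experiments; a sentence then becomes a sequence of such vectors.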
3.2 Adapted Feature Extraction Solutions

For completeness, we compare our feature extraction solution to existing work on Arabic sign language recognition. Noteworthy are the Accumulated Differences (ADs) and Motion Estimation (ME) approaches to feature extraction, as reported in [8,16]. In this section we provide a brief review of each of these solutions and explain how it can be adapted to our problem of continuous Arabic sign language recognition.

3.2.1 Accumulated Differences Solution

The motion information of an isolated sign gesture can be computed from the temporal domain of its image sequence through successive image differencing. Let $I^{(j)}_{g,i}$ denote image index j of the ith repetition of a gesture at index g. The Accumulated Differences (ADs) image can be computed by:

$$AD_{g,i} = \sum_{j=1}^{n-1} \delta_j\left(\left| I^{(j)}_{g,i} - I^{(j-1)}_{g,i} \right|\right) \qquad (2)$$

where n is the total number of images in the ith repetition of a sign at index g, and $\delta_j$ is a binary threshold function of the jth frame.

Note that the ADs solution cannot be directly applied to continuous sentences (as opposed to isolated sign gestures), because the gesture boundaries in a sentence are unknown. One solution is to use an overlapping sliding window approach in which a given number of video frame differences are accumulated into one image regardless of gesture boundaries. The window is shifted by one video frame at a time. In the experimental results section we experiment with various window sizes. Examples of such accumulated differences are shown in Figure 3 with a window size of 8 video frames. Notice that the ADs image captures the frame difference between the current and previous video frames and also accumulates the other frame differences within the current window.

Once the ADs image is computed it is then transformed into the DCT domain as described previously.
The DCT coefficients are zonal coded to generate the feature vector.

3.2.2 Motion Estimation Solution

The motion of a video-based sign gesture can also be tracked by means of Motion Estimation (ME). One well-known example is block-based ME, in which the input video frames are divided into non-overlapping blocks of pixels. For each block of pixels, the motion estimation process searches through the previous video frame for the "best match" area within a given search range. The displacement, in terms of pixels, between the current block and its best match area in the previous video frame is represented by a motion vector.

Formally, let C denote a block in the current video frame with b×b pixels at coordinates (m,n). Assuming that the maximum motion displacement is w pixels per video frame, the motion estimation process finds the best match area P within a (b+2w)×(b+2w) search area of the previous video frame, which contains (2w+1)² distinct overlapping b×b blocks. The area in the previous video frame that minimizes a certain distortion measure is selected as the best match area. A common distortion measure is the mean absolute difference, given by:

$$M(\Delta x, \Delta y) = \frac{1}{b^2} \sum_{m=1}^{b} \sum_{n=1}^{b} \left| C_{m,n} - P_{m+\Delta x,\, n+\Delta y} \right|, \qquad -w \le \Delta x, \Delta y \le w \qquad (3)$$

where $\Delta x, \Delta y$ refer to the spatial displacement between the pixel coordinates of C and the matching area in the previous image. Other distortion measures can be used, such as mean squared error, cross correlation functions and so forth. Further details on motion estimation can be found in [17] and references therein.

The motion vectors can then be used to represent the motion that occurred between two video frames. These vectors are used instead of the thresholded frame differences. In [8] it was proposed to rearrange the x and y components of the motion vectors into two intensity images. The two images are then concatenated to generate one representation of the motion that occurred between two video frames.
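As an illustration of the block-matching search and the mean absolute difference of Equation (3), the following plain-Python sketch performs an exhaustive search for one block. It is a simplified example under stated assumptions rather than the configuration used in the experiments: the function names are hypothetical, frames are lists of rows, the displacement `dx` is applied to the row index and `dy` to the column index, and candidate positions that fall outside the previous frame are simply skipped.

```python
def mad(cur, prev, m, n, dx, dy, b):
    """Mean absolute difference between the b x b block of `cur` at (m, n)
    and the block of `prev` displaced by (dx, dy), as in Equation (3)."""
    total = 0
    for i in range(b):
        for j in range(b):
            total += abs(cur[m + i][n + j] - prev[m + dx + i][n + dy + j])
    return total / (b * b)

def best_motion_vector(cur, prev, m, n, b, w):
    """Exhaustive search over displacements in [-w, w] x [-w, w].

    Returns ((dx, dy), cost) for the lowest-cost candidate, or None if no
    candidate block lies fully inside the previous frame.
    """
    rows, cols = len(prev), len(prev[0])
    best = None
    for dx in range(-w, w + 1):
        for dy in range(-w, w + 1):
            inside = (0 <= m + dx and m + dx + b <= rows and
                      0 <= n + dy and n + dy + b <= cols)
            if not inside:
                continue  # assumption: ignore candidates outside the frame
            cost = mad(cur, prev, m, n, dx, dy, b)
            if best is None or cost < best[1]:
                best = ((dx, dy), cost)
    return best
```

Running `best_motion_vector` for every non-overlapping block of the current frame yields one motion vector per block; the two components of these vectors are what would be arranged into the two intensity images described above.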
Again, once the concatenated image is computed, it is transformed into the DCT domain as described previously. The DCT coefficients are zonal coded to generate the feature vector.

Figure 3. Example accumulated differences images using an overlapping sliding window of size 8. (a) Part of the gesture "Club". (b) Part of the gesture "Soccer".

4. Classification

For conventional data, the Bayes classifier provides the upper bound on achievable classification rates. Since sign language varies in both the spatial and temporal domains, the extracted feature vectors are sequential in nature and hence simple classifiers might not suffice. There are two main approaches to dealing with sequential data. The first aims to combine the sequential feature vectors into a single feature vector using a suitable operation. A detailed account of such procedures is given in [14]. One such method involves concatenating sequential feature vectors using a sliding window of optimal length to create a single feature vector. Subsequently, classical supervised learning techniques such as maximum-likelihood estimation (MLE), linear discriminants or neural networks can be used. The second approach makes explicit use of classifiers that can deal with sequential data without concatenation or accumulation. This second approach is used in this paper.

While the field of gesture recognition is relatively young, the related field of speech recognition is well established and documented. Hidden Markov Models are the classifier of choice for continuous speech recognition and lend themselves well to continuous sign language recognition too. As mentioned in [15], an HMM is a finite-state automaton characterized by stochastic transitions in which the sequence of states is a Markov chain. Each output of an HMM corresponds to a probability density function. Such a generative model can be used to represent sign language units (words, sub-words, etc.).
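To make the HMM description concrete, the sketch below scores a sequence of feature vectors against a small HMM with the forward algorithm and picks the best-scoring model. It is a toy illustration and not the GT2K/HTK implementation: the left-to-right topology, the single diagonal-covariance Gaussian per state (rather than a mixture), the self-transition probability `stay`, and all function names are assumptions introduced for the example.

```python
import math

def safe_log(p):
    """Natural log that maps zero probability to -inf."""
    return math.log(p) if p > 0 else -math.inf

def log_gauss(x, mean, var):
    """Log density of a diagonal-covariance Gaussian at vector x."""
    return sum(-0.5 * (math.log(2 * math.pi * v) + (xi - m) ** 2 / v)
               for xi, m, v in zip(x, mean, var))

def logsumexp(vals):
    """Numerically stable log(sum(exp(v))) that tolerates -inf entries."""
    hi = max(vals)
    if hi == -math.inf:
        return -math.inf
    return hi + math.log(sum(math.exp(v - hi) for v in vals))

def make_left_to_right(means, variances, stay=0.6):
    """Build a left-to-right HMM with one Gaussian emission per state."""
    n = len(means)
    log_pi = [safe_log(1.0 if s == 0 else 0.0) for s in range(n)]
    log_A = [[-math.inf] * n for _ in range(n)]
    for s in range(n):
        if s + 1 < n:
            log_A[s][s] = safe_log(stay)            # remain in the state
            log_A[s][s + 1] = safe_log(1.0 - stay)  # advance to the next state
        else:
            log_A[s][s] = 0.0                       # final state is absorbing
    return {"log_pi": log_pi, "log_A": log_A,
            "means": means, "vars": variances}

def log_likelihood(obs, model):
    """Forward algorithm in the log domain: log P(obs | model)."""
    S = len(model["log_pi"])
    alpha = [model["log_pi"][s]
             + log_gauss(obs[0], model["means"][s], model["vars"][s])
             for s in range(S)]
    for x in obs[1:]:
        alpha = [logsumexp([alpha[r] + model["log_A"][r][s] for r in range(S)])
                 + log_gauss(x, model["means"][s], model["vars"][s])
                 for s in range(S)]
    return logsumexp(alpha)

def classify(obs, models):
    """Return the name of the model with the highest log-likelihood."""
    return max(models, key=lambda name: log_likelihood(obs, models[name]))
```

In isolated-unit form, one such model would be trained per sign and `classify` would return the most likely sign; continuous recognition additionally requires decoding over sequences of models, which toolkits such as HTK provide.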
The implementation of an HMM framework was carried out using the Georgia Tech Gesture Recognition Toolkit (GT2K), which serves as a wrapper for the more general Hidden Markov Model Toolkit (HTK). The GT2K version used was a UNIX-based package. HTK is the de facto standard in speech recognition applications using HMMs.

5. Experimental Results

A logical step in proceeding from isolated gesture recognition is connected gesture recognition. This can be simulated by concatenating individual gestures into artificial sentences. Intuitively, one would expect better results for connected gesture recognition than for continuous gesturing. This is because concatenated gestures do not suffer from the altered spatial gesturing that occurs as gestures are signed continuously without pauses. The first database, consisting of isolated gestures, was used to create concatenated sentences of varying length. These sentences were created without any consideration of whether the constructed sentence held any meaning or grammatical structure. This concatenated data was divided into a training set and a testing set comprising 70% and 30% of the total data respectively. The GT2K toolkit was then used to perform recognition based on individual words as the basic unit of Arabic sign language. While concatenation is not the aim of this work, the results obtained provide a valuable benchmark for subsequent experiments with continuous sentence signing. An average of 96% sentence recognition and 98% word recognition was obtained on the concatenated testing dataset. The word recognition rate is comparable to previous work in ArSL [7] using similar feature extraction schemes. Note that, due to the nature of concatenation, the boundary between gestures is prominent, and this might account for the high sentence recognition rate.
The second database was then used to perform continuous sentence recognition, again with the help of GT2K. This data was also divided into a training set (70%) and a testing set (30%). An average of 75% sentence recognition and 94% word recognition was obtained on the testing set. A detailed analysis of the various associated parameters is given in the following discussion.

Several parameters affect the recognition rates in continuous sign language recognition. The sections below discuss the effect of varying the number of hidden states, the number of Gaussian mixtures, the length of the feature vectors and the threshold used for binarizing the image differences. Unless otherwise stated, the length of the feature vectors used throughout the experiments is 100 DCT coefficients.

The following results are based on the word and sentence recognition rates. The former is computed through the following equation:

$$\text{Accuracy}_{\text{word}} = 1 - \frac{D + S + I}{N} \qquad (4)$$

where D is the number of deletions, S is the number of substitutions, I is the number of insertions, and N is the total number of words. The sentence recognition rate, on the other hand, is the ratio of correctly recognized sentences to the total number of sentences. Correctness in this case entails correct recognition of all the words constituting the sentence without any insertions, substitutions, or deletions.

5.1 Number of Hidden States

In Figure 4, the effect of increasing the number of hidden states in the HMM topology on sentence and word recognition rates is examined.

Figure 4. The effect of number of states on the sentence recognition rate (3 Gaussian mixtures are used)

The recognition rates increase as the number of states is increased up to a certain number, after which the classification rates saturate and then drop.
As the number of states in the Hidden Markov Model is increased, we are in effect increasing the degrees of freedom allowed in modeling the data. Working with video data sampled at 25 frames per second, the classification rate increased to a maximum at nine states.

The saturation in recognition accuracy is attributed to the fact that certain gestures are short in duration and are represented by only a few frame differences. Beyond that point, increasing the number of states only serves to increase computation time while adding redundant data that does not contribute to the classification rate.

5.2 Length of the Feature Vector

Figure 5 shows the recognition rates for an increasing number of DCT coefficients within the feature vector. An increase in feature vector size is expected to be accompanied by a corresponding increase in recognition rates. This is because each DCT coefficient is uncorrelated with the other coefficients, and hence additional coefficients carry no redundant information. The experimental results in Figure 5 show a general increase in recognition rates as the number of DCT coefficients is increased.

The trend shows that any increase in recognition accuracy beyond 100 coefficients is only marginal. The increase in computation time is, however, a limiting factor in increasing the length of the feature vector indiscriminately.

5.3 Number of Gaussian Mixtures

The effect of increasing the number of Gaussian mixtures is shown in Figures 6 and 7. Gaussian mixtures are used to model the emission probability densities of each state of a continuous Hidden Markov Model.

For multi-dimensional data such as the long feature vectors used in this work, it is desirable to have a number of Gaussian mixtures so that any emission pdf can be effectively fit.
Increasing the number of Gaussian mixtures shows substantial improvement in recognition rates. The results depict a general increase in recognition rates as the number of mixtures is increased. However, the authors feel that the limited amount of data that could be collected does not allow the use of more mixtures.

Figure 5. The effect of length of the feature vector on sentence recognition rates (3 Gaussian mixtures are used with an HMM topology using 9 states)

Figure 6. The effect of the number of Gaussian mixtures on the sentence recognition rate (HMM topology using 9 states)

Figure 7. The effect of the number of Gaussian mixtures on the word recognition rate (HMM topology using 9 states)

5.4 Choice of Threshold

In the feature extraction process, the image differences are thresholded into binary images based on a threshold of µ+xσ, where µ is the mean pixel intensity of the image difference, σ is the corresponding standard deviation and x is a weighting parameter. Results are shown in Figures 8 and 9 for different values of the weighting parameter.

The recognition accuracy peaks at a weighting parameter value between 1 and 1.25. A subjective comparison of the thresholded image differences shows that these parameter values retain most of the motion information whilst discarding spurious information such as small stray shifts in clothing, illumination and the like.

Figure 8. The effect of the weighting factor on the sentence recognition rate (3 Gaussian mixtures are used with an HMM topology using 9 states)

Figure 9. Effect of the weighting factor on the word recognition rate (3 Gaussian mixtures are used with an HMM topology using 9 states)

Lastly, as mentioned in Section 3.2 above, we compare our solution against existing work on Arabic sign language recognition. Namely, we consider both the ADs and ME approaches to feature extraction. Table 2 summarizes the sentence and word recognition rates obtained with the various feature extraction solutions. The experimental parameters are similar to those used in Figure 9.

Table 2. Comparisons with existing feature extraction solutions

| Feature extraction approach | Sentence recognition rate | Word recognition rate |
|---|---|---|
| ADs with an overlapping sliding window of size 4 | 64.1% | 91.0% |
| ADs with an overlapping sliding window of size 7 | 65.2% | 90.6% |
| ADs with an overlapping sliding window of size 10 | 68% | 93.71% |
| Motion estimation | 67.9% | 92.9% |
| Proposed solution | 73.3% | 94.39% |

The recognition results presented in the table indicate that the proposed solution provides the highest sentence and word recognition rates. The ADs with the overlapping sliding window approach was not advantageous. Intuitively, the ADs image puts the difference between 2 video frames into context by accumulating future frame differences onto it. However, temporal information is already preserved in HMMs, and therefore extracting feature vectors from video frame differences without accumulating them suffices. It is also worth mentioning that increasing the window size beyond 10 frames did not further enhance the recognition rate.

In the ME approach, the image block size and the search range are set to 8×8 pixels, which is a typical setting in video processing. The resultant recognition rates are comparable to those of the ADs approach. Note that ME techniques do not entirely capture the true motion of a video sequence. For instance, with block-based search techniques, object rotations are not captured as well as translational motion. Therefore the recognition results are inferior to those of the proposed solution.

6. Conclusions and Future Work

The work outlined in this paper is an important step in this domain as it represents the first attempt to recognize continuous Arabic sign language.
The work entailed compiling the first fully labeled and segmented dataset for continuous Arabic Sign Language, which we intend to make public for the research community. The average sentence recognition rate of 75% and word recognition rate of 94% are obtained using a natural vision-based system with no restrictions on signing, such as the use of gloves. Furthermore, no grammar is imposed on the sentence structure, which makes the recognition task more challenging. The use of grammatical structure can significantly improve the recognition rate by alleviating some types of substitution and insertion errors. In the course of training, the dataset was plagued by an unusually large occurrence of insertion errors. This problem was mitigated by applying a penalty weight for every insertion error, which was incorporated into the training stage.

As a final comment, the perplexity of the dataset is large compared to other work in related fields. Future work in this area aiming to secure higher recognition rates might require a sub-gesture based recognition system. Such a system would also serve to alleviate the motion-epenthesis effect, which is similar to the co-articulation effect in speech recognition. Finally, the frequency domain transform coefficients used as features perform well in concatenated gesture recognition. The word recognition rate is also sufficiently high, averaging 94%. The authors feel that geometric features might be used in addition to the existing features to create an optimum feature set.

7. Acknowledgements

The authors acknowledge Emirates Foundation for their support. They also acknowledge the Sharjah City for Humanitarian Services for making Mr. Salah Odeh available to help in the construction of the databases.

REFERENCES

[1] J. S. Kim, W. Jang, and Z. Bien, "A dynamic gesture recognition system for the Korean sign language (KSL)," IEEE Transactions on Systems, Man, Cybern. Part B, Vol. 26, pp. 354–359, April 1996.

[2] H. Matsuo, S. Igi, S. Lu, Y. Nagashima, Y. Takata, and T. Teshima, "The recognition algorithm with noncontact for Japanese sign language using morphological analysis," Proceedings of Gesture Workshop, pp. 273–284, 1997.

[3] S. S. Fels and G. E. Hinton, "Glove-talk: A neural network interface between a data-glove and a speech synthesizer," IEEE Transactions on Neural Networks, Vol. 4, pp. 2–8, January 1993.

[4] O. Al-Jarrah and A. Halawani, "Recognition of gestures in Arabic sign language using neuro-fuzzy systems," Artificial Intelligence, Vol. 133, No. 1–2, pp. 117–138, December 2001.

[5] K. Assaleh and M. Al-Rousan, "Recognition of Arabic Sign language alphabet using polynomial classifiers," EURASIP Journal on Applied Signal Processing, Vol. 2005, No. 13, pp. 2136–2146, 2005.

[6] M. AL-Rousan, K. Assaleh, and A. Tala'a, "Video-based signer-independent Arabic sign language recognition using hidden Markov models," Applied Soft Computing, Vol. 9, No. 3, June 2009.

[7] T. Shanableh, K. Assaleh, and M. Al-Rousan, "Spatio-temporal feature-extraction techniques for isolated gesture recognition in Arabic sign language," IEEE Transactions on Systems, Man and Cybernetics Part B, Vol. 37, No. 3, pp. 641–650, 2007.

[8] T. Shanableh and K. Assaleh, "Telescopic vector composition and polar accumulated motion residuals for feature extraction in Arabic sign language recognition," EURASIP Journal on Image and Video Processing, Vol. 2007, Article ID 87929, 10 pages, 2007. doi:10.1155/2007/87929.

[9] T. Starner, J. Weaver, and A. Pentland, "Real-time American sign language recognition using desk and wearable computer based video," IEEE Transactions on Pattern Analysis and Machine Intelligence, Vol. 20, No. 12, pp. 1371–1375, 1998.

[10] T. Starner, J. Weaver, and A. Pentland, "A wearable computer-based American sign language recogniser," in Personal and Ubiquitous Computing, 1997.

[11] Sharjah City for Humanitarian Services (SCHS), website: http://www.sharjah-welcome.com/schs/about/.

[12] T. Westeyn, H. Brashear, and T. Starner, "Georgia tech gesture toolkit: Supporting experiments in gesture recognition," Proceedings of International Conference on Perceptive and Multimodal User Interfaces, Vancouver, B.C., November 2003.

[13] K. R. Rao and P. Yip, "Discrete cosine transform: Algorithms, advantages, applications," Academic Press, ISBN 012580203X, August 1990.

[14] T. Dietterich, "Machine learning for sequential data: A review," in T. Caelli (Ed.), Structural, Syntactic, and Statistical Pattern Recognition; Lecture Notes in Computer Science, Vol. 2396, pp. 15–30, Springer-Verlag, 2002.

[15] L. Rabiner and B. Juang, "Fundamentals of speech recognition," New Jersey: Prentice-Hall Inc., 1993.

[16] F. S. Chen, C. M. Fu, and C. L. Huang, "Hand gesture recognition using a real-time tracking method and hidden Markov models," Image and Vision Computing, Vol. 21, No. 8, pp. 745–758, 2003.

[17] M. Ghanbari, "Video coding: An introduction to standard codecs," Second Edition, The Institution of Engineering and Technology, 2003.