Open Journal of Statistics

Vol.05 No.07(2015), Article ID:62190,9 pages

10.4236/ojs.2015.57076

Shrinkage Estimation of Semiparametric Model with Missing Responses for Cluster Data

Mingxing Zhang, Jiannan Qiao, Huawei Yang, Zixin Liu

Department of Mathematics and Statistics, Guizhou University of Finance and Economics, Guiyang, China

Copyright © 2015 by authors and Scientific Research Publishing Inc.

This work is licensed under the Creative Commons Attribution International License (CC BY).

http://creativecommons.org/licenses/by/4.0/

Received 23 September 2015; accepted 21 December 2015; published 24 December 2015

ABSTRACT

This paper simultaneously investigates variable selection and imputation estimation for the semiparametric partially linear varying-coefficient model in the case where responses are missing for cluster data. A commonly used approach to dealing with missing data is the complete-case method. Combining the complete-case idea with shrinkage estimation, the estimation is carried out separately on each cluster. In order to avoid biased results and to improve estimation efficiency, this article introduces the Group Least Absolute Shrinkage and Selection Operator (Group Lasso) to the semiparametric model. That is, the method combines local polynomial smoothing with the Least Absolute Shrinkage and Selection Operator, so that nonparametric estimation and variable selection can be conducted in a computationally efficient manner. The parametric estimators are obtained according to the same criterion. For each cluster, the nonparametric and parametric estimators are derived, and their weighted average over clusters is then taken as the final estimator. Moreover, the large-sample properties of the estimators are also derived.

Keywords:

Semiparametric Partially Linear Varying-Coefficient Model, Missing Responses, Cluster Data, Group Lasso

1. Introduction

In real applications, the analysis of cluster data arises in various research areas such as biomedicine. Without loss of generality, the data are clustered into classes according to objects that share a certain property; for example, observations falling in the same confidence interval may be treated as one cluster. Numerous parametric approaches have been applied to the analysis of cluster data, and with the rapid development of computing techniques, nonparametric and semiparametric approaches have attracted growing interest. See the work of Sun et al. [1], Cai [2], Vichi [3], Yi et al. [4], Carroll et al. [5], and He et al. [6], among others.

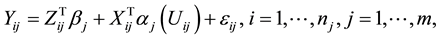

Consider the semiparametric partially linear varying-coefficient model which is a useful extension of partially linear regression model and varying-coefficient model over all clusters, it satisfies

(1)

(1)

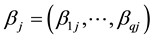

where ,

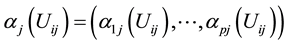

,  and

and  stand for the ith observation of Y, Z and X in the jth cluster.

stand for the ith observation of Y, Z and X in the jth cluster.  is a vector of q-dimensional unknown parametrics;

is a vector of q-dimensional unknown parametrics;  is a p-dimensional unknown coefficient vector.

is a p-dimensional unknown coefficient vector.  is random error with mean zero and variance

is random error with mean zero and variance .

.

Obviously, when m = 1, model (1) reduces to the usual semiparametric partially linear varying-coefficient model. A series of papers (You and Chen [7], Fan and Huang [8], Wei and Wu [9], Zhang et al. [10]) have provided statistical inference for this semiparametric model. In [8], Fan and Huang put forward a profile least squares technique and propose a generalized likelihood ratio test. In [7], You and Chen study the estimation problem when some covariates are measured with additive errors. When m = 1 and Z = 0, model (1) becomes the varying-coefficient model, which has been widely studied by many authors such as Fan and Zhang [11], Hastie and Tibshirani [12], Xia and Li [13], and Hoover et al. [14]. When m = 1, p = 1 and X = 1, model (1) reduces to the partially linear regression model, which was proposed by Engle et al. [15] when they studied the influence of weather on electricity demand. See Yatchew [16], Speckman [17] and Liang [18], among others.

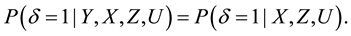

However, in practice, responses are often not completely available for various reasons. For example, some sampled units are unwilling to provide the desired information, and some investigators gather incorrect information through carelessness. In that case, a commonly used technique is to introduce a new indicator variable $\delta$: $\delta = 0$ when Y is missing, and $\delta = 1$ otherwise. Suppose that the responses are missing at random, that is, $\delta$ and Y are conditionally independent given the covariates. Then

$$P(\delta = 1 \mid Y, Z, X, U) = P(\delta = 1 \mid Z, X, U).$$

Because estimation with missing responses is of practical importance, the semiparametric partially linear varying-coefficient model with missing responses has attracted the attention of many authors, such as Chu and Cheng [19], Wei [20], and Wang et al. [21].
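As a minimal sketch of how the missingness indicator and the complete-case idea can be handled in practice, the following Python snippet builds the indicator from the observed responses and keeps, within each cluster, only the subjects whose response is available. The function name `complete_cases`, the use of NaN to encode a missing response, and the toy arrays are assumptions made purely for illustration.

```python
import numpy as np

def complete_cases(y, z, x, u, cluster):
    """Split the data by cluster and keep only rows with an observed response.

    Assumption: a missing response is encoded as NaN in y.
    Returns the indicator delta (1 = observed, 0 = missing) and a dict
    mapping each cluster label to its complete-case arrays.
    """
    y = np.asarray(y, dtype=float)
    z, x, u = np.asarray(z), np.asarray(x), np.asarray(u)
    cluster = np.asarray(cluster)
    delta = (~np.isnan(y)).astype(int)
    by_cluster = {}
    for j in np.unique(cluster):
        keep = (delta == 1) & (cluster == j)
        by_cluster[int(j)] = {"y": y[keep], "z": z[keep], "x": x[keep], "u": u[keep]}
    return delta, by_cluster

# Toy data: two clusters of three subjects each, two responses missing.
y = np.array([1.0, np.nan, 2.0, 3.0, np.nan, 4.0])
z = np.arange(6.0).reshape(6, 1)
x = np.ones((6, 1))
u = np.linspace(0.0, 1.0, 6)
cluster = np.array([0, 0, 0, 1, 1, 1])

delta, cc = complete_cases(y, z, x, u, cluster)
print(delta)        # indicator for every subject
print(cc[0]["y"])   # observed responses in the first cluster
```

Under the missing-at-random assumption, the regression relationship among the retained subjects is the same as in the full sample, which is why an analysis restricted to these rows remains meaningful.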

It is worth pointing out that there is little work concerning both missing and cluster data, especially for the semiparametric partially linear varying-coefficient model. If the differences between clusters are ignored, the predicted response values Y can be far from the true values and the estimators have poor robustness. Therefore, it is necessary to take the cluster structure into account in order to improve estimation efficiency. For each cluster, the group lasso is introduced to the semiparametric partially linear varying-coefficient model on the basis of complete-case data. The lasso is a popular technique for selecting variables and conducting estimation simultaneously, and it has attracted the attention of many authors, such as Tibshirani [22] and Zou [23]. Because the lasso selects individual derived input variables rather than groups of input variables, it tends to select more factors than the group lasso, as shown in Yuan and Lin [24], Wang and Xia [25], and Hu and Xia [26]. Thus, this paper centers on the group lasso technique, which is computationally efficient. Furthermore, the parametric and nonparametric components are obtained by computing a weighted average over clusters. As for inference, the asymptotic normality and consistency of the estimators are also provided. The Bayesian information criterion (BIC) is used as the tuning parameter selection criterion in this article.

The rest of the paper is organized as follows. The proposed method is described in Section 2. In Section 3, the theoretical properties are provided. Conclusions are given in Section 4. Finally, the proofs of the main results are relegated to the Appendix.

2. Semiparametric Model with the Methodology

2.1. Model with Complete-Case Data

Because some responses are missing, for simplicity we focus on the cases where $\delta_{ij} = 1$. This is the so-called complete-case method. It is assumed that there are m independent clusters, and the number of observations in the jth cluster is $n_j$. For the ith subject from the jth cluster, let

In this situation, if the parametric component

where

2.2. The Kernel Least Absolute Shrinkage and Selection Operator Method

Similarly, consider the jth cluster first. Given any index value

According to

with respect to

Since it is assumed that the last

where

2.3. Local Quadratic Approximation

It is well known that many computational algorithms exist for lasso-type problems, such as local quadratic approximation and least angle regression, among others. For simplicity, this article describes an easy implementation based on the idea of local quadratic approximation. Specifically, the implementation is based on an iterative algorithm with

be the KLASSO estimate obtained in the mth iteration for the jth cluster. Then the loss function in (6) can be locally approximated by

whose minimizer is given by

where

Furthermore, for each cluster and each group, the weighted-mean idea is used to obtain the final estimator of the coefficient vector

where
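The local quadratic approximation and the weighted-mean step of this subsection can be sketched in Python as follows. This is an illustrative sketch under stated assumptions, not the authors' implementation: it uses a local-constant kernel fit in the style of the kernel lasso of Wang and Xia [25], an Epanechnikov kernel, a common evaluation grid shared by all clusters, a single tuning parameter `lam`, and cluster weights proportional to the complete-case cluster sizes. The names `klasso_lqa` and `combine_clusters` are hypothetical.

```python
import numpy as np

def epanechnikov(t):
    """Symmetric kernel density with compact support [-1, 1]."""
    return 0.75 * np.clip(1.0 - t**2, 0.0, None)

def klasso_lqa(y, x, u, grid, h, lam, n_iter=50, eps=1e-8, tol=1e-6):
    """Kernel group lasso for one cluster via local quadratic approximation.

    Approximately minimises
        sum_t sum_i K_h(grid_t - u_i) (y_i - x_i' b_t)^2 + lam * sum_k ||B[:, k]||,
    where row t of B is the coefficient vector at grid[t].
    """
    p = x.shape[1]
    w = epanechnikov((grid[:, None] - u[None, :]) / h) / h  # w[t, i] = K_h(grid_t - u_i)

    def solve(penalty):
        # Weighted ridge-type solve at every grid point with a diagonal penalty.
        B = np.empty((grid.size, p))
        for t in range(grid.size):
            xw = x * w[t][:, None]
            B[t] = np.linalg.solve(x.T @ xw + np.diag(penalty), xw.T @ y)
        return B

    B = solve(np.full(p, eps))  # almost unpenalised starting value
    for _ in range(n_iter):
        norms = np.maximum(np.sqrt((B**2).sum(axis=0)), eps)
        # LQA: ||b_k|| ~ ||b_k_old|| + (||b_k||^2 - ||b_k_old||^2) / (2 ||b_k_old||),
        # so the penalty becomes quadratic with weight lam / (2 ||b_k_old||).
        B_new = solve(0.5 * lam / norms)
        done = np.max(np.abs(B_new - B)) < tol
        B = B_new
        if done:
            break
    return B

def combine_clusters(fits, sizes):
    """Weighted mean of per-cluster estimates; weights n_j / sum(n_j) are an assumption."""
    weights = np.asarray(sizes, dtype=float) / np.sum(sizes)
    return np.tensordot(weights, np.stack(fits), axes=1)

# Toy check: the third covariate is irrelevant in both clusters.
rng = np.random.default_rng(1)
grid = np.linspace(0.05, 0.95, 40)
fits, sizes = [], []
for n_j in (200, 300):
    u = rng.uniform(size=n_j)
    x = rng.normal(size=(n_j, 3))
    y = (1.0 + u) * x[:, 0] + 2.0 * x[:, 1] + 0.1 * rng.normal(size=n_j)
    fits.append(klasso_lqa(y, x, u, grid, h=0.2, lam=300.0))
    sizes.append(n_j)

B_hat = combine_clusters(fits, sizes)
col_norms = np.sqrt((B_hat**2).sum(axis=0))
print(col_norms)  # the norm of the third column should be close to zero
```

Because the quadratic surrogate never produces exact zeros, a coefficient function whose column norm falls below a small threshold is treated as excluded from the model in practice.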

2.4. Estimation of Parametric Component

In terms of the above estimator of nonparametric component and according to the same criterion, the lasso estimation of parametric components

where

3. Theoretical Properties

3.1. Technical Conditions

The following assumptions are needed to prove the theorems for the proposed estimation methods.

Assumption 1. The random variable U has a bounded support

Assumption 2. For each

Assumption 3. There is an

Assumption 4.

Assumption 5. The function K(.) is a symmetric density function with compact support.

Lemma 1. Suppose that Assumptions (A1)-(A5) hold,

Lemma 2. If (A1)-(A5) hold,

The proofs of Lemma 1 and Lemma 2 can be found in Wang and Xia [25].

3.2. Basic Theorems

Suppose that Assumptions (A1)-(A5) hold. For the jth cluster, let

Theorem 1. Assume (A1)-(A5),

In order to study the oracle property, define the oracle estimators as follows:

Theorem 2. Suppose that the assumptions are satisfied, if

3.3. Tuning Parameter Selection

In the case where

model can be consistently identified. Because selecting p shrinkage parameters simultaneously is a great challenge, we follow Zou [23] and Wang and Xia [25] and simplify the tuning parameters as follows:

where

where

Obviously, the effective sample size

Note that

Theorem 3. Selection Consistency. Suppose that Assumptions (A1)-(A5) hold, the tuning parameter
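To illustrate how a BIC-type rule can pick the tuning parameter, the sketch below scores each candidate value by log(RSS) + df × log(nh)/(nh), where nh plays the role of the effective sample size. This particular form is an assumption patterned on Wang and Xia [25]; the function names, the candidate values, and the RSS and degrees-of-freedom numbers are hypothetical and serve only as an example.

```python
import numpy as np

def bic_type(rss, df, n, h):
    """BIC-type score log(RSS) + df * log(n h) / (n h).

    Assumption: n * h is used as the effective sample size of the kernel fit,
    and df is the number of coefficient functions kept in the model.
    """
    m = n * h
    return np.log(rss) + df * np.log(m) / m

def select_lambda(candidates, rss_values, df_values, n, h):
    """Return the candidate with the smallest BIC-type score, plus all scores."""
    scores = [bic_type(r, d, n, h) for r, d in zip(rss_values, df_values)]
    return candidates[int(np.argmin(scores))], scores

# Hypothetical fits: a larger lambda drops more variables but inflates the RSS.
candidates = [0.1, 1.0, 10.0]
rss_values = [1.00, 1.02, 3.00]
df_values = [3, 2, 1]

best, scores = select_lambda(candidates, rss_values, df_values, n=200, h=0.2)
print(best, np.round(scores, 4))
```

In this toy example the middle candidate wins: dropping one variable barely changes the fit, while dropping two increases the residual sum of squares far more than the penalty saves.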

4. Conclusion

This paper discusses shrinkage estimation for the semiparametric partially linear varying-coefficient model when responses are missing for cluster data. Combining the complete-case idea, the paper introduces the group lasso into the semiparametric model for each cluster separately. The new method conducts variable selection and model estimation simultaneously. Meanwhile, the technique not only reduces bias but also improves estimation efficiency. Finally, using the weighted-mean idea, the nonparametric and parametric estimators are derived. The BIC is applied as the tuning parameter selection criterion in this article. Furthermore, the asymptotic normality and consistency of the estimators are derived theoretically.

Acknowledgements

This work is supported by the National Natural Science Foundation of China (61472093). This support is greatly appreciated.

Cite this paper

Mingxing Zhang, Jiannan Qiao, Huawei Yang, Zixin Liu (2015) Shrinkage Estimation of Semiparametric Model with Missing Responses for Cluster Data. Open Journal of Statistics, 05, 768-776. doi: 10.4236/ojs.2015.57076

References

- 1. Sun, Y., Li, J.L. and Zhang, W.Y. (2012) Estimation and Model Selection in a Class of Semiparametric Models for Cluster Data. Annals of the Institute of Statistical Mathematics, 64, 835-856. http://dx.doi.org/10.1007/s10463-011-0342-9

- 2. Cai, J.W. (2005) Semiparametric Models for Clustered Recurrent Event Data. Lifetime Data Analysis, 11, 405-425. http://dx.doi.org/10.1007/s10985-005-2970-y

- 3. Vichi, M. (2008) Fitting Semiparametric Clustering Models to Dissimilarity Data. Advances in Data Analysis and Classification, 2, 121-161. http://dx.doi.org/10.1007/s11634-008-0025-4

- 4. Yi, G.Y., He, W.Q. and Liang, H. (2011) Semiparametric Marginal and Association Regression Methods for Clustered Binary Data. Annals of the Institute of Statistical Mathematics, 63, 511-533. http://dx.doi.org/10.1007/s10463-009-0239-z

- 5. Carroll, R., Maity, A., Mammen, E. and Yu, K. (2009) Efficient Semiparametric Marginal Estimation for the Partially Linear Additive Model for Longitudinal/Clustered Data. Statistics in Biosciences, 1, 10-31. http://dx.doi.org/10.1007/s12561-009-9000-7

- 6. He, S., Wang, F. and Sun, L.Q. (2013) A Semiparametric Additive Rates Model for Clustered Recurrent Event Data. Acta Mathematicae Applicatae Sinica, English Series, 29, 55-62. http://dx.doi.org/10.1007/s10255-011-0093-7

- 7. You, J.H. and Chen, G.M. (2006) Estimation of a Semiparametric Varying-Coefficient Partially Linear Errors-in-Variables Model. Journal of Multivariate Analysis, 97, 324-341. http://dx.doi.org/10.1016/j.jmva.2005.03.002

- 8. Fan, J.Q. and Huang, T. (2005) Profile Likelihood Inferences on Semiparametric Varying-Coefficient Partially Linear Models. Bernoulli, 11, 1031-1057. http://dx.doi.org/10.3150/bj/1137421639

- 9. Wei, C.H. and Wu, X.Z. (2008) Profile Lagrange Multiplier Test for Partially Linear Varying-Coefficient Regression Models. Journal of Systems Science & Mathematical Sciences, 28, 416-424.

- 10. Zhang, W., Lee, S.Y. and Song, X. (2002) Local Polynomial Fitting in Semivarying Coefficient Models. Journal of Multivariate Analysis, 82, 166-188. http://dx.doi.org/10.1006/jmva.2001.2012

- 11. Fan, J.Q. and Zhang, W.Y. (1999) Statistical Estimation in Varying-Coefficient Models. Annals of Statistics, 27, 1491-1581. http://dx.doi.org/10.1214/aos/1017939139

- 12. Hastie, T.J. and Tibshirani, R.J. (1993) Varying-Coefficient Models (With Discussion). Journal of the Royal Statistical Society: Series B, 55, 757-796.

- 13. Xia, Y.C. and Li, W.K. (1999) On the Estimation and Testing of Functional-Coefficient Linear Models. Statistica Sinica, 9, 737-757.

- 14. Hoover, D.R., Rice, J.A., Wu, C.O. and Yang, L.P. (1998) Nonparametric Smoothing Estimates of Time-Varying Coefficient Models with Longitudinal Data. Biometrika, 85, 809-822. http://dx.doi.org/10.1093/biomet/85.4.809

- 15. Engle, R.F., Granger, W.J., Rice, J. and Weiss, A. (1986) Semiparametric Estimates of the Relation between Weather and Electricity Sales. Journal of the American Statistical Association, 80, 310-319. http://dx.doi.org/10.1080/01621459.1986.10478274

- 16. Yatchew, A. (1997) An Elementary Estimator of the Partial Linear Model. Economics Letters, 57, 135-143. http://dx.doi.org/10.1016/S0165-1765(97)00218-8

- 17. Speckman, P. (1988) Kernel Smoothing in Partial Linear Models. Journal of the Royal Statistical Society: Series B, 50, 413-416.

- 18. Liang, H. (2006) Estimation in Partially Linear Models and Numerical Comparisons. Computational Statistics & Data Analysis, 50, 675-687. http://dx.doi.org/10.1016/j.csda.2004.10.007

- 19. Chu, C. and Cheng, P. (1995) Nonparametric Regression Estimation with Missing Data. Journal of Statistical Planning and Inference, 48, 85-99. http://dx.doi.org/10.1016/0378-3758(94)00151-K

- 20. Wei, C.H. (2010) Estimation in Partially Linear Varying-Coefficient Errors-in-Variables Models with Missing Responses. Acta Mathematica Scientia, 30, 1042-1054.

- 21. Wang, Q., Linton, O. and Hardle, W. (2007) Semiparametric Regression Analysis with Missing Response at Random. Journal of Multivariate Analysis, 98, 334-345. http://dx.doi.org/10.1016/j.jmva.2006.10.003

- 22. Tibshirani, R. (1996) Regression Shrinkage and Selection via the Lasso. Journal of the Royal Statistical Society: Series B, 58, 267-288.

- 23. Zou, H. (2006) The Adaptive Lasso and Its Oracle Properties. Journal of the American Statistical Association, 101, 1418-1429. http://dx.doi.org/10.1198/016214506000000735

- 24. Yuan, M. and Lin, Y. (2006) Model Selection and Estimation in Regression with Grouped Variables. Journal of the Royal Statistical Society: Series B, 68, 49-67. http://dx.doi.org/10.1111/j.1467-9868.2005.00532.x

- 25. Wang, H.S. and Xia, Y.C. (2009) Shrinkage Estimation of the Varying Coefficient Model. Journal of the American Statistical Association, 104, 747-757. http://dx.doi.org/10.1198/jasa.2009.0138

- 26. Hu, T. and Xia, Y.C. (2010) Adaptive Semivarying Coefficient Model Selection. Statistica Sinica, 22, 575-599.

- 27. Hunter, D.R. and Li, R. (2005) Variable Selection Using MM Algorithms. Annals of Statistics, 33, 1617-1642. http://dx.doi.org/10.1214/009053605000000200

Appendix

Proof of Theorem 1

Proof. Based on Lemma 2, and as shown in Hunter and Li [27], it follows that

For simplicity, we follow (8) and

Since each diagonal component of

where

Proof of Theorem 2

Proof. As is well known,

That is to say,

where

where