World Journal of Engineering and Technology

Vol. 2 No. 2 (2014), Article ID: 45644, 9 pages. DOI: 10.4236/wjet.2014.22006

Intelligent Parking Management System Based on Image Processing

Hilal Al-Kharusi, Ibrahim Al-Bahadly

School of Engineering and Advanced Technology, Massey University, Palmerston North, New Zealand

Email: veron9757@hotmail.com, i.h.albahadly@massey.ac.nz

Copyright © 2014 by authors and Scientific Research Publishing Inc.

This work is licensed under the Creative Commons Attribution International License (CC BY).

http://creativecommons.org/licenses/by/4.0/

Received 2 March 2014; revised 6 April 2014; accepted 14 April 2014

ABSTRACT

This paper presents an intelligent system for parking space detection based on image processing. The proposed system captures and processes the circular marker drawn in each parking space and reports which spaces are empty. A camera is used as the sensor, taking photos that show the occupancy of the car park. A camera is chosen because a single image can reveal the presence of many cars at once, and because the camera can easily be moved to cover different parking lots. From this image, the vacant spaces can be identified, and the processed information is then used to guide a driver to an available space rather than leaving the driver to waste time searching for one. The proposed system has been developed on both software and hardware platforms. An automatic parking system makes the whole process of parking cars more efficient and less complex for both drivers and administrators.

Keywords: Intelligent Parking, Image Processing, Space Detection

1. Introduction

Most car parks today are not run efficiently. On busy days, drivers may spend a long time driving around a car park to find a free space. Implementing the proposed system will help to reduce traffic congestion, wasted time and wasted money, and will help to provide better public service, reduce car emissions and pollution, improve the experience of city visitors, increase parking utilization, and prevent unnecessary capital investment. The system does this by providing more efficient and effective parking enforcement. An automatic parking system can be built from sensors at the entrance and exit of the car park, a computer system that manages the whole process, and various display panels and lights that help drivers park their cars. A simplified flowchart of such a system is shown in Figure 1.

Figure 1. Intelligent car parking system management.

There are numerous methods of detecting cars in a car park, such as magnetic sensors, microwave radar, ultrasonic sensors and image processing [1] [2]. This project uses image processing, because a camera can capture many cars at once, which makes it efficient and inexpensive [3]-[6]. One or more cameras are used for video image processing, and software is needed to process the images they capture. This processing is usually done by examining the difference between consecutive video frames [7]. The area that a camera scans can be easily changed. There are two ways of implementing the image detection module: applying edge detection with a boundary condition method, or applying point detection with the Canny operator. In this project, parking space detection is done by identifying the green circle drawn in each parking space. Matlab is used as the software platform [8]-[11]. Two types of car park photo are used: one taken from Google Earth and one real photo of a car park.

2. System Module

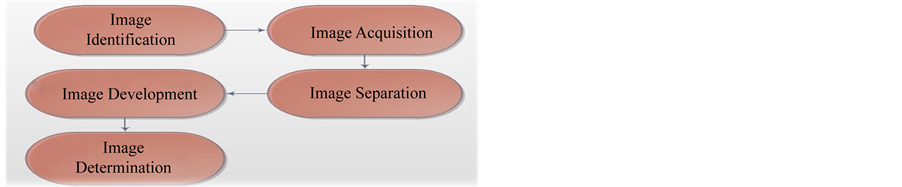

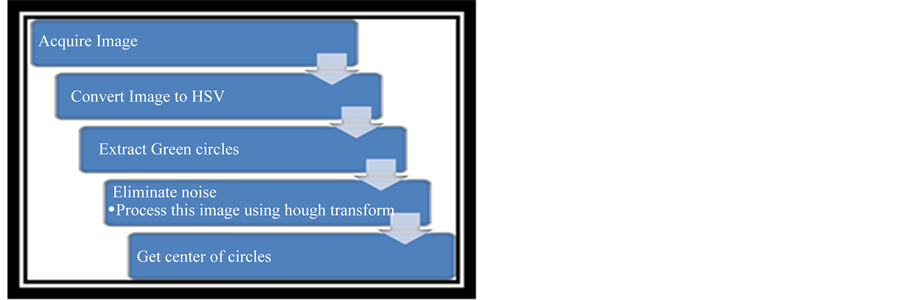

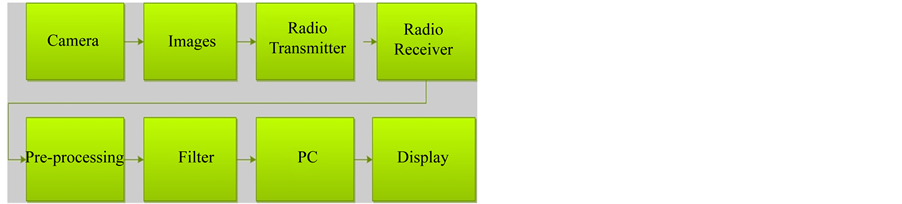

The video image technique has five modules for this type of photo [12]. The whole process is shown in Figure 2.

Each module is explained in detail below.

2.1. Image Identification

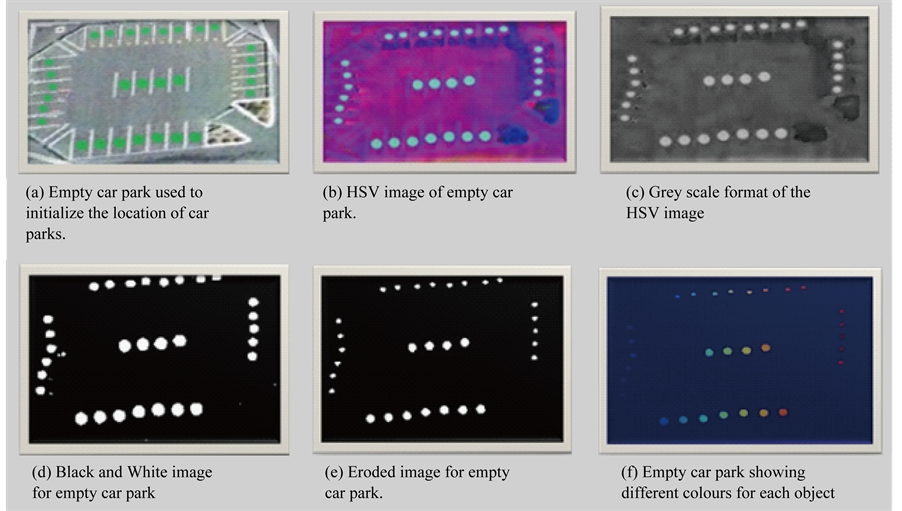

First, an image of the car park is taken when it contains no cars, as in Figure 3(a). The system uses this image to record the location of every parking space. The RGB values can be used to find the green circles that represent empty spaces. With these locations, the system knows where to look for cars in the future [13]. To obtain the location of the green dots in the empty car park, the image is converted into an HSV image, as shown in Figure 3(b). This is done using the rgb2hsv command in Matlab.
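As a rough illustration of this conversion, the following Python sketch uses the standard `colorsys` module in place of Matlab's rgb2hsv. The helper name `rgb_to_hsv_image` and the two sample pixels are assumptions made for the example and are not part of the original system.

```python
import colorsys

def rgb_to_hsv_image(rgb_image):
    """Convert a nested list of 8-bit (r, g, b) pixels to (h, s, v) tuples in [0, 1]."""
    return [
        [colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0) for (r, g, b) in row]
        for row in rgb_image
    ]

# Hypothetical 1x2 image: one pure-green marker pixel and one mid-grey surface pixel.
image = [[(0, 255, 0), (128, 128, 128)]]
hsv = rgb_to_hsv_image(image)
```

In this sketch the green pixel has a hue of one third with full saturation, while the grey pixel has zero saturation, which is what makes the markers easy to separate from the road surface in HSV space.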

The HSV image in Figure 3(c) is simplified to a black and white binary image, which is easier to work with, by making pixels white where the value exceeds a 40% threshold. To do this, the HSV image first needs to be converted to a grey scale format so that each pixel can be easily compared with the threshold, as shown in Figure 3(d). The conversion is given by Equation (1) and is performed with the rgb2gray command.

Gray = (0.299*r + 0.587*g + 0.114*b) (1)
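Equation (1) and the 40% threshold can be sketched in Python as follows. The function names `to_gray` and `to_binary` are hypothetical stand-ins for rgb2gray and im2bw, and the sample pixel values are invented for illustration.

```python
def to_gray(r, g, b):
    """Equation (1): weighted sum of the RGB channels (inputs and output in [0, 1])."""
    return 0.299 * r + 0.587 * g + 0.114 * b

def to_binary(gray_image, threshold=0.4):
    """Return 1 (white) where the grey level exceeds the threshold, else 0 (black)."""
    return [[1 if value > threshold else 0 for value in row] for row in gray_image]

# Hypothetical grey levels: a bright marker pixel next to a darker surrounding pixel.
gray = [[to_gray(0.9, 0.9, 0.9), to_gray(0.2, 0.3, 0.1)]]
binary = to_binary(gray)
```

Because the three weights sum to one, a pixel with equal channels keeps its brightness, and only the bright marker pixel survives the threshold here.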

Figure 2. System module.

Figure 3. Image identification.

Equation (1) converts RGB values to a grey value, as shown in Figure 3(d). As can be seen from Figure 3(d), the green circles are noticeably lighter than anything else in the image. Therefore, the image can be easily converted to a black and white image by using the im2bw command with the second argument set to 0.4. This means that if a pixel is below the threshold it becomes black; otherwise it becomes white, as shown in Figure 3(d). As can be seen in Figure 3(e), there are some small white parts in the image. These can be removed by using the erode function, as shown in Figure 3(f). The imerode command is used to remove the small dots in the image. The object used to erode the image was created using the following command: se3 = strel('disk', 3).

This creates a disc with a radius of three that is used by the erode function. The result of using this function is shown in Figure 3(f). The following code shows how this is done:

    if newmatrix(y,x) > 0               % an object is there
        if e(newmatrix(y,x)) == 0       % this object has not been seen
            e(newmatrix(y,x)) = x;      % set the value at this object's index to the current X coordinate
        end
    end
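The erosion step described above can be sketched in Python as follows. This is a simplified illustration rather than a reproduction of imerode: the helper names `disk` and `erode` are hypothetical, the disc is a plain Euclidean disc (Matlab's strel('disk', 3) may use a different approximation), and pixels outside the image are treated as black.

```python
def disk(radius):
    """Offsets (dy, dx) inside a Euclidean disc; a simplification of strel('disk', r)."""
    return [(dy, dx)
            for dy in range(-radius, radius + 1)
            for dx in range(-radius, radius + 1)
            if dy * dy + dx * dx <= radius * radius]

def erode(binary, offsets):
    """Keep a pixel white only if every offset lands on a white pixel inside the image."""
    h, w = len(binary), len(binary[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            out[y][x] = int(all(
                0 <= y + dy < h and 0 <= x + dx < w and binary[y + dy][x + dx]
                for dy, dx in offsets))
    return out

# Hypothetical 15x15 image: a 9x9 white block plus one isolated white speck of noise.
image = [[0] * 15 for _ in range(15)]
for y in range(3, 12):
    for x in range(3, 12):
        image[y][x] = 1
image[0][0] = 1
cleaned = erode(image, disk(3))
```

The isolated speck disappears because the disc cannot fit inside it, while the core of the large block survives.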

2.2. Image Acquisition

A camera placed at a fixed position above the cars is used to acquire the image from which the vacancy of the parking spaces is calculated. The camera should be positioned where it can clearly see all the parking spaces without being obstructed by any objects. Figure 4 shows an example of what such an image may look like.

2.3. Image Separation

There are many ways to differentiate the objects (cars, dots, surface, white road markings) in an image. One way is to take a sample of the RGB image and see where clusters form in a three-dimensional graph. This was done for four images and produced Figure 5. As can be seen in Figure 5, the red dots form a cluster, which means they can be easily distinguished from other objects. These represent the green dots. These dots are all high in green and low in blue.

Figure 4. Car Park with green dots for car park spaces.

Figure 5. Three dimensional graphs of samples of RGB values from four images.

A cube could be made that encompasses only these dots. Then, if the average colour of an object is within this cube, it could be said that it is a green dot.
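A minimal Python sketch of this cube test is given below. The bounds `GREEN_LOWER` and `GREEN_UPPER` and the sample pixels are hypothetical values chosen only to reflect "high in green and low in blue"; real bounds would have to be read from the clusters in Figure 5.

```python
def in_cube(colour, lower, upper):
    """True if each RGB channel lies within the corresponding [lower, upper] bounds."""
    return all(lo <= c <= hi for c, lo, hi in zip(colour, lower, upper))

def mean_colour(pixels):
    """Average (r, g, b) over the pixels belonging to one object."""
    n = len(pixels)
    return tuple(sum(p[i] for p in pixels) / n for i in range(3))

# Hypothetical cube bounds in 8-bit RGB (assumption, not taken from the paper).
GREEN_LOWER, GREEN_UPPER = (0, 150, 0), (150, 255, 100)

dot = [(60, 200, 40), (80, 220, 60)]   # pixels of a green marker
car = [(200, 30, 30), (180, 40, 20)]   # pixels of a red car

dot_is_green = in_cube(mean_colour(dot), GREEN_LOWER, GREEN_UPPER)
car_is_green = in_cube(mean_colour(car), GREEN_LOWER, GREEN_UPPER)
```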

A simpler method of finding the green dots was found, as explained in Section 2.1. It uses the HSV image of the car park.

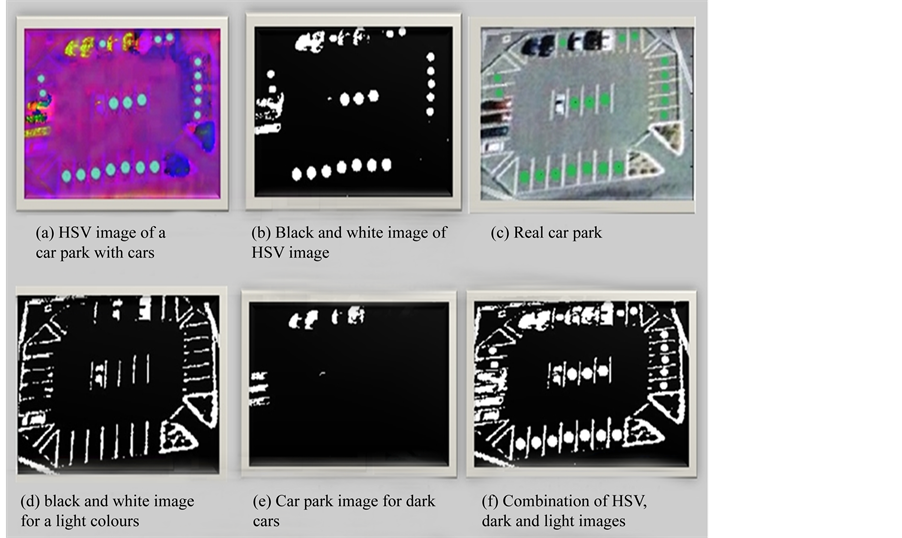

As can be seen in Figure 6(a), the empty parking spaces are still very bright compared with other objects. This image was converted to a grey image and then to a black and white image (see Figure 6(b)) using the same 40% threshold described in the image identification section.

To avoid picking up the surface of the car park, which is grey, only the bright and dark colours are extracted (see Figure 6(c) and Figure 6(d)). To detect light colours such as white (white cars and white road markings), a high threshold of 70% was used to form a black and white image, as shown in Figure 6(e). To see all the objects, the three black and white images are combined.

This is done by using the OR operator, as shown in the code below (where "|" is the OR operator):

Mix = HSVBWObject | lightObject | DarkObject;

The combination of the three images [14] is shown in Figure 6(f).
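The same combination can be sketched in Python on small nested lists. The helper `combine_masks` and the three tiny masks are hypothetical and only mirror the variable names used in the Matlab line above.

```python
def combine_masks(*masks):
    """Pixel-wise OR of equally sized binary masks (lists of lists of 0/1)."""
    return [[int(any(pixels)) for pixels in zip(*rows)] for rows in zip(*masks)]

# Hypothetical 2x3 masks, each marking a different kind of object.
hsv_bw_object = [[1, 0, 0], [0, 0, 0]]
light_object = [[0, 0, 1], [0, 0, 0]]
dark_object = [[0, 0, 0], [0, 1, 0]]

mix = combine_masks(hsv_bw_object, light_object, dark_object)
```

A pixel is white in the result if it is white in at least one of the three inputs.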

Figure 6. Image separation.

2.4. Image Development

After the segments for the objects have been obtained, the noise in the image needs to be removed. This can be done through dilation and erosion. Dilation expands the boundary of the objects in the image. This causes a problem in this image, as shown in Figure 7: objects merge together, so it is hard to distinguish one object from another. However, dilation is useful for filling holes in objects.

Erosion decreases the boundary of the objects so that they can be easily distinguished from one another. Figure 8 demonstrates the usefulness of this function. After erosion objects are more distinguishable from each other and small dots are removed. This prepares the image to identify whether parks are empty or filled.
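The two effects of dilation mentioned above, merging neighbouring objects and filling holes, can be seen in a small Python sketch. The function `dilate` is a hypothetical helper that uses a square neighbourhood; it is not the structuring element used in the paper.

```python
def dilate(binary, radius=1):
    """Set a pixel white if any pixel in the (2*radius+1) square around it is white."""
    h, w = len(binary), len(binary[0])
    return [[int(any(binary[yy][xx]
                     for yy in range(max(0, y - radius), min(h, y + radius + 1))
                     for xx in range(max(0, x - radius), min(w, x + radius + 1))))
             for x in range(w)]
            for y in range(h)]

# Two objects separated by a one-pixel gap merge into one after dilation.
two_objects = [[1, 1, 0, 1, 1]]
merged = dilate(two_objects)

# A one-pixel hole inside an object is filled.
with_hole = [[1, 1, 1], [1, 0, 1], [1, 1, 1]]
filled = dilate(with_hole)
```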

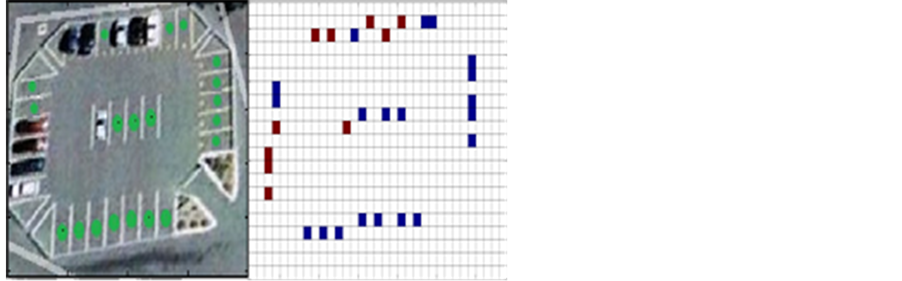

2.5. Image Determination

Using the coordinates found during the initialization of the car park, each parking space can be analysed to determine whether a car is present. Three criteria were used to determine whether a space was occupied: if the height, width or size of the connected object at the space's coordinates was either too big or too small, the object was considered to be a car. In this case the space was given the value 5, meaning that it is occupied. This information was displayed as shown in Figure 9.
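A minimal Python sketch of this decision is shown below, under several assumptions: the connected object is found with a 4-connected flood fill, the value 0 is used for a vacant space (the paper only states the value 5 for an occupied one), and the expected marker dimensions passed as `min_dims` and `max_dims` are invented for the example.

```python
OCCUPIED = 5   # value the paper assigns to an occupied space
EMPTY = 0      # assumed value for a vacant space (not stated in the paper)

def measure_object(binary, y, x):
    """Flood-fill the 4-connected white object at (y, x); return (height, width, size)."""
    if not binary[y][x]:
        return (0, 0, 0)
    h, w = len(binary), len(binary[0])
    seen, stack = {(y, x)}, [(y, x)]
    while stack:
        cy, cx = stack.pop()
        for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
            if 0 <= ny < h and 0 <= nx < w and binary[ny][nx] and (ny, nx) not in seen:
                seen.add((ny, nx))
                stack.append((ny, nx))
    ys = [p[0] for p in seen]
    xs = [p[1] for p in seen]
    return (max(ys) - min(ys) + 1, max(xs) - min(xs) + 1, len(seen))

def classify_slot(binary, y, x, min_dims, max_dims):
    """Return OCCUPIED if height, width or size falls outside the expected marker range."""
    dims = measure_object(binary, y, x)
    ok = all(lo <= d <= hi for d, lo, hi in zip(dims, min_dims, max_dims))
    return EMPTY if ok else OCCUPIED

# Hypothetical image: a 2x2 marker on the left, a wider 2x5 object on the right.
image = [
    [1, 1, 0, 1, 1, 1, 1, 1],
    [1, 1, 0, 1, 1, 1, 1, 1],
]
slot_a = classify_slot(image, 0, 0, min_dims=(2, 2, 3), max_dims=(3, 3, 9))
slot_b = classify_slot(image, 0, 4, min_dims=(2, 2, 3), max_dims=(3, 3, 9))
```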

The GUI used to run the Matlab program, which opens the image and produces an output, is shown in Figure 10.

3. Case Study for the Whole Process

This part describes the process of determining the presence of cars in parking spaces from a camera image of a large car park. There are two different methods of implementing the image detection module: applying point detection with the Canny operator, or applying edge detection with a boundary condition method. To detect a car in the car park, it is indispensable to prepare a template image and an edge image. The aim of this project is to detect the presence of a car in each parking slot.

3.1. Process of Template Images

It is required to prepare a template image which shows the location of each parking slot. The process of preparing a template image has five steps [15], as shown in Figure 11.

Figure 7. Dilated image.

Figure 8. Eroded image.

Figure 9. Comparison between the RGB image and the output produced from the HSV image.

3.1.1. Acquire Image

First, an image of the car park is taken when it contains no cars, as shown in Figure 12(a). The system uses this image to record the location of every parking space. The RGB values can be used to find the green circles that represent empty spaces. With these locations, the system knows where to look for cars in the future.

3.1.2. Convert Image to HSV

To obtain the location of the green dots in the empty car park, the image is converted into an HSV image, as shown in Figure 12(b). This is done using the "rgb2hsv" command in Matlab.

3.1.3. Extraction and Detection

Green circles can be extracted by selecting particular values of the H, S and V components of each pixel. In this case a range of values is set, and pixels within this range are extracted. The circle detection process requires the Hough transform, a feature extraction technique that is used here to extract the circles together with their radii and centres.
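The range-based extraction can be sketched in Python as follows. The hue, saturation and value limits in `green_mask` are hypothetical, since the paper does not state the ranges it used, and the standard `colorsys` module stands in for Matlab's rgb2hsv.

```python
import colorsys

def green_mask(rgb_image, h_range=(0.25, 0.42), s_min=0.4, v_min=0.4):
    """Return a binary mask that is 1 where a pixel's H, S and V fall in the chosen ranges."""
    mask = []
    for row in rgb_image:
        mask_row = []
        for r, g, b in row:
            h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
            inside = h_range[0] <= h <= h_range[1] and s >= s_min and v >= v_min
            mask_row.append(int(inside))
        mask.append(mask_row)
    return mask

# Hypothetical pixels: a green marker, a red car and the grey road surface.
image = [[(30, 200, 40), (200, 30, 30), (120, 120, 120)]]
mask = green_mask(image)
```

Only the green marker pixel falls inside all three ranges, so it is the only pixel kept in the mask.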

Figure 10. GUI for car park image reader.

Figure 11. Processing steps of template image.

3.1.4. Red Pixels and Noise Elimination

First, the red segment of the image is extracted, because red pixels form the strongest segment of the image, as shown in Figure 12(c). If other objects in the environment have the same red pixel values, they will appear in the extracted image as well. They act as noise in the extracted image and may lead to an inaccurate result when the Hough transform is applied. Therefore, parts that do not match the shape of the dot or circle being sought should be trimmed away.

The process of circle detection is shown in the following points:

• Create an accumulator space corresponding to the pixels and set all of its values to zero

• For each edge point, increment all accumulator values according to the circle equation

$$(i - a)^2 + (j - b)^2 = r^2 \qquad (2)$$

where (i) and (j) are the coordinates of an edge point of the image, (a) and (b) are the coordinates of a candidate circle centre, corresponding to a cell of the accumulator, and (r) is the radius.

• This will give the variable "a" in the equation

• Now, for all possible values of "a", find the values of "b" which satisfy the equation

• Search for local maxima. These are the points which have a higher probability than others

• These maxima are the locations of the circles

• Draw a circle using the rect command in Matlab to obtain Figure 12(d)

Figure 12. Whole process of template image.
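The voting procedure listed above can be sketched in Python for the simplified case of a single, known radius. This is an illustrative assumption: the paper also recovers the radii, and the function name `hough_circles`, the `tolerance` parameter and the sample edge points are all hypothetical.

```python
import math
from collections import Counter

def hough_circles(edge_points, radius, width, height, tolerance=0.5):
    """Accumulate votes for candidate centres (a, b) of circles with a known radius."""
    accumulator = Counter()                      # every cell starts at zero
    for i, j in edge_points:
        for a in range(width):
            for b in range(height):
                # Vote when (i - a)^2 + (j - b)^2 = r^2 holds to within the tolerance.
                if abs(math.hypot(i - a, j - b) - radius) <= tolerance:
                    accumulator[(a, b)] += 1
    return accumulator

# Twelve integer edge points lying exactly on a circle of radius 5 centred at (10, 12).
offsets = [(5, 0), (-5, 0), (0, 5), (0, -5),
           (3, 4), (3, -4), (-3, 4), (-3, -4),
           (4, 3), (4, -3), (-4, 3), (-4, -3)]
edges = [(10 + dx, 12 + dy) for dx, dy in offsets]

votes = hough_circles(edges, radius=5, width=24, height=24)
centre, count = votes.most_common(1)[0]          # global maximum of the accumulator
```

Every edge point votes for the true centre, so that cell collects all twelve votes, while neighbouring cells receive fewer.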

3.2. The Process of Edge Image

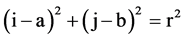

There are many ways to differentiate and extract objects, but every process has its limitations. The process considered here is background elimination. Many car parks have the same road surface, which is defined here as the background. The background can be extracted, and hence objects such as cars can easily be detected. The processing of the edge image has five steps [16], as shown in Figure 13.

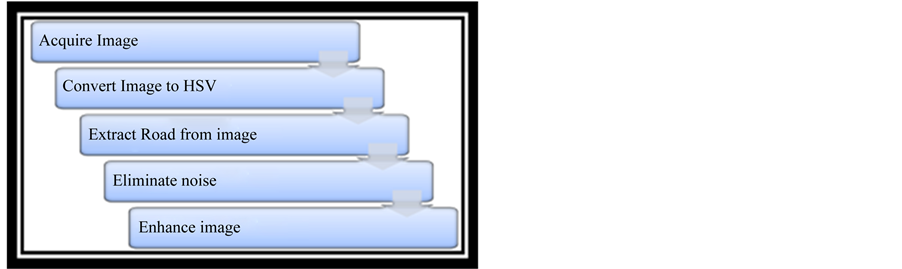

3.2.1. Converting to HSV

First the image is converted to HSV, because it is easy to differentiate pixels of different colours in HSV space. In Figure 13(a) it can be clearly seen that the reddish colours are background. Looking at the pixels in Figure 13(b), it becomes clear that the background pixels fall within a specific range of colours. By executing the following code, the background can be obtained:

    hue = (Img_HSV(:,:,1) >= 0) & (Img_HSV(:,:,1) <= 0.9);
    saturation = (Img_HSV(:,:,2) >= 0.01) & (Img_HSV(:,:,2) …

The object is eliminated, as shown in Figure 13(c), and the result is then converted to a grey image, as shown in Figure 14(d).

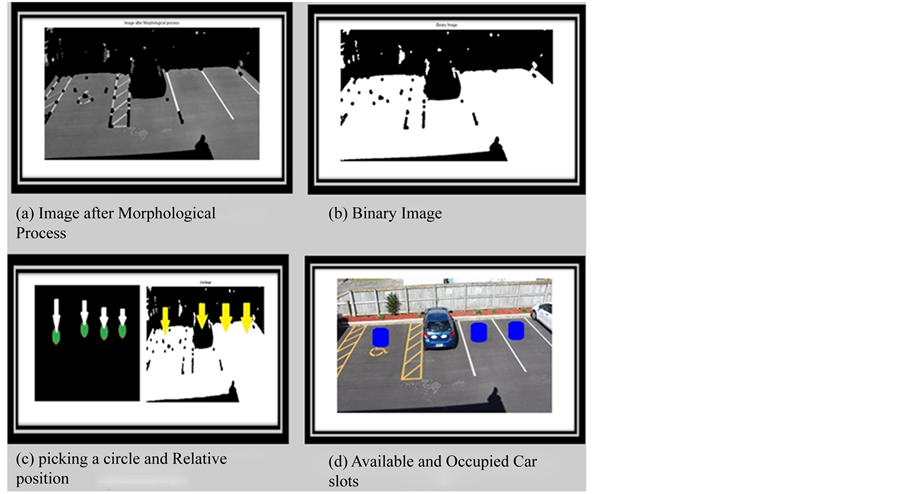

3.2.2. Morphological Processing

This is a non-linear process related to the shape and morphology of the image. It depends on the relative ordering of pixel values rather than on their numerical values, as shown in Figure 15(a).

    se = strel('disk', 2);
    I = imopen(Gray_img, se);
    disp('Morphological process completed');

The image is then converted to a binary image, as shown in Figure 15(b). The images of both the car parking template and the car park have now been processed. The template image contains the locations of the parking spaces as circles, and the radius and centre of each circle are known. After processing, the car park image contains the road, with all other objects eliminated. An algorithm then checks whether an object is present at a particular parking space. This can be done simply by examining the pixel value at that space. For example, as seen in Figure 15(c), a circle is picked from the first image and the corresponding position is examined in the second image: if the second image contains white space there, the parking space is empty; if it contains black pixels, the space is occupied, as shown in Figure 15(d).

Figure 13. Processing steps of edge image.

Figure 14. First parts of processing edge image.
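The pixel check described above can be sketched in Python as follows. The function `slot_status`, the slot names and the tiny road image are hypothetical; the sketch inspects only the pixel at each circle centre, which is the simplest reading of the description.

```python
def slot_status(road_image, centres):
    """Label each slot by the pixel at its circle centre: white (1) empty, black (0) occupied."""
    return {name: ("empty" if road_image[row][col] == 1 else "occupied")
            for name, (row, col) in centres.items()}

# Hypothetical processed car park image: white road on the left, a black object on the right.
road = [[1, 1, 0, 0],
        [1, 1, 0, 0]]

# Hypothetical circle centres (row, column) taken from the template image.
centres = {"A1": (0, 0), "A2": (1, 2)}

status = slot_status(road, centres)
```

A more robust variant might inspect all pixels inside the circle rather than the centre alone, at the cost of a little more computation.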

4. Communication

To transfer the image to the computer, a wireless system is used. It is composed of a video camera that captures the image, a transmitter that sends the images to a receiver in the control room, and the receiver itself, which passes the signals to a computer. The computer processes the signals and sends them to the indoor transmitter. The computer has an FPGA platform attached that implements a filter to remove the noise in the image. The indoor transmitter sends the signals wirelessly to the receiver attached to the monitor or display [17] [18], as shown in Figure 16.

5. Architecture and Components

To transmit video images and data from a video camera to a PC, an inexpensive compact wireless video camera is used, which transmits video from one location to another without traditional expensive and messy wiring. It has the benefit of working with all types of AV devices, such as video cameras (NTSC or PAL), TVs, recorders and so forth. The transmission range is up to 1000 feet (300 metres) with a high gain omni-directional antenna of 12 dB gain or higher, or 3000 feet with a Yagi or other high gain directional antenna [19]-[21].

Figure 15. Remaining parts of processing edge image.

Figure 16. Process of transferring images to the computer.

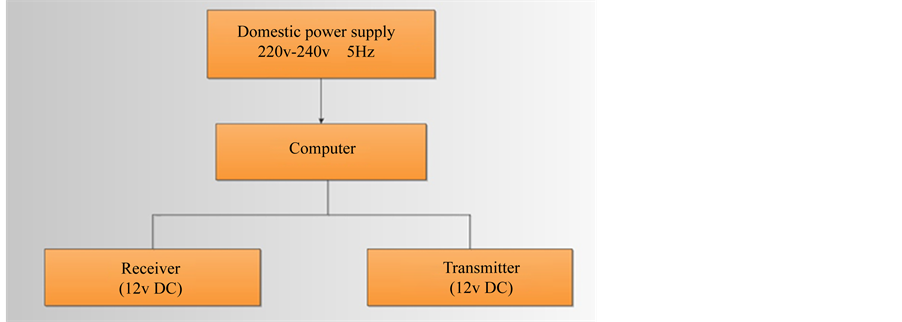

6. Power Sources

The lifetime of the video camera, transmitter, receiver, PC and monitor depends on the power source. In this project a solar panel is used as the outdoor power source. It supplies power to the transmitter, receiver and monitor through a 12 V DC battery [22]-[24]. The complete outdoor power source for the car park is shown in Figure 17.

A domestic power supply is also used as the indoor power source. It supplies power to the computer, transmitter and receiver [25] [26]. The complete indoor power source is shown in Figure 18.

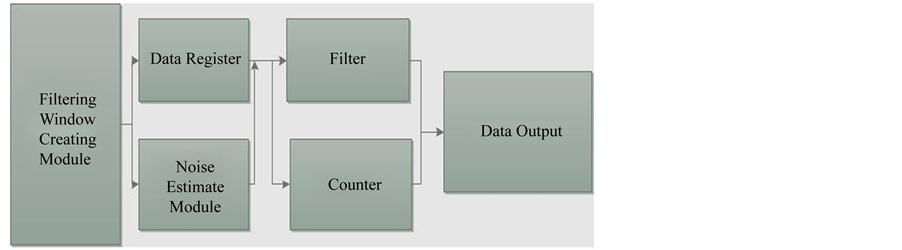

7. Image Pre-Processing Module

The image pre-processing module is mostly concerned with filter design, and it is implemented in the FPGA [27]. The whole design is shown in Figure 19. A filtering window creation module creates the filtering windows required by the follow-up modules. The noise estimation module estimates the type of noise, so that the most suitable filtering approach can be chosen [28] [29]. Using a counter, the module counts the rows and columns of the input data to determine whether a window is legitimate, and then counts the legitimate window data to determine the location of the output data in the image. The resulting image data are sent to the next level of processing equipment [30].
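The idea of building filtering windows and using row and column counters to decide whether a window is legitimate can be illustrated in software. The Python sketch below assumes a 3x3 median filter, which is only one possible filter and is not stated in the paper; the function name `median_filter_3x3` and the sample image are hypothetical, and the sketch says nothing about how the FPGA design is actually structured.

```python
def median_filter_3x3(image):
    """Apply a 3x3 median filter; pixels whose window is incomplete are copied through."""
    h, w = len(image), len(image[0])
    out = [row[:] for row in image]
    for r in range(h):              # row counter
        for c in range(w):          # column counter
            # A window is legitimate only when it lies entirely inside the image.
            if 1 <= r < h - 1 and 1 <= c < w - 1:
                window = [image[r + dr][c + dc]
                          for dr in (-1, 0, 1) for dc in (-1, 0, 1)]
                out[r][c] = sorted(window)[4]   # median of the nine values
    return out

# Hypothetical 3x3 grey image with a single impulse-noise pixel in the centre.
noisy = [[10, 10, 10],
         [10, 255, 10],
         [10, 10, 10]]
filtered = median_filter_3x3(noisy)
```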

8. Car Park Display

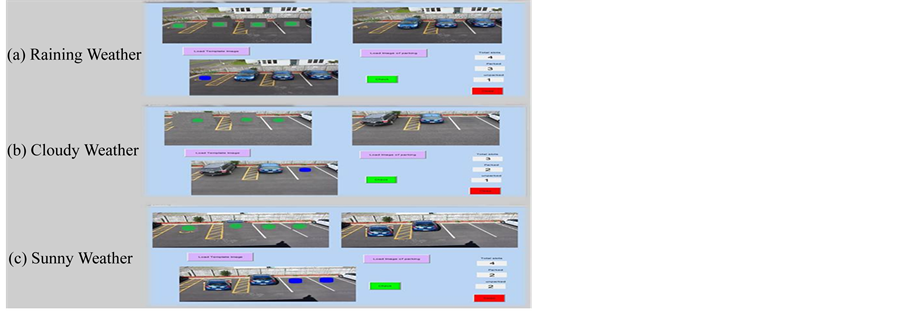

To test different car parking scenarios, a program was written that generates car park layouts with empty and occupied spaces. In this way, the graphical user interface could be tested with differing numbers of cars parked in different positions. The GUI is shown for rainy weather in Figure 20(a), cloudy weather in Figure 20(b), and sunny weather in Figure 20(c).

Figure 17. Outdoor power source for the car park.

Figure 18. Indoor power source for car park.

Figure 19. The design flow of an image pre-processing module.

Figure 20. Car park display for different conditions.
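A scenario generator of this kind might look like the following Python sketch. The function `generate_car_park`, the grid layout and the use of 0 for an empty space are assumptions made for illustration; only the value 5 for an occupied space comes from the paper.

```python
import random

OCCUPIED, EMPTY = 5, 0   # 5 follows the paper; 0 for an empty space is an assumption

def generate_car_park(rows, cols, n_cars, seed=None):
    """Return a rows x cols grid with n_cars randomly chosen spaces marked OCCUPIED."""
    rng = random.Random(seed)
    slots = [(r, c) for r in range(rows) for c in range(cols)]
    occupied = set(rng.sample(slots, n_cars))
    return [[OCCUPIED if (r, c) in occupied else EMPTY for c in range(cols)]
            for r in range(rows)]

# Hypothetical scenario: ten spaces in two rows with four cars parked at random.
scenario = generate_car_park(rows=2, cols=5, n_cars=4, seed=1)
```

Fixing the seed makes a scenario reproducible, which is convenient when the same layout has to be shown in the GUI more than once.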

9. Conclusion

An image based method of detecting the availability of parking spaces was modelled and tested with different occupancy scenarios. The method of analysing an aerial view of the car park has been presented step by step. It consists of finding the parking space coordinates from an empty car park, acquiring an image with cars, converting the image to black and white for simple analysis, removing noise, and determining whether each space is vacant or occupied. The current limitation of this work is its sensitivity to weather conditions; this could be improved by filtering the image with a higher quality transform, so that the camera can detect the parking spaces in any weather.

References

- Yu, H.M., Pang, Z.F. and Ruan, D.R. (2008) The Design of the Embedded Wireless Vehicles Monitoring Management System Based on GPRS: Evidence from China. International Conference on Intelligent Information Technology, Shanghai, 21-22 December 2008, 677-680.

- Wang, L.F., Chen, H. and Li, Y. (2009) Integrating Mobile Agent with Multi-agent System for Intelligent Parking Negotiation and Guidance. 4th IEEE Conference on Industrial Electronics and Applications, 25-27 May 2009, Xi’an, 1704-1707.

- Abdallah, L., Stratigopoulos, H.-G., Mir, S. and Kelma, C. (2012) Experiences With Non-Intrusive Sensors For RF Built-In Test. 2012 IEEE International Test Conference (ITC), Anaheim, 5-8 November 2012, 1-8.

- Kim, D. and Chung, W. (2008) Motion Planning for Car-Parking Using the Slice Projection Technique. IEEE/RSJ International Conference on Intelligent Robots and Systems, Nice, 22-26 September 2008, 1050-1055.

- Zhang, B., Jiang, D.L., Wang, F. and Wan, T.T. (2009) A Design of Parking Space Detector Based on Video Image. The Ninth International Conference on Electronic Measurement & Instruments, Beijing, 16-19 August 2009, 253-256.

- Sreedevi, A.P. and Nair, B.S.S. (2011) Image Processing Based Real Time Vehicle Theft Detection and Prevention System. 2011 International Conference on Process Automation, Control and Computing (PACC), Coimbatore, 20-22 July 2011, 1-6.

- Zhang, X., Li, Y., Wang, J. and Chen, Y. (2009) Design of High-Speed Image Processing System Based on FPGA. The Ninth International Conference on Electronic Measurement & Instruments, Beijing, 16-19 August 2009, 65-69.

- Jermsurawong, J., Ahsan, M., et al. (2012) Car Parking Vacancy Detection and Its Application in 24-Hour Statistical Analysis. 10th International Conference on Frontiers of Information Technology, Islamabad, 17-19 December 2012, 84-90.

- Lin, S., Chen, Y. and Liu, S. (2006) A Vision-Based Parking Lot Management System. IEEE International Conference on Systems, Man, and Cybernetics, Taipei, 8-11 October 2006, 2897-2902.

- Conci, A., Carvalho, J. and Rauber, T. (2009) A Complete System for Vehicle Plate Localization, Segmentation and Recognition in Real Life Scene. IEEE Latin America Transactions, 7, 497-506. http://dx.doi.org/10.1109/TLA.2009.5361185

- Lee, K. (2012) Colour Matching for Soft Proofing Using a Camera. IET Image Processing, 6, 292-300.

- Tang, V., Zheng, Y., Cao, J., Hong, T. and Polytechnic, K. (2006) An Intelligent Car Park Management System Based on Wireless Sensor Networks. 1st International Symposium on Pervasive Computing and Applications, 3-5 August 2006, Urumqi, 65-70.

- Jinghong, D., Yaling, D. and Kun, L. (2007) Development of Image Processing System Based on DSP and FPGA. The Eighth International Conference on Electronic Measurement and Instruments, 16 August 2007-18 July 2007, Xi’an, 791-794.

- Matjašec, Ž. and Đonlagić, D. (2011) An Optical Signal Processing Device for White-Light Interferometry, Based on CPLD. 2011 Proceedings of the 34th International Convention MIPRO, Opatija, 23-27 May 2011, 60-64.

- Wang, Y., Zhou, G. and Li, T. (2006) Design of a Wireless Sensor Network for Detecting Occupancy of Vehicle Berth in Car Park. Seventh International Conference on Parallel and Distributed Computing, Applications and Technologies, Taipei, December 2006, 115-118.

- Funck, S., Mohler, N. and Oertel, W. (2004) Determining Car-Park Occupancy from Single Images. 2004 IEEE Intelligent Vehicles Symposium, Parma, 14-17 June 2004, 325-328. http://dx.doi.org/10.1109/IVS.2004.1336403

- Zilan, R., Barceló-Ordinas, J.M. and Tavli, B. (2008) Image Recognition Traffic Patterns for Wireless Multimedia Sensor Networks. In: Cerdà-Alabern, L., Ed., Lecture Notes in Computer Science Volume 5122: Wireless Systems and Mobility in Next Generation Internet, 4th International Workshop of the EuroNGI/EuroFGI Network of Excellence, Barcelona, 16-18 January 2008, 49-59. http://link.springer.com/chapter/10.1007%2F978-3-540-89183-3_5

- Wolff, J., Heuer, T., Gao, H., Weinmann, M., Voit, S. and Hartmann, U. (2006) Parking Monitor System Based on Magnetic Field Sensors. Proceedings of the IEEE ITSC 2006 Intelligent Transportation Systems Conference, Toronto, 17-20 September 2006, 1275-1279. http://dx.doi.org/10.1109/ITSC.2006.1707398

- Riza, N.A., Marraccini, P.J. and Baxley, C.R. (2012) Data Efficient Digital Micromirror Device-Based Image Edge Detection Sensor Using Space-Time Processing. IEEE Sensors Journal, 12, 1043-1047.

- Yusnita, R., Norbaya, F. and Basharuddin, N. (2012) Intelligent Parking Space Detection System Based on Image Processing. International Journal of Innovation, Management and Technology, 3, 232-235.

- Caicedo, F. and Vargas, J. (2012) Access Control Systems and Reductions of Driver’s Wait Time at the Entrance of a Car Park. 7th IEEE Conference on Industrial Electronics and Applications (ICIEA), Singapore, 18-20 July 2012, 1639-1644.

- Prasad, A.S.G., Sharath, U., Amith, B. and Supritha, B.R. (2012) Fiber Bragg Grating Sensor Instrumentation for Parking Space Occupancy Management. International Conference on Optical Engineering (ICOE), Belgaum, 26-28 July 2012, 1-4.

- Chien, S.A. (1994) Automated Synthesis of Image Processing Procedures for a Large-Scale Image Database. 4th International Conference on Knowledge and Smart Technology (KST), Austin, 13-16 November 1994, 796-800.

- Caicedo, F. and Vargas, J. (2012) Access Control Systems and Reductions of Driver’s Wait Time at the Entrance of a Car Park. IEEE 7th International Conference on Industrial Electronics and Applications, Singapore, 18-20 July 2012, 1639-1644.

- Boluk, P.S., Irgan, K., Baydere, S. and Harmanci, E. (2011) IQAR: Image Quality Aware Routing for Wireless Multimedia Sensor Networks. 7th International Wireless Communications and Mobile Computing Conference (IWCMC), Istanbul, 4-8 July 2011, 394-399.

- Gall, R., Troester, F. and Mogan, G. (2010) Building an Experimental Car Like Mobile Robot for Fully Autonomous Parking. 2010 IEEE/ASME International Conference on Advanced Intelligent Mechatronics (AIM), Montreal, 6-9 July 2010, 1081-1086. http://dx.doi.org/10.1109/AIM.2010.5695903

- Chen, M.K., Hu, C. and Chang, T.H. (2010) The Research on Optimal Parking Space Choice Model in Parking Lots. 3rd International Conference on Computer Research and Development (ICCRD), Shanghai, 11-13 March 2011, 93-97.

- Barton, J., Buckley, J., O’Flynn, B., O’Mathuna, S.C., Benson, J.P., O’Donovan, T., Roedig, U. and Sreenan, C. (2007) The D-Systems Project—Wireless Sensor Networks for Car-Park Management. IEEE 65th Vehicular Technology Conference, Dublin, 22-25 April 2007, 170-173.

- Souissi, R., Cheikhrouhou, O., Kammoun, I. and Abid, M. (2011) A Parking Management System Using Wireless Sensor Networks. 2011 International Conference on Microelectronics (ICM), Hammamet, 19-22 December 2011, 1-7.

- Srikanth, S.V., Pramod, P.J., Dileep, K.P., Tapas, S., Patil, M.U. and Sarat, C.B.N. (2009) Design and Implementation of a Prototype Smart PARKing (SPARK) System Using Wireless Sensor Networks. International Conference on Advanced Information Networking and Applications Workshops, Bradford, 26-29 May 2009, 401-406. http://dx.doi.org/10.1109/WAINA.2009.53