American Journal of Computational Mathematics

Vol.04 No.05(2014), Article ID:51290,8 pages

10.4236/ajcm.2014.45033

Fast and Numerically Stable Approximate Solution of Trummer’s Problem

Mohammad M. Tabanjeh

Department of Mathematics and Computer Science, Virginia State University, Petersburg, USA

Email: mtabanjeh@vsu.edu

Copyright © 2014 by author and Scientific Research Publishing Inc.

This work is licensed under the Creative Commons Attribution International License (CC BY).

http://creativecommons.org/licenses/by/4.0/

Received 9 September 2014; revised 10 October 2014; accepted 20 October 2014

ABSTRACT

Trummer’s problem is the problem of multiplication of an n × n Cauchy matrix C by a vector. It serves as the basis for the solution of several problems in scientific computing and engineering [1] . The straightforward algorithm solves Trummer’s problem in O(n2) flops. The fast algorithm solves the problem in O(nlog2n) flops [2] but has poor numerical stability. The algorithm we discuss here in this paper is the celebrated multipoint algorithm [3] which has been studied by Pan et al. The algorithm approximates the solution in O(nlogn) flops in terms of n but its cost estimate depends on the bound of the approximation error and also depends on the correlation between the entries of the pair of n-dimensional vectors defining the input matrix C.

Keywords:

Cauchy Matrix, Mulipoint Algorithm, Structure Matrices, Displacement Operators

1. Introduction

Computations with dense structured matrices have many applications in sciences, communications and engineering. The structure enables dramatic acceleration of the computations and major decrease in memory space but sometimes leads to numerical stability problems. The best well-known classes of structured matrices are Toeplitz, Hankel, Cauchy and Vandermonde matrices.

The computations with such matrices are widely applied in the areas of algebraic coding, control, signal processing, solution of partial differential equations and algebraic computing. For example, Toeplitz matrices arise in some major signal processing computations and the problem of multiplying Vandermonde matrix by a vector is equivalent to polynomial evaluation, whereas solving a Vandermonde system is equivalent to polynomial interpolation. Moreover, Cauchy matrices appear in the study of integral equations and conformal mappings. The complexity of computations with n × n dense structured matrices dramatically decreases in comparison with the general n × n matrices, that is, from the order of n2 words of storage space and  arithmetic operations (ops) with

arithmetic operations (ops) with  in the best algorithms, to

in the best algorithms, to  words of storage space and

words of storage space and  ops (see Table 1 below for more details).

ops (see Table 1 below for more details).

2. Some Basic Definitions

Throughout this paper, we use the following notations;  denotes the set of nonnegative integers,

denotes the set of nonnegative integers,  is the set of positive real numbers, Z+ denotes the set of positive integers, and R denotes the set of real numbers.

is the set of positive real numbers, Z+ denotes the set of positive integers, and R denotes the set of real numbers.

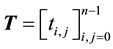

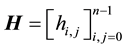

Definition 2.1. A matrix  is a Toeplitz matrix if

is a Toeplitz matrix if  for every pair of its entries

for every pair of its entries  and

and . A matrix

. A matrix  is a Hankel matrix if

is a Hankel matrix if  for every pair of its entries

for every pair of its entries  and

and .

.

Definition 2.2. For a given vector , the matrix

, the matrix  of the form

of the form

Definition 2.3. Given two vectors s and t such that

For more details regarding the four classes of structured matrices, see Table 2 below.

Table 1. Parameter and flops count.

Table 2. General definition of the four classes of structured matrices.

Remark 2.1. It is quite easy to verify that TJ and JT are Hankel matrices if T is a Toeplitz matrix, and HJ and JH are Toeplitz matrices if H is a Hankel matrix where J is the following reflection matrix,

3. The Displacement Operators of Dense Structured Matrices

The concept of displacement operators and displacement rank which was introduced by T. Kailath, S. Y. Kung, and M. Morf in 1979 and studied by Pan, Bini, and other authors is one of the powerful tools for studying and dealing with matrices that have structure. The displacement rank approach, when it was initially introduced, was intended for more restricted use [4] , namely, to measure how “close” to Toeplitz a given matrix is. Then the idea turned out to be even more powerful, thus it was developed, generalized and extended to other structured matrices. In this section, we consider the most general and modern interpretation of the displacement of a matrix.

The main idea is, for a given structured matrix A, we need to find an operator L that transforms the matrix into a low rank matrix

Definition 3.1. For any fixed field

and Stein type,

The image

Theorem 3.1.

erator matrix N is non-singular.

Proof:

The operator matrices that we will be using are the matrices Zf,

f is any scalar,

We may use the operator matrices Z1 and Z0 in the case of Toeplitz matrices, Z1 and

4. The Correlation of Structured Matrices to Polynomials

The product

represents the vector

If

then the matrix

Here,

Note that the numerical stability is very important in approximation algorithm for multipoint polynomial evaluation. It relies on expressing

We may vary the vector x by linearly mapping it to the vector

5. New Transformation of Cauchy Matrices

As we mentioned earlier, Trummer’s problem is the problem of multiplication of an n × n Cauchy matrix C by a vector which is the basis for the solution of many important problems of scientific computing and engineering. The straightforward algorithm solves Trummer’s problem in

The algorithm we presenting in this paper approximates the solution in

The main goal in this paper is to enrich the power of the multipoint algorithm by introducing and proving some new expressions for Cauchy matrix via other Cauchy matrices [7] , which we may vary by changing one of their basis vectors. Under a certain choice of such a vector, the solution of Trummer’s problem will be simplified; thus, the power of the multipoint algorithm can be developed as we will see in the next section.

Therefore, we will achieve our goal by using a simple transformation of the useful basic formula of [8] , and the resulting expressions for C will give us further algorithmic opportunities.

Definition 5.1. For a pair of n-dimensional vectors

ciated n × n Cauchy, Vandermonde, and triangular Hankel matrices, respectively. For a vector

and

denote a pair of n × n diagonal matrices, defined by the vectors a and b.

Theorem 5.1. (See [8] ) Let

The main idea of the transformation of the basic vectors defining the problem is taken from [9] , where this idea was used for multipoint polynomial evaluation and interpolation.

Definition 5.2. Trummer’s problem is the problem of computing the vector

the problem of computing the vector

Definition 5.3.

Lemma 5.1.

Approximate solution of Trummer’s degenerate problem can be reduced to Trummer’s problem due to the next simple result.

Lemma 5.2.

Proof:

ma 5.1.

6. Transformations of Cauchy Matrices and Trummer’s Problem

Theorem 6.1. For a triple of n-dimensional vector

Proof Theorem 6.1:

1) Proof of Equation (5):

From Equation (3), we obtain

and Equation (3) for

This gives:

Clearly, the last one is just Equation (5).

2) Proof of Equation (6):

From Equation (4);

Then solve for

Now substitute the last expression into Equation (5) and obtain the following:

which is obviously Equation (6).

3) Proof of Equation (7):

From Equation (4);

replace

Now, replace the vector d by b and obtain

Since

Start with Equation (4) which is

obtain

Now replace

This implies that

4) Proof of Equation (8):

From Equation (4):

Expand Equation (4)

Substitute Equation (10) and the matrix equation

7. Approximate Stable Solutions of Trummer’s Problems

The algorithm we are studying and presenting in this section depends on the multipoint algorithm which approximates the solution of Trummer’s problem in

Recall, the power series expansion:

The basis for the algorithm is the following expressions:

where

Once again for large M the expression

The product of Cauchy matrix by a vector is

where

For any n × n Cauchy matrix

If either of the ratios

8. Discussions and Conclusions

Recall the following two formulas from Section 5:

Equations (13) and (14) are Vandermonde-free and Hankel-free, but they enable us to transform the basis vectors s and t for

To compute the matrices

And

For example, if

is the scaled nth roots of unity for a scalar a and

and the matrices

Remark 8.1. Trummer’s problem frequently arises for Cauchy degenerate matrices that are defined as fol-

lows:

We have

where

because

Acknowledgements

We thank the Editor and referees for their valuable comments.

References

- Rokhlin, V. (1985) Rapid Solution of Integral Equations of Classical Potential Theory. Journal of Computational Physics, 60, 187-207. http://dx.doi.org/10.1016/0021-9991(85)90002-6

- Gerasoulis, A. (1987) A Fast Algorithm for the Multiplication of Generalized Hilbert Matrices with Vectors. Mathematics of Computation, 50, 179-188. http://dx.doi.org/10.1090/S0025-5718-1988-0917825-9

- Greengard, L. and Rokhlin, V. (1987) A Fast Algorithm for Practice Simulation. Journal of Computational Physics, 73, 325-348. http://dx.doi.org/10.1016/0021-9991(87)90140-9

- Pan, V.Y., Zheng, A., Huany, X. and Yu, Y. (1995) Fast Multipoint Polynomial Evaluation and Interpolation via Computation with Structured Matrices. Annals of Numerical Mathematics, 4, 483-510.

- Pan, V.Y. (2001) Structured Matrices and Polynomials, Unified Superfast Algorithms. Birkhäuser, Boston. http://dx.doi.org/10.1007/978-1-4612-0129-8

- Bini, D. and Pan, V.Y. (1994) Polynomial and Matrix Computations, Volume 1: Fundamental Algorithms. Birkhäuser, Boston. http://dx.doi.org/10.1007/978-1-4612-0265-3

- Pan, V.Y., Tabanjeh, M., Chen, Z., Landowne, E. and Sadikou, A. (1998) New Transformations of Cauchy Matrices and Trummer’s Problem. Computers & Mathematics with Applications, 35, 1-5. http://dx.doi.org/10.1016/S0898-1221(98)00091-1

- Fink, T., Heinig, G. and Rost, K. (1993) An Inversion Formula and Fast Algorithms for Cauchy-Vandermonde Matrices. Linear Algebra & Its Applications, 183, 179-191. http://dx.doi.org/10.1016/0024-3795(93)90431-M

- Pan, V.Y., Landowne, E., Sadikou, A. and Tiga, O. (1993) A New Approach to Fast Polynomial Interpolation and Multipoint Evaluation. Computers & Mathematics with Applications, 25, 25-30. http://dx.doi.org/10.1016/0898-1221(93)90129-J

- Pan, V.Y. (1995) An Algebraic Approach to Approximate Evaluation of a Polynomial on a Set of Real Points. Advances in Computational Mathematics, 3, 41-58. http://dx.doi.org/10.1007/BF02431995