American Journal of Operations Research

Vol.04 No.06(2014), Article ID:50375,8 pages

10.4236/ajor.2014.46032

A Penalty Function Algorithm with Objective Parameters and Constraint Penalty Parameter for Multi-Objective Programming

Zhiqing Meng, Rui Shen, Min Jiang

College of Business and Administration, Zhejiang University of Technology, Hangzhou, China

Email: mengzhiqing@zjut.edu.cn, shenrui@zjut.edu.cn, jiangmin9@126.com,

Copyright © 2014 by authors and Scientific Research Publishing Inc.

This work is licensed under the Creative Commons Attribution International License (CC BY).

http://creativecommons.org/licenses/by/4.0/

Received 28 August 2014; revised 22 September 2014; accepted 6 October 2014

ABSTRACT

In this paper, we present an algorithm to solve the inequality constrained multi-objective programming (MP) by using a penalty function with objective parameters and constraint penalty parameter. First, the penalty function with objective parameters and constraint penalty parameter for MP and the corresponding unconstraint penalty optimization problem (UPOP) is defined. Under some conditions, a Pareto efficient solution (or a weakly-efficient solution) to UPOP is proved to be a Pareto efficient solution (or a weakly-efficient solution) to MP. The penalty function is proved to be exact under a stable condition. Then, we design an algorithm to solve MP and prove its convergence. Finally, numerical examples show that the algorithm may help decision makers to find a satisfactory solution to MP.

Keywords:

Multi-objective programming, penalty function, objective parameters, constraint penalty parameter, Pareto weakly-efficient solution

1. Introduction

Multi-objective programming is an important model in solving vector optimization problems. Many methods had been given to find solutions to multiobjective programming [1] . It is well-known that the penalty function is one of efficient methods in studying multiobjective programming. For example, in 1984, White [2] presented an exact penalty function for multiobjective programming. Sunaga, Mazeed and Kondo [3] applied penalty function formulation to interactive multiobjective programming problems. Ruan and Huang [4] studied weak calmness and weak stability of exact penalty functions for multiobjective programming. By penalty function, Liu [5] derived necessary and sufficient conditions without a constraint qualification for e-Pareto optimality of multi- objective programming, and the generalized e-saddle point for Pareto optimality of the vector Lagrangian. Huang and Yang [6] gave nonlinear Lagrangian for multiobjective optimization to duality and exact penalization. Chang and Lin [7] solved interval goal programming by using S-shaped penalty function. Antczak [8] studied the vector exact

penalty method for nondifferentiable convex multiobjective programming problems. Huang, Teo and Yang [9] discussed calmness of exact penalization in vector optimization with cone constraints. Huang [10] proved calmness of exact penalization in constrained scalar set-valued optimization. Meng, Shen and Jiang [11] defined an objective penalty function based on objective weight for multiobjective optimization problem and presented an interactive algorithm. This paper defines a penalty function with objective parameters and constraint penalty parameter which differs from an objective penalty function in [11] .

penalty method for nondifferentiable convex multiobjective programming problems. Huang, Teo and Yang [9] discussed calmness of exact penalization in vector optimization with cone constraints. Huang [10] proved calmness of exact penalization in constrained scalar set-valued optimization. Meng, Shen and Jiang [11] defined an objective penalty function based on objective weight for multiobjective optimization problem and presented an interactive algorithm. This paper defines a penalty function with objective parameters and constraint penalty parameter which differs from an objective penalty function in [11] .

Because it is almost not possible for decision makers (DMs) to obtain all efficient solutions to MP, it is significant to present an efficient algorithm of MP so that DMs finds an easy and satisfactory solution to the MP. Luque, Ruiz and Steuer pointed out that an efficient algorithms not only help decision makers learn more about efficient solutions, but also navigate to a final solution as quickly as possible [12] . This paper presents an algorithm by modifying every objective parameter of penalty function so that a final solution is easily and quickly obtained. In Section 2, we introduce a penalty function with the objective parameters and constraint penalty parameter, and its algorithm. In Section 3, we give numerical results to show that the proposed algorithm is efficient.

2. Penalty Function with Objective Parameters and Constraint Penalty Parameter

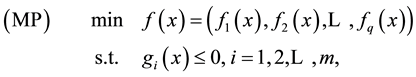

In this paper we consider the following inequality constrained multi-objective programming:

(1)

(1)

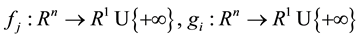

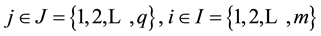

where , for

, for .

.

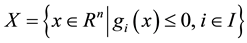

We denote the feasible set of MP (1) by . As usual,

. As usual,

is called a Pareto

is called a Pareto

weakly-efficient solution if there is no

such that

such that

for all

for all , i.e.

, i.e. .

.

is called a Pareto efficient solution if there is no

is called a Pareto efficient solution if there is no

such that

such that

for all

for all

and

and

for at least one

for at least one , i.e.

, i.e.

Let functions

where

Let

where

Consider the following unconstraint penalty optimization problem:

For

We have

Theorem 1. Suppose that for given

Then the following three assertions hold:

1) If

and

2) If

3) If

solution to (MP).

Proof. 1) The conclusion is obvious from the definitions of

2) Suppose that there be an

have

When

Hence,

3) According to 2), the conclusion holds.

Theorem 2. Suppose that for a given

following three assertions hold:

1) If

and

2) If

3) If

(MP).

Proof. 1) The conclusion is obvious from the definitions of

2) Suppose that there be an

have

When

Hence,

3) According to 2), the conclusion holds.

Based on Theorem 1, we develop an algorithm to compute an efficient solution to (MP). The algorithm solves the problem

MPFA Algorithm:

Step 1: Choose

Step 2: Solve

Step 3: If

Step 4: If

In the MPFA algorithm, it is assumed that for each

The convergence of the MPFA algorithm is proved in the following theorem. For some

which is called a Q-level set.

Theorem 3. Suppose that

1) If

2) If

efficient solution to (MP).

Proof. For all

Therefore,

1) If the MPFA algorithm terminates at the

2) We first show that the sequence

all

have

So,

Therefore, there is an

Since

We have

When

solution to (MP), there is an

So, we have

solution to (MP).

Theorem 3 means that the MPFA algorithm is convergent in theory. Now, we discuss the exactness of the penalty function for (MP). If there are an

(MP) is also a Pareto weakly-efficient solution to

Let (MP(s)) be a perturbed problem of (MP) given by

where

Definition 1. Let

where

We have an exact result of the penalty function.

Theorem 4. Let

function.

Proof. Suppose that

to (MP(s)). According to the definition of stability, we obtain that there is an

This implies that there is some

some

Thus,

Suppose that

Otherwise if

shows that

feasible solution to (MP) and

Let

(P(s')). Then, there is some

Therefore,

which shows that

where

with the assumption and proves that

3. Numerical Examples

In the MPFA algorithm, it is not easy to solve multiobjective problem

It is easily known that an optimal solution to the problem

solutions to the problem

of the MPFA algorithm with the problem

we have

Hence, when

So, we may obtain different Pareto weakly-efficient solutions at given different

controlling

We have applied the MPFA algorithm to several examples programmed by Matlab 6.5. The aim of numerical examples is to check the convergence of the algorithm and to control changes in objectives.

Example 1. Consider the following problem:

Let penalty function

Let the starting point

Clearly, if

algorithm, the results are shown in Table 1.

In Table 1, when

Objective parameter can control change of each objective function. It helps decision makers learn about the change of each objective function and choose a satisfactory solution as quickly as possible.

Example 2. Consider the problem:

We want to find a solution that three objectives are as small as possible with the first and second objective value less than −2 and the third objective value less than −5.

Let penalty function

Let the starting point

Table 1. Numerical results with different objective parameters.

Table 2. Numerical results with different objective parameters.

We take different parameters

In Table 2, we find a satisfactory solution

4. Conclusion

In this paper, we define a penalty function with objective parameters and constraint penalty parameter for MP and the corresponding unconstraint penalty optimization problem. Under some conditions, we prove that a Pareto efficient solution (or a weakly-efficient solution) to UPOP is a Pareto efficient solution (or a weakly- efficient solution) to MP, and the penalty function is exact under a stable condition. We present the MPFA algorithm to solve the multi-objective programming with inequality constraints by using the nonlinear penalty function with objective parameters. With this algorithm, we may find a satisfactory solution.

Acknowledgments

We thank the Editor and the referee for their comments. The research is supported by the National Natural Science Foundation of China under grunt 11271329 and 10971193.

References

- Sawaragi, Y., Nakayama, H. and Tanino, T. (1985) Theory of Multiobjective Optimization. Academic Press, London.

- White. D.J. (1984) Multiobjective Programming and Penalty Functions. Journal of Optimization Theory and Applications, 43, 583-599. http://dx.doi.org/10.1007/BF00935007

- Sunaga, T., Mazeed, M.A. and Kondo, E. (1988) A Penalty Function Formulation for Interactive Multiobjective Programming Problems. Lecture Notes in Control and Information Sciences, 113, 221-230. http://dx.doi.org/10.1007/BFb0042790

- Ruan, G.Z. and Huang, X.X. (1992) Weak Calmness and Weak Stability of Multiobjective Programming and Exact Penalty Functions. Journal of Mathematics and System Science, 12, 148-157.

- Liu. J.C. (1996)

-Pareto Optimality for Nondifferentiable Multiobjective Programming via Penalty Function. Journal of Mathematical Analysis and Applications, 198, 248-261.http://dx.doi.org/10.1006/jmaa.1996.0080

- Huang, X.X. and Yang, X.Q. (2002) Nonlinear Lagrangian for Multiobjective Optimization to Duality and Exact Penalization. SIAM Journal on Optimization, 13, 675-692. http://dx.doi.org/10.1137/S1052623401384850

- Chang, C.-T. and Lin, T.-C. (2009) Interval Goal Programming for S-Shaped Penalty Function. European Journal of Operational Research, 199, 9-20. http://dx.doi.org/10.1016/j.ejor.2008.10.009

- Antczak, T. (2012) The Vector Exact l1 Penalty Method for Nondifferentiable Convex Multiobjective Programming Problems. Applied Mathematics and Computation, 218, 9095-9106. http://dx.doi.org/10.1016/j.amc.2012.02.056

- Huang, X.X., Teo, K.L. and Yang, X.Q. (2006) Calmness and Exact Penalization in Vector Optimization with Cone Constraints. Computational Optimization and Applications, 35, 47-67. http://dx.doi.org/10.1007/s10589-006-6441-5

- Huang, X.X. (2012) Calmness and Exact Penalization in Constrained Scalar Set-Valued Optimization. Journal of Optimization Theory and Applications, 154, 108-119. http://dx.doi.org/10.1007/s10957-012-9998-4

- Meng, Z.Q., Shen, R. and Jiang, M. (2011) An Objective Penalty Functions Algorithm for Multiobjective Optimization Problem. American Journal of Operations Research, 1, 229-235. http://dx.doi.org/10.4236/ajor.2011.14026

- Luque, M., Ruiz, F. and Steuer, R.E. (2010) Modi-Fied Interactive Chebyshev Algorithm (MICA) for Convex Multiobjective Programming. European Journal of Op-Erational Research, 204, 557-564. http://dx.doi.org/10.1016/j.ejor.2009.11.011

-Pareto Optimality for Nondifferentiable Multiobjective Programming via Penalty Function. Journal of Mathematical Analysis and Applications, 198, 248-261.

-Pareto Optimality for Nondifferentiable Multiobjective Programming via Penalty Function. Journal of Mathematical Analysis and Applications, 198, 248-261.