Health, Vol. 6 No. 14 (2014), Article ID: 48193, 12 pages

DOI: 10.4236/health.2014.614214

Health Professionals’ Attitudes towards Electronic Psychosocial Assessments in Youth Mental Healthcare

Sally Bradford1, Debra Rickwood1,2, Douglas Boer1

1Faculty of Health, University of Canberra, Canberra, Australia

2headspace, National Youth Mental Health Foundation, Melbourne, Australia

Email: Sally.Bradford@Canberra.edu.au

Copyright © 2014 by authors and Scientific Research Publishing Inc.

This work is licensed under the Creative Commons Attribution International License (CC BY).

http://creativecommons.org/licenses/by/4.0/

Received 27 May 2014; revised 1 July 2014; accepted 16 July 2014

ABSTRACT

Psychosocial assessments can help mental health professionals establish good therapeutic relationships while simultaneously conducting holistic assessments of their young clients. Using technology to conduct assessments may increase disclosure by young people; however, the uptake of new technologies into current face-to-face practice has been slow. In the current study, we were interested in exploring the attitudes of mental health workers to using an electronic psychosocial assessment tool (e-tool) within face-to-face service delivery with adolescents and young adults. An exploratory design was used to identify and qualitatively describe the views of 46 mental health workers from services across the ACT and Victoria, Australia. Data were coded using an inductive thematic approach. Comments indicated that mental health workers held both positive and negative views about the e-tool. Some participants believed that it would allow disclosure to occur in a stepped process, normalize questions, give youth greater input, and be time efficient. However, the majority believed that the e-tool would infringe on their work because they needed to respond to their clients immediately, it would not provide an accurate representation of the client, young people did not have the necessary capabilities to engage in the process, they would miss non-verbal cues from the young person, and they were more likely to gain information from organic conversations. The results suggest that many mental health professionals may be fearful of incorporating new technologies in current practice. Specific training and supportive implementation guidelines must be developed to support use of these new technologies and change practice.

Keywords: Psychosocial Assessments, Young People, Information Communication Technologies, Mental Healthcare

1. Introduction

High rates of mental health problems among adolescents and young adults are well documented, with three-quarters of all lifetime cases emerging before 24 years [1] [2]. A review of the global burden of disease [3] found that the main cause of disability-adjusted life years (DALYs) across global regions for young people aged 10 - 24 years was neuropsychiatric disorders, accounting for 45% of all DALYs in this age group. Without appropriate intervention these mental health problems are likely to significantly impact on the life of the young person and will continue well into adulthood [1]. Fortunately, evidence-based treatment approaches can reduce symptom severity and the impact on a young person’s life [4].

Over the last decade, in Australia as elsewhere, there has been a major reorientation in the service approach for youth mental health. Traditionally, services are split into children’s services for those aged under 18 years and adult services for those 18 years and older. Unfortunately, this requires many young people to navigate a new system of care during the time period where they are at their most vulnerable [5] . Consequently, a new service model is gaining momentum internationally to provide services that cover the 12 - 25 years age range to ensure that young people have a tailored and seamless service experience during the years of heightened vulnerability to mental disorder, and that they have made the full transition into adulthood before they need to navigate adult services [6] .

When a young person presents for mental health care, it is highly likely that in their initial appointment their clinician will have two primary goals: to establish a good therapeutic relationship so that the young person will return [7] , and to adhere to best practice guidelines by conducting holistic assessments that cover multiple psychological, environmental, and social domains [8] . Across countries such as the United States of America and Australia, one of the most widely used psychosocial assessment tools for young people across the entire 12 - 25 years age range is the semi-structured interview “HEADSSS” (Home, Education/employment, Eating, Activities and peer relations, Drugs and Alcohol, Sexuality, Suicide/depression, and Safety) [9] . The HEADSSS helps guide the mental health worker through the initial assessment to ensure all the relevant psychosocial domains are discussed. It orders questions so that the least personal domains are discussed first, leading through to the more sensitive issues, so that the therapeutic relationship can establish before the mental health worker probes into highly personal domains [10] .

Although this entirely verbal assessment is widely utilized, young people are generally more accepting of psychosocial assessment tools that are initially self-administered in a questionnaire format [11] . This preference for self-administered tools exists because they provide young people with increased control over the help-seeking and treatment process by allowing them to identify the areas they most want to focus on and to structure their thoughts prior to the face-to-face clinical interview [12] [13] . They also allow young people to disclose what is happening for them without the fear of judgment or becoming overwhelmed with emotions [13] . While these benefits would likely be seen in any type of self-administered format, psychosocial assessments conducted using information communication technologies (ICT) appear to have better user satisfaction with young people [14] and result in a higher frequency of disclosure than those administered via a pen and paper format [15] .

Over the last ten to fifteen years, research into how ICT can improve mental health outcomes for young people has grown exponentially. The interest has been fuelled by the assumption that utilizing ICT may increase help-seeking and disclosure behaviors, and better engage young people through their increased access to the internet and other technologies [16]. Much of this research has focused on providing youth with computerized self-help services that guide them through manual-based programs with limited-to-nil contact with clinicians [17] [18], or those that provide access to mental health professionals through anonymous email or instant messaging options [19] [20]. These developments were supported by research into the “online disinhibition effect”, which suggests that people have higher rates of self-disclosure in the anonymous online realm [21]. However, a recent systematic review indicates that the online modality only results in a greater frequency of disclosure, not a greater depth of disclosure [15]. Furthermore, this move towards providing completely online mental health services ignores young people’s continuing preference to ultimately seek help in a face-to-face format [22].

It would therefore seem appropriate to incorporate technologies into face-to-face services in order to provide an integrated system of care that meets the needs of a wider group of young people. Unfortunately, the incorporation of new technologies within face-to-face mental healthcare has been significantly slower than the acceptance and uptake of services that are primarily based online. It is likely that the slower uptake of these types of technologies is a result of the unwillingness, or inability, of clinicians to change current practice models. In their five-step framework for effective change in clinical practice, Moulding, Silagy and Weller [23] suggested that the first step must involve an assessment of the practitioner’s readiness to change, which involves understanding their attitudes and beliefs about their current practice model, and the suggested change [24]; specifically, whether they believe the change is needed, important, beneficial or worthwhile [25]. It is also important to understand how the attitudes of colleagues play a major role in influencing the attitudes of individual practitioners, as predicted by social influence theory [23] [26]. Therefore, when assessing the attitudes of practitioners working in collaboration with others at a specific location, it is necessary to determine the “shared sense of readiness” [25].

Given that young people appear to have greater satisfaction with initial assessments that are conducted in a computerized format, but still want face-to-face services, it is appropriate to further investigate the applicability of utilizing digital assessments within face-to-face settings. The current study aimed to scope the utility of developing an electronic psychosocial assessment (e-tool) based on the HEADSSS format specifically for the 12 - 25 years age range. Based on the framework for effective management of change in clinical practice, our objective was to complete the first necessary step by investigating the attitudes of mental health professionals to using this type of technology in current face-to-face services. It was important to understand the individual views of clinicians who work alone and also the views of clinicians who work in multidisciplinary teams, where “shared attitudes” are also pertinent.

2. Method

2.1. Participants

The total sample consisted of 46 mental health professionals from Canberra in the Australian Capital Territory (ACT) and Melbourne, Victoria, Australia. There were 35 females (76%) and 11 males (24%) with backgrounds in psychology, social work, and occupational therapy. Individuals who primarily provide mental health services without input from other mental health workers, such as private practitioners and school counselors, were interviewed individually. Participants who work in services made up of multidisciplinary teams were interviewed in a group format. Each interview was coded as a single case with no distinction being made between the views of individuals within the same group interview. This created 13 cases for coding. Participant recruitment and interviewing were conducted from February 2013 through to August 2013 and ceased when saturation of key themes was achieved [27].

2.1.1. Group Interviews

Thirty-five mental health workers from “headspace” centers across ACT and Victoria were interviewed in seven different small groups. Each group consisted of clinicians working from the same center. headspace National Youth Mental Health Foundation is an Australian youth specific mental health service offering a range of health services including general health, mental health and counseling, education and employment support, and alcohol and other drug services to young people aged 12 - 25 years [28] . Six other participants worked at Child and Adolescent Mental Health Services (CAMHS) in the ACT and were interviewed as a single group. CAMHS provides clinical support to children and adolescents aged less than 18 years with serious and complex mental health concerns. Group interviews ranged in time from 46 to 66 minutes (M = 53.42, SD = 8.16).

2.1.2. Individual Interviews

Five private psychologists and school psychologists working in different locations across the ACT and Victoria were interviewed individually. Each participant had the choice of being interviewed face-to-face or via telephone, and all chose to be interviewed over the phone. Individual interviews ranged in time from 18 to 25 minutes (M = 20.00, SD = 2.90).

2.2. Procedure and Design

The study was advertised through youth specific mental health services and by word-of-mouth through clinicians working primarily with young people in private practice. Interested services and individual practitioners contacted the researchers to set up interview times. All interviews were facilitated by the first author.

An exploratory research design was used to identify and qualitatively describe the views of mental health professionals who work primarily with young people around using a psychosocial assessment e-tool in their current work [29] . Prior to the study commencing, ethics approval was obtained from the University of Canberra Human Research Ethics Committee (Approval no. 12-171). At the beginning of each interview each participant was informed of the purpose of the study and that it would be audio recorded and transcribed. All participants received movie tickets or a gift voucher for participating.

2.3. Interview Guide

To provide essential context and explain why the research was being undertaken, participants were first told about a prior study where young people indicated a general preference to initially disclose personal problems through the use of an e-tool, rather than in-person, when seeking help from a face-to-face service. Participants were also informed of the reasons why young people indicated this as a preference. The full results of this earlier study and the reasons provided by young people are published elsewhere [13] .

The following questions (and probes) were used as a guide by the facilitator:

• How do you currently conduct psychosocial assessments? (What about this process works/does not work?).

• How would you feel about incorporating an electronic assessment/screening tool into your current practice? (Benefits and concerns? Issues with certain clients or domains?).

2.4. Analytic Strategy

Interviews were transcribed verbatim and analyzed with the aid of QSR International NVivo 10 software [30]. A critical realist, thematic analysis approach was utilized, with the data being analyzed at a semantic level [31]. Specifically, the thematic coding was conducted using an inductive approach [31], which is a data-driven approach that allows the “…research findings to emerge from the frequent, dominant, or significant themes inherent in raw data, without the restraints imposed by structured methodologies” [32] (p. 238).

The first author initially listened to the audio of each group multiple times and read each transcript once before any coding occurred to ensure immersion in the data and that responses were understood in context [31] [32] . Each interview was then systematically coded by the first author in a data driven manner, so that codes were created to cover all parts of the data [32] [33] . Through meetings with all authors, thematic maps were created to organize the codes into themes and sub-themes, with all subsequently being summarized and defined [31] [34] . To provide additional support for the reliability of the analysis, 20% of the data was re-coded by the third author [35] . Inter-rater reliability was assessed using combined segment-based Cohen’s Kappa scores on two double-coded transcripts [36] . NVivo 10 software computes Cohen’s Kappa by calculating the percentage of agreement and disagreement between raters while taking into account the amount of agreement that could be expected to occur through chance [30] . The averaged Cohen’s Kappa score for all codes and re-coded sources was 0.87, indicating excellent inter-rater reliability [27] [37] .
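To make the chance correction concrete, the following is a minimal sketch of the standard Cohen’s Kappa formula, kappa = (p_o - p_e) / (1 - p_e), where p_o is the observed proportion of agreement and p_e is the agreement expected by chance. It is not NVivo’s implementation: the function name, the choice of one label per segment, and the example data are all hypothetical and serve only as an illustration.

```python
from collections import Counter


def cohen_kappa(rater_a, rater_b):
    """Cohen's Kappa for two raters who each labelled the same segments.

    Assumption: each list holds one label per segment (e.g. 1 = code applied,
    0 = code not applied). The unit of comparison used by NVivo may differ.
    """
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must label the same non-empty set of segments")

    n = len(rater_a)

    # Observed agreement: share of segments given the same label by both raters.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # Chance agreement: for each label, the product of the two raters'
    # marginal proportions, summed over labels.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)

    if expected == 1.0:
        # Both raters used a single identical label throughout; Kappa is
        # undefined (0/0), so perfect agreement is returned by convention.
        return 1.0

    return (observed - expected) / (1 - expected)


# Hypothetical data: ten segments, the raters disagree on one of them.
first_coder = [1, 1, 1, 0, 0, 0, 1, 0, 1, 0]
second_coder = [1, 1, 1, 0, 0, 0, 1, 0, 0, 0]
print(round(cohen_kappa(first_coder, second_coder), 2))  # 0.8
```

In this invented example the raters agree on 90% of segments, but half of that agreement would be expected by chance, so Kappa is lower than the raw agreement rate.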

Further reliability in qualitative analysis can be obtained by indicating the level of representativeness for each of the identified themes. To this end, frequency labels were applied to each theme, as proposed by Hill, Knox, Thompson, Williams, Hess and Ladany [38] . A theme that applied to all or all but one of the cases was considered general; a typical theme applied to more than half of the cases; a theme considered variant included at least three cases and up to half of all cases; and a theme that included two or three cases was considered rare. Findings that emerged from only one case were not reported.
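The labelling rule can be sketched as a small function. This is an illustration only: the function name is hypothetical, and because a theme present in exactly three cases satisfies both the variant and the rare definitions as worded above, the sketch assumes that variant takes precedence, which is an assumption rather than something stated in the text.

```python
def frequency_label(cases_with_theme, total_cases):
    """Return the frequency label for a theme, or None if it is not reported.

    Assumption: a theme found in exactly three cases is labelled "variant",
    since the stated ranges for "variant" and "rare" overlap at three.
    """
    if not 0 <= cases_with_theme <= total_cases:
        raise ValueError("cases_with_theme must be between 0 and total_cases")

    if cases_with_theme >= total_cases - 1:
        return "general"   # all cases, or all but one
    if cases_with_theme > total_cases / 2:
        return "typical"   # more than half of the cases
    if cases_with_theme >= 3:
        return "variant"   # at least three cases, up to half of all cases
    if cases_with_theme == 2:
        return "rare"      # two cases (three is treated as variant here)
    return None            # findings from a single case are not reported


# With the 13 cases in this study:
for count in (13, 12, 7, 6, 3, 2, 1):
    print(count, frequency_label(count, 13))
```

Under these assumptions, with 13 cases a theme is general at 12 or 13 cases, typical at 7 to 11, variant at 3 to 6, and rare at 2.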

3. Results

Participants had both positive and negative attitudes towards the incorporation of this type of technology into current practice. This resulted in the data being organized into two thematic maps of “Useful e-tool” and “Engagement Barrier”. Overall, “Useful e-tool” was represented at a general level, whereas “Engagement Barrier” was represented at the typical level.

However, while “Useful e-tool” was coded at a higher level of representativeness, a considerably larger proportion of the data was coded under “Engagement Barrier”. Apart from the three private practitioners who only indicated positive attitudes toward the e-tool, in the majority of cases markedly more time was spent discussing the negative issues that would arise from implementation of an e-tool, with positive aspects only briefly being mentioned. Therefore, when the interviews were analyzed as a whole, rather than in discrete units of data, it was evident that in the majority of cases there were considerably stronger beliefs that the e-tool would create a barrier to engagement in current practice.

3.1. Engagement Barrier

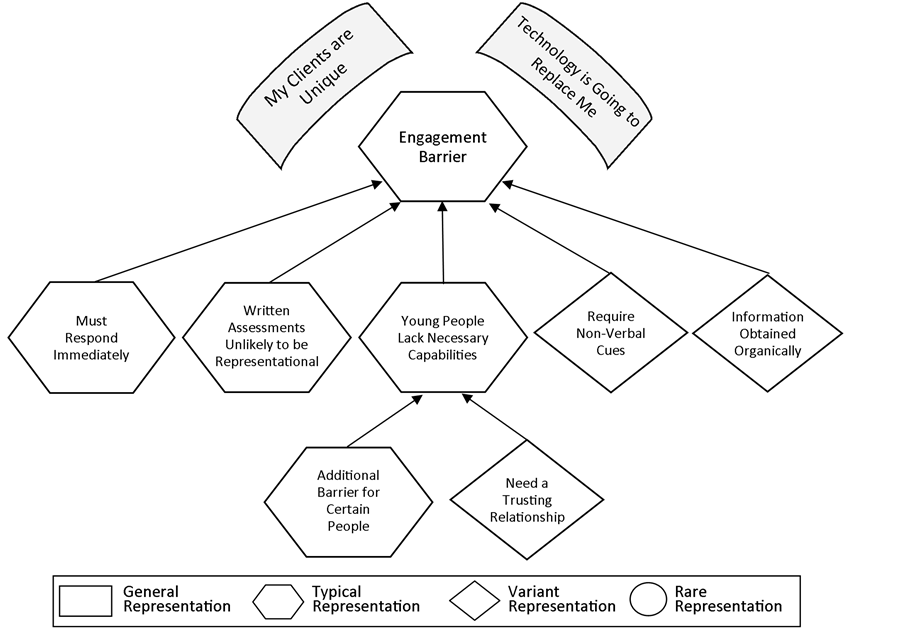

In the majority of cases, but particularly for those where there was a “shared attitude”, there was a belief that clinicians had the necessary skills to engage young people and help them disclose and that an e-tool would either not be of any benefit or, worse, be a hindrance. Participants justified this attitude with a number of reasons why the e-tool would be detrimental, which were coded into five themes: “Must respond immediately”, “Written assessments unlikely to be representational”, “Young people lack necessary capabilities”, “Require non-verbal cues”, and “More information obtained organically” (see Figure 1).

Two themes also appeared to reveal the underlying belief systems influencing participants’ negative attitudes towards the e-tool. The first was a belief that their clients were somehow distinct from other young people. For example, clinicians questioned who the youth participants were in the prior study described to them which found that young people were open to the use of an e-tool [13]. Participants argued that their youth clients were different: “I want to know who the young people are, and if we’re talking about a general population of young people or if we’re talking about young people that have got mental health issues. These are different groups to me” (female, headspace worker); “I still continue to question whether I’m talking about a different client group than the one that’s coming here and maybe that’s what I’m missing” (female, headspace worker); and “The other thing with focus groups is you’re getting a skewed sample of young people” (male, headspace worker). The other underlying theme was a fear that technology will make the professional’s specialized skill set obsolete. One participant made the comment “maybe it’s a fear of, ‘am I going to be duped by a computer or something?’” (female, CAMHS worker); while another said “That would null and void us [laughs nervously]” (female, headspace worker).

A typical concern was that clinicians would not be able to safely contain the young person’s emotions if they became distressed or that they would not be in a position to quickly clarify misunderstandings:

Figure 1. Thematic map with level of representativeness for themes coded under “Engagement Barrier”.

I think that if you open something up, open a topic up or give someone the opportunity to do it, what do you have in place to provide containment or reassurance when required… asking questions or opening up topics isn’t always helpful. Sometimes it just makes a more distressing experience (male, headspace worker).

Same with the comprehension. At least through conversation you might pick up there’s a facial expression that says, I don’t quite get what you mean. Even if there’s a “not sure option” on questions, I think sometimes you might just get the yes/no. I mean, that’s not based on anything other than just what I’ve noticed with young people, sort of anecdotally, but I think it’s harder to pick up if they’re not actually understanding what you’re asking of them. So you might not get accurate data and you might go, they’ve denied this, don’t need to check up on it, when really it was a yes, or a maybe but they didn’t understand the question (female, headspace worker).

Another typical theme was the belief that summary assessments cannot provide accurate representational data about young people, as quantitative responses can be misleading or untrustworthy:

A lot of the time I think it’s rated out of 10 and sometimes we wonder about what someone means by a seven. Like, how hopeful do you feel most of the time or some of the time? What does that mean? (male, headspace worker).

That’s the reality, we talk about having shared assessments for young people and doing assessments and referring on but the reality is, is no clinician ever trusts another person’s assessment however you want to describe it. Workers do not trust other workers’ assessments. They still think that they’ll get different information if they do it themselves (female, headspace worker).

In many cases it was also suggested that young people “don’t quite know what they’re here for” (female, headspace worker); or that they don’t have the capabilities to identify their own areas of risk or concern, “I’m not quite sure that they always can figure out where they are at risk” (female, headspace worker). Participants who made these comments felt that while there were likely to be young people who would have the capacity to utilize such an e-tool, many would not:

The kids that we are talking about that will like this are probably kids that would engage anyway because we’re talking about kids that have got capacities. I’m not saying that they don’t have problems, but they have capacities. And what about the kids that come in and get referred an iPad and can’t do it, and so they say I won’t do it and then they don’t come back because what we’re telling them is that we expect you to have a particular type of capacity, we expect you to know what’s wrong, we expect you to make comment on how you feel about things and to be able to reflect (female, headspace worker).

Participants were most concerned about young people from culturally and linguistically diverse backgrounds, anxious clients, illiterate clients, complex clients, and clients with cognitive or developmental delays. It was believed that for these types of clients it was of utmost importance to form caring and trusting relationships with their workers and that technology could interfere with this:

What concerns me about this tool is that I think that young people connect, you build relationships with them and if you’re a good clinician you make that relationship transferrable to the service, to other workers, to a sector. I think that it’s really hard to do it with a tool (female, headspace worker).

A variant theme discussed by some of the cases was the concern that they would be missing out on observing the initial non-verbal cues. For example:

When someone is in front of you, you can pick up the physical cues. So sometimes a person might answer, oh not really, and then look at you as if—and you can see that they really want [to talk about it] (female, headspace worker).

Some limitations would probably be as I said before, maybe not having that opportunity to be physically present for them while they’re going through those questions to see what comes up for them, or maybe concerns of it might bring up something that could lead to them disengaging and not having that opportunity to catch up (female, headspace worker).

The final theme, also coded at a variant level, was the belief that “information comes out in a much more organic way in the assessment than that structured assessment actually allows for” (female, headspace worker).

The way I might ask a young person about substance use is just - it comes about by asking them in the activities part of the HEADSS assessment - what do you do on the weekend? Oh you go out with your friends. What do you guys get up to? Oh you go to parties. So what happens at the parties? That might be how it comes out, not, Do you use drugs or alcohol? Which ones? How much? (female, headspace worker).

3.2. Useful E-Tool

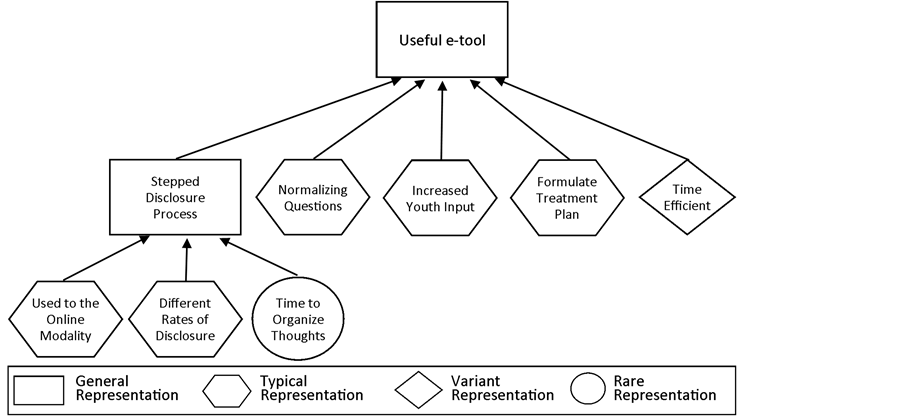

Responses coded under “Useful e-tool” were statements indicating that the e-tool could be a beneficial addition to the clinician’s “tool box” of therapeutic techniques. Five main reasons why the e-tool could be of benefit were identified as themes: “Stepped disclosure process”, “Normalizing questions”, “Increased youth input”, “Formulate early treatment plan” and “Time efficient” (see Figure 2). As mentioned above, however, while there were comments coded under this category from every case, the majority of these responses were somewhat cursory.

Participants who primarily believed that the e-tool would be useful recognized that it would be a viable alternative for some young people who currently face challenges within the standard help-seeking and disclosure process. They believed that while it would not be the preference for all young people, having the e-tool as a flexible option for those young people who wish to use it would likely result in greater levels of disclosure and improved rates of engagement. For example, one participant stated:

It sounds really exciting to me because I know I get a lot of people—especially young guys—who, for whatever reason, don’t feel that comfortable or aren’t able to articulate. They just don’t have the words. They can’t put their feelings to words, but they are willing to do a checklist, a pen and paper checklist. I think that kind of app would be really, culturally, something that they would embrace, so we would get more information, I think, from those clients (female, school counselor).

Notably, it was only the clinicians who worked individually as school counselors or private practitioners who appeared to hold this as their primary belief; those working in organizational settings in teams were much less amenable to incorporating technology in this way.

A general theme identified by all cases was that the e-tool would allow young people to disclose in a stepped (or gradual) process where they would first be disclosing in a written format: “I think there definitely will be clients who would feel more comfortable—give their information in a way that seems a bit more anonymous and confidential for starters, just testing the waters and see how the service reacts” (female, headspace worker); “I think actually for young people, that first step of addressing their issues with an iPad actually might be helpful” (female, private psychologist); and “It is an easy way to have that ice breaker to introduce some things that might be a bit sensitive” (female, headspace worker). Specifically, participants felt that the e-tool would provide a stepped and gradual process because young people could initially disclose in a modality they are highly comfortable with, “They’re coming into an environment that many of the kids wouldn’t have experienced before, so most of them are daunted by not knowing… So having that technology side of things is something that’s familiar” (female, CAMHS worker), “Young people these days, they’re so used to doing things online” (female, headspace worker); electronic modes are likely to obtain different rates of disclosure, “I think young people tend to be more honest when they’re—or more open, let’s say—when they’re doing something online.” (female, headspace worker), “At least it gives you that information where if it’s one on one, they might just go ‘no’, whereas they might feel more comfortable giving that information in a questionnaire.” (female, headspace worker); and, young people would have the time to “Get in the right headspace and start thinking about why they’re here and what is going on, rather than first coming in and they just go, ‘I don’t know?’” (female, headspace worker).

Figure 2. Thematic map with level of representativeness for themes coded under “Useful e-tool”.

A typical theme as to why participants felt the e-tool would be useful was the belief that it would normalize questions so that young people would realize they were asked of everyone and it would “set up expectation” (female, headspace worker) that these issues will be discussed at some point in session. For example:

Even myself as a gay man, I can sometimes feel a little bit challenged to ask that question, or how to ask that question. So I think that a lot of it is around the “how” for clinicians to enter into that quite sensitive territory… so I’m really glad to hear that there’s going to be some kind of a tool that’s going to enable that process for the clinicians more easily (male, headspace worker).

“It’s a use of a structure in order to create a space where you say it is okay to talk about these things, and we ask it of everybody.” (female, headspace worker).

Another typical advantage to using the e-tool was that it would provide young people increased control over their decision about what they wish to disclose:

I think it puts the focus and control back in their hands, because often parents come with them for the first assessment and they start the spiel of what’s the presenting problem. They obviously see it from a totally different perspective to the kid… then when you get the kid by themselves you’re already thinking along that train of thought, so you might miss out on something that would be brought up in the e-tool (female, CAMHS worker).

I think if you’re working from a very client-centered practice and wanting clients to more overtly nominate where they want the session to go or what their primary issue is they’d like to work on, then it’s a tool to help facilitate that (female, headspace worker).

It’s also part of being in line with their recovery and being involved in their own therapy and goal-setting. What do you want to get out of coming here? (female, headspace worker).

With regard to their own benefit, participants believed that the e-tool could provide them with additional important information to formulate early treatment plans. For example, two headspace workers had the following discussion:

Female 1: Then you can piece it together a lot more…

Female 2: Yeah and then find a way of how you’re going to deal with that rather than waiting till session 8— and you’ve got two sessions to go and all of a sudden this big thing comes…

Female 1: By the way…

Female 2: Yeah, by the way this has happened too and you’re at the end of your therapy.

Another participant stated, “I think it would be helpful to have because sometimes there is so much going on in a person’s life that you don’t always feel like you’re catching every single thing.” (female, headspace worker).

Finally, a variant theme identified by participants was the belief that the e-tool would be, “kind of efficient with a young person and cover what they want” (male, headspace worker) and “be a time saver because the things that they [the young person] flag as an issue you could actually focus on that” (female, headspace worker).

4. Discussion

The current study investigated mental health professionals’ attitudes toward using an electronic psychosocial assessment tool (e-tool) in their current work with young people. While some positive aspects of the e-tool were mentioned, the majority of cases had more than double the data coded under “Engagement Barrier”, indicating that participants spent considerably more time talking about why they felt an electronic assessment tool is likely to infringe on their work. This suggests that most clinicians currently believe that the issues created by the implementation of this type of technology would outweigh the benefits.

Similar negative beliefs were found in a 2008 Australian study [39] that identified the attitudes of mental health service providers toward using a range of ICT in clinical practice. Therefore, despite a relatively steady stream of research identifying the positive impact technology can have on mental health outcomes [17] [18] [40]-[46], the willingness of clinicians to engage young people through ICT does not appear to be changing quickly. Much of this earlier research focused primarily on self-help computerized programs [17] [43] [45] [46], and it is possible that to date clinicians have largely tried to ignore these new therapeutic options, or have seen them as completely separate resources to be used as an adjunct to therapy that therefore do not interfere with their current service approach. The current research, however, appears to be particularly concerning for mental health professionals, who now fear that ICT could be encroaching upon their specialized skill set.

Additionally, rather than having an aversion to the actual use of technology, mental health professionals may dislike the prospect of providing youth with greater control over their service provision. The themes of providing young people time to organize their thoughts, providing increased input in the disclosure process, and the increased ability of the professional to formulate early treatment plans are important elements within a shared decision-making model [47]. In medical practice there has been a move towards shared decision-making based on the belief that providers have the expertise to suggest viable alternative treatment options, but that only patients can place a value on living with the costs associated with the diagnosis and the side effects of various treatments and, therefore, should be given increased input into their healthcare decisions [48]. However, the uptake of explicit shared decision-making in mental healthcare has been slow, with some professionals questioning the competence of people with mental health problems to make rational decisions about their own healthcare [47]. Statements by clinicians in the current study show that this belief is still held by many. While there were mental health workers who believed that components of shared decision-making were important (evident through sub-themes of providing youth with greater input and control, and allowing professionals to formulate early appropriate treatment plans), many also gave reasons why youth should not be given increased control, believing that youth did not have the necessary capabilities to identify the areas where they were most at risk or the capacity to engage in completely truthful assessments of their own behaviors. These beliefs may be increasingly inappropriate considering that providing young people with greater involvement in their mental healthcare is likely to lead to improved mental health outcomes [49] and greater patient satisfaction [50]. Helping mental health professionals to accept and utilize a shared decision-making approach may be an important component during the implementation of an electronic assessment tool such as that in the current study.

It is also notable that it was only clinicians working individually who did not portray any negative attitudes about the possible implementation of the e-tool. A possible explanation for this is the theory of psychological reactance, where clinicians in organizations may be fighting against the possibility of being forced to utilize this new technology [51]. Private practitioners are required only to adhere to their professional standards and do not have procedures imposed upon them by a larger organization. It may be that the private practitioners were able to see the e-tool as simply another option within their “toolbox” of techniques that they could choose to utilize when appropriate. Conversely, mental health workers who work for one of the larger organizations may fear that this would be a new service model forced upon them. The psychological reactance to this possible forced change in practice could have resulted in professionals who work within these organizations having stronger opposition to the e-tool. It is also possible that in the group interviews the clinicians with negative attitudes were simply more forthcoming with their beliefs than those with positive attitudes. If this was the case, however, social influence theory [26] would suggest that these participants’ attitudes would have strongly influenced their peers in the group interview, and therefore would now be considered the “shared attitude” of that service [25].

Although the current research does not provide overly positive views about the current willingness of clinicians to incorporate these new technologies in practice, it does provide useful information for developing implementation guidelines to help change these attitudes [23]. For example, it suggests that attitudes toward shared decision-making may also need to be addressed, that clinicians should not feel forced to change their clinical practice [51], and that the attitudes of some champion clinicians in a service could be used to help alter the views of their colleagues [26], thereby improving organizational “readiness for change” [25]. Further, although participants in the current study may not currently hold strong positive beliefs about the e-tool, they still provided useful reasons as to why the e-tool could be beneficial. These reasons (specifically, that it could allow disclosure to occur in a stepped process, normalize questions, give youth greater input into their help-seeking, help clinicians formulate early treatment plans, and focus the time in sessions) could be highlighted within implementation guidelines to further increase the perceived value of this change in practice [23].

In the current research we had to rely on the abstract reasoning of professionals to understand the prospective e-tool and how it could work in practice, as there is currently no such application available. In future research, it would be of value to revisit the views of professionals after they could try an actual e-tool application with clients. Having a demonstrated product and actual experience may alter their views.

Like most qualitative studies, the sample size was small and wider population generalizations should not be made. Furthermore, while there was strong inter-rater reliability within the re-coded data, the first author was the facilitator for all interviews and was the primary coder, and this may have influenced the subjectivity of the results. As this research only focused on the first step in the framework for effective change in clinical practice, it is also necessary for future research to look into the subsequent steps, particularly, the assessment of specific barriers to implementation [23] . The current research, and any future identification of specific barriers, will help with the determination of appropriate implementation guidelines.

5. Conclusion

Overall, the current research indicates that mental health professionals are still hesitant to change their provision of service by incorporating new technologies into their current practice, particularly any technologies that might change the way they currently provide treatment, such as moving toward a shared decision-making process. This would suggest that developers cannot assume that simply releasing a new technological tool, even one that is likely to provide positive outcomes in mental health, will result in widespread utilization. In order to help mental health professionals become comfortable with the incorporation of new technologies in face-to-face mental healthcare, it would be useful to more widely highlight how these technologies may benefit young people and help clinicians to provide the best treatment approach. Specifically, it seems useful to utilize champions within an organization to help positively change the “shared view” and to emphasize that these technologies are designed to be used by professionals with specialized skill sets as a way to help facilitate engagement between them and their clients, and that they are not designed to replace them.

Acknowledgements

This work was supported in part by the Young and Well CRC, and the University of Canberra. The Young and Well Cooperative Research Centre (www.youngandwellcrc.org.au) is an Australian-based, international research centre that unites young people with researchers, practitioners, innovators and policy-makers from over 70 partner organisations. Together, we explore the role of technology in young people’s lives, and how it can be used to improve the mental health and wellbeing of young people aged 12 to 25. The Young and Well CRC is established under the Australian Government’s Cooperative Research Centres Program.

The authors have no other conflicts of interest to disclose. We would also like to thank all of the participants for their openness in sharing their views and wishes.

References

- de Girolamo, G., Dagani, J., Purcell, R., Cocchi, A. and McGorry, P.D. (2012) Age of Onset of Mental Disorders and Use of Mental Health Services: Needs, Opportunities and Obstacles. Epidemiology and Psychiatric Sciences, 21, 47-57. http://dx.doi.org/10.1017/S2045796011000746

- Kessler, R.C., Chiu, W.T., Demler, O. and Walters, E.E. (2005) Prevalence, Severity, and Comorbidity of 12-Month DSM-IV Disorders in the National Comorbidity Survey Replication. Archives of General Psychiatry, 62, 617-627. http://dx.doi.org/10.1001/archpsyc.62.6.617

- Gore, F.M., et al. (2011) Global Burden of Disease in Young People Aged 10-24 Years: A Systematic Analysis. The Lancet (British Edition), 377, 2093-2102. http://dx.doi.org/10.1016/S0140-6736(11)60512-6

- McGorry, P., Purcell, R., Goldstone, S. and Amminger, G.P. (2011) Age of Onset and Timing of Treatment for Mental and Substance Use Disorders: Implications for Preventive Intervention Strategies and Models of Care. Current Opinion in Psychiatry, 24, 301-306. http://dx.doi.org/10.1097/YCO.0b013e3283477a09

- Singh, S.P. (2009) Transition of Care from Child to Adult Mental Health Services: The Great Divide. Current Opinion in Psychiatry, 22, 386-390. http://dx.doi.org/10.1097/YCO.0b013e32832c9221

- McGorry, P. (2006) Reforming Youth Mental Health. Australian Family Physician, 35, 314-314.

- Rickwood, D., Deane, F.P., Wilson, C.J. and Ciarrochi, J. (2005) Young People’s Help-Seeking for Mental Health Problems. Australian e-Journal for the Advancement of Mental Health, 4. www.auseinet.com/journal/vol4iss3suppl/rickwood.pdf

- American Psychological Association Presidential Task Force on Evidence-Based Practice (2006) Evidence-Based Practice in Psychology. American Psychologist, 61, 271-285. http://dx.doi.org/10.1037/0003-066X.61.4.271

- Cohen, E., Mackenzie, R.G. and Yates, G.L. (1991) HEADSS, a Psychosocial Risk Assessment Instrument: Implications for Designing Effective Intervention Programs for Runaway Youth. Journal of Adolescent Health, 12, 539-544. http://dx.doi.org/10.1016/0197-0070(91)90084-Y

- Goldenring, J.M. and Rosen, D.S. (2004) Getting into Adolescent Heads: An Essential Update. Patient Care for the Nurse Practitioner, 21, 64-92.

- Bradford, S. and Rickwood, D. (2012) Psychosocial Assessments for Young People: A Systematic Review Examining Acceptability, Disclosure and Engagement, and Predictive Utility. Adolescent Health, Medicine and Therapeutics, 111. http://dx.doi.org/10.2147/AHMT.S38442

- Elliott, J., et al. (2004) Clinical Uses of an Adolescent Intake Questionnaire: Adquest as a Bridge to Engagement. Social Work in Mental Health, 3, 83-102. http://dx.doi.org/10.1300/J200v03n01_05

- Bradford, S. and Rickwood, D. (2014) Young People’s Views on Electronic Mental Health Assessment: Prefer to Type than Talk? Journal of Child and Family Studies, 1-9. http://dx.doi.org/10.1007/s10826-014-9929-0

- Truman, J., et al. (2003) The Strengths and Difficulties Questionnaire: A Pilot Study of a New Computer Version of the Self-Report Scale. European Child & Adolescent Psychiatry, 12, 9-14. http://dx.doi.org/10.1007/s00787-003-0303-9

- Nguyen, M., Bin, Y.S. and Campbell, A. (2012) Comparing Online and Offline Self-Disclosure: A Systematic Review. Cyberpsychology, Behavior, and Social Networking, 15, 103-111. http://dx.doi.org/10.1089/cyber.2011.0277

- Edwards-Hart, T. and Chester, A. (2010) Online Mental Health Resources for Adolescents: Overview of Research and Theory. Australian Psychologist, 45, 223-230. http://dx.doi.org/10.1080/00050060903584954

- Sethi, S., Campbell, A.J. and Ellis, L.A. (2010) The Use of Computerized Self-Help Packages to Treat Adolescent Depression and Anxiety. Journal of Technology in Human Services, 28, 144-160. http://dx.doi.org/10.1080/15228835.2010.508317

- Tillfors, M., et al. (2011) A Randomized Trial of Internet-Delivered Treatment for Social Anxiety Disorder in High School Students. Cognitive Behaviour Therapy, 40, 147-157. http://dx.doi.org/10.1080/16506073.2011.555486

- Barak, A., Hen, L., Boniel-Nissim, M. and Shapira, N.A. (2008) A Comprehensive Review and a Meta-Analysis of the Effectiveness of Internet-Based Psychotherapeutic Interventions. Journal of Technology in Human Services, 26, 109-160. http://dx.doi.org/10.1080/15228830802094429

- Dowling, M. and Rickwood, D. (2013) Online Counseling and Therapy for Mental Health Problems: A Systematic Review of Individual Synchronous Interventions Using Chat. Journal of Technology in Human Services, 31, 1-21. http://dx.doi.org/10.1080/15228835.2012.728508

- Suler, J. (2004) The Online Disinhibition Effect. CyberPsychology & Behavior, 7, 321-326. http://dx.doi.org/10.1089/1094931041291295

- Bradford, S. and Rickwood, D. (2012) Adolescent’s Preferred Modes of Delivery for Mental Health Services. Child and Adolescent Mental Health. http://dx.doi.org/10.1111/camh.12002

- Moulding, N.T., Silagy, C.A. and Weller, D.P. (1999) A Framework for Effective Management of Change in Clinical Practice: Dissemination and Implementation of Clinical Practice Guidelines. Quality in Health Care, 8, 177-183. http://dx.doi.org/10.1136/qshc.8.3.177

- Prochaska, J.O. and Di Clemente, C.C. (1983) Stages and Processes of Self-Change of Smoking: Toward an Integrative Model of Change. Journal of Consulting and Clinical Psychology, 51, 390-395. http://dx.doi.org/10.1037/0022-006X.51.3.390

- Weiner, B.J. (2009) A Theory of Organizational Readiness for Change. Implementation Science, 4, 67. http://dx.doi.org/10.1186/1748-5908-4-67

- Mittman, B.S., Tonesk, X. and Jacobson, P.D. (1992) Implementing Clinical Practice Guidelines: Social Influence Strategies and Practitioner Behavior Change. Quality Review Bulletin, 18, 413-422.

- Guest, G., Bunce, A. and Johnson, L. (2006) How Many Interviews Are Enough? An Experiment with Data Saturation and Variability. Field Methods, 18, 59-82. http://dx.doi.org/10.1177/1525822X05279903

- Rickwood, D., Telford, N., Parker, A., Tanti, C. and McGorry, P. (2014) Headspace—Australia’s Innovation in Youth Mental Health: Who Are the Clients and Why Are They Presenting? The Medical Journal of Australia, 200, 108-111. http://dx.doi.org/10.5694/mja13.11235

- Patton, M.Q. (2002) Qualitative Research & Evaluation Methods. SAGE Publications, Thousand Oaks.

- QSR (2012) NVivo Qualitative Data Analysis Software. QSR International Pty Ltd., Doncaster.

- Braun, V. and Clarke, V. (2006) Using Thematic Analysis in Psychology. Qualitative Research in Psychology, 3, 77-101. http://dx.doi.org/10.1191/1478088706qp063oa

- Thomas, D.R. (2006) A General Inductive Approach for Analyzing Qualitative Evaluation Data. American Journal of Evaluation, 27, 237-246. http://dx.doi.org/10.1177/1098214005283748

- Richards, L. and Morse, J.M. (2007) ReadMe First for a User’s Guide to Qualitative Methods Research. SAGE Publications Incorporated, Thousand Oaks.

- Fereday, J. and Muir-Cochrane, E. (2006) Demonstrating Rigor Using Thematic Analysis: A Hybrid Approach of Inductive and Deductive Coding and Theme Development. International Journal of Qualitative Methods, 5, 1-11.

- Guest, G. and MacQueen, K.M. (2008) Handbook for Team-Based Qualitative Research. Rowman & Littlefield Pub Incorporated, Washington DC.

- Carey, J.W., Morgan, M. and Oxtoby, M.J. (1996) Intercoder Agreement in Analysis of Responses to Open-Ended Interview Questions: Examples from Tuberculosis Research. Field Methods, 8, 1-5. http://dx.doi.org/10.1177/1525822X960080030101

- Cohen, J. (1960) A Coefficient of Agreement for Nominal Scales. Educational and Psychological Measurement, 20, 37-46. http://dx.doi.org/10.1177/001316446002000104

- Hill, C.E., Knox, S., Thompson, B.J., Williams, E.N., Hess, S.A. and Ladany, N. (2005) Consensual Qualitative Research: An Update. Journal of Counseling Psychology, 52, 196-205. http://dx.doi.org/10.1037/0022-0167.52.2.196

- Blanchard, M., Metcalf, A., Degney, J., Herrman, H. and Burns, J. (2008) Rethinking the Digital Divide: Findings from a Study of Marginalised Young People’s Information Communication Technology (ICT) Use. Youth Studies Australia, 27, 35-42.

- Blanchard, M., Hosie, A. and Burns, J. (2013) Embracing Technologies to Improve Well-Being for Young People. In: Robertson, A., Jones-Parry, R. and Kuzamba, M., Eds., Commonwealth Health Partnerships: Youth, Health and Employment, Nexus Strategic Partnerships Ltd., Cambridge, 127-132.

- Coyle, D., Matthews, M., Sharry, J., Nisbet, A. and Doherty, G. (2005) Personal Investigator: A Therapeutic 3D Game for Adolescent Psychotherapy. Interactive Technology and Smart Education, 2, 73-88. http://dx.doi.org/10.1108/17415650580000034

- Burns, J.M., et al. (2013) Game on: Exploring the Impact of Technologies on Young Men’s Mental Health and Wellbeing. Findings from the First Young and Well National Survey. Young and Well Cooperative Research Centre, Melbourne.

- Knaevelsrud, C. and Maercker, A. (2007) Internet-Based Treatment for PTSD Reduces Distress and Facilitates the Development of a Strong Therapeutic Alliance: A Randomized Controlled Clinical Trial. BMC Psychiatry, 7, 13. http://dx.doi.org/10.1186/1471-244X-7-13

- Kauer, S.D., Reid, S.C., Sanci, L. and Patton, G.C. (2009) Investigating the Utility of Mobile Phones for Collecting Data about Adolescent Alcohol Use and Related Mood, Stress and Coping Behaviours: Lessons and Recommendations. Drug and Alcohol Review, 28, 25-30. http://dx.doi.org/10.1111/j.1465-3362.2008.00002.x

- Hoek, W., Schuurmans, J., Koot, H.M. and Cuijpers, P. (2009) Prevention of Depression and Anxiety in Adolescents: A Randomized Controlled Trial Testing the Efficacy and Mechanisms of Internet-Based Self-Help Problem-Solving Therapy. Trials, 10, 93. http://dx.doi.org/10.1186/1745-6215-10-93

- Calear, A.L., Christensen, H., Mackinnon, A., Griffiths, K.M. and O’Kearney, R. (2009) The YouthMood Project: A Cluster Randomized Controlled Trial of an Online Cognitive Behavioral Program with Adolescents. Journal of Consulting and Clinical Psychology, 77, 1021-1032. http://dx.doi.org/10.1037/a0017391

- Adams, J.R. and Drake, R.E. (2006) Shared Decision-Making and Evidence-Based Practice. Community Mental Health Journal, 42, 87-105. http://dx.doi.org/10.1007/s10597-005-9005-8

- Charles, C. and DeMaio, S. (1993) Lay Participation in Health Care Decision Making: A Conceptual Framework. Journal of Health Politics, Policy and Law, 18, 881-904. http://dx.doi.org/10.1215/03616878-18-4-881

- Clever, S.L., Ford, D.E., Rubenstein, L.V., Rost, K.M., Meredith, L.S., Sherbourne, C.D., Wang, N.Y., Arbelaez, J.J. and Cooper, L.A. (2006) Primary Care Patients’ Involvement in Decision-Making Is Associated with Improvement in Depression. Medical Care, 44, 398-405. http://dx.doi.org/10.1097/01.mlr.0000208117.15531.da

- Swanson, K.A., Bastani, R., Rubenstein, L.V., Meredith, L.S. and Ford, D.E. (2007) Effect of Mental Health Care and Shared Decision Making on Patient Satisfaction in a Community Sample of Patients with Depression. Medical Care Research and Review, 64, 416-430. http://dx.doi.org/10.1177/1077558707299479

- Brehm, J.W. (1989) Psychological Reactance: Theory and Applications. In: Srull, T.K., Ed., Advances in Consumer Research, Association for Consumer Research, Provo, 72-75.